Christian Tarsney on future bias and a possible solution to moral fanaticism

By Robert Wiblin and Keiran Harris · Published May 5th, 2021

On this page:

- Introduction

- 1 Highlights

- 2 Articles, books, and other media discussed in the show

- 3 Transcript

- 3.1 Rob's intro [00:00:00]

- 3.2 The interview begins [00:01:20]

- 3.3 Future bias [00:04:33]

- 3.4 Philosophy of time [00:11:17]

- 3.5 Money pumping [00:18:53]

- 3.6 Time travel [00:21:22]

- 3.7 Decision theory [00:24:36]

- 3.8 Eternalism [00:32:32]

- 3.9 Fanaticism [00:38:33]

- 3.10 Stochastic dominance [00:52:11]

- 3.11 Background uncertainty [00:56:27]

- 3.12 Epistemic worries about longtermism [01:12:44]

- 3.13 Best arguments against working on existential risk reduction [01:32:34]

- 3.14 The scope of longtermism [01:41:12]

- 3.15 The value of the future [01:50:09]

- 3.16 Moral uncertainty [01:57:25]

- 3.17 Christian's personal priorities [02:17:27]

- 3.18 The state of global priorities research [02:21:33]

- 3.19 Competitive debating [02:28:34]

- 3.20 The Berry paradox [02:35:00]

- 3.21 Rob's outro [02:37:24]

- 4 Learn more

- 5 Related episodes

Most people would prefer to have had 10 hours of painful surgery yesterday than 1 hour of painful surgery coming up today. But as today's guest explains, this 'future bias' is harder to justify than it first appears.

If you think that there is no fundamental asymmetry between the past and the future, maybe we should be sanguine about the future — including sanguine about our own mortality — in the same way that we’re sanguine about the fact that we haven’t existed forever.

Christian Tarsney

Imagine that you’re in the hospital for surgery. This kind of procedure is always safe, and always successful, but it can take anywhere from one to ten hours. You can’t be knocked out for the operation, but because it’s so painful, you’ll be given a drug afterwards that makes you forget the experience.

You wake up, not remembering going to sleep. You ask the nurse if you’ve had the operation yet. They look at the foot of your bed, and see two different charts for two patients. They say “Well, you’re one of these two — but I’m not sure which one. One of them had an operation yesterday that lasted ten hours. The other is set to have a one-hour operation later today.”

So it’s either true that you already suffered for ten hours, or true that you’re about to suffer for one hour.

Which patient would you rather be?

Most people would be relieved to find out they’d already had the operation. Normally we prefer less pain rather than more pain, but in this case, we prefer ten times more pain — just because the pain would be in the past rather than the future.

Christian Tarsney, a philosopher at Oxford University’s Global Priorities Institute, has written a couple of papers about this ‘future bias’ — that is, that people seem to care more about their future experiences than about their past experiences.

That probably sounds perfectly normal to you. But do we actually have good reasons to prefer to have our positive experiences in the future, and our negative experiences in the past?

One of Christian’s experiments found that when you ask people to imagine hypothetical scenarios where they can affect their own past experiences, they care about those experiences more — which suggests that our inability to affect the past is one reason why we feel mostly indifferent to it.

But he points out that if that were the main reason, then we should also be indifferent to inevitable future experiences: if you know for sure that something bad is going to happen to you tomorrow, you shouldn’t care about it. But if you found out you simply had to have a horribly painful operation tomorrow, it’s probably all you’d care about!

Another explanation for future bias is that we have this intuition that time is like a videotape, where the things that haven’t played yet are still on the way.

If your future experiences really are ahead of you rather than behind you, that makes it rational to care more about the future than the past. But Christian says that, even though he shares this intuition, it’s actually very hard to make the case for time having a direction.

It’s a live debate that’s playing out in the philosophy of time, as well as in physics. And Christian says that even if you could show that time had a direction, it would still be hard to explain why we should care more about the future than the past, at least in a way that doesn’t just sound like “Well, the past is in the past and the future is in the future”.

For Christian, there are two big practical implications of these past, present, and future ethical comparison cases.

The first is for altruists: If we care about whether current people’s goals are realised, then maybe we should care about the realisation of people’s past goals, including the goals of people who are now dead.

The second is more personal: If we can’t actually justify caring more about the future than the past, should we really worry about death any more than we worry about all the years we spent not existing before we were born?

Christian and Rob also cover several other big topics, including:

- A possible solution to moral fanaticism, where you can end up preferring options that give you only a very tiny chance of an astronomically good outcome over options that give you certainty of a very good outcome

- How much of humanity’s resources we should spend on improving the long-term future

- How large the expected value of the continued existence of Earth-originating civilization might be

- How we should respond to uncertainty about the state of the world

- The state of global priorities research

- And much more

Get this episode by subscribing to our podcast on the world’s most pressing problems and how to solve them: type 80,000 Hours into your podcasting app. Or read the transcript below.

Producer: Keiran Harris

Audio mastering: Ryan Kessler

Transcriptions: Sofia Davis-Fogel

Highlights

Practical implications of past, present, and future ethical comparison cases

Christian Tarsney: I think there’s two things that are worth mentioning. One is altruistically significant, which is, if you think that one of the things we should care about as altruists is whether people’s desires or preferences are satisfied or whether people’s goals are realized, then one important question is, do we care about the realization of people’s past goals, including the goals of past people, people who are dead now? And if so, that might have various kinds of ethical significance. For instance, I think if I recall correctly, Toby Ord in The Precipice makes this point that well, past people are engaged in this great human project of trying to build and preserve human civilization. And if we allowed ourselves to go extinct, we would be letting them down or failing to carry on their project. And whether you think that that consideration has normative significance might depend on whether you think the past as a whole has normative significance.

Robert Wiblin: Yeah. That adds another wrinkle that I guess you could think that the past matters, but perhaps if you only cared about experiences, say, then obviously people in the past can’t have different experiences because of things in the future, at least we think not. So you have to think that the kind of fixed preference states that they had in their minds in the past, it’s still good to actualize those preferences in the future, even though it can’t affect their mind in the past.

Christian Tarsney: Yeah, that’s right. So you could think that we should be future biased only with respect to experiences, and not with respect to preference satisfaction. But then that’s a little bit hard to square if you think that the justification for future bias is this deep metaphysical feature of time. If the past is dead and gone, well, why should that affect the importance of experiences but not preferences? Another reason why the bias towards the future might be practically interesting or significant to people, less from an altruistic standpoint than from a personal or individual standpoint, is this connection with our attitudes towards death, which is maybe the original context in which philosophers thought about the bias towards the future. So there’s this famous argument that goes back to Epicurus and Lucretius that says, look, the natural reason that people give for fearing death is that death marks a boundary of your life, and after you’re dead, you don’t get to have any more experiences, and that’s bad.

Christian Tarsney: But you could say exactly the same thing about birth, right? So before you were born, you didn’t have any experiences. And well, on the one hand, if you know that you’re going to die in five years, you might be very upset about that, but if you’re five years old and you know that five years ago you didn’t exist, people don’t tend to be very upset about that. And if you think that the past and the future should be on a par, that there is no fundamental asymmetry between those two directions in time, one conclusion that people have argued for is maybe we should be sanguine about the future, including sanguine about our own mortality, in the same way that we’re sanguine about the past and sanguine about the fact that we haven’t existed forever. Which I’m not sure if I can get myself into the headspace of really internalizing that attitude. But I think it’s a reasonably compelling argument and something that maybe some people can do better than I can.

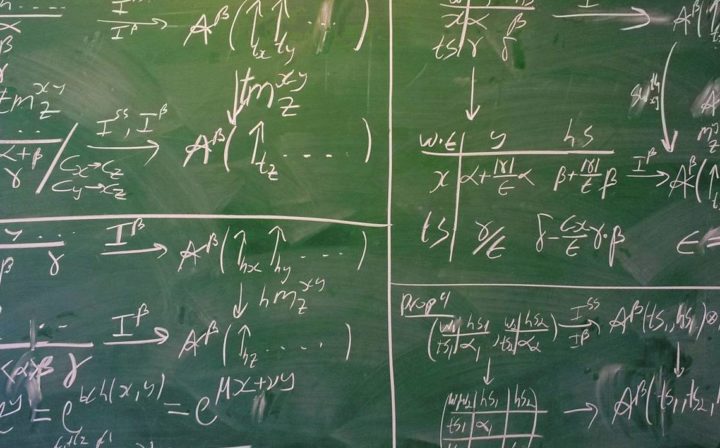

Fanaticism

Christian Tarsney: Roughly the problem is that if you are an expected value maximizer, which means that when you’re making choices you just evaluate an option by taking all the possible outcomes and you assign them numeric values, the quantity of value or goodness that would be realized in this outcome, and then you just take a probability-weighted sum, the probability times the value for each of the possible outcomes, and add those all up and that tells you how good the option is…

Christian Tarsney: Well, if you make decisions like that, then you can end up preferring options that give you only a very tiny chance of an astronomically good outcome over options that give you certainty of a very good outcome, or you can prefer certainty of a bad outcome over an option that gives you near certainty of a very good outcome, but just a tiny, tiny, tiny probability of an astronomically bad outcome. And a lot of people find this counterintuitive.

Robert Wiblin: So the basic thing is that very unlikely outcomes that are massive in their magnitude that would be much more important than the other outcomes in some sense end up dominating the entire expected value calculation and dominating your decision even though they’re incredibly improbable and that just feels intuitively wrong and unappealing.

Christian Tarsney: Well, here’s an example that I find drives home the intuition. So suppose that you have the opportunity to really control the fate of the universe. You have two options, you have a safe option that will ensure that the universe contains, over its whole history, 1 trillion happy people with very good lives, or you have the option to take a gamble. And the way the gamble works is almost certainly the outcome will be very bad. So there’ll be 1 trillion unhappy people, or 1 trillion people with say hellish suffering, but there’s some teeny, teeny, tiny probability, say one in a googol, 10 to the 100, that you get a blank check where you can just produce any finite number of happy people you want. Just fill in a number.

Christian Tarsney: And if you’re trying to maximize the expected quantity of happiness or the expected number of happy people in the world, of course you want to do that second thing. But there is, in addition to just the counterintuitiveness of it, there’s a thought like, well, what we care about is the actual outcome of our choices, not the expectation. And if you take the risky option and the thing that’s almost certainly going to happen happens, which is you get a very terrible outcome, the fact that it was good in expectation doesn’t give you any consolation, or doesn’t seem to retrospectively justify your choice at all.
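For readers who want the arithmetic behind the blank-check example, here is a minimal Python sketch of expected value maximization as Christian describes it: a probability-weighted sum of outcome values. It assumes, purely for illustration, that each happy life is worth +1 unit and each life of hellish suffering -1 unit; the function names are hypothetical. Exact fractions are used because 1 - 10^-100 rounds to exactly 1.0 in ordinary floating point.

```python
import math
from fractions import Fraction

# Illustrative valuation (an assumption, not from the episode):
# +1 per happy life, -1 per life of hellish suffering.
TRILLION = 10**12
P_JACKPOT = Fraction(1, 10**100)  # "one in a googol"

def expected_value(lottery):
    """Probability-weighted sum over (probability, value) pairs."""
    return sum(p * v for p, v in lottery)

def safe_option():
    # A trillion happy lives for certain.
    return [(Fraction(1), TRILLION)]

def gamble(blank_check):
    # Almost certainly a trillion suffering lives; with probability 10**-100,
    # whatever finite number of happy lives is written on the blank check.
    return [(1 - P_JACKPOT, -TRILLION), (P_JACKPOT, blank_check)]

def smallest_winning_check():
    """Smallest whole number of happy lives for which the gamble's expected
    value strictly exceeds the safe option's."""
    # p * N - (1 - p) * T > T  <=>  N > (T + (1 - p) * T) / p
    threshold = (TRILLION + (1 - P_JACKPOT) * TRILLION) / P_JACKPOT
    return math.floor(threshold) + 1

n = smallest_winning_check()
safe = expected_value(safe_option())
print(expected_value(gamble(n)) > safe)       # True
print(expected_value(gamble(n - 1)) == safe)  # True: exactly break-even
```

Under these assumptions, any check larger than roughly 2 × 10^112 happy lives makes the gamble win in expectation, even though it ends in the bad outcome with probability 1 - 10^-100. That gap between the expectation and the almost-certain actual result is the tension Christian points to.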

Stochastic dominance

Christian Tarsney: My own take on fanaticism and on decision making under risk, for whatever it’s worth, is fairly permissive. A weird and crazy view that I’m attracted to is that we’re only required to avoid choosing options that are what’s called first-order stochastically dominated, which means that you have two options, let’s call them option one and option two. And then there’s various possible outcomes that could result from either of those options. And for each of those outcomes, we ask what’s the probability if you choose option one or if you choose option two that you get not that outcome specifically, but an outcome that’s at least that good?

Christian Tarsney: Say option one, for every possible outcome, gives you at least as great an overall probability of an outcome at least that desirable, and for some outcomes a strictly greater probability. Then that seems a pretty compelling reason to choose option one. Maybe a simple example would be helpful. Suppose that I’m going to flip a fair coin, and I offer you a choice between two tickets. One ticket will pay $1 if the coin lands heads and nothing if it lands tails; the other ticket will pay $2 if the coin lands tails, but nothing if it lands heads. So you don’t have what’s called state-wise dominance here, because if the coin lands heads then the first ticket gives you a better outcome, $1 rather than $0. But you do have stochastic dominance because both tickets give you the same chance of at least $0, namely certainty, both tickets give you a 50% chance of at least $1, but the second ticket uniquely gives you a 50% chance of at least $2, and that seems a compelling argument for choosing it.
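The coin-and-tickets example can be checked mechanically. Below is a minimal sketch, assuming outcomes are dollar payoffs and each option is a discrete lottery given as (probability, payoff) pairs; the helper names are hypothetical, not taken from Christian's paper. For every payoff level it compares the probability each ticket gives of doing at least that well, which is the test he describes. Checking only the payoff values that actually occur is enough, since these probabilities change only at those values.

```python
from fractions import Fraction

def prob_at_least(lottery, x):
    """Probability that the lottery pays at least x."""
    return sum(p for p, payoff in lottery if payoff >= x)

def first_order_dominates(a, b):
    """True if lottery `a` first-order stochastically dominates lottery `b`:
    for every payoff level, `a` is at least as likely to pay that much or more,
    and for some level it is strictly more likely."""
    levels = {payoff for _, payoff in a} | {payoff for _, payoff in b}
    diffs = [prob_at_least(a, x) - prob_at_least(b, x) for x in levels]
    return all(d >= 0 for d in diffs) and any(d > 0 for d in diffs)

HALF = Fraction(1, 2)
ticket_one = [(HALF, 1), (HALF, 0)]  # $1 on heads, $0 on tails
ticket_two = [(HALF, 0), (HALF, 2)]  # $0 on heads, $2 on tails

print(first_order_dominates(ticket_two, ticket_one))  # True
print(first_order_dominates(ticket_one, ticket_two))  # False
```

At $0 and $1 the two tickets tie; only at $2 does ticket two pull ahead, which is enough for dominance.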

Robert Wiblin: I see. I guess in a continuous case rather than a binary one, you would have to say, well, the worst case is at least as good in scenario two as in scenario one. And the first-percentile case is at least as good, and so is the second-percentile case, and the median, all the way up to the best-case scenario. And so across the whole distribution of outcomes from worst to best, with probability adding them up as percentiles, the second scenario is always equal or better. And so it would seem crazy to choose the option that is always equally as good or worse, no matter how lucky you get.

Christian Tarsney: Right. Even though there are states of the world where the stochastically dominant option will turn out worse, nevertheless the distribution of possible outcomes is better.

Robert Wiblin: Okay. So you’re saying if you compare the scenario where you get unlucky in scenario two versus lucky in scenario one, scenario one could end up better. But ex-ante, before you know whether you got lucky with the outcome or not, it was worse at every point.

Christian Tarsney: Yeah, exactly.
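Rob's percentile framing gives an equivalent way to run the same test. The sketch below assumes, for simplicity, that each option is described by the same number of equally likely outcomes (for instance, one per state of the world). Under that assumption, sorting the outcomes lines up each option's worst case, each percentile, and its best case, so dominance means the sorted lists are ordered position by position. A state-wise check is included to show how an option can dominate stochastically while still losing in some states. The function names are hypothetical.

```python
def _check_same_length(a, b):
    if len(a) != len(b):
        raise ValueError("sketch assumes equally many equally likely outcomes")

def quantile_dominates(a, b):
    """Sorting puts each option's worst case first and best case last,
    so position k is the same percentile in both lists."""
    _check_same_length(a, b)
    pairs = list(zip(sorted(a), sorted(b)))
    return all(x >= y for x, y in pairs) and any(x > y for x, y in pairs)

def statewise_dominates(a, b):
    """Compares outcomes state by state: position k is the same state of the world."""
    _check_same_length(a, b)
    pairs = list(zip(a, b))
    return all(x >= y for x, y in pairs) and any(x > y for x, y in pairs)

# Payoffs listed as (heads, tails) for the coin example.
ticket_one = [1, 0]
ticket_two = [0, 2]

print(quantile_dominates(ticket_two, ticket_one))   # True
print(statewise_dominates(ticket_two, ticket_one))  # False: pays less on heads
```

Ticket two is better at every percentile yet worse if the coin lands heads, which is exactly the distinction Christian draws between the distribution of outcomes and the outcome in a particular state.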

The scope of longtermism

Christian Tarsney: There are two motivations for thinking about this. One is a worry that I think a lot of people have — certainly a lot of philosophers have — about longtermism, which is that it has this flavor of demanding extreme sacrifices from us. That maybe, for instance, if we really assign the same moral significance to the welfare of people in the very distant future, what that will require us to do is just work our fingers to the bone and give up all of our pleasures and leisure pursuits in order to maximize the probability at the eighth decimal place or something like that of humanity having a very good future.

Christian Tarsney: And this is actually a classic argument in economics too, that the reason that you need a discount rate, and more particularly, the reason why you need a rate of pure time preference, why you need to care about the further future less just because it’s the further future, is that otherwise you end up with these unreasonable conclusions about what the savings rate should be.

Robert Wiblin: Effectively we should invest everything in the future and kind of consume nothing now. It’d be like taking all of our GDP and just converting it into more factories to make factories kind of thing, rather than doing anything that we value today.
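To make the economics point concrete, here is a small sketch of how a constant rate of pure time preference ρ shrinks the weight placed on welfare t years away, using the standard exponential form 1/(1 + ρ)^t. The rates and horizons are illustrative assumptions, not figures from the episode.

```python
def pure_time_preference_weight(rho, years):
    """Weight on welfare `years` from now under a constant annual rate of
    pure time preference `rho` (exponential discounting)."""
    return 1.0 / (1.0 + rho) ** years

# Illustrative rates and horizons only.
for rho in (0.0, 0.01, 0.03):
    weights = [pure_time_preference_weight(rho, t) for t in (100, 500, 1000)]
    print(f"rho={rho:.2f}:", ", ".join(f"{w:.2e}" for w in weights))
```

At ρ = 0 every weight is 1, so welfare a thousand years out counts as much as welfare today; at 3% a year it is weighted on the order of 10^-13. With a zero rate and a very long future ahead, the distant future's share of total value can swamp the present's, which is the source of the extreme savings conclusions Christian and Rob describe.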

Christian Tarsney: Yeah, exactly. Both in philosophy and in economics, people have thought, surely you can’t demand that much of the present generation. And so one thing we wanted to think about is, how much does longtermism, or how much does a sort of temporal neutrality, no rate of pure time preference actually demand of the present generation in practice? But the other question we wanted to think about is, insofar as the thing that we’re trying to do in global priorities research, in thinking about cause prioritization, is find the most important things and draw a circle around them and say, “This is what humanity should be focusing on,” is longtermism the right circle to draw?

Christian Tarsney: Or is it maybe the case that there’s a couple of things that we can productively do to improve the far future, for instance reduce existential risks, and maybe we can try to improve institutional decision making in certain ways, and other ways of improving the far future, well, either there’s just not that much we can do or all we can do is try to make the present better in intuitive ways. Produce more fair, just, equal societies and hope that they make better decisions in future.

Robert Wiblin: Improve education.

Christian Tarsney: Yeah, exactly. Where the more useful thing to say is not we should be optimizing the far future, but this more specific thing, okay we should be trying to minimize existential risks and improve the quality of decision making in national and global political institutions, or something like that.

The value of the future

Christian Tarsney: There is this kind of outside view perspective that says if we want to form rational expectations about the value of the future, we should just think about the value of the present and look for trend lines over time. And then you might look at, for instance, the Steven Pinker stuff about declines in violence, or look at trends in global happiness. But you might also think about things like factory farming, and reach the conclusion that actually, even though human beings have been getting both more numerous and better off over time, the net effect of human civilization has been getting worse and worse and worse, as we farm more and more chickens or something like that.

Christian Tarsney: I’ll say, for my part, I’m a little bit skeptical about how much we can learn from this, because outside-view, extrapolative reasoning makes sense when you expect to remain in roughly the same regime for the time frame that you’re interested in. But I think there are all sorts of reasons why we shouldn’t expect that. For instance, there’s the problem of converting wealth into happiness, which we just haven’t really mastered, because, well, maybe we don’t have good enough drugs or something like that. We know how to convert humanity’s wealth and resources into cars. But we don’t know how to make people happy that they own a car, or as happy as they should be, or something like that.

Christian Tarsney: But that’s in principle a solvable problem. Maybe it’s just getting the right drugs, or the right kinds of psychotherapy, or something like that. And in the long term it seems very probable to me that we’ll eventually solve that problem. And then there’s other kinds of cases where the outside view reasoning just looks kind of clearly like it’s pointing you in the wrong direction. For instance, maybe the net value of human civilization has been trending really positively. Humanity has been a big win for the world just because we’re destroying so much habitat that we’re crowding out wild animals who would otherwise be living lives of horrible suffering. But obviously that trendline is bounded. We can’t create negative amounts of wilderness. And so if that’s the thing that’s driving the trendline, you don’t want to extrapolate that out to the year 1 billion or something and say, “Well, things will be awesome in 1 billion years.”

Externalism, internalism, and moral uncertainty

Christian Tarsney: Yeah, so unfortunately, internalism and externalism mean about 75 different things in philosophy. This particular internalism and externalism distinction was coined by a philosopher named Brian Weatherson. The way that he conceives the distinction, or maybe my paraphrase of the way he conceives the distinction, is basically an internalist is someone who says normative principles, ethical principles, for instance, only kind of have normative authority over you to the extent that you believe them. Maybe there’s an ethical truth out there, but if you justifiably believe some other ethical theory, some false ethical theory, well, of course the thing for you to do is go with your normative beliefs. Do the thing that you believe to be right.

Christian Tarsney: Whereas externalists think at least some normative principles, maybe all normative principles, have their authority unconditionally. It doesn’t depend on your beliefs. For instance, take the trolley problem. Should I kill one innocent person to save five innocent people? The internalist says suppose the right answer is you should kill the one to save the five, but you’ve just read a lot of Kant and Foot and Thomson and so forth and you become very convinced maybe in this particular variant of the trolley problem at least, that the right thing to do is to not kill the one, and to let the five die. Well, clearly there is some sense in which you should do the thing that you believe to be right. Because what other guide could you have, other than your own beliefs? Versus the externalist says well, if the right thing to do is kill the one and save the five, then that’s the right thing to do, what else is there to say about it?

Robert Wiblin: Yeah. Can you tie back what those different views might imply about how you would resolve the issue of moral uncertainty?

Christian Tarsney: The externalist, at least the most extreme externalist, basically says that there is no issue of moral uncertainty. What you ought to do is the thing that the true moral theory tells you to do. And it doesn’t matter if you don’t believe the true moral theory, or you’re uncertain about it. And the internalist of course is the one who says well no, if you’re uncertain, you have to account for that uncertainty somehow. And the most extreme internalist is someone who says that whenever you’re uncertain between two normative principles, you need to go looking for some higher-order normative principle that tells you how to handle that uncertainty.

Articles, books, and other media discussed in the show

Christian’s work

- Thank goodness that’s Newcomb: The practical relevance of the temporal value asymmetry

- Future bias in action: Does the past matter more when you can affect it?

- Exceeding expectations: stochastic dominance as a general decision theory

- The epistemic challenge to longtermism

Other links

- The big problem with the Apple Watch is that time is an illusion, Vox

- A paradox for tiny probabilities and enormous values, GPI working paper by Nick Beckstead and Teruji Thomas

- Fixed-point solutions to the regress problem in normative uncertainty, by Phil Trammell

- Lincoln-Douglas-style high school debate (this one judged by Christian)

- The Berry paradox

- Other paradoxes of self-reference, Stanford Encyclopedia of Philosophy

Transcript

Rob’s intro [00:00:00]

Hi listeners, this is the 80,000 Hours Podcast, where we have unusually in-depth conversations about the world’s most pressing problems, what you can do to solve them, and whether or not the past actually exists. I’m Rob Wiblin, Head of Research at 80,000 Hours.

The Global Priorities Institute at Oxford University has led to some of our most popular episodes in the past, thanks to Hilary Greaves and Will MacAskill — and in this episode we’re back for more fundamental thinking about what matters most with their colleague Christian Tarsney.

I was slightly worried this episode would be a bit too technical, but Christian turned out to be a great communicator who was able to zero in on the parts of his papers that really matter to those of us trying to make the world a better place.

Most importantly, I think this episode may contain a real solution to the problem of fanaticism and Pascal’s mugging cases, which have in recent years been used to challenge the merit of using expected value to make decisions in high-stakes situations.

I came into this interview not really understanding Christian’s research, but left able to explain it to my housemates, which counts as serious progress in my mind.

As always, we’ve got links to learn much more on the page associated with this episode, as well as a transcript and summary of key points. If your podcasting software allows it, we also support chapters so you can skip to whichever part of the conversation interests you most.

Without further ado, here’s Christian Tarsney.

The interview begins [00:01:20]

Robert Wiblin: Today, I’m speaking with Christian Tarsney. Christian is a philosopher at Oxford University’s Global Priorities Institute where he works with previous 80,000 Hours podcast guests Hilary Greaves and Will MacAskill. He did his PhD at the University of Maryland on how to make rational decisions when you’re uncertain about fundamental ethical principles, and his research interests include ethics and decision theory, as well as effective altruism and political philosophy. He’s published papers on — among many other things — the use of discount rates for climate policy and our attitudes towards past and future experiences. Fun stuff. Thanks for coming on the podcast, Christian.

Christian Tarsney: Thanks, Rob. Great to be here.

Robert Wiblin: I hope to get to talk about moral fanaticism and epistemic challenges that people have made to longtermism. But first, what are you working on at the moment and why do you think it’s important?

Christian Tarsney: So broadly, I’m a researcher in philosophy at the Global Priorities Institute, and we are trying to build a field of global priorities research, which means thinking about how altruistically motivated agents should use their resources to do the most good — and more specifically, what causes or problems they should focus on. At the moment we’re focused on building that field in philosophy and economics and trying to recruit the tools of those disciplines to answer questions that we think are really important. We think this is important because if we can come up with better answers to these questions, then hopefully that’ll influence what people actually do when they’re deciding where to allocate their resources.

Christian Tarsney: I think as a philosopher, you always have this background worry, are we actually improving our understanding of anything or are we just spinning our wheels? But optimistically, I think we’ve made some progress and are continuing to make progress on the low-hanging fruit because not a lot of people have thought really explicitly about this question of how to use resources to do the most good and how to prioritize among the many things that seem important and pressing. More specifically, my own research interests at the moment… I have a few things on my plate, but the things that are really gripping me, number one are epistemic issues to do with predicting and predictably influencing the far future. So insofar as at least one of the most important things we want to do with our resources is make the world a better place in the very long term, we want to be able to predict the long-term effects of our actions.

Christian Tarsney: And we just have very little empirical information on our ability to predict or predictably influence the future on the scale of centuries or millennia. It’s hard to see how we could have that data. And so we have to do some a priori speculating or modeling to try to figure out how we can do this well. And then the second related question that I’m interested in is, well, suppose it turns out that we have a limited ability to predict the far future, but we have enough that in expectation the far future really matters, so we can make a big difference to the expected value of the far future. But most of that expected value comes from tiny probabilities of having enormous, really persistent effects. Should we just naively maximize expected value in those situations? Or are there some other decision rules that apply when we’re dealing with those extreme probabilities? So those are two problems that seem pressing from the standpoint of cause prioritization, and are also neglected and hopefully tractable with the tools of philosophy and economics.

Future bias [00:04:33]

Robert Wiblin: Beautiful. Alright. Yeah. We’ll return to all of these issues that you raised through the course of the conversation, and also check in on how the field of global priorities research is going later on, but let’s waste no time getting into an interesting philosophical issue that you’ve looked into in the past, which is called future bias. You’ve got two papers out on this topic, called Thank goodness that’s Newcomb: The practical relevance of the temporal value asymmetry and Future bias in action: Does the past matter more when you can affect it? First off, what is future bias, for people who are not familiar with it?

Christian Tarsney: Broadly, future bias or the bias towards the future or the temporal value asymmetry is this phenomenon that people seem to care more about their future experiences than their past experiences. And that means, among other things, that you’d prefer — all else being equal — to have a pleasant or positive experience in the future, rather than the past. And you’d prefer to have a painful or a negative experience in the past, rather than the future. So there’s a number of cases or thought experiments that illustrate this, but a famous one from Derek Parfit goes like this: Imagine that you’re going to the hospital for an operation. And the operation requires you to be conscious and it will be very painful, but they’ll give you a drug afterwards to temporarily forget about it. So when you wake up after the operation, you won’t immediately remember that it’s happened. And so you wake up in the hospital and you can’t remember whether you’ve had the operation. And you call the nurse and the nurse comes over and you say, “Have I had my operation yet?”

Christian Tarsney: And they look at the foot of your bed, where there are two different charts for two patients. And they say, “Well, you’re one of these two, I don’t know which one is you. One of these patients had a three-hour operation yesterday and it was very long and painful and difficult, but it was a complete success. And that patient will be fine going forward. The other patient is due to have a one-hour operation later today, which will be much less painful and also expected to turn out well and so forth.” And the question is which patient would you rather be? And most people have the intuition that you would rather be the patient who had the three-hour operation yesterday rather than the one-hour operation later today, because then the pain is in the past.

Robert Wiblin: Yeah.

Christian Tarsney: So what’s odd about this is of course, normally we prefer less pain rather than more pain. In this case, we prefer more pain just because the pain would be in the past rather than the future.

Robert Wiblin: Yeah. So that feels very intuitive. I think to most people that they’d rather have had bad experiences in the past than have bad experiences coming up. What’s problematic about it? Is there some tension between that and maybe like other beliefs or commitments that we have?

Christian Tarsney: Yeah. So a few arguments potentially can be made for the irrationality of future bias. One is just that the burden of proof is on the person who wants to defend or justify future bias to explain what the relevant difference is between the past and the future, such that we should care more about the one than the other. And it turns out that this is just surprisingly difficult to do. You can contest whether the burden of proof actually falls that way. But for instance, there’s this famous argument from Parfit called future Tuesday indifference. He says, “Look, just imagine someone who is normal in every respect, except that they don’t care about what happens to them on future Tuesdays. So if they can have a one-hour operation next Monday or a three-hour operation next Tuesday, they’ll opt for the three-hour operation just because it’s on a Tuesday.”

Christian Tarsney: And we clearly think there’s something normatively defective about that person. I think many of us would be inclined to say they’re irrational just because something’s on a future Tuesday. Why is that a reason to care about it less? So similarly, just because an event is in the past, why should we care about it less?

Robert Wiblin: Okay. I guess I feel like it seems very natural that humans would have this intuition, or that we would have evolved or learned this intuition, because our past experiences have already happened, aren’t really changeable, and aren’t going to happen again, so it seems like you can’t really have any causal effect on them. So to some extent it’s water under the bridge and it makes practical sense to ignore the past? Or I mean, maybe learn from the past, but ignore things that happened in the past because we’re not going to be able to affect them in the same way that we can affect something that might happen in the future. Is that a good enough reason not to worry about them? Or maybe it’s a good reason not to worry too much about the past, but in these hypothetical odd scenarios that we paint, where you can in some sense have an effect on the past, those are the cases where you should worry about your intuitions getting polluted by the normal situation, where the past is unaffectable?

Christian Tarsney: Yeah. So I think a lot of people do take the view that our inability to affect the past has something centrally to do with our indifference toward past experiences. And actually in this paper Future bias in action recently published by myself and some collaborators at the University of Sydney, we tried to test this experimentally. And we found that in fact, when you ask people to imagine hypothetical scenarios where they can affect their own past experiences, they care about their past experiences more, which suggests that your inability to affect the past is one reason why you feel indifferent to it.

Christian Tarsney: But at the same time, if we’re asking the normative question of whether we should be indifferent to the past, then there are various reasons to think that our inability to affect the past is not a reason to judge that our past experiences don’t matter as much as our future experiences. For instance, if it were, then you should similarly be indifferent to inevitable future experiences: if you know for sure that something bad is going to happen to you tomorrow, you shouldn’t care about it. And in fact, we don’t have that kind of attitude. So that seems like at least a kind of inconsistency.

Robert Wiblin: Yeah. If I recall from that experiment that you did, the unaffectability of the past explained part of people’s different reactions.

Christian Tarsney: Yeah.

Robert Wiblin: But then when you tried to equalize the unaffectability, there was still some future bias present.

Christian Tarsney: Yeah, that’s right. So what we ended up concluding in that paper is that there are probably multiple explanations for future bias. The other explanation that people have prominently proposed is that we care more about the future because we have the intuitive belief that we’re moving through time. In some sense that’s hard to explicate, we have this intuition that we’re moving away from the past and towards the future, and that our future experiences are ahead of us rather than behind us, and that this makes it rational to care more about the future than the past.

Robert Wiblin: So it’s like time is kind of playing a videotape, and the things that haven’t played yet are still coming up. And so you can still experience that pain, whereas the stuff in the past is somehow irrelevant or just wiped off of the ethical picture somehow.

Christian Tarsney: Yeah, that’s right. I mean, it turns out to be very hard to explain, first of all, this idea of moving through time, or of time having a direction or a flow, and second, why that should make it rational to care less about the past than the future, in a way that doesn’t just become a roundabout way of saying, well, the past is in the past and the future is in the future. But a lot of people do see an intuitive connection here, including me.

Philosophy of time [00:11:17]

Robert Wiblin: Yeah. Okay. It sounds like we might have to take a detour into the philosophy of time, or understand what different models people have of the nature of time and the present in order to dissect whether this idea makes any sense. You want to give an intro to that?

Christian Tarsney: Sure. So the central debate in the philosophy of time over the last 100 years or so is whether this idea of time moving or flowing, or of us moving from the past towards the future, corresponds to any objective feature of reality. And this is a debate that’s also playing out in physics, for instance. Our best physical theories maybe give us some indications one way or another, but don’t seem to settle it, and you have physicists as well as philosophers on either side of this debate. Various arguments have been proposed either way, but the debate is still very much unsettled. And it’s also a little bit unclear exactly what the debate is about.

Christian Tarsney: So one thing, for instance, that people seem to disagree about, is the present moment, the ‘now.’ Is there one moment in time that’s objectively now, and that moves from earlier times towards later times? Or is it just that, for instance, the current time slice of me happens to be located at this location in time, and when I say ‘now,’ well ‘now’ just works like ‘here’ as a way of indicating the place in time where I happen to be located. So that’s one aspect of this debate that people try to get a handle on.

Robert Wiblin: Right. I don’t know that much about the philosophy of time, but I think my understanding is that there are three big theories that people put forward with different levels of plausibility. One is presentism, which you were describing, which says that only the present instant is ‘actual,’ I think is the term that we use. I guess I’m not entirely sure what ‘actual’ means in this context; maybe that’s what people debate a lot. People are like, only the present instant is actual. Then you’ve got the ‘growing block’ theory of time, where all of the past exists or is actual because it has been locked in, because it’s already happened. And I guess the present instant exists as well, and that instant is just constantly being added to this recording of time that gets locked in. But in that one, the future isn’t yet actual.

Robert Wiblin: And then I guess you have eternalism, which is the idea that the past, the present, and the future are all actual to the same degree. It’s just that we happen to be like… My personal self happens to be passing through this instant, but all of them exist in some sense. And I guess on that view there would be symmetry between things that happened in the past and things that will happen in the future in terms of how ethically weighted they are.

Christian Tarsney: Yeah, that’s basically right. But there are two separate debates here that are worth teasing apart. One is about what philosophers call the ontology of time: what moments in time or parts of time exist. And that’s the debate that you were describing. If you’re a presentist or a growing block theorist, then you’re basically committed to the passage of time and the movement from the past to the future being in some sense objectively real. But if you take this other view, eternalism, you think the past, the present, and the future are all equally real, and that doesn’t necessarily commit you one way or another on this debate about the passage of time. So you can believe that the past and the future are real but that the present is still uniquely and objectively present. It has some special status. So there’s what people call the ‘moving spotlight’ theory, which says there is this eternal block of time, with past, present, and future events all existing, but one moment in the block is illuminated at any given time. And that’s the present.

Robert Wiblin: I see, interesting. I guess on the growing block model, where what actually exists in this ontological sense is kind of increasing as time passes, that would seem to suggest in some way that maybe you care more about the past, right? Because the past is kind of actual and locked in. Whereas the future is this ethereal thing that hasn’t happened yet. I guess maybe you could say there’s a symmetry if the future will happen. So at some point it will matter, but inasmuch as it’s uncertain, the past matters potentially even more.

Christian Tarsney: Yeah. This is something that philosophers have remarked on repeatedly: it’s kind of surprising that nobody defends a ‘shrinking block’ theory, which says the present and the future are real and the past isn’t. That would be a really neat explanation for why the future matters more than the past. But interestingly, we have on the one hand this very strong intuition that the future matters more than the past, and on the other hand, many people have the intuition that the past is real in a way that the future isn’t.

Robert Wiblin: So what kind of resolutions have people proposed to this? And how do they interact with people’s broader philosophical attempts to make sense of the nature of time?

Christian Tarsney: Yeah, well, so there’s an ongoing debate — as there usually is in philosophy — about whether the bias towards the future is rational or irrational. And maybe at a finer level of grain, whether it’s rationally required to care more about the future or rationally required to be neutral between different times, or you’re just rationally permitted to do whatever you want. And the latest set of moves in this debate have involved pointing out various ways in which whether you care about the past or not can affect your choices. So the obvious boring case is, well, what if there’s backward time travel? And you could actually retro-causally affect your past experiences? But there are other interesting cases. So for instance, if you are risk averse, then whether you’re biased towards the future or not can make a difference to your choices. Because whether one option is riskier or less risky than another can depend on whether you’re counting the stuff in the past that’s already baked in — and it might, for instance, be correlated in certain ways with what’s going to happen in the future.

Robert Wiblin: Another approach that one might take to this would be to reject what you were saying earlier, that the burden of proof is on the person who says that they care more about the future. And you might say, well, maybe this is just like, rather than being something that seems more irrational, like the future Tuesday case, where you just, for some reason that you can’t explain, don’t care about Tuesdays, this is more like a taste thing. Where it’s like, I like apples, but I don’t like oranges. We don’t think that you have a special burden of proof there. It’s more just a matter of taste, and a matter of personal preference. Is it plausible to run that line of argument? That it’s just like, personally, I just care about the future, and I don’t care about the past, and that’s just how I am and I don’t have to justify myself?

Christian Tarsney: I think that’s plausible. There are a couple of arguments you could mount against it. One question or complication is whether the bias towards the future also affects your other-regarding or altruistic preferences. This is something people seem to have different intuitions about. Some people think that the bias towards the future is exclusively first-personal. So when I’m thinking about other people’s experiences, people I care about, I don’t particularly care whether their pain is in the past or the future. You can manipulate people’s intuitions about this. If you think about someone far away on the other side of the world, maybe it doesn’t seem to matter that much whether their pain happened yesterday or will happen tomorrow. But if it’s, say, your partner who you live with, you’ll feel better if they had their painful operation yesterday rather than having it today.

Christian Tarsney: And of course, if you are biased towards the future, at least in some sort of other-regarding altruistic cases, then it seems like there’s a kind of higher burden of justification. It can’t just be your personal preference that their pains be in the past rather than the future. There’s also the set of ways in which the bias towards the future might affect your choices. So for instance, if you’re biased towards the future and risk averse in a particular way, you can be money pumped. So you can make choices that will result in you being definitely worse off than you otherwise might’ve been. And you might think any pattern of preferences that allows you to be money pumped is ipso facto irrational, and not just a matter of taste.

Money pumping [00:18:53]

Robert Wiblin: Yeah. Can you explain this concept of money pumping? It shows up a lot in discussions of ethics and decision theory and rationality and so on, but I think probably not everyone has heard the idea.

Christian Tarsney: Yeah. So a money pump basically is a sequence of choices where an agent with particular dispositions will choose a series of options that leave them definitely worse off than some other series of options they might have chosen. The classic example is if you have cyclic preferences. Say there are apples and oranges and bananas, and I prefer an apple to an orange, an orange to a banana, and a banana to an apple. Then, if I have an apple, you can say, “I’ll trade you your apple for a banana if you pay me one cent.” And I take that deal because I prefer bananas to apples. And then you say, “Well, I’ll give you an orange in exchange for that banana, if you give me one cent.” And similarly you can then get me to trade back for the apple, and you’ve gotten three cents out of me, and I’m just stuck with the apple that I had in the first place. So all sorts of patterns of preference can give rise to these sequences of choices that leave you definitely worse off.
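The apple, banana, and orange cycle can be simulated directly. This is a minimal sketch assuming the agent accepts any trade up to a strictly preferred fruit in exchange for a one-cent fee; `PREFERENCES`, `prefers`, and `run_money_pump` are hypothetical names chosen for illustration.

```python
# Cyclic strict preferences from the example: each pair is (better, worse).
# apple > orange, orange > banana, banana > apple
PREFERENCES = {("apple", "orange"), ("orange", "banana"), ("banana", "apple")}

def prefers(a: str, b: str) -> bool:
    """True if the agent strictly prefers fruit a to fruit b."""
    return (a, b) in PREFERENCES

def run_money_pump(start: str, offers: list[str], fee_cents: int = 1) -> tuple[str, int]:
    """Offer trades in sequence; the agent pays the fee for any strictly preferred fruit."""
    holding, paid = start, 0
    for offered in offers:
        if prefers(offered, holding):
            holding, paid = offered, paid + fee_cents
    return holding, paid

# One full cycle: apple -> banana -> orange -> apple
print(run_money_pump("apple", ["banana", "orange", "apple"]))
```

Each full cycle returns the agent to the apple and costs three cents, and nothing in the agent’s preferences stops the pump from running again.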

Robert Wiblin: Yeah. Sometimes people defend the view that it’s acceptable in some way to hold a position where you can be money pumped. Often in philosophy you face unpleasant trade-offs: you have to choose a position that has one weakness, or a position that has another weakness. And being vulnerable to money pumping is one of the weaknesses that a view might have. It’s an undesirable property, but not necessarily a completely decisive one if every other option also has some unpleasant side effects.

Christian Tarsney: Yeah. I think that’s right. There’s plenty of debate about how decisive money pumps should be. One distinction that’s worth making is between what are sometimes called ‘forcing’ versus ‘non-forcing’ money pumps. So take something like having incomplete preferences. If I prefer apples to bananas, but oranges are just incomparable to both, like I have no preference between apples or bananas on the one hand and oranges on the other, then it seems naively like it’s rationally permissible for me to make a series of choices that’ll leave me worse off, but it’s also rationally permissible for me not to do that. And you can say, well, there’s just an extra rule of rationality that says I shouldn’t do the sequence of things that will constitute a money pump. But in other cases, like the intransitivity case, your preferences seem to commit you or force you to do the thing that leaves you definitely worse off. And it seems at least intuitively compelling that having preferences that force you or commit you to make yourself definitely worse off is a significant theoretical cost.

Robert Wiblin: Yeah. There’s something more seriously problematic there.

Christian Tarsney: Yeah.

Time travel [00:21:22]

Robert Wiblin: Okay. So we’ve discussed a couple of different approaches that people might take to resolve this issue, or a couple of different positions that people might take. How do people respond to a time travel case where you imagine a world where time travel is possible? You can go back into the past and change how things went, and then make people experience less suffering in the past. Does that tend to make a big difference to people’s attitudes, to how important the past is to them?

Christian Tarsney: So this is what we investigated in this paper Future bias in action and we found that it does, to some extent. So it doesn’t in aggregate make people perfectly time neutral, people still on average care more about the future than the past, but the asymmetry becomes weaker when you consider backward time travel cases.

Robert Wiblin: Yeah. Interesting. I guess it’s a bit hard to know how to concretize the time travel case, because you imagine like, okay, so you can go back in time and then run things again and have them go better. But then I’m like, does that mean it’s happened twice? Does it now get double value? Or am I erasing the original run-through and causing it not to have had any consequences? It almost raises as many questions as it answers.

Christian Tarsney: Yeah. Your theory of time travel definitely makes a difference here. You might think, well, if you think of backward time travel in a way where events, say, happened the first time around in the past, but then you can go back and erase them, there’s this additional question: Do the events that you erased still matter, or are they no longer part of the timeline? I think it’s fair to say that most philosophers are inclined to think that with time travel — insofar as it’s metaphysically possible — there has to be one consistent timeline. And so anything that you do if you go back into the past was already part of the past, but you might have limited information.

Christian Tarsney: In the case that we described in our experiment, for instance, you know that you were tortured for some period of time in the past, but you don’t remember exactly how long you were tortured or how many times you were subjected to an electric shock. And you have the opportunity to affect that retro-causally, to determine whether you had 1,000 shocks or 1,010 shocks, or something like that. But you know that you’re not erasing the past; you’re just influencing what the past already was.

Robert Wiblin: Philosophers think that time travel, or I guess physicists think that time travel is kind of conceptually possible, or like, I guess I should say retro-causality is possible, but you need to have a self-consistent loop—

Christian Tarsney: Mm-hmm.

Robert Wiblin: —where the past affects the present which causes the present to cause the past. And then you’ve got a consistent series of causes that all fit together like puzzle pieces. I don’t know whether you want to explain the philosophy of time travel, but is that right?

Christian Tarsney: Yeah. I’m not particularly… I’m venturing a little bit outside my area of expertise, but general relativity has solutions that involve backwards time travel, with what are called closed timelike curves: paths through spacetime that loop back into their own past. But yeah, those solutions all involve one self-consistent timeline rather than, for instance, branching timelines, or erasing events that originally happened in the past, or anything like that.

Robert Wiblin: Yeah. I think this comes up not just in philosophy, because there are some theories within physics where, at the subatomic level, you could end up with retro-causal stuff, and then you want to figure out, well, is that self-consistent? Or is that going to violate some other fundamental principle of physics?

Decision theory [00:24:36]

Robert Wiblin: Okay. Coming back to future bias though, let’s talk about the interaction between future bias and decision theory, which is something that you looked into. First off, for people who aren’t familiar, what is decision theory, in brief? If it’s possible to do this one in brief.

Christian Tarsney: Sure. So decision theory is the theory of how people either do or should make decisions. So descriptive decision theory studies how people do make decisions, normative decision theory studies how they should make decisions. There are a number of questions that decision theorists ask. So there’s no one question that centrally characterizes the discipline. One major question is how we respond to risk or uncertainty. So for instance, should we maximize expected value or expected utility, or are we allowed to be risk averse in ways that violate the axioms of expected utility theory? There’s also this famous debate between evidential decision theorists and causal decision theorists about how to act in cases where your choices give you some information about the pre-existing state of the world.

Robert Wiblin: Yeah. Is there a simple thought experiment that kind of elucidates the difference between evidential and causal decision theory?

Christian Tarsney: Yeah. So the classic case is called Newcomb’s problem. The idea is that there is a predictor who’s just very good at analyzing human motivations and predicting human choices. And the predictor presents you with the following choice: There are two boxes in front of you. One of them is transparent, and you can see it contains $1,000. The other box is opaque. And what the predictor tells you is that your options are either to take just the opaque box and get whatever’s inside there, or to take the opaque box and the transparent box together. But if I predicted that you would take both boxes, then I left the opaque box empty. And if I predicted that you would take only the opaque box, I put $1 million inside. So evidential decision theorists say, well, if the predictor is really that great, either infallible at predicting my choices or just very, very good, then if I take the opaque box, that tells me that the predictor certainly or almost certainly predicted that I would do that, and put $1 million inside. So I end up with $1 million. Whereas if I take both boxes, then I’ll only end up with $1,000, because the predictor won’t have put the $1 million inside.

Christian Tarsney: Whereas a causal decision theorist says, okay, but your choice makes no difference causally to whether there’s $1 million in the opaque box or not. There either is or there isn’t. And in either case, taking both boxes leaves you $1,000 richer than you would have been had you taken only the opaque box. So the rational thing to do is take both boxes.
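The disagreement can be put as two expected-value calculations. This sketch assumes a predictor with a hypothetical accuracy `accuracy` (the probability that the prediction matches your choice) and, for the causal calculation, a fixed probability `prob_full` that the opaque box already contains $1 million, which by assumption does not depend on what you choose. The function names are illustrative only.

```python
MILLION = 1_000_000
THOUSAND = 1_000

def edt_values(accuracy: float) -> dict[str, float]:
    """Evidential expected payoffs: your choice is evidence about the prediction."""
    return {
        "one-box": accuracy * MILLION,                    # predictor probably foresaw one-boxing
        "two-box": (1 - accuracy) * MILLION + THOUSAND,   # box probably empty, plus the visible $1,000
    }

def cdt_values(prob_full: float) -> dict[str, float]:
    """Causal expected payoffs: the contents are already fixed, whatever you choose."""
    return {
        "one-box": prob_full * MILLION,
        "two-box": prob_full * MILLION + THOUSAND,
    }

def best(values: dict[str, float]) -> str:
    """Option with the highest expected payoff."""
    return max(values, key=values.get)

for accuracy in (0.5, 0.9, 0.99):
    print(f"EDT, accuracy {accuracy}: {best(edt_values(accuracy))}")
for prob_full in (0.1, 0.5, 0.9):
    print(f"CDT, P(full) {prob_full}: {best(cdt_values(prob_full))}")
```

Under these assumptions the evidential calculation favors one-boxing whenever accuracy exceeds 0.5005, while the causal calculation favors two-boxing by $1,000 for every value of `prob_full`.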

Robert Wiblin: Yeah. I think a thought experiment that feels more intuitive and a bit less sci-fi to me is the smoking lesion problem. Say we find out that a large part of the reason why smokers tend to die young isn’t just that they’re smoking; it’s that there’s some correlation, say genetically, between people who are predisposed to enjoy smoking and have a compulsion to smoke, and people who happen to have a genetic predisposition for brain lesions that can then kill them later in life. So in that case, you’ve got this question: if you find that you enjoy smoking and want to smoke and decide to smoke, that gives you evidence that you’re more likely to have this deadly brain lesion disease, for some genetic correlation reason.

Robert Wiblin: But then should you take that into account in your decision on whether to smoke? Smoking is associated with a lower life expectancy, but not entirely causally through smoking itself. Part of it is just that smoking gives you evidence about something else about yourself. And it’s kind of a bit of a puzzle: smoking predicts a bigger drop in your life expectancy than it actually causes, so should you take that into account? And that one is more intuitive because it doesn’t require anything that’s really outside of what we’re used to experiencing.

Christian Tarsney: Yeah. The smoking lesion case is the classic counterexample to evidential decision theory because, well, I always find it hard to remember what the right intuition is supposed to be here, but most people intuit that the rational thing to do is to smoke, because smoking doesn’t cause cancer. It just gives you information that you’re more likely to have cancer. But there are some complications about the case that make it possible for evidential decision theorists to try to explain it in their own terms.

Robert Wiblin: I’ve got some links to decision theory stuff for people who are interested. What’s the interaction between future bias and decision theory that you’ve looked into?

Christian Tarsney: Well. So the particular connection that I’ve explored in this paper Thank goodness that’s Newcomb is that if you’re an evidential decision theorist, then whether you do or don’t care about the past can affect your choices in ways that don’t require exotic backwards time travel or retro causation or anything like that. So I imagined basically a variant of the Newcomb case where the predictor kidnaps you and subjects you to electric shocks for a period of time. And then they give you the option at the end to shock yourself one more time before you’re released, but they made a prediction in advance about whether you would choose to give yourself that final shock. And if they predicted that you would, they shocked you fewer times over the last week that they were holding you and torturing you. And if they predicted that you wouldn’t, they shocked you more times.

Christian Tarsney: And of course, if you’re an evidential decision theorist and you’re time-neutral, you want to minimize the total number of shocks that you’ve ever experienced. And so you’ll choose to shock yourself now. But if you’re either a causal decision theorist or you’re biased towards the future, then you would not choose to shock yourself.
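The shock variant can be sketched as an expected-cost comparison. The past-shock counts (1,000 if the predictor foresaw you taking the final shock, 1,010 otherwise) are hypothetical, borrowed from the numbers in the time travel experiment Christian describes; the 0.99 predictor accuracy and the 50/50 prior used in the causal calculation are likewise assumptions. `past_weight` is a hypothetical parameter for how much past shocks count relative to future ones (1.0 time-neutral, 0.0 fully future-biased).

```python
FEW_PAST_SHOCKS = 1_000   # hypothetical: predictor foresaw you taking the final shock
MANY_PAST_SHOCKS = 1_010  # hypothetical: predictor foresaw you declining
FINAL_SHOCK = 1

def expected_past_shocks(take_final: bool, theory: str, accuracy: float, prior: float) -> float:
    if theory == "EDT":
        # Evidential: your choice is evidence about what was predicted.
        p_predicted_take = accuracy if take_final else 1 - accuracy
    else:
        # Causal: the past is fixed, so the same prior applies whichever act you consider.
        p_predicted_take = prior
    return p_predicted_take * FEW_PAST_SHOCKS + (1 - p_predicted_take) * MANY_PAST_SHOCKS

def decide(theory: str, past_weight: float, accuracy: float = 0.99, prior: float = 0.5) -> str:
    cost = {}
    for take_final in (True, False):
        past = expected_past_shocks(take_final, theory, accuracy, prior)
        future = FINAL_SHOCK if take_final else 0
        cost[take_final] = past_weight * past + future
    return "take the final shock" if cost[True] < cost[False] else "decline"

for theory in ("EDT", "CDT"):
    for past_weight in (1.0, 0.0):
        print(f"{theory}, past_weight={past_weight}: {decide(theory, past_weight)}")
```

Only the time-neutral evidential agent takes the final shock here; both the future-biased agent and the causal agent decline, matching the pattern described above.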

Robert Wiblin: Okay. What should we make of that?

Christian Tarsney: Well, one thing you could make of it is that this is one more case where evidential decision theory tells us something silly. And so we should be causal decision theorists. Of course, then you can rerun a similar case, which is basically what we did in this experimental paper, where you use backwards time travel rather than predictors to give people the option of affecting their own past experiences. And many of the same sort of philosophical issues come up.

Christian Tarsney: My take in the original paper was that our intuitions about the irrelevance or our indifference towards our past experiences don’t change very much when we’re considering these cases where we can ‘affect’ our past experiences, or our choices give us evidence about our past experiences. So my own take was that this undercuts the idea that the reason we don’t care about the past is because it’s practically irrelevant. But then this experimental paper that we did actually finds that people do change their intuitions or their judgements, at least on average in these cases. So my own philosophical take turned out to be undercut anyway, by our experimental results.

Robert Wiblin: Interesting. Yeah, I feel in that case, I have the intuition that you want to do the thing that reduces the total amount of electric shocks over all periods of time, which I guess is what you found other people felt at least to some degree. And I wonder whether there’s something that’s going on where it kind of depends on whether you’re thinking about it from the prudential selfish perspective of you at this instant in time, or whether you’re thinking about what would be a better world, all things considered. And it seems like what would be a better world all things considered is less torture in total. Maybe like what’s best for me right now is minimizing the amount of future torture that I’m going to experience. But then it seems like maybe we’re running up against a tension between our prudential perspective and then our ethical commitments. And this is creating a tension that we somehow have to resolve.

Christian Tarsney: Yeah, that could be. If you think that we are generally time-neutral when we’re thinking about other people, and that in this case you can put on your impartial altruist hat when thinking about your past self and just treat them as another person that you’re concerned with, then maybe that is one reason why you would be more inclined to accept additional future pain to avoid a greater amount of past pain. But as I mentioned earlier, it is non-obvious whether we’re generally time-neutral when we’re thinking about other people. So one view you could take that’s not completely counterintuitive is that the past as a whole is just dead and gone, not just my experiences but other people’s experiences. And so what we should be thinking about as altruists is not making the world as a whole, across all of time and space, a better place, but making the future better, because that’s what’s still out there to be experienced.

Eternalism [00:32:32]

Robert Wiblin: Okay. I want to push on from this in just a second, but it sounded like earlier you were saying that the growing block theory and presentism and eternalism are kind of all still philosophically acceptable, and there are advocates for all of them in philosophy and physics. I kind of understood that there were some thought experiments that had made eternalism, the idea that the past, the present, and the future are all actual in some sense, to be a more dominant view, at least among physicists anyway. Have I misunderstood that?

Christian Tarsney: I think you’re probably right that it’s more dominant among physicists, and probably even more dominant among philosophers, although all of these views still have active defenders. Maybe the most powerful argument, one that has convinced a lot of people, is just that a naive picture of time, where there’s an objective present moment moving from the past towards the future, requires that you be able to chop the universe into time slices in this objective way, where we can say all of these events, at all these different locations across the universe, are the ones that are present right now.

Robert Wiblin: We’re simultaneous.

Christian Tarsney: Right. But special relativity teaches us that whether two events at different locations are simultaneous with each other actually depends on how fast you’re moving. Two people in motion relative to each other will disagree about which events are simultaneous. And so it looks, at least in relativistic physics, like there just couldn’t be a privileged plane of simultaneity such that all of those events are present and nothing else is.
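The relativity of simultaneity can be checked with the standard Lorentz transformation for time, t' = gamma * (t - v*x/c**2). This sketch uses units where c = 1 (years and light-years) and two hypothetical events that are simultaneous in one frame and one light-year apart; observers moving at plus or minus 0.5c along the line joining them disagree about which happened first.

```python
import math

def lorentz_time(t: float, x: float, v: float, c: float = 1.0) -> float:
    """Time coordinate of event (t, x) in a frame moving at velocity v along the x-axis."""
    gamma = 1.0 / math.sqrt(1.0 - (v / c) ** 2)
    return gamma * (t - v * x / c**2)

# Two hypothetical events, simultaneous (t = 0) in the original frame, one light-year apart.
EVENT_A = (0.0, 0.0)  # (t in years, x in light-years)
EVENT_B = (0.0, 1.0)

for v in (0.0, 0.5, -0.5):
    gap = lorentz_time(*EVENT_B, v) - lorentz_time(*EVENT_A, v)
    print(f"v = {v:+.1f}c: t'(B) - t'(A) = {gap:+.3f} years")
```

A negative gap means B happens before A in that frame and a positive gap means after; only the frame with v = 0 calls them simultaneous, and nothing in the physics singles that frame out.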

Robert Wiblin: Yeah. I think that this shows up in ethics elsewhere when you’re thinking about the ethics of the future. Because you can end up with these funny cases where someone cares less about the future. Say you ask them how much they would pay to prevent something terrible happening in 1,000 years, and they say, well, not very much, because it’s so far away in the future. Then you send them away at almost light speed, and they can come back in what is to them only a few minutes or only a few hours, and arrive in effect 1,000 years in the future in this other location. And then the terrible thing happens. And you’re like, what is the amount of time that’s passed? Because this all depends on the speed they were going at, and how far they traveled. So if this is only a few hours away from their perspective, does that mean that the 1,000-year thing doesn’t matter?
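Rob's thought experiment can be made concrete with special-relativistic time dilation. This is a simplified sketch of our own: it assumes the traveler moves at one constant speed for the whole trip (ignoring acceleration and turnaround), and it uses 3 hours as a stand-in for "only a few hours".

```python
import math

HOURS_PER_YEAR = 365.25 * 24  # Julian year, used only for unit conversion

def lorentz_factor(v: float) -> float:
    """gamma = 1 / sqrt(1 - v^2), with v expressed as a fraction of light speed."""
    return 1.0 / math.sqrt(1.0 - v * v)

def traveler_hours(earth_years: float, v: float) -> float:
    """Hours the traveler ages while earth_years pass at home, at constant speed v."""
    return earth_years * HOURS_PER_YEAR / lorentz_factor(v)

def speed_needed(earth_years: float, hours_aged: float) -> float:
    """Constant speed (fraction of c) at which earth_years pass at home
    while the traveler ages only hours_aged."""
    gamma = earth_years * HOURS_PER_YEAR / hours_aged
    return math.sqrt(1.0 - 1.0 / (gamma * gamma))

v = speed_needed(1000, 3)
print(f"required speed: 1 - v/c = {1 - v:.2e}")
print(f"traveler ages about {traveler_hours(1000, v):.2f} hours")
```

The same terrible event is 1,000 years away by the home clock but only a few hours away by the traveler's clock, so a discount rate keyed to elapsed time gives different answers depending on whose clock you use. (At speeds this close to light, floating-point rounding limits the precision of the result to roughly three significant figures.)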

Christian Tarsney: Yeah.

Robert Wiblin: It introduces this peculiar kind of inconsistency.

Christian Tarsney: I think that’s one very good argument against what’s called ‘pure time preference’: the view that the mere passage of time or mere distance in time has ethical significance.

Robert Wiblin: Alright. We’ll put up a link to those papers and people can explore more if they would like, I’m sure there’s plenty more in there. Is there anything that people should take away in their practical life and their decision making altruistically from these past, present, future ethical comparison cases?

Christian Tarsney: I think there’s two things that are worth mentioning. One is altruistically significant, which is, if you think that one of the things we should care about as altruists is whether people’s desires or preferences are satisfied or whether people’s goals are realized, then one important question is, do we care about the realization of people’s past goals, including the goals of past people, people who are dead now? And if so, that might have various kinds of ethical significance. For instance, I think if I recall correctly, Toby Ord in The Precipice makes this point that well, past people are engaged in this great human project of trying to build and preserve human civilization. And if we allowed ourselves to go extinct, we would be letting them down or failing to carry on their project. And whether you think that that consideration has normative significance might depend on whether you think the past as a whole has normative significance.

Robert Wiblin: Yeah. That adds another wrinkle: I guess you could think that the past matters, but if you only cared about experiences, say, then obviously people in the past can’t have different experiences because of things in the future, at least we think not.

Christian Tarsney: Yeah.

Robert Wiblin: So you have to think that the kind of fixed preference states that they had in their minds in the past, it’s still good to actualize those preferences in the future, even though it can’t affect their mind in the past.

Christian Tarsney: Yeah, that’s right. So you could think that we should be future biased only with respect to experiences, and not with respect to preference satisfaction. But then that’s a little bit hard to square if you think that the justification for future bias is this deep metaphysical feature of time. If the past is dead and gone, well, why should that affect the importance of experiences but not preferences? Another reason why the bias towards the future might be practically interesting or significant to people, less from an altruistic standpoint than from a personal or individual standpoint, is this connection with our attitudes towards death, which is maybe the original context in which philosophers thought about the bias towards the future. So there’s this famous argument that goes back to Epicurus and Lucretius that says, look, the natural reason that people give for fearing death is that death marks a boundary of your life, and after you’re dead, you don’t get to have any more experiences, and that’s bad.

Christian Tarsney: But you could say exactly the same thing about birth, right? So before you were born, you didn’t have any experiences. And well, on the one hand, if you know that you’re going to die in five years, you might be very upset about that, but if you’re five years old and you know that five years ago you didn’t exist, people don’t tend to be very upset about that. And if you think that the past and the future should be on a par, that there is no fundamental asymmetry between those two directions in time, one conclusion that people have argued for is maybe we should be sanguine about the future, including sanguine about our own mortality, in the same way that we’re sanguine about the past and sanguine about the fact that we haven’t existed forever. I’m not sure I can get myself into the headspace of really internalizing that attitude. But I think it’s a reasonably compelling argument, and something that maybe some people can do better than I can.

Robert Wiblin: I feel like that’s easy to resolve because I’m just like, yeah, it’s terrible that I didn’t used to exist. It’s terrible that I was born as late as I was. I should have been born 1 billion years earlier and lived through the entire length of it, but there’s not much I can do about that. I can go to the gym and try to live longer, but I can’t go to the gym and try to be born earlier. So it’s kind of water under the bridge, yeah?

Christian Tarsney: Yeah. Right. That could be the conclusion you reach too.

Robert Wiblin: Alright. We’ll stick up links to those papers and people can dig in if they’d like to learn more.

Fanaticism [00:38:33]

Robert Wiblin: Let’s move on and talk about a problem in moral philosophy known as fanaticism. Yeah, what is the problem of fanaticism for those who are not familiar?

Christian Tarsney: Roughly the problem is that if you are an expected value maximizer, which means that when you’re making choices you just evaluate an option by taking all the possible outcomes and you assign them numeric values, the quantity of value or goodness that would be realized in this outcome, and then you just take a probability-weighted sum, the probability times the value for each of the possible outcomes, and add those all up and that tells you how good the option is…

Christian Tarsney: Well, if you make decisions like that, then you can end up preferring options that give you only a very tiny chance of an astronomically good outcome over options that give you certainty of a very good outcome, or you can prefer certainty of a bad outcome over an option that gives you near certainty of a very good outcome, but just a tiny, tiny, tiny probability of an astronomically bad outcome. And a lot of people find this counterintuitive.
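A minimal sketch of risk-neutral expected value maximization as Christian defines it: an option is a list of (probability, value) pairs, scored by the probability-weighted sum. The specific numbers are hypothetical, chosen only to reproduce the two patterns he describes.

```python
from typing import List, Tuple

Option = List[Tuple[float, float]]  # (probability, value) pairs

def expected_value(option: Option) -> float:
    """Probability-weighted sum of outcome values."""
    total = sum(p for p, _ in option)
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"probabilities sum to {total}, not 1")
    return sum(p * v for p, v in option)

# Pattern 1: a tiny chance of an astronomical payoff beats a sure good outcome.
sure_good = [(1.0, 100.0)]
long_shot = [(1e-6, 1e9), (1 - 1e-6, 0.0)]

# Pattern 2: a sure bad outcome beats near-certainty of a very good one,
# because of a tiny chance of an astronomically bad outcome.
sure_bad = [(1.0, -10.0)]
risky_good = [(1 - 1e-9, 1_000.0), (1e-9, -1e15)]

print(expected_value(long_shot) > expected_value(sure_good))
print(expected_value(sure_bad) > expected_value(risky_good))
```

Both comparisons come out `True`: in each case a single improbable outcome with an extreme value decides the choice, which is the behavior Christian says many people find counterintuitive.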

Robert Wiblin: So the basic thing is that very unlikely outcomes that are massive in their magnitude that would be much more important than the other outcomes in some sense end up dominating the entire expected value calculation and dominating your decision even though they’re incredibly improbable and that just feels intuitively wrong and unappealing.

Christian Tarsney: Well, here’s an example that I find drives home the intuition. So suppose that you have the opportunity to really control the fate of the universe. You have two options, you have a safe option that will ensure that the universe contains, over its whole history, 1 trillion happy people with very good lives, or you have the option to take a gamble. And the way the gamble works is almost certainly the outcome will be very bad. So there’ll be 1 trillion unhappy people, or 1 trillion people with say hellish suffering, but there’s some teeny, teeny, tiny probability, say one in a googol, 10 to the 100, that you get a blank check where you can just produce any finite number of happy people you want. Just fill in a number.

Christian Tarsney: And if you’re trying to maximize the expected quantity of happiness or the expected number of happy people in the world, of course you want to do that second thing. But in addition to just the counterintuitiveness of it, there’s a thought like, well, what we care about is the actual outcome of our choices, not the expectation. And if you take the risky option and the thing that’s almost certainly going to happen happens, which is you get a very terrible outcome, the fact that it was good in expectation doesn’t give you any consolation, or doesn’t seem to retrospectively justify your choice at all.
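Christian's thought experiment can be checked exactly. The sketch below assumes each happy life counts +1 and each life of hellish suffering counts -1 (a simplification of "number of happy people minus number of unhappy people"), and it uses Python's `fractions.Fraction` because in floating point `1 - 1e-100` rounds to exactly `1.0`.

```python
from fractions import Fraction

TRILLION = 10**12
P_JACKPOT = Fraction(1, 10**100)  # one in a googol

def ev_safe() -> Fraction:
    """Certainty of one trillion happy lives."""
    return Fraction(TRILLION)

def ev_gamble(n_happy: int) -> Fraction:
    """Almost certainly a trillion suffering lives; with probability one in a
    googol, the 'blank check' pays out n_happy happy lives."""
    return (1 - P_JACKPOT) * (-TRILLION) + P_JACKPOT * n_happy

def break_even() -> Fraction:
    """Blank-check amount at which the gamble matches the safe option.
    Solves p*N - (1 - p)*T = T for N, i.e. N = T * (2 - p) / p."""
    return TRILLION * (2 - P_JACKPOT) / P_JACKPOT

print(break_even() == 2 * 10**112 - TRILLION)
print(ev_gamble(10**113) > ev_safe())
print(float(1 - P_JACKPOT))
```

For any blank-check amount above roughly 2 × 10^112, an expected value maximizer takes the gamble, even though the probability of the terrible outcome, printed as a float, is indistinguishable from 1.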

Robert Wiblin: Yeah. I think this can show up in other ways as well. One that jumps to mind is the dominant view among people who study this kind of thing is that insects probably aren’t conscious, and if they are conscious, they’re probably not very conscious. But we’re not super sure about that, so maybe there’s a 1 in 1,000 chance that insects are conscious to a significant degree. And there’s so many insects, it’s just phenomenal how many insects there are relative to how many humans, it’s a very, very large multiple. A fanatical position might be someone who says, well, I’m just going to maximize expected value, and I think there’s a 1 in 1,000 chance that insects are conscious to an important degree, and so I’m going to focus all my attention on trying to improve the wellbeing of insects. So this is one that doesn’t involve the time as much, but involves a change of focus based on a longshot possibility that something really matters even though it probably doesn’t.
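The structure of Rob's insect example can be written as a break-even condition. The 1 in 1,000 probability is Rob's; the "moral weight if conscious" parameter is a hypothetical placeholder introduced for illustration, not an estimate from the episode.

```python
from fractions import Fraction

def break_even_ratio(p_conscious: Fraction, weight_if_conscious: Fraction) -> Fraction:
    """How many insects per human it takes for insect welfare to dominate an
    expected value calculation, assuming each insect counts for
    p_conscious * weight_if_conscious of a human and nothing else differs."""
    return 1 / (p_conscious * weight_if_conscious)

def focus(n_insects: int, n_humans: int, p_conscious: Fraction,
          weight_if_conscious: Fraction) -> str:
    """Where a pure expected value maximizer would direct all attention."""
    insect_stakes = n_insects * p_conscious * weight_if_conscious
    return "insects" if insect_stakes > n_humans else "humans"

p = Fraction(1, 1000)  # Rob's illustrative probability
w = Fraction(1, 100)   # hypothetical: an insect, if conscious, matters 1% as much as a human
print(break_even_ratio(p, w))
```

The threshold scales with 1/p, so however small the probability, a large enough multiple of insects outweighs it, and the recommendation flips all at once from humans to insects.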

Christian Tarsney: I think in that case too it seems counterintuitive to throw away, for instance, the opportunity for a very good outcome for this very tiny probability of a much better outcome. But then I think the other important thing, and maybe something that people underappreciate, is just that there isn’t any great, or at least any widely accepted, positive argument for the kind of risk-neutral expected value maximization that leads you to fanaticism. And in fact, the standard expectational theory of decision making under risk doesn’t force you to be fanatical in that way.
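Christian's point that expectational decision theory need not be fanatical can be illustrated with a bounded utility function. This is one standard way to avoid fanaticism, not necessarily the view he defends; the particular function, `u(v) = tanh(v / scale)`, and the `scale` parameter are assumptions made for illustration.

```python
import math
from typing import List, Tuple

Option = List[Tuple[float, float]]  # (probability, value) pairs

def expected_value(option: Option) -> float:
    """Risk-neutral: probability-weighted sum of raw values."""
    return sum(p * v for p, v in option)

def bounded_utility(v: float, scale: float = 1_000.0) -> float:
    """Increasing in v and bounded between -1 and 1, so no payoff, however
    large, is worth more than 1 utility unit."""
    return math.tanh(v / scale)

def expected_utility(option: Option, scale: float = 1_000.0) -> float:
    """Expected utility under the bounded utility function above."""
    return sum(p * bounded_utility(v, scale) for p, v in option)

sure_good = [(1.0, 1_000.0)]
long_shot = [(1e-6, 1e30), (1 - 1e-6, 0.0)]

print(expected_value(long_shot) > expected_value(sure_good))
print(expected_utility(long_shot) > expected_utility(sure_good))
```

The first comparison favors the long shot and the second favors the sure thing. Because utility is capped, an outcome with probability q can contribute at most q to expected utility, so a one-in-a-million shot cannot swamp a near-certain good result, while the agent is still maximizing an expectation.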

Robert Wiblin: Okay, interesting. Maybe let’s first lay out what is the case in favor of having a fanatical style of decision making where you’re just going to let that tail wag the dog?

Christian Tarsney: There are a few arguments you could make. One route is just to defend risk-neutral expected value maximization. What that means is you have some way of measuring value that’s independent of your preferences towards risk. So for instance, just to simplify, I care about the number of happy people and the number of unhappy people that ever exist, and so the value of an outcome is just, say, the number of happy people minus the number of unhappy people. And you might just think, well, the intuitive response to risk is to value outcomes in proportion to quantitatively how good they are, and multiplying that by the probability, which is risk-neutral expected value maximization, just feels right.

Christian Tarsney: There are also more theoretical arguments you can give. So for instance, Harsanyi’s aggregation theorem gets you something like at least risk neutrality in the number of people who you can benefit to a given degree. But it requires you to accept some controversial premises like the ex-ante Pareto principle. That principle says, roughly: assume each individual is an expected utility maximizer; then if some option gives greater expected utility to each individual, we should prefer it. There are various reasons why you might reject that.

Robert Wiblin: The underlying principle there is that if someone’s better off and no one else is worse off, then it’s going to be better. And I guess Harsanyi tried to do a bit of mathematical alchemy to convert that into a view that you should maximize expected value, which is to say multiply the probability of each outcome by the value of that outcome, add all those up, and then maximize the total.

Christian Tarsney: So it’s a little complicated, for instance because the Harsanyi theorem allows individuals to be very risk averse, for instance, with respect to years of happy experience or whatever. But what it does say, roughly, is well, if I can, say, benefit N individuals to a certain degree with probability P, or I can benefit M individuals to that same degree with probability Q, then which thing I should do is just determined by multiplying the number of people times the probability.
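The comparison Christian attributes to Harsanyi's theorem can be stated in a few lines. This sketch covers only the narrow case he describes (every beneficiary helped to the same given degree), uses hypothetical numbers, and says nothing about individuals' own attitudes to risk, which the theorem leaves open.

```python
from fractions import Fraction

def better_program(n: int, p: Fraction, m: int, q: Fraction) -> str:
    """Compare benefiting n people with probability p against benefiting m
    people (to the same degree) with probability q, by the expected number
    of beneficiaries n*p versus m*q."""
    a, b = n * p, m * q
    if a == b:
        return "indifferent"
    return "first" if a > b else "second"

print(better_program(10, Fraction(1, 2), 4, Fraction(1)))         # 5 expected vs 4
print(better_program(1_000, Fraction(1, 100), 10, Fraction(1)))   # 10 expected vs 10
```

On this rule, helping 1,000 people with a 1% chance is exactly as good as helping 10 people for sure. That risk neutrality in the number of beneficiaries is what can push toward fanaticism once the numbers get large and the probabilities small.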

Christian Tarsney: There’s another way of justifying fanaticism that doesn’t depend on a commitment to risk-neutral expected value maximization. And this is something that Nick Beckstead and Teruji Thomas have explored in a GPI working paper that’s based on a part of Nick Beckstead’s dissertation. Roughly the argument is, well, look, suppose that I can have some good outcome with probability P, or I can have a much better outcome with a somewhat lower probability. Let’s say we multiply P by some factor, like 0.99 or something, so I reduce the probability of the good outcome by 1%, but I can increase how good the outcome is by an arbitrarily large amount.

Christian Tarsney: There must be some amount by which you could increase the value of the outcome such that you’d be willing to accept a 1% decrease in this probability. And if you think that for any probability and any magnitude of goodness or value, you’re willing to accept that 1% reduction in probability for a sufficiently large increase in the magnitude of the payoff, then you just iterate that enough times, and ultimately you’re preferring a tiny probability of a ridiculously good payoff to the certainty of what might be a very good payoff. So that allows you to be, for instance, risk averse with respect to value, but nevertheless you end up being, at least in principle, vulnerable to fanaticism.
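The iteration in the Beckstead and Thomas argument can be simulated. In this sketch, which is our own simplification, each step keeps 99% of the probability and multiplies the payoff by a fixed factor `gain`; the argument itself only requires that some sufficiently large increase exists at each step, so the fixed factor and the one-in-a-googol stopping point are assumptions.

```python
import math
from typing import Tuple

def iterate_trades(prob_target: float, shrink: float = 0.99,
                   gain: float = 10.0) -> Tuple[int, float, float]:
    """Starting from certainty of a payoff of 1, repeatedly accept a 1% cut in
    probability in exchange for multiplying the payoff by `gain`, until the
    probability falls below prob_target.

    Returns (steps, final_probability, log10_of_final_payoff). The payoff is
    tracked as a log10 because it would overflow a float long before we stop."""
    steps, prob, log10_payoff = 0, 1.0, 0.0
    while prob >= prob_target:
        prob *= shrink
        log10_payoff += math.log10(gain)
        steps += 1
    return steps, prob, log10_payoff

steps, prob, log10_payoff = iterate_trades(1e-100)
print(f"{steps} trades: probability {prob:.2e}, payoff 10^{log10_payoff:.0f}")
```

Each single trade can look acceptable even to a risk-averse agent, yet chaining them (assuming preferences are transitive) leaves the agent preferring a roughly one-in-a-googol shot at an enormous payoff to the original certainty, which is the fanatical conclusion.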