Should you go into research? – part 1

Should you go into a research career? Here’s one striking fact about academic research that bears on this question: in most fields, the best few researchers get almost all the attention.

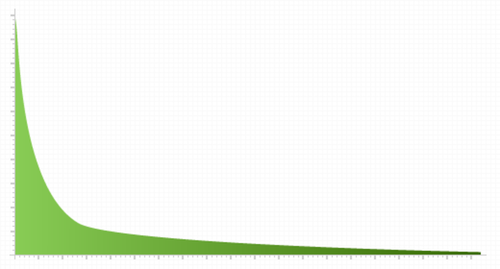

Most scientific articles get little to no attention (Van Dalen and Klamer 2005). One study found that 47 percent of articles catalogued by the Institute for Scientific Information have never been cited, and more than 80 percent have been cited fewer than 10 times (Redner 1998). Articles in the median social science journal get, on average, only 0.5 citations within two years of publication (Klamer and Van Dalen 2002). The mean number of citations per article in mathematics, physics, and environmental science journals is probably less than 1 (Mansilla et al. 2007).

By contrast, the top 0.1 percent of papers catalogued by the Institute for Scientific Information have been cited over 1000 times (Redner 1998). Citations per paper approximately follow a power law, which means that a few papers account for a large share of all citations. This pattern seems to hold across fields, even when the average number of citations per article varies widely (Radicchi, Fortunato, and Castellano 2008), and a similar distribution holds across individual researchers, not just articles (Petersen et al. 2011a).
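To get a feel for what a power-law tail does to the distribution of attention, here is a minimal sketch. It is not fitted to any of the datasets cited above: the tail exponent `ALPHA`, the number of papers, and the helper names are all assumptions chosen for illustration, and the values are relative citation weights rather than real citation counts.

```python
def pareto_quantiles(n, alpha):
    """Return n evenly spaced quantiles of a Pareto distribution with minimum 1.

    Using quantiles instead of random draws keeps the example deterministic.
    """
    return [(1.0 - (i + 0.5) / n) ** (-1.0 / alpha) for i in range(n)]


def top_share(values, fraction):
    """Share of the total held by the top `fraction` of values."""
    ordered = sorted(values, reverse=True)
    k = max(1, int(len(ordered) * fraction))
    return sum(ordered[:k]) / sum(ordered)


ALPHA = 2.0          # hypothetical tail exponent, not estimated from data
N_PAPERS = 100_000   # hypothetical number of papers

papers = pareto_quantiles(N_PAPERS, ALPHA)
share_top_1_percent = top_share(papers, 0.01)
share_bottom_half = 1.0 - top_share(papers, 0.5)

print(f"top 1% of papers hold {share_top_1_percent:.1%} of the total")
print(f"bottom 50% of papers hold {share_bottom_half:.1%} of the total")
```

Under these assumptions the top 1 percent of papers hold roughly ten times their proportional share. A heavier tail (a smaller `ALPHA`) makes the concentration more extreme.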

What does this skewed distribution imply for the prospects of academic research as a high-impact career? You might think it means that becoming a researcher is a sure way not to have a significant impact. But what matters is the expected outcome of becoming a researcher. That the best researchers get almost all the attention implies only that the expected outcome of becoming a researcher may be dominated by your chance of becoming a giant in your field. The expected value of a research career could nonetheless be very high if you could have an astronomical impact as a leader in your discipline, even if your chances of reaching that position are low. So it would be too hasty to infer from the skewed distribution of output and reward that you shouldn’t enter research.
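The expected-value argument can be made concrete with a small calculation. The outcome labels, probabilities, and impact figures below are invented purely for illustration. They are not estimates from any of the cited studies, and the impact units are arbitrary.

```python
# Hypothetical career outcomes: (label, probability, impact in arbitrary units).
# Every number here is an assumption made up for the example.
outcomes = [
    ("rarely cited", 0.900, 1.0),
    ("solid contributor", 0.099, 20.0),
    ("field leader", 0.001, 10_000.0),
]

# The probabilities must describe a complete set of outcomes.
total_probability = sum(p for _, p, _ in outcomes)
assert abs(total_probability - 1.0) < 1e-9

# Expected impact is the probability-weighted sum over outcomes.
expected_impact = sum(p * impact for _, p, impact in outcomes)

# Share of the expected impact contributed by each outcome.
contribution = {label: p * impact / expected_impact for label, p, impact in outcomes}

print(f"expected impact: {expected_impact:.2f} units")
for label, share in contribution.items():
    print(f"{label}: {share:.1%} of expected impact")
```

With these made-up numbers, the one-in-a-thousand chance of becoming a field leader contributes more than three quarters of the expected impact, even though nine careers in ten end up in the lowest-impact outcome. Whether the real numbers look anything like this is an open question that the calculation does not settle.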

The above applies only to the extent that (a) citations are a good measure of attention, and (b) attention is a good measure of impact. There may be problems with citation measurements (Seglen 1998), and there are different ways of using citations to assess attention (Bornmann et al. 2008). But citations, publications, and reputation seem to be the currency for influencing policymakers, university officials, students, and peer scholars (Van Dalen and Henkens 2004). If your potential to make an impact through research in your field is directly related to the amount of credit and recognition you receive (Cash et al. 2003), then the skewed distribution of citations is highly relevant to your chances of making the most difference through academic research (Faria and Goel 2010). You might, however, aim to make an impact in other ways, such as teaching, or your contributions might be valuable regardless of uptake by your peers.

In the next post, I’ll discuss one possible cause of this skewed distribution.

Bornmann, L., Mutz, R., Neuhaus, C., & Daniel, H. D. (2008). Citation counts for research evaluation: standards of good practice for analyzing bibliometric data and presenting and interpreting results. Ethics in Science and Environmental Politics (ESEP), 8(1), 93-102.

Cash, D. et al. 2003. “Salience, credibility, legitimacy and boundaries: Linking research, assessment and decision making.”

Costas, R., & Bordons, M. (2007). The h-index: Advantages, limitations and its relation with other bibliometric indicators at the micro level. Journal of Informetrics, 1(3), 193-203.

Van Dalen, H.P., and K. Henkens. 2004. “Demographers and Their Journals: Who Remains Uncited After Ten Years?” Population and Development Review 30(3): 489-506.

Faria, J.R., and R.K. Goel. 2010. “Returns to networking in academia.” Netnomics 11(2): 103-117.

Klamer, Arjo, and Hendrik P. van Dalen. 2002. “Attention and the art of scientific publishing.” Journal of Economic Methodology 9(3): 289-315.

Laband, D.N. (1986) Article popularity, Economic Inquiry 24: 173-80.

Mansilla, R. et al. 2007. “On the behavior of journal impact factor rank-order distribution.” Journal of Informetrics 1(2): 155-160.

Petersen, A. M., Wang, F., & Stanley, H. E. (2010). Methods for measuring the citations and productivity of scientists across time and discipline. Physical Review E, 81(3), 036114.

Peterson, G. J., Pressé, S., & Dill, K. A. (2010). Nonuniversal power law scaling in the probability distribution of scientific citations. Proceedings of the National Academy of Sciences, 107(37), 16023-16027.

Radicchi, Filippo, Santo Fortunato, and Claudio Castellano. 2008. “Universality of citation distributions: Toward an objective measure of scientific impact.” Proceedings of the National Academy of Sciences 105(45): 17268-17272. http://www.pnas.org/content/105/45/17268.short.

Radicchi, F., Fortunato, S., & Vespignani, A. (2012). Citation Networks. Models of Science Dynamics, 233-257.

Redner, S. 1998. “How popular is your paper? An empirical study of the citation distribution.” The European Physical Journal B 4(2): 131-134.

Seglen, P. O. (1998). Citation rates and journal impact factors are not suitable for evaluation of research. Acta Orthopaedica, 69(3), 224-229.

Van Dalen, H.P., and A. Klamer. 2005. “Is Science A Case of Wasteful Competition?” Kyklos 58(3): 395-414.