We prioritise problems that are especially large in scale, neglected by others, and solvable, because that’s where additional people can usually make the biggest difference. This list is our best guess at which problems score highest on those factors and therefore most need more people working on them. We expect to keep revising it over time, and there are many reasonable ways to disagree.

We’ve ranked risks from advanced AI and catastrophic pandemics as top issues since 2016. But with artificial general intelligence (AGI) possibly arriving within the next decade, AI-related issues seem even more urgent. Learn more about why we prioritise AI risks.

Our list of the most pressing world problems

We may develop very powerful AI systems before we’ve built adequate control mechanisms. If such systems accumulate power while misaligned with human values, the result could be catastrophic.

AI could turbocharge people’s efforts to seize and hold power. Surveillance, persuasion, and control technologies could let small groups or authoritarian leaders entrench their rule — potentially with little chance of recovery.

Pandemics are among the deadliest events in human history. Developments in biotechnology and AI may lower barriers for creating devastating biological weapons, and make future pandemics even worse.

- Digital minds may soon outnumber humans, raising moral questions.

- Competition could force humanity to incrementally cede control to AI.

- AI tools could improve humanity’s decisions at critical junctures.

- Early space governance decisions could shape humanity’s cosmic trajectory.

- AI could be used by malicious actors to create new and catastrophically dangerous weapons.

Beyond killing billions in worst-case scenarios, great power rivalry accelerates dangerous arms races that could spiral out of control.

There are trillions of farmed animals, and the vast majority are on factory farms. The conditions in these farms are far worse than most people realise.

There is an unfathomable number of wild animals. If many of them suffer in their daily lives, finding safe, effective methods to help them could do a lot of good.

Every year around 10 million people in poorer countries die of illnesses that can be very cheaply prevented or managed, including malaria, tuberculosis, and HIV.

Carbon emissions are raising global temperatures, which will result in many millions of avoidable deaths and widespread disruption in the coming decades.

Other problems we’ve written about

We’ve explored many global issues over the years. The following profiles relate to the areas above but offer different framings and additional information:

The following articles cover issues that remain important and underappreciated, but we think most people should consider the problems in our main list first:

FAQ

Our aim is to find the problems where an additional person can have the greatest social impact — given how effort is already allocated in society.

The primary way we do that is by trying to compare global problems based on their scale, neglectedness, and tractability. To learn about this framework, see our introductory article on prioritising world problems.
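As a rough illustration of how the three factors can combine, here is a minimal sketch. It assumes the factors simply multiply together; the `Problem` class, the `rank` helper, and every number below are hypothetical placeholders made up for illustration, not real assessments of any problem.

```python
from dataclasses import dataclass


@dataclass
class Problem:
    name: str
    scale: float          # how much good solving the problem would do (arbitrary units)
    neglectedness: float  # higher means fewer resources already go to it
    tractability: float   # how much progress extra effort is expected to buy

    def score(self) -> float:
        # Assumption: the factors multiply, so a problem that is ten times
        # better on one factor (all else equal) scores ten times higher.
        return self.scale * self.neglectedness * self.tractability


def rank(problems: list[Problem]) -> list[Problem]:
    """Return problems sorted from highest to lowest score."""
    return sorted(problems, key=lambda p: p.score(), reverse=True)


# Hypothetical placeholder values, not real estimates.
examples = [
    Problem("Problem A", scale=100.0, neglectedness=10.0, tractability=0.1),
    Problem("Problem B", scale=1000.0, neglectedness=1.0, tractability=0.05),
    Problem("Problem C", scale=10.0, neglectedness=100.0, tractability=0.2),
]

for p in rank(examples):
    print(f"{p.name}: {p.score():.1f}")
```

In this toy example, the smallest problem in scale (Problem C) ranks first because it is far more neglected, which is the kind of trade-off the framework is meant to surface.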

To assess problems based on this framework, we mainly draw on research and advice from people and organisations working on effective altruism, AI, and existential risk, such as Coefficient Giving and Forethought. Read more about our research processes and principles.

To see the reasons we list each individual problem, click through to see the full profiles.

To be clear, comparing global problems is very messy and uncertain. We are far from confident that the exact ordering presented on this page is correct — in fact, we’re pretty sure that it’s incorrect in at least some ways (more on this below).

Moreover, assessments of the tractability and especially the scale of different global problems depend on your values and worldview. You can see some of the most important aspects of our worldview in our foundation series, especially our articles on how we define social impact and the weight we place on future generations.

Finally, it’s worth noting that 80,000 Hours is now focused on helping people work on safely navigating the transition to a world with AGI, following our April 2025 decision. This means we’re no longer actively investigating issues that aren’t related to the transformative effects advanced AI might have.

We still have views on these issues from previous research — e.g. we tend to think that due to the larger numbers of affected individuals and comparatively high neglectedness, people can often have a bigger impact working on factory farming than on global health or climate change. But as these issues evolve and new problems come up, our views on non-AI-related problems aren’t likely to stay current, since they’ll be based primarily on past research.

The short answer is: because we think the development of this technology might be the most consequential thing to happen in history. If it goes badly, we think it could cause multiple enormous problems — some potentially as severe as human extinction.

Plus, AI is advancing so quickly that if it does cause these problems, they could happen soon. This means that even if we’re not certain how AI will play out or whether it’s as dangerous as we fear, we can’t just wait to see how things go.

Moreover, the issue is still hugely neglected. Most people just aren’t aware of how consequential the technology might be, and there are fewer than 10,000 people working on reducing the risks worldwide, far fewer than work on other big world problems like climate change or global health.

Finally, we think AI might affect every other issue on this list — and in the world generally. So even if you wanted to prioritise another pressing global issue, e.g. factory farming, it makes sense to consider angles on it related to AI — e.g. how could you make it more likely that advancing AI reduces the problem rather than makes it worse? If we develop AGI in five years, does that reduce or increase the scale of the issue?

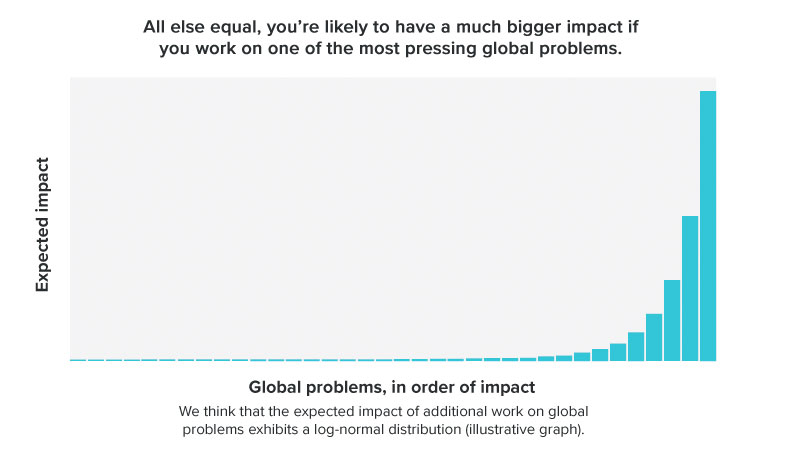

We think some problems are much more pressing than others, such that by choosing carefully, an additional person can have a far greater impact.

Holding all else equal, we think that additional work on the most pressing global problems can be between 100 and 1,000(!) times more valuable in expectation than additional work on many more familiar social causes like education in rich countries. That’s because, in those cases, your impact is typically limited by the smaller scale of the problem (e.g. because it only affects people in one or a few countries), or because the best opportunities for improving the situation are already being taken by others. Moreover, it seems like some of the problems in the world that are biggest in scale, especially those that could affect the entire future of humanity (like risks from AI or biorisks), are also highly neglected. This combination means you can have an outsized impact by helping tackle them.

For this reason, we think our most important advice for people who want to make a big positive difference with their careers is to work on a very pressing problem. This page is meant to help readers do that. Read more about the importance of choosing the right problem.

A key consideration is how society is currently allocating resources. If a lot of people are already working on an issue, the best opportunities will have probably already been taken, which makes it harder for additional people to have an impact.

At the same time, you only have one career, so if you want to fulfil your potential to do good, you need to prioritise which issues you focus on.

One way to think about this is in terms of a ‘world portfolio’: What would the ideal allocation of resources be for all social issues? And which are farthest from that ideal allocation?

As an individual, the best you can do is pick one of these that you’re well placed to focus on.

This is why our list looks a bit surprising: we purposefully want to highlight problems that we think are furthest from getting the attention they need — such as reducing the risk of AI-enabled power grabs, which is a completely new field with only a handful of people working on it. Or take preventing engineered pandemics, which currently gets $1–2 billion of funding per year. That is only 1/500th of what a more widely recognised problem like climate change gets (which also needs more resources).
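To make the comparison concrete, the two figures in the paragraph above imply a rough order of magnitude for annual climate change funding. This is only back-of-the-envelope arithmetic from the numbers already quoted, not an independent estimate:

```latex
\[
500 \times (\$1\text{--}2 \text{ billion per year})
\approx \$500\text{--}1{,}000 \text{ billion per year}
\]
```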

By their very nature, these issues will tend to be unusual: if working on them were common sense, they’d already have more attention, and we’d need to rank them lower!

If everyone followed our advice, our list would need to be totally different (but unfortunately, this will probably never happen).

Another factor that makes our list unusual is that we strive to value the interests of all sentient beings more equally — regardless of where they live, when they live, or even what species they are. We even consider digital beings to potentially be worthy of moral consideration.

Many people believe ‘charity begins at home,’ but we believe charity begins where you can help the most. This typically means looking for the groups who are most neglected by the current system, and this often means focusing on the world’s poorest people, animals, future generations, or maybe digital minds — rather than other citizens of the world’s richest countries.

To learn more about why we prioritise more neglected problems, see our article on comparing global problems in terms of scale, neglectedness, and solvability, and our foundation series.

Some find it objectionable to say one problem is more pressing than another — perhaps because they think it’s impossible to make such determinations, or because they think we should try to tackle everything at once.

We agree that it’s difficult to determine which problems are largest in scale, most neglected, and most tractable. The field of global priorities research exists because these questions are so complicated, and we are far from certain about our views (see below). But we think with careful thought and research, people can make educated guesses — and, crucially, do better than random.

We also agree that an individual can sometimes make progress on different problems at the same time, and advocating for more people or institutions to focus on helping others can increase the total amount of work done to solve all problems.

However, resources are still very much limited, and no one person can do everything at once. Given the seriousness of the many challenges humanity faces, we think we have to prioritise among problems to use our resources as effectively as we can to solve them.

Refusing to compare problems to one another doesn’t get you out of prioritising — it just means you’ll be choosing to prioritise some things over others without thinking much about it.

Definitely not. Though we’ve put a lot of work into thinking about how to prioritise global problems, ultimately, we are drawing on a modest amount of research to address an unbelievably large and complex question. We are very likely to be wrong in some ways (see below). You might be able to catch some of our mistakes.

Moreover, it’s often very useful for people trying to make the world a better place with their careers to develop their own views about what to prioritise — you’ll be more motivated and more able to help solve a problem if you understand the case for working on it and have chosen it for yourself.

To help you form your own views, below we suggest a rough process for creating your own list of problems.

The most important and controversial factors driving our lists are probably:

- Our view that AGI is likely to be a hugely transformative technology with effects that might last a very long time, and that could arrive very soon. This means we especially highly prioritise reducing existential risks from AI, as well as issues that might interact with AI risks (such as bioweapons), and emerging challenges that could arise sooner due to AI.

- Our focus on reducing existential and catastrophic risks, due to their extreme scale.

- Our view that all future generations matter, and that some issues can affect generations stretching into the long-run future. This further increases the importance we place on reducing existential risks and on events that could affect the long-run future, especially if they threaten to “lock in” power structures or values that could lastingly shape how the future unfolds.

- Our relative openness to issues where it’s very uncertain what to do, or even whether working on the issue has much impact at all. This is because we think the expected value of decisions matters a lot. Others have argued that it’s better to focus on issues where you can be more confident of making a positive impact.

If we were to reject all the ideas above, problems related to AI would stand out less, while working on issues like factory farming and global health would become relatively higher priorities. If we also rejected the idea that non-humans are worthy of moral concern, global health might be our top problem.

If we weren’t concerned about future generations or non-humans, but we did still think that AGI would be transformative and arrive as soon as 2030, AI-related risks and biorisks would plausibly still be our top issues due to the threats they pose to people alive today.

There are other parts of our broad worldview that could also be wrong. You can read about the thinking that underlies our views in our foundation series.

Previous versions of this list have resulted in thoughtful feedback, and we’ve adjusted our views over time. We anticipate this list will continue to evolve, as both the circumstances of the world change and we come to understand them better.

You can see some of our top uncertainties about each individual problem by clicking through to the individual profiles.

No. We don’t think everyone in our audience — let alone everyone in the world — should work on our top problems (even if everyone totally agreed with our views).

First, the pressingness of a problem is only one aspect — though a very important one — of our framework for comparing careers.

Different people will find different opportunities within each problem, and will have different degrees of personal fit for those opportunities. These other factors also really matter — you may well be able to have 100 times the impact in an opportunity that’s a better fit. That can make it higher impact to work on a problem you think is less pressing in general.

Moreover, there are reasons to spread out across problems:

- As more people work on a problem, it gets less neglected, and there are diminishing returns to additional work. This means that a group of people that’s large compared to the capacity for that problem to absorb people will start to run out of fruitful opportunities to make progress on it, making it better for new people to spread out into other areas.

- If you work with others, there is value of information in exploring new world problems — if you explore an area and find out that it’s promising, other people can enter it as well.
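The diminishing-returns point in the first bullet above can be illustrated with a minimal sketch. It assumes, purely for illustration, that total progress on a problem grows with the logarithm of the number of people working on it; the function names and the logarithmic form are assumptions, not a model this page relies on.

```python
import math


def total_progress(workers: int) -> float:
    """Total progress under an assumed logarithmic returns curve."""
    return math.log(1 + workers)


def marginal_value(workers: int) -> float:
    """Extra progress from adding one more person to `workers` existing ones."""
    return total_progress(workers + 1) - total_progress(workers)


# Under this assumption, the next person adds much more to a small field
# than to a crowded one.
for existing in (10, 1_000, 100_000):
    print(f"{existing:>7} people already: next person adds {marginal_value(existing):.6f}")
```

Under this assumed curve, the marginal contribution is roughly inversely proportional to the number of people already working on the problem, which is one way to see why it can become better for new people to spread out into other areas.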

Among people who engage with our advice, we aim to help a majority shoot for one of the top world problems we list above, but that doesn’t mean we think everyone should work on the top one or two.

If we consider the world as a whole, not just our readers, it’s even more obvious that we don’t want everyone to work on our top-ranked problems. The world wouldn’t function if everyone tried to work on preventing AI takeover. Clearly, we need people working on a wide range of problems, as well as keeping society running and taking care of themselves and their families.

However, in practice it’s safe to assume that what most of the world does will remain unaffected by what we say. (If that changes, we’ll change our advice accordingly!) So we focus on finding the biggest gaps in what the world is currently doing in order to enable our readers to have as much impact as they can.

Some of our effort is allocated to creating resources that are useful no matter which problem you want to work on (such as our advice on career planning), but some resources are only relevant to a particular problem.

When it comes to these, we roughly try to allocate our efforts in line with what we think are the most pressing problems. This means we aim to spend most of our time learning, writing, and thinking about our highest-priority areas and less time on those we think are less pressing.

However, the distribution of our effort does not exactly match our views about which problems are most pressing. This is because we are a small team and there are returns to focusing and really learning about some problems, and also because we are better positioned to help people work on some problems vs others.

This means we tend to put more effort into the very top problems we prioritise, and into problems where our advice can be especially strong compared with other sources.

We’d love to have the resources to spend substantial effort on all the problems in the lists above. But given the size of our team, we aren’t able to do much more than write an article or two on many of the topics and point readers in the direction of more informed groups.

We are so glad you’d like to help! It can seem daunting, but we’ve seen lots of people make real contributions to these problems, including people who didn’t think they could when they first came across them.

The short answer is that the individual problem profiles each have a section on how to help tackle that problem, so click through to read the full profiles.

Also, see our career reviews page and job board to get ideas for specific jobs and careers that can help, and how to enter them.

If you want to think about what to do in more depth, see our materials on career planning.

We have guides to particular career paths that contain common early steps as well as pointers on how to eventually put all your experience and skill to the best use.

When you have some ideas, apply for free one-on-one career advice from our advisors, who can help you compare options and connect you with mentors and other opportunities.

The only thing you can control is contributing as well as you can — and that’s a matter not just of what the world needs, but also of your own motivation and abilities.

And balancing the two is an art.

On one hand, you might surprise yourself. We’ve worked with lots of people who weren’t immediately interested in a problem, but after they learned more and found interesting opportunities to address it, they became passionate about it over time. So don’t rule out everything you’re not immediately interested in.

But if you try to get motivated and it doesn’t work, you can try working on something else. Ultimately, finding a job that fits you is really important. It’s usually better to be doing something you’re great at rather than struggling to stay motivated working on something that’s important in the abstract. In the answer to the next question, you’ll find a few other lists of problems you can investigate besides ours.

You might also be interested in the resources from Probably Good, which supports people working on a wider set of issues, or our career guide, which has advice that can be applied to any area.

If you really want to help with these problems but don’t feel motivated to work on them directly, you could try helping by donating to organisations that work on them. If you do this as a primary aim of your career, we call it ‘earning to give.’ You can also donate 10% of your income (or however much you’re comfortable with).

Read more about how to have a positive impact in any job.

We have an article with a process for comparing global problems for yourself.

Other lists of pressing global problems we’ve seen, for inspiration:

- The United Nations’ sustainable development goals

- The United Nations’ more general list of global issues

- The Global Challenges Foundation’s list of global risks

- A big list of cause candidates from the Effective Altruism Forum

If you just want to dive into general high-impact career advice that has less focus on particular problems, you may want to check out our career guide or the resources from Probably Good.

Different problems do need different skills and expertise, so people’s ability to contribute to solving them can vary dramatically. That said, we think it’s generally underappreciated that there are many, many ways to contribute to solving a single problem, so you also shouldn’t assume you can’t help with something just because you don’t have some salient qualification.

To learn more about what’s most needed to address different world problems, click through to read the profiles above.

To explore your own skills and other aspects of your personal fit (especially early in your career) and find your comparative advantage, we encourage you to make a list of career ideas, rank them, identify key uncertainties about your ranking, and then try to do low-cost tests to resolve those uncertainties. After that, we often recommend planning to explore several paths if you’re able to.

You can find more thorough guidance in our resources on career planning.

You can also look at our list of the most valuable skills you can develop and apply to a variety of problems, and how to assess your fit with each one.

Read next: Work on the world’s biggest challenge

Want to learn more about tackling the top AGI challenges? Our AGI careers hub is a good place to start.
