Is effective altruism growing? An update on the stock of funding vs people

Note: On May 12, 2023, we released a blog post on updates we made to our advice after the collapse of FTX.

See a brief update from August 2022.

In 2015, I argued that funding for effective altruism — especially within meta or longtermist areas — had grown faster than the number of people interested in it, and that this was likely to continue. This meant that there was a funding overhang, leading to a series of skill bottlenecks.

A couple of years ago, I wondered if this trend was starting to reverse. There hadn’t been any new donors on the scale of Good Ventures, which meant that total committed funds were growing slowly, giving the number of people a chance to catch up.

However, the spectacular asset returns of the last few years, and the creation of FTX, seem to have shifted the balance back towards funding. Now the funding overhang seems even larger in absolute terms than in 2015.

In the rest of this post, I make some rough guesses at total committed funds compared to the number of interested people, to see how the balance of funding vs. talent might have changed over time.

This will also give us an update on whether effective altruism is growing — with a focus on what I think are the two most important metrics: the stock of total committed funds, and committed people.

This analysis also led me to make a small update in favour of giving now vs. investing to give later.

Here’s a summary of what’s coming up:

- How much funding is committed to effective altruism (going forward)? Around $46 billion.

- How quickly have these funds grown? ~37% per year since 2015, with much of the growth concentrated in 2020–2021.

- How much is being donated each year? Around $420 million, which is roughly 1% of committed capital, and has grown maybe ~21% per year since 2015.

- How many committed community members are there? About 7,400 active members and 2,600 ‘committed’ members, growing 10–20% per year 2018–2020, and growing faster than that 2015–2017.

- Has the funding overhang grown or shrunk? Funding seems to have grown faster than the number of people, so the overhang has grown in both proportional and absolute terms.

- What might be the implications for career choice? Skill bottlenecks have probably increased for people able to think of ways to spend lots of funding effectively, run big projects, and evaluate grants.

To caveat, all of these figures are extremely rough, and are mainly estimated off the top of my head. However, I think they’re better than what exists currently, and thought it was important to try to give some kind of rough update on how my thinking has changed. There are likely some significant mistakes; I’d be keen to see a more thorough version of this analysis. Overall, please treat this more like notes from a podcast than a carefully researched article.

Table of Contents

- 1 Which growth metrics matter?

- 2 How many funds are committed to effective altruism?

- 3 How quickly have committed funds grown?

- 4 How much funding is being deployed each year?

- 5 How many engaged community members are there?

- 6 How quickly has the number of engaged community members grown?

- 7 How much labour is being deployed?

- 8 Changes in the overhang: How quickly has funding grown compared to people?

- 9 What about the future of the balance of funding vs. people?

- 10 Implications for career choice

- 11 Related posts

- 12 What’s next

Which growth metrics matter?

Broadly, the future[1] impact of effective altruism depends on the total stock of:

- The quantity of committed funds

- The number of committed people (adjusted for skills and influence)

- The quality of our ideas (which determine how effectively funding and labour can be turned into impact)

(In standard economic growth models, this would be capital, labour, and productivity.)

You could consider other resources like political capital, reputation, or public support as well, though we can also think of these as being a special type of labour.

In this post, I’m going to focus on funding and labour. (To do an equivalent analysis for ideas, we could try to estimate whether the expected return of our best way of using resources is going up or down, with some kind of adjustment for diminishing returns.)

For both funding and labour, we can look at the growth of the stock of that resource, or the growth of how much of that resource is deployed (i.e. spent on valuable projects) each year.

If we want to estimate how quickly effective altruism is growing, then I think the stock is most relevant, since that determines how many resources will be deployed in the long term.

It’s true there’s no point having a big stock of resources if it’s not being deployed — so we should also want to see growth in deployed resources — however, there can be good reasons to delay deployment while the stock is still growing, such as (i) to gain better information about how to spend it, (ii) to build up grantmaking capacity, or (iii) to accumulate investment returns and career capital. So, if forced to choose between stock and deployment, I’d choose the stock as the best measure of growth.

Both the stock of resources and the amount deployed each year are also more important than ‘top-of-funnel’ metrics (like Google search volume for ‘effective altruism’) though we should watch the top-of-funnel metrics carefully — especially insofar as they correlate with future changes in the stock.

Finally, I think it’s very important to try to make an overall estimate of the total stock of resources. It’s possible to come up with a long list of EA growth metrics, but different metrics typically vary by one or two orders of magnitude in how important they are. Typically most growth is driven by one or two big sources, so many metrics can be stagnant or falling while the total resources available are exploding.

How many funds are committed to effective altruism?

Here are some very, very rough figures:

| Donor | Committed funds (present value, $ billions) | Notes |
|---|---|---|
| Good Ventures and Open Philanthropy | 22.5 | Forbes estimates that Dustin Moskovitz’s net worth is $25 billion. Assume 90% committed. |
| FTX team | 16.5 | In April 2021, Forbes estimated Sam Bankman-Fried’s net worth to be ~$9 billion. In July 2021, a private funding round valued FTX at $18 billion, which Forbes estimated would increase Sam’s net worth by a further $7.9 billion to $16.2 billion. Sam has been quoted in the media saying that he intends to donate most of the money, with a focus on supporting organisations safeguarding the future. There are other FTX and Alameda co-founders who also intend to donate to EA problem areas. Note that since the FTX founders hold the majority of the equity, if they tried to sell a large fraction of their stakes, the price would likely crash. |
| NPV of GiveWell donors (excluding Good Ventures) | 3.3 | $100 million per year, at a 4% discount rate |
| Other EA cryptocurrency donors | 2 | This could be a big underestimate. It’s hard to find good data on the net worth of these donors, and I’m unsure how much they intend to donate. |
| Founders Pledge | 0.8 | Assume 25% of $3.1 billion pledged |
| GWWC | 0.5 | $2.3 billion pledged; assume 80% drop out |
| Other medium-sized donors | 0.5 | My guess |
| Total | 46.1 | This could easily be off by $10 billion. |

I’ve tried to focus on funds that are already ‘committed’. I mostly haven’t adjusted them for the chance the person gives up on EA (except for GWWC), but I’ve also ignored the net present value of likely future commitments.
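As a quick sanity check, the table's total can be reproduced by summing its rows. This is only a sketch: the dictionary keys are shortened labels of my own, and the figures are the rough ones from the table.

```python
# Committed funds in billions of dollars (present value), copied from the table.
committed_bn = {
    "Good Ventures and Open Philanthropy": 22.5,
    "FTX team": 16.5,
    "GiveWell donors (excl. Good Ventures)": 3.3,
    "Other EA cryptocurrency donors": 2.0,
    "Founders Pledge": 0.8,
    "GWWC": 0.5,
    "Other medium-sized donors": 0.5,
}

total_bn = sum(committed_bn.values())

# Share of the total coming from the two largest sources.
top_two_bn = (
    committed_bn["Good Ventures and Open Philanthropy"]
    + committed_bn["FTX team"]
)
top_two_share = top_two_bn / total_bn

print(f"Total committed: ${total_bn:.1f} billion")
print(f"Share from the two largest sources: {top_two_share:.0%}")
```

The second figure shows how concentrated the stock is, which is why the volatility of a couple of holdings matters so much for the overall estimate.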

I’m aware of at least one new donor who is likely to donate to longtermist issues on the scale of Good Ventures, at around $100 million per year, with perhaps a net present value in the tens of billions.

There are several other billionaires who seem sympathetic to EA (e.g. Reid Hoffman has donated to GPI) — these are ignored.

I’m also ignoring people like Bill Gates who donate to things that EAs would often endorse.

Bear in mind that these figures are extremely volatile — e.g. the value of FTX could easily fall 80% in a market crash, or if a competitor displaces it. Many of the stakes that the wealth comes from are also fairly illiquid — if the owners tried to sell a significant fraction, it could crash the price.

Side note for EA investors

As an individual EA who’s fairly value-aligned with other EA donors, you should invest in order to bring the overall EA portfolio in line with the ideal EA portfolio, and prefer assets that are uncorrelated with those held by other EAs. The current EA portfolio is highly tilted towards Facebook and FTX, and more broadly towards Ethereum/decentralised finance and big U.S. tech companies. This overweight is much more significant if we risk-weight rather than capital-weight. For instance, Ethereum and FTX equity are probably about 5x more volatile and risky than Facebook stock, and so account for the majority of our risk allocation. This means you should only hold assets highly correlated to these if you think this overweight should be increased even further. It seems likelier to me that most EAs should underweight these assets in order to diversify the portfolio.

How quickly have committed funds grown?

The committed funds are dominated by Good Ventures and FTX, so to estimate total growth, we mainly need to estimate how much they’ve grown:

- In 2015, Forbes estimated Moskovitz’s net worth was $8 billion, so it has grown by 2.6x since then (~20% per year). This is probably due to (i) Facebook stock price appreciation and (ii) the Asana IPO.

- FTX didn’t exist in 2015.

The impact of these two sources alone is growth of about $31 billion since 2015, and the new total of $39 billion from both is about five-fold growth compared to $8 billion in 2015.

My rough estimate is the other sources have grown around 2.5x since 2015.

For instance, GiveWell donors (excluding Open Phil) were giving $80 million per year in 2019, up from about $40 million in 2015. We don’t yet have the finalised figures for 2020, but it seems to be significantly higher — perhaps $120 million (see below).

Many of the sources have grown faster. As one example, in 2015 David Goldberg estimated the value of pledges made by Founders Pledge members at $64 million, compared to over $3 billion today.

In total, I’d guess that committed funds in 2015 were ~$10 billion, so have grown 4.6x. This is 37% per year over five years.
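The annualised figure is a compound growth rate. Here is a minimal sketch of that conversion, where `annualised_growth` is a helper name of my own and the inputs are the rough guesses from the text:

```python
def annualised_growth(multiple: float, years: float) -> float:
    """Constant yearly growth rate that compounds to `multiple` over `years`."""
    return multiple ** (1 / years) - 1


funds_2015_bn = 10.0  # rough guess for 2015 from the text
funds_2021_bn = 46.1  # total from the table of committed funds

multiple = funds_2021_bn / funds_2015_bn
rate = annualised_growth(multiple, years=5)

print(f"{multiple:.1f}x over five years is about {rate:.0%} per year")
```

Run on these inputs, the result comes out within a point or two of the ~37% quoted. The gap is small next to the uncertainty in the inputs, and assuming six years rather than five would lower the rate noticeably.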

You might worry that most of this growth was concentrated in the earlier years, and that recent growth has been slow. My guess is that if anything the opposite is the case — growth has been concentrated in the last 1–2 years, in line with the recent boom in technology stocks and cryptocurrencies, and the creation of FTX.

The situation for each cause could be different. My impression is that the funds available for longtermist and meta grantmaking have grown faster than those for global health.

How much funding is being deployed each year?

In early 2020, I estimated that the EA community was deploying about $420 million per year.

Around 60% was via Open Philanthropy, 20% from GiveWell, and 20% everyone else. The Open Phil grants were based on an average of their giving 2017–2019, which helps to smooth out big multi-year grants that are attributed to the year when they’re first made.

$420 million per year would be roughly 1% of committed capital.

Even those who are relatively into patient philanthropy think we should aim to donate over 1% per year, and at the 2020 EA Leaders Forum, the median estimate was that we should aim to donate 3% of capital per year.

So, if we’re now at 1% per year, that’s one argument that we should aim to tilt the balance towards giving now rather than investing to give later. In contrast, in early 2020, I thought that longtermist donors were giving more like 3% of capital per year, so it wasn’t obvious whether this was too low or too high. (This argument is fairly weak by itself — the quality of the particular opportunities and our ability to make good grants are also big factors.)
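To make the comparison concrete, here is a small sketch of the deployment rate and of what the 3% median target would imply in dollars. The variable names are mine, and the inputs are the rough estimates used above.

```python
deployed_per_year_bn = 0.42  # ~$420 million deployed per year
committed_bn = 46.1          # rough stock of committed funds

# Fraction of the committed stock that is given away each year.
deployment_rate = deployed_per_year_bn / committed_bn

# Yearly giving implied by the 3% median estimate from the 2020 EA Leaders Forum.
target_rate = 0.03
spend_at_target_bn = committed_bn * target_rate

print(f"Current deployment rate: {deployment_rate:.1%}")
print(f"Giving at a 3% rate: ${spend_at_target_bn:.2f} billion per year")
```

On these numbers the current rate is about 0.9%, i.e. roughly the 1% figure discussed above, and reaching 3% would mean giving around three times as much per year.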

How quickly have deployed funds grown?

Since 60% comes via Open Philanthropy, we can mainly look to their grants.

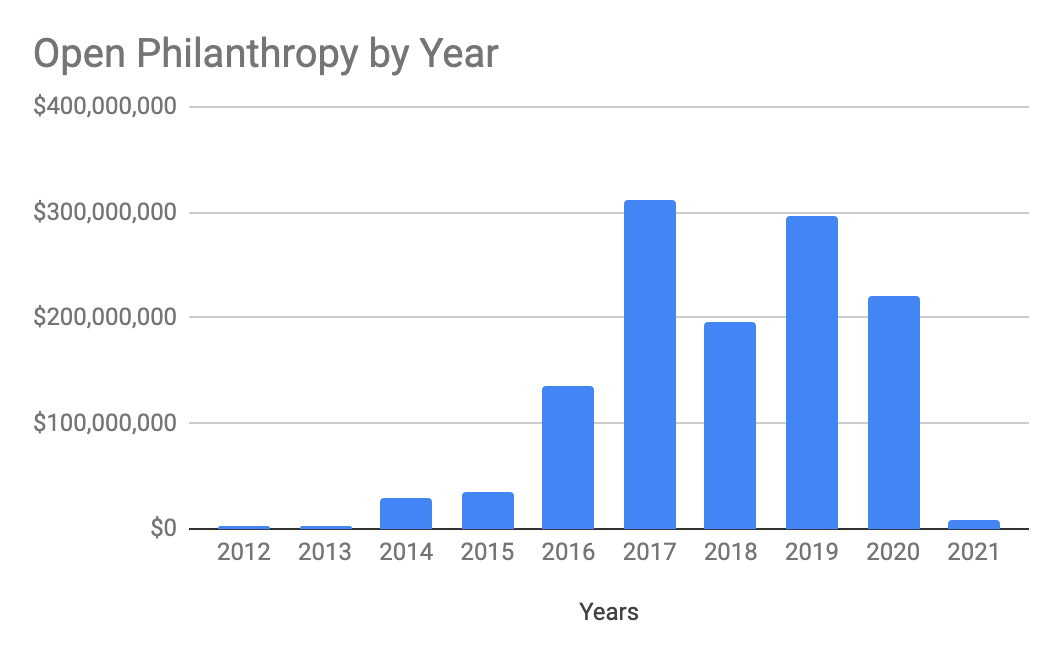

Around 2014–2015, Open Philanthropy was only making grants of around $30 million per year, which rapidly grew to a new plateau of $200–$300 million by 2017.

At that point, they decided to hold deployed funds constant for several years, in order to evaluate their progress and build staff capacity before trying to scale further.

Dustin Moskovitz and Cari Tuna have said they want to donate everything within their lifetimes. This will require hitting ~$1 billion deployed per year fairly soon, which I expect to happen. The Metaculus community agrees, forecasting donations of over $1 billion per year by 2030.

Note that the grants are very lumpy year-to-year. One reason for this is that Open Philanthropy sometimes makes three- or five-year commitments which all accrue to the first year. I think 2017 is unusually high due to the grants to CSET and OpenAI. You’ll get a more accurate impression from taking a three-year (or five-year) moving average, which currently stands at ~$240 million. (The chart of Open Philanthropy’s grants is from Applied Divinity Studies.)

FTX is new, so the founders have only been giving millions per year. Their money is not yet highly liquid, and they haven’t created a foundation, so we should expect their giving to remain low for a while.

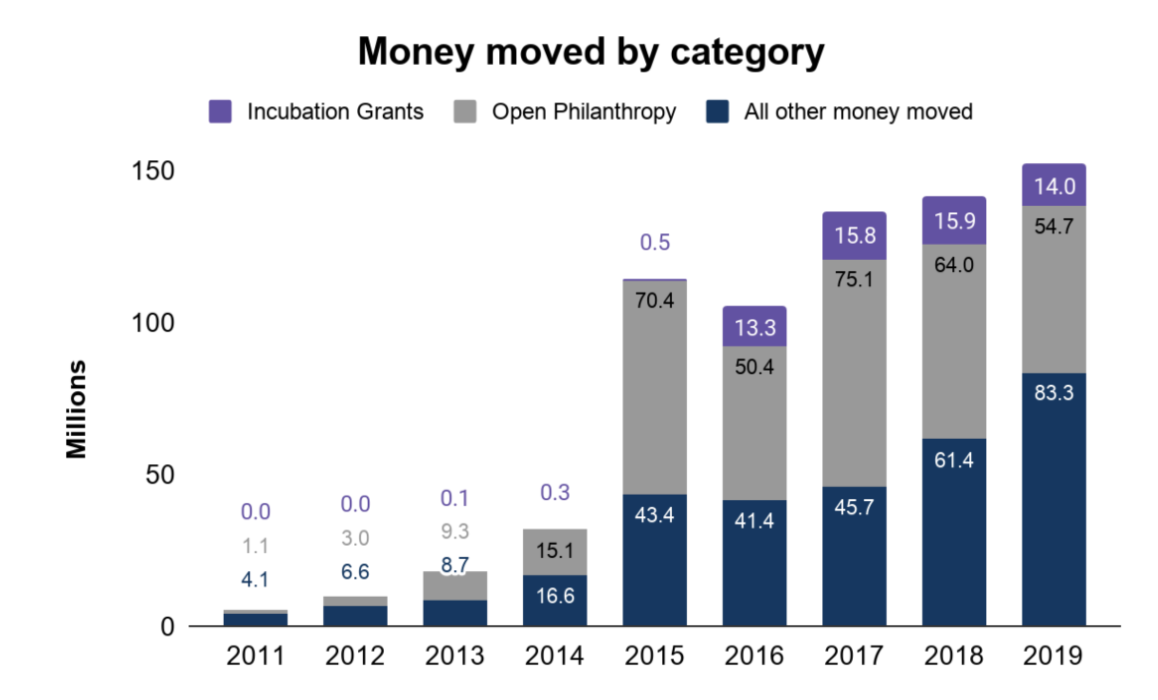

Money moved by GiveWell (excluding Open Philanthropy) hit a flat period from 2015–2017, but seems to have started growing again in 2018, by over 30% per year. I believe the 2020 figures are on track to be even better than 2019, but aren’t shown on this chart (or included in my deployed funds estimate).

My impression from the data I’ve seen is that funds donated by GWWC members, EA Funds, Founders Pledge members, Longview Philanthropy, SFF, etc. have all grown significantly (i.e. more than doubling) in the last five years.

Overall, I estimate the community would have been deploying perhaps $160 million per year in 2015, so in total this has grown 2.6-fold, or 21% per year over five years — somewhat slower than the growth of the stock of committed capital, but roughly in line with the number of people.

How many engaged community members are there?

The best estimate I’m aware of is by Rethink Priorities using data from the 2019 EA Survey:

> We estimate there are around 2,315 highly engaged EAs and 6,500 (90% CI: 4,700–10,000) active EAs in the community overall.

‘Highly engaged’ is defined as those who answered 4 or 5 out of 5 for engagement in the survey, and ‘active’ is those who answered 3, 4 or 5.

A 4 on this scale is a fairly high bar for engagement (e.g. many people who’ve made career changes we’ve tracked at 80,000 Hours only report ‘4’ on this scale).

In 2020, I estimate about 14% net growth (see the next section), bringing the total number of active EAs to 7,400.

You can see some more stats on what these people are like in the EA Survey.

If we were to consider the number of people interested in effective altruism, it would be much higher. For instance, at 80,000 Hours we have about 150,000 people on our newsletter, and over 100,000 people have bought a copy of Doing Good Better.

How quickly has the number of engaged community members grown?

Unfortunately, it’s still very hard to estimate the growth rate in the number of committed people, since the data are plagued with selection effects and lag effects.

For instance, the data I’ve seen shows that it often takes several years for someone to go from having first heard about EA to filling out the EA Survey, and from there to reporting themselves as ‘4’ or ‘5’ for engagement. This means that many of the new members from the last few years are not yet identified — so most ways of measuring this growth will undercount it.

In mid-2020, I made about six different estimates for the annual growth rate of committed members, which fell in the range of 0–30% in the last 1–2 years. My central estimate was around 20% (+900 per year at ‘4’ or ‘5’ on the engagement scale in the survey).

More recently, we were able to re-use the method Rethink Priorities used in the analysis above, but with data from the 2020 EA Survey rather than 2019. This analysis found the total number of engaged EAs has grown about 14% in the last year, so would now be 7,400.

This is fairly uncertain, and there’s a reasonable chance the number of people didn’t grow in 2020.
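For reference, here is a sketch of how the 14% growth estimate maps the 2019 survey figures onto the 2020 numbers used in this post. The dictionary labels are my own shorthand for the two engagement groups.

```python
GROWTH_2020 = 0.14  # estimated net growth over the year

# 2019 estimates from the Rethink Priorities analysis.
estimates_2019 = {"highly engaged": 2315, "active": 6500}

# Apply the same growth rate to both groups and round to whole people.
estimates_2020 = {
    group: round(count * (1 + GROWTH_2020))
    for group, count in estimates_2019.items()
}

for group, count in estimates_2020.items():
    print(f"{group}: {count:,}")
```

This assumes both groups grew at the same rate, which the survey analysis may not support in detail. The results round to the ~7,400 active and ~2,600 committed members quoted in the summary.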

The percentage growth rate would have been a lot higher in 2015–2017, since the base of members was much smaller, and I also think those were unusually good years for getting new people into EA.

Around 2017, there was a shift in strategy from reaching new people to getting those who were already interested into high-impact jobs. This meant that ‘top-line’ metrics — such as web reach and media impressions — slowed down.

My take is that this shift in strategy was at least partially successful, insofar as the number of committed EAs has continued to grow, despite flattish top-line metrics. (Though there’s a reasonable chance EA could have grown even faster if the top-line growth had continued.)

Going forward, we’ll eventually need to get the top-of-funnel metrics growing again, or the stock of ‘medium’ engaged people will run out, and the number of ‘highly’ engaged people will stop growing. Outreach to new people now seems to be getting higher priority.

What about the skill level of the people involved?

This is a big uncertainty because one influential member (e.g. in a senior position at the White House) can achieve what it might take thousands of others to achieve.

My sense is that the typical influence and skill level of members has grown a lot, partly as people have grown older and advanced their careers. For example, there are now a number of interested people in senior government positions in the U.K. and U.S. who weren’t there in 2015.

In terms of the level of ‘talent’ of members, we don’t have great data. Impressions seem to be split between the level being similar to the past and being a bit lower. So if we averaged the two, in expectation there would be a small decrease.

How much labour is being deployed?

It’s hard to estimate how much labour is being ‘deployed’.

People can at most deploy one year per year if they focus on impact, but a proper estimate should account for:

- What fraction of people are focused on impact compared to career capital. According to the 2020 EA Survey, around two community members are prioritising career capital per person prioritising immediate impact.

- Increasing productivity over time. The mean age in the community is 29, but most people only hit peak productivity around age 40–60 (though most of the increase happens by the early 30s).

- Discounting the value of future years, especially for ‘drop out’, though potentially including the labour of future recruits.

Overall, my guess is that we’re only deploying 1–2% of the net present value of the labour of the current membership. This could be an argument for shifting the balance a bit more towards immediate impact rather than career capital — though this is a really complicated question. Young people often have great opportunities to build career capital, and if these increase their lifetime impact, they should take them, no matter what others in the community are doing.

How quickly has deployed labour increased?

If the percentage of people focused on career capital vs. impact is similar over time, then it should track the stock.

To claw together some rough data, according to the 2019 EA Survey, about 260 people said they’re working at ‘an EA org’ (which includes object-level charities). With an estimated 40% response rate, that would imply 650 people in total, which seems a lot higher than what I would have guessed in 2015.
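The extrapolation from survey respondents to a total is a simple division by the response rate. The sketch below shows it, plus how sensitive the result is to that rate. `implied_total` is a name of my own, and the 30% and 50% rates are hypothetical alternatives included only to show the sensitivity.

```python
def implied_total(respondents: int, response_rate: float) -> float:
    """Scale a survey count up to the whole population for a given response rate."""
    if not 0 < response_rate <= 1:
        raise ValueError("response_rate must be in (0, 1]")
    return respondents / response_rate


ea_org_respondents = 260  # people reporting work at 'an EA org' in the 2019 survey
central_estimate = implied_total(ea_org_respondents, 0.40)

# 0.40 is the estimate used in the text; 0.30 and 0.50 are hypothetical.
for rate in (0.30, 0.40, 0.50):
    total = implied_total(ea_org_respondents, rate)
    print(f"response rate {rate:.0%}: about {total:.0f} people")
```

The estimate moves by a couple of hundred people across this range, so the 650 figure is best read as an order-of-magnitude guide.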

My impression is that most of the most central EA orgs have also grown headcount ~20% per year (e.g. CEA, MIRI, 80K), roughly doubling since 2015, and keeping pace with growth in the number of people.

Working at an EA org is only one option, and a better estimate would aim to track the number of people ‘deployed’ in research, policy, earning to give, etc. as well.

Changes in the overhang: How quickly has funding grown compared to people?

In 2015, I argued there was a funding overhang within meta and longtermist causes. (Though note it’s less obvious there’s a funding overhang within global health, and to a lesser extent animal welfare.) How has this likely evolved?

During 2017–2019, I thought the number of people might have been catching up, but in 2020, it seemed like the growth of each had been roughly similar. A similar rate of proportional growth would mean the absolute size of the overhang was increasing.

As of July 2021 and the latest FTX deal, I now think the amount of funding has grown faster than people, making the growth of the overhang even larger.

Here are some semi-made-up numbers to illustrate the idea:

Suppose that, in 2015:

- There was $10 billion

- There were 2,500 people

- 30% of people are to be employed by EA donors

- The average cost of employing someone is $100,000

In that case, it would take $75 million per year to employ them. But $10 billion can generate perpetual income of $200 million,[2] so the overhang is $125 million per year.

Suppose that in 2021:

- There is $50 billion

- There are 7,500 people.

Then, it would take $225 million to employ 30% of them, but you can generate $1,000 million of income, so the overhang is $775 million per year.
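The two scenarios can be written as one small calculation. This is a sketch under the same semi-made-up assumptions. The function name is mine, and the 2% payout rate is not stated directly but is implied by $10 billion generating $200 million per year.

```python
def yearly_overhang_musd(
    funds_bn: float,
    people: int,
    share_employed: float = 0.30,
    cost_per_person_usd: float = 100_000,
    payout_rate: float = 0.02,  # assumption implied by $10bn -> $200m per year
) -> float:
    """Perpetual yearly income minus yearly employment cost, in millions of dollars."""
    income_musd = funds_bn * 1_000 * payout_rate
    wages_musd = people * share_employed * cost_per_person_usd / 1_000_000
    return income_musd - wages_musd


for year, funds_bn, people in [(2015, 10, 2_500), (2021, 50, 7_500)]:
    overhang = yearly_overhang_musd(funds_bn, people)
    print(f"{year}: overhang of about ${overhang:.0f} million per year")
```

Changing `share_employed` or `cost_per_person_usd` shows how quickly the overhang shrinks if a larger share of people can be employed or if roles cost more to fund.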

Another way to try to quantify the overhang is to try to estimate the financial value of the labour, and compare it to the committed funding. My rough estimate is that the labour is worth $50k to $500k per year. If, after accounting for drop out, the average career has 20 years remaining, that would be $1–10 million per person. (If this seems high, note that it’s driven largely by outliers.) If there are 7,400 people, that would be $7.4bn – $74bn in total (central around $20bn). In comparison, I estimated there is almost $50bn of committed capital, so the value of the labour is most likely lower. In contrast, in the economy as a whole, I think human capital is normally thought to be worth more than physical capital, so the situation in effective altruism is most likely the reverse of the norm.
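The range for the value of labour follows from multiplying the three inputs together. Here is a minimal sketch with variable names of my own. It applies no discounting, in line with the back-of-the-envelope estimate above.

```python
people = 7_400
years_remaining = 20  # average career years left, after accounting for drop out

# Rough yearly value of one person's labour, low and high ends of the range.
value_per_year_low_usd = 50_000
value_per_year_high_usd = 500_000

per_person_low_musd = value_per_year_low_usd * years_remaining / 1e6
per_person_high_musd = value_per_year_high_usd * years_remaining / 1e6

total_low_bn = people * per_person_low_musd / 1_000
total_high_bn = people * per_person_high_musd / 1_000

print(f"Per person: ${per_person_low_musd:.0f}m to ${per_person_high_musd:.0f}m")
print(f"Whole community: ${total_low_bn:.1f}bn to ${total_high_bn:.0f}bn")
```

Only the upper part of this range exceeds the roughly $46 billion of committed capital, which is the basis for saying the value of the labour is most likely lower.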

Note that if there’s an overhang, the money can be invested to deploy later, or spent employing people outside of the community (e.g. funding academic research), so it’s not that the money is wasted — it’s more that we’ll end up missing some especially great opportunities that could have been taken otherwise. These will especially be opportunities that are best tackled by people who deeply share the EA mindset. I’ll talk more about the implications later.

What about the future of the balance of funding vs. people?

Financial investment returns

A major driver of the stock of capital will be the investment returns of Facebook, Asana, FTX, and Ethereum.

If there’s a crash in tech stocks and cryptocurrencies (which seems fairly likely in the short term), the balance could move somewhat back towards people.

In the longer term, I’ll leave it to the reader to forecast the future returns of a portfolio like the above.

Personally, I feel uneasy projecting that U.S. tech stocks will return more than 1–5% per year, due to their high valuations. I expect cryptocurrencies will return more, but with much higher risk.

New donors vs. new people

As noted above, I think it’s more likely than not that another $100 million per year/$20 billion NPV donor enters the community within the coming years. This would be roughly 40% growth in the total stock, compared to 15% per year growth in people, which would shift the balance even more towards funding.

The Metaculus community also estimates there’s a 50% chance of another Good Ventures-scale donor within five years.

After this, I expect it’ll become harder to grow the pool of committed funds at current rates.

Going from $60 billion to $120 billion would require convincing someone worth over $100 billion, like Jeff Bezos, to give a large fraction of their net worth to EA-aligned causes, or convincing around 10 ‘regular’ billionaires to do the same.

That said, it seems possible that the total pledged by all members of the Giving Pledge is around $600 billion. If 20% of them were into EA, that would be $120 billion; four-fold growth from today.

The total U.S. philanthropic sector is $400 billion per year, so if 1% of that was EA aligned, that would be $4 billion per year, which is 10-fold growth from today, and three-fold growth from where I expect us to be in 5–10 years.
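The two scenarios above are simple share-of-market calculations. Here they are as a sketch, with variable names of my own and the rough figures from the text:

```python
# Scenario 1: a share of Giving Pledge commitments becomes EA-aligned (a stock).
giving_pledge_total_bn = 600
ea_share_of_pledge = 0.20
pledge_scenario_bn = giving_pledge_total_bn * ea_share_of_pledge

# Scenario 2: a share of yearly U.S. philanthropy becomes EA-aligned (a flow).
us_philanthropy_per_year_bn = 400
ea_share_of_us_giving = 0.01
annual_scenario_bn = us_philanthropy_per_year_bn * ea_share_of_us_giving

current_annual_bn = 0.42  # ~$420 million deployed per year today
growth_multiple = annual_scenario_bn / current_annual_bn

print(f"Giving Pledge scenario: ${pledge_scenario_bn:.0f} billion committed")
print(f"U.S. giving scenario: ${annual_scenario_bn:.0f} billion per year, "
      f"about {growth_multiple:.1f}x today's giving")
```

Note that the first scenario is a stock of committed funds and the second a yearly flow, so they should be compared against the ~$46 billion stock and the ~$420 million yearly figure respectively.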

Expanding the number of committed community members from ~5,000 to ~50,000 seems pretty achievable given enough time. Only about 10% of U.S. college graduates have even heard of EA, let alone seriously considered its ideas, and it’s even less well known in non-English-speaking countries.

A recent survey of Oxford students found that they believed the most effective global health charity was only ~1.5x better than the average — in line with what the average American thinks — while EAs and global health experts estimated the ratio is ~100x. This suggests that even among Oxford students, where a lot of outreach has been done, the most central message of EA is not yet widely known.

If it seems easier to grow the number of people 10-fold than to grow the committed funds 10-fold, then I expect the size of the overhang will eventually decrease, but this could easily take 20 years, and I expect the overhang is going to be with us for at least the next five years.

A big uncertainty here is what fraction of people will ever be interested in EA in the long term — it’s possible its appeal is very narrow, but happens to include an unusually large fraction of very wealthy people. In that case, the overhang could persist much longer.

Human capital investment returns

One other complicating factor is that, as noted, people’s productivity tends to increase with age, and many community members are focused on growing their career capital.

For instance, if someone goes from a master’s student to a senior government official, then their influence has maybe increased by a factor of 1,000. This could enable the community to achieve far more, and to deploy far more funds, even if the number of people doesn’t grow that much.

Implications for career choice

Here are some very rough thoughts on what this might mean for people who want high-impact careers and feel aligned with the current effective altruism community. I’m going to focus on longtermist and meta causes, since they’re what I know the best and where the biggest overhang exists.

Which roles are most needed?

The existence of a funding overhang within meta and longtermist causes created a bottleneck for the skills needed to deploy EA funds, especially in ways that are hard for people who don’t deeply identify with the mindset.[3]

We could break down some of the key leadership positions needed to deploy these funds as follows:

- Researchers able to come up with ideas for big projects, new cause areas, or other new ways to spend funds on a big scale

- EA entrepreneurs/managers/research leads able to run these projects and hire lots of people

- Grantmakers able to evaluate these projects

These correspond to bottlenecks in ideas, management, and vetting, respectively.

Given that many of the most promising projects involve research and policy, I’d say there’s a special need to have these skills within those sectors, as well as within the causes longtermists are most focused on, such as AI and biosecurity (e.g. someone who can lead an AI research lab; the kind of person who can found CSET). That said, I hope that longtermists expand into a wider range of causes, and there are opportunities in other sectors too.

The skill sets listed above seem likely to always be very valuable. As an illustration, these skill sets also seem very valuable within global health (and typically more valuable than earning to give), though there’s less obviously an overhang there.

But the presence of the overhang makes them even more valuable. Finding an extra grantmaker or entrepreneur can easily unlock millions of dollars of grants that would otherwise be left invested.[4]

I have thought these roles were some of the most needed in the community since 2015, and now that the overhang seems even bigger — and seems likely to remain big for 10 years — I think they’re even more valuable than I did back then.

Personally, if given the choice between finding an extra person who’s a good fit for one of these roles and finding someone donating $X million per year, X would typically need to be over three, and often over 10, for me to think the two options were similarly valuable (though this hugely depends on fit and the circumstances).

This would also mean that if you have a 10% chance of succeeding, then the expected value of the path is $300,000–$2 million per year (and the value of information will be very high if you can determine your fit within a couple of years).

The funding overhang also created bottlenecks for people able to staff projects, and to work in supporting roles. For each person in a leadership role, there’s typically a need for at least several people in the more junior versions of these roles or supporting positions — e.g. research assistants, operations specialists, marketers, ML engineers, people executing on whatever projects are being done, etc.

I’d typically prefer someone in these roles to an additional person donating $400,000–$4 million per year (again, with huge variance depending on fit).

The bottleneck for supporting roles has, however, been a bit smaller than you might expect, because the number of these roles was limited by the number of people in leadership positions able to create these positions.

I think for the more junior and supporting roles there was also a vetting bottleneck. I’m unsure if there were infrastructure or coordination bottlenecks beyond the factors mentioned, but it seems plausible.

How should individuals respond to these needs?

If you might be able to help fill one of these key bottlenecks, there’s a good chance it’ll be the highest impact thing you can do.

Ideally, you can shoot for the tail outcome of a leadership role within one of these categories (e.g. becoming a grantmaker, manager, or someone who finds a new cause area). Aiming for a leadership position also sets you up to go into a highly valuable supporting or more junior equivalent role (e.g. being a researcher for a grantmaker, or being an operations specialist working under a manager).

Your next step will likely involve trying to gain career capital that will accelerate you in this path. Depending on what career capital you focus on, there could be many other strong options you could switch to otherwise (e.g. government jobs).

Be aware that the leadership-style roles are very challenging — besides being smart and hardworking, you need to be self-motivated, independently minded, and maybe creative. They also typically require deep knowledge of effective altruism, and a lot of trust from — and a good reputation within — the community. It’s difficult to become trusted with millions of dollars or a team of tens of people.

So, no one should assume they’ll succeed, and everyone should have a backup plan.

The ‘supporting’ roles are also more challenging than you might expect. Besides also requiring a significant amount of skill and trust (though less than the leadership roles), there’s a lack of mentorship capacity, and their creation is limited by the number of people in leadership roles.

On our job board, I counted ~40 supporting roles within our top recommended problem areas posted within the last two months, so there are perhaps 240 per year.

This compares to ~7,400 engaged community members, of whom perhaps 1,000 are early career and looking to start these kinds of jobs.

So there are a significant number of opportunities, and given their impact I think many people should pursue them, but it’s important to know there’s a reasonable chance it doesn’t work out.
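To make the arithmetic behind these rough figures explicit, here is a minimal Python sketch. The inputs are the guesses from the text; the function and variable names are hypothetical, and the sketch assumes roles are posted at a steady rate through the year. The final ratio is only an illustration of scale, not a chance of being hired, since roles are also filled by people outside this group and not everyone applies in a given year.

```python
def annualise(count: int, window_months: int) -> float:
    """Scale a count observed over `window_months` up to a 12-month figure.

    Assumption: postings arrive at a steady rate all year.
    """
    return count * 12 / window_months


# Rough inputs taken from the text.
roles_seen = 40                 # supporting roles counted on the job board
window_months = 2               # period over which they were posted
early_career_seekers = 1_000    # rough guess at people looking for these jobs

roles_per_year = annualise(roles_seen, window_months)

# Illustrative ratio only: supporting roles per early-career seeker per year.
roles_per_seeker = roles_per_year / early_career_seekers

print(f"Estimated supporting roles per year: {roles_per_year:.0f}")
print(f"Roles per early-career seeker per year: {roles_per_seeker:.2f}")
```

On these inputs there is roughly one supporting role posted each year for every four people who might want one, which is why a backup plan matters.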

If you’re unsure of your chances of eventually being able to land a supporting role, then build career capital towards those roles, but focus on ways of gaining career capital that also take you towards 1–2 other longer-term roles you find attractive.

I want to be honest about the challenges of these roles so that people know what they’re in for, but I’m also very concerned about being too discouraging.

We meet many people who are under-confident in their abilities, and especially their potential to grow over the years.

I think it’s generally better to aim a bit high than too low. If you succeed, you’ll have a big impact. If it doesn’t work out, you can switch to your plan B instead.

Trying to fill the most pressing skill bottlenecks in the world’s most pressing problems is not easy, and I respect anyone who tries.

What does this mean for earning to give?

Note: On May 12, 2023, we released a blog post on updates we made to our advice after the collapse of FTX.

The success of FTX is arguably a huge vindication of the idea of earning to give, and so in that sense it’s a positive update.

On balance, however, I think the increase in funding compared to people is an update against the value of earning to give at the margin.

This doesn’t mean earning to give has no value:

- Medium-sized donors can often find opportunities that aren’t practical for the largest donors to exploit: the ecosystem needs a mixture of ‘angel’ donors to complement the ‘VCs’ like Open Philanthropy. Open Philanthropy isn’t covering many of the problem areas listed here and often can’t pursue small individual grants.

- You can save money, invest it, and spend when the funding overhang has decreased, or in order to practice patient philanthropy more generally.

- You could support causes that seem more funding constrained, like global health.

But I do think the relative value of earning to give has fallen over time, as the overhang has increased.

Overall, I would encourage people early in their career to very seriously consider options besides earning to give first.

If you’re already earning to give — and especially if you don’t seem to have a chance of tail outcomes (e.g. startup exit) — I’d encourage you to seriously consider whether you could switch.

That said, there are definitely people for whom earning to give remains their overall top option, especially if they have personal constraints, can’t find another role that’s a good fit, have unusually high earnings, or are learning a lot from their job (and might switch out later).

Other jobs

I’ve focused on earning to give and jobs working ‘directly’ to deploy EA funds, but I definitely don’t want to give the impression these are the only impactful jobs.

I continue to think that jobs in government, academia, other philanthropic institutions and relevant for-profit companies (e.g. working on biotech) can be very high impact and great for career capital.

For instance, it would be possible for the community to have an absolutely massive impact via improving government policy around existential risks, and this doesn’t require anyone to get a job ‘in EA’.

I don’t discuss them more here because they don’t require EA funding to pursue, so their expected impact isn’t especially affected by the size of the funding overhang. I’d still encourage readers to consider them.

Related posts

You can comment on this post on the Effective Altruism Forum.

- Part 2: The allocation of resources across cause areas.

- What does the growth of effective altruism imply about our priorities and level of ambition?

What’s next

If you think you might be able to help deal with one of the key bottlenecks mentioned, or are interested in switching out of earning to give, we’ve recently ended the waitlist for our one-on-one advice, and would encourage you to apply.

You might also be interested in:

- What the effective altruism community most needs. A conversation between me and Arden Koehler, covering talent vs. funding gaps in more depth.

- Why do some organisations say their recent hires are worth so much?

- Podcast: Sam Bankman-Fried on taking a high-risk approach to crypto and doing good

Stay up to date on new research like this by following me on Twitter.

Notes and references

- Looking backwards, the main thing we care about is actual impact. Personally I think the EA community has had a lot of success doing things like turning AI safety into an accepted field, funding malaria prevention, scaling up cage-free campaigns etc., though this is a matter of judgement.↩

- I assume a perpetual withdrawal rate of 2%. Studies of the market in the past often find that it’s possible to withdraw 2–3% from a portfolio that’s mainly equities and not decrease your capital in real terms. 2% is also in line with the current dividend yield of global stocks — the capital should roughly track global nominal GDP, so the 2% represents what can be withdrawn each year.

This 2% figure could be very conservative: if EA donors can earn higher returns than, say, an 80% equity portfolio, as they have historically, then it’ll be possible to withdraw a lot more. This would make the funding overhang a lot larger.

I’ve also compared a perpetual withdrawal rate with the current stock of people, but the typical career of a member will only last 30 years, so it might have been better to assume we also want to spend down the capital over 30 years. In that case, you could likely withdraw 3–4% (a typical safe withdrawal rate for a 30-year retirement), which could as much as double the available income.↩

- For instance, there’s a pre-existing community doing conventional biosecurity, which made it easier to turn money into progress on biosecurity despite a lack of EA community members working in the area. In contrast, there was no existing AI safety community, which has meant it has taken longer to deploy funds there.↩

- The more reason for urgency, the bigger these bottlenecks. If you’d like to see more patient philanthropy, then it might be fine just to keep all the funding invested to spend later.↩
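The withdrawal-rate comparison in the note above on the 2% assumption can be sketched in a few lines of Python. This is a rough illustration only: it applies each rate to the ~$46 billion of committed funds estimated in this post, the function name is hypothetical, and it ignores investment returns, inflation, and changes in the capital over time.

```python
COMMITTED_FUNDS_BN = 46.0  # total committed funds in $ billions (estimate from this post)


def annual_income_bn(capital_bn: float, withdrawal_rate: float) -> float:
    """Income per year, in $ billions, from withdrawing a fixed share of capital."""
    return capital_bn * withdrawal_rate


# Baseline: the perpetual 2% withdrawal rate assumed in the note.
baseline = annual_income_bn(COMMITTED_FUNDS_BN, 0.02)

# Compare with the 3-4% range suggested for a 30-year spend-down.
for rate in (0.02, 0.03, 0.04):
    income = annual_income_bn(COMMITTED_FUNDS_BN, rate)
    print(f"{rate:.0%}: ${income:.2f}bn per year ({income / baseline:.1f}x the 2% baseline)")
```

At 4%, the available income is twice the 2% baseline, which is the upper end of the range mentioned in the note.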