Quantification as a Lamppost in the Dark – Quantification – Part 3

Late one evening a police officer comes across a man on his way home from a party. The man is quite drunk and is looking for something under a lamppost. “What are you looking for?” asks the policeman. “My keys,” the man replies, pointing down the road a little way. “I dropped them over there.” The policeman is baffled: “Then why are you looking for them here?” “Because there’s no light over there.”

The joke is old but it gets to the heart of the debate over quantification. Is it best to look for keys under lampposts? Quantification lets us use a whole host of numerical tools to understand problems and develop solutions to them. But as I discussed last time there are dangers in using such tools on the wrong kinds of goals. In this way quantification is like the lamppost. It lets you see far more clearly what is going on, but only in some limited areas.

So what tells us how good an idea it is to look under lampposts? (Or: what kinds of problems should we use quantitative methods on?)

- How many near misses there are. I’ll discuss this below.

- How bright the light is, i.e. how much information the tool gives you.

- Where you think you dropped the keys, i.e. if you suspect that the thing you are looking for cannot be analysed by these methods, then don’t use them.

Last time we dealt with the biases and limitations of quantitative methods. With all these in mind, we might think it at least possible that the best interventions will generally not be quantifiable. We might think that the revolutionary overthrow of governments, or some other project, is on average the best thing to do, even though its risks are high and almost impossible to quantify. I want to argue that even if you think the best things aren’t under lampposts, it can still be best to look there.

You can only do so much, so make it count.

The key fact about any intervention is that we have finite resources. You have only so many hours, only so much money, only so many favours you can call in. But there are many projects to choose from: there are 170,000 registered charities in the UK alone. And these are far from equally good. In health there are interventions that are 1000 times less effective than others. It isn’t like looking for one key; it’s like looking for the best things you can find when you can only carry so much.

Imagine the street holds not keys but banknotes of all denominations, with a few $1000 bills in amongst the many $1 bills. Suppose you can only carry so many notes (you have only so many hours to work in a day). Now it most likely does make sense to look under a lamppost. If you look in the dark you pick up a random selection: a handful of notes with lots of $1s and a few $20s. If you look under the lamppost you can pick up only the $1000 bills. In fact, for it to make any sense to look in the dark, it is not enough to expect the notes over there to be worth more on average. You’d have to expect the average note over there to be worth more than the very best notes under the lamppost.
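To make the banknote picture concrete, here is a minimal sketch in Python. Everything in it is an assumption made up for illustration: the two streets hold 1,000 notes each, note values are drawn from the same log-normal distribution, you can carry 10 notes, and the function names `best_haul` and `blind_haul` are hypothetical. It is a toy model, not evidence about real interventions.

```python
import random

def best_haul(notes, carry):
    """Under the lamppost: you can read each note, so keep the most valuable ones."""
    return sum(sorted(notes, reverse=True)[:carry])

def blind_haul(notes, carry, rng):
    """In the dark: you cannot read the notes, so you keep a random handful."""
    return sum(rng.sample(notes, carry))

rng = random.Random(0)  # fixed seed so the run is repeatable
CARRY = 10              # assumed number of notes you can carry

# Hypothetical streets: 1,000 notes each, values drawn from the same skewed distribution.
lit_street = [rng.lognormvariate(0, 1.5) for _ in range(1000)]
dark_street = [rng.lognormvariate(0, 1.5) for _ in range(1000)]

lit = best_haul(lit_street, CARRY)
dark = blind_haul(dark_street, CARRY, rng)

# How many times more valuable the average dark note would need to be for a
# blind handful to match, in expectation, the best handful under the light.
break_even = (lit / CARRY) / (sum(dark_street) / len(dark_street))

print(f"lamppost haul: {lit:.1f}")
print(f"dark haul:     {dark:.1f}")
print(f"break-even multiplier for the dark street: {break_even:.1f}")
```

The break-even multiplier is the number to watch: it says how much richer the dark street would have to be, note for note, before searching blind is worth it. Changing the assumed spread or the number of notes you can carry will move it.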

The point is that however much quantitative methods limit our focus they let us pick things that are vastly more effective than the average. Other methods might promise a dramatic effect in some cases but quantitative methods promise to give you the best intervention of one type almost every time. For it to make sense to reject quantitative methods we’d have to expect that the average intervention from the non-quantitative camp would be better than the best method in the quantitative camp.

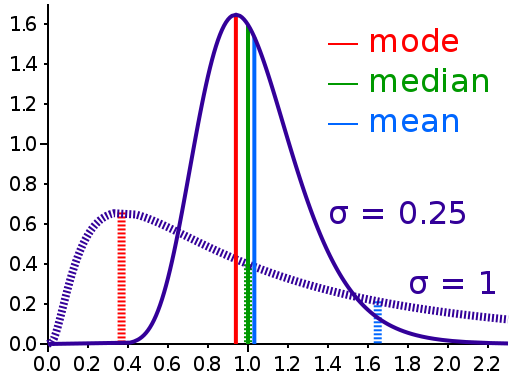

Axes: effectiveness (x-axis) against number of interventions (y-axis).

The effectiveness of interventions often follows something like a highly skewed log-normal distribution: a small number are vastly better than the rest. Choosing the best intervention is therefore a very significant gain.
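For a sense of what that skew means, here is a small sketch using the standard formulas for a log-normal distribution: the value at a given percentile is exp(sigma * z) times the median, and the mean is exp(sigma² / 2) times the median. The spread values `sigma` below are hypothetical and are not estimated from any real data on interventions.

```python
from math import exp
from statistics import NormalDist

def times_median(percentile, sigma):
    """Value at `percentile` of a log-normal, as a multiple of its median."""
    z = NormalDist().inv_cdf(percentile)  # standard normal quantile
    return exp(sigma * z)

def mean_over_median(sigma):
    """Mean of a log-normal as a multiple of its median."""
    return exp(sigma ** 2 / 2)

# Hypothetical spreads, from mildly to heavily skewed.
for sigma in (0.5, 1.0, 1.5, 2.0):
    print(
        f"sigma={sigma}: top 1% starts at {times_median(0.99, sigma):.1f}x the median, "
        f"mean is {mean_over_median(sigma):.1f}x the median"
    )
```

The wider the spread, the further the best interventions pull away from the typical one, and the more there is to gain from being able to tell them apart.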

How good are the alternatives?

The next question is how much more information we get out of quantitative methods compared to intuitive, philosophical or categorical tools. This is hard to measure exactly because, almost by definition, non-quantitative tools resist analysis of their own effectiveness. But we do know that, without care, human thinking is riddled with biases and bad heuristics. Most people will spend just as much money to save 2,000 birds from an oil spill as to save 200,000. This is typical of how we think. It means that interventions which seem intuitively good can end up having no effect or even causing harm.

Different philosophical schools and other non-quantitative tools of analysis are not obviously good at combating these biases. Consider economics: academics who use non-quantitative approaches are as varied as Marxists and Friedmanites. There is nowhere near this diversity in quantitative econometrics. This raises the question of how confident the Friedmanite can realistically be that they are even close to a right answer. The light of quantification is very bright indeed.

Final thought:

For a non-quantifiable intervention to be a sensible choice, we have to be confident that we do not need the bright light of quantification. What interventions from hard-to-quantify areas like political lobbying do you expect to be 1000 times more effective than a typical measure in something like public health? Comments are open for thoughts on that or any other part of this post.
