Doing good together: how to coordinate effectively and avoid single-player thinking

Sapiens can cooperate in flexible ways with countless numbers of strangers. That’s why we rule the world, whereas ants eat our leftovers and chimps are locked up in zoos.

The historian Yuval Harari claims in his book Sapiens that better coordination has been the key driver of human progress. He highlights innovations like language, religion, human rights, nation states and money as valuable because they improve cooperation among strangers.1

If we work together, we can do far more good. This is part of why we helped to start the effective altruism community in the first place: we realised that by working with others who want to do good in a similar way — based on evidence and careful reasoning — we could achieve much more.

But unfortunately we, like other communities, often don’t coordinate as well as we could.

Effective altruism can easily encourage a ‘single-player’ mindset – one that tries to identify the best course of action while assuming that what everyone else does is fixed. This can be a reasonable assumption in many circumstances, but once you’re part of a community that does respond to your actions, it can lead to suboptimal choices.

For instance, a single-player mindset can suggest trying to find a job that no one else would do, so that you’re not replaceable. But in a community where others share your values, someone else is going to fill the most impactful positions. Instead, it’s better to take a portfolio approach – think about the optimal allocation of job seekers across open roles, and try to move the allocation towards the best one. This means focusing more on your comparative advantage in relation to other job seekers.

Taking a community perspective can also suggest trying much harder to uphold certain character virtues, such as honesty, helpfulness and reliability; and to invest in community infrastructure.

In this article, we’ll provide an overview of everything we’ve learned about how best to coordinate and how individuals within a group can best work together to increase their collective impact.

We’ll start by covering the basic mechanisms of coordination, including the core concepts in economics that are relevant and some new concepts like indirect trade and shared aims communities. These mechanisms don’t only allow us to understand how to better work with others, but are also relevant to many global problems, which can be thought of as coordination failures.

We’ll then show how these mechanisms lead to some practical advice, focusing on the effective altruism community as a case study. However, we think the same ideas apply to many communities with common aims, such as environmentalism, social enterprise and international development.

We still face a great deal of uncertainty about these questions – many of which have seen little study – which also makes this one of the more intellectually interesting topics we write about. We’d like to see a new research programme focused on these questions. See this research proposal by Max Dalton and the Global Priorities Institute’s research agenda.

Reading time: 40 minutes.

Thank you especially to Max Dalton for comments on this article, as well as Carl Shulman, Will MacAskill, and many others.

Table of Contents

- 1 The bottom line

- 2 Theory: what are the mechanisms behind coordination?

- 2.1 Single-player communities and their problems

- 2.2 Market communities

- 2.3 How to avoid coordination failures in self-interested communities, and one reason it pays to be nice

- 2.4 Preemptive and indirect trade

- 2.5 Shared aims communities and ‘trade+’

- 2.6 Summary: how can shared aims communities best coordinate?

- 3 1. Adopt nice norms to a greater degree

- 4 2. Value ‘community capital’ and invest in community infrastructure

- 5 3. Take the portfolio approach

- 5.1 Introducing the approach

- 5.2 Be more willing to specialise

- 5.3 Do more to gain information

- 5.4 Spread out more

- 5.5 Consider your comparative advantage

- 5.6 When deciding where to donate, consider splitting or thresholds

- 5.7 Consider community investments as a whole

- 5.8 Wrapping up on the portfolio approach

- 5.9 Thinking about your career?

- 5.10 Additional topic: How to attribute impact in communities

- 6 What happens when you’re part of several communities?

- 7 Conclusion: moving away from a naive single-player analysis

- 8 Learn more

- 9 Read next

The bottom line

Through trade, coordination, and economies of scale, individuals can achieve greater impact by working within a community than they could working individually.

These opportunities are often overlooked when people make a ‘single-player’ analysis of impact, in which the actions of others are held fixed. This can lead to inaccurate estimates of impact and missed opportunities for trade, and it can leave people open to coordination failures such as the prisoner’s dilemma.

Coordinating well requires having good mechanisms, such as markets (e.g. certificates of impact), norms that support coordination, common knowledge, and other structures.

Communities of entirely self-interested people can still cooperate to a large degree, but “shared aims” communities can likely cooperate even more.

For the effective altruism community to coordinate better we should:

- Aim to uphold ‘nice norms’, such as helpfulness, honesty, compromise, and friendliness to a greater degree, and also be willing to withhold aid from people who break these norms.

- Consider the impact of our actions on ‘community capital’: the potential of the community to have an impact in the future. We might prioritise actions that grow the influence of the community, improve the community’s reputation, set an example by upholding norms, or set up community infrastructure to spread information and enable trades.

- Work out which options to take using a ‘portfolio approach’ in which individuals consider how they can push the community towards the best possible allocation of its resources. For example, this means considering one’s comparative advantage as well as personal fit when comparing career opportunities.

When allocating credit in a community, people shouldn’t just carry out a simple counterfactual analysis. Rather, if they take an opportunity that another community member would have taken otherwise, then they also ‘free up’ community resources, and the value of these needs to be considered as well. When actors in a community are “coupled,” then they need to be evaluated as a single agent when assigning credit.

You can be coordinating with multiple communities at once, and communities can also coordinate among themselves.

Theory: what are the mechanisms behind coordination?

What is coordination, why is it important, and what prevents us from coordinating better? In this section, we cover these theoretical questions.

In the next section, we’ll apply these answers to come up with practical suggestions for how to coordinate better in the effective altruism community in particular. If you just want the practical suggestions, skip ahead.

Much of coordination is driven by trade — swaps that members of the community make with each other for mutual benefit. We’ll introduce four types of trade used to coordinate. Then we’ll introduce the concept of coordination failures, and explain how they can be avoided through norms and other systems.

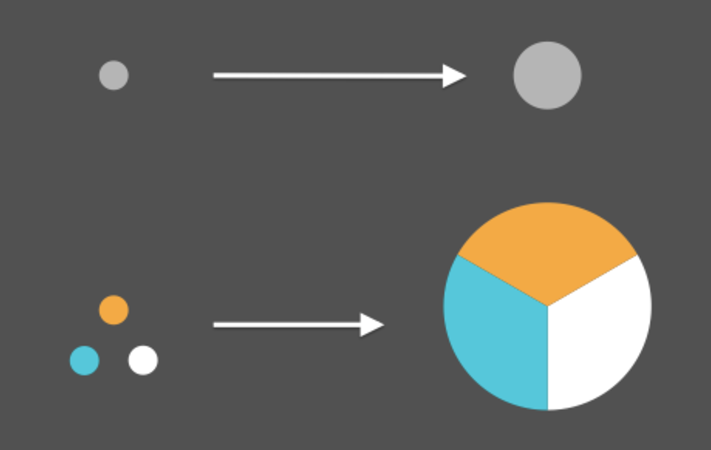

We’ll show how coordinating communities, such as ‘market’ and ‘shared aims’ communities, can achieve a greater impact than ‘single-player’ communities.

Single-player communities and their problems

When trying to do good, one option is to ignore coordination. In 2016, we called this the ‘single-player’ approach.

In the single-player approach, each individual works out which action is best at the margin according to their goals, assuming that everyone else’s actions stay constant.

Assuming what everyone else does is constant is a good bet in many circumstances. Sometimes your actions are too small to impact others, or other actors don’t care what you do.

For instance, if you’re considering donating in an area where all the other donors give based on emotional connection, then their donations won’t change depending on how much you give. So, you can just work out where they’ll give, then donate to the most effective opportunity that’s still unfunded.

This style of analysis is often encouraged within the effective altruism community, and introductions to effective altruism often include ‘thinking at the margin’ as a key concept.

However, when you’re part of a community (and in other situations), other actors are responsive to what you do, and this assumption breaks down. This doesn’t mean that thinking at the margin is totally wrong; rather, it means you need to be careful to define the right margin. In a community, a marginal analysis needs to take account of how the actions of others will change in response to what you do.

Ignoring this responsiveness can cause you to have less impact in a number of ways.

First, it can lead you to over- or underestimate the impact of different actions. For instance, if you think the Global Priorities Institute (GPI) currently has a funding gap of $100,000, you might make a single-player analysis that donations up to this limit will be about as cost-effective as GPI on average. But in fact, if you don’t donate, then other donors will probably come in to fill the gap, at least over time, making your donations less effective than they first seem.2

On the other hand, if you think other donors will donate anyway, then you might think your donations will have zero impact. But that’s also not true. If you fill the funding gap, then it frees up other donors to take different opportunities, while saving the leaders of the institute time they would have spent fundraising.

Either way, you can’t simply ignore what other donors will do. We’ll explain how donors in a community should decide where to give and attribute impact later.

Second, and more importantly, taking a single-player approach overlooks the possibility of trade.

Trade is important because everyone has different strengths, weaknesses and resources. For instance, if you know a lot about charity A, and another donor knows a lot about charity B, then you can swap information. This allows you to both make better decisions than if you had decided individually.

By making swaps, everyone in the community can better achieve their goals — it’s positive sum. Much of what we’ll talk about in this article is about how to enable more trades.

Being able to trade doesn’t only enable useful swaps. Over time, it allows people to specialise and achieve economies of scale, allowing for an even greater impact. For instance, a donor could become a specialist in health, then tell other donors about the best opportunities in that area. Other donors can specialise elsewhere, and the group can gain much better expertise than they could alone. They can also share resources, such as legal advice, so each get more for the same cost.

Third, sometimes, following individual interest leads to worse outcomes for everyone. A single-player approach can lead to ‘coordination failures.’ The most well-known coordination problem is the prisoner’s dilemma.3 In case you’re not already familiar with the prisoner’s dilemma, one round works as follows:

Imagine that you and a co-conspirator have been arrested after robbing a bank, and are being held in separate jail cells. Now you must decide whether to ‘cooperate’ with each other — by remaining silent and admitting nothing — or to ‘defect’ from your partnership by ratting out the other to the police. You know that if you both cooperate with each other and keep silent, the state doesn’t have enough evidence to convict either one of you, so you’ll both walk free, splitting the loot — half a million dollars each, let’s say. If one of you defects and informs on the other, and the other says nothing, the informer goes free and gets the entire million dollars, while the silent one is convicted as the sole perpetrator of the crime and receives a ten-year sentence. If you both inform on each other, then you’ll share the blame and split the sentence: five years each.

Here’s a table of the payoffs:

| | Other person: remain silent (cooperate) | Other person: rat out other person (defect) |
|---|---|---|
| You: remain silent (cooperate) | You: $0.5m. Them: $0.5m | You: 10 years in jail. Them: $1m |
| You: rat out other person (defect) | You: $1m. Them: 10 years in jail | You: 5 years in jail. Them: 5 years in jail |

Here’s the problem. No matter what your accomplice does, it’s always better for you to defect. If your accomplice has ratted you out, ratting them out in turn will give you five years of your life back — you’ll get the shared sentence (five years) rather than serving the whole thing yourself (ten years). And if your accomplice has stayed quiet, turning them in will net you the full million dollars — you won’t have to split it. No matter what, you’re always better off defecting than cooperating, regardless of what your accomplice decides. To do otherwise will always make you worse off, no matter what. In fact, this makes defection not merely the equilibrium strategy but what’s known as a dominant strategy.

In standard cases of trade, both people act in their self-interest, but it leads to a win-win situation that’s better for both. However, in the prisoner’s dilemma, acting in a self-interested way leads, due to the structure of the scenario, to a lose-lose. We’re going to call a situation like this a ‘coordination failure.’ (More technically, you can think of it as when the Nash equilibrium of a coordination game is not the optimum.)
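To make the structure concrete, here is a minimal Python sketch that checks the payoff table above. The numeric utilities are an assumption made purely for illustration: money is counted in millions of dollars and each year in jail is counted as -1. The helper names `is_dominant` and `is_nash` are likewise hypothetical.

```python
from itertools import product

C, D = "cooperate", "defect"
ACTIONS = (C, D)

# Assumed utilities: dollars in millions, each jail year counted as -1.
# PAYOFF[(you, them)] = (your utility, their utility)
PAYOFF = {
    (C, C): (0.5, 0.5),
    (C, D): (-10.0, 1.0),
    (D, C): (1.0, -10.0),
    (D, D): (-5.0, -5.0),
}

def is_dominant(action):
    """True if `action` is strictly better for you whatever the other does."""
    return all(
        PAYOFF[(action, other)][0] > PAYOFF[(alt, other)][0]
        for other in ACTIONS
        for alt in ACTIONS
        if alt != action
    )

def is_nash(you, them):
    """True if neither player gains by unilaterally switching."""
    you_ok = all(PAYOFF[(you, them)][0] >= PAYOFF[(alt, them)][0] for alt in ACTIONS)
    them_ok = all(PAYOFF[(you, them)][1] >= PAYOFF[(you, alt)][1] for alt in ACTIONS)
    return you_ok and them_ok

nash_equilibria = [pair for pair in product(ACTIONS, ACTIONS) if is_nash(*pair)]
best_joint = max(PAYOFF, key=lambda pair: sum(PAYOFF[pair]))

print(is_dominant(D))      # True: defecting is better whatever the other does
print(nash_equilibria)     # [('defect', 'defect')]
print(best_joint)          # ('cooperate', 'cooperate')
```

Under these assumed numbers, the only equilibrium is mutual defection, while the outcome with the highest combined payoff is mutual cooperation. That gap is what we mean by a coordination failure.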

We’re going to come back to this concept regularly over the rest of this article. Although there are other forms of coordination failure, the prisoner’s dilemma is perhaps the most important, because it’s very simple, but it’s also difficult to avoid because everyone has an incentive to defect.

This makes it a good test case for a community: if you can avoid prisoner’s-dilemma-style coordination failures, then you’ll probably be able to overcome easier coordination problems too. So, much of this discussion about how to coordinate is about how to overcome the prisoner’s dilemma.

Situations with a structure similar to the prisoner’s dilemma seem fairly common in real life. They arise when there is potential for a positive sum gain, but taking it carries a risk of getting exploited.

For instance, elsewhere, we’ve covered the example of two companies locked in a legal battle. If you hire an expensive lawyer, and your opponent doesn’t, then you’ll win. If your opponent hires an expensive lawyer as well, then you tie. However, if you don’t hire an expensive lawyer, then you’ll either tie or lose. So, either way, it’s better to hire the expensive lawyer.

However, it would have been best for both sides if they had agreed to hire cheap lawyers or settle quickly. Then they could have split the saved legal fees and come out ahead.4

If we generalise the prisoner’s dilemma to multiple groups, then we get a ‘tragedy of the commons.’ These also seem common, and in fact lie at the heart of many key global problems.

For instance, it would be better for humanity at large if every country cut their carbon emissions to avoid the possibility of catastrophic climate change.

However, from the perspective of each individual country, it’s better to defect — benefit from the cuts that other countries make, while gaining an economic edge.
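The same logic can be sketched for many players. The example below is a toy model with made-up numbers, not a model of real climate policy: we assume ten countries, that cutting emissions costs a country 3 units, and that each cut produces 10 units of benefit shared equally among all countries. The function `payoff` and its parameters are hypothetical.

```python
def payoff(i_cut, others_cutting, n=10, cost=3.0, benefit=10.0):
    """Payoff to one country, given its own choice and how many others cut.

    Every cut yields `benefit`, shared equally among all `n` countries;
    only the countries that cut pay `cost`. All numbers are assumptions.
    """
    cutters = others_cutting + (1 if i_cut else 0)
    return cutters * benefit / n - (cost if i_cut else 0.0)

# Whatever the others do, a single country does better by not cutting...
for k in range(10):
    assert payoff(False, k) > payoff(True, k)

# ...yet everyone cutting beats no one cutting.
print(payoff(True, 9))   # 7.0 when all ten countries cut
print(payoff(False, 0))  # 0.0 when nobody cuts
```

With these assumed values, each country’s own cut returns only 1 unit to itself at a cost of 3, so defecting always looks better individually, even though universal cooperation leaves every country better off.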

Coordination failures also crop up in issues like avoiding nuclear war, and the development of new technologies that provide benefits to their deployer but create global risks, such as AI and biotechnology.

You can see a list of other examples on Wikipedia and in this article, or for a more poetic introduction, see Meditations on Moloch.

Summing up, there are several problems with single-player thinking:

- Broadly, if other actors in a community are responsive to what you do, then ignoring these effects will cause you to mis-estimate your impact.

- One particularly important mistake is to overlook the possibility of trade, which can enable each individual to have a much greater impact since it can be positive sum, and it can also enable specialisation and economies of scale.

- Single-player thinking also leaves you vulnerable to coordination failures such as the prisoner’s dilemma, reducing impact.

So, how can we enable trade and avoid coordination failures, and therefore maximise the collective impact of a community? We’ll now introduce several different types of mechanisms to increase coordination.

Market communities

One option to achieve more coordination is to set up a ‘market.’ In a market, each individual has their own goals, but they make bids for what they want with other agents (often using a common set of institutions or intermediaries). This is how we organise much of society — for instance, buying and selling houses, food, and cars.

Market communities facilitate trade. For instance, if you really want to rent a house with a nice view, then you can pay more for it, and in doing so, compensate someone else who doesn’t value the view as much. This creates a win-win — you get the view, and the other person gets more money, which they can use to buy something they value more.

If the market works well, then the prices will converge towards those where everyone’s preferences are better satisfied.

The price signals then incentivise people to build more of the houses that are most in-demand. And then we also get specialisation and economies of scale.

It’s possible to trade directly through barter, but this involves large transaction costs (and other issues). For instance, if you have a spare room in your house and want to use it in a barter, you need to find someone who wants your room who has something you want in return, which is hard. It’s far easier to use a currency — rent the room for money, then you can spend the money on whatever you want in return.

The introduction of a currency allows far more trades to be made, making it arguably one of the most important innovations in history.

There have been some suggestions to use markets to enable more trade within the effective altruism community, such as certificates of impact — an alternative funding model for nonprofits.

Today, nonprofits make a plan, then raise money from donors to carry out the plan. With certificates of impact, once someone has an impact, they make a certificate, which they sell to donors in an auction.

These certificates can be exchanged, creating a market for impact. This creates a financial incentive for people to accurately understand the value of the certificates and to create more of them (i.e. do more good). There are many complications with the idea, but there’s a chance this funding model could be more efficient than the nonprofit sector’s current one. If you’re interested, we’d encourage you to read more.

Market communities can support far more coordination, and are a major reason for the wealth of the modern world. However, market communities still have downsides.

The key problem is that market prices can fail to reflect the prices that would be optimal from a social perspective due to “market failures”.

For instance, some actions might create effects on third parties, which aren’t captured in prices — “externalities.” Or if one side has better information than another (“asymmetric information”), then people might refuse to trade due to fear of being exploited (the “lemon problem”).

Likewise, people who start with the most currency get the most power to have their preferences satisfied. If you think everyone’s preferences count equally, this could lead to problems.

Market communities can also still fall prey to coordination failures like the tragedy of the commons.

Even absent these problems, market trades often still involve significant transaction costs, so mutually beneficial trades can fail to go ahead. It’s difficult to find trading partners, verify that they upheld their end of the bargain, and enforce contracts if they don’t.

When these transaction costs get too high relative to the gains from the trade, the trade stops being worthwhile.

Transaction costs tend to be low in large markets for simple goods (e.g. apples, pencils), but high for complex goods where it’s difficult to ascertain their quality, such as hiring someone to do research or management. In these cases, there can be large principal-agent problems. The basic idea is that the hiring manager (the “principal”) doesn’t have perfect oversight. So if there are even small differences in the aims of the person being hired (the “agent”) and the person who hired them, then the agent will typically do something quite different from what the principal would most want in order to further the agent’s own aims.

For instance, in divorce proceedings, the lawyers will often find small ways to spark conflict and draw out the process, because they’re paid per hour. This can often make the legal fees significantly higher than what would be ideal from the perspective of the divorcing couple.

Principal-agent problems seem to be one of the mechanisms behind community talent constraints.5

Economists spend most of their efforts studying market mechanisms for coordination, which makes sense given that markets have unleashed much of the wealth of the modern world. However, it’s possible to have coordination through other means. In the next section, we’ll discuss some ways to avoid coordination failures and then non-market mechanisms of trade.

How to avoid coordination failures in self-interested communities, and one reason it pays to be nice

Even if agents are selfish, and only care about their own aims, it’s still possible to avoid coordination failures. We’ll cover three main types of solutions to coordination failures that communities can use to have a greater collective impact: coordination structures, common knowledge, and norms.

Through coordination structures

One way to avoid a prisoner’s dilemma is to give power to another entity that enforces coordination. For instance, if each prisoner is a member of the mafia, then they know that if they defect they’ll be killed. This means that each prisoner will be better off by staying quiet (regardless of what the other says), and this lets them achieve the optimal joint outcome. Joining the mafia changed the incentive structure, allowing a better result.

In a more moderate way, we do this in society with governments and regulation. Governments can (in theory) mandate that everyone take the actions that will lead to the optimal outcome, then punish those who defect.

Governments can also enforce contracts, which provide another route to avoiding coordination failures. Instead of the mafia boss who will kill prisoners who defect, each prisoner could enter into a contract saying they will pay a penalty if they defect (where the penalty is high enough to make it clearly worth coordinating).
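As a rough illustration of how an enforced penalty changes the incentives, the sketch below subtracts a penalty from the payoff of anyone who defects and checks when cooperating becomes the dominant strategy. The utilities are the same illustrative assumption as before (millions of dollars, with each jail year counted as -1), and the function names are hypothetical.

```python
C, D = "cooperate", "defect"
ACTIONS = (C, D)

# Assumed base utilities: PAYOFF[(you, them)] = (your utility, their utility)
BASE = {
    (C, C): (0.5, 0.5),
    (C, D): (-10.0, 1.0),
    (D, C): (1.0, -10.0),
    (D, D): (-5.0, -5.0),
}

def payoffs_with_penalty(penalty):
    """Return the payoff table after charging `penalty` to each defector."""
    return {
        (you, them): (
            u_you - (penalty if you == D else 0.0),
            u_them - (penalty if them == D else 0.0),
        )
        for (you, them), (u_you, u_them) in BASE.items()
    }

def cooperate_is_dominant(penalty):
    """True if cooperating is strictly better for you whatever the other does."""
    table = payoffs_with_penalty(penalty)
    return all(table[(C, other)][0] > table[(D, other)][0] for other in ACTIONS)

print(cooperate_is_dominant(0))  # False: the original dilemma
print(cooperate_is_dominant(3))  # False: penalty too small
print(cooperate_is_dominant(6))  # True: defecting no longer pays
```

In this toy version, the penalty has to exceed the largest gain from defecting (here, the five years of jail saved when the other person also defects) before cooperation is the better choice in every case.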

In all modern economies, we combine markets with governments to enforce contracts and regulation to address market failures.

Most communities don’t have as much power as governments, but they can still set up systems to exclude defectors, and achieve some of these benefits.

Through common knowledge

In some coordination failures, a better outcome exists for everyone, but it requires everyone to switch to the better option at the same time. This is only possible in the presence of ‘common knowledge’ — each person knows that everyone else is going to take the better option.

This means that avoiding coordination failures often requires the dissemination of this knowledge through the community, whether through formal media or simple gossip. Communities that coordinate have developed mechanisms to spread this knowledge in a trusted way.

Read more about common knowledge.

Through nice norms and reputation

Perhaps the most important way to avoid coordination failures in informal communities is through norms and reputation.

In a community where you participate over time, you are essentially given the choice to cooperate or defect over and over again. The repeated nature of the game changes the situation significantly. If reputation does indeed spread, it becomes better to earn a reputation for playing nice than to defect. This lets you cooperate with others, and do better over the long term.

These dynamics were studied formally by Robert Axelrod in a series of tournaments in 1980.

Rather than theorise about which approach was best, Axelrod invited researchers to submit algorithms designed to play the prisoner’s dilemma. In each ‘round,’ each algorithm was pitted against another and given the choice of defecting or cooperating. The aim was to earn as many points as possible over all the rounds. This research is summarised in the 1981 paper The Evolution of Cooperation, which was later expanded into a 1984 book of the same name.

In a single round of prisoner’s dilemma the best strategy is to defect. However in the tournament with multiple rounds, the algorithm that scored the most was “tit for tat.” It always starts by cooperating, but if its opponent defects, it defects the next time too. This algorithm was able to cooperate with other nice algorithms, while never getting exploited more than once by defectors. This means that although tit for tat at best tied with its immediate opponent, it built up an edge over time.6
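A minimal sketch of a repeated match may help here. It is not Axelrod’s tournament code: the points per round (5, 3, 1 and 0) are the values conventionally used for this game and are an assumption in this example, as are the function names and the ten-round match length.

```python
C, D = "C", "D"

# Assumed points per round: (my move, their move) -> my points.
POINTS = {(C, C): 3, (C, D): 0, (D, C): 5, (D, D): 1}

def tit_for_tat(my_history, their_history):
    """Cooperate first, then copy the opponent's previous move."""
    return C if not their_history else their_history[-1]

def always_defect(my_history, their_history):
    """Defect every round."""
    return D

def play(strategy_a, strategy_b, rounds=10):
    """Play a repeated match and return (score_a, score_b)."""
    hist_a, hist_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = strategy_a(hist_a, hist_b)
        move_b = strategy_b(hist_b, hist_a)
        score_a += POINTS[(move_a, move_b)]
        score_b += POINTS[(move_b, move_a)]
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b

print(play(tit_for_tat, always_defect))    # (9, 14)
print(play(tit_for_tat, tit_for_tat))      # (30, 30)
print(play(always_defect, always_defect))  # (10, 10)
```

Tit for tat loses its match against the pure defector, but only by the points from the single round in which it was exploited. Two copies of tit for tat earn three times as much as two defectors do, which is where the edge over many matches comes from.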

Axelrod also performed an ‘ecological’ version of the tournament, in which the number of copies of an algorithm in the tournament depends on its total score at that moment, with the aim of mimicking an evolutionary process. In this case, it was found that groups of cooperative algorithms all being nice to each other would become more and more prevalent over time.
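The ecological idea can be sketched with a simple update rule in which each strategy’s share of the population grows in proportion to its average score. This is a simplified illustration rather than a reconstruction of Axelrod’s setup: the three-strategy pool, the point values, the ten-round matches and the number of generations are all assumptions.

```python
C, D = "C", "D"
POINTS = {(C, C): 3, (C, D): 0, (D, C): 5, (D, D): 1}  # assumed point values

# Each strategy sees only the opponent's past moves.
STRATEGIES = {
    "tit_for_tat": lambda theirs: C if not theirs else theirs[-1],
    "always_cooperate": lambda theirs: C,
    "always_defect": lambda theirs: D,
}

def match_score(name_a, name_b, rounds=10):
    """Total points that strategy `name_a` earns in one match against `name_b`."""
    a, b = STRATEGIES[name_a], STRATEGIES[name_b]
    hist_a, hist_b, score = [], [], 0
    for _ in range(rounds):
        move_a, move_b = a(hist_b), b(hist_a)
        score += POINTS[(move_a, move_b)]
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score

def evolve(generations=100):
    """Start from equal shares and reweight each share by its average score."""
    names = list(STRATEGIES)
    shares = {name: 1 / len(names) for name in names}
    for _ in range(generations):
        fitness = {
            a: sum(shares[b] * match_score(a, b) for b in names) for a in names
        }
        total = sum(shares[n] * fitness[n] for n in names)
        shares = {n: shares[n] * fitness[n] / total for n in names}
    return shares

final_shares = evolve()
for name, share in final_shares.items():
    print(f"{name}: {share:.3f}")
```

In this toy pool, the defectors do well at first by exploiting the unconditional cooperators, but as those become scarcer the defectors mostly meet each other and tit for tat, and their share collapses. The outcome depends heavily on which strategies are included, so treat it as an illustration only.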

What’s more, if every algorithm adopts tit for tat, then they all always cooperate, leading to the best outcome for everyone, so it’s a great strategy for a group to adopt as a whole (especially if common knowledge of this exists).

Further research suggested that sometimes it’s even better to employ “tit for two tats.”

In this approach, if someone defects against you, you give them a second chance to cooperate before also defecting on them.

This prevents a ‘death spiral’ in which initial defection causes two algorithms to get locked in a cycle of defecting. However, tit for two tats is a bit more vulnerable to algorithms that defect, so whether it’s better depends on the fraction of aggressive defectors in the pool.
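The trade-off can be seen in a short sketch. The opponent `suspicious_tit_for_tat`, which behaves like tit for tat but opens with a defection, is a hypothetical strategy introduced only for this illustration.

```python
C, D = "C", "D"

def tit_for_tat(their_history):
    """Cooperate first, then copy the opponent's previous move."""
    return C if not their_history else their_history[-1]

def suspicious_tit_for_tat(their_history):
    """Hypothetical opponent: like tit for tat, but opens with a defection."""
    return D if not their_history else their_history[-1]

def tit_for_two_tats(their_history):
    """Retaliate only after two defections in a row."""
    return D if their_history[-2:] == [D, D] else C

def always_defect(their_history):
    """Defect every round."""
    return D

def moves(strategy_a, strategy_b, rounds=6):
    """Return the sequence of moves each strategy makes, as two strings."""
    hist_a, hist_b = [], []
    for _ in range(rounds):
        move_a, move_b = strategy_a(hist_b), strategy_b(hist_a)
        hist_a.append(move_a)
        hist_b.append(move_b)
    return "".join(hist_a), "".join(hist_b)

print(moves(tit_for_tat, suspicious_tit_for_tat))       # ('CDCDCD', 'DCDCDC')
print(moves(tit_for_two_tats, suspicious_tit_for_tat))  # ('CCCCCC', 'DCCCCC')
print(moves(tit_for_tat, always_defect))                # ('CDDDDD', 'DDDDDD')
print(moves(tit_for_two_tats, always_defect))           # ('CCDDDD', 'DDDDDD')
```

Against the suspicious opponent, plain tit for tat gets stuck echoing defections back and forth, while tit for two tats absorbs the first defection and settles into cooperation. Against a pure defector, however, tit for two tats is exploited twice rather than once.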

Axelrod summed up the characteristics of the most successful algorithms as follows:7

- Be nice: cooperate, never be the first to defect.

- Be provocable: return defection for defection, cooperation for cooperation.

- Don’t be envious: focus on maximising your own ‘score,’ as opposed to ensuring your score is higher than your partners’.

- Don’t be too clever: or, don’t try to be tricky. Clarity is essential for others to cooperate with you.

It turned out that adopting these simple principles or ‘norms’ of behaviour led to the best outcome for the agent. These mirror the norms of ordinary ethical behaviour — kindness, justice, forgiveness, resisting envy and honest straightforwardness — suggesting that following these norms is not only better for others, but also benefits the agent concerned.

This probably isn’t a coincidence. Human societies have likely evolved norms that support good outcomes for the individuals and societies involved.

What are the practical implications of this research? It’s difficult to know the extent to which Axelrod’s tournament is a good model of messy real life, but it certainly suggests that if you have ongoing involvement in a community, it’s better to obey the cooperative norms sketched above.

Moreover, if anything, there seem to be even greater reasons to cooperate in real life.

In Axelrod’s tournaments, the algorithms didn’t know how others had behaved in other games. However, in real life if you defect against one person, then others will find out. This magnifies the costs of defection, especially in a tight knit community. Likewise, if you have a history of cooperating, then others will be more likely to cooperate with you, increasing the rewards of being nice. And in the last one or two decades, technology has made it even easier to track reputation over time.

These norms also seem to be deeply rooted in human behaviour. People will often punish defectors and reward cooperation almost automatically, out of a sense of justice and gratitude, even if doing so is of no benefit to them.

One striking fact about all of the above is that it still applies even if you only care about your own aims: even if you’re selfish, it’s better to be nice. (This means that following these norms is an example of “reciprocal altruism.”)

However, obeying these norms is not only better for the agent concerned — it’s also better for everyone else in the community. This is because it helps the community get into the equilibrium where everyone cooperates, maximising the collective payoff.

For more on how coordination failures can be solved, see An equilibrium of no free energy by Eliezer Yudkowsky.

Preemptive and indirect trade

Now let’s return to ways to increase trade beyond market communities. We saw that even if we have a market, many worthwhile trades can fail to go ahead due to transaction costs. Communities have come up with several ways to avoid this problem.

We call one of these mechanisms ‘preemptive trade.’ In a preemptive trade, if you see an opportunity to benefit another community member at little cost to yourself, you take it. The hope is that they will return the favour in the future. This allows more trades to take place, since the trade can still go ahead even when the person isn’t able to pay it back immediately and when you’re not sure it will be returned. Instead the hope is that if you do lots of favours, on average you’ll get more back than you put in.

Preemptive trade is another example of reciprocal altruism — you’re being nice to other community members in the hope they will be nice in return.

Going one level further, we get ‘indirect trade.’ For instance, in some professional networks, people try to follow the norm of ‘paying it forward.’ Junior members get mentoring from senior members, without giving the senior members anything in return. Instead, the junior members are expected to mentor the next generation of junior members. This creates a chain of mentoring from generation to generation. The result is that each generation gets the mentoring they need, but aid is never directly exchanged.

Other groups sometimes follow the norm of helping whoever is in need, without asking for anything directly in return. The understanding is that in the long term, the total benefits of being in the group are larger than being out of it.

For instance, maybe A helps B, then B helps C and then C helps A. Then, all three have benefited, but no direct trades were ever made. Rather than ‘I’ll scratch your back if you scratch mine’, it’s a ring of back scratching.

This is how families normally work. If you can help your brother with his homework, you don’t (usually) make an explicit bargain that your brother will help you with something else. Rather, you just help your brother. In part, there’s an understanding that if everyone does this, the family will be stronger, and you’ll all benefit in the long term. Maybe your brother will help you with something else, or maybe because your brother does better in school, your mother has more time to help you with something else, and so on.

We call this ‘indirect trade’ because people trade via the community rather than in pairs. It’s a way to have more trade without a market.

However, we can see that pre-emptive and indirect trade can only exist in the presence of a significant amount of trust. Normal markets require trust to function, because participants can worry about getting cheated, but ultimately people make explicit bargains that are relatively easy to verify. With indirect trade, however, you need to trust that each person you help will go on to help others in the future, which is much harder to check up on.

Moreover, from each individual’s perspective, there’s an incentive to cheat. It’s best to accept the favours without contributing anything in return. This means that the situation is similar to a prisoner’s dilemma.

Communities can avoid these issues through the mechanisms we’ve already covered. If community members can track the reputation of other members, then other members can stop giving favours to people who have a reputation for defecting. Likewise, if everyone obeys the cooperative norms we covered in the previous section, then these forms of trade can go ahead, creating further reason to follow the norms.

Pre-emptive and indirect trade can also only exist when each member expects to gain more from the network in the long-term than they put in, which could be why professional networks tend to involve people of roughly similar ability and influence.

We’ve now seen several reasons to default to being nice to other community members: avoiding coordination failures and generating pre-emptive and indirect trades. There’s also some empirical evidence to back up the idea that it pays to be nice. In Give and Take, Adam Grant presents data that the most successful people in many organisations are ‘givers’ — those who tend to help other people without counting whether they get benefits in return.

However, he also shows that some givers end up unsuccessful, and one reason is that they get exploited by takers. This shows that you need to be both nice and provocable. This is exactly what we should expect based on what we’ve covered.

Shared aims communities and ‘trade+’

Everything we’ve covered so far is based on the assumption that each agent only cares about their own aims i.e. is perfectly self-interested.

What’s remarkable is that even if people are self-interested, they can still cooperate to a large degree through these mechanisms, and end up ahead according to their own values. This suggests that even if you disagree with everyone in a community, you can still profitably work with them.

However, what if the agents also care about each other’s wellbeing, or share a common goal? We call these ‘shared aims’ communities. The effective altruism community is an example, because at least to some degree, everyone in the community cares about the common goal of social impact, and our definitions of this overlap to some degree. Likewise, environmentalists want to protect the environment, feminists want to promote women’s rights, and so on. How might cooperation be different in these cases?

This is a question that has received little research. Most research on coordination in economics, game theory and computer science has focused on selfish agents. Our speculation, however, is that shared aims communities have the potential to achieve a significantly greater degree of cooperation.

One reason is that members of a shared aims community might trust each other more, enabling more trade. But further than this, in a shared aims community you don’t even need to trade, because if you help another community member, then that achieves your goals as well. For instance, if you advise someone on their career and help them have a greater impact, you increase your own impact too. So, you both succeed.

We could call trade when nothing is given in return ‘trade plus’ or ‘trade+.’ It’s even more extreme than indirect trade, since it’s worth helping people even if you never expect anyone else in the community to give you anything in return.

Trade+ has the advantage of potentially even lower transaction costs, since you no longer need to verify that the other party has given you what they promised. This is especially valuable with complex goods and in situations with principal-agent problems.

For instance, you could decide you will only give advice to people in the community in exchange for money. But, it’s hard to know whether advice will be useful before you hear it, so people are often reluctant to pay for advice. Providing advice for money also creates lots of overheads, such as a contract, tracking payments, paying tax and so on. A community can probably operate more efficiently if people provide advice for free when they see a topic they know something about.

On the other hand, trade+ can only operate in the presence of a large degree of trust. Each community member needs to believe that the others sincerely share the aims to a large enough degree. The more aligned you are with other community members, the more trade+ you can do.

As well as having the potential for more trade, shared aims communities should also in theory be the most resistant to coordination failures. Going back to the prisoner’s dilemma, if each agent places enough value on the welfare of the other, then both should cooperate.
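The prisoner’s dilemma point can be illustrated with a minimal sketch. The payoff numbers (5, 3, 1, 0) and the `care` weight, which says how much each agent values the other’s payoff, are assumptions chosen for illustration and are not taken from the article.

```python
# Standard prisoner's dilemma payoffs (illustrative numbers, not from the article).
# PAYOFF[(my_move, their_move)] = my material payoff; "C" = cooperate, "D" = defect.
PAYOFF = {("C", "C"): 3, ("C", "D"): 0, ("D", "C"): 5, ("D", "D"): 1}


def utility(my_move: str, their_move: str, care: float) -> float:
    """My payoff plus `care` times the other agent's payoff (hypothetical weight)."""
    mine = PAYOFF[(my_move, their_move)]
    theirs = PAYOFF[(their_move, my_move)]
    return mine + care * theirs


def best_reply(their_move: str, care: float) -> str:
    """The move that maximises my utility given what the other agent does."""
    return max("CD", key=lambda move: utility(move, their_move, care))


for care in (0.0, 1.0):
    replies = {their: best_reply(their, care) for their in "CD"}
    print(f"care={care}: best reply to C is {replies['C']}, to D is {replies['D']}")
```

With `care=0.0` (pure self-interest) defecting is the best reply to either move, while with `care=1.0` cooperating is. Under these particular payoffs, cooperation only becomes the best reply to both moves once `care` exceeds two-thirds, so how much the agents share aims matters.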

They can also be more resilient to market failures, because people will care about the negative externalities of their actions, and have less incentive to exploit other community members.

In sum, shared aims communities can use all of the coordination mechanisms used by self-interested communities, but apply them more effectively, and use additional mechanisms, such as trade+.

To what extent is the effective altruism community a shared aims community?

It’s true there are some differences in values within the community, but there is also a lot of agreement. We mostly value welfare to a significant degree, including the welfare of animals, and we also mostly put some weight on the value of future generations.

What’s more, even when values are different, our goals can still converge. For instance, people with different values might still have a common interest in global priorities research, since everyone would prefer to have more information about what’s most effective rather than less.

People in the community also recognise there are reasons for our aims to converge, such as:

- Normative uncertainty: if someone similar to you values an outcome strongly, that’s reason to be uncertain about whether you should value it or not, which merits putting some value on it yourself. Find out more in our podcast with Will MacAskill.

- Epistemic humility: if other agents similar to you think that something is true, then that’s evidence you should believe it too. Read more.

Taking these factors into account leads to greater convergence in values and aims.

Summary: how can shared aims communities best coordinate?

We’ve shown that by coordinating, the members of a community can achieve a greater impact than if they merely think in a single-player way.

We’ve also sketched some of the broad mechanisms for coordination, including:

- Trade.

- Specialisation and economies of scale (made possible by trade).

- Strategies for avoiding coordination failures, such as structural solutions, common knowledge, nice norms and reputation.

We’ve also shown there are several types of trade.

| Type of trade | What it involves | Need to share aims? | Example | Comments |
|---|---|---|---|---|
| Market trade via barter | Two parties make an explicit swap. | No | You help a friend with their maths homework in exchange for help with your Chinese homework. | Significant transaction costs in finding parties and verifying the conditions are upheld. Market and coordination failures possible. |
| Market trade via currency | One party buys something off another, in exchange for a currency that can be used to buy other goods. | No | Buying a house. | Lower transaction costs in finding parties, but still need to verify. Market failures and coordination failures possible. |
| Indirect trade and pre-emptive trade | A group of people help each other, in the understanding that in the long term they’ll all benefit. | No | Some professional networks and groups of friends. Paying it forward. | Only works if there’s enough trust or a mechanism to exclude defectors, and the mutual gains are large enough to make everyone win in expectation. |
| Trade+ | You help someone else because if they succeed, it furthers your aims too. | Yes | You mentor a young person who you really want to see succeed. | Low transaction costs and resistant to coordination failures, but only works if there’s enough trust that others share your aims. |

And we’ve sketched three types of community:

- Single-player communities: where other people’s actions are (mostly) treated as fixed, and the benefits of coordination are mainly ignored.

- Market communities: which use markets and price signals to facilitate trade, but are still vulnerable to market failures and coordination failures.

- Shared aims communities: where members share a common goal, potentially allowing for trade+ and even more resilience to coordination failures, which may allow for the greatest degree of coordination.

We also argued that the effective altruism community is at least partially a shared aims community.

Now, we’ll get more practical. What specific actions should people in shared aims communities do differently in order to better coordinate, and therefore have a greater collective impact?

This is also a topic that could use much more research, but in the rest of this article we will outline our current thinking. We’ll use the effective altruism community as a case study, but we think what we say is relevant to many shared aims communities.

We’ll cover three broad ways that the best actions change in a shared aims community: adopt cooperative norms, consider community capital, and take the portfolio approach to working out what to do.

1. Adopt nice norms to a greater degree

Why follow cooperative norms?

Much of ‘common sense’ morality is about following ethical norms, such as being kind and honest — simple principles of behaviour that people aim to uphold. What’s more, many moral systems, including types of virtue ethics, deontology and rule utilitarianism, hold that our primary moral duty is to uphold these norms.

Effective altruism, however, encourages people to think more about the actual impact of their actions. It’s easy to think, therefore, that people taking an effective altruism approach to doing good will put less emphasis on these common sense norms, and violate them if it appears to lead to greater impact.

For instance, it’s tempting to exaggerate the positive case for donating in order to raise more money, or be less considerate to those around you in order to focus more on the highest-priority ways of doing good.

However, we think this would be a mistake.

First, effective altruism doesn’t say that we should ignore other moral considerations, just that more attention should be given to impact. This is especially true if you consider moral uncertainty.

Second, and more significantly, when we consider community coordination, these norms become vitally important. In fact, it might even be more important for members of the effective altruism community to uphold nice norms than is typical — to uphold them to an exceptional degree. Here are some reasons why.

First, as we’ve seen, upholding nice norms allows even self-interested communities to achieve better outcomes for all their members, since it lets them trade more and avoid coordination failures. And being a shared aims community makes the potential for coordination, and therefore the importance of the norms, even higher.

What’s more, by failing to follow these norms, you not only harm your own ability to coordinate, but you also reduce trust in the community more broadly. You might also encourage others to defect too, starting a negative cycle. This means that the costs of defecting spread across the community, harming the ability of other people to coordinate too — it’s a negative externality. If you also care about what other community members achieve, then again, this gives you even greater reasons to follow nice norms.

The effective altruism community also has a mission that will require coordinating with other communities. This is hard if its members have a reputation for dishonesty or defection. Indeed, people normally hold communities of do-gooders to higher standards, and will be suspicious if people claim to be socially motivated but are frequently dishonest and unkind. We’ll come back to the importance of community reputation in a later section.

On the other side, if a community becomes well known for its standards of cooperativeness, then it can encourage outsiders to be more cooperative too.

In combination, there are four levels of effect to consider:

| | Effects on reputation | Effects on social capital |
|---|---|---|
| Internal effects | Effects on your reputation within the community | Effects on community social capital |
| External effects | Effects on the community's reputation | Effects on societal social capital |

A self-interested person would only care about the top-left box. But if you care about all four, as you should in a shared aims community with a social mission, there is much greater reason than normal to follow norms. (You can see a more detailed version of this argument in “Considering Considerateness”. I also talk more about why effective altruism should focus more on virtue here.)

Which norms should the community aim to uphold in particular? In the next couple of sections we get more specific about which norms we should focus on, how to uphold them, and how to balance them against each other. If this is too much detail, you might like to skip ahead to community capital.

In summary, we’ll argue the following norms are important among the effective altruism community in particular.

- Be more helpful.

- But withhold aid from those who repeatedly don’t cooperate.

- Be more honest.

- Be more friendly.

- Be more willing to compromise.

Be more helpful

If you see an opportunity to help someone else with relatively little cost to yourself, take it.

Defaulting to helpfulness is perhaps the most important norm, because it underpins so many of the mechanisms for coordination we covered earlier. It can trigger pre-emptive and indirect trades; and it can help people cooperate in prisoner’s dilemmas. Doing both of these builds trust, allowing further trades and cooperation.

It’s likely that people aren’t as helpful as would be ideal even from a self-interested perspective. Like the other norms we’ll cover, being helpful involves paying a short-term cost (providing the help) in exchange for a long-term gain (favours coming back to you). Humans are usually bad at doing this.

Indeed, it can be even worse because situations where we can aid other community members often have a prisoner’s dilemma structure. In a single round, the dominant strategy is to accept aid but not give aid, since whatever the other person does, you come out ahead. It’s easy to be short-sighted and forget that in a community we’re in a long-term game, where it ultimately pays to be nice.

The general arguments for why shared aims communities should uphold nice norms apply equally to helpfulness:

- It’s worth being even more helpful than normal due to the possibility of trade+.

- By being helpful you’ll also encourage others in the community to be more helpful, further multiplying the benefits.

- Being helpful can also help the community as a whole develop a reputation for helpfulness, which enables the community to be more successful and better coordinate with other groups. For this reason, it’s also worthwhile being helpful to other communities (though to a lesser degree than within the community since aims are less aligned).

How can we be more helpful?

One small concrete habit to be more helpful is “five minute favours” — if you can think of a way to help another community member in five minutes, do it.8 Two common five minute favours are making an introduction and recommending a useful resource.

But it can be worth taking on much bigger ways to help others. For instance, we know someone who lent another community member $10,000 so they could learn software engineering. This let the recipient eventually donate much more than they would have otherwise, creating much more than $10,000 of value for the community (and they repaid the loan).

Another option is that if you see a great opportunity, such as an idea for a new organisation, or a way to get press coverage, it can be better to give this opportunity to someone else who’s in a better position to take it. It’s like making a pass in football to someone who’s in a better position to score.

Withhold aid from those who don’t cooperate

One potential downside of being helpful is that it could attract ‘freeloaders’ — people who take help from the community but don’t share the aims, and don’t contribute back — especially as the community gains more resources. There are plenty of examples of people using community trust to exploit the members, such as in cases of affinity fraud.

But being nice doesn’t mean being exploitable. As we saw, the best algorithms in Axelrod’s tournament would withdraw cooperation from those who defected on those norms. We also saw that if freeloaders can’t be excluded from a community, it’s hard to maintain the trust needed for indirect trade. This means it’s important to track people’s reputation, and stop helping those who don’t follow the norms e.g. who are dishonest, or who accept aid without giving any in return.

However, this decision involves a difficult balancing act. First, withdrawing help too quickly could make the community unwelcoming. Second, negative information tends to be much more sticky than positive, so one negative rumour can have a disproportionately negative impact on someone’s reputation.

A third risk is ‘information cascades.’ For instance, if you hear something negative about Bob, you might tell Cate your impression, giving Cate a negative impression too. If David then hears that both you and Cate have a negative impression, he now has two sources and could form an even more negative impression, even though both trace back to a single report. And so on. This is the same mechanism that contributes to financial bubbles.

(Information cascades can also happen on the positive side — a positive impression of a small number of people can spread into a consensus. Amanda Askell called this “buzztalk” and pointed out that it tends to disproportionately benefit those who are similar to existing community members.)

How can a community avoid some of these problems, while also being resistant to freeloaders?

One strategy is to be forgiving, especially with people who are new, but to be less forgiving over time, completely withholding aid from people who repeatedly defect.

In Algorithms to Live By, the authors speculate that a strategy of “exponential backoff” could be optimal. This involves doubling the penalty each time a transgression is made. For instance, the first time someone makes a minor transgression, you might forgive them right away. The next time, you might briefly withhold aid for a month, then withhold aid for 2 months, 4 months and so on. You’re always willing to forgive but it takes longer and longer each time.

Of course, this also needs to be weighted by how serious the violation is, and some violations should earn permanent exclusion first time.
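The doubling schedule described above can be sketched in a few lines. The function name `withholding_months` and the one-month starting penalty are illustrative assumptions; the sketch covers only minor transgressions and ignores the weighting by seriousness.

```python
def withholding_months(prior_transgressions: int, base_months: int = 1) -> int:
    """Months to withhold aid after a new minor transgression.

    `prior_transgressions` counts earlier transgressions by the same person.
    The first transgression (0 prior) is forgiven right away; after that the
    penalty starts at `base_months` and doubles each time.
    """
    if prior_transgressions < 0:
        raise ValueError("prior_transgressions must be non-negative")
    if prior_transgressions == 0:
        return 0
    return base_months * 2 ** (prior_transgressions - 1)


schedule = [withholding_months(n) for n in range(5)]
print(schedule)  # [0, 1, 2, 4, 8]
```

Forgiveness is always available under this schedule, but it takes twice as long to earn after each repeat.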

There’s a difficult question about how much to tell others about norm violations. Too much gossip leads to information cascades, but too little means that people don’t hear important information about those who might defect.

One factor is that it depends on how serious the norm violation was, and how important the relevant person’s position is. Roughly, the more powerful the person’s position and the more serious the offence, the more willing you should be to share this information. For instance, if someone is being considered for a leadership position, and you’re aware of a significant case of apparent dishonesty, then it’s probably worth telling the relevant decision-makers. If someone just seemed rude or unhelpful one time, though, this impression is probably not worth spreading widely.

If you’re receiving second-hand impressions about someone, always ask for the reasons behind them. Are their impressions based on information directly about the person’s abilities and cooperativeness, or are they just based on someone else’s impressions? This can help avoid information cascades.

Similarly, if you are sharing your view of someone, try to give the reasons for your assessment, not just your view. (Read more about “beliefs” vs. “impressions”.)

Another strategy is to have community managers and other structures to collect information. Julia Wise and Catherine Low serve as contact people for the effective altruism community, and are part of CEA’s community health team. They also have a form where you can submit concerns anonymously, and there are community managers who focus on major geographical areas.

If you’re concerned that someone is freeloading, or otherwise being harmful to the community, you can let a manager know. This helps them put together multiple data points. The community manager can also exclude people from events and let other relevant parties know.

Be more honest

By honesty, or integrity, we mean both telling important truths and fulfilling promises.

Honesty is vital because it supports trust. This is true even in self-interested markets — if people think a market is full of scammers who are lying about the product, they won’t participate. As we showed, trust is even more important in shared aims communities, and for communities that want a positive reputation with others.

Honesty is especially important within the effective altruism community, because it’s also an intellectual community. Intellectual communities have even greater need for honesty, because it’s hard to make progress towards the truth if people hide their real thinking.

What does greater-than-normal honesty look like? Normal honesty requires not telling falsehoods about important details, but it can be permissible to withhold relevant information. If you were fundraising from a donor, it wouldn’t be seen as dishonest if you didn’t point out the reasons they might not want to donate.

However, it seems likely we should hold ourselves to higher standards than this. For instance, in high-stakes situations, you should actively point out important negative information. Not doing so could lead the community to have less accurate beliefs over time, and withholding negative information still reduces trust.

A major downside of this norm is that sharing negative information makes the community less friendly. Being criticised makes most people defensive, demotivated and annoyed.

What’s more, negative information tends to be much more memorable than positive, so sharing criticism can have significant stakes. It’s common for rumours to persist, even when they’ve already been shown to be untrue. This is because not everyone who hears the original rumour will hear the debunking, so rumours are hard to unwind. And hearing the debunking of a rumour can make some people suspect it was true in the first place. Most people would not want to see the article “why [your name] is definitely not into bestiality” at the top of a Google search.

On the other hand, most people find it difficult to share criticisms, especially if they perceive the other party to be more powerful than them.

And it would be naive to believe that publicly criticising someone never has consequences – if they think your criticism is badly judged, they’ll think less of your reasoning, at the least.

We’re still unclear how to trade friendliness against honest criticism, though here are a few thoughts:

- Be as friendly as possible the rest of the time. This gives your relationship more ‘capital’ for criticism later.

- Focus on sharing negative information that’s important i.e. is relevant to an important decision or involves a larger mistake.

- Ask questions liberally. If something doesn’t make sense to you, ask about it. Questions can feel more constructive to the other person, and make your criticism better informed.

- Make some extra effort to ensure that negative claims are accurate. For instance, you can give people a chance to comment on criticism before publishing it.

- Consider engaging in-person. Online debate makes everyone much less friendly than they’d ever be in person, because it’s harder to read people, you get less feedback, and online debate easily turns into a competition to win in front of an audience (with the results recorded forever on the internet). If there’s a way to learn more about or discuss the issue in person, that’s often a good place to start (and you can always post public criticism later).

One form of honesty that doesn’t come across as unfriendly is honesty about your own shortcomings and uncertainties (i.e. humility). So, putting greater emphasis on humility is a way to make the community more honest with fewer downsides.

If in doubt, find a way to share your concerns. Although the friendliness of the community is important, it’s even more important to get to the truth and to identify people defecting on the community’s norms.

Read more about why to be honest in this essay by Brian Tomasik and Sam Harris’s book, Lying.

Be more friendly

As covered, the downside of being willing to withhold cooperation and being exceptionally honest is that it can make the community unwelcoming. So, we need the norm of friendliness to balance these others.

Unfriendliness also seems like a greater danger for the effective altruism community compared to other communities because a core tenet involves focusing on the most effective projects. This means ranking projects and, implicitly, not supporting the less effective ones. This is an unfriendly thing to do by normal standards.

We also observe that the community tends to attract people who are especially analytical and can be relatively weak on social skills. Combine these trends with a culture of honest criticism, and it’s easy for a new person to be made uncomfortable or to feel that people are being rude. And we do a lot of interaction online, which encourages unfriendliness.

By ‘unfriendliness’ we mean a tendency for people to have unpleasant social experiences when interacting with the community, especially in ways additional to what we’ve already covered, such as others being rude, boasting, making them uncomfortable, or making them feel treated as a means to an end.

Why is friendliness so important? We’re all human, and we’re involved in an ambitious project, with people different from ourselves. This makes it easy for emotions to get frayed.

Frayed emotions make it hard to work together. They also make it hard to have a reasonable intellectual discussion and get to the truth. It will be hard to be exceptionally honest with each other if we don’t have a bedrock of positive interactions beforehand. In this way, friendliness is like social oil that enables the other forms of cooperation.

What’s more, many social movements fracture, and achieve less than they could. Often this seems to be based on personal falling outs between community members. If we don’t want this to happen, then we need to be even more friendly than the typical social movement.

It’s even worth being friendly and polite to those who aren’t friendly to you, since it lets you take the high ground, and helps to defuse the situation. (Though you don’t need to aid and cooperate with them in other ways.)

What are some ways we can be more friendly?

One option is to get in the habit of pointing out positive behaviours and achievements. Our natural tendency is to focus on criticism, which can easily make the culture negative. But you can adopt habits like always pointing out something positive when you reply to someone online, or trying to say one positive thing at each event you go to.

We can also spend more time getting to know other community members as people: find out what they’re interested in, what their story is, and have fun. Since effective altruism is all about having an impact, it can be easy for people to feel like they’re just instruments in service of the goal. So, we need to make extra efforts to focus on building strong connections.

Both of the above are ways to build social capital which makes it easier to get through difficult situations. Another way to increase friendliness is to better deal with difficult situations when they’ve already arisen.

A common type of difficult situation is a disagreement. If handled badly, a disagreement can lead each side to become even more entrenched in their views, and could ultimately cause a split in the community.

Here are a couple of great guides to dealing with disagreements. One is Daniel Dennett’s four steps. Robert Wiblin also wrote “Six ways to get along with people who are totally wrong”.

These guides recommend more specific applications of the general principles we’ve already covered: (i) always stay polite; (ii) point out where you agree with your opponent and what you’ve learned from them; (iii) when giving negative feedback, be highly specific, and only say what is necessary.

Be more willing to compromise

One special case of friendliness and helpfulness is compromising. Compromise is how to deal with other community members when you have a disagreement you’ve not been able to resolve.

If you’re aiming to maximise your impact, it can be tempting not to compromise, and instead to focus on the option you think is best. But from a community point of view, this doesn’t make as much sense. If others are being cooperative with you, then we argue it’s probably best to adopt the following principle (quoting from Brian Tomasik):

If you have an opportunity to significantly help other value systems at small cost to yourself, you should do so.

Likewise, if you have the opportunity to avoid causing significant harm to other value systems by foregoing small benefit to yourself, you should do so.

Here’s why. Suppose there are two options you could take. By your lights, they’re about equally valuable, whereas according to others in the community, one is much better than the other:

| | Option A | Option B |
|---|---|---|
| Your assessment of value | 10 units | 11 units |
| Others’ assessment of value | 30 units | 10 units |

From a single-player perspective, it would be better to take option B, since it’s 1 unit higher impact. However, in a community that’s coordinating, we argue it’s often best to take option A.

First, you’re likely wrong about option A. Due to both epistemic humility and moral uncertainty, if others in the community think option A is much better, you should take that as evidence that it’s better than you think. At the very least, it could be worth talking to others about whether you’re wrong.

But, even if we put this aside and assume that everyone’s assessments are fixed, there’s still reason to take option A. For instance, you might be able to make a direct trade: you agree to take option A in exchange for something else. Since option A is only 1 unit of impact worse in your eyes, but it’s 20 units of impact higher for others, the others make a large surplus. This means it should be easy for them to find something else that’s of value to you to give in return. (Technically, this is a form of moral trade.)

However, rather than trying to make an explicit trade, we suggest it’s probably often better just to take option A. Just doing it will generate goodwill from the others, enabling more favours and cooperation in the future, to everyone’s benefit. This would make it a form of pre-emptive or indirect trade.
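The arithmetic behind this argument can be laid out as a small sketch using the values from the table above. It assumes, for illustration only, that units of value are comparable across the two perspectives; the variable names are hypothetical.

```python
# Values from the table above: each party's assessment of each option.
your_value = {"A": 10, "B": 11}
others_value = {"A": 30, "B": 10}

# What you give up, by your own lights, by choosing A instead of B.
your_cost = your_value["B"] - your_value["A"]
# What the others gain, by their lights, if you choose A instead of B.
others_gain = others_value["A"] - others_value["B"]
# Room for a mutually beneficial trade (assumes the units are comparable).
surplus = others_gain - your_cost

print(your_cost, others_gain, surplus)  # 1 20 19
```

Because the others’ gain is so much larger than your cost, almost any favour they return, now or later, leaves both sides ahead.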

Compromise can also help avoid the unilateralist’s curse, which is another form of coordination problem. It’s explained in a paper by Bostrom, Sandberg and Douglas:

A group of scientists working on the development of an HIV vaccine have accidentally created an airborne transmissible variant of HIV. They must decide whether to publish their discovery, knowing that it might be used to create a devastating biological weapon, but also that it could help those who hope to develop defenses against such weapons. Most members of the group think publication is too risky, but one disagrees. He mentions the discovery at a conference, and soon the details are widely known.

In this case, the discovery gets shared, even though almost all of the scientists thought it shouldn’t have been, leading to a worse outcome according to the majority. In general, if community members act in a unilateralist way, then the agenda gets set by whichever members are least cautious, which is unlikely to lead to the best actions.

The authors point out that this could be avoided if the scientists agreed to compromise by going with the results of a majority vote, rather than each individual’s judgement of what’s best.

Alternatively, if all the scientists agreed to the principle above — don’t pursue actions that a significant number of others think are harmful — then they could have avoided the bad outcome too.

Unfortunately, we’ve seen real cases like this in the community.

Instead, it would be better if everyone had a policy of avoiding actions that others think are negative, unless they have very strong reasons to the contrary. When it comes to risky actions, a reasonable policy is to go with the median view.

How to get better at upholding norms?

We’ve covered a number of norms:

- Be exceptionally helpful, honest and friendly.

- Exclude those who violate the norms, but be forgiving, especially initially.

- Be more willing to compromise.

If we can uphold these norms, then we have the potential to achieve a much greater level of coordination.

These norms can allow us to achieve much more impact, though of course they are not perfect guides. There will be times when it’s better to break a norm, or when norms strongly conflict. In these cases, we need to slow down and think through what course of action will lead to the greatest long-term impact. However, the norms are a strong default.

How can we better uphold these norms?

As individuals, we should focus on building character and habits, which helps to build a broader culture of norm following.

Upholding these norms requires constant work, because they involve short-term costs for a long-term gain. For instance, having a reputation of being honest means people will trust you, which is vital. But maintaining this reputation will sometimes require revealing negative facts about yourself, which is difficult in the moment.

To overcome this, we can’t rely on willpower each time an opportunity to lie comes up. Rather, we need to build habits of acting honestly, so that our default inclination is to tell the truth. This creates a further reason to follow nice norms: following them when it’s easy builds our habits and character, making it easier to follow them when it’s hard.

As a community, we should state the norms we want to uphold, and set up incentives to follow these norms.

The Centre for Effective Altruism has published its guiding principles, and there is also a statement of the values of effective altruism in this introductory essay, which includes the value of collaborative spirit.

Having the norms written down also makes it easier for individuals to spot violations, to give feedback to the people responsible, and eventually to withhold aid from them.

To handle more serious situations, we can appoint community managers. They can keep track of issues with the norms, and perhaps use formal powers, like excluding people from official events.

Organisations can consider the norms in deciding who to hire, or who to fund, or who to mentor.

Boards of organisations should assess the leadership in terms of these norms.

Finally, we can better uphold the norms by establishing community capital to share information, which is what the next section is about.

2. Value ‘community capital’ and invest in community infrastructure

What is community capital?

An individual can either try to have an immediate impact, or they can invest in their skills, connections and credentials, and put themselves in a better position to make a difference in the future, which we call career capital.

When you’re part of a community, there’s an analogous concept: the ability of the community to achieve an impact in the future, which we call ‘community capital.’

Community capital depends firstly on the career capital of its members. However, due to the possibility of coordination, community capital is potentially greater than the sum of individual career capital. Roughly, we think the relationship is likely:

Community capital = (Sum of individual career capital) * (Coordination ability)

This extra factor is why being in a community can let us have a greater impact.

Coordination ability depends on whether members use the mechanisms we cover in this article, such as norm following, infrastructure and the portfolio approach. It also depends on an additional factor — the reputation of the community — which we’ll cover shortly.

Because the two factors multiply together, coordination ability matters about as much as the sum of the members’ individual career capital. This could explain why small but well-coordinated groups, such as the campaign to ban landmines and many other social or political movements, are sometimes able to compete with much larger ones.
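As a rough illustration of the multiplicative relationship above, here is a minimal sketch. The function name and all the numbers are hypothetical; neither career capital nor coordination ability can really be measured this precisely.

```python
def community_capital(career_capitals, coordination_ability):
    """Community capital = (sum of individual career capital) * (coordination ability)."""
    return sum(career_capitals) * coordination_ability


# Hypothetical groups: each member has 1 unit of career capital.
large_loose_group = community_capital([1.0] * 1000, coordination_ability=0.25)
small_tight_group = community_capital([1.0] * 200, coordination_ability=1.5)

print(large_loose_group)  # 250.0
print(small_tight_group)  # 300.0
```

In this made-up example, a group one fifth the size ends up with more community capital because it coordinates six times as well.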

This means that when comparing career options (or other types of options), you can also consider your impact on community capital as part of your potential for impact.

How can you help to build community capital?

The existence of community capital provides an extra avenue for impact: rather than do good directly, or build your individual career capital, you can try to build community capital.

To build community capital you can either increase the career capital of its membership, or you can increase its ability to coordinate.

One way to do this is to grow the membership of the community, which we discuss in our profile on building effective altruism. It often seems possible for one person to bring more than one other person who’s equally talented as them into the community, achieving a ‘multiplier’ on their efforts.

However, one downside of growing the number of people involved is that, all else equal, it makes it harder to coordinate, since it makes it harder to spread information, maintain good norms and avoid tragedies of the commons. This cost needs to be set against the benefits of growth.

It also suggests that, rather than only increasing the membership of the community, it’s worth thinking about how to increase community capital by improving the existing community’s ability to coordinate.

One way to increase the community’s ability to coordinate is by upholding the norms covered earlier. Here are two more: setting up community infrastructure and improving the community’s reputation.

The importance of the community’s reputation

If you do something that’s widely seen as bad, it will harm your own reputation, but it will also harm the reputation of the communities you’re in. This means that when you join a community, your actions also have externalities on other community members. These can often be bigger than the effects of your actions on yourself.

For instance, if you hold yourself to high standards of honesty, then it builds the community’s reputation for honesty, making the positive impact of your honesty even greater than it normally would be.

A positive reputation is a major asset for a community’s members. It lets them quickly establish trust and credibility, which makes it easier to work with other groups. It also lets the community grow more quickly to a greater scale.

For instance, the startup accelerator Y Combinator started to take off after Airbnb and Dropbox became household names, because that gave them credibility with investors and entrepreneurs. Our community needs its own versions of these widely recognised success stories.

In this way, reputation effects magnify the goodness or badness of your actions in the community, especially those that are particularly salient and memorable.

The importance of these externalities can increase with the size of the community. In a community of 100 people, if you do something controversial, then it harms or benefits the reputations of 100 other people; while in a community of 1,000, it affects the reputations of 1,000.

Of course, as the community gets larger, your actions don’t matter as much — you make up a smaller fraction of the community, which means you have less impact on its reputation.

In most cases, we think the two effects roughly cancel out, and reputation externalities don’t obviously increase or decrease with community size. But we think there’s one important exception: viral publicity, especially if it’s negative.
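The cancellation can be sketched as follows. This is a deliberately crude model of our own: it assumes your action shifts each member's reputation in proportion to your share of the community (1/N), and that about N people are affected.

```python
from fractions import Fraction


def total_reputation_externality(community_size, action_effect=Fraction(1)):
    """Summed reputation effect of one member's action on the community.

    Assumption (hypothetical): each member's reputation shifts by
    action_effect / community_size, because you are 1/N of the community.
    """
    per_member_shift = action_effect / community_size
    members_affected = community_size  # roughly N people, as in the text
    return per_member_shift * members_affected


print(total_reputation_externality(100))   # 1
print(total_reputation_externality(1000))  # 1
```

Under this model the total externality is the same at any community size. Viral publicity breaks the first assumption: the per-member shift no longer shrinks as the community grows.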

If someone in the community was in the news because they committed murder, this would be really bad for the community’s reputation, almost no matter its size. This is because a single negative story can still be memorable and spread quickly, and so have a significant impact on how the community is perceived independent of whether the story is representative or not. It’s the same reason that people are far more afraid of terrorism and plane crashes than the statistics suggest they should be.

This would explain why organisations seem to get more and more concerned to avoid negative stories as they get larger.

Perhaps the most likely way for the effective altruism community to have a major setback is to get caught up in a major negative scandal, which permanently harms our reputation. (This was originally written in 2017!)

The importance of avoiding major negative stories has three consequences.

First, it means we should be more reluctant to take on controversial projects under the banner of effective altruism than we would if we were only acting individually.

Second, it makes it more worth investing time in better framing our messages, to avoid being misunderstood or sending an unintended message.

Third, it makes it even more important to uphold the cooperative norms we covered earlier. Being dishonest, unfriendly and unhelpful is a great way to ruin the community’s reputation as well as your own.

Setting up community infrastructure

We can also set up systems to make it easier to coordinate.

For instance, when there were only 100 people in the community, having a job board wasn’t really needed, since the key job openings could spread by word-of-mouth and it was easy to keep track of them. However, when there are thousands of people and dozens of organisations, it becomes much harder to keep track of the available jobs. This is why we set up a job board in 2017, and it now contains thousands of roles.

Community infrastructure can become more and more valuable as the community grows. If you can make 1,000 people 1% more effective, that’s like having the impact of 10 people, while making 10,000 people 1% more effective is like having the impact of 100 people.9 This means that as the community grows, it becomes worth setting up better and better infrastructure.
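The scaling argument can be written out as a minimal sketch. The function names and the staffing cost of 20 people are hypothetical, chosen only to show how a project can go from not worthwhile to worthwhile as the community grows.

```python
def person_equivalents(community_size, effectiveness_gain):
    """Impact of making everyone slightly more effective, in 'whole people'."""
    return community_size * effectiveness_gain


def worth_building(community_size, effectiveness_gain, staff_cost):
    """True if the gain exceeds the number of people needed to run the project."""
    return person_equivalents(community_size, effectiveness_gain) > staff_cost


# A 1% gain across the community, as in the text.
print(round(person_equivalents(1_000, 0.01)))   # 10
print(round(person_equivalents(10_000, 0.01)))  # 100

# Hypothetical project that needs 20 full-time people to run.
print(worth_building(1_000, 0.01, staff_cost=20))   # False
print(worth_building(10_000, 0.01, staff_cost=20))  # True
```

The same project that would cost more than it returns in a community of 1,000 pays for itself five times over in a community of 10,000.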

Community infrastructure enables coordination in a number of ways:

- Lets people share information about needs and resources, which helps people make better decisions and also facilitates trades.

- Lets people share information about reputation, encouraging people to uphold the norms.

- Provides mechanisms through which trades can be made.

- Provides ways to share common resources, leading to economies of scale.

Here are some more examples of infrastructure we already have:

- EA Global conferences and local groups provide a way to meet other community members, share information, and start working together.

- EA Funds enables multiple small donors to pool their resources, paying for more research.

- The EA Forum is a venue for sharing information about different causes and opportunities, as well as discussion of community issues.

- The Effective Altruism Newsletter aggregates information about all the content and organisations in the community.

- The EA Hub hosts profiles of community members and a group directory in order to facilitate collaboration.

- Content published by effective altruism organisations (such as 80,000 Hours’ problem profiles) aggregates information and research, to avoid doubling up work.

What are some other examples of infrastructure that might be useful?

We could see attempts to set up a market for impact as a type of community infrastructure, as we covered earlier.

A more recent effort is forecasting platforms, such as Metaculus and Manifold, which aggregate judgements made by lots of community members and so provide a kind of epistemic infrastructure.

There are many more ways we could better pool and share information. For instance, donors often run into questions about how to handle tax when donating a large fraction of their income, and these issues are often not well handled by normal accountants because it’s rare to donate so much. It could be useful for community members to pool resources to pay for tax advice, and then turn it into guides that others could use.