Reducing global catastrophic biological risks

Summary

Plagues throughout history suggest the potential for biology to cause global catastrophe. This potential increases in step with the march of biotechnological progress. Global Catastrophic Biological Risks (GCBRs) may compose a significant share of all global catastrophic risk, and, if so, a credible threat to humankind.

Despite extensive existing efforts addressed to nearby fields like biodefense and public health, GCBRs remain a large challenge that is plausibly both neglected and tractable. The existing portfolio of work often overlooks risks of this magnitude, and largely does not focus on the mechanisms by which such disasters are most likely to arise.

Much remains unclear: the contours of the risk landscape, the best avenues for impact, and how people can best contribute. Despite these uncertainties, GCBRs are plausibly one of the most important challenges facing humankind, and work to reduce these risks is highly valuable.

After reading, you may also like to listen to our podcast interview with the author about this article and the COVID-19 pandemic.

This profile replaces our 2016 profile on biosecurity.

Our overall view

Recommended

This is among the most pressing problems to work on.

Scale

We think work to reduce global catastrophic biological risks has the potential for a very large positive impact. GCBRs are both great humanitarian disasters and credible threats to humanity’s long-term future.

Neglectedness

This issue is somewhat neglected. Current spending is in the billions per year, although this large portfolio is not perfectly allocated. Our guesstimated quality adjustment yields ~$1 billion per year.

Solvability

Making progress on reducing global catastrophic biological risks seems moderately tractable. Attempts to complement the large pre-existing biosecurity portfolio have fair promise. On the one hand, there seem to be a number of pathways through which risk can be incrementally reduced; on the other, the multifactorial nature of the challenge suggests there will not be easy ‘silver bullets’.

Profile depth

Medium-depth

This is one of many profiles we've written to help people find the most pressing problems they can solve with their careers. Learn more about how we compare different problems and see how this problem compares to the others we've considered so far.

This article is our full report into reducing global catastrophic biological risks. For a shorter introduction, see our problem profile on preventing catastrophic pandemics.

What is our analysis based on?

I, Gregory Lewis, wrote this profile. I work at the Future of Humanity Institute on GCBRs. It owes a lot to helpful discussions with (and comments from) Christopher Bakerlee, Haydn Belfield, Elizabeth Cameron, Gigi Gronvall, David Manheim, Thomas McCarthy, Michael McClaren, Brenton Mayer, Michael Montague, Cassidy Nelson, Carl Shulman, Andrew Snyder-Beattie, Bridget Williams, Jaime Yassif, and Claire Zabel. Their kind help does not imply they agree with everything I write. All mistakes remain my own.

This profile is in three parts. First, I explain what GCBRs are and why they could be a major global priority. Second, I offer my impressions (such as they are) on the broad contours of the risk landscape, and how these risks are best addressed. Third, I gesture towards the best places to direct one’s career to reduce this danger.

Motivation

What are global catastrophic biological risks?

Global catastrophic risks (GCRs) are roughly defined as risks that threaten great worldwide damage to human welfare, and place the long-term trajectory of humankind in jeopardy.1 Existential risks are the most extreme members of this class. Global catastrophic biological risks (GCBRs) are a catch-all for any such risk that is broadly biological in nature (e.g. a major pandemic).

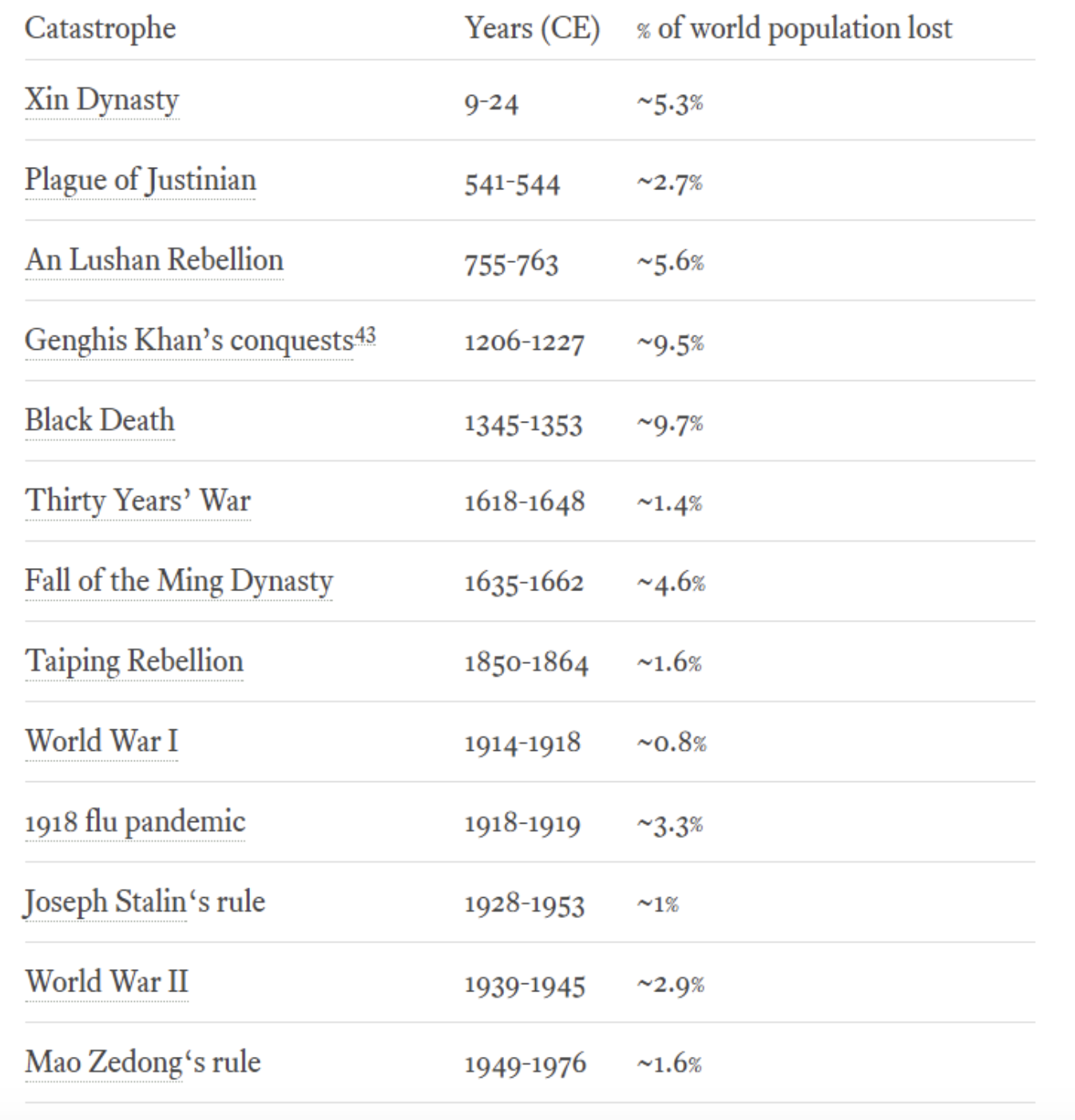

I write from a broadly longtermist perspective: roughly, that there is profound moral importance in how humanity’s future goes, and so trying to make this future go better is a key objective in our decision-making (I particularly recommend Joseph Carlsmith’s talk).2 When applying this perspective to biological risks, the issue of whether a given event threatens the long-term trajectory of humankind becomes key. This question is much harder to adjudicate than whether a given event threatens severe worldwide damage to human welfare. My guesswork is the ‘threshold’ for when a biological event starts to threaten human civilisation is high: a rough indicator is a death toll of 10% of the human population, at the upper limit of all disasters ever observed in human history.

As such, I believe some biological catastrophes, even those which are both severe and global in scope, would not be GCBRs. One example is antimicrobial resistance (AMR): AMR causes great human suffering worldwide, threatens to become an even bigger problem, and yet I do not believe it is a plausible GCBR. An attempt to model the worst case scenario of AMR suggests it would kill 100 million people over 35 years, and reduce global GDP by 2%-3.5%.3 Although disastrous for human wellbeing worldwide, I do not believe this could threaten humanity’s future – if nothing else, most of humanity’s past occurred during the ‘pre-antibiotic age’, to which worst-case scenario AMR threatens a return.

To be clear, a pandemic that killed less than 10% of the human population could easily still be among the worst events in our species’ history. For example, the ongoing COVID-19 pandemic is already a humanitarian crisis and threatens to get much worse, though it is very unlikely to threaten extinction according to this threshold. It is well worth investing great resources to mitigate such disasters and prevent more from arising.

The reason to focus here on events that kill a larger fraction of the population is, first, that they are not so unlikely and, second, that the damage they could do would be vastly greater still, and potentially even more long-lasting.

These impressions have pervasive influence on judging the importance of GCBRs in general, and choosing what to prioritise in particular. They are also highly controversial: One may believe that the ‘threshold’ for when an event poses a credible threat to human civilisation is even higher than I suggest (and the risk of any biological event reaching this threshold is very remote). Alternatively, one may believe that this threshold should be set much lower (or at least set with different indicators) so a wider or different set of risks should be the subject of longtermist concern.4 On all of this, more later.

The plausibility of GCBRs

The case that biological global catastrophic risks are a credible and urgent threat to humankind arises from a few different sources of evidence. All are equivocal.

- Experts express alarm about biological risks in general, and there is some weak evidence of expert concern about GCBRs in particular. (Yet other experts are sceptical.)

- Historical evidence of ‘near-GCBR’ events, suggesting a ‘proof of principle’ there could be risks of something even worse. (Yet none have approached extinction-level nor had discernable long-run negative impacts on global civilisation that approached GCBR levels.)

- Worrying features of advancing biotechnology.

- Numerical estimates and extrapolation. (Yet the extrapolation is extremely uncertain and indictable.)

Expert opinion

Various expert communities have highlighted the danger of very-large scale biological catastrophe, and have assessed that existing means of preventing and mitigating this danger are inadequate.5

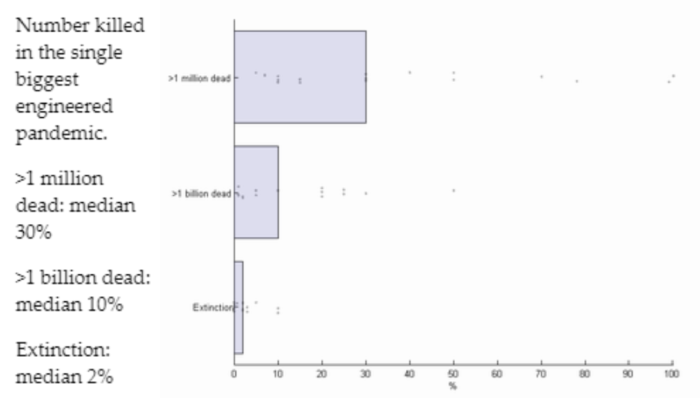

Yet, as above, not all large scale events would constitute a GC(B)R. The balance of expert opinion on the likelihood of these sorts of events is hard to assess, although my impression is that there is substantial scepticism.6 The only example of expert elicitation addressed to this I am aware of is a 2008 global catastrophic risks survey, which offers these median estimates of a given event occurring before 2100:

Table 1: Selected risk estimates from 2008 survey

| | At least 1 million dead | At least 1 billion dead | Human extinction |
|---|---|---|---|
| Number killed in the single biggest engineered pandemic | 30% | 10% | 2% |
| Number killed in the single biggest natural pandemic | 60% | 5% | 0.05% |

This data should be weighed lightly. As Millett and Snyder-Beattie (2017) note:

> The disadvantage is that the estimates were likely highly subjective and unreliable, especially as the survey did not account for response bias, and the respondents were not calibrated beforehand.

The raw data also shows considerable variation in estimates,7 although imprecision in risk estimates is generally a cause for greater concern.

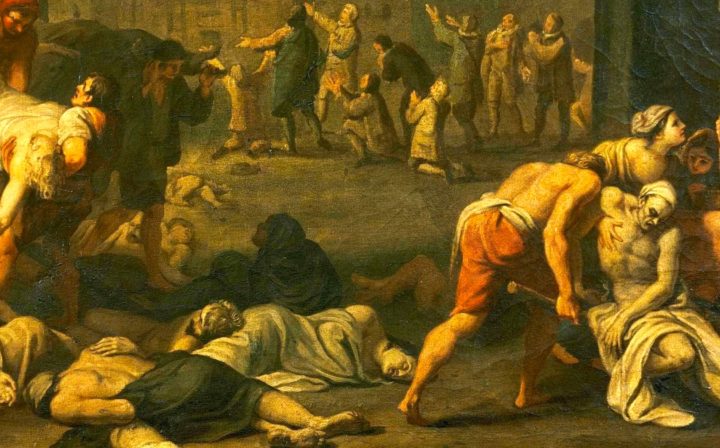

‘Near-GCBR’ events in the historical record

‘Naturally arising’ biological extinction events seem unlikely given the rarity of ‘pathogen driven’ extinction events in natural history, and the 200,000 year lifespan of anatomically modern humans. The historical record also rules against a very high risk of ‘naturally arising’ GCBRs (on which more later). Nonetheless history has four events that somewhat resemble a global biological catastrophe, and so act as a partial ‘proof of principle’ for the danger:8

- Plague of Justinian (541-542 CE): Thought to have arisen in Asia before spreading into the Byzantine Empire around the Mediterranean. The initial outbreak is thought to have killed ~6 million (~3% of world population),9 and contributed to reversing the territorial gains of the Byzantine empire around the Mediterranean rim as well as (possibly) the success of its opponent in the subsequent Arab-Byzantine wars.

- The Black Death (1335-1355 CE): Estimated to have killed 20-75 million people (~10% of world population), and believed to have had profound impacts on the subsequent course of European history.

- The Columbian Exchange (1500-1600 CE): A succession of pandemics (likely including smallpox and paratyphoid) brought by the European colonists devastated Native American populations: it is thought to contribute in large part to the ~80% depopulation of native populations in Mexico over the 16th century, and other groups in the Americas are suggested to have suffered even starker depopulation – up to 98% proportional mortality.10

- The 1918 Influenza Pandemic (1918 CE): A pandemic which ranged almost wholly across the globe, and killed 50-100 million people (2.5% – 5% of world population) – probably more than either World War.

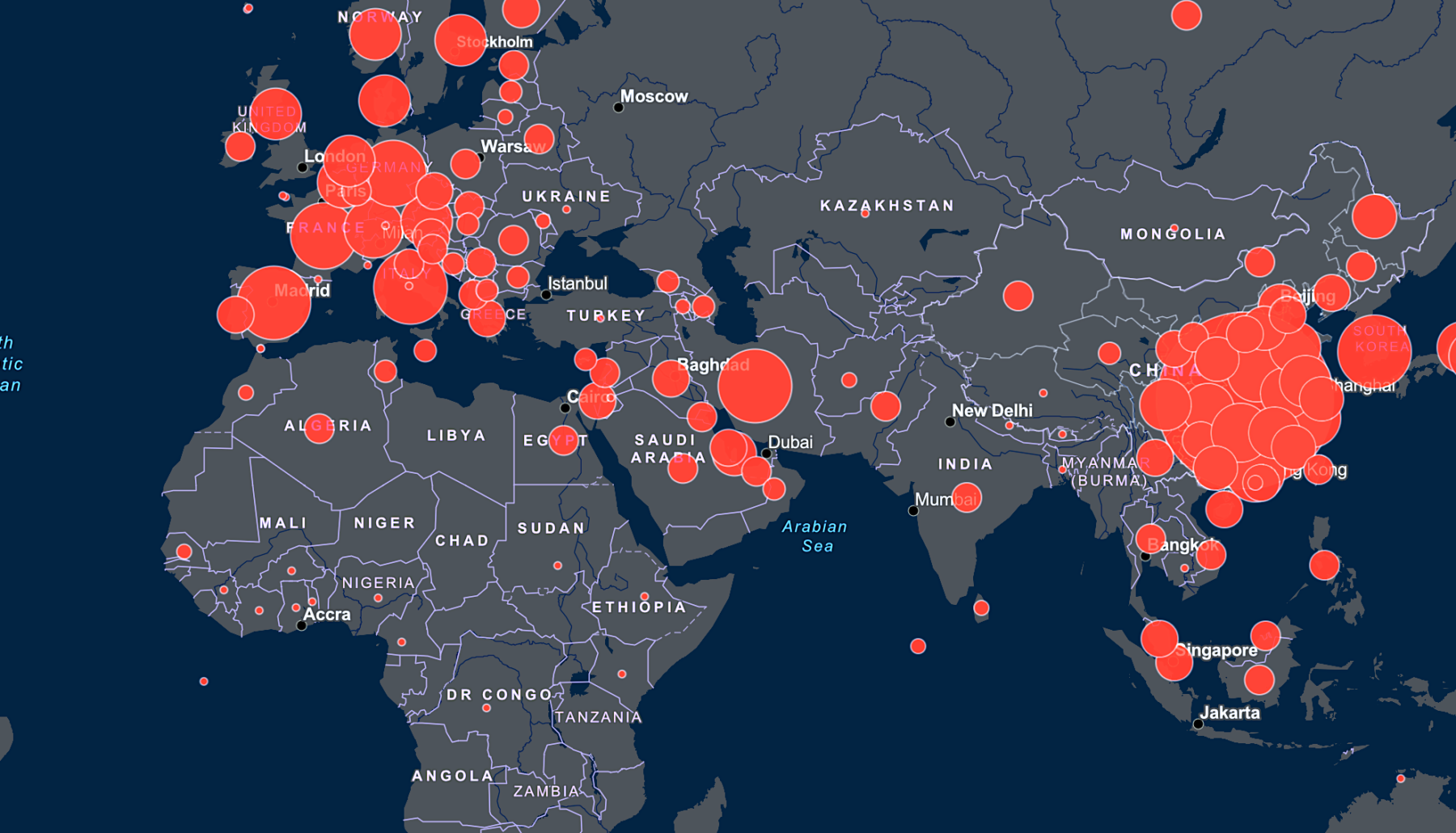

COVID-19, which the World Health Organization declared a global pandemic on March 11th 2020, has already caused grave harm to humankind, and regrettably is likely to cause much more. Fortunately, it seems unlikely to cause as much harm as the historical cases noted here.

All of the impacts of the cases above are deeply uncertain, as:

- Vital statistics range from at best very patchy (1918) to absent. Historical populations (let alone their mortality rate, and let alone mortality attributable to a given outbreak) are very imprecisely estimated.

- Proxy indicators (e.g. historical accounts, archaeology) have very poor resolution, leaving a lot to educated guesswork and extrapolation (e.g. “The evidence suggests, in European city X, ~Y% of the population died due to the plague – how should one adjust this to the population of Asia?”)

- Attribution of the historical consequences of an outbreak is highly contestable: other coincident events can offer competing (or overdetermining) explanations.

Although these factors add ‘simple’ uncertainty, I would guess academic incentives and selection effects introduce a bias to over-estimates for historical cases. For this reason I’ve used Muehlhauser’s estimates for ‘death tolls’ (generally much more conservative than typical estimates, such as ‘75-200 million died in the Black Death’), and reiterate the possible historical consequences are ‘credible’ rather than confidently asserted.

For example, it’s not clear the plague of Justinian should be on the list at all. Mordechai et al. (2019) survey the circumstantial archeological data around the time of the Justinian Plague, and find little evidence of a discontinuity over this period suggestive of a major disaster: papyri and inscriptions suggest stable rates of administrative activity, and pollen measures suggest stable land-use (they also offer reasonable alternative explanations for measures which did show a sharp decline – new laws declined during the ‘plague period’, but this could be explained by government efforts at legal consolidation having coincidentally finished beforehand).

Even if one takes the supposed impacts of each at face value, each has features that may disqualify it as a ‘true’ global catastrophe. The first three, although afflicting a large part of humanity, left another large part unscathed (the Eurasian and American populations were effectively separated). The 1918 flu had a very high total death toll and global reach, but not the highest proportional mortality, and relatively limited historical impact. The Columbian Exchange, although having high proportional mortality and crippling impact on the affected civilisations, had comparatively little effect on global population owing to the smaller population in the Americas and the concurrent population growth of the immigrant European population.

Yet even though these historical cases were not ‘true’ GCBRs, they were perhaps near-GCBRs. They suggest that certain features of a global catastrophe (e.g. civilisational collapse, high proportional mortality) can be driven by biological events. And the current COVID-19 outbreak illustrates the potential for diseases to spread rapidly across the world today, despite efforts to control them. There seems to be no law of nature that prevents a future scenario more extreme than these, or that combines the worst characteristics of those noted above (even if such an event is unlikely to naturally arise).

Whether the risk of ‘natural’ biological catastrophes is increasing or decreasing is unclear

The cases above are ‘naturally occurring’ pandemic diseases, and most of them afflicted much less technically advanced civilisations in the past. Whether subsequent technological progress has increased or decreased this danger is unclear.

Good data is hard to find: the burden of endemic infectious disease is on a downward trend, but this gives little reassurance for changes in the far right tail of pandemic outbreaks. One (modelling) datapoint comes from an AIR Worldwide study estimating the impact if the 1918 influenza outbreak happened today. It suggests that although the absolute number of deaths would be similar (tens of millions), the proportional mortality of the global population would be much lower, due to a 90% reduction in case fatality risk.
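As a rough illustration of how a similar absolute toll translates into lower proportional mortality, the sketch below uses only figures quoted in this article: the 1918 toll of 50-100 million (given as 2.5%-5% of world population, implying about 2 billion people) and the later remark that a 99% loss today would leave around 78 million (implying about 7.8 billion). Treating ‘similar absolute numbers’ as exactly the 1918 toll is an assumption made for illustration, not a figure from the AIR study, and the function name is hypothetical.

```python
# Proportional mortality = deaths / population.
# Populations are back-derived from figures quoted in this article,
# not taken from the AIR study itself.

POP_1918 = 50e6 / 0.025   # 50 million deaths quoted as 2.5% of world population
POP_TODAY = 78e6 / 0.01   # 78 million survivors quoted as 1% of today's population


def proportional_mortality(deaths: float, population: float) -> float:
    """Fraction of the population killed."""
    return deaths / population


if __name__ == "__main__":
    # Assumption: a modern outbreak with the same absolute toll as 1918.
    for deaths in (50e6, 100e6):
        then = proportional_mortality(deaths, POP_1918)
        now = proportional_mortality(deaths, POP_TODAY)
        print(f"{deaths / 1e6:.0f}M deaths: {then:.2%} in 1918 vs {now:.2%} today")
```

Under these assumptions the same absolute toll is roughly a quarter of the 1918 proportion, simply because the population is about four times larger.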

From first principles, considerations point in both directions. On the side of natural GCBR risk getting lower:

- A healthier (and more widely geographically spread) population.

- Better hygiene and sanitation.

- The potential for effective vaccination and therapeutics.

- Understanding of the mechanisms of disease transmission and pathogenesis.

On the other hand:

- Trade and air travel allow much faster and wider transmission.11 For example, air travel seems to have played a large role in the spread of COVID-19.

- Climate change may (among other effects) increase the likelihood of new emerging zoonotic diseases.

- Greater human population density.

- Much larger domestic animal reservoirs.

There are many other relevant considerations. On balance, my (highly uncertain) view is that the danger of natural GCBRs has declined.

Artificial GCBRs are very dangerous, and increasingly likely

‘Artificial’ GCBRs are a category of increasing concern, owing to advancing biotechnological capacity alongside the increasing risk of its misuse.12 The current landscape (and plausible forecasts of its future development) have concerning features which, together, make the accidental or deliberate misuse of biotechnology a credible global catastrophic risk.

Replaying the worst outbreaks in history

Polio, the 1918 pandemic influenza strain, and most recently horsepox (a close relative of smallpox) have all been synthesised ‘from scratch’. The genetic sequences of all of these disease-causing organisms (and others besides) are publicly available, and the progress and democratisation of biotechnology may make the capacity to perform similar work more accessible to the reckless or malicious.13 Biotechnology therefore poses the risk of rapidly (and repeatedly) recreating the pathogens which led to the worst biological catastrophes observed in history.

Engineered pathogens could be even more dangerous

Beyond repetition, biotechnology allows the possibility of engineering pathogens more dangerous than those that have occurred in natural history. Evolution is infamously myopic, and its optimisation target is reproductive fitness, rather than maximal damage to another species (cf. optimal virulence). Nature may not prove a peerless bioterrorist; dangers that emerge by evolutionary accident could be surpassed by deliberate design.

Hints of this can be seen in the scientific literature. The gain-of-function influenza experiments suggested that artificial selection could lead to pathogens with properties that enhance their danger.14 There have also been instances of animal analogues of potential pandemic pathogens being genetically modified to reduce existing vaccine efficacy.

These cases used techniques well behind the current cutting edge of biotechnology, and were produced somewhat ‘by accident’ by scientists without malicious intent. The potential for bad actors to intentionally produce new or modified pathogens using modern biotechnology is harrowing.

Ranging further, and reaching higher, than natural history

Natural history constrains how life can evolve. One consequence is the breadth of observed biology is a tiny fraction of the space of possible biology.15 Bioengineering may begin to explore this broader space.

One example is enzymes: proteins that catalyse biological reactions. The repertoire of biochemical reactions catalysed by natural enzymes is relatively narrow, and few are optimised for very high performance, due to limited selection pressure or ‘short-sighted evolution’.16 Enzyme engineering is a relatively new field, yet it has already produced enzymes that catalyse novel reactions (1, 2, 3), and modifications of existing enzymes with improved catalytic performance and thermostability (1, 2).

Similar stories can be told for other aspects of biology, and together they suggest the potential for biological capabilities unprecedented in natural history. It would be optimistic to presume that in this space of large and poorly illuminated ‘unknown unknowns’ there will only be familiar dangers.

Numerical estimates

Millett and Snyder-Beattie (2017) offer a number of different models to approximate the chance of a biological extinction risk:

Table 2: Estimates of biological extinction risk 17

| Model | Risk of extinction per century | Method (in sketch) |
|---|---|---|
| Potentially Pandemic Pathogens | 0.00016% to 0.008% | 0.01 to 0.2% yearly risk of global pandemic emerging from accidental release in the US. Multiplied by 4 to approximate worldwide risk. Multiplied by 2 to include possibility of deliberate release. 1 in 10000 risk of extinction from a pandemic release. |
| Power law (bioterrorism) | 0.014% | Scale parameter of ~0.5. Risk of 5 billion deaths = (5 billion)^-0.5. 10% chance of 5 billion deaths leading to extinction. |
| Power law (biowarfare) | 0.005% | Scale parameter of ~0.41. Risk of 5 billion deaths = (5 billion)^-0.41. A war every 2 years. 10% chance of massive death toll being driven by bio. 10% chance of extinction. |

These rough approximations may underestimate the risk, owing to the conservative assumptions in the models, the fact that the three scenarios do not mutually exhaust the risk landscape, and the fact that the extrapolation from historical data is not adjusted for trends that (I think, in aggregate) increase the risk. That said, the principal source of uncertainty is the extremely large leap of extrapolation: power-law assumptions guarantee a heavy right tail, yet in this range other factors may drive a different distribution (either in terms of type or scale parameter). The models are (roughly) transcribed into a guesstimate here.18
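The per-century figures in the two power-law rows of Table 2 can be roughly reproduced from the method column. The sketch below is a reading of that column rather than the authors’ own calculation. It assumes the power-law tail is a per-year probability for bioterrorism and a per-war probability for biowarfare (the table does not say so explicitly), and that small probabilities are summed linearly over 100 years rather than compounded. The function names are hypothetical.

```python
# Rough reproduction of the power-law rows of Table 2.
# Assumptions (not stated in the table): the tail probability is per year
# (bioterrorism) or per war (biowarfare), and small probabilities are
# summed linearly over a century instead of being compounded.

DEATHS = 5e9              # death toll used in the table
YEARS_PER_CENTURY = 100


def tail_probability(deaths: float, scale: float) -> float:
    """Chance of an event killing at least `deaths`, read as deaths ** -scale."""
    return deaths ** -scale


def bioterrorism_risk_per_century() -> float:
    p_per_year = tail_probability(DEATHS, 0.5)
    p_extinction_given_toll = 0.10
    return p_per_year * YEARS_PER_CENTURY * p_extinction_given_toll


def biowarfare_risk_per_century() -> float:
    p_per_war = tail_probability(DEATHS, 0.41)
    wars_per_century = YEARS_PER_CENTURY / 2   # "a war every 2 years"
    p_bio_driven = 0.10
    p_extinction = 0.10
    return p_per_war * wars_per_century * p_bio_driven * p_extinction


if __name__ == "__main__":
    print(f"bioterrorism: {bioterrorism_risk_per_century():.4%} per century")
    print(f"biowarfare:   {biowarfare_risk_per_century():.4%} per century")
```

Under these assumptions the results land close to the 0.014% and 0.005% given in the table. The Potentially Pandemic Pathogens row is left out of this sketch.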

GCBRs may be both neglected and tractable

Even if GCBRs are a ‘big problem’, this does not entail more people should work on them. Some big problems are hard to make better, often because they are already being addressed by many others, or because there are no good interventions available.19

This doesn’t seem to apply to GCBRs. There are good reasons to predict this is a problem that will continue to be neglected; surveying the area provides suggestive evidence of under-supply and misallocation; and examples of apparently tractable shortcomings are readily found.

A prior of low expectations

Human cognition, sculpted by the demands of the ancestral environment, may fit poorly with modern challenges. Yudkowsky surveys heuristics and biases that tend to mislead our faculties: GC(B)Rs, with their unpredictability, rarity, and high consequence, appear to be a treacherous topic for our minds to navigate.

Decisions made by larger groups can sometimes mitigate these individual faults. But the wider social and political environment presents its own challenges. There can be value divergence: a state may regard its destruction and outright human extinction as similarly bad, even if they starkly differ from the point of view of the universe. Misaligned incentives can foster very short time horizons, parochial concern, and policy driven by which constituents can shout the loudest instead of who is the most deserving.20 Concern for GCBRs – driven in large part by cosmopolitan interest in the global population, concern for the long-run future, and where most of its beneficiaries are yet to exist – has obvious barriers to overcome.

The upshot is that GC(B)Rs lie within the shadows cast by defects in our individual reasoning, and their reduction is a global and intergenerational public good, which standard theory suggests markets and political systems will under-provide.

The imperfectly allocated portfolio

Very large efforts are made on mitigating biological risks in the general sense. The US government alone planned to spend around $3 billion on biosecurity in 2019.21 Even if only a small fraction of this is ‘GCBR-relevant’ (see later), it looks much larger than (say) $10s of millions yearly spending on AI safety, another 80,000 Hours priority area.

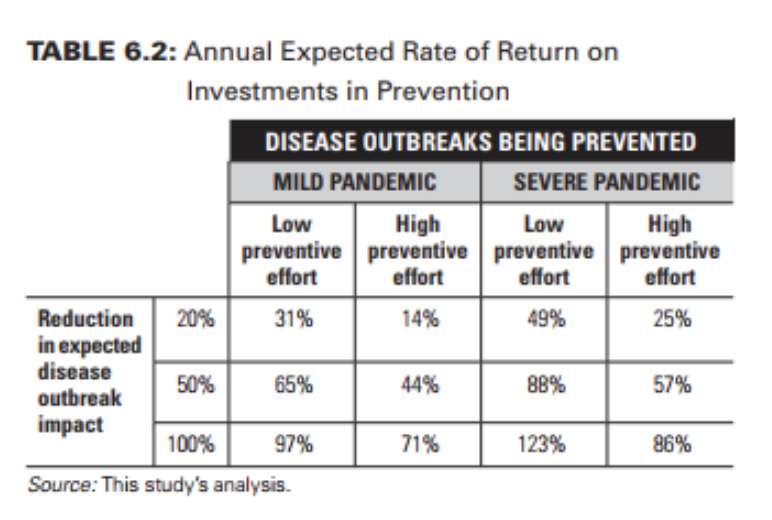

Most things are relatively less neglected than AI safety, yet they can still be neglected in absolute terms. A clue for this being the case in biological risk generally is evidence of high marginal cost-effectiveness. One example is pandemic preparedness. The World Bank suggests an investment of $1.9B to $3.4B in ‘One Health’ initiatives would reduce the likelihood of pandemic outbreaks by 20%. At this level, the economic rate of return is a (highly favourable) 14-49%. Although I think this ‘bottom line’ is optimistic,22 it is probably not so optimistic for its apparent outperformance to be wholly overestimation.

There is a story to be told of insufficient allocation towards GCBRs in particular, as well as biosecurity in general. Millett and Snyder-Beattie (2017) offer a ‘black-box’ approach (e.g. “X billion dollars would reduce biological existential risk by Y% in expectation”) to mitigating extremely high consequence biological disasters. ‘Pricing in’ the fact that extinction not only kills everyone currently alive, but also entails the loss of all people who could live in the future, they report the ‘cost per QALY’ of a 250 billion dollar programme that reduces biological extinction risks by 1% from their previous estimates (i.e. an absolute risk reduction of 0.02 to ~2/million over a century) to be between $0.13 and $1,600,23 superior to marginal health spending in rich countries. Contrast the billion dollar efforts to develop and stockpile anthrax vaccines.

Illustrative examples

I suggest the following two examples are tractable shortcomings in the area of GCBR reduction (even if they are not necessarily the best opportunities), and so suggest opportunities to make a difference are reasonably common.

State actors and the Biological Weapons Convention

Biological weapons have some attractive features for state actors to include in their portfolio of violence: they provide a novel means of attack, are challenging to attribute, and may provide a strategic deterrent more accessible than (albeit inferior to) nuclear weapons.24 The trend of biotechnological progress may add to or enhance these attractive features, and thus deliberate misuse by a state actor developing and deploying a biological weapon is a plausible GCBR (alongside other risks which may not be ‘globally catastrophic’ as defined before, but are nonetheless extremely bad).

The principal defence against proliferation of biological weapons among states is the Biological Weapons Convention. Of 197 state parties eligible to ratify the BWC, 183 have done so. Yet some states which have signed or ratified the BWC have covertly pursued biological weapons programmes. The leading example was the Biopreparat programme of the USSR,25 which at its height spent billions and employed tens of thousands of people across a network of secret facilities, and was conducted after the USSR signed onto the BWC:26 its activities are alleged to have included industrial-scale production of weaponised agents like plague, smallpox and anthrax, alongside successes in engineering pathogens for increased lethality, multi-resistance to therapeutics, evasion of laboratory detection, vaccine escape, and novel mechanisms of disease not observed in nature.27 Other past and ongoing violations in a number of countries are widely suspected.28

The BWC faces ongoing difficulties. One is verification: the Convention lacks verification mechanisms for countries to demonstrate their compliance,29 and the technical and political feasibility of such verification is fraught – similarly, it lacks an enforcement mechanism. Another is that states may use disarmament treaties (the BWC included) as leverage for other political ends: decisions must be made by unanimity, and thus the 8th review conference in 2017 ended without agreement due to the intransigence of one state.30 Finally (and perhaps most tractably), the BWC struggles for resources: it has around 3 full-time staff, a budget less than the typical McDonald’s, and many states do not fulfil their financial obligations. The 2017 meeting of states parties was only possible thanks to overpayment by some states, and the 2018 meeting had to be cut short by a day due to insufficient funds.31

Dual-use research of concern

The gain-of-function influenza experiments are an example of dual-use research of concern (DURC): research whose results have the potential for misuse. De novo horsepox synthesis is a more recent case. Good governance of DURC remains more aspiration than actuality.

A lot of decision making about whether to conduct a risky experiment falls on an individual investigator, and typical scientific norms around free inquiry and challenging consensus may be a poor fit for circumstances where the downside risks ramify far beyond the practitioners themselves. Even in the best case, where the scientific community is solely composed of those who only perform work which they sincerely believe is on balance good for the world, this independence of decision making gives rise to a unilateralist’s curse: the decision on ‘should this be done’ defaults to the most optimistic outlier, as only one needs to mistakenly believe it should be done for it to be done, even if it should not.
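A toy model can make the ‘only one needs to be mistaken’ point concrete. Suppose an experiment should not be done, and each of n well-meaning investigators independently and mistakenly judges it worthwhile with probability p. The chance that at least one goes ahead is then 1 - (1 - p)^n, which climbs quickly with n. The independence assumption, the error rate, and the group sizes below are all hypothetical choices for illustration.

```python
# Toy model of the unilateralist's curse.
# Assumptions (illustrative only): the experiment is harmful on balance,
# each investigator errs independently with the same probability, and
# a single mistaken investigator is enough for the work to be done.


def p_someone_proceeds(n_actors: int, p_error: float) -> float:
    """Probability that at least one of n independent actors proceeds."""
    return 1.0 - (1.0 - p_error) ** n_actors


if __name__ == "__main__":
    p_error = 0.02  # hypothetical 2% chance each actor misjudges the work
    for n in (1, 10, 50, 200):
        print(f"{n:>3} actors: {p_someone_proceeds(n, p_error):.1%}")
```

Even with a small individual error rate, the probability that somebody proceeds approaches certainty as the number of independent decision makers grows, which is one reason coordination and shared oversight matter here.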

In reality, scientists are subject to other incentives besides the public good (e.g. publications, patents). This drives the scientific community to make all accessible discoveries as quickly as possible, even if the sequence of discoveries that results is not the best from the perspective of the public good: it may be better that safety-enhancing discoveries occur before (easier to make) dangerous discoveries (cf. differential technological development).

Individually, some scientists may be irresponsible or reckless. Ron Fouchier, when first presenting his work on gain of function avian influenza, did not describe it in terms emblematic of responsible caution: saying that he first “mutated the hell out of the H5N1 virus” to try and make it achieve mammalian transmission. Although it successfully attached to mammalian cells (“which seemed to be very bad news”) it could not transmit from mammal to mammal. Then “someone finally convinced [Fouchier] to do something really, really stupid” – using serial passage in ferrets of this mutated virus, which did successfully produce an H5N1 strain that could transmit from mammal-to-mammal (“this is very bad news indeed”).32

Governance and oversight can mitigate risks posed by individual foibles or mistakes. The track record of these identifying concerns in advance is imperfect. The gain of function influenza work was initially funded by the NIH (the same body which would subsequently declare a moratorium on gain of function experiments), and passed institutional checks and oversight – concerns only began after the results of the work became known. When reporting de novo horsepox synthesis to the WHO advisory committee on Variola virus research, the scientists noted:

> Professor Evans’ laboratory brought this activity to the attention of appropriate regulatory authorities, soliciting their approval to initiate and undertake the synthesis. It was the view of the researchers that these authorities, however, may not have fully appreciated the significance of, or potential need for, regulation or approval of any steps or services involved in the use of commercial companies performing commercial DNA synthesis, laboratory facilities, and the federal mail service to synthesise and replicate a virulent horse pathogen.

One underlying challenge is there is no bright line one can draw around all concerning research. ‘List based’ approaches, such as select agent lists or the seven experiments of concern are increasingly inapposite to current and emerging practice (for example, neither of these would ‘flag’ horsepox synthesis, as horsepox is not a select agent, and de novo synthesis, in itself, is not one of the experiments of concern). Extending the lists after new cases are demonstrated does not seem to be a winning strategy, yet the alternative to lists is not clear: the consequences of scientific discovery are not always straightforward to forecast.

Even if a more reliable governance ‘safety net’ could be constructed, there would remain challenges in geographic scope. Practitioners inclined (for whatever reason) towards more concerning work can migrate to where the governance is less stringent; even if one journal declines to publish on public safety grounds, one can resubmit to another who might.33

Yet these challenges are not insurmountable: research governance can adapt to modern challenges; greater awareness of (and caution around) biosecurity issues can be inculcated into the scientific community; one can attempt to construct better means of risk assessment than blacklists (cf. Lewis et al. (2019)); broader intra- and inter-national cooperation can mitigate some of the dangers of the unilateralist’s curse. There is ongoing work in all of these areas. All could be augmented.

Impressions on the problem area

Even if the above persuades that GCBRs should be an important part of the ‘longtermist portfolio’,34 it answers neither how to prioritise this problem area relative to other parts of the ‘far future portfolio’ (e.g. AI safety, nuclear security), nor which areas under the broad heading of ‘GCBRs’ are the best to work on. I survey some of the most important questions, and (where I have them) offer my impressions as a rough guide.

Key uncertainties

What is the threshold for an event to threaten global catastrophe?

Biological events vary greatly in their scale. At either extreme, there is wide agreement on whether to ‘rule out’ or ‘rule in’ an event as a credible GCBR: a food poisoning outbreak is not a GCBR; an extinction event is. Disagreement is widespread between these limits. I earlier offered a rough indicator of ‘10% of the population’, which suggests a threshold for concern for GCBRs at the upper limit of events observed in human history.

As this plays a large role in driving my guesses over risk share, a lower threshold would tend to push risk ‘back’ from the directions I indicate (and generally towards ‘conventional’ or ‘commonsense’ prioritisation), and vice-versa.

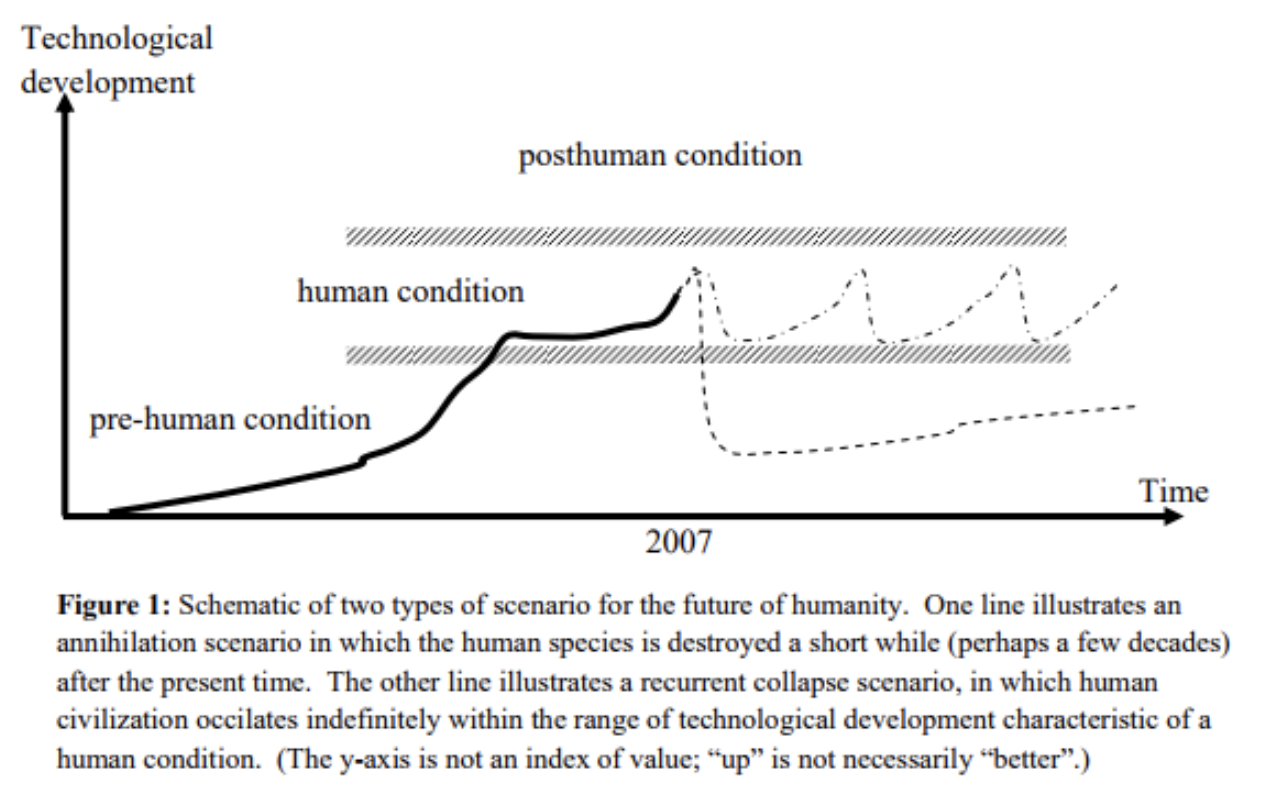

How likely is humanity to get back on track after global catastrophe?

I have also presumed that a biological event which causes human civilisation to collapse (or ‘derail’) threatens great harm to humanity’s future, and thus such risks would have profound importance to longtermists alongside those of outright human extinction. This is commonsensical, but not inarguable.

Much depends on how likely humanity is to recover from extremely large disasters which nonetheless are not extinction events. An event which kills 99% of humankind would leave a population of around 78 million, still much higher than estimates of prehistoric total human populations (which survived the 200,000 year duration of prehistory, suggesting reasonable resilience to subsequent extinction). Unlike prehistory, the survivors of a ‘99%’ catastrophe likely have much greater knowledge and access to technology than earlier times, better situating them for a speedy recovery, at least relative to the hundreds of millions of years remaining of the earth’s habitable period.35 The likelihood of repeated disasters ‘resetting’ human development again and again through this interval looks slim.

If so, a humanity whose past has been scarred by such a vast disaster nonetheless still has good prospects to enjoy a flourishing future. If this is true, from a longtermist perspective, more effort should be spent upon disasters which would not offer reasonable prospect of recovery, of which risks of outright extinction are the leading (but may not be the only) candidate.36

Yet it may not be so:

- History may be contingent and fragile, and the history we have observed can at best give limited reassurance that recovery would likely occur if we “blast (or ‘bio’) ourselves back to the stone age”.

- We may also worry about interaction terms between catastrophic risks: perhaps one global catastrophe is likely to precipitate others which ‘add up’ to an existential risk.

- We may take the trajectory of our current civilisation to be unusually propitious out of those which were possible (consider reasonably-nearby possible worlds with totalitarian state hyper-power, or roiling great power warfare). Even if a GCBR ‘only’ causes a civilisational collapse which is quickly recovered from, it may still substantially increase risk indirectly if the successor civilisations tend to be worse at navigating subsequent existential risks well.37

- Risk factors may be shared between GCBRs and other global catastrophes (e.g. further proliferation of weapons of mass destruction does not augur well for humanity navigating other challenges of emerging technology). Thus the risk of large biological disasters may be a proxy indicator for these important risk factors.38

The more one is persuaded by a ‘recovery is robust and reliable’ view, the more one should focus effort on existential rather than globally catastrophic dangers (and vice versa). Such a view would influence not only how to allocate efforts ‘within’ the GCBR problem area, but also in allocation between problem areas. The aggregate risk of GCBRs appears to be mainly composed of non-existential dangers, and so this problem area would be relatively disfavoured compared to those, all else equal, where the danger is principally one of existential risk (AI perhaps chief amongst these).

Some guesses on risk

How do GCBRs compare to AI risk?

A relatively common view within effective altruism is that biological risks and AI risks comprise the two most important topics to work on from a longtermist perspective.39 AI likely poses a greater overall risk, yet GCBRs may have good opportunities for people interested in increasing their impact given the very large pre-existing portfolio, more ‘shovel-ready’ interventions, and very few people working in the relevant fields who have this as their highest priority (see later).

My impression is that GCBRs should be a more junior member of an ideal ‘far future portfolio’ compared to AI.40 But not massively more junior: some features of GCBRs look worrying, and many others remain unclear. When considered alongside relatively greater neglect (at least among those principally concerned with the long-term future), whatever gap lies between GCBRs and AI is unlikely to be so large as to swamp considerations around comparative advantage. I recommend those with knowledge, skills or attributes particularly well-suited to working on GCBRs explore this area first before contemplating changing direction into AI. I also suggest those for whom personal fit does not provide a decisive consideration consider this area as a reasonable candidate alongside AI.

Probably anthropogenic > natural GCBRs.

In sketch, the case for thinking anthropogenic risks are greater than natural ones is this:

Our observational data, such as it is, argues for a low rate of natural GCBRs:41

- Pathogen-driven extinction events appear to be relatively rare.

- As Dr Toby Ord argues in the section on natural risks in his book ‘The Precipice’, the fact that humans have survived for 200,000 years is evidence against there being a high baseline extinction risk from any cause (biology included), and so suggests a low probability of extinction occurring in (say) 100 years.42

- A similar story applies to GCBRs, given we’ve (arguably) not observed a ‘true’ GCBR, and only a few (or none) near-GCBRs.
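The survival argument above can be made concrete with a small calculation. This is only a sketch under strong simplifying assumptions that are mine rather than the source's: the per-century extinction risk is treated as constant and independent across centuries, and observer-selection effects are ignored. The function name and the candidate risk levels are illustrative.

```python
def survival_probability(risk_per_century: float, centuries: int) -> float:
    """Chance of surviving `centuries` centuries in a row, assuming a
    constant and independent extinction risk in each century."""
    return (1.0 - risk_per_century) ** centuries


# 200,000 years of human survival (the figure used in the text) = 2,000 centuries.
CENTURIES_SURVIVED = 2000

if __name__ == "__main__":
    # Hypothetical candidate values for the baseline per-century risk.
    for risk in (0.01, 0.001, 0.0001):
        p = survival_probability(risk, CENTURIES_SURVIVED)
        print(f"per-century risk {risk:.2%}: chance of surviving this long = {p:.3g}")
```

Under these assumptions, a baseline risk as high as 1% per century would make our 200,000-year track record extraordinarily unlikely (well under one in a million), whereas a risk of 0.01% per century is comfortably compatible with it. This is the sense in which long survival counts as evidence for a low natural baseline.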

One should update this baseline risk by all the novel changes in recent history (e.g. antibiotics, air travel, public health, climate change, animal agriculture – see above). Estimates of the aggregate impact of these changes are highly non-resilient even with respect to sign (I think it is risk-reducing, but reasonable people disagree). Yet it seems reasonable given this uncertainty that one should probably not be adjusting the central risk estimate upwards by the orders of magnitude necessary to put the risk of a natural GCBR at 1% or greater this century.43

I think the risk of an anthropogenic GCBR is around the 1% mark or greater this century, motivated partly by the troubling developments in biotechnology noted above, and partly by the absence of reassuring evidence of a long track record of safety from this type of risk. Thus anthropogenic risk looks more dangerous than natural risk.

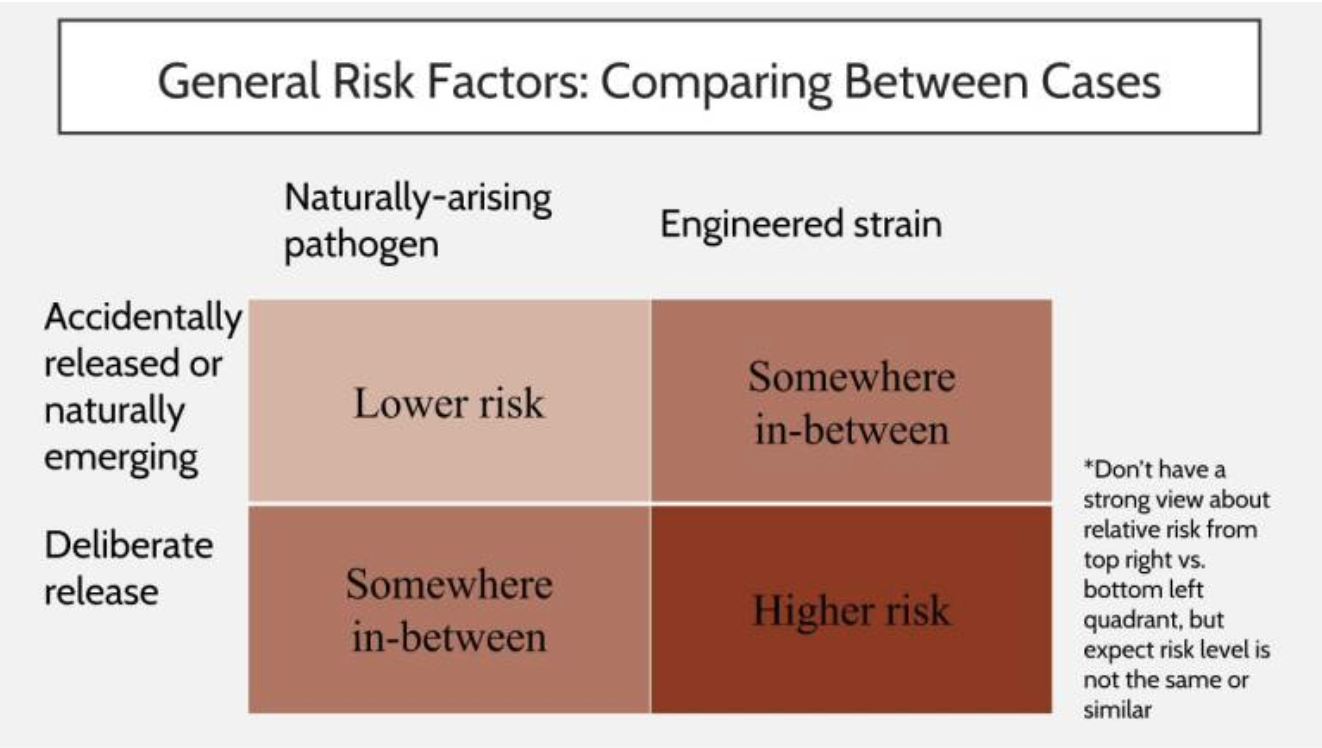

Perhaps deliberate over accidental misuse

One can (imperfectly) subdivide anthropogenic risks into deliberate versus accidental misuse (compare bioterrorism to a ‘science experiment gone wrong’ scenario).44

Which is more worrying is hard to say – little data to go on, and considerations in both directions. For deliberate misuse, the idea is that scenarios of vast disaster are (thankfully) rare among the space of ‘bad biological events’ (however cashed out), and so are more likely to be found by deliberate search rather than chance; for accidents, the idea is that (thankfully) most actors are well intentioned, and so there will be a much higher rate of good actors making mistakes than bad actors doing things on purpose. I favour the former consideration more,45 and so lean towards deliberate misuse scenarios being more dangerous than accidental ones.

Which bad actors pose the greatest risk?

Various actors may be inclined to deliberate misuse: from states, to terrorist groups, to individual misanthropes (and others besides). One key feature is what we might call actor sophistication (itself a rough summary of their available resources, understanding, and so on). There are fewer actors at a higher level of sophistication, but the danger arising from each is higher: there are many more possible individual misusers than possible state misusers, but a given state programme tends to be much more dangerous than a given individual scheme (and states themselves could vary widely in their sophistication).

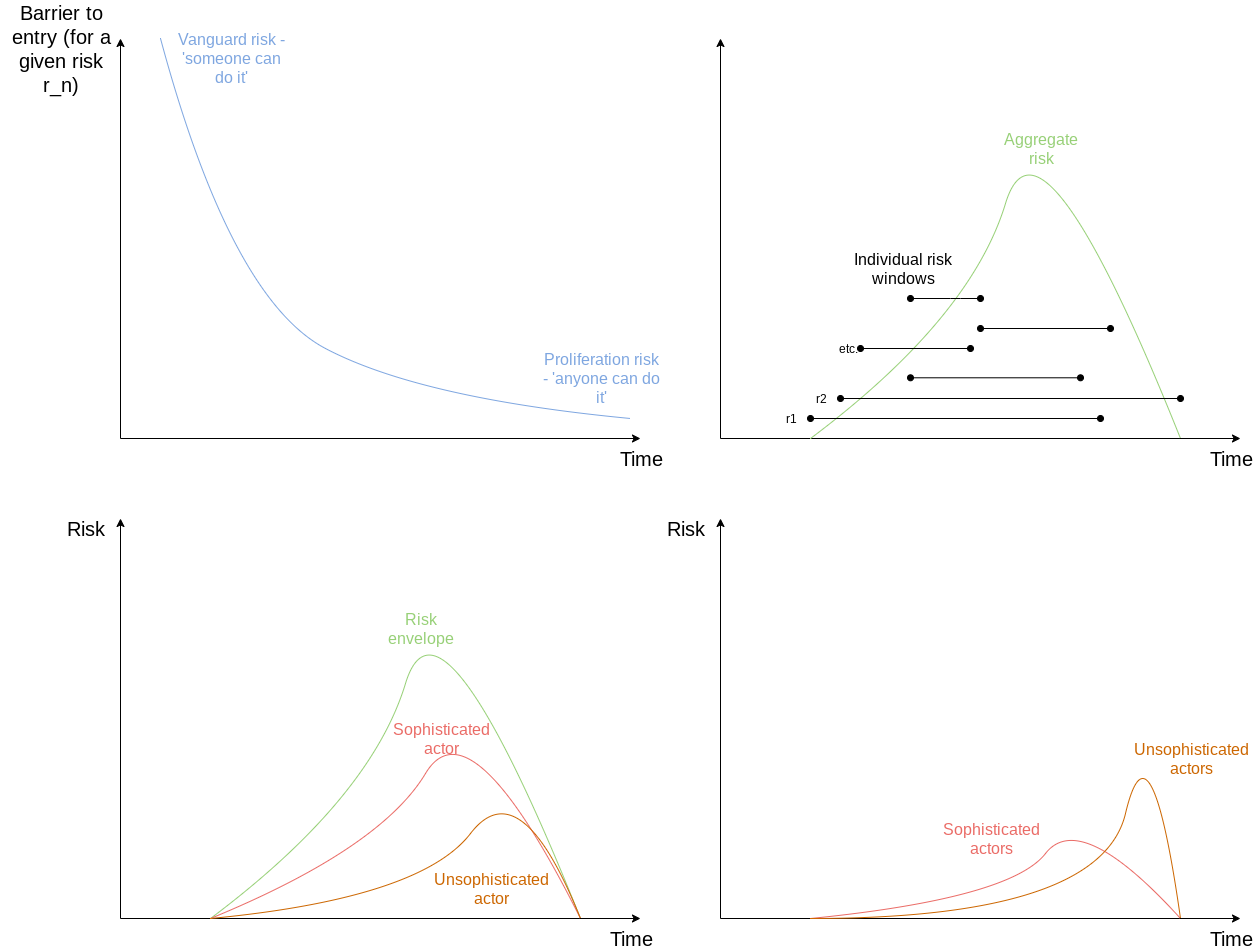

My impression is that one should expect the aggregate risk to initially arise principally from highly sophisticated bad actors, with this balance shifting over time towards less sophisticated ones. My reasoning, in sketch, is this:

For a given danger of misuse, the barrier to entry for an actor to utilise this starts very high, but inexorably falls (top left panel, below). Roughly, the risk window is opened by a vanguard risk where some bad actor can access it, and saturates when the danger has proliferated to the point where (virtually) any bad actor can access it.46

Suppose the risk window for every danger ultimately closes, and there is some finite population of such dangers distributed over time (top right).47 Roughly, this suggests cumulative danger first rises, and then declines. This is similar to the danger posed by a maximally sophisticated bad actor over time, with lower sophistication corresponding to both a reduced magnitude of danger, and a skew towards later in time (bottom left – ‘sophisticated’ and ‘unsophisticated’ are illustrations; there aren’t two neat classes, but rather a spectrum). With actors becoming more numerous at lower levels of sophistication, this suggests the risk share of total danger shifts from more sophisticated to less sophisticated actors over time (bottom right – again making an arbitrary cut in the hypothesised ‘sophistication spectrum’).
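The reasoning in this subsection can be illustrated with a toy model. It is a rough sketch and not the author's own model. Every number in it is a made-up assumption chosen only for illustration: the two actor classes, their head counts, the danger each actor poses, the access lags, the number of dangers and the length of each risk window. Danger `i` is assumed to emerge at time step `i`, to become usable by a class after that class's lag, and to close a fixed number of steps after emerging.

```python
def open_dangers(t: int, lag: int, n_dangers: int, window: int) -> int:
    """Count dangers usable at time t by a class with the given access lag.

    Danger i emerges at time i, becomes usable by the class at i + lag,
    and its risk window closes at i + window.
    """
    return sum(1 for i in range(n_dangers) if i + lag <= t < i + window)


# Hypothetical classes: (number of actors, danger per actor per open danger, access lag).
CLASSES = {
    "sophisticated": (5, 50.0, 0),      # few actors, each very dangerous, early access
    "unsophisticated": (500, 1.0, 6),   # many actors, each less dangerous, late access
}


def risk_by_class(t: int, n_dangers: int = 20, window: int = 10) -> dict:
    """Aggregate danger from each class at time t."""
    return {
        name: count * per_actor * open_dangers(t, lag, n_dangers, window)
        for name, (count, per_actor, lag) in CLASSES.items()
    }


def total_risk(t: int) -> float:
    return sum(risk_by_class(t).values())


def unsophisticated_share(t: int) -> float:
    """Fraction of total danger at time t attributable to unsophisticated actors."""
    risk = risk_by_class(t)
    total = sum(risk.values())
    return risk["unsophisticated"] / total if total else 0.0


if __name__ == "__main__":
    for t in range(0, 29, 4):
        print(t, total_risk(t), round(unsophisticated_share(t), 2))
```

With these illustrative numbers, total danger rises and then declines as the finite stock of dangers opens and closes, and the unsophisticated share starts at zero and ends at about two thirds. The qualitative pattern, not the particular values, is the point: changing the assumed counts, lags or window length changes the numbers but the share still drifts towards the more numerous, less sophisticated actors as long as they eventually gain access.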

Crowdedness, convergence, and the current portfolio

If we can distinguish GCBRs from ‘biological risks’ in general, ‘longtermist’ views would recommend greater emphasis be placed on reducing GCBRs in particular. Nonetheless, three factors align current approaches in biosecurity to GCBR mitigation efforts:48

- Current views imply some longtermist interest: Even though ‘conventional’ views would not place such a heavy weight on protecting the long-term future, they would tend not to wholly discount it. Insofar as they don’t, they value work that reduces these risks.

- GCBRs threaten near-term interests too: Events that threaten to derail civilisation also threaten vast amounts of death and misery. Even if one discounts the former, the latter remains a powerful motivation.49

- Interventions tend to be ‘dual purpose’ between ‘GCBRs’ and ‘non-GCBRs’: disease surveillance can detect both large and small outbreaks, counter-proliferation efforts can stop both higher and lower consequence acts of deliberate use, and so on.

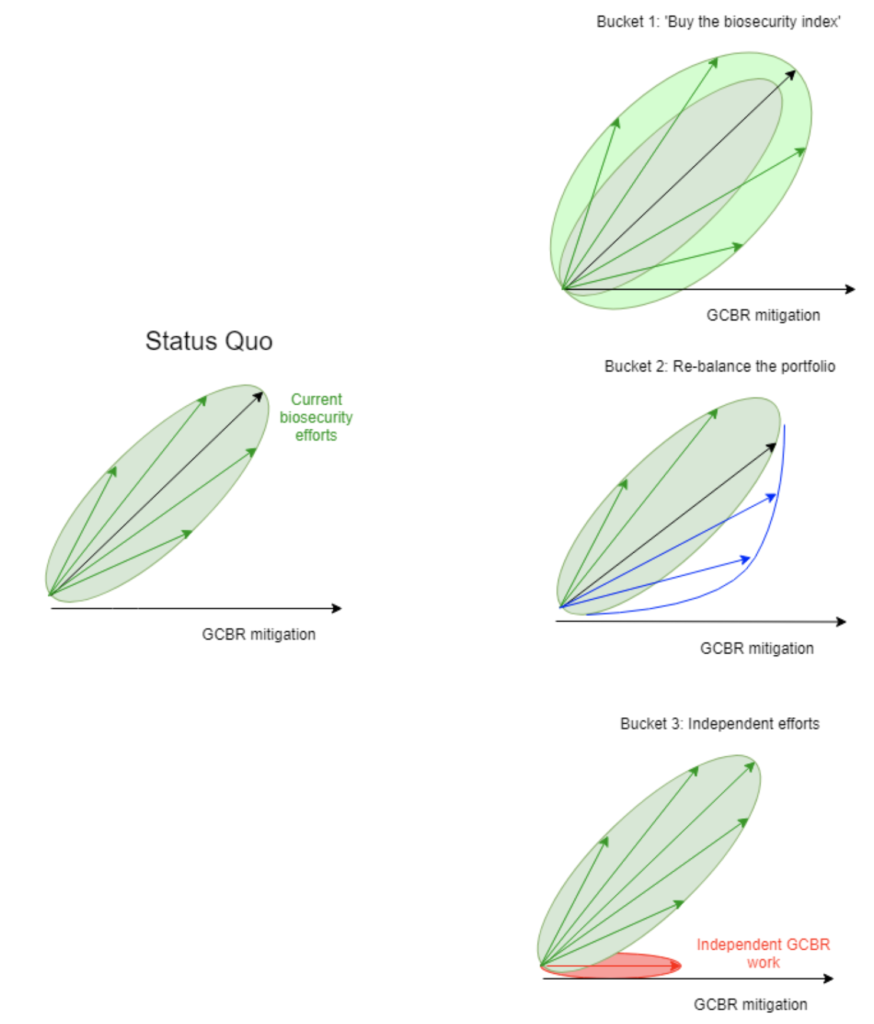

This broad convergence, although welcome, is not complete. The current portfolio of work (set mainly by the lights of ‘conventional’ views) would not be expected to be perfectly allocated by the lights of a longtermist view (see above). One could imagine these efforts as a collection of vectors, their length corresponding to the investment they currently receive, and of varying alignment with the cause of GCBR mitigation, the envelope of these having a major axis positively (but not perfectly) correlated with the ‘GCBR mitigation’ axis:

Approaches to intervene given this pre-existing portfolio can be split into three broad buckets. The first bucket simply channels energy into this portfolio without targeting – ‘buying the index’ of conventional biosecurity, thus generating more beneficial spillover into GCBR mitigation. The second bucket aims to complement the portfolio of biosecurity effort to better target GCBRs: assisting work particularly important to GCBRs, adapting existing efforts to have greater ‘GCBR relevance’, and perhaps advocacy within the biosecurity community to place greater emphasis on GCBRs when making decisions of allocation. The third bucket is pursuing GCBR-reducing work which is fairly independent of (and has little overlap with) efforts of the existing biosecurity community.

I would prioritise the latter two buckets over the first: I think it is possible to identify which areas are most important to GCBRs and so for directed effort to ‘beat the index’.50 My impression is the second bucket should be prioritised over the third: although GCBRs have some knotty macro-strategic questions to disentangle,51 the area is less pre-paradigmatic than AI risk, and augmenting and leveraging existing effort likely harbours a lot of value (as well as a wide field where ‘GCBR-focused’ and ‘conventional’ efforts can mutually benefit).52 Of course, much depends on how easy interventions in the buckets are. If it is much lower cost to buy the biosecurity index because of very large interest in conventional biosecurity subfields, the approach may become competitive.

There is substantial overlap between ‘bio’ and other problem areas, such as global health (e.g. the Global Health Security Agenda), factory farming (e.g. ‘One Health’ initiatives), or AI (e.g. due to analogous governance challenges between both). Although this suggests useful prospects for collaboration, I would hesitate to recommend ‘bio’ as a good ‘hedge’ for uncertainty between problem areas. The more indirect path to impact makes bio unlikely to be the best option by the lights of other problem areas (e.g. although I hope bio can provide some service to AI governance, one would be surprised if work on bio made greater contributions to AI governance than working on AI governance itself), and so further deliberation over one’s uncertainty (and then committing to one’s leading option) will tend to have a greater impact than a mixed strategy.53

How to help

General remarks

What is the comparative advantage of Effective Altruists in this area?

The main advantage of Effective Altruists entering this area appears to be value-alignment – in other words, appreciating the great importance of the long-run future, and being able to prioritise by these lights. This pushes towards people seeking roles where they influence prioritisation and the strategic direction of relevant communities, rather than doing particular object level work: an ‘EA vaccinologist’ (for example) is reasonably replaceable by another competent vaccinologist; an ‘EA deciding science budget allocation’ much less so.54

Desirable personal characteristics

There are some characteristics which make one particularly well suited to work on GCBRs.

- Discretion: Biosecurity in general (and GCBRs in particular) is a delicate area – one where mistakes are easy to make yet hard to rectify. The ideal norm is basically the opposite of ‘move fast and break things’, and caution and discretion are essential. To illustrate:

- First, GCBRs are an area of substantial information hazard, as a substantial fraction of risk arises from scenarios of deliberate misuse. As such, certain information ‘getting into the wrong hands’ could prove dangerous. This not only includes particular ‘dangerous recipes’, but also general heuristics or principles which could be used by a bad actor to improve their efforts: the historical trend of surprising incompetence from those attempting to use disease as a weapon is one I am eager to see continue.55 It is important to recognise when information could be hazardous; to judge impartially the risks and benefits of wider disclosure (notwithstanding personal interest in e.g. ‘publishing interesting papers’ or ‘being known to have cool ideas’); and to practise caution in decision-making (and not take these decisions unilaterally).56

- Second, the area tends to be politically sensitive, given it intersects many areas of well-established interests (e.g. state diplomacy, regulation of science and industry, security policy). One typically needs to build coalitions of support among these, as one can seldom ‘implement yourself’, and mature conflicts can leave pitfalls that are hard to spot without a lot of tacit knowledge.

- Third, this also applies to EA’s entry into biosecurity itself. History suggests integration between a pre-existing expert community and an ‘outsider’ group with a new interest does not always go well. This has gone much better in the case of biorisk so far, but hard-won progress can be much more easily reversed by inapt words or ill-chosen deeds.

- Focus: If one believes the existing ‘humanity-wide portfolio’ of effort underweights GCBRs, it follows much of the work that best mitigates GCBRs may be somewhat different to typical work in biosafety, public health, and other related areas. A key ability is to be able to prioritise by this criterion, and not get side-tracked into laudable work which is less important.

- Domain knowledge and relevant credentials: Prior advanced understanding and/or formal qualifications (e.g. a PhD in a bioscience field) are a considerable asset, for three reasons. First, for many careers relevant to GCBRs, advanced credentials (e.g. PhD, MD, JD) are essential or highly desirable. Second, close understanding of relevant fields seems important for doing good work: much of the work in GCBRs will be concrete, and even more abstract approaches will likely rely more on close understanding rather than flashes of ‘pure’ conceptual insight. Third, (non-pure) time discounting favours those who already have relevant knowledge and credentials, rather than those several years away from getting them.

- US Citizenship: The great bulk of biosecurity activity is focused in the United States. For serious involvement with some major stakeholders (e.g. the US biodefense community), US citizenship is effectively a prerequisite. For many others, it remains a considerable advantage.

Work directly now, or build capital for later?

There are two broad families of approach to work on GCBRs, which we might call explicit versus implicit. Explicit approaches involve working on GCBRs expressly. Implicit (indirect) approaches involve pursuing a more ‘conventional’ career path in an area relevant to GCBRs, both to do work that has GCBR-relevance (even if that is not the primary objective of the work) and to bring the resulting influence and career capital to bear on the problem later.57 Which option is more attractive is sensitive to the difficult topics discussed above (and other things besides).

Reasonable people differ on the ideal balance of individuals pursuing explicit versus implicit approaches (compare above on degree of convergence between GCBR mitigation and conventional biosecurity work): perhaps the strongest argument for the former is that such work can better uncover considerations that inform subsequent effort (e.g. if ‘technical fixes’ to GCBRs look unattractive compared to ‘political fixes’, this changes which areas the community should focus on); perhaps the strongest argument for the latter is that there are very large amounts of financial and human capital distributed nearby to GCBRs, so efforts to better target this portfolio are very highly leveraged.

That said, this discussion is somewhat overdetermined at present by two considerations. The first is that both appear currently undersupplied with human capital, regardless of one’s view of what the ideal balance between them should be. The second is that there are few immediate opportunities for people to work directly on GCBRs (although that will hopefully change in a few years, see below), and so even for those inclined towards direct work, indirect approaches may be the best ‘holding pattern’ at present.

Tentative career advice

This section offers some tentative suggestions of what to do to contribute to this problem. It is hard to overstate how uncertain and non-resilient these recommendations are: I recommend that those considering a career in this area heavily supplement this with their own research (and with talking to others) before making big decisions.

1. What to study at university

There’s a variety of GCBR-relevant subjects that can be studied, and the backgrounds of people working in this space are highly diverse.58 One challenge is that GCBRs interface fuzzily with a number of areas, many of which themselves are interdisciplinary.

These subjects can be roughly divided into two broad categories: technical fields and policy fields. Most careers require some knowledge of both. Of the two, it is probably better to ‘pick up’ technical knowledge first: this seems generally harder to pick up later in one’s career than most non-technical subjects, and career trajectories of those with a technical background moving towards policy are much more common than the opposite.

Technical fields

Synthetic biology (roughly stipulated as ‘bioengineering that works’) is a key engine of biological capabilities, and so also one that drives risks and opportunities relevant to GCBRs. Synthetic biology is broad and nebulous, but it can be approached from the more mechanistic (e.g. molecular biology), computational (e.g. bioinformatics), or integrative (e.g. systems biology) aspects of biological science.

To ‘become a synthetic biologist’ a typical route is a biological sciences or chemistry undergrad, with an emphasis on one or more of these sub-fields alongside laboratory research experience, followed by graduate training in a relevant lab. iGEM is another valuable opportunity if one’s university participates. Other subfields in biology also provide relevant background and experience, in proportion to their proximity to synthetic biology (e.g. biophysics is generally better than histology).

Another approach, particularly relevant for more macrostrategy-type research, is to study areas of biology with a more abstract bent (e.g. mathematical and theoretical biology, evolutionary biology, ecology). They tend to be populated by a mix of mathematically inclined biologists and biologically inclined mathematicians (or computer scientists and others from ‘math-heavy’ areas).

A further possibility is scientific training in an area whose subject-matter will likely be relevant to specific plausible GCBRs (examples might be virology or microbiology for certain infectious agents, immunology, pharmacology and vaccinology for countermeasures). Although a broad portfolio of individuals with domain-specific scientific expertise would be highly desirable in a mature GCBR ecosystem, its current small size disfavours lots of subspecialisation, especially with the possibility that our understanding of the risk landscape (and thus which specialties are most relevant) may change.

If there are clear new technologies that we will need to develop to mitigate GCBRs, it’s possible that you could also have a significant impact as a generalist engineer or tech entrepreneur. This could mean that general training in quantitative subjects, in particular engineering, would be helpful.

Policy fields

Unlike technical fields, policy fields are accessible to those with backgrounds in the humanities or social sciences as well as ‘harder’ science subjects.59 They therefore tend to be better routes than technical fields for those who have already completed an undergraduate degree in the humanities or social sciences and are looking to move into this area (but people with technical backgrounds are often highly desired for government jobs).

The most relevant policy subjects go under the labels of ‘health security’, ‘biosecurity’, or ‘biodefense’.60 The principal emphasis of these areas is the protection of people and the environment from biological threats, giving them the greatest ‘GCBR relevance’. Focused programmes exist, such as George Mason University’s Biodefense programmes (MS, PhD). Academic centres in this area, even if they do not teach, may take research interns or PhD students in related disciplines (e.g. Johns Hopkins Centre for Health Security, Georgetown Centre for Global Science and Security). The ELBI fellowship is also a good opportunity (albeit generally for mid or later-career people) to get further involved in this arena.

This area is often approached in the context of other (inter-)disciplines. Security studies/IR ‘covers’ aspects of biodefense, with (chemical and) biological weapon non-proliferation the centre of their overlap. Science and technology studies (STS), with its interest in socially responsible science governance, has some common ground with biosecurity (dual use research of concern is perhaps the centre of this shared territory). Public health is arguably a superset of health security (sometimes called health protection), and epidemiology a closely related practice. Public policy is another relevant superset, although fairly far-removed in virtue of its generality.

1. ‘Explicit’ work on GCBRs

There are only a few centres which work on GCBRs explicitly. To my knowledge, these are the main ones:

- The Centre for Health Security (CHS)

- The Nuclear Threat Initiative (NTI)

- Centre for the Study of Existential Risk (CSER)

As above, this does not mean these are the only places which contribute to reducing GCBRs: many efforts by other stakeholders, even if not labelled as (or primarily directed towards) GCBR reduction, nonetheless do so.

It is both hoped and expected that the field of ‘explicit’ GCBR-reduction work will grow dramatically over the next few years, and at maturity be capable of absorbing dozens of suitable people. At the present time, however, prospects for direct work are somewhat limited: forthcoming positions are likely to be rare and highly competitive – the same applies to internships and similar ‘limited term’ roles. More contingent roles (e.g. contracting work, self-contained projects) may be possible at some of these, but this work has features many will find unattractive (e.g. uncertainty, remote work, no clear career progression, little career capital).

2. Implicit approaches

Implicit approaches involve working at a major stakeholder in a nearby space, in the hopes of enhancing their efforts towards GCBR reduction and cultivating relevant career capital. I sketch these below, in highly approximate order of importance:

United States Government

The US government represents one of the largest accessible stakeholders in fields proximate to GCBRs. Positions are competitive, and many roles in a government career are unlikely to be solely focused on matters directly relevant to GCBRs. Individuals taking this path should not consider themselves siloed to a particular agency: career capital is transferable between agencies (and experience in multiple agencies is often desirable). Work with certain government contractors is one route to a position at some of these government agencies.

Relevant agencies include:

- Department of Defense (DoD)

- Defense Advanced Research Projects Agency (DARPA)

- Defense Threat Reduction Agency (DTRA)

- Office of the Secretary of Defense

- Offices that focus on oversight and implementation of the Cooperative Threat Reduction Program (Counter WMD Policy & Nuclear, Chem Bio Defense)

- Office of Net Assessment (including Health Affairs)

- State Department

- Bureau of International Security and Nonproliferation

- Biosecurity Engagement Program (BEP)

- Department of Health and Human Services (HHS)

- Centers for Disease Control and Prevention (CDC)

- Office of the Assistant Secretary for Preparedness and Response (ASPR)

- Biomedical Advanced Research and Development Authority (BARDA)

- Office of Global Affairs

- Department of Homeland Security

- Federal Bureau of Investigation, Weapons of Mass Destruction Directorate

- U.S. Agency for International Development, Bureau of Global Health, Global Health Security and Development Unit

- The US intelligence community (broadly)

- Intelligence Advanced Research Projects Activity (IARPA)

Post-graduate qualifications (PhD, MD, MA, or engineering degrees) are often required. Good steps to move into these careers are the Presidential Management Fellowship, the AAAS Science and Technology Policy Fellowship, Mirzayan Fellowship, and the Epidemic Intelligence Service fellowship (for Public Health/Epidemiology).

Scientific community (esp. synthetic biology community)

It would be desirable for those with positions of prominence in academic scientific research or leading biotech start-ups, particularly in synthetic biology, to take the risk of GCBRs seriously. Professional experience in this area also lends legitimacy when interacting with these communities. A further dividend is this area is a natural fit for those wanting to work directly on technical contributions and counter-measures.61

International organisations

The three leading candidates are the UN Office for Disarmament Affairs (UNODA), the World Health Organisation (WHO), and the World Organization for Animal Health (OIE).

WHO positions tend to be entered into by those who spent their career at another relevant organisation. For more junior roles across the UN system, there is a programme for early-career professionals called the Junior Professional Officer (JPO) Programme. Both sorts of positions are extraordinarily competitive. A further challenge is the limited number of positions orientated towards GCBRs specifically: as mentioned before, the Biological Weapons Convention’s Implementation Support Unit (ISU) comprises three people.

Academia/Civil society

There are a relatively small number of academic centres working on related areas, as well as a similarly small diaspora of academics working independently on similar topics (some listed above). Additional work in these areas would be desirable.62

Relevant civil society groups are thin on the ground, but Chatham House (International Security Department), and the National Academies of Sciences, Engineering, and Medicine (NASEM) are two examples.

(See also this guide on careers in think tanks).

Other nation states

Parallel roles to those mentioned for the United States in security, intelligence, and science governance in other nation states likely have high value (albeit probably less so than either the US or international organisations). My understanding of these is limited (some pointers on China are provided here).

Public Health/Medicine

Public health and medicine are natural avenues from the perspective of disease control and prevention, as well as the treatment of infectious disease, and medical and public health backgrounds are common in senior decision makers in relevant fields. That said, training in these areas is time-inefficient, and seniority in these fields may not be the most valuable career capital to have compared to those above (cf. medical careers).

3. Speculative possibilities

Some further (even) more speculative routes to impact are worth noting:

Grant-making

The great bulk of grants to reduce GCBRs (explicitly) are made by Open Philanthropy (under the heading of ‘Biosecurity and Pandemic Preparedness’).63 Compared to other potential funders loosely in the ‘EA community’ interested in GCBRs, Open Phil has two considerable advantages: i) a much larger pool of available funding; ii) staff dedicated to finding opportunities in this area. Working at Open Phil, even if one’s work would not relate to GCBRs, may still be a strong candidate from a solely GCBR perspective (i.e. ignoring benefits to other causes).64

Despite these strengths, Open Phil may not be able to fill all available niches of an ideal funding ecosystem, as Carl Shulman notes in his essay on donor lotteries.65 Yet even if other funding sources emerge which can fill these niches, ‘GCBR grantmaking capacity’ remains in short supply. People skilled at this could have a considerable impact (either at Open Phil or elsewhere), but I do not know how such skill can be recognised or developed. See 80,000 Hours’ career review on foundation grantmaking for a general overview.

Operations and management roles

One constraint on expanding the number of people working directly on GCBRs is limited operations and management capacity. As in the wider EA community, people with these skills remain in short supply (although this seems to be improving).66

A related area of need is roughly termed ‘research management’, comprising an overlap between management and operations talent and in-depth knowledge of a particular problem area. These types of roles will be increasingly important as the area grows.

Public facing advocacy

It is possible that public advocacy is a helpful lever for mitigating GCBRs, although it is also possible that it is counter-productive. In the former case, backgrounds in politics, advocacy (perhaps analogous to nuclear disarmament campaigns), or journalism may prove valuable.67 Per the previous discussion about information continence, broad coordination with other EAs working on existential risk topics is critical.

Engineering and Entrepreneurship

Many ways of reducing biorisk will involve the development of new technologies. This means there may also be opportunities to work as an engineer or tech entrepreneur. Following one of these paths could mean building expertise through working as an engineer at a “hard-tech” company or working at a startup, rather than building academic biological expertise at university. Though if you do take this route, make sure the project you’re helping isn’t advancing biotech capabilities that could make the problem worse.

Other things

Relevant knowledge

GCBRs are likely a cross-disciplinary problem, and although it is futile to attempt to become an ‘expert at everything’, basic knowledge of relevant fields outside one’s area of expertise is key. In practical terms, this means those approaching GCBRs from a policy angle should acquaint themselves with the relevant basic science (particularly molecular and cell biology), and those with a technical background should do the same with the relevant policy and governance.

Exercise care with original research

Although reading around the subject is worthwhile, independent original research on GCBRs should be done with care. The GCBR risk landscape has a high prevalence of potentially hazardous information, and in some cases the best approach will be prophylaxis: to avoid certain research directions which are likely to uncover these hazards. Some areas look more robustly positive, typically due to their defense-bias: better attribution techniques, technical and policy work to accelerate countermeasure development and deployment, and more effective biosurveillance would be examples of this. In contrast, ‘red teaming’ and exploring the most dangerous genetic modifications that could be made to a given pathogen are two leading examples of research plausibly better not done at all, and certainly better not done publicly.

Decisions here are complicated, and likely to be better made by the consensus in the GCBR community, rather than amateurs working outside of it. Unleashing lots of brainpower on poorly-directed exploration of the risk landscape may do more harm than good. A list of self-contained projects and research topics suitable for ‘external’ researchers is in development: those interested are encouraged to get in touch.

Want to work on reducing global catastrophic biorisks? We want to help.

We’ve helped dozens of people formulate their plans, and put them in touch with academic mentors. If you want to work on this area, apply for our free one-on-one advising service.

Find opportunities on our job board

Our job board features opportunities in biosecurity and pandemic preparedness:

Learn more

- All 80,000 Hours’ content on reducing risks of catastrophic pandemics

- Greg’s ultra-rough reading list.

- Chris Bakerlee’s reading list.

- Good online sources to keep abreast of developments in the broad field (from a primarily US perspective) are the GMU Pandora report and the CHS Health Security Headlines mailing list.

- The Precipice: Existential Risk and the Future of Humanity by Toby Ord — see Chapters 3 and 5

- Discussion of how GCBRs should be defined from the Centre for Health Security

- You can find a discussion of GCBRs in chapters 14 and 20 of Global Catastrophic Risks edited by Bostrom and Cirkovic (2007)

- Think about applying to work with one of the experts on this list.

Other relevant 80,000 Hours articles

- Why despite global progress, humanity is probably facing its most dangerous time ever

- Introducing the long-term value thesis

Notes and references

- Different attempts at a definition of GC(B)Rs point in the same general direction:

[GCRs are a] risk that might have the potential to inflict serious damage to human well-being on a global scale.

[W]e use the term “global catastrophic risks” to refer to risks that could be globally destabilising enough to permanently worsen humanity’s future or lead to human extinction.

The Johns Hopkins Center for Health Security’s working definition of global catastrophic biological risks (GCBRs): those events in which biological agents—whether naturally emerging or reemerging, deliberately created and released, or laboratory engineered and escaped—could lead to sudden, extraordinary, widespread disaster beyond the collective capability of national and international governments and the private sector to control. If unchecked, GCBRs would lead to great suffering, loss of life, and sustained damage to national governments, international relationships, economies, societal stability, or global security.↩

- For more on longtermism, see other 80,000 Hours work here.↩

- Although the modelling (by KPMG and RAND Europe) was only directed to illustrate a possible worst case scenario, a plausible ‘worst case’ from AMR is less severe.

- The RAND scenario of all pathogens achieving 100% resistance to all antibiotics in 15 years is implausible – many organisms show persistent susceptibility to antimicrobials used against them for decades.

- The KPMG assumption of a 40% increase in resistance also seems overly pessimistic. Further, the assumption that resistance would lead to doubled transmission seems unlikely.

- Incidence of infection is held constant, whilst most infectious disease incidence is on a declining trend, little of which can be attributed to antimicrobial use.

- Mechanisms of antimicrobial resistance generally incur some fitness cost to the pathogen.

- No attempts at mitigation or response were modelled (e.g. increasing R&D into antimicrobials as resistance rates climb).

- E.g. Saskia Popescu has written a paper on “The existential threat of antimicrobial resistance”, illustrating that pervasive antimicrobial resistance could compound the danger of an influenza outbreak (typically, large proportions of influenza deaths are due to secondary bacterial infection).

One explanation of this apparent disagreement is simply that Popescu’s meaning of ‘existential threat’ is not the same as (longtermist-style) existential risk. However, I suspect she (and I am confident others) would disagree with my ‘ruling out’ AMR as a plausible GCBR.↩

- E.g. The Harvard Global Health Institute report on Global Monitoring of Disease Outbreak Preparedness:

Few natural hazards threaten more loss of life, economic disruption, and social disorder than serious infectious disease outbreaks. A pandemic of influenza or similarly transmissible disease could infect billions, kill millions, and knock trillions of dollars off global gross domestic product. Even a more contained epidemic could kill millions and cost tens or hundreds of billions of dollars. Yet compared to the resources devoted to mitigating other global risks such as terrorism, climate change, or war, the global community invests relatively little to prevent and prepare for infectious disease outbreaks. The typical pattern of response can be characterised as a cycle of panic and neglect: a rushed deployment of considerable resources when an outbreak occurs, followed by diminishing interest and investment as memories of the outbreak fade. The consequent underinvestment in preparedness, and over reliance on reactive responses is enormously costly in terms of both lives and dollars, and aggravates global risk.

The Blue Ribbon Study Panel on Biodefense’s 2014 report (albeit in a US context):

The biological threat carries with it the possibility of millions of fatalities and billions of dollars in economic losses. The federal government has acknowledged the seriousness of this threat and provided billions in funding for a wide spectrum of activities across many departments and agencies to meet it. These efforts demonstrate recognition of the problem and a distributed attempt to find solutions. Still, the Nation does not afford the biological threat the same level of attention as it does other threats: There is no centralised leader for biodefense. There is no comprehensive national strategic plan for biodefense. There is no all-inclusive dedicated budget for biodefense.

[…]

The biological threat has not abated. At some point, we will likely be attacked with a biological weapon, and will certainly be subjected to deadly naturally occurring infectious diseases and accidental exposures, for which our response will likely be insufficient. There are two reasons for this: 1) lack of appreciation of the extent, severity, and reality of the biological threat; and 2) lack of political will. These conditions have reinforced one another.↩

- The roots of this scepticism are hard to disentangle (owed partly to GCBR as a term of art being inaugurated in ~2017, and also to uncertainty about how ‘big’ a risk needs to be to threaten global catastrophe). I think it is best sketched as sceptical impressions of the motivating reasons I offer here, i.e.:

* Expert community alarm can be highly error prone (and at risk of self-serving conflict of interest: “Well of course people who make careers out of addressing the risk in question are not going to say it is no big deal”.)

* The track record of outbreaks should be taken as reassuring, especially for the likelihood of an event even worse than something like Black Death or 1918 influenza. (“These are extremely rare events, and their risk has credibly declined due to advances in (e.g.) modern medicine – if we extrapolate out the right tail even further we should deem the risk as incredibly remote”).

* Scepticism of ‘novel’ risk generators (“The risk of (e.g.) bioterrorism is greatly overhyped: in a given year it kills many fewer people (i.e. ~0) than virtually any infectious disease – and orders of magnitude less than the annual flu season. Even apart from the track record, there are lots of object-level reasons why ‘bioterrorists release a superbug that kills millions’ is much easier said than done.”)↩

- Luke Muehlhauser has compiled a list of the worst historical events (roughly defined as killing 1% or more of the human population over 30 years). Of the 14 listed, 3 were biological catastrophes (Plague of Justinian, Black Death, 1918 Influenza):

- The precise death toll of the Justinian plague, common to all instances of ‘historical epidemiology’, is very hard to establish – note for example this recent study suggesting a much lower death toll. Luke Muehlhauser ably discusses the issue here: I might lean towards somewhat higher estimates given the circumstantial evidence for an Asian origin of this plague (and thus possible impact on Asian civilisations in addition to Byzantium), but with such wide ranges of uncertainty, quibbles over point estimates matter little.↩

- It may have contributed to subsequent climate changes (‘Little Ice Age’).↩

- Although this is uncertain. Thompson et al (2019) suggest greater air travel could be protective, the mechanism being that greater travel rates and population mixing allow wider spread of ‘pre-pandemic’ pathogen strains, and thus build up cross-immunity in the world population, giving the global population greater protection against the subsequent pandemic strain.↩

- One can motivate this by a mix of qualitative and quantitative metrics. On the former, one can talk of recent big biotechnological breakthroughs (CRISPR-Cas9 genome editing, synthetic bacterial cells, the Human Genome Project, etc). Quantitatively, metrics of sequencing costs or publications show accelerating trends.↩

- Although there’s wide agreement on the direction of this effect, the magnitude is less clear. There (thankfully) remain formidable operational challenges beyond the ‘in principle’ science to perform a biological weapon attack, and historically many state and non-state biological weapon programmes stumbled at these hurdles (Ouagrham-Gormley 2014). Biotechnological advances probably have a lesser (but non-zero) effect in reducing these challenges.↩

- The interpretation of these gain-of-function experiments is complicated by the fact that the resulting strains, although derived from highly pathogenic avian influenza and transmissible between mammals, had relatively ineffective mammalian transmission and lower pathogenicity.↩

- Drew Endy: “Most of biotechnology has yet to be imagined, let alone made true.” Also see Hecht et al. (2018) “Are natural proteins special? Can we do that?”↩

- Often selection pressure will ensure ‘good enough’ performance, but with little incremental pressure between ‘good enough’ and catalytic perfection. Perhaps a common example is anaplerotic reactions, which replenish metabolic intermediates, where the maximum necessary rate is limited by other enzymes ‘up’ or ‘down’ stream, or by flux through the pathway ‘capping out’ in normal organism function.↩

- These are essentially a hundred times larger than the quoted annual risks. The exact conversion, Century risk = 1 – (1 – Annual Risk)^100, corrects for the fact that an event in any given year is conditional on none having occurred in earlier years, but at these small values of annual risk the correction only affects the 5th significant digit.↩
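
As a rough illustration of the conversion in the note above, the following Python sketch computes the exact century risk and compares it with the naive "hundred times the annual risk" figure. The annual risk values are hypothetical placeholders, not the estimates quoted in this article, and the function names are chosen for illustration only. The calculation assumes a constant annual risk that is independent from year to year.

```python
import math


def century_risk(annual_risk: float, years: int = 100) -> float:
    """Probability of at least one event in `years` years.

    Implements 1 - (1 - annual_risk) ** years, assuming a constant and
    independent annual risk. log1p/expm1 are used so that very small
    risks do not lose precision to floating-point cancellation.
    """
    return -math.expm1(years * math.log1p(-annual_risk))


def relative_correction(annual_risk: float, years: int = 100) -> float:
    """Fraction by which the exact figure falls short of years * annual_risk."""
    naive = years * annual_risk
    return (naive - century_risk(annual_risk, years)) / naive


# Hypothetical annual risks, for illustration only (not figures from the article).
for p in (1e-8, 1e-6, 1e-4):
    print(f"annual={p:.0e}  naive={100 * p:.6e}  "
          f"exact={century_risk(p):.6e}  "
          f"relative correction={relative_correction(p):.2e}")
```

The relative correction is roughly 49.5 times the annual risk when that risk is small, so the smaller the annual figure, the later the significant digit at which the naive and exact century risks diverge.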

- It is worth noting this transcription is very rough, and the judgements around appropriate credence intervals and distributions used are far from unimpeachable. Readers are welcome to duplicate the model and adjust parameters as they see fit.