Transcript

Rob’s intro [00:00:00]

Hi listeners, this is the 80,000 Hours Podcast, where we have unusually in-depth conversations about the world’s most pressing problems, what you can do to solve them, and how the alligators feel for once. I’m Rob Wiblin, Head of Research at 80,000 Hours.

Today’s episode is with Robert Wright, host of the popular podcast The Wright Show — and it’s being released on both feeds.

As a result, it’s a little different from most of our episodes. At times I’m interviewing Bob, and at times he’s interviewing me.

I was especially interested in talking to Bob, as he’s started a new project he calls ‘The Apocalypse Aversion Project’, and I was keen to see where his thinking aligns with ours at 80,000 Hours.

Bob is interested in creating the necessary conditions for global coordination, and in particular he thinks that might come from enough individuals “transcending the psychology of tribalism”.

This focus might sound a bit odd to some listeners, and I push back on how realistic this approach really is, but it’s definitely worth talking about.

Bob starts by questioning me about effective altruism, and we go on to cover a bunch of other topics, such as:

- Specific risks like climate change and new technologies

- Why Bob thinks widespread mindfulness could have averted the Iraq War

- The pros and cons of society-wide surveillance

- How I got into effective altruism

- And much more

If you’re interested to hear more of Bob’s interviews you can subscribe to The Wright Show anywhere you’re getting this one.

I think our listeners might be particularly interested in a recent episode from May 5 which he did with the psychologist Paul Bloom called Despite Our Best Intentions. It covers the value of defying your tribe, the principal attribution error, and in their words, “the thin line between courage and acting like an a*****e”.

Alright, without further ado, I bring you Bob Wright.

The interview begins [00:01:55]

Rob Wiblin: Today, I’m speaking with Robert Wright. Bob is an American journalist and author who writes about science, history and politics. He’s the author of The Moral Animal: The New Science of Evolutionary Psychology, Nonzero: The Logic of Human Destiny, The Evolution of God, and Why Buddhism Is True, among others. He was also into podcasting long before it was cool, having helped set up Bloggingheads.tv in 2005. Since then he has hosted the popular podcast The Wright Show.

Rob Wiblin: For that reason, today is a slightly unusual episode, and we plan to post it on both of our podcast feeds, which means we’ll aim to both ask and answer questions in roughly equal measure. And I guess we’ll see how that experiment goes. Thanks for coming on the podcast and your own podcast, Bob.

Bob Wright: Yeah. Well, thanks for having me. And you can now thank me for having you, I guess.

Rob Wiblin: [Laughing] Yeah. On my end, I hope we’ll get to chat about your views on how to reduce the risk of global catastrophes, and whether or not I should start meditating.

Effective altruism [00:02:47]

Rob Wiblin: But first off, I guess I’m curious to know how you would define effective altruism, and what you currently like and dislike about what you’ve heard of it?

Bob Wright: Well first of all, let me say that I’m really happy to have the chance to interrogate you about it. Partly because I want to explore synergies between it and my own current obsession, which I call The Apocalypse Aversion Project, and which I write about in my newsletter on Substack, The Nonzero Newsletter. I suspect there are real synergies and maybe some contrast.

Bob Wright: As for how I would define effective altruism… I probably first heard the term from Peter Singer. I actually co-taught a graduate seminar at Princeton with Peter maybe eight or nine years ago. It wasn’t about this. It was about the biological basis of moral intuition. But after that, I remember he was working on his book, which became the book The Most Good You Can Do, about effective altruism.

Bob Wright: And it’s funny. I remember having lunch with him after the book was finished, and he had a different title in mind. I don’t remember what it was, but he had just learned that that title was a phrase that was trademarked by Goodwill Industries. So he probably couldn’t use it. And I remember joking with him that he should say to Goodwill, if you don’t let me use your trademarked phrase, I will call my book Goodwill Is Not Enough.

Bob Wright: So this is my understanding of effective altruism, is that good intentions are not enough. They need to be guided by reason if you want to do good in the world. That’s the most generic way I would put it. I think you may warn me that there’s some danger in associating the phrase too closely with Peter Singer, because Peter has a whole worldview that may not be exactly the same as effective altruism, but would you say that as I’ve articulated it so far, that is effective altruism?

Rob Wiblin: Yeah, I think that’s basically right. I guess I think of effective altruism as the intellectual project of trying to figure out how we can improve the world in the biggest way possible, and then hopefully doing some of that as well. But in a sense it’s a very open-ended sort of question or project, and it’s a somewhat difficult thing to explain because people are used to understanding an ideology in terms of specifically what it does, or some set of specific beliefs in a program.

Rob Wiblin: Whereas this is more of a question, and maybe a general way of thinking about it. But obviously people have lots of different views on exactly how you would do the most good. So yeah, you can’t define it as just a specific set of things like making a lot of money and giving it to charity or just doing evidence-based work to reduce poverty. Those are popular things, but there’s a much wider range of things that people think might be really promising.

Bob Wright: Right. I think many people think of it as a quantitative exercise. In other words, Peter Singer is a utilitarian. That means he thinks that in principle, a good ethical system is one that maximizes human wellbeing or happiness or however you want to define that end product technically. And of course he recognizes that as a practical matter, you can’t go around quantifying that all the time. But still, it is implicitly kind of quantitative. And if you associate that with effective altruism, people saying, well, you should take this dollar that you’re giving to charity and you could increase its return by 1% if you moved it to this charity. And actually I think there are cases in which you kind of do talk like that, right? It’s just not the whole story.

Rob Wiblin: Yeah, exactly. So the question is how to do the most good, and people have latched onto different strategies that they might use in order to try to have more impact. And one of them is taking the approach that you’re alluding to there, which is being really quantitative, being really rigorous about what evidence you demand for telling whether something is having an impact.

Rob Wiblin: And that’s one way that you might be able to get an edge and have more impact than you would otherwise. Really thinking about things carefully and being skeptical about whether stuff is having an impact and trying to evaluate whether you’re actually having an impact. And a lot of people are trying to use that as a way of doing more good, but then there’s other approaches that people take. Probably the one that I’m most excited about is trying to improve the long-term future of humanity, because it’s a neglected issue where potentially if you succeed, the value of the gains is really enormous. Because there could be so many people in the future and their lives could be so much better if we make the right decisions, which is an area where we have a lot of overlap. I’ve been reading about your Apocalypse Aversion Project and there’s a ton of overlap between the kind of things that you’re saying and the kind of things that I’m often saying and that 80,000 Hours is writing about.

80,000 Hours [00:07:27]

Bob Wright: Right. So the idea of 80,000 Hours is that’s how many hours there are in a career. 80,000 Hours, we should say of course, is the name of this organization that you’re the Head of Research of, and it was founded by Will MacAskill. I actually had a conversation with him in the kind of distant past. So the idea is, if you want to devote some of your time to doing good — and you’re young, I mean, I take it that I personally don’t have 80,000 hours of career, we probably agree — although, you never know, I mean, maybe one of your people will invest a lot of money in life extension and—

Rob Wiblin: I think that’s on one of our lists of promising areas that we haven’t looked into quite so much yet.

Bob Wright: My goal is to live until life expectancy is increasing by more than one year per year. But anyway, I think you engage largely with young people, college age and so on. And so you’re talking to people who have many tens of thousands of hours left in their career. And the idea is, I gather, if you want to spend some of them doing good, let’s think about that clearly.

Rob Wiblin: Yeah, basically. So 80,000 hours is 40 hours a week at 50 weeks a year over a 40-year period, so it’s the number of hours that you might expect to work over a career. And given that you’re going to be spending so much time — and a lot of people, especially our readers, want to improve the world with their career — it makes sense to spend a significant fraction of that time figuring out how you could do more good with all of the time that you’re going to be spending working.
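
[Editor's sketch: the arithmetic Rob describes here can be checked in a few lines. The function name `career_hours` and its parameter names are illustrative assumptions; only the three figures (40 hours a week, 50 weeks a year, 40 years) come from the conversation.]

```python
# Back-of-the-envelope career length, using the figures quoted in the conversation.
HOURS_PER_WEEK = 40    # from the transcript
WEEKS_PER_YEAR = 50    # from the transcript
YEARS_IN_CAREER = 40   # from the transcript


def career_hours(hours_per_week=HOURS_PER_WEEK,
                 weeks_per_year=WEEKS_PER_YEAR,
                 years=YEARS_IN_CAREER):
    """Return total working hours over a career (hypothetical helper)."""
    return hours_per_week * weeks_per_year * years


if __name__ == "__main__":
    print(career_hours())  # 40 * 50 * 40 = 80000
```

[Passing a smaller `years` value gives a rough figure for someone partway through a career, which is the situation Bob jokes about above.]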

Rob Wiblin: The broader goal of the organization is to provide research and advice to people in order to allow them to have more impact with their career. A lot of the materials are focused on younger people I guess, like 18 to 30, but at least on our podcast, I know that we have a lot of older listeners who are in the middle of their career and are maybe using the information that we’re providing in the interviews and the problems and potential solutions that we discuss on here to have more impact even in their forties or fifties or sixties.

Bob Wright: Is it possible to say what some of the most common confusions are that you have to dispel? I mean not to put it too condescendingly, but if some college freshman comes up to you and says, here’s what I’m planning to do, what’s a common thing you have to try to dissuade them from doing?

Rob Wiblin: I think maybe the thing that we spend the most time talking about relative to other sources of career advice is getting people to think a lot about the different problems in the world and trying to find a problem that has a really large scale.

Rob Wiblin: So that if you succeed in making a dent on this problem, it benefits a lot of people or animals in a really big way — and it’s plausible you’re going to be able to make progress. And also that it’s not already saturated with lots of people trying to fix it, taking all the low-hanging fruit already, and where there’s potentially a good personal fit for you, where you have relevant skills or relevant passion or you’re in a country where you can actually work on that problem, that sort of thing.

Rob Wiblin: Most career advice, even career advice that’s about how you can potentially improve the world, doesn’t tend to have that focus on finding the right problem that’s a match for you and a match for what the world most needs. And that’s something that we spend quite a lot of time thinking and writing about.

Bob Wright: I guess there are two main dimensions. I mean, they need a problem they’re genuinely interested in, because they need to be motivated, and then they need to have a particular skill set that they can apply.

Bob Wright: And ‘skill set’ may include money, right? I know one thing that Peter Singer has said for some time, and I know is kind of part of the idea, is that you should ask yourself whether your labor is most efficiently spent going to work for an NGO — going and handing out mosquito nets or something — or going to work on Wall Street, becoming ridiculously wealthy, and giving some of that money to people who will go hand out mosquito nets and things. Right? I’ve always thought that was kind of a dicey thing. Because it’s easy to go to Wall Street with good intentions, but… There’s a famous phrase about people who go to Washington DC as public servants, “They came to do good and stayed to do well.” But still, this is one of the fundamental questions you get people to ask themselves, right?

Rob Wiblin: Yeah, exactly. So I guess one way in which we’re maybe a little different than other sources of advice is that we do get people to potentially open their mind and consider the full range of different ways that they might be able to contribute to solving really important pressing problems. Obvious ones would be working at an NGO like I do, and there’s lots of ways to have impact there, but maybe you should… Especially once you have a problem in mind, it might be that a more effective approach to solving 1% or 10% of that problem might be to go into politics, or to go into policy formation. Or perhaps it makes sense to go into research, become an academic, or do R&D in a business.

Rob Wiblin: And yeah, another approach is to just go and try to make a bunch of money and then figure out how you can contribute to solving this problem by giving away substantial sums to whoever might be able to have the biggest impact on it.

Rob Wiblin: In some cases, or for some people, that might be the right way to have a larger impact. For other people, they’re going to be better suited to e.g. politics, or the problem that they want to work on is one where the main bottleneck is something to do with e.g. politics, rather than to do with funding.

Rob Wiblin: So it’s a matter of finding a match between yourself and your skills and your passions, and then a problem that you might go and work on that the world really needs solved. And then also trying to find the approach that really deals with…we call it the main bottleneck. Like the thing that’s holding back progress on fixing this problem in the biggest way.

Long-term threats to the planet [00:12:52]

Bob Wright: I think a lot of people would associate the example I just mentioned, mosquito nets, with effective altruism, because there is this idea that, well, this is this kind of calculation you do. That you can say, well, how do you save more lives? With mosquito nets, or distributing vaccines or something, whatever, and you can do the math. But it sounds like you and I have something in common, which is that we’re concerned about long-term threats to the planet, either truly existential threats — although honestly, most catastrophes would not kill every single human on the planet, but still, you’d rather not learn your lesson the hard way, right? The way World War I and World War II taught us lessons, and the Black Death or whatever.

Bob Wright: And so I’m curious, I mean… Some of the things I know you’ve thought about, I’ve thought about. Like bioweapons, for example. Very challenging problem, given the growing accessibility of the things you need in order to design a bioweapon. There are lots of other things I could list that I worry about, but I’m wondering — you’re in touch with people coming out of college — how many people coming out of college are concerned about things like that?

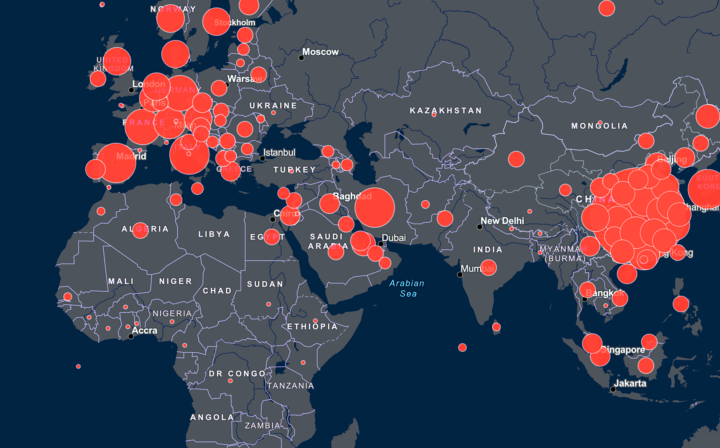

Bob Wright: My experience is that if you say to people you’re worried about the long-term health of the planet, young people go, “Oh yeah, climate change.” And climate change is an important problem, I just don’t think it’s the only one. And yet I think it soaks up an overwhelming majority of the attention, so far as I can tell, among people who think about long-term threats to the planet. Is that your experience?

Rob Wiblin: I spend a lot of my time making the podcast, and we have other people who do one-on-one advising, so maybe they’re more in touch with what young people are thinking today. But my impression is that people have all kinds of different views about what are the most pressing problems, or what they’re most passionate about. I guess within people who are focused on global catastrophes, or preserving the future of humanity, I think climate change is probably the biggest grouping. It is a topic that’s gotten a lot of coverage over the years. It makes a lot of sense. We have a pretty good idea of the nature of the problem and how we would fix it. So it makes sense that it’s one that’s extremely salient to people.

Rob Wiblin: I think people are open to other ideas as well when you point out… Well, let’s say we’re just trying to make civilization more resilient, more robust, make the future go better. Another really significant problem is that the United States and China might have a nuclear war, and then it would just completely derail everything. People are completely open to that. And if they can see a way of working on that, maybe that’s a better fit for their skills. Then they could work on that instead of climate change.

Rob Wiblin: The other issues, I mean, we’ve been on this pandemic thing for many, many years. We made I think 10 hours worth of material on how to prevent a really bad pandemic back in 2017 and 2018. And sometimes you get a little bit of skepticism from people, but we just know from history that there are lots of pandemics, and that’s another way that things could really go off the rails.

Bob Wright: You’ll be getting less skepticism on that one in the next few years, I think, than you did in the previous year probably…

Rob Wiblin: Yeah. So we’re not kind of against any specific problems that people are already interested in working on, but I suppose we want to open their minds and get them to think in a quantitative way. Get them to consider many different options and then try to choose the one where they think they can do the most good, rather than go with the one that’s most salient to them or the one that they heard about earliest, which I think is an easy thing to potentially slip into.

Bob Wright: Yeah. And then of course one thing about climate change is, as you said, we have a pretty clear idea of what to do about it. I mean, not all the questions are answered. For example, do you need an international agreement with teeth that actually sanctions countries that don’t comply, countries that aren’t reducing their carbon emissions? Or will it work to do it in a more normative way with peer group pressure, where some nations say, “Hey we’re doing our part, don’t you want to do your part?”

Bob Wright: And the Paris Accord is kind of a halfway between the two. I mean, it’s on paper, but there are no… It doesn’t really have teeth, but it seems to be having some effect. And it’s a very interesting question. How many of these problems can we solve normatively? But then you get into other problems and things aren’t even that clear, right? I mean, you’ve wrestled with this presumably.

Rob Wiblin: Yeah, exactly. So, I mean, we could spend hours on the podcast… We have spent hours talking about climate change and the various arguments in favor of spending your career working on that, and the various arguments why it might be not as impactful as other things.

Rob Wiblin: The main arguments in favor would be that we have a great understanding of the problem. We know that it’s going to be a serious issue. So we’re quite confident that there’s a large scale. And also we have a pretty good idea about what to do about it. There’s quite a lot of tractable approaches to ameliorating it, so you can have reasonable confidence that you’ve got a good shot.

Rob Wiblin: I guess one option is taking the politics and international treaty route. Maybe the one I perhaps feel most optimistic about in my heart is just trying to drive down the cost of renewable energy. So it’s like solar R&D, and scaling that up, and then research into batteries. Because if a bunch of people just go away and do the science and then figure out a way to make this cheaper than other stuff, it doesn’t require any virtue on the part of other countries or corporations. Because they’ll just implement it in their own self-interest.

Bob Wright: So should I buy a Tesla? I’m not going to, but I mean, I’m serious in the sense that there are people who say, well, if you really do all the math, I mean, let’s look at what it takes to make these batteries. Ultimately this depends heavily on how much you and your area are paying for electricity. But is it your view that it’s just a safe bet that pretty much wherever you are in America, an electric vehicle all told has a lower carbon footprint?

Rob Wiblin: This is very much outside of my area of expertise.

Bob Wright: Oh okay, nevermind. Because I’m not going to buy a Tesla.

Rob Wiblin: I used to be very skeptical of electric cars. There’s more embodied energy in manufacturing them. That was very much true in the past, and I think it probably is somewhat still true today. And obviously if you’re then getting the electricity from coal-fired power stations, then there’s a whole lot of emissions that are hidden from you.

Rob Wiblin: I think that’s gradually changing as less electricity is generated from coal and more from other sources. And these cars are becoming less heavy and less difficult to manufacture. I mean, if I had $30,000 or $40,000 or $50,000 to spend trying to reduce climate change, I wouldn’t spend it on a car. I think I could find a better way to use it. But buying a Tesla or buying solar panels for that matter, it probably does prompt… Some of that money then goes towards research and development to make those kinds of cars and those kinds of batteries cheaper, or the solar panels cheaper. And in the long term, driving down the cost of those products is probably the largest effect that it has and probably makes it… My guess is that it’s a net positive, but we’re really off base for me.

Bob Wright: My next car will probably be at least a hybrid, conceivably an all electric vehicle. Anyway, back to these long-term risks to the planet. What other ones do you… So there’s bioweapons. There’s also the inadvertent release (as may have happened, for all we know, in the case of COVID) — accidental release from a laboratory where they were working with good intentions to understand the nature of the disease and the prospects for future mutations that might increase lethality or transmissibility or something. So there’s that. What are other close-to-existential risks, or at least long-term risks facing the whole planet that you personally prioritize?

Rob Wiblin: So we’ve covered some of the big ones. We’ve got bioweapons as well as natural pandemics. We’ve got climate change, we’ve got a great power war, or a nuclear war of any kind. Then I guess we’ve got risks from just other emerging technologies, of which AI is maybe the most prominent one. So if you had an advanced AI deployed in some really important function, or given a lot of influence, and we haven’t figured out how to make it aligned with our interests, then there’s some risk that it could really go off the rails and do a bunch of damage.

Bob Wright: And make too many paper clips. That’s the most paradigmatic thought experiment, right? Suppose you tell an AI to make as many paperclips as possible. And it—

Rob Wiblin: I think we’ve somewhat moved on from that thought experiment. That one goes back to the late 2000s, before… It probably wasn’t the best branding. I mean, mainstream people who are studying machine learning, trying to figure out how to make AI systems do useful stuff, they regularly find that these systems end up doing stuff that they didn’t intend, and sometimes doing things that they really wouldn’t like if they were scaled up into the real world.

Rob Wiblin: One example is deployed algorithms that recommend things to people that end up trying to manipulate them to potentially have particular views, because that makes them more likely to stay on the given website, which probably wasn’t necessarily intended and the effects weren’t thought through. We can expect machine learning or AI to be able to do a lot more stuff in 20 or 30 years. But even just in the systems that we have now, which are relatively weak, we already see issues with them not doing what we intended and potentially causing damage. I think it’s just a projection of that forward into the future.

Rob Wiblin: AI is one of these emerging technologies, but I expect that over the next century we’ll see other things arising. Whenever humanity does something completely new for the first time — especially when it’s producing something that is able to spread and have a large influence — there’s some risk that it could go wrong. We should probably have people looking out for those possibilities and trying to figure out how to avoid them.

Bob Wright: Yeah. Also, these things are evolving in a context of intense international competition. Let’s say we avoid an actual war between China and the U.S., but each of them are developing AI in a context of intense suspicion about what the other one is doing with it. Or, more troubling still, at least to my mind, they are deciding how to regulate human genetic engineering in a context of fear and suspicion. So somebody comes along and says, well, shouldn’t we put some regulations on what kinds of things parents can try out in creating babies? And somebody else says, well the Chinese are creating these super smart, super strong people who will vanquish us someday, so it has to be no holds barred in terms of what people can do. Or in a way more troubling still, the Pentagon should have this huge budget devoted to creating these super smart superwarriors. This is a real concern to me in a number of areas.

The Apocalypse Aversion Project [00:23:52]

Rob Wiblin: Maybe this is a really good segue to introducing The Apocalypse Aversion Project, which is I guess this new book and maybe broader project that you’re thinking of pursuing over the next few years?

Bob Wright: Yeah, I hope it’ll be a new book. The way that part developed is that I had a newsletter for a while called The Nonzero Newsletter. A few months ago, like everybody else, I decided to create a paid version to help keep it going, and I decided that the paid version would be more clearly focused than the average issue of the newsletter. The Apocalypse Aversion Project is a somewhat tongue-in-cheek title, though not as tongue-in-cheek as I’d like, because I do think there’s a real risk of things going horribly awry. And it’s kind of an extension of my book Nonzero, which had this whole argument about looking at all of human history as an exercise in game theory, and as new technology is coming along, people often deploying it to successfully play non-zero-sum games, sometimes zero-sum games, whatever, but anyway, by the end of the book, I’m arguing that where we are now is on the threshold of global community, you could say.

Bob Wright: My book had charted the growth in the scope and depth of social complexity, from hunter-gatherer village to ancient city state, ancient state, empire, nation state. Increasingly we are close to having what you could call a global social organization, and as has happened in the past, there’s non-zero-sum logic behind moving to a higher level of social organization — in the sense that there are a number of problems that the world faces that can be described as non-zero-sum relationships among nations. Climate change is one. Addressing it is, broadly speaking, in the interest of nations everywhere. Different nations are impacted differently by climate change, but still, the average nation is better off by cooperating on this, and even sacrificing to some extent, so long as other nations agree to sacrifice. You bring the non-zero-sum problem to a win-win solution. All the problems I’ve been describing, I think, are like that.

Bob Wright: A nuclear arms race is like that. You can both save money and reduce the chances of catastrophe by exercising mutual restraint. That’s what an arms agreement is. And I now think we’re going to see… Again, we need to think about whole new kinds of arms races, bioweapons, weapons in space, human genetic engineering is an arms race. AI is an arms race. The idea grows out of my whole Nonzero Project. A distinctive feature of my project — I’ve really become more and more aware of this since I started writing about it in the newsletter, and in fact, in the last issue, it really hit home — my view is that it definitely is important to start thinking about what the solutions to these problems would look like.

Bob Wright: In some cases it’s very challenging, but at the same time, we need to recognize that right now the world’s political system is not amenable by and large to implementing the solutions anyway, and there’s another big thing that has to happen, aside from figuring out how to solve the problems: creating a world where there is less intense competition among nations, less suspicion among them, and also less strife within them. The United States is in no position to agree to anything ambitious on the international front. We just don’t have our act together politically. And to deepen the challenge, one of the big political factions — the ethno-nationalist faction — is very suspicious of this whole international governance thing, international institutions, international agreements.

Bob Wright: So, it seems to me that we have to work to reduce the amount of international strife and the amount of domestic strife, and I think the problems we face there can largely be subsumed under the heading ‘the psychology of tribalism.’ That’s a catchphrase. Some people don’t like the phrase for various reasons, and some of these reasons are good, but people know what I mean. I don’t just mean rage and hatred and violence. Unfortunately, in a way, the problem is subtler than that.

Bob Wright: It gets down to cognitive biases, confirmation bias, and a bias that I think gets too little attention called attribution error, which we can talk about if we have time. But the point is, we are naturally inclined — I would say by natural selection, which I wrote about in my book The Moral Animal — to just have a biased accounting system. People naturally think they contributed more to a successful project than they did. They think they have more valid grievances than the other side. And this plays out at the level of political parties, at the level of nations, and so on. And so, I think we have to tackle what is in some ways a psychological problem, as we tackle the policy problems. And that’s maybe what’s a little distinctive about the focus as I see where I think my project is heading.

Rob Wiblin: Yeah. Among people who are worried about the impact that artificial intelligence might have on the future, I think one of the scenarios that they’re most worried about is that there is a race to deploy really advanced AI systems — either because of military uses or international competition, or commercial competition for that matter. I guess across almost all of these issues, it does seem like it would make a huge difference if countries got along better and felt less competitive pressure, and I guess if people were just in general more coordinated. We talk about this as ‘global coordination’ or ‘international coordination.’

What should we actually do? [00:29:33]

Rob Wiblin: There’s another big class of ways that things could go wrong, which is just outright error. Everyone thinks that something is safe, for example, and then they do it, and it turns out that it’s dangerous, and it has nothing to do with coordination problems. It’s just human frailty, and that we make mistakes sometimes. For example, you can have a situation where everyone agrees that we should have research into some very dangerous virus in order to protect ourselves from it, and we think that the labs are safe and that it can’t escape, but then it turns out that it does, just because humans are error prone. But yeah, the coordination one is a huge and really important class. What do you think you’re going to write in the book about how we ought to tackle that? It’s a problem that’s as old as time, and we’re muddling through at the moment. How can we make things better?

Bob Wright: I think it’s very challenging. What you just said brought to mind the thing about viruses escaping from labs. It’s important to understand what happened with how this pandemic got started. It’s always good to have clarity, but you see two different forces impeding that, I think. You have, on the one hand, the far right in America. Well, I wish it were far right. It actually represents a fair number of people. But they’re saying, “Well, it came from a bioweapons lab.” Even that it was intentionally released. You hear that from Peter Navarro, who was a high-ranking official in the Trump Administration. He thinks there’s a good chance it was intentionally released. When you think about it, it really doesn’t make sense for China to release a biological weapon in their own country.

Rob Wiblin: Bit of a high-risk move.

Bob Wright: Kind of high risk. But anyway, on the one hand, you hear that, and then on the other hand, there are scientists who have been involved in this so-called ‘gain of function’ research — which has the best intentions of learning more about the viruses — who are discouraging looking into the possibility that that’s what happened, that it was an innocent release from a lab. So, I don’t know if that was worth the tangent, but the point is, it’s just an example of how subtle human biases… There’s on the one hand, an ideological bias there, and on the other hand, what you might call a different kind of self-serving bias impeding our view. But the main thing I’m trying to say is I think that, sadly, in a way, those are both subtle examples of the psychology of tribalism.

Bob Wright: They’re both people who are trying to defend the interests of their tribe. And I bring those up because they are such subtle examples, in a way. And I think we have to attack the problem on all fronts, and it calls for a lot of self-reflection. And I have not figured out yet how you… Certain kinds of cognitive biases are getting attention, certain aren’t, but I’m really curious — there’s probably some people you’re associated with who would have good ideas about this — how do you mobilize people?

Bob Wright: How do you get them to recognize the connection between say, on the one hand, just getting people to be a little less reactive on social media, a little more reflective, and saving the world, in the sense of making it at least incrementally less likely that we will have a big bioweapons incident or AI will get out of control? Because the calmer and more rational the discourse on social media, the more likely the world is to handle these problems wisely, because there’ll be less internal strife in nations and there will be less international strife. I’m not, by my nature, a movement organizer — and yet, I think something along the lines of a movement needs to happen here. Again, there are things moving in that direction.

Rob Wiblin: If we could get people to be more thoughtful, more careful in how they acted, more careful in how they thought about things, less reactive, potentially I guess less vengeful, more inclined towards cooperation… It seems like it would put us in a much better position, or you’d be more optimistic about humanity’s prospects, I guess. If I’m applying my kind of mindset where I’m trying to analyze, is this the thing that I would want to spend my career on? My main concern is just that it seems like a really heavy lift. People have been trying to encourage people to have these virtues since the beginning of… I guess since there were written records. People aren’t all bad, and we have made a bunch of progress in civilizing ourselves and finding ways to control our worst instincts.

Rob Wiblin: But I suppose if I was one person considering using my career on this, I’d think, “Do I have a really good angle that’s different, or that I think is really going to move the needle and change how a lot of people think or how a lot of people behave?” I might go into it if I did have an idea for that, for something that would make a difference given that there’s lots of people already talking about this, or it’s a kind of one-end problem. But yeah, it seems like it could be hard. Just saying the things that have been said many times before, I’m not sure how much that is going to change global culture.

Bob Wright: You’re right. People have been saying this forever. And you’re right, we’ve made progress. And yet, I was just reading this book on the origins of World War I. It’s called The Sleepwalkers, and here’s a line from it. It says, “This was a world in which aggressive intentions were always assigned to the opponent and defensive intentions to oneself.” So this is in large part how the war got started. When they mass troops, it’s a threat and maybe we should stage a preemptive strike. When we mass troops, it’s just defense. First of all, what that shows is that failing to correct for a fundamental natural human cognitive bias can get millions of people killed, and also that we actually haven’t made much progress. Look at international affairs today and look at how in the American media, the behavior of say Iran, China, and Russia — probably the three countries most consistently considered adversaries — look at the way their behavior is reported in the American media, as opposed to the way America’s behavior is reported.

Bob Wright: When they talk about American military maneuverings, it’s always defensive. Moreover, there’s always reporting about the political constraints that make it hard for our leaders to behave more charitably on the international front, whereas you generally don’t see that in the foreign reporting. So, you’re right, it’s a heavy lift, but if you’re interested in this category of problems, these quasi-existential threats, well, you’re in for a heavy lift. The whole nature of the thing is… In other words, it’s a kind of intervention that may have a low probability of success, but the magnitude of the success, if you succeed, is so high that it still has what economists call a high expected return. So, that’s the nature of the endeavor, so I’m sticking with it.

Rob Wiblin: It reminds me of this joke idea I read once, I can’t remember where, about how to reduce the risk of nuclear war, which is that before any world leader can get the authorization to use nuclear weapons, they have to get together with all of the other leaders who have the authorization to use nuclear weapons and take MDMA together. In order to generate potentially some kind of sense of belonging and sense of love for one another. Obviously that doesn’t work, but I—

Bob Wright: Well, actually, honestly-

Rob Wiblin: It’s an interesting idea to explore, right?

Bob Wright: I’m not sure it wouldn’t.

Rob Wiblin: I think it might be hard to get—

Bob Wright: But it’s not going to happen too many times.

Rob Wiblin: I know. That’s one we’ll call not a very tractable policy proposal.

Mindfulness [00:36:56]

Rob Wiblin: This is making me think about, if we narrow down the approach, or the problem a little bit, then maybe we’ll be able to get more leverage on this. So, if the project was to get more mindfulness and meditation and reflectiveness among the U.S. foreign policy elite, to me, that sounds like a better project because it’s hard to change any single one person’s personality. It’s a whole bunch of work to convince people to meditate every day, and a lot of people don’t stick with it. So, we want each person who we convince to generate a lot of value in terms of making the world more stable. If you could just get the U.S. president doing it, or other military decision makers, then that seems like it carries a bigger punch.

Bob Wright: Yeah, and I’m an advocate of mindfulness. For example, in the case of social media, if you’re doing mindfulness meditation, trying hard to be in touch with the way your feelings are guiding your thoughts and so on, you’re probably more likely on social media to pause before re-tweeting something just because it makes you feel good, just because it seems to validate your tribe or diminish the other tribe, re-tweeting it without even reading the thing you’re re-tweeting, you’re less likely to do that. You’re less likely to react in anger, and there’s a lot of subtler things that you’re less likely to do. So, I’m an advocate of all that. At the same time, even I wouldn’t want the world’s fate to depend on convincing all the world’s leaders to be mindful. In addition, there’s the fact that in a way, strictly speaking, mindfulness is a neutral tool.

Bob Wright: I think it does tend to make us better people, better citizens. At the same time, you can, in principle, use mindfulness as a cognitive skill to do bad things. I do talk about mindfulness in the newsletter. I wouldn’t want to confine our repertoire to that. And so there are other things I like to emphasize, like cognitive empathy. It’s not feeling-your-pain-type empathy. It’s just understanding, trying to understand your perspective. So, to get back to the World War I case, it would be like working very hard to really understand why this other country is doing what it’s doing.

Bob Wright: There’s actually a recent example, fairly recent, of Russia massing troops on the Ukrainian border. I don’t applaud that. There’s a lot of things Russia has done that I don’t applaud, but it does seem to be the case that there had been a massing of Ukrainian troops on this dividing line where there’s a de facto division, and it’s just good to know. It doesn’t justify it. It’s just good to know, it doesn’t mean they’re planning to attack. Apparently they were sending a signal, like don’t even think about it, and then they withdrew their troops. So, I can imagine, just leave aside whether you’re interested in mindfulness, just programs in trying to convince people of the importance of cognitive empathy. And it can have very self-serving value. It can make you better at negotiating things and trying to make people better at it, I guess.

Rob Wiblin: Let’s imagine that we’re trying to use this approach to, say, stop a really bad pandemic. I guess the scenarios that we’re most worried about are like, perhaps like bioweapons from North Korea, I guess possibly in decades’ time some synthetic biology researchers who really go off the rails and try to make a pandemic that’s going to kill as many people as possible, and then I guess you’ve got escape of something from a research lab, maybe a research lab that’s doing good.

Rob Wiblin: If we could make people more reflective, get people to be more mindful… With North Korea, I guess it’d be great if we get the North Korean elite to do that. A little bit difficult to do, but I suppose in that case, you could argue that getting people in U.S. foreign policy to understand where North Korea is coming from, even if we think they are completely evil, might reduce the risk of a miscalculation that would then cause the North Koreans to retaliate. Seems a bit hard in the case of the synthetic biology researcher gone rogue, because you’d have to reach so many people in order to prevent that risk. I might be inclined to go for a technological solution to that one, because you don’t know who you have to convince to start meditating.

Bob Wright: Yeah, but what I would say there about the connection I see between mindfulness and these various kinds of cognitive interventions is, here’s the way I look at that, any global treaty that is up to the challenge in the realm of bioweapons, synthetic weapons, whatever, is going to infringe on national sovereignty to an extent that we’re not used to. Because so long as any lab anywhere in the world is a rogue lab, is not regulated, is not subjected to a degree of transparency that is necessary to prevent this kind of thing, then we’re in trouble. And Americans often have trouble understanding this. If you want other nations to submit to that degree of transparency, we’re going to have to do the same.

Bob Wright: So, we’re going to have to agree that whether it’s international inspectors, or electronic monitoring equipment that’s accessible to the international community, or whatever, we’re going to have to sacrifice some… In a way, it’s not a sacrifice of sovereignty because when you think of sacrificing sovereignty, you think of losing control over your future. You’re actually gaining control of your future. Gaining one kind of sovereignty by sacrificing another. But in any event, America’s political system right now is nowhere near being ready to sign on to meaningful changes in the calibration of sovereignty of this kind.

Bob Wright: So, what do you need? Well, you need an America less consumed by strife. You need, more specifically, and this is advice I would give to my tribe, the blue tribe in America, is you need to do fewer things that gratuitously antagonize and alienate the red tribe, because the more freaked out they get, the more they’re going to oppose these kinds of policies that we need to keep the world safe. So, this is where it gets back to what I said about thinking that there’s a psychological revolution that’s needed before we can implement all the policies we need. To get back to cognitive empathy, if the blue tribe understands the red tribe better, we will understand what is and is not productive feedback to give them. I’m telling you, the blue tribe spends a ton of time doing counterproductive messaging in this sense. Making Trump supporters feel exactly what they fear, that we look down on them, that we hold them in contempt, that we think they’re stupid.

Rob Wiblin: An interesting one, because I guess sometimes people do that because they have these aggressive emotions, but other times it happens just because people feel like they want to tell the truth, or they’d want to call people out on bad behavior. I guess if you want to clamp down on this, you need to say to people look, it would feel good, and in some sense it might be righteous to say this thing, but think about the actual impact that this is having on discourse and how people feel, and maybe in reality, it’s going to make the world a worse place, even if it’s true, or even if in some sense it’s justified to be frustrated with someone.

Bob Wright: None of us lives our lives going around telling everyone the truth. “My, you have unattractive children.” Nobody says that. And so, there is that, but there’s also… I think, yes, we should understand that it doesn’t always make sense to say something you feel is true, but I think we should also be examining whether the things we think are true actually are true. And this particular context of red/blue America brings me to this — I think underappreciated — cognitive bias, which is attribution error.

Bob Wright: When somebody does something good or bad, do you say to yourself, “Well, yeah, that’s the kind of person they are,” or do you say, “Well, they did that because of extenuating circumstances.” Say you’re in a checkout line and somebody in front of you is rude to the clerk. Do you say, “That guy’s a jerk, what a rude person,” or do you say, “Well, maybe he just got some super bad news about his family or something, and he’s just in a bad mood. He’s stressed out for some reason.”

Bob Wright: The pattern in human cognition is that when we’re talking about friends and allies, or ourselves, and they do something good, we attribute it to the kind of people they are, to their character, their disposition. If they do something bad, we explain it away. “Oh, there’s peer group pressure.” “She didn’t have her nap that day,” whatever. With our enemies and rivals, if they do something bad, we attribute it to the kind of people they are. If they do something good, we say, “Oh, they were just showing off,” or “They had just done some ecstasy or something,” whatever. It doesn’t reflect their true character.

Bob Wright: So in this tribal context, you look at Trump supporters and they do something you think is bad, like they oppose immigration, and you say, “Well, yeah. They’re racist.” Well, they may be. Some are, some aren’t. It depends on how you define racism and a whole lot of other things, but the point is, once you’ve defined somebody as the adversary, you’re naturally inclined to attribute the things they do that you don’t approve of to their character, as opposed to saying, “Well, maybe this is a guy who grew up, got a good union job, and now his son can’t get a good job, and he looks at the local meatpacking plant, and it’s all immigrants doing the work.” It could be that. We don’t know. And so, I think you’re right. You don’t have to tell the truth, but be evaluating your conception of the truth.

Rob Wiblin: Do you know if there’s any research on how to reduce this? Because I agree, if we could get people to have that kind of bias in favor of their own stories… I can’t remember where I heard this, but apparently if you ask people to talk about a time that they wronged someone else or harmed someone else, there’s basically almost always exactly the same story structure. It’ll begin with several days before, all of the mitigating circumstances, and how they affected… But of course, we don’t come up with those stories whenever someone else screws us over.

Bob Wright: That’s exactly right.

Rob Wiblin: And to be fair, I guess we don’t know what the mitigating circumstances are, so it’s easy to assume the worst. I’d be really interested to know if there’s any research on how to get people to do that perspective taking or try to brush things off more.

Bob Wright: You’re right, we don’t know enough about them to know the extenuating circumstances, but if it’s a friend or ally or family member, your mind at least starts doing that exploration of like, well, what could it have been? My daughter was mean on the playground. Did she get a nap that day? Whereas, a kid is mean to your daughter on the playground, it’s like, that kid is trouble. Now as for exercises, I’m not that well-versed. It’s a good question. When you’re talking to the person, when you’re in communication with them, there are exercises. It’s not so hard then, but a lot of the problem comes with people that we wind up not communicating with.

Bob Wright: Here’s a little semi-extraneous thing that I learned in terms of framing conversations with people. I think you mentioned that I started this Bloggingheads.tv thing about 15 years ago, and it was online conversations. What we found is that you could take two bloggers who had been sniping about each other and said only mean things about each other in print, and if you put them in conversation with each other, civilizing instincts kick in. So, little things like that can matter. But I think you’re pointing to the kind of thing I need to get better versed in, and maybe people out there know and can tell me, but what are successful techniques for enhancing cognitive empathy and ways to motivate people to pursue it?

Rob Wiblin: Yeah. I mean, I’m sure there’s ways to do this that are very time consuming or very difficult. But if we want to reach hundreds of millions of people and shift culture, then ideally we want something that’s in people’s own interests. I mean this one definitely can be in your own interests because it’s much nicer to go around thinking that people are mostly nice and not trying to screw you over, rather than constantly imagining the worst. A tip that I’m happy to share is whenever you notice that there are regular ways in which people are rubbing you the wrong way, check if there’s a systematic reason, or a way things are set up such that that would happen, even if what they’re doing is completely reasonable. So for example, I’ve recently been living with a whole bunch of people, and there’s a bunch of housework to do.

Rob Wiblin: And we noticed that there’s a bunch of stuff that wasn’t getting done, basically. A bunch of chores that no one was opting in for. And this was frustrating for people. But then we realized oh, it’s because it’s not on the chore spreadsheet. So people don’t actually get any credit for these ones, because they weren’t, in our mind, classified as the work that you contribute to. And then as soon as you put them on this thing, so that it’s part of the system that works, then that thing gets fixed. You also notice people often rub one another the wrong way in an organization. And, if you think about it, it’s because they have somewhat conflicting goals for the teams. So it’s nothing personal, it’s just that they have different objectives that, to some extent, are in tension.

Bob Wright: Yeah. Well, one finding is I think that, say you take two people from different ethnicities, particularly in a charged context, like Northern Ireland Catholics and Protestants, or something. You bring them together. And some people bring people together in a naive hope that that will automatically build bridges. And it turns out that what you need to do is put them in a non-zero sum situation, put them in some sense on the same team. They could be literally on the same team, on the same athletic team. Or you could just give them a common goal where they both benefit if they achieve the goal together. But structuring things that way can help somewhat resolve some of the more divisive tensions.

Social cohesion [00:50:14]

Rob Wiblin: Yeah. Here’s a question out of left field that actually came from someone on Twitter, when I mentioned that we were going to be speaking. This person points out that a very common thread through your work, especially in Nonzero, is that it would be good if we could find more ways to get societies cooperating. People cooperating. Reducing problematic competition. But they asked, in the light of that, what do you think of the argument that societies have been substantially kept functional by competitive pressures? And without fear of invasion or bankruptcy, both business and government tend to accumulate layer upon layer of rent-seeking and sclerotic rules at great cost? So, in some cases we know there are benefits to competition. Could we potentially have too little of it?

Bob Wright: Yeah. Actually the book had a chapter called “War, what is it good for?” Which is a song that you’re too young to remember. And the point was actually intense competition among nations makes things within the nations more non-zero sum. They all have a higher stake in successful collaboration, and it has led to a lot of improvements of various kinds — technological, organizational, and so on. It can also lead to bad things, like the restriction of civil liberties and so on, but it can lead to cohesion. I was happy to say, I think, whatever good war may have done, it’s now outlived its usefulness, given nuclear weapons and a lot of other things. But the point is a good one. And one of the takeaways for me is that, although I think we need more international governance in the sense of international agreements and institutions, I don’t think we want a global government, with all that connotes.

Bob Wright: A kind of centralized, powerful thing that would, among other things, put an end to the dynamic that’s being described there. It’s a difficult question though, the role that competition plays in internal cohesion. Because, well, I guess here’s the big challenge, it seems to me that the good news is that people find internal cohesion easier when they see an external threat. The reason I say that’s good news is because the planet has a lot of what you could call external threats, which we’ve been describing. Climate change, pandemics, bioweapons, arms races. There are plenty of genuine threats to the planet that could congeal us at the international level. The bad news is that it seems like, in terms of human nature, in terms of how we’re designed by natural selection, the kinds of external threats we respond most readily to are other human beings.

Bob Wright: I mean, look at the U.S. and China. Five years ago, there wasn’t this intense sense of menace that there is now. It’s not a hard thing to trigger. And I’m not saying there’s nothing to worry about with China’s behavior, but there isn’t 50 times as much to worry about now compared to five years ago. And yet that is the extent to which it’s been amped up. And the reason is it’s easy to point to foreign human beings and convince people that they’re threatening. It’s harder to point to more abstract threats and convince people they’re worth worrying about. I mean, there is a little more good news, which is that there was this classic social science experiment — which would not be permitted now for ethical reasons — but it was in the 1950s. It’s called the Robbers Cave experiment.

Bob Wright: There were boys in summer camp who didn’t know they were the subjects of an experiment. They increased the zero-sum dynamics between the two groups of boys, they had these different names like the Rattlers and something else. They turned them into tribes. And then they could either create zero-sum situations by saying, okay, here’s the picnic area, one tribe gets there 20 minutes late so all the food is eaten by the other tribe, that’s zero sum. And so they created a lot of tension between the groups. But then, after getting them to a point where they hated each other, they created fake global emergencies. Like they said, the water supply to the camp has been disrupted. And, I guess predictably, they overcame their animosity and worked together. So it can be done. But it’s not as easy to marshal energy in the face of a more abstract threat compared to a human threat.

Rob Wiblin: Yeah. It’s really interesting. At the beginning of the COVID pandemic, there was a lot of concern about social cohesion and chaos. That we’d see people turning on one another because there wouldn’t be enough food, or there’d be a crime wave. It’s maybe a little bit hard to remember now, but I remember reading lots of stories about that in March. And it just runs so contrary to the psychology research, the sociological research on this. Which just shows very clearly that when a society faces an external threat where everyone is in the same basket, people tend to pull together. And in fact, you see crime go down, you see cooperation go up, because suddenly people feel like they’re all part of this team that’s fighting this collective struggle. Yeah. You see that during wars, without a doubt.

Rob Wiblin: People thought when they started bombing civilian cities that it would cause countries to surrender because it would be so unpleasant for civilians to be bombed. But it was quite the opposite, it caused them to form this intense bond of people in a city and double down on fighting the war that they were in. The one exception to this, interestingly, is sieges. So if you besiege a city and it gradually runs out of food over months and years, then people turn on one another. So it’s not in every instance, but yeah. And in the case of the pandemic, it was very predictable. It would be great if we could maybe use this in some way, although it maybe is a little bit stressful for people to constantly feel besieged, and be cooperating with their fellow citizens because they feel under threat.

Bob Wright: Yeah. By the way, the way the bombing of cities began in World War II was another case of where maybe successful cognitive empathy could have happened. The first case was an accident. The Germans didn’t mean to bomb the civilian population, but the Brits retaliated and the war was on. The Brits assumed it was intentional. But anyway, it’s a little surprising in America that the pandemic hasn’t had more of a congealing effect. I mean, I would say in general, there has been an under-appreciation… Well, there’s two issues. I think there’s still a little bit of an under-appreciation of how non-zero-sum the dynamics are globally. I think America is coming to realize that it’s in our interest to help people around the globe. Anyone getting sick anywhere, to some extent, is a threat to us. But domestically — and I think this is largely to do with just the weird politics that were happening when the pandemic hit — you wound up with… We’ve got these pro-mask and anti-mask tribes, and it’s been pretty ugly.

Rob Wiblin: Yeah. The U.S. is kind of the odd one out here as far as I can tell. I live in the U.K., and I think initially it created a very strong bond and more cooperation. Maybe that’s now kind of waned, but I think maybe now we probably get along better than we did before the pandemic, at least a little bit. I guess the U.S., at least to my knowledge, stands at the top of the list in terms of how much this actually introduced more division.

Bob Wright: Yeah. I’m not sure we should call that the top of the list. That sounds a little more flattering than we deserve. It’s weird times, but it gets back to something you said earlier. You suggested this would be a legitimate cause for effective altruism — look at these machine learning algorithms that wind up governing people’s conduct on social media, recommending videos to them and so on. I mean, it may not be machine learning per se, it may be something less sophisticated, but the point is these are the algorithms that can wind up having pernicious effects, can draw people into extremism and so on. And so, in my way of looking at The Apocalypse Aversion Project, that’s an intervention at the level of psychology.

Bob Wright: In other words, if you ask why America is too divided politically to make meaningful progress on something like a new bioweapons convention, the answer is partly in the algorithms. They have made things worse. And so, intervening in a problem you were already considering the domain of effective altruism I would say is doubly the domain of effective altruism, in the sense that it’s about getting our species to a psychological state where we’re capable of attacking all kinds of problems, including transnational ones.

Rob Wiblin: We did an interview last year with Tristan Harris, who’s been a leader on really worrying about these issues and trying to figure out how can we make these algorithms and other designs of online services such that people don’t end up hating one another and having completely false beliefs, which seems like an incredibly important area. And especially if you can get involved in one of these companies that designs these services, then you’re going to get enormous leverage. Because the amount of software engineering time relative to the amount of user time, it’s a very intense ratio there. And I don’t think Google is really, as an organization, excited about the idea that they are creating extremism and making people miserable, and probably neither is Twitter. Hopefully they can reform their service so there’s something that people, on reflection, are happy that they use rather than something that they use begrudgingly.

Bob Wright: Yeah. I don’t think they’re happy about that, but they’re driven by the profit motive. And so they tend to, I think, let their machines do what increases revenue.

Rob Wiblin: Yeah, do whatever.

Bob Wright: And that seems to have some bad side effects. And so it’s a challenge in a regulatory sense. I mean, one thing I’d like to see is algorithm transparency, where they basically have to give us a better idea of what the algorithm is. Make it public and allow… This might involve creating APIs or whatever. Not that I know what an API is. But I’d like a third party company to come say to me, here’s something you can put on top of Facebook or Twitter, where it’s got this slider here and you can define it however you want. Give me fewer tweets that fit this description, or fewer tweets that lead me to react this way, or whatever. And we wouldn’t be slaves to the really crude and rudimentary tools that Twitter and Facebook offer us, which is almost nothing. I mean, you have almost no control over what’s being shown to you. So that’s something I’d like to see.

Surveillance [01:00:47]

Rob Wiblin: Yeah. While we’re on technology, I just want to make a pitch to you to think about whether, potentially, a lot of people might be able to have more impact by focusing just on research and development, or maybe policy change. I guess the thing that I like about narrow policy changes and science and tech is that often just a small group of people who think that it’s a good idea to create something and that it will improve the world can just go ahead and do it. And they don’t have to try to convince millions and millions of people, or get this mass cultural change. Yeah. We actually had an episode with Andy Weber who used to be the point person on biological weapons and nuclear threats at the Department of Defense. And he was saying that he thinks we can make a huge dent in the problem of bioweapons, to some degree take them off the table as a strategy that any country might consider, by combining the progress that we’ve made on mRNA vaccine manufacturing and also the progress that we’re making on making it really cheap to sequence DNA.

Rob Wiblin: He paints this vision where scientists continue to figure out how to make mRNA vaccines work really well and how to make them quickly and cheaply, and we continue to make it really cheap to sequence DNA at a mass scale, and then we can just, in every house, or at least every hospital, maybe every school, we can be monitoring the ambient environment to see are there new viruses showing up? And are those viruses spreading? We can do this by basically doing DNA sequencing all over the place. And then if there is a new virus or a new pathogen of any kind that is picked up, and we discover that it’s causing harm, well, hopefully we’ll be able to pick it up really quickly, much quicker than we can now, because we’ll be sequencing everywhere. And then we can very quickly turn around an mRNA vaccine, because mRNA vaccines can be modified to tackle different pathogens much more quickly than old vaccines could.

Rob Wiblin: And then as long as we have the ability to manufacture tons of mRNA vaccines really quickly, then it would be pointless to release a bioweapon, because we would be able to defuse the challenge really, really quickly. This is kind of an example where one option would be to try to convince everyone to not develop bioweapons, to make the facilities safe, convince anyone who might want to design such a bioweapon that it’s a bad idea, but maybe there’s just this technical patch that we can chuck on the top, which is, well, we just figured out how to make it not work anyway.

Bob Wright: So is he saying you can imagine a sensing system that would detect the deployment of a bioweapon immediately upon deployment, and so it couldn’t get very far? I assume he’s not saying you would know when someone’s working on one, is he?

Rob Wiblin: No, no. So there would be… You could detect it very soon after deployment, because of course the virus gets into the first person or first few people and it starts to spread. And then I suppose the most powerful example would be that everyone takes a test. Everyone takes a swab in the morning to check what viruses they happen to have in them, like with something that they could breathe into. But we can imagine it being pretty functional, even if you’re only testing every tenth person or something periodically. Because you’d notice all of these new DNA sequences are showing up that aren’t ones that we’re familiar with seeing all the time. And then you also notice if it starts showing up more and more and more, then you can see that a virus is taking off and spreading. You can’t detect it immediately, but you can potentially detect it after a week maybe, even after it’s been released. Which is much quicker than we can do today. And so the reaction is much faster.

Bob Wright: So it’s a kind of surveillance technology, although not in the usual sense, not in maybe the creepiest sense. But that leads to a question I have for you. There are these various cases where, with a sufficiently intrusive surveillance system in the traditional sense, like cameras everywhere, information technology surveillance, email being monitored, you can solve a lot of problems. But that is a cost that a lot of us would find very unappealing. And it’s this classic trade-off between security and liberty. And it’s such a hard thing to even think about clearly. I’m wondering, in effective altruism, does that kind of thing enter the realm? How do people deal with it?

Rob Wiblin: Yeah. It’s been discussed a bunch. I think probably the first thing for an interested listener to read might be The Vulnerable World Hypothesis by Nick Bostrom. He says imagine that we’re in a world where it turns out to be really easy for someone to create something that could kill almost everyone. In such a fragile world, almost the only way that humanity could survive might be to have this massive surveillance of everyone to make sure that no one does it, so that we could constantly prevent them. Fortunately, it seems like we don’t live in a world as extreme as that, at least not yet. And hopefully we never will. But looking into the policy issues here is actually on our list of promising things to do with your career. But we haven’t investigated it a ton yet.

Rob Wiblin: It’s something that people have written about, talked about a bunch, but I don’t think there’s a huge literature on it. I mean, I think the basic story is going to be, there’s this trade-off between the risk that we fail to detect someone who’s doing something incredibly dangerous — that does end up then causing a global catastrophe — versus the risk that we set up this system, which, I think the phrase is ‘turnkey totalitarianism.’ If you’re monitoring everyone all the time, it’s going to be very easy for the government to suddenly just flip into this self-perpetuating authoritarian system. If you can detect if people are doing something dangerous with technology, then you can also detect whether anyone is trying to resist the regime. And so you would just be potentially stuck there forever, which is a global catastrophe of its own.

Rob Wiblin: At this point, it seems like the greater risk is that. I’d be much more worried about this mass surveillance, we have a camera in every room, just leading to a terrible and self-perpetuating political system. I suppose we could imagine that in the future maybe we could find some way to ensure that the system couldn’t be used or abused that way. And maybe the risks from technology could become greater. It’s something worth looking into, but I’m skeptical that’s something that we’ll end up ever wanting to deploy.

Bob Wright: Yeah, it’s tricky because if you just leave people to their own devices and they buy things that make them more secure, and the free market system operates and provides it, they can start building these networks that are one flip of the switch away from being abused. Like these… Is it Ring? Is that the name of the camera company that has the camera on your doorstep? And then it’s in your interest to be alerted if your neighbor’s camera sees something menacing, and then the police say, “Hey, if we tap into this network, we can do good things.” And that’s all true. There are benefits, but pretty soon you have a system where there’s suddenly the next scare. I mean, I’m old enough to remember the run-up to the Iraq War. “Saddam Hussein has weapons.” “There may be anthrax next door.” You get a scare like that, and people say, sure, turn the whole thing over to the government.

Rob Wiblin: Yeah. One weakness of the thing is it would have to be global. How are we going to get implementation of such a strong surveillance system in every country? Otherwise someone who wants to do something bad can just go to Somalia or whatever country doesn’t have this system, and potentially do the thing there. Maybe a more narrow idea might be, if you have access to the most dangerous technology, then the government might start monitoring your stuff much more. For example, people who have access to the most serious state secrets, they presumably get monitored a bunch by the government to check whether they’re betraying it. That might be some kind of middle ground that helps, but without creating this risk of totalitarianism so straightforwardly.

How we might have prevented the Iraq War [01:07:53]

Rob Wiblin: You mentioned the Iraq War. You wrote in one of your blog posts recently that you thought the psychological change, the mindfulness changes, that you’re thinking of might have prevented the Iraq War. What’s the theory there?

Bob Wright: Well, a couple of things. I mean, first of all, cognitive empathy would have helped. Slate magazine does a Slow Burn podcast, I guess once a year. And this time it’s about the run-up to the Iraq War. And I’ve listened to the first three. And because I was writing for Slate in 2003, I was part of an assemblage of half a dozen people who were writing for Slate who the people doing the podcast taped. I’ll probably be edited out. But anyway, the podcast is very good and it’s a reminder of how totally duped we can be, at any moment, by government officials. But an example of cognitive empathy, which I gave in that conversation, is one thing people forget is, at the time we invaded, the UN weapons inspectors were in Iraq. They left. We demanded they leave so that we could invade, which sounds crazy because they actually were, and people forget this too, but they were being allowed to inspect every site they asked to inspect.

Bob Wright: Now it’s true that Saddam Hussein, initially he was making them cool their heels awhile. And even, in the longer run, he was refusing to do certain things we demanded like let his scientists leave the country to be interrogated. And I just said, well, it’s not hard to imagine reasons a dictator could not want scientists interrogated abroad, other than them being part of an active WMD program. Maybe they knew about past crimes. Maybe he would feel humiliated in the eyes of his people. Maybe he was afraid they’d defect. There’s a billion things. And yet, back then, everything he did that we didn’t like, we took as confirmation of the premise that he had something to hide. And if you said, back then, wait a second. Could we look at this from Saddam’s perspective? I mean, you’d be shouted down as an apologist. And today you see the same dynamic. If you say, well, could we just try to understand how the regime is looking at things in Russia and in China and Iran and North Korea, people call you an apologist. And I think that’s one of the things we need to get over.

Rob Wiblin: Yeah. That issue of whenever you merely explain the perspective of another potentially hostile, potentially evil country, you’re shut down as an apologist…it feels like an interesting, narrow thing that maybe we could change. Because it seems like that alone could significantly improve discourse around international relations. It seems like people often just slip into making these terrible errors, literally because they have not been allowed to talk about how does this other country think about X? How do they conceive this, given their own history and their own interests? Or at least the leaders. At least understand what Putin is thinking or calculating. That seems like a really valuable meme to spread.

Bob Wright: I agree. Stigmatize the use of the word apologist. I don’t mean be too harsh, but get people to agree that no, that’s just not cool. I mean, that does not further discourse. If you think they are inaccurately characterizing what’s going on in Tehran or Moscow, make the argument. But don’t try to shout them down with a term that you know is meant to just exclude them from the conversation, by calling them an Assad apologist or something. That’s why I do advocate mindfulness, but I recognize that that’s not going to be the whole cure by any means. And I do think you need specific interventions of exactly that kind and to just make things that were cool, uncool.

Rob Wiblin: Yeah. I guess it seems like there’s maybe two classes here. One is changing people’s emotional reactions, getting them to slow down and think about things. And then maybe there’s also this epistemic, rationality focus, where it’s getting people to think more clearly about what is really going on. So they can actually have insights that otherwise they would miss. And both of them seem really valuable interventions if we can make progress on them at a societal level. But yeah, it’s challenging. I guess maybe the bottleneck is that a lot of this stuff is, to some degree, boring compared to the memes that they have to compete with. It’s far more exciting to read about how someone is really bad than to read about how you could slow down and think about something more carefully.

Rob Wiblin: So someone who’s really good at popularizing content, who’s really good at figuring out how we make this snappy and engaging to deal with, maybe they could make more progress. It’s going to be a bit of a hits-based business. Because most of the time, most people making content don’t get much of an audience. But every so often someone breaks through. Like Jordan Peterson, for example. A bunch of his stuff, in some sense, is quite boring. And yet he got this massive audience. Now, whether it was good advice or not… Maybe we need the Jordan Peterson version of “Calm down and think carefully about foreign relations.”

Bob Wright: I mean, it’s a challenge because social media incentives so often work in the other direction. If you want to increase your number of Twitter followers, the best way is to buy into whatever incendiary language is working within your tribe and vilify the other tribes. There are a lot of perverse incentives here that work against calming down and trying to be rational. But I think it’s the thing we need to think about creatively.

The effective altruism community [01:13:10]

Bob Wright: That’s why I’m interested in what you’ve done, you meaning the effective altruism people, which is create a movement that has certain values. I think you need a group of people who… There needs to be this kind of ‘tribalist tribe’ thing. It’s funny. I had been thinking of that term and, I mean, I even have the @tribalisttribe Twitter handle, and have for years. I haven’t done anything with it. But when I had that conversation I mentioned with Will MacAskill, who I guess is still… Is he still president of 80,000 Hours?

Rob Wiblin: Yeah…I think he’s at least one of the trustees.

Bob Wright: Apparently he’s not a very forceful one if you’re not sure, but—