What is social impact? A definition

Lots of people say they want to “make a difference,” “do good,” “have a social impact,” or “make the world a better place” — but they rarely say what they mean by those terms.

By getting clearer about your definition, you can better target your efforts. So how should you define social impact?

Over two thousand years of philosophy have gone into that question. We’re going to try to sum up that thinking; introduce a practical, rough-and-ready definition of social impact; and explain why we think it’s a good definition to focus on.

This is a bit ambitious for one article, so to the philosophers in the audience, please forgive the enormous simplifications.

Table of Contents

- 1 A simple definition of social impact

- 2 A more rigorous definition of social impact

- 3 In a nutshell

- 4 Social impact is about making the world better

- 5 What does it mean to “make the world better”?

- 6 Why do we emphasise respecting other values?

- 7 Is this just utilitarianism?

- 8 Conclusion

- 9 Learn more

- 10 Read next

A simple definition of social impact

If you just want a quick answer, here’s the simple version of our definition (a more philosophically precise one — and an argument for it — follows below):

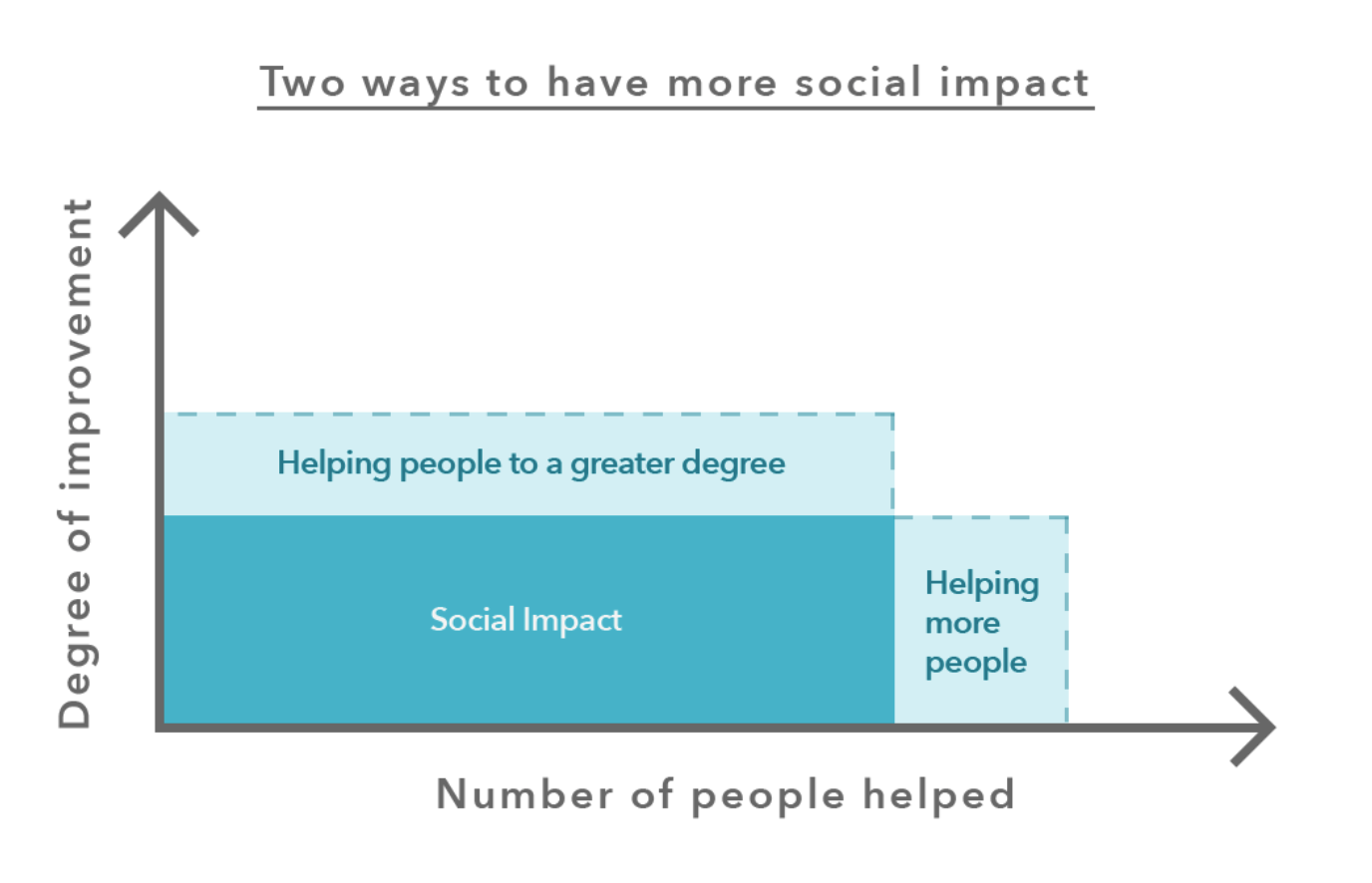

Your social impact is given by the number of people[1] whose lives you improve and how much you improve them, over the long term.

This shows that you can increase your impact in two ways: by helping more people over time, or by helping the same number of people to a greater extent (pictured below).

We say “over the long term” because you can help more people either by helping a greater number now, or taking actions with better long-term effects.

This definition is enough to help you figure out what to aim at in many situations — e.g. by roughly comparing the number of people affected by different issues. But sometimes you need a more precise definition.
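As a rough illustration of the simple definition, the sketch below treats impact as the number of people helped multiplied by the average improvement per person. The function name, the "wellbeing points" unit, and all the numbers are assumptions made up for this example. They are not estimates from our research.

```python
def simple_impact(people_helped: int, improvement_per_person: float) -> float:
    """Rough impact score: people helped times average improvement per person.

    The unit is a hypothetical 'wellbeing point', used only for illustration.
    """
    return people_helped * improvement_per_person


# Hypothetical starting point: 100 people, each helped by 1 point.
baseline = simple_impact(people_helped=100, improvement_per_person=1.0)

# Way 1: help more people by the same amount.
more_people = simple_impact(people_helped=200, improvement_per_person=1.0)

# Way 2: help the same number of people to a greater extent.
bigger_improvement = simple_impact(people_helped=100, improvement_per_person=2.0)

print(baseline, more_people, bigger_improvement)
```

In this toy example, both routes double the baseline score, which is the sense in which there are two ways to increase your impact. Real comparisons are far rougher, and this sketch leaves out long-term effects entirely.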

A more rigorous definition of social impact

Here’s our working definition of “social impact”:

“Social impact” or “making a difference” is (tentatively) about promoting total expected wellbeing — considered impartially, over the long term.

We don’t think social impact is all that matters. Rather, we think people should aim to have a greater social impact within the constraints of not sacrificing other important values: in particular, while building good character, respecting rights, and attending to other important personal values. We don’t endorse doing something that seems very wrong from a commonsense perspective in order to have a greater social impact.

In fact, we think that paying attention to these other values is probably the best way to have the most social impact anyway, even if that’s all you want to aim for.

In the rest of this article, we’ll expand on:

- Why we think social impact is primarily about promoting what’s of value — i.e. making the world better

- Why we think making the world a better place is in large part about promoting total expected wellbeing

- How social impact fits with other values

- How we can assess what makes a difference in the face of uncertainty

We believe that taking this definition seriously has some potentially radical implications about where people who want to do good should focus, which we also explore.

Two final notes before we go into more detail. First, our definition is tentative — there’s a good chance we’re wrong and it might change. Second, its purpose is practical — it aims to cover the most important aspects of doing good to help people make better real-life decisions, rather than capture everything that’s morally relevant.

In a nutshell

The definition:

“Social impact” or “making a difference” is (tentatively) about promoting total expected wellbeing — considered impartially, over the long term.

Why “promoting”? When people say they want to “make a difference,” we think they’re primarily talking about making the world better — i.e. ‘promoting’ good things and preventing bad ones — rather than merely not doing unethical actions (e.g. stealing) or being virtuous in some other way.

Why “wellbeing”? We understand wellbeing as an inclusive notion, meaning anything that makes people better off. We take this to encompass at least promoting happiness, health, and the ability for people to live the life they want. We chose this as the focus because most people agree these things matter, but there are often large differences in how much different actions improve these outcomes.

Why do we say “expected” wellbeing? We can never know with certainty the effects that our actions will have on wellbeing. The best we can do is try to weigh the benefits of different actions by their probability (i.e. compare them based on ‘expected value’). Note that while the action with the highest expected value is best in principle, that doesn’t imply that the best way to find the best action is to make explicit quantitative estimates. It’s often better in practice to use rules of thumb, our intuition, or other methods, since these often come closer to maximising expected value than explicit expected value calculations do. (Read more on expected value.)

Why “considered impartially”? We mean that we strive to treat equal effects on different beings’ welfare as equally morally important, no matter who they are — including people who live far away or in the future. In addition, we think that the interests of many nonhuman animals, and even potentially sentient future digital beings, should be given significant weight, although we’re unsure of the exact amount. Thus, we don’t think social impact is limited to promoting the welfare of any particular group we happen to be partial to (such as people who are alive today, or human beings as a species).

Why do we say “over the long term”? We think that if you take an impartial perspective, then the welfare of those who live in the future matters. Because there could be many more future generations than those alive today, our effects on them could be of great moral importance. We thus try to always consider not just the direct and short-term effects of actions, but also any indirect effects that might occur in the far future.

Social impact is about making the world better

What does it mean to act ethically? Moral philosophers have debated this question for millennia, and have arrived at three main kinds of answers:

- Being virtuous — e.g. being honest, kind, and just

- Acting rightly — e.g. respecting the rights of others and not doing wrong

- Making the world better — e.g. helping others

These correspond to virtue ethics, deontology, and consequentialism, respectively.

Whatever you think about which perspective is most fundamental, we think all three have something to offer in practice (we’ll expand on this in the rest of the article). So we could say that a simple, general recipe for a moral life would be to:

- Cultivate good character

- Respect constraints, such as the rights of others

- And then do as much good as you can

In short, character, constraints and consequences.

When our readers talk about wanting to “make a difference,” we think they’re most interested in the third of these perspectives: changing the world for the better.

We agree focusing more on doing good makes sense for most people. First, we don’t just want to avoid doing wrong, or live honest lives, but actually leave the world better than we found it. And more importantly, there’s so much we can do to help.

For instance, we’ve shown that by donating 10% of their income to highly effective charities, most college graduates can save the lives of over 40 people over their lifetimes with a relatively minor sacrifice.

From an ethical perspective, whether you save 40 lives or not will probably be one of the most significant questions you’ll ever face.

In our essay on your most important decision, we argued that some career paths open to you will do hundreds of times more to make the world a better place than others. So it seems really important to figure out what those paths are.

Even philosophers who emphasise moral rules and virtue agree that if you can make others better off, that’s a good thing to do. And they agree it’s even better to make more people better off than fewer. (More broadly, we think deontologists and utilitarians agree a lot more than people think.)

John Rawls was one of the most influential (non-consequentialist) philosophers of the 20th century, and he said:

“All ethical doctrines worth our attention take consequences into account in judging rightness. One which did not would simply be irrational, crazy.”[2]

Since there seem to be big opportunities to make people better off, and some seem to be better than others, we should focus on finding those.

This might sound obvious, but most discussion of ethical living is very focused on reducing harm rather than doing more good.

For instance, when it comes to fighting climate change, there’s a lot of focus on our personal carbon emissions, rather than figuring out what we can do to best fight climate change.

Asking the second question suggests radically different actions. The best things we can do to fight climate change probably involve working on, advocating for, and donating to exceptional research and advocacy opportunities, rather than worrying about plastic bags, recycling, or turning out the lights.

Why is there so much focus on our personal emissions? One explanation is that many of our ethical views originated before the 20th century, and some are thousands of years old. If you were a medieval peasant, your main ethical priority was to help your family survive, without cheating or harming your neighbours. You didn’t have the knowledge, power, or time to help hundreds of people or affect the long-term future.

The Industrial Revolution gave us wealth and technology not even available to kings and queens in previous centuries. Now, many ordinary citizens of rich countries have enormous power to do good, and this means the potential consequences of our actions are usually what’s most ethically significant about them.

But this isn’t to say that we can ignore harms. We think it’s vital to avoid doing anything that seems very wrong from a commonsense perspective, even if it seems like it might lead to a greater social impact, and to cultivate good character.

In general, we see our advice as about striving to have a greater impact, within the constraints of your other important values.

So, we think ‘social impact’ or ‘making a difference’ should be about making the world better. But what does that mean?

What does it mean to “make the world better”?

We imagine building a world in which the most beings can have the best possible lives in the long term — lives that are free from suffering and injustice, and full of happiness, adventure, connection, and meaning.

There are two key components to this vision — impartiality and a focus on wellbeing — which we’ll now unpack.

Impartiality: everyone matters equally

When it comes to ‘making a difference,’ we think we should strive to be more impartial — i.e. to give equal weight to everyone’s interests.

This means striving to avoid privileging the interests of anyone based on arbitrary factors such as their race, gender, or nationality, as well as where or even when they live. We also think that the interests of many nonhuman animals should be given significant weight, although we’re unsure of the exact amount. Importantly, we’re also concerned about potentially sentient future digital beings, which could exist in very large numbers and whose welfare could be in part determined by how we design them.

The idea of impartiality is common in many ethical traditions, and is closely related to the “Golden Rule” of treating others as you’d like to be treated, no matter who they are.

Acting impartially is an ideal, and it’s not all that matters. As individuals, we all have other personal goals, such as caring for our friends and family, carrying out our personal projects, and having our own lives go well. Even considering only moral goals, it’s plausible we have other values or ethical commitments beyond impartially helping others.

We’re not saying you should abandon these other goals and strive to treat everyone equally in all circumstances.

Rather, the claim is that insofar as your goal is to ‘make a difference’ or ‘have a social impact,’ we don’t see good reason to privilege any one group over another — and that you should therefore have some concern for the interests of strangers, nonhumans, and other neglected groups.

(And even if you think that the ultimate ideal is to have equal concern for all beings, as a matter of psychology, you probably have other, competing goals, and it’s not helpful to pretend you don’t.)

In his essay Famine, Affluence, and Morality, Peter Singer imagines that you’re walking along and come across a child drowning in a pond. Almost everyone agrees that you should run in and save the child, even if it would ruin your new suit and shoes.

This illustrates a principle that many people can get behind: if you can help a stranger a great deal with little cost to yourself, that’s a good thing to do. This shows that most people give some weight to the interests of others.

If it also turns out that you have a lot of power to help others (as we argued above), then it would imply that social impact should be one of the main focuses of your life.

Impartiality also implies that you should think carefully about who you can help the most. It’s common to say that “charity begins at home,” but if everyone’s interests matter equally, and you can help more people who are living far away (e.g. because they’re without cheap basic necessities you can provide), then you should help the more distant people.

We’re convinced that a degree of impartiality is reasonable, and that many people should try thinking harder about impartiality than they are used to. But there remain huge questions about how impartial to be.

The trend over history seems to have been towards greater impartiality and a wider and wider circle of concern, but we’re unsure where that should stop. For instance, compared to people today, how exactly should we weigh the interests of nonhuman animals, people who don’t exist yet, and potential digital beings? This is called the question of moral patienthood.

Here’s an example of the stakes of this question: we don’t see much reason to discount the interests of future generations simply because they’re distant from us in time. If that’s right, then because there could be so many people in the future, the main focus of efforts to do good should be to leave the best possible world for those future generations. This idea has been called ‘longtermism,’ and is explored in a separate article. We think longtermism is an important perspective, which is part of why we say “over the long term” in our definition of social impact.

This section was about who to help; the next section is about what helps.

Wellbeing: what does it mean to help others?

When aiming to help others, our tentative hypothesis is that we should aim to increase their wellbeing as much as possible: that is, to enable more individuals to live flourishing lives that are healthy, happy, and fulfilled; that are in line with their wishes; and that are free from avoidable suffering.

Although people disagree over whether wellbeing is the only thing that matters morally, almost everyone agrees that things like health and happiness matter a lot — and so we think it should be a central focus in efforts to make a difference.

Putting impartiality and a focus on wellbeing together means that, roughly, how much positive difference an action makes depends on how it increases the wellbeing of those affected, and how many are helped — no matter when or where they live.

What wellbeing consists of more precisely is a controversial question, which you can read about in this introduction by Fin Moorhouse. In brief, there are three main views:

- The hedonic view: wellbeing consists in your degree of positive vs negative mental states such as happiness, meaning, discovery, excitement, connection, and equanimity.

- The preference satisfaction view: wellbeing consists in your desires being fulfilled.

- Objective list theories: wellbeing consists in achieving certain goods, like friendship, knowledge, and health.

In philosophical thought experiments, these different views have very different implications. For instance, if you support the hedonic view, you’d need to accept that being secretly placed into a virtual reality machine that generates amazing experiences is better for you than staying in the real world. If instead you support (or just have some degree of belief in) the preference satisfaction view, you don’t have to accept this implication, because your desires can include not being deceived and achieving things in the real world.

In practical situations, however, we rarely find that different views of wellbeing drive different decisions, such as about which global problems to focus on. The three notions correlate closely enough that differences in views are usually driven by other factors (such as the question of where to draw the boundaries of the expanding circle discussed in the previous section).

What else might matter besides wellbeing? There are many candidates, which is why we say promoting wellbeing is only a “tentative” hypothesis.

Preserving the environment enables the planet to support more beings with greater wellbeing in the long term, and so is also good from the perspective of promoting wellbeing. However, some believe that we should preserve the environment even if it doesn’t make life better for any sentient beings, showing they place intrinsic value on preserving the environment.

Others think we should place intrinsic value on autonomy, fairness, knowledge, and many other values.

Fortunately, promoting these other values often goes hand in hand with promoting wellbeing, and there are often common goals that people with many values can share, such as avoiding existential risks. So again, we believe that the weight people put on these different values has less effect on what to do than often supposed, although they can lead to differences in emphasis.

We’re not going to be able to settle the question of defining everything that’s of moral value in this article, but we think that promoting wellbeing is a good starting point — it captures much of what matters and is a goal that almost everyone can get behind.

How good is it to create a happy person?

We’ve mostly spoken above as if we’re dealing with potential effects on a fixed population, but some decisions could result in more people existing in the long term (e.g. avoiding a nuclear war), while others mainly benefit people who already exist (e.g. treating people who have parasitic worms, which rarely kill people but cause a lot of suffering). So we need to compare the value of increasing the number of people with positive wellbeing with benefiting those who already exist.

This question is studied by the field of ‘population ethics’ and is an especially new and unsettled area of philosophy.

We won’t try to summarise this huge topic here, but our take is that the most plausible view is that we should maximise total wellbeing — i.e. the number of people (again including all beings whose lives matter morally) who exist in all of history, weighted by their level of wellbeing. This is why we say “total” wellbeing in the definition.

That said, there are some powerful responses to this position, which we briefly sketch out in the article on longtermism. For this reason, we’re not certain of this ‘totalist’ view, and so put some weight on other perspectives.
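To make the ‘totalist’ view concrete, here is a minimal sketch that adds up wellbeing across everyone who exists and compares two kinds of change. The function name, the wellbeing scale, and every number are hypothetical, chosen only to show the arithmetic. The sketch illustrates this one view and takes no side on the objections to it.

```python
def total_wellbeing(wellbeing_levels):
    """Totalist score: the sum of wellbeing across everyone who exists."""
    return sum(wellbeing_levels)


# Hypothetical world of three people, each at wellbeing level 5.
current_world = [5.0, 5.0, 5.0]

# Option A: benefit those who already exist (each moves from 5 to 6).
help_existing = [6.0, 6.0, 6.0]

# Option B: the same three people, plus two additional people whose
# lives have positive wellbeing (level 4 each).
more_people = [5.0, 5.0, 5.0, 4.0, 4.0]

gain_from_helping = total_wellbeing(help_existing) - total_wellbeing(current_world)
gain_from_more_people = total_wellbeing(more_people) - total_wellbeing(current_world)

print(gain_from_helping, gain_from_more_people)
```

On the totalist view, both changes count as improvements, and they can be compared on a single scale. Other views in population ethics would score Option B differently, which is one reason we put some weight on other perspectives.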

Expected value: acting under uncertainty

How do you know what will increase wellbeing the most?

In short, you don’t.

You have to weigh up the different likelihoods of different outcomes, and act even though you’re uncertain. We believe the theoretical ideal here is to take the action with the greatest expected value compared to the counterfactual. This means estimating how much wellbeing each possible outcome of an action would involve, weighting each outcome by how likely it is, and adding the results together.
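As a minimal sketch of the idea, the code below computes an expected value as a probability-weighted sum and then subtracts the expected value of the counterfactual (what would have happened anyway). The function names, the two example projects, and all probabilities and wellbeing figures are assumptions invented for illustration.

```python
def expected_value(outcomes):
    """Probability-weighted sum over mutually exclusive outcomes.

    `outcomes` is a list of (probability, wellbeing_gain) pairs whose
    probabilities should sum to 1.
    """
    total_probability = sum(probability for probability, _ in outcomes)
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(probability * value for probability, value in outcomes)


def counterfactual_expected_value(action_outcomes, baseline_outcomes):
    """Expected value of an action relative to what would happen otherwise."""
    return expected_value(action_outcomes) - expected_value(baseline_outcomes)


# Hypothetical options, in made-up units of wellbeing.
do_nothing = [(1.0, 0.0)]                   # the counterfactual
safe_project = [(1.0, 10.0)]                # certain, modest benefit
risky_project = [(0.25, 80.0), (0.75, 0.0)]  # usually fails, sometimes large

safe = counterfactual_expected_value(safe_project, do_nothing)
risky = counterfactual_expected_value(risky_project, do_nothing)

print(safe, risky)
```

With these made-up numbers, the risky project has the higher expected value even though it fails three times out of four. In real decisions the inputs are highly uncertain, so a calculation like this is one input among several, not a verdict.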

In practice, we try to approximate this with rules of thumb, like the importance, neglectedness, tractability framework.

Going into expected value theory would take us too far afield, so if you want to learn more, check out our separate articles:

- Expected value: how to act when you’re uncertain what will help?

- Counterfactuals and how they change our view of what does good

- Cluelessness: can we know the effects of our actions?

Why do we emphasise respecting other values?

This is to remind us of how much could be left out of our definition, and how radical our uncertainty is.

Many moral views that were widely held in the past are regarded as flawed or even abhorrent today. This suggests we should expect our own moral views to be flawed in ways that are difficult for us to recognise.

There is still significant moral disagreement within society, among contemporary moral philosophers, and, indeed, within our own team.

And past projects aiming to pursue an abstract ethical ideal to the exclusion of all else have often ended badly.

We believe it’s important to pursue social impact within the constraints of trying very hard to:

- Not do anything that seems very wrong from a commonsense perspective (e.g. not violate important legal and ethical rights)

- Cultivate good character, such as honesty, humility and kindness

- Respect other people’s important values and to be willing to compromise with them

- Respect your other important personal values, such as your family, personal projects and wellbeing

First, following these principles most likely increases your social impact in the long term, once you take account of the indirect benefits of following them and the limitations of your knowledge.

Second, many believe following these principles is inherently valuable. We’re morally uncertain, so we try to consider a range of perspectives and do what makes sense on balance.

You can read more about why we think following principles like the above makes sense no matter your ethical views in these additional articles:

- Is it ever okay to take a harmful job in order to do more good?

- Moral uncertainty: how to act when you’re uncertain about what’s good

- Doing good together: how to coordinate effectively and avoid single-player thinking

- Ways people trying to do good accidentally make things worse, and how to avoid them

Considering these principles, if we had to sum up our ethical code into a single sentence, it might be something like: cultivate a good character, respect the rights of others, and promote wellbeing for the wider world.

And we think this is a position that people with consequentialist, deontological, and virtue-based ethics should all be able to get behind — it’s just that they support it for different reasons.

Is this just utilitarianism?

No. Utilitarianism claims that you’re morally obligated to take the action that does the most to increase wellbeing, as understood according to the hedonic view.

Our definition shares an emphasis on wellbeing and impartiality, but we depart from utilitarianism in that:

- We don’t make strong claims about what’s morally obligated. Mainly, we believe that helping more people is better than helping fewer. If we were to make a claim about what we ought to do, it would be that we should help others when we can benefit them a lot with little cost to ourselves. This is much weaker than utilitarianism, which says you ought to sacrifice an arbitrary amount so long as the benefits to others are greater.

- Our view is compatible with also putting weight on other notions of wellbeing, other moral values (e.g. autonomy), and other moral principles. In particular, we don’t endorse harming others for the greater good.

- We’re very uncertain about the correct moral theory and try to put weight on multiple views.

Read more about how effective altruism is different from utilitarianism.

Overall, many members of our team don’t identify as being straightforward utilitarians or consequentialists.

Our main position isn’t that people should be more utilitarian, but that they should pay more attention to consequences than they do — and especially to the large differences in the scale of the consequences of different actions.

If one career path might save hundreds of lives, and another won’t, we should all be able to agree that matters.

In short, we think ethics should be more sensitive to scope.

Conclusion

We’re not sure what it means to make a difference, but we think our definition is a reasonable starting point that many people should be able to get behind:

“Social impact” or “making a difference” is (tentatively) about promoting total expected wellbeing — considered impartially, over the long term.

We’ve also gestured at how social impact might fit with your personal priorities and what else matters ethically, as well as many of our uncertainties about the definition — which can have a big effect on where to focus.

We think one of the biggest questions is whether to accept longtermism, so we’ve dedicated a whole separate article to that question.

From there, you can start to explore which global problems are most pressing based on whatever definition of social impact you think is correct.

You’ll most likely find that the question of which global problems to focus on is more driven by empirical or methodological uncertainties than moral ones. But if you find a moral question is crucial, you can come back and explore the further reading below.

In short, if you have the extraordinary privilege to be a college graduate in a rich country and to have options for how to spend your career, it’s plausible that social impact, as defined in this way, should be one of your main priorities.

Learn more

Top recommendations

- Why not to take a harmful career even if you think it’ll do more good.

- Podcast: Will MacAskill on moral uncertainty, utilitarianism, and how to avoid being a moral monster

- Podcast: Toby Ord on the perils of maximising the good that you do

Further recommendations

- Podcast: Andreas Mogensen on whether effective altruism is just for consequentialists

- Podcast: Sharon Hewitt Rawlette on why pleasure and pain are the only things that intrinsically matter

- A thread on why deontologists and utilitarians agree more than people think

- Radical Empathy by Holden Karnofsky

- The Expanding Circle: Ethics and Sociobiology by Peter Singer

- What is wellbeing? by Fin Moorhouse

- Impartiality on the Stanford Encyclopedia of Philosophy

- A reading list on moral patienthood on the Effective Altruism Forum

Read next

This article is part of our foundations series.

Notes and references

- [1] We often say “helping people” here for simplicity and brevity, but we don’t mean just humans. We mean anyone with experience that matters morally, e.g. nonhuman animals that can suffer or feel happiness, or even conscious machines if they ever exist.

- [2] See A Theory of Justice by John Rawls.