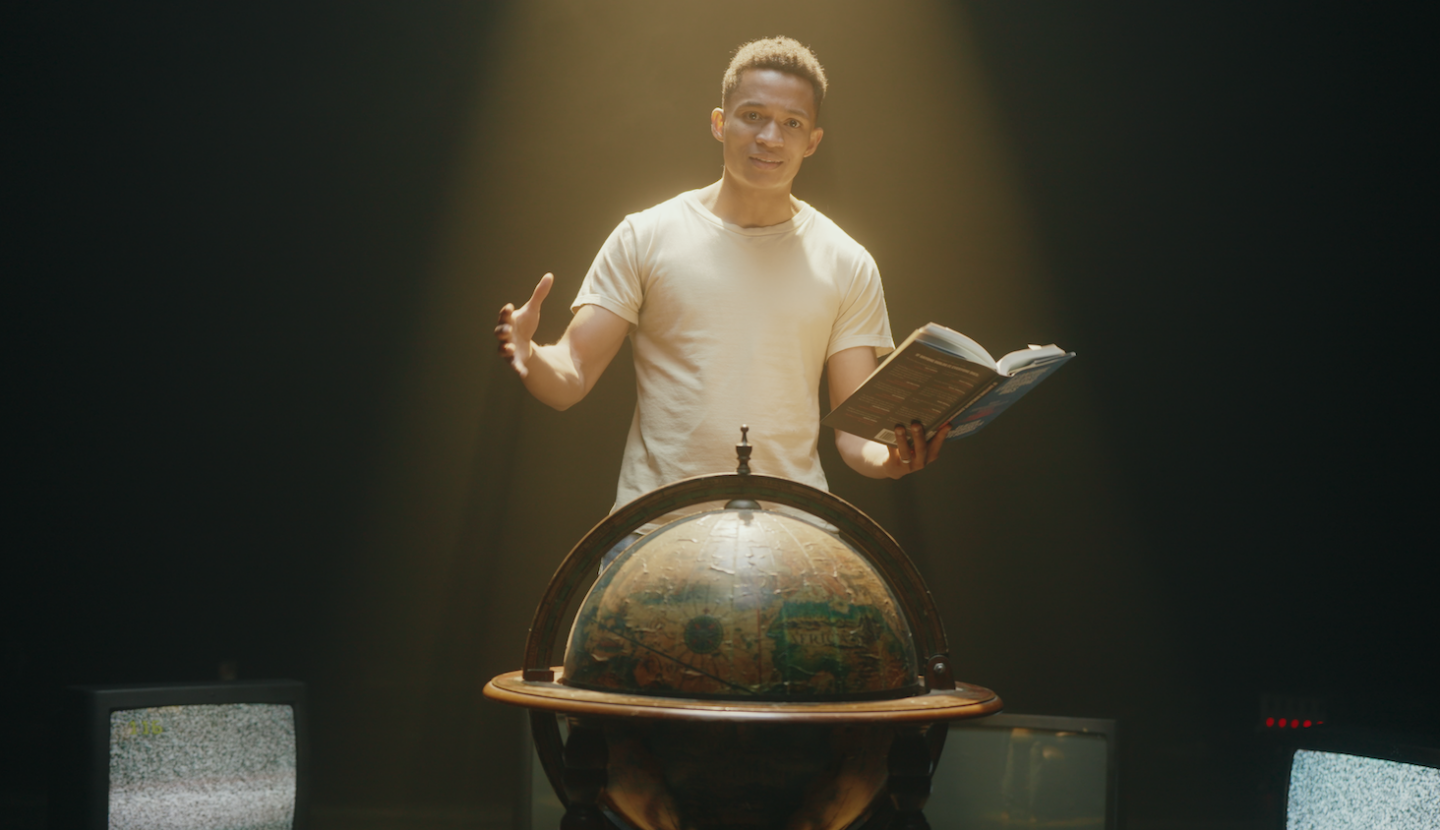

#244 – Benjamin Todd on why we’re updating our career advice for the strangest time in history

The average career is 80,000 hours long. With AI advancing so rapidly, the hours you have left in your career matter more than ever.

Some leading AI researchers think there’s a 10% chance that AI systems begin automating AI research itself in 2026, and a 60% chance by the end of 2028. This could introduce aggressive feedback loops that completely reshape every industry, institution, and career.

If these predictions are right, the window for influencing the direction of the future could be closing fast. As 80,000 Hours cofounder Benjamin Todd argues in his new book, that makes thinking carefully about your career more important than ever.

Fortunately, there are lots of ways to use your career to make the AI transition go well.

In today’s conversation with host Zershaaneh Qureshi, Ben lays out three scenarios — from AGI by 2029 to a decades-long plateau in AI progress — and explains why not everyone needs to bet on the shortest timeline. A fresh graduate and a senior government official have wildly different leverage, so timing your impact well means weighing where you are in your career against the urgency of the risks.

Ben also addresses the obvious anxieties:

- Will AI come for all the jobs he’s recommending?

- What’s the point in following his advice if the job market is about to collapse?

- Which skills are actually worth building right now?

His new book, 80,000 Hours: How to Have a Fulfilling Career That Does Good, provides a surprisingly concrete framework for making career decisions in these radically uncertain times.

This episode was recorded on May 7, 2026.

We’re hiring

We have lots of open roles at 80,000 Hours across advising, web, video, and ops. Check them out and apply on our website.

Our production team includes:

- Video editors: Josh Alward, Dominic Armstrong, Jasper Luithlen, Milo McGuire, Luke Monsour, and Simon Monsour

- Producers: Elizabeth Cox and Nick Stockton

- Coordination and support: Katy Moore and Lou Moran

- Camera operator: Jeremy Chevillotte

- Music: CORBIT