Table of Contents

- 1. Introduction

- 2. What does it mean to make a difference?

- 3. What are the world’s most pressing problems?

- 4. Contribution: which career paths give you the best opportunities to tackle global problems?

- 5. Personal fit: what are you good at?

- 6. Strategy: how to find your best career

- 6.1 Be more ambitious: a rational case for dreaming big (if you want to do good)

- 6.2 Doing good together: how to coordinate effectively and avoid single-player thinking

- 6.3 Career exploration: when should you settle?

- 6.4 How to balance impact and doing what you love

- 6.5 Ways people trying to do good accidentally make things worse, and how to avoid them

- 7 What’s next: Speak to our team one-on-one to make your new career plan

If you’re already familiar with the ideas in our career guide, this series aims to deepen your understanding of how to increase the impact of your career.

Browse through the titles below, and read whichever interests you. Here’s a reminder about what our advice is based on and some tips on how to best use it.

1. Introduction

Why some career paths likely have 10 or 100 or even 1,000 times more impact than others, making your career your biggest opportunity to make a difference.

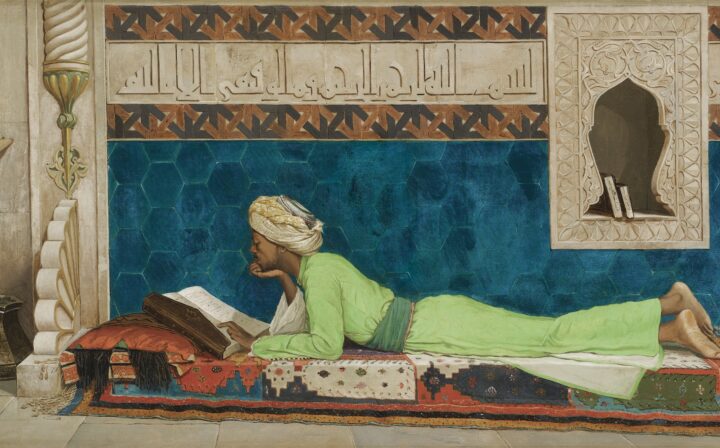

2. What does it mean to make a difference?

We think ‘making a difference’ is best understood as being about the number of lives you improve and how much you improve them by — regardless of who they are or when they’re living.

The case for why the best thing to do with your career could be making sure the future is as good as possible, and what that might imply.

All careers involve some degree of negative impact. That said, we generally recommend against taking a position that causes substantial harm, even if the overall benefits of the work seem greater than the harms.

3. What are the world’s most pressing problems?

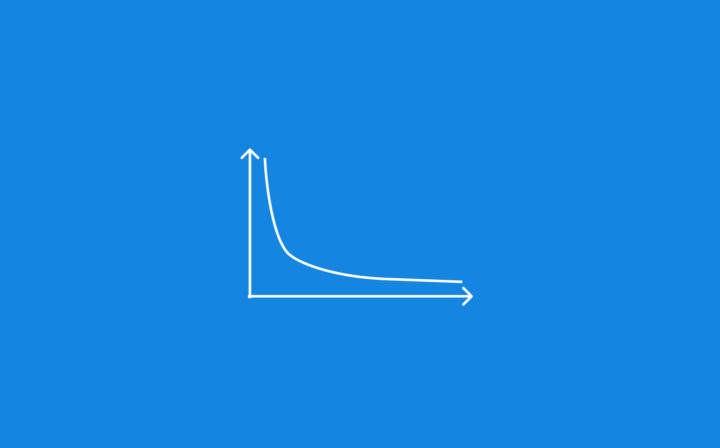

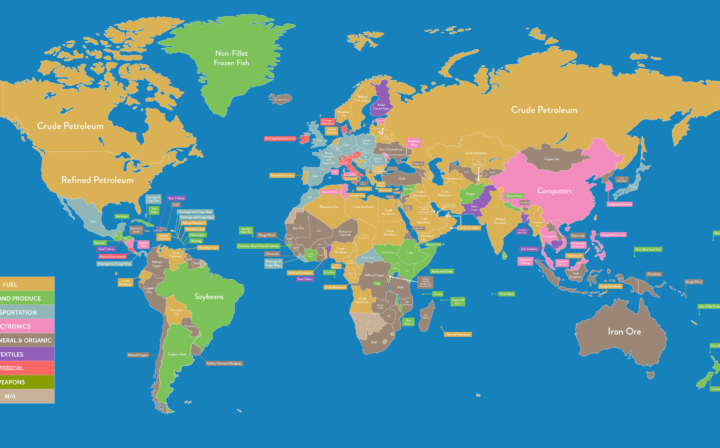

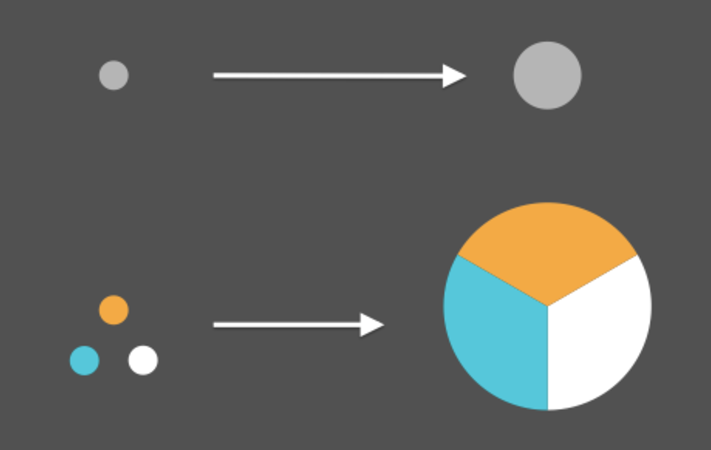

There are huge differences in the importance and neglectedness of different issues, which don’t seem fully offset by differences in tractability. This means that by choosing a different issue, you might be able to increase how much impact you have by over 100 times.
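One way to see how differences between issues could compound is to treat the three factors as multiplying together. The sketch below assumes that simple multiplicative model. The `Issue` type, the issue names, and every score are hypothetical illustrations, not estimates from this series.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Issue:
    """A hypothetical issue scored on three relative factors."""
    name: str
    importance: float     # relative size of the problem
    neglectedness: float  # relative lack of existing resources
    tractability: float   # relative ease of making progress

    def score(self) -> float:
        # Assumption: the factors combine by simple multiplication.
        return self.importance * self.neglectedness * self.tractability


# Made-up scores, for illustration only.
issue_a = Issue("Issue A", importance=100.0, neglectedness=10.0, tractability=0.5)
issue_b = Issue("Issue B", importance=1.0, neglectedness=1.0, tractability=1.0)

ratio = issue_a.score() / issue_b.score()
print(ratio)  # 500.0
```

In this made-up comparison, Issue A is only half as tractable as Issue B, yet its much larger importance and neglectedness still leave it 500 times ahead. That is the sense in which lower tractability may not fully offset the other two factors.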

The chance of a catastrophe from nuclear war, runaway climate change, or emerging technology is small in any given year, but these risks add up over our lifetimes. Read about why we think reducing these risks should probably be our biggest priority.
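To illustrate how a small annual risk accumulates, here is a minimal sketch. It assumes the annual probability is constant and independent from year to year, which is a simplification. The 0.1% figure and the function name `cumulative_risk` are purely illustrative, not estimates or tools from this series.

```python
def cumulative_risk(annual_probability: float, years: int) -> float:
    """Chance of at least one catastrophe over `years`.

    Assumes a constant, independent annual probability (a simplification).
    """
    if not 0.0 <= annual_probability <= 1.0:
        raise ValueError("annual_probability must be between 0 and 1")
    if years < 0:
        raise ValueError("years must be non-negative")
    # The probability of no catastrophe in every single year is (1 - p) ** years,
    # so the probability of at least one is the complement.
    return 1.0 - (1.0 - annual_probability) ** years


# Hypothetical input: a 0.1% annual risk over an 80-year lifetime.
lifetime_risk = cumulative_risk(0.001, 80)
print(f"{lifetime_risk:.1%}")  # 7.7%
```

Under these assumptions, a risk of one in a thousand per year becomes a chance of almost 8% over an 80-year lifetime.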

See which issues we think are most important and tractable while still being relatively neglected — meaning they offer the best opportunities to make a big difference.

4. Contribution: which career paths give you the best opportunities to tackle global problems?

You can make a bigger contribution to solving a problem by either pursuing more effective solutions or seeking greater ‘leverage’ — essentially, moving more resources towards your preferred solutions — or both. This article explores how.

Many solutions to global problems don’t have much impact, but the best are enormously effective. How taking a ‘hits-based’ approach to finding the best solutions can enable you to make a far bigger contribution.

Early in your career, we suggest focusing on building useful skills. We use the concept of ‘leverage’ to identify the most useful skills to build and explain how to test your fit and get started.

A list of ideas for high-leverage paths in which you can mobilise a lot of resources toward the best solutions to some of the world’s most pressing problems.

5. Personal fit: what are you good at?

We introduced personal fit in our career guide. Here’s some more on this topic:

If you’re considering working in paths with heavy-tailed, semi-predictable performance — like scientific research — then it could be worth switching paths to get only a small increase in relative fit. Here’s why.

This article summarises the best advice we’ve found on how to identify your strengths, turned into a three-step process.

Comparative advantage — which is related to, but different from personal fit — matters when you’re closely coordinating with a community to fill a limited number of positions.

6. Strategy: how to find your best career

If you want to do good, there are greater reasons to be ambitious and take risks. We cover four arguments about why to set up your life so you can afford to fail, and then aim as high as possible.

Through trade, coordination, and economies of scale, individuals can achieve greater impact by working together — but to take full advantage of this, you need to change how you approach your career.

It’s easy to miss a great option by narrowing down too early. And if careers differ so much in impact, it’s probably even more important to explore than people usually think.

Why doing good and being happier are more aligned than people think, and some thoughts on how to navigate the tradeoffs that arise.

If you’re going to try to have an impact, and especially if you’re going to be ambitious about it, it’s very important to carefully consider how you might accidentally make things worse.

What’s next: Speak to our team one-on-one to make your new career plan

If you’ve read our foundations series, our 1-1 team might be keen to talk to you. They can help you check your plan, reflect on your values, and maybe make connections with mentors, jobs and funding opportunities. (It’s free.)

Learn even more

We have hundreds more articles on the site. You can filter them by cause, career path, and other topics to find those that are most helpful to your situation.