Preventing catastrophic pandemics

Some of the deadliest events in history have been pandemics. COVID-19 demonstrated that we’re still vulnerable to these events, and future outbreaks could be far more lethal.

In fact, due to developments in technology, we face the possibility of biological disasters that are worse than ever before — bad enough that they could derail civilisation and threaten humanity’s future. The odds of such catastrophic pandemics seem uncomfortably high.

We believe this risk is one of the world’s most pressing problems. And because there are many practical ways to address it, we think working to prevent catastrophic pandemics is one of the most promising ways to safeguard the future of humanity.

Summary

Scale

Pandemics — especially engineered pandemics — pose a significant risk to humanity. Though the risk is difficult to assess, some researchers estimate that there is a greater than 1 in 10,000 chance of a biological catastrophe leading to human extinction within the next 100 years, and potentially as high as 1 in 100. (See below.) A biological catastrophe that doesn’t lead to extinction could still kill billions of people.

Neglectedness

Even in the aftermath of the COVID-19 outbreak, only modest resources are dedicated to pandemic prevention. In the US, for instance, spending on biodefence has only grown from an estimated $17 billion in 2019 to $27 billion in 2026.

One estimate in April 2024 found that global spending on mitigating and preventing disease outbreaks was around $130 billion, and that work specifically targeting the most catastrophic pandemics remained especially neglected.

Solvability

There are promising approaches to improve biosecurity and reduce pandemic risk, including research, policy interventions, and the development of defensive technology.

Table of Contents

- 1 Summary

- 2 Why focus your career on preventing severe pandemics?

- 2.1 Natural pandemics show how destructive biological threats can be

- 2.2 Engineered pathogens could be even more dangerous

- 2.3 Mirror bacteria illustrate the possibility of engineered catastrophic biorisks

- 2.4 Both accidental and deliberate misuse are threats

- 2.5 Overall, the risk seems substantial

- 2.6 Reducing catastrophic biological risks is highly valuable according to a range of worldviews

- 2.7 How do catastrophic biorisks compare to AI risk?

- 3 There are clear actions we can take to reduce these risks

- 4 What jobs are available?

- 5 Want to work on reducing the risk of a biological disaster? We want to help.

- 6 Learn more

Why focus your career on preventing severe pandemics?

COVID-19 highlighted the world’s vulnerability to pandemics and revealed weaknesses in our ability to respond. Despite advances in medicine and public health, around seven million deaths have been recorded from the disease worldwide, and many estimates put the figure far higher.

Historical events like the Black Death and the 1918 flu show that pandemics can be some of the most damaging disasters for humanity, killing tens of millions of people (at a time when the world’s population was less than two billion).

It is sobering to imagine the potential impact of a pandemic pathogen that is much more contagious and deadly than any we’ve seen so far.

Unfortunately, such a pathogen is possible in principle, particularly in light of advancing biotechnology. With each passing year, it becomes easier to design and create biological agents with specific features (more on this below). As the field advances, it may become increasingly feasible to engineer a pathogen that poses a major threat to all of humanity.

States or malicious non-state actors with access to these pathogens could use them as offensive weapons or wield them as threats to obtain leverage over others.

Dangerous pathogens engineered for research purposes could also be released accidentally through a failure of lab safety.

Either scenario could result in a catastrophic ‘engineered pandemic,’ which could pose an even greater threat to humanity than pandemics that arise naturally.

Thankfully, few people seek to use disease as a weapon, and even those willing to conduct such attacks may not aim to produce the most harmful pathogen possible. But the combined possibilities of accident, recklessness, desperation, and unusual malice suggest a disturbingly high chance of a pandemic pathogen being released that could kill a very large percentage of the population. The risk might be especially high during great power conflicts.

But could an engineered pandemic pose an extinction threat to humanity?

There is reasonable debate here. In the past, societies have recovered from pandemics that killed as much as 50% of the population, and perhaps more.1

But we believe future pandemics may be among the largest contributors to existential risk this century, because it now seems possible that advanced biotechnology is reaching a stage where it could be used to create pandemics that would kill more than 50% of the population — not just in a particular area, but globally. It’s possible they could be bad enough to drive humanity to extinction, or at least cause so much damage that civilisation never recovers.

Therefore, it seems extremely important to reduce the risk of biological catastrophes by constructing safeguards against potential outbreaks and preparing to mitigate their worst effects.

It is relatively uncommon for people in the broader field of biosecurity and pandemic preparedness to work specifically on reducing catastrophic risks and preventing engineered pandemics. Projects that reduce the risk of biological catastrophe also receive a relatively small proportion of health security funding.2

In our view, the most severe biological disasters are disproportionately costly because they could endanger civilisation or contribute to existential risk. This suggests that work to prevent the gravest outcomes in particular should receive more funding and attention than it currently does.

In the rest of this section, we’ll compare the risks from natural and artificial pandemics. Later on, we’ll discuss what kinds of work can and should be done in this area.

We also have a career review of biorisk research, strategy, and policy paths, which gives more specific and concrete advice about impactful roles to aim for and how to enter the field.

Natural pandemics show how destructive biological threats can be

Four of the worst pandemics in recorded history were:3

- The Plague of Justinian (541-542 CE), which is thought to have arisen in Asia before spreading into the Byzantine Empire around the Mediterranean. The initial outbreak is thought to have killed around six million (about 3% of the world population)4 and contributed to reversing the territorial gains of the Byzantine Empire.

- The Black Death (1335-1355 CE), which is thought to have killed 20–75 million people (about 10% of the global population), with profound impacts on the course of European history.

- The Columbian Exchange (1500-1600 CE) caused a succession of pandemics, likely including smallpox and paratyphoid, brought by European colonists to the Americas. These pandemics devastated Native American populations, and likely played a major role in the loss of around 80% of Mexico’s native population during the sixteenth century. Other groups in the Americas appear to have lost even greater proportions of their communities — as high as 98%.5

- The 1918 Influenza Pandemic (1918 CE) spread across most of the globe and killed 50–100 million people (2.5%–5% of the world population). It may have been deadlier than either world war.

These historical pandemics show the potential for mass destruction from biological threats, and natural pandemics of this kind are a threat worth mitigating in their own right. They also show that key features of a global catastrophe, such as high proportional mortality and severe civilisational disruption, can be driven by pandemics.

But despite the horror of these past events, it seems unlikely that a natural pandemic could be bad enough on its own to drive humanity to total extinction in the foreseeable future, given what we know of events in natural history.6

As philosopher Toby Ord argues in his book The Precipice, history suggests humanity faces a very low baseline extinction risk — the chance of being wiped out in ordinary circumstances — from natural causes.

That’s because if the baseline risk were around 10% per century, we’d have to conclude we’ve gotten very lucky for the 200,000 years or so of humanity’s existence. The fact of our existence is much less surprising if the risk has been about 0.001% per century.7
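The arithmetic behind this argument can be sketched in a few lines. This is a simplified illustration rather than Ord's own calculation: it assumes the risk is constant and independent in every century, and it treats 200,000 years as 2,000 centuries. The function name is our own.

```python
def survival_probability(risk_per_century: float, centuries: int) -> float:
    """Chance of surviving every century, assuming a constant, independent risk."""
    return (1.0 - risk_per_century) ** centuries


# Assumption: roughly 200,000 years of human existence, i.e. 2,000 centuries.
CENTURIES = 200_000 // 100

high_risk = survival_probability(0.10, CENTURIES)     # 10% per century
low_risk = survival_probability(0.00001, CENTURIES)   # 0.001% per century

print(f"10% per century:    {high_risk:.1e}")
print(f"0.001% per century: {low_risk:.3f}")
```

Under these assumptions, surviving 2,000 centuries at a 10% risk per century has a probability on the order of 10^-92, while at 0.001% per century it is about 98%.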

None of the worst plagues we know about in history was enough to destabilise civilisation worldwide or clearly imperil our species’ future. And more broadly, pathogen-driven extinction events in nature appear to be rare for animals.8

Is the risk from natural pandemics increasing or decreasing?

Are we safer from natural pandemics than we used to be? Or do developments in human society actually put us at greater risk?

Good data on these questions is hard to find. The burden of infectious disease generally in human society is on a downward trend, but this doesn’t tell us much about whether the worst mass pandemics could be getting more dangerous.

In the abstract, we can think of many reasons that the risk from naturally arising pandemics might be falling:

- We have better hygiene and sanitation than past eras, and these will likely continue to improve.

- We can produce effective vaccines and therapeutics.

- We better understand how diseases spread, and how they affect the body.

- The human population is healthier overall.

On the other hand:

- Trade and air travel allow much faster and wider transmission of disease.9 For example, air travel seems to have played a large role in the spread of COVID-19 from country to country.10 In previous eras, the difficulty of travelling over long distances likely kept disease outbreaks more geographically confined.

- Climate change may increase the likelihood of new zoonotic diseases.

- Greater human population density may increase the likelihood that diseases will spread rapidly.

- Domestic animals are far more populous, which creates more opportunities for them to develop diseases and pass them on to humans.

There are likely many other relevant considerations. Our guess is that natural pandemics are becoming more frequent, but also much less damaging, such that overall danger will be lower.11 However, there are still open questions.

Engineered pathogens could be even more dangerous

Even if natural pandemic risks are declining, the risks from engineered pathogens are almost certainly growing.

This is because advancing technology makes it increasingly feasible to create threatening viruses and infectious agents.12 Accidental and deliberate misuse of this technology is a credible global catastrophic risk and could potentially threaten humanity’s future.

One way this could play out is if some dangerous actor wanted to bring back catastrophic outbreaks of the past.

Polio, the 1918 pandemic influenza strain, and most recently horsepox (a close relative of smallpox) have all been recreated from scratch. The genetic sequences of these pathogens and others are publicly available, and the progress and proliferation of biotechnology opens up terrifying opportunities.13

Beyond the resurrection of past plagues, advanced biotechnology could let someone engineer a pathogen more dangerous than those that have occurred in natural history.

When viruses evolve, they aren’t naturally selected to be as deadly or destructive as possible. But someone who is deliberately trying to cause harm could intentionally optimise the harmful features of a virus in a way that is very unlikely to happen naturally.

Gene sequencing, editing, and synthesis are becoming easier and cheaper over time. We’re getting closer to being able to produce biological agents the way we design and produce computers or other products. This may allow people to design and create pathogens that are deadlier or more transmissible, or perhaps have wholly new features.

All the technologies involved have potential medical uses in addition to hazards. For example, viral engineering has been employed in gene therapy and vaccines (including some used to combat COVID-19). Yet knowledge of how to engineer viruses to be better as vaccines or therapeutics could be misused to develop ‘better’ biological weapons. Properly handling these advances involves a delicate balancing act.

Like new technology, new knowledge has mixed effects. Scientists are investigating what makes pathogens more or less lethal and contagious, which may help us better prevent and mitigate outbreaks. But this also means that the information required to design more dangerous pathogens is increasingly available.

Hints of these dangers can be seen in the scientific literature. Gain-of-function experiments with influenza suggested that artificial selection could lead to pathogens with properties that enhance their danger.14 And the scientific community has yet to establish strong enough norms to discourage and prevent the unrestricted sharing of dangerous findings, such as methods for making a virus deadlier. That’s why we warn people going to work in this field that biosecurity involves information hazards. It’s essential that the people handling these risks have good judgement.

Scientists can also make dangerous discoveries unintentionally in lab work. For example, vaccine research can uncover virus mutations that make a disease more infectious. And other areas of biology, such as enzyme research, show how our advancing technology can unlock new and potentially threatening capabilities that haven’t appeared before in nature.15

So while the march of science brings great progress, it also brings the potential for bad actors to intentionally produce new or modified pathogens. Even with the vast majority of scientific expertise focused on benefiting humanity, a much smaller group can use scientific advances to do great harm.

If someone or some group has enough motivation, resources, and technical skill, it’s difficult to place an upper limit on how deadly a pandemic they might one day create. As technology progresses, the tools for creating a biological disaster will become increasingly accessible, and the barriers to achieving terrifying results may get lower and lower — raising the risk of a major attack. (AI technology is especially threatening — read more about this below.)

Mirror bacteria illustrate the possibility of engineered catastrophic biorisks

In December 2024, a working group of 38 scientists, including Nobel laureates, warned in a new report of a potentially catastrophic biorisk – “mirror life.”16

Mirror life could function much like ordinary life, but at the molecular level, it would be crucially different. Here’s why: the DNA, RNA, and amino acids that make up life on Earth have a specific chirality. This means, for example, that DNA is not identical to its mirror image. Just as you can’t replace a left-handed glove with a right-handed glove, you couldn’t replace the DNA in your cells with mirror DNA. It wouldn’t match the other molecular structures it needs to interact with. DNA, in particular, is right-handed, and proteins are made from left-handed amino acids.17

Scientists could theoretically create organisms identical to ordinary life, except that all of these key building blocks are made with mirror-image molecules. Creating some mirror-image molecules is already possible, but synthesising complete mirror-image organisms would be much trickier. And they don’t exist anywhere in nature that we know of.

Researchers have taken steps toward creating mirror cells, such as mirror bacteria. This isn’t yet possible, but it might become possible in less than a decade.

The working group of scientists, including many of the people who had been working towards creating mirror bacteria, argued that doing so would be extremely dangerous. This is because:

- Mirror bacteria could potentially evade immune defence systems and cause lethal infections in humans, animals, and plants.

- Mirror bacteria would have few natural predators and might become an invasive species if released.

- Mitigating the harms would be extremely challenging.

This could trigger a global catastrophe, causing mass extinctions and ecological collapse.

“Living in an area contaminated with mirror bacteria could be similar to living with severe immunodeficiencies,” one of the scientists explained. “Any exposure to contaminated dust or soil could be fatal.”

This is a complex topic, and the science is still new. But the working group released a detailed technical report making the case that creating mirror organisms could lead to a global catastrophe, and that we should avoid this line of research unless it is shown to be safe – especially because the potential benefits seem minimal compared to the risks, and can likely be achieved through safer means.

The Mirror Biology Dialogues Fund is one of the primary groups working on this problem, aiming to advance the conversation about these risks.

The threat of mirror life seems worth understanding better at a minimum, and it has some chance of being the most dangerous currently known biorisk. And it illustrates the key point raised in the previous section: new discoveries and technology could unlock risks that would otherwise not exist.

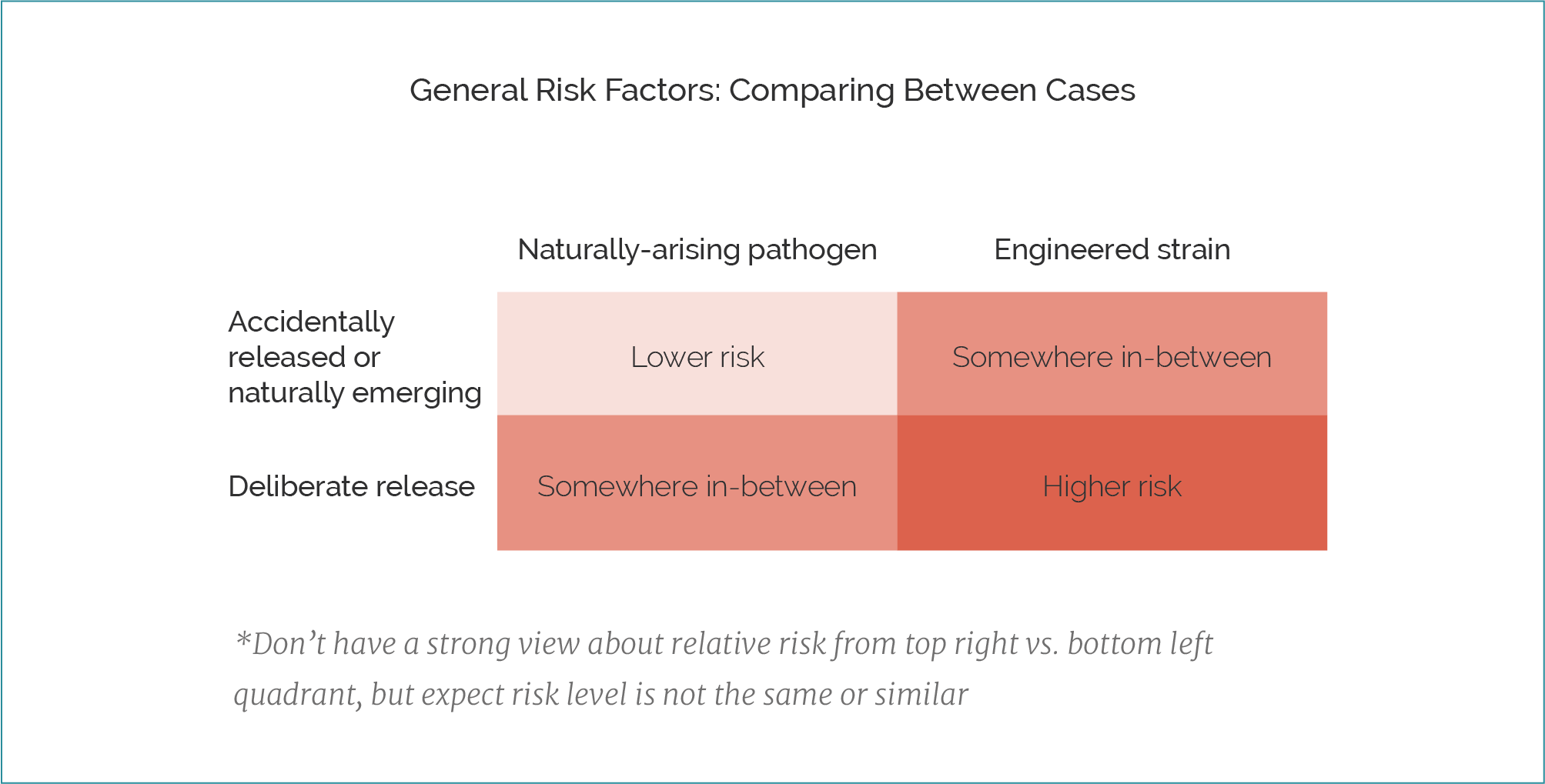

Both accidental and deliberate misuse are threats

We can divide the risks of artificially created pandemics into accidental and deliberate misuse; imagine a science experiment gone wrong compared to a bioterrorist attack.

The history of accidents and lab leaks which exposed people to dangerous pathogens is chilling:

- In 1977, an unusual flu strain emerged that disproportionately sickened young people and was found to be genetically frozen in time from a 1950 strain, consistent with a lab origin from a faulty vaccine trial (see this recent paper for more).

- In 1978, a lab leak at a UK facility resulted in the last smallpox death, a year after the disease had been eradicated in nature.

- In 1979, an apparent bioweapons lab in the USSR accidentally released anthrax spores that drifted over a town, sickening residents and animals and killing about 60 people. Though the incident was initially covered up, Russian President Boris Yeltsin later revealed it was an airborne release from a military lab accident.

- In 2014, dozens of CDC workers were potentially exposed to live anthrax after samples meant to be inactivated were improperly killed and shipped to lower-level labs that didn’t always use proper protective equipment.

We don’t know how often this kind of thing happens because lab leaks are not consistently tracked. And there have been many more close calls.

History has seen many terrorist attacks and state development of mass-casualty weapons. Incidents of bioterrorism and biological warfare include:

- In 1763, British forces at Fort Pitt gave blankets from a smallpox ward to Native American tribes, aiming to spread the disease and weaken these communities. It’s unclear if this effort achieved its aims, though smallpox devastated many of these groups during the colonial era.

- During World War II, the Japanese military’s Unit 731 conducted horrific human experiments and biological warfare in China. They used anthrax, cholera, and plague, killing thousands and potentially many more.

- In the 1980s and early 1990s, the South African government developed a covert chemical and biological warfare programme known as Project Coast. The programme aimed to develop biological and chemical agents targeted at specific ethnic groups and political opponents, including efforts to develop sterilisation and infertility drugs.

- In 1984, followers of the Rajneesh movement contaminated salad bars in Oregon with Salmonella, causing more than 750 infections, in an attempt to influence an upcoming election.

- In 2001, anthrax spores were mailed to several news outlets and two US Senators, causing 22 infections and five deaths.

So should we be more concerned about accidents or bioterrorism? We’re not sure. There’s not a lot of data, and considerations pull in both directions.

Releasing a deadly pathogen on purpose seems more concerning. There are multiple reasons we’d expect an intentional release to be more harmful:

- As discussed earlier, the worst pandemics would likely be intentionally created rather than emerge by chance.

- Someone who creates one pathogen may go on to create more, further increasing the potential for catastrophic harm.

- There are ways to make a pathogen’s release especially harmful (e.g. spreading it in a crowded population centre), and an accidental release wouldn’t be optimised for maximum damage.

On the other hand, a disastrous accident seems more likely than a mass biological attack. Most people are well-intentioned and want to use biotechnology to help the world rather than harm it. And efforts to eliminate state bioweapons programmes likely reduce the number of potential attackers. (But see more about the limits on these efforts below.)

We guess that, all things considered, the former considerations are the more significant factors.18 So we suspect that deliberate misuse is more dangerous than accidental releases, though both are certainly worth guarding against.

Overall, the risk seems substantial

We’ve seen a variety of estimates regarding the chances of an existential biological catastrophe, including the likelihood of an engineered pandemic.20 Perhaps the best estimates come from the Existential Risk Persuasion Tournament (XPT).

This project involved getting groups of both subject matter experts and experienced forecasters to estimate the likelihood of extreme events. For biological risks, the ranges of median estimates between forecasters and domain experts were as follows:

- Catastrophic event (meaning an event in which 10% or more of the human population dies) by 2100: ~1–3%

- Human extinction event: 1 in 50,000 to 1 in 100

- Genetically engineered pathogen killing more than 1% of the population by 2100: 4–10%21
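To compare these ranges on a single scale, the "1 in N" odds can be converted to percentages. The snippet below is a minimal sketch of that conversion; the helper name is our own and the inputs are simply the figures listed above.

```python
def odds_to_percent(one_in: float) -> float:
    """Convert '1 in N' odds to a percentage."""
    return 100.0 / one_in


# Range of median estimates for a human extinction event, as listed above.
extinction_low = odds_to_percent(50_000)
extinction_high = odds_to_percent(100)

# Ratio between the two ends of the range.
spread = 50_000 / 100

print(f"Human extinction event: {extinction_low:.3f}% to {extinction_high:.0f}%")
print(f"Ratio between the ends of the range: {spread:.0f}x")
```

On this scale, the extinction range runs from 0.002% to 1%, a 500-fold difference between the lowest and highest median estimates.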

Although they are the best available figures we’ve seen, these numbers have plenty of caveats. The main three are:

- There is little evidence that anyone can achieve long-term forecasting accuracy. Previous forecasting work has assessed performance for questions that would resolve in months or years, not decades.

- There was a lot of variation in estimates within and between groups. Some respondents gave numbers many times, or even many orders of magnitude, higher than other respondents.22

- The domain experts were selected from among those already working on catastrophic risks. Other experts in those domains might generally rate extreme risks lower. In general, the forecasters tended to have lower estimates of the risk than the domain experts.

It’s hard to be confident about how to weigh up these different kinds of estimates and considerations, and we think reasonable people will come to different conclusions.

Our view: Given how bad a catastrophic pandemic would be, the fact that there seem to be few limits on how destructive an engineered pandemic could be, and how broadly beneficial mitigation measures are, many more people should be working on this problem than currently are.

Reducing catastrophic biological risks is highly valuable according to a range of worldviews

Because we prioritise problems that could have a significant impact on future generations, we care most about work that will reduce the biggest biological threats — especially those that could cause human extinction or derail civilisation.

But biosecurity and catastrophic risk reduction could be highly impactful for people with a range of worldviews, because:

- Catastrophic biological threats would harm near-term interests too. As COVID-19 showed, large pandemics can bring extraordinary costs to people today. Even more virulent or deadly diseases would cause even greater death and suffering.

- Interventions that reduce the largest biological risks are also often beneficial for preventing more common illnesses. Disease surveillance can detect both large and small outbreaks; counter-proliferation efforts can stop both higher- and lower-consequence acts of deliberate misuse; better PPE could prevent any kind of infection; and so on.

There is also substantial overlap between biosecurity and other problems, such as global health (e.g. the Global Health Security Agenda), factory farming (e.g. ‘One Health’ initiatives), and AI.

How do catastrophic biorisks compare to AI risk?

Many of those who study existential risks believe that the greatest risks come from biology and AI. Our guess is that threats from catastrophic pandemics are somewhat less pressing than threats stemming from advanced AI systems.

But they’re probably not massively less pressing.

One feature of a problem that makes it more pressing is the existence of tractable solutions to work on. The more tractable solutions there are, the more your effort working on the problem actually helps others. Catastrophic biorisk seems relatively tractable because:

- The fields of public health and biosecurity are larger and better-established than the fields of AI safety and governance.23

- The sciences of disease and medicine are well-established, while AI safety includes multiple competing paradigms with few points of universal consensus.

- There is much less uncertainty and expert disagreement about how to address the problem; we know of several concrete biosecurity interventions that are very likely to reduce catastrophic risk.

The existence of this infrastructure in the biosecurity field may make the work more tractable, but it also makes it arguably less neglected — which would make it less pressing.

In 2023, interest in AI safety and governance began to grow rapidly, making these fields somewhat less neglected than they had been previously. But they’re still relatively neglected compared to the field of biosecurity. Since we view more neglected problems as more pressing, this factor counts in favour of working on AI risk.

We also consider problems that are larger in scale to be more pressing. We might measure the scale of the problem purely in terms of the likelihood of causing human extinction or a comparably bad outcome. 80,000 Hours assesses the risk of an AI-caused existential catastrophe to be higher than that of an existential catastrophe caused by biorisk. At the same time, AI risk is more speculative than the risk from pandemics, because we know from direct experience that pandemics can be deadly on a large scale. So some people investigating these questions find biorisk to be a much more plausible threat.

But in most cases, which problem you choose to work on shouldn’t be determined solely by your view of how pressing it is (though this does matter a lot!). You should also take into account your personal fit and comparative advantage. So if you find biorisk much more motivating or you have a bio background, it could be more impactful for you to work on biorisk even if the risks are lower and the issue is less neglected overall.

Finally, a note about how these issues relate:

- AI progress may be increasing catastrophic biorisk. Some researchers believe that advancing AI capabilities may increase the risk of a biological catastrophe. Jonas Sandbrink, for example, has argued that advanced large language models may decrease the barriers to creating dangerous pathogens; researchers at SecureBio and the Center for AI Safety have found that AI models outperform most expert virologists on tests involving complex laboratory protocols.24 AI biological design tools could also eventually enable sophisticated actors to cause even more harm than they otherwise would.

- Biorisk could leave us more vulnerable to power-seeking AI systems. An engineered pathogen could be designed and deployed by an AI with relatively few resources (compared to what it would need to, say, launch a nuclear missile). As such, an AI system that escapes human control could use pathogens as a threat to negotiate with humanity, or as weapons of war to weaken human civilisation and seize power. (This idea appears in AI 2027, an influential essay showing how an AI takeover might unfold.)

- There is policy overlap between reducing biorisk and AI risk. Both require balancing the risk and reward of emerging technology, and the policy skills needed to succeed in these areas are similar. You could pursue a career to reduce risks in both areas at once.

There are clear actions we can take to reduce these risks

The broader field of biosecurity and pandemic preparedness has made major contributions to reducing catastrophic risks. Many of the best ways to prepare for more probable but less severe outbreaks will also reduce the worst risks.

For example, if we develop broad-spectrum vaccines and therapeutics to prevent and treat a wide range of potential pandemic pathogens, this will be widely beneficial for public health and biosecurity. But it also decreases the risk of the worst-case scenarios we’ve been discussing — it’s harder to launch a catastrophic bioterrorist attack on a world that is prepared to protect itself against the most plausible disease candidates. And if any state or other actor who might consider manufacturing such a threat knows the world has a high chance of being protected against it, they have less reason to try in the first place.

But if your focus is preventing the worst outcomes, you may want to focus on particular interventions.

Some experts in this area, such as MIT biologist Kevin Esvelt, believe that the best interventions for reducing the risk from human-made pandemics will come from the world of physics and engineering, rather than biology.

This is because for every biological countermeasure to pandemic risk, such as a vaccine, there may be tools in the biological sciences that can be used to overcome it, just as viruses can evolve to evade vaccine-induced immunity.

How to reduce biorisk

Leading biosecurity experts have proposed several strategies to significantly reduce the risk of engineered pandemics. These approaches have substantial support among researchers in the field, and could be extremely cost-effective.

We illustrate three of the most promising ideas below, and finish with a list of other suggestions.

1. Improve and implement pathogen detection technology

At present, we mostly notice pathogens by tracking the impact of their symptoms — patients entering hospitals, students absent from school, etc. But many experts are worried about the possibility of a “stealth” pathogen, which could evade these methods by spreading quietly without symptoms, only to cause severe harm years later. (For example, someone living with HIV can be both infectious and asymptomatic for a decade or more.)

To catch a stealth pathogen, we need detection strategies that don’t rely on symptoms. As a bonus, these can still be useful if symptoms manifest quickly; spotting a pathogen even a few days earlier can help us drastically reduce how far it spreads.

Metagenomic sequencing is one promising strategy for pathogen detection. Rather than testing for specific known pathogens, it sequences all of the genetic material in a sample. With enough samples, sequencing, and computing power, even very rare genomes can be detected and flagged (e.g. by looking for genomes that show signs of having been modified, or that are becoming more prevalent over time).
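To make the "becoming more prevalent over time" idea concrete, here is a toy sketch. It is not how SecureBio or any real biosurveillance pipeline works. It assumes we already have read counts per sequence for a series of equally spaced samples, and it flags sequences whose share of total reads is growing roughly exponentially. The function names, the pseudocount, the threshold, and the example data are all hypothetical.

```python
import math
from typing import Dict, List


def growth_rate(counts: List[int], totals: List[int], pseudocount: float = 0.5) -> float:
    """Least-squares slope of log relative abundance against sample index.

    Needs at least two samples. The pseudocount avoids log(0) for absent sequences.
    """
    logs = [math.log((c + pseudocount) / t) for c, t in zip(counts, totals)]
    n = len(logs)
    mean_x = (n - 1) / 2
    mean_y = sum(logs) / n
    numerator = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(logs))
    denominator = sum((i - mean_x) ** 2 for i in range(n))
    return numerator / denominator


def flag_rising(samples: Dict[str, List[int]], totals: List[int],
                min_rate: float = 0.3) -> List[str]:
    """Return the names of sequences whose growth rate meets the threshold."""
    return sorted(name for name, counts in samples.items()
                  if growth_rate(counts, totals) >= min_rate)


# Hypothetical data: six samples with one million reads each.
totals = [1_000_000] * 6
samples = {
    "seq_a": [2, 3, 5, 9, 16, 30],       # rare, but rising steadily
    "seq_b": [40, 38, 42, 41, 39, 40],   # more common, but stable
}

flagged = flag_rising(samples, totals)
print(flagged)
```

In this example the rare but fast-growing sequence is flagged while the more abundant, stable one is not, which is the property that matters for early warning. A real system would also need to handle sequencing noise, uneven sampling, and the very large number of sequences being tested at once.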

Frequent metagenomic sequencing requires a steady supply of new samples. Approaches we’ve seen include testing municipal wastewater and collecting nasal swabs from city-dwellers.

You could get involved in this area by:

- Working for SecureBio, which focuses on early detection of novel pathogens via metagenomic sequencing in human-derived samples. Its work includes testing collection methods, refining computational algorithms, and setting up detection systems in cities.

- Conducting research on pathogen detection in an academic setting. World leaders include the Sabeti Lab (MIT/Harvard) and PETAL (UC Berkeley).

- Working on biosurveillance from within a national or city government. For example:

  - The 2026 US federal budget provides funding to develop a Biothreat Radar Detection System, which SecureBio believes could, with the right setup, provide reliable early detection throughout the US.

  - The UK is currently piloting mSCAPE, which analyses data from diagnostic labs across the country to monitor trends and pathogen emergence.

- Promoting policies to support detection research and infrastructure, like the EU Biotech Act.

- Starting a new organisation to work on early detection; there are many promising ideas that remain underexplored. (If you’re considering this option, we recommend reaching out to SecureBio.)

2. Scale up PPE production and stockpiling

Medical countermeasures only work against specific pathogens. Flu vaccines are reformulated each year to address new strains, and are still only partially effective; “superbugs” like MRSA have evolved to resist antibiotics and are more dangerous as a result.

In theory, someone could design a pathogen that evades all known medical countermeasures. To guard against this threat, we need methods that could work against any engineered pathogen. Take personal protective equipment (PPE) — masks, gloves, and other physical barriers. Pathogens must infect us to cause harm, so stopping germs from entering our bodies should be universally effective.25 PPE isn’t a permanent solution, but it can protect essential workers and keep society running, buying time for scientists to develop therapeutics.

Andrew Snyder-Beattie posits that elastomeric respirators are a “sweet spot” for PPE. These devices typically filter out 99% of incoming particles, cost less than $50, can use the same filter for six months before replacement, and can last more than 10 years in storage.

But even the best PPE is useless if it isn’t available when needed. In the early days of COVID-19, PPE shortages forced many people to work in risky conditions without protection. We haven’t learned from this mistake: right now, many countries have inadequate PPE stockpiles. If a pandemic struck tomorrow that was much deadlier than COVID, many essential workers might stay home, leading to food shortages and understaffed hospitals.

Researchers at Blueprint Biosecurity have modelled the cost of stockpiling enough elastomeric respirators to provide global coverage for essential workers. Maintaining these stockpiles for 20 years would cost around $50 billion — orders of magnitude less than the expected annual cost of a major pandemic — and provide meaningful protection even against a novel engineered pathogen.26
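To make the scale of that comparison concrete, here is a small back-of-the-envelope calculation. It uses only two figures from this article: the roughly $50 billion, 20-year stockpile cost above, and the estimate in the notes that COVID-19 cost the global economy well over $10 trillion. The $10 trillion figure is a total cost for one pandemic rather than an expected annual cost, so treat this as a rough, conservative point of comparison and not as Blueprint Biosecurity’s own model.

```python
import math

# Figures taken from the article; everything derived below is illustrative.
STOCKPILE_COST_USD = 50e9   # approx. cost of maintaining stockpiles for 20 years
STOCKPILE_YEARS = 20
COVID_COST_USD = 10e12      # lower bound: "well over $10 trillion"

annual_stockpile_cost = STOCKPILE_COST_USD / STOCKPILE_YEARS
cost_ratio = COVID_COST_USD / STOCKPILE_COST_USD
orders_of_magnitude = math.log10(cost_ratio)

# Fraction of a COVID-scale loss the stockpile would need to avert
# over its 20-year life in order to pay for itself.
break_even_fraction = STOCKPILE_COST_USD / COVID_COST_USD

print(f"Annualised stockpile cost: ${annual_stockpile_cost / 1e9:.1f} billion")
print(f"COVID-19 cost / stockpile cost: {cost_ratio:.0f}x")
print(f"Orders of magnitude apart: {orders_of_magnitude:.1f}")
print(f"Break-even share of a COVID-scale loss: {break_even_fraction:.1%}")
```

On these numbers, the whole 20-year programme costs about 0.5% of the lower-bound estimate for COVID-19 alone, which is a gap of a little over two orders of magnitude.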

Working to support the production and distribution of PPE could be a highly impactful way to reduce catastrophic biorisk. You might find an engineering or manufacturing role to develop cheaper and better PPE, a policy role advocating for governments to build stockpiles, or a government position where you can work on procurement and supply chain planning.

(Note that all these roles generally require engineering and/or managerial skill, rather than biology expertise.)

3. Restrict access to information and materials for building engineered pathogens

Building an engineered pathogen requires both a blueprint and the means to execute it. Kevin Esvelt argues that both of these steps are too easy to carry out:

- Researchers regularly conduct experiments that could demonstrate whether certain viruses could cause a pandemic — for example, measuring their ability to infect and grow within human cells, or their transmissibility between animal models.

- Meanwhile, the price of DNA synthesis has plummeted: someone who wants to reconstruct or engineer a pathogen can buy the requisite materials and equipment for less than $50,000. Some DNA synthesis providers conduct screening and background checks before delivering orders — but some of them do not.

Advanced AI systems can accelerate both problems:

- Biological AI models (BAIMs) include protein language models (pLMs), which are trained on large databases of protein sequences and are highly effective at proposing and refining protein sequences with specific properties. This helps researchers identify which mutations might enhance dangerous properties such as immune evasion or transmissibility. pLMs can be combined with automated laboratory platforms to speed up the design-build-test-learn cycle by reducing the amount of experimental work human scientists need to do manually. (For now, this is mostly useful to people who are already highly skilled.)

- pLMs aren’t the only concern. Other BAIMs have been used to design new bacteriophage viruses, which differed substantially from anything found in nature and were more efficient at killing bacteria than their natural competitors. Studies have shown that LLMs can provide accurate guidance on recovering live poliovirus from a synthetic DNA construct, and that customized LLM agents with access to specialized (but public) databases could design new DNA sequences.

- Sequences may not be flagged by screening systems. Multiple experiments have demonstrated ways to evade these systems, whether by creating novel variants of dangerous proteins or ordering harmless fragments that can be used to assemble dangerous sequences.

While AI can make pathogens easier to build and test, and harder to detect,27 we could address these problems by:

- Training AI systems not to generate dangerous sequences.28

- Improving our ability to screen DNA synthesis orders (both the materials being purchased and the buyer’s identity).

- Requiring safety certification for benchtop synthesisers.

- Limiting access to the most powerful biological models, as well as pathogen data that could be used to train dangerous capabilities.
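To give a feel for what screening “both the materials being purchased and the buyer’s identity” can mean in the simplest case, here is a toy sketch. It checks an order for exact shared subsequences with a list of sequences of concern, then falls back to a customer check. Everything in it is an assumption made for illustration: the function names, the window length, the decision labels, and the placeholder string (an arbitrary run of letters with no biological meaning). It is not how SecureDNA or any synthesis provider screens orders, and, as noted above, exact matching of this kind can be evaded by novel variants or by splitting an order into fragments.

```python
def kmers(sequence, k):
    """All length-k windows of a sequence (empty set if the sequence is shorter than k)."""
    sequence = sequence.upper()
    return {sequence[i:i + k] for i in range(len(sequence) - k + 1)}


def build_index(sequences_of_concern, k):
    """Map each k-mer to the names of the listed sequences that contain it."""
    index = {}
    for name, sequence in sequences_of_concern.items():
        for kmer in kmers(sequence, k):
            index.setdefault(kmer, set()).add(name)
    return index


def screen_order(order_sequence, index, k, customer_verified):
    """Return (decision, matched_names) for a single order."""
    matches = set()
    for kmer in kmers(order_sequence, k):
        matches |= index.get(kmer, set())
    if matches:
        decision = "hold_for_review"    # sequence overlaps a listed entry
    elif not customer_verified:
        decision = "verify_customer"    # sequence looks fine, buyer unknown
    else:
        decision = "approve"
    return decision, sorted(matches)


K = 12  # hypothetical window length
# Arbitrary placeholder letters, NOT a real sequence of concern.
PLACEHOLDER_SEQUENCES = {"placeholder_A": "ACGTTGCAAGGCTTACCGATAGGCTAACGT"}
index = build_index(PLACEHOLDER_SEQUENCES, K)

overlapping_order = "TTTT" + PLACEHOLDER_SEQUENCES["placeholder_A"][5:25] + "GGGG"
unrelated_order = "GGGGCCCCAAAATTTTGGGGCCCCAAAATTTT"

print(screen_order(overlapping_order, index, K, customer_verified=True))
print(screen_order(unrelated_order, index, K, customer_verified=False))
print(screen_order(unrelated_order, index, K, customer_verified=True))
```

The design choice worth noticing is that a sequence match always takes priority over the customer check: a verified buyer does not make a flagged order safe to ship without review.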

You could contribute to this work through roles in biosecurity governance (either in government or at orgs like the Nuclear Threat Initiative or Center for Health Security), technical work at organisations like SecureDNA and SecureBio, or ML research focused on AI-biology safeguards (which takes place in university labs, as well as certain frontier AI companies and AI safety institutes).

Other ways to contribute

Beyond the three areas above, you could:

- Work with government, academia, industry, and international organisations to improve the governance of gain-of-function research involving potential pandemic pathogens, commercial DNA synthesis, and other research and industries that may enable the creation of (or expand access to) particularly dangerous engineered pathogens.

- Strengthen international commitments to not develop or deploy biological weapons, e.g. the Biological Weapons Convention.

- Carry out ‘horizon scanning’ work to find biorisks that are currently unknown or overlooked. This kind of work led to one of the biggest biosecurity wins in recent years — the realisation that mirror life could be very dangerous. However, it also runs the risk of uncovering infohazards, and should be handled carefully.

- Develop other technologies that can mitigate or detect pandemics,29 including:

  - Broad-spectrum testing, therapeutics, and vaccines, along with ways to create, manufacture, and distribute them quickly in an emergency.30

  - Other mechanisms for impeding high-risk disease transmission, such as far-UVC light or glycol vapours.

What jobs are available?

Biosecurity and pandemic preparedness are multidisciplinary fields. To address these threats effectively, we need a range of approaches, including:

- Technical and biological researchers to investigate and develop tools for controlling outbreaks.

- Entrepreneurs and industry professionals to build and deploy these tools.

- Strategic researchers and forecasters to develop plans.

- People in government to pass and implement policies aimed at reducing biological threats.

For our full article on pursuing work in biosecurity, you can read our pandemic prevention career review. Notably, while some careers in the field require a scientific background, there are also many ways for people to contribute using other skills, from operations to entrepreneurship.

If you want to focus on catastrophic pandemics in the biosecurity world, it might be easier to work on broader efforts that have more mainstream support first and then transition to more targeted projects later. If you are already working in biosecurity and pandemic preparedness (or a related field), you might want to advocate for a greater focus on measures that reduce risk robustly across the board, including in worst-case scenarios.

The world could be doing a lot more to reduce the risk of natural pandemics on the scale of COVID-19. It might be easiest to push for interventions targeted at this threat before looking to address the less likely but more catastrophic possibilities. On the other hand, potential attacks or perceived threats to national security often receive disproportionate attention from governments compared to standard public health threats, so there may be more opportunities to reduce risks from engineered pandemics under some circumstances.

To get a sense of what kinds of roles you might take on, you can:

- Read our career review on pandemic prevention and biosecurity

- Fill out this expression of interest form from the biosecurity team at Coefficient Giving, a grantmaker looking to bring more people into the field.

- Check out our job board (see below — not comprehensive, but a good place to start).

Our job board features opportunities in biosecurity and pandemic preparedness:

Want to work on reducing the risk of a biological disaster? We want to help.

We’ve helped people formulate plans, find resources, and get in touch with mentors. If you want to work in this area, apply for our free one-on-one advising service.

Learn more

Top recommendations

- The Founders Pledge report on global catastrophic biological risks by Christian Ruhl

- The AI Risk Explorer’s content on AI-driven biorisk

- Richard Williamson’s Pandemic prevention as fire-fighting

- Anonymous answers: What are the biggest misconceptions about biosecurity and pandemic prevention?

- Anonymous answers: What are the best ways to fight the next pandemic?

- Podcast: Richard Moulange on how AI designs genomes from scratch & outperforms virologists at lab work — what could go wrong?

- Podcast: Andrew Snyder-Beattie on the low-tech plan to patch humanity’s greatest weakness

- Podcast: James Smith on why he quit everything to work on a biothreat nobody had heard of

Further recommendations

Resources for general pandemic preparedness

- The GCBR Organization Updates newsletter

- The characteristics of pandemic pathogens from CHS (2018)

- The UK Government’s biological security strategy (2025 update)

- Some good online sources to keep abreast of developments in the field (primarily from a US perspective) are the GMU Pandora report and the CHS mailing list.

- Reports from Blueprint Biosecurity: Blueprint for far-UVC and Towards a theory of pandemic-proof PPE

- The Biodefense budget breakdown by the Council on Strategic Risks

Other resources

- For sceptical perspectives on the case for extreme risks from engineered pandemics, you can read a series of posts by David Thorstad, or the more recent Omniscience is not omnipotence.

- The International Biosecurity and Biosafety Initiative (IBBIS) works to strengthen international biosecurity norms, and develop tools and incentives to uphold them.

- What were the death tolls from pandemics in history? from Our World in Data

- A set of classic papers in the field, though they may be outdated in some respects:

- Engineered pathogens: the opportunities, risks and challenges by Cassidy Nelson in The Biochemist (2019)

- Horsepox synthesis: a case of the unilateralist’s curse? by Gregory Lewis in The Bulletin of the Atomic Scientists (2019)

- Information hazards in biotechnology by Gregory Lewis, Piers Millett, Anders Sandberg, Andrew Snyder-Beattie, and Gigi Gronvall (2019)

- Bridging health and security sectors to address high-consequence biological risks by Cassidy Nelson and Michelle Nalabandian (2019)

Career resources

- The Emerging Leaders in Biosecurity Fellowship at CHS

- Marc Lipsitch on choosing a graduate programme or postdoctoral fellowship

- A list of promising places to work, study, and network

- A list of experts you could potentially try to work with

- US biosecurity policy resources, think tanks, and fellowships

- The Engineering Biology Research Consortium maintains a list of biosecurity opportunities and provides resources and programs on bioengineering work more broadly.

Notes and references

- Luke Muehlhauser’s writeup on the Industrial Revolution, which also discusses some of the deadliest events in history, reviews the evidence on the Black Death, as well as other outbreaks. Muehlhauser’s summary: “The most common view seems to be that about one-third of Europe perished in the Black Death, starting from a population of 75–80 million. However, the range of credible-looking estimates is 25–60%.”↩

- Gregory Lewis, one of the contributors to this piece, has previously estimated that a quality-adjusted ~$1 billion is spent annually on global catastrophic biological risk reduction. Most of this comes from work that is not explicitly targeted at GCBRs but is still disproportionally useful for reducing them.

For the most up-to-date analysis of biodefence spending in the US we’ve seen, check out the Biodefense budget breakdown from the Council on Strategic Risks.↩

- All of the impacts of the cases listed are deeply uncertain, as:

- Vital statistics range from at best very patchy (1918) to absent. Estimates of historical populations are very imprecise (let alone estimates of their mortality rates or the mortality attributable to a given outbreak).

- Proxy indicators (e.g. historical accounts, archaeology) have very poor resolution, leaving a lot to educated guesswork and extrapolation.

- Attribution of the historical consequences of an outbreak is highly contestable. Other events can offer competing explanations.

Although these factors add ‘simple’ uncertainty, we would guess academic incentives and selection effects introduce a bias towards overestimates for historical cases. For this reason, we used Muehlhauser’s estimates for ‘death tolls’ (generally much more conservative than typical estimates, such as ‘75–200 million died in the Black Death’), and reiterate that the possible historical consequences are ‘credible’ rather than confidently asserted.

For example, it’s not clear the plague of Justinian should be on the list at all. Mordechai et al. (2019) survey the circumstantial archaeological data around the time of the Justinian Plague and find little evidence of a discontinuity suggestive of a major disaster: papyri and inscriptions suggest stable rates of administrative activity, and pollen measures suggest stable land use. They also offer reasonable alternative explanations for measures that did show a sharp decline. For instance, new laws declined during the ‘plague period’, but this could be explained by government efforts at legal consolidation having coincidentally finished beforehand.

Even if one takes the supposed impacts of each outbreak at face value, each has features that may disqualify it as a ‘true’ global catastrophe. The first three, although afflicting a large part of humanity, left another large part unscathed (the Eurasian and American populations were effectively separated). The 1918 flu had a very high total death toll and global reach, but lower proportional mortality and relatively limited historical impact. And while the Columbian Exchange had a high proportional mortality and a crippling impact on the affected civilisations, it had comparatively little effect on global population.↩

- As with all instances of ‘historical epidemiology,’ the precise death toll of the Justinian plague is very hard to establish; note, for example, this recent study suggesting a much lower death toll. Luke Muehlhauser ably discusses the issue here. Others might lean towards somewhat higher estimates given the circumstantial evidence for an Asian origin of this plague (and thus possible impact on Asian civilisations in addition to Byzantium), but with such wide ranges of uncertainty, quibbles over point estimates matter little.↩

- It may have contributed to subsequent climate changes like the ‘Little Ice Age.’↩

- There might be some debate about what counts as a pandemic arising ‘naturally.’ For instance, if a pandemic only occurs because climate change shifted the risk landscape, or because air travel allowed a virus to spread more widely than it would have had planes never been invented, it could arguably be considered a ‘human-caused’ pandemic. For our purposes, though, when we discuss ‘natural’ pandemics, we mean any pandemic that doesn’t arise from either purposeful introduction of the pathogen into the population or the accidental release of a pathogen in a laboratory or clinical setting.↩

- The evidence from such considerations isn’t overwhelming — see Manheim (2018) for a counterargument. But Manheim’s work still agrees with the central thrust of the argument in this article.

Kevin Esvelt discussed why natural pandemics are unlikely to be the most dangerous in an interview on our podcast.↩

- “We used the IUCN Red List of Threatened and Endangered Species and literature indexed in the ISI Web of Science to assess the role of infectious disease in global species loss. Infectious disease was listed as a contributing factor in [fewer than] 4% of species extinctions known to have occurred since 1500 (833 plants and animals) and as contributing to a species’ status as critically endangered in [fewer than] 8% of cases (2,852 critically endangered plants and animals).”

But note also:

“Although infectious diseases appear to play a minor role in global species loss, our findings underscore two important limitations in the available evidence: uncertainty surrounding the threats to species survival and a temporal bias in the data.”

From: Smith KF, Sax DF, Lafferty KD. Evidence for the role of infectious disease in species extinction and endangerment. Conserv Biol. 2006 Oct;20(5):1349-57 pubmed.ncbi.nlm.nih.gov/17002752/.↩

- Although this is uncertain. Thompson et al. (2019) suggest that higher rates of air travel could be protective, the mechanism being that increased population mixing allows wider spread of ‘pre-pandemic’ pathogen strains, and thus builds up cross-immunity in the global population, giving us greater protection from the subsequent pandemic strain.↩

- See, for instance, “COVID-19 and the aviation industry: The interrelationship between the spread of the COVID-19 pandemic and the frequency of flights on the EU market” by Anyu Liu, Yoo Ri Kim, and John Frankie O’Connell.↩

- One suggestive datapoint comes from an AIR Worldwide study that modelled what would happen if the 1918 influenza outbreak began today. It suggests that although the absolute number of deaths would be similar (in the tens of millions), the proportional mortality of the global population would be much lower.↩

- One could examine this using a mix of qualitative and quantitative metrics. On the former, consider the impacts of recent biotechnological breakthroughs like CRISPR-Cas9 genome editing, synthetic bacterial cells, or the Human Genome Project. Quantitatively, metrics of sequencing costs and publications show accelerating trends.↩

- Although there’s wide agreement on the direction of this effect, the magnitude is less clear. There remain formidable operational challenges to performing a biological weapon attack (well beyond the ‘in principle’ science), and historically, many state and non-state biological weapon programmes stumbled at these hurdles (Ouagrham-Gormley 2014). Biotechnological advances probably have lesser (but non-zero) effects in reducing these challenges.↩

- The interpretation of these gain-of-function experiments is complicated by the fact that the resulting strains had relatively ineffective mammalian transmission and lower pathogenicity, although derived from highly pathogenic avian influenza.↩

- See, for example, the following reports:

- Kemp elimination catalysts by computational enzyme design

- Computational design of an enzyme catalyst for a stereoselective bimolecular Diels-Alder reaction

- De novo computational design of retro-aldol enzymes

- Improved thermostability and enzyme activity of a recombinant phyA mutant phytase from Aspergillus niger N25 by directed evolution and site-directed mutagenesis

- Enhancing thermostability of Escherichia coli phytase AppA2 by error-prone PCR↩

- The risks had been mentioned previously on occasion, but this was the first in-depth technical report analysing the possibility.↩

- One amino acid, glycine, is achiral — which means it is symmetrical, and its mirror image is identical to it.↩

- There is some loosely analogous evidence with respect to accidental/deliberate misuse of other things. Although firearm accidents kill many (albeit fewer than ‘deliberate use’, at least in the US), it is hard to find such an accident that killed more than five people; far more people die from road traffic accidents than vehicle ramming attacks, yet the latter are over-represented in terms of large casualty events; aircraft accidents kill many more people than terrorist attacks using aircraft, yet the event with the largest death toll was from the latter category, etc.

By even looser analogy, it is very hard to find accidents which have killed more than 10,000 people, less so deliberate acts like war or genocide (although a lot turns on both how events are individuated, and how widely the consequences are tracked and attributed to the ‘initial event’).↩

- Claire Zabel is a managing director at Coefficient Giving, which is the largest funder of 80,000 Hours.↩

- Ord, The Precipice (2020): 3% by 2120

- Sandberg and Bostrom, Global catastrophic risks survey (2008): 2% by 2100

- Pamlin and Armstrong, Global challenges: 12 risks that threaten human civilisation (2015): 0.0001% to 5% (depending on different definitions) by 2115

- Fodor, Critical review of The Precipice (2020): 0.0002% by 2120

- Millett and Snyder-Beattie, Existential risk and cost-effective biosecurity (2017): 0.00019% (from biowarfare or bioterrorism) per year (assuming this is constant, this is equivalent to 0.02% by 2120).↩

- This risk surpasses the risk of non-genetically engineered pathogens by 2100.↩

- See, for example, this figure, which shows forecasts for an event in which a genetically engineered pathogen kills more than 1% of the population by 2100:

The superforecasters (orange) tend to give much lower estimates than the biorisk experts (~4% vs. 10%, and the boxplots do not overlap). Each point represents a forecaster, so within each of the groups the estimates range from (at least) 1% to 25%.↩

- That said, there are still many exciting opportunities to work on reducing risks from AI.↩

- This research looked at AI models that were cutting-edge at the time, but are now several generations out of date; the best models of today are probably much more capable.↩

- However, pathogens that cause harm to humans by inhibiting agriculture or damaging the natural environment demand different countermeasures.↩

- For comparison, one analysis estimated that COVID-19 alone cost the global economy well over $10 trillion; this number would be much higher if you considered the impacts of all disease outbreaks.↩

- While AI progress introduces new risks and could make catastrophic outcomes easier to achieve, it’s also being used defensively — including as a means to improve both synthesis screening and customer screening.↩

- Our podcast episode with Richard Moulange also covers this strategy.↩

- Much has been written on specific technologies; for example, see Broad-Spectrum Antiviral Agents: A Crucial Pandemic Tool (2019). A number of the podcast episodes listed above discuss some of the most promising ideas, as do many of the papers in the “Other resources” section.↩

- Though note that, as discussed above, researchers working on these technologies should be careful to mitigate risks from any dual-use findings that could cause harm.↩