Policy and research ideas to reduce existential risk

In his book The Precipice: Existential Risk and the Future of Humanity, 80,000 Hours trustee Dr Toby Ord suggests a range of research and practical projects that governments could fund to reduce the risk of a global catastrophe that could permanently limit humanity’s prospects.

He compiles over 50 of these in an appendix, which we’ve reproduced below. You may not be convinced by all of these ideas, but they help to give a sense of the breadth of plausible longtermist projects available in policy, science, universities and business.

There are many existential risks and they can be tackled in different ways, which makes it likely that great opportunities are out there waiting to be identified.

Many of these proposals are discussed in the body of The Precipice. We’ve got a three-hour interview with Toby you could listen to, or you can get a copy of the book mailed to you for free by joining our newsletter.


Policy and research recommendations

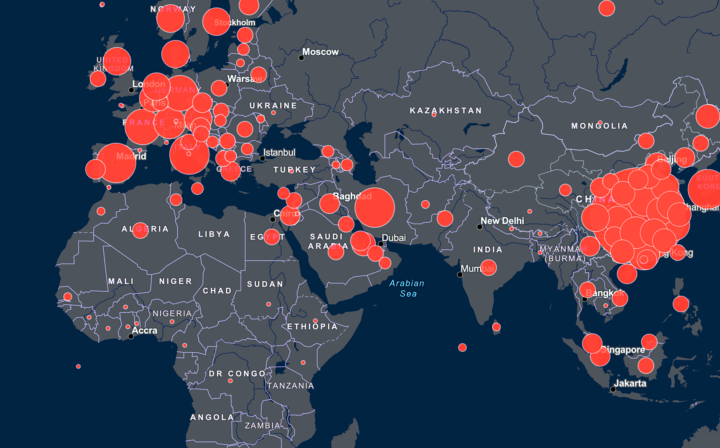

Engineered Pandemics

- Bring the Biological Weapons Convention into line with the Chemical Weapons Convention: taking its budget from $1.4 million up to $80 million, increasing its staff commensurately, and granting the power to investigate suspected breaches.

- Strengthen the WHO’s ability to respond to emerging pandemics through rapid disease surveillance, diagnosis and control. This involves increasing its funding and powers, as well as R&D on the requisite technologies.

- Ensure that all DNA synthesis is screened for dangerous pathogens. If full coverage can’t be achieved through self-regulation by synthesis companies, then some form of international regulation will be needed.

- Increase transparency around accidents in BSL-3 and BSL-4 laboratories.

- Develop standards for dealing with information hazards, and incorporate these into existing review processes.

- Run scenario-planning exercises for severe engineered pandemics.

Unaligned Artificial Intelligence

- Foster international collaboration on safety and risk management.

- Explore options for the governance of advanced AI.

- Perform technical research on aligning advanced artificial intelligence with human values.

- Perform technical research on other aspects of AGI safety, such as secure containment and tripwires.

Asteroids & Comets

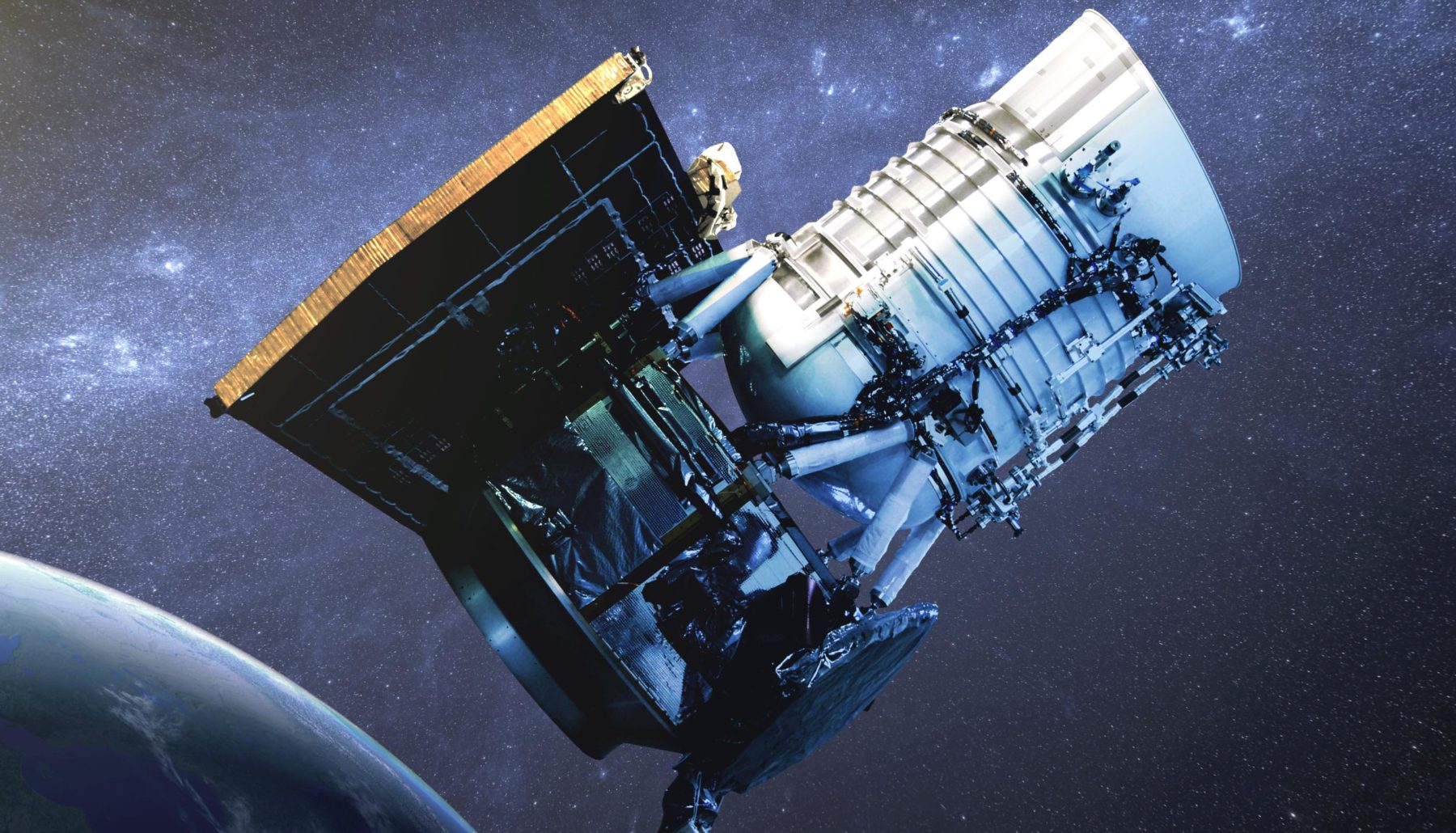

- Research the deflection of 1 km+ asteroids and comets, perhaps restricted to methods that couldn’t be weaponised, such as those that don’t lead to accurate changes in trajectory.

- Bring short-period comets into the same risk framework as near-Earth asteroids.

- Improve our understanding of the risks from long-period comets.

- Improve our modelling of impact winter scenarios, especially for 1–10 km asteroids. Work with experts in climate modelling and nuclear winter modelling to see what modern models say.

Supervolcanic Eruptions

- Find all the places where supervolcanic eruptions have occurred in the past.

- Improve the very rough estimates of how frequent these eruptions are, especially for the largest eruptions.

- Improve our modelling of volcanic winter scenarios to see what sizes of eruption could pose a plausible threat to humanity.

- Liaise with leading figures in the asteroid community to learn lessons from them in their modelling and management.

Stellar Explosions

- Build a better model for the threat including known distributions of parameters instead of relying on representative examples. Then perform sensitivity analysis on that model—are there any plausible parameters that could make this as great a threat as asteroids?

- Employ blue-sky thinking about any ways current estimates could be underrepresenting the risk by a factor of a hundred or more.
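
To make the first suggestion above more concrete, here is a minimal sketch of what "distributions of parameters plus sensitivity analysis" can look like in practice. Everything in it is an assumption made for illustration: the parameter names, their ranges, and the `toy_risk` formula are hypothetical placeholders, not astrophysical estimates and not a model from The Precipice.

```python
import random
import statistics

# Hypothetical placeholder ranges in relative units. NOT real estimates.
PARAM_RANGES = {
    "event_rate": (0.5, 2.0),     # how often events occur
    "danger_radius": (0.5, 2.0),  # distance within which an event is harmful
    "harm_fraction": (0.1, 1.0),  # share of nearby events causing serious harm
}


def toy_risk(event_rate, danger_radius, harm_fraction):
    """Toy formula (an assumption): risk grows with rate, danger-zone volume and harm share."""
    return event_rate * danger_radius ** 3 * harm_fraction


def sample_params(rng):
    """Draw every parameter from its range instead of using one representative value."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in PARAM_RANGES.items()}


def sensitivity(n_samples=10_000, seed=0):
    """For each parameter, compare mean risk when it is above vs. below its median."""
    rng = random.Random(seed)
    draws = [sample_params(rng) for _ in range(n_samples)]
    risks = [toy_risk(**d) for d in draws]
    result = {}
    for name in PARAM_RANGES:
        median = statistics.median(d[name] for d in draws)
        high = [r for d, r in zip(draws, risks) if d[name] > median]
        low = [r for d, r in zip(draws, risks) if d[name] <= median]
        result[name] = statistics.mean(high) / statistics.mean(low)
    return result


if __name__ == "__main__":
    for name, ratio in sensitivity().items():
        print(f"{name}: mean risk is {ratio:.1f}x higher in the upper half of its range")
```

A parameter with a large ratio is one where better measurement would change the risk estimate most. A real analysis would replace the uniform ranges with distributions drawn from the literature.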

Nuclear Weapons

- Restart the Intermediate-Range Nuclear Forces Treaty (INF).

- Renew the New START arms control treaty, due to expire in February 2026.

- Take US ICBMs off hair-trigger alert (officially called Launch on Warning).

- Increase the capacity of the International Atomic Energy Agency (IAEA) to verify nations are complying with safeguards agreements.

- Work on resolving the key uncertainties in nuclear winter modelling.

- Characterise the remaining uncertainties then use Monte Carlo techniques to show the distribution of outcome possibilities, with a special focus on the worst-case possibilities compatible with our current understanding.

- Investigate which parts of the world appear most robust to the effects of nuclear winter and how likely civilisation is to continue there.
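
As a rough illustration of the Monte Carlo suggestion above, the sketch below pushes uncertain inputs through a model many times and then reads off the upper tail of the results. The inputs, their distributions, and the `toy_outcome` formula are all hypothetical stand-ins chosen for this example; they are not taken from any nuclear winter study.

```python
import random


def toy_outcome(rng):
    """Toy severity score from three uncertain inputs. All distributions are hypothetical."""
    soot = rng.lognormvariate(0.0, 0.5)        # relative amount of soot lofted
    persistence = rng.uniform(0.5, 1.5)        # relative time the soot stays aloft
    response = rng.triangular(0.5, 1.5, 1.0)   # relative strength of the climate response
    return soot * persistence * response


def monte_carlo(n_samples=20_000, seed=0):
    """Run the toy model many times and return the sorted outcomes."""
    rng = random.Random(seed)
    return sorted(toy_outcome(rng) for _ in range(n_samples))


def quantile(sorted_values, q):
    """Return the value at quantile q (0 to 1) of an already sorted list."""
    index = min(int(q * len(sorted_values)), len(sorted_values) - 1)
    return sorted_values[index]


outcomes = monte_carlo()
for q in (0.5, 0.9, 0.99):
    print(f"{q:.0%} quantile of toy severity: {quantile(outcomes, q):.2f}")
```

Reporting the 90% and 99% quantiles alongside the median is what puts the focus on worst-case possibilities rather than on a single central estimate.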

Climate

- Fund research and development of innovative approaches to clean energy.

- Fund research into safe geoengineering technologies and geoengineering governance.

- The US should re-join the Paris Agreement.

- Perform more research on the possibilities of a runaway greenhouse effect or moist greenhouse effect. Are there any ways these could be more likely than is currently believed? Are there any ways we could decisively rule them out?

- Improve our understanding of the permafrost and methane clathrate feedbacks.

- Improve our understanding of cloud feedbacks.

- Better characterise our uncertainty about the climate sensitivity: what can and can’t we say about the right-hand tail of the distribution?

- Improve our understanding of extreme warming (e.g. 5–20 °C), including searching for concrete mechanisms through which it could pose a plausible threat of human extinction or the global collapse of civilisation.

Environmental Damage

- Improve our understanding of whether any kind of resource depletion currently poses an existential risk.

- Improve our understanding of current biodiversity loss (both regional and global) and how it compares to that of past extinction events.

- Create a database of existing biological diversity to preserve the genetic material of threatened species.

General

- Explore options for new international institutions aimed at reducing existential risk, both incremental and revolutionary.

- Investigate possibilities for making the deliberate or reckless imposition of human extinction risk an international crime.

- Investigate possibilities for bringing the representation of future generations into national and international democratic institutions.

- Each major world power should have an appointed senior government position responsible for registering and responding to existential risks that can be realistically foreseen in the next 20 years.

- Find the major existential risk factors and security factors — both in terms of absolute size and in the cost-effectiveness of marginal changes.

- (Editor’s note: existential risk factors are problems, like a shortage of natural resources, that don’t directly risk extinction, but could nonetheless indirectly raise the risk of a disaster. Security factors are the reverse, and might include better mechanisms for resolving disputes between major military powers.)

- Target efforts at reducing the likelihood of military conflicts between the US, Russia and China.

- Improve horizon-scanning for unforeseen and emerging risks.

- Investigate food substitutes in case of extreme and lasting reduction in the world’s ability to supply food.

- Develop better theoretical and practical tools for assessing risks with extremely high stakes that are either unprecedented or thought to have extremely low probability.

- Improve our understanding of the chance civilisation will recover after a global collapse, what might prevent this, and how to improve the odds.

- Develop our thinking about grand strategy for humanity.

- Develop our understanding of the ethics of existential risk and valuing the long-term future.