If anyone builds it, does everyone die?

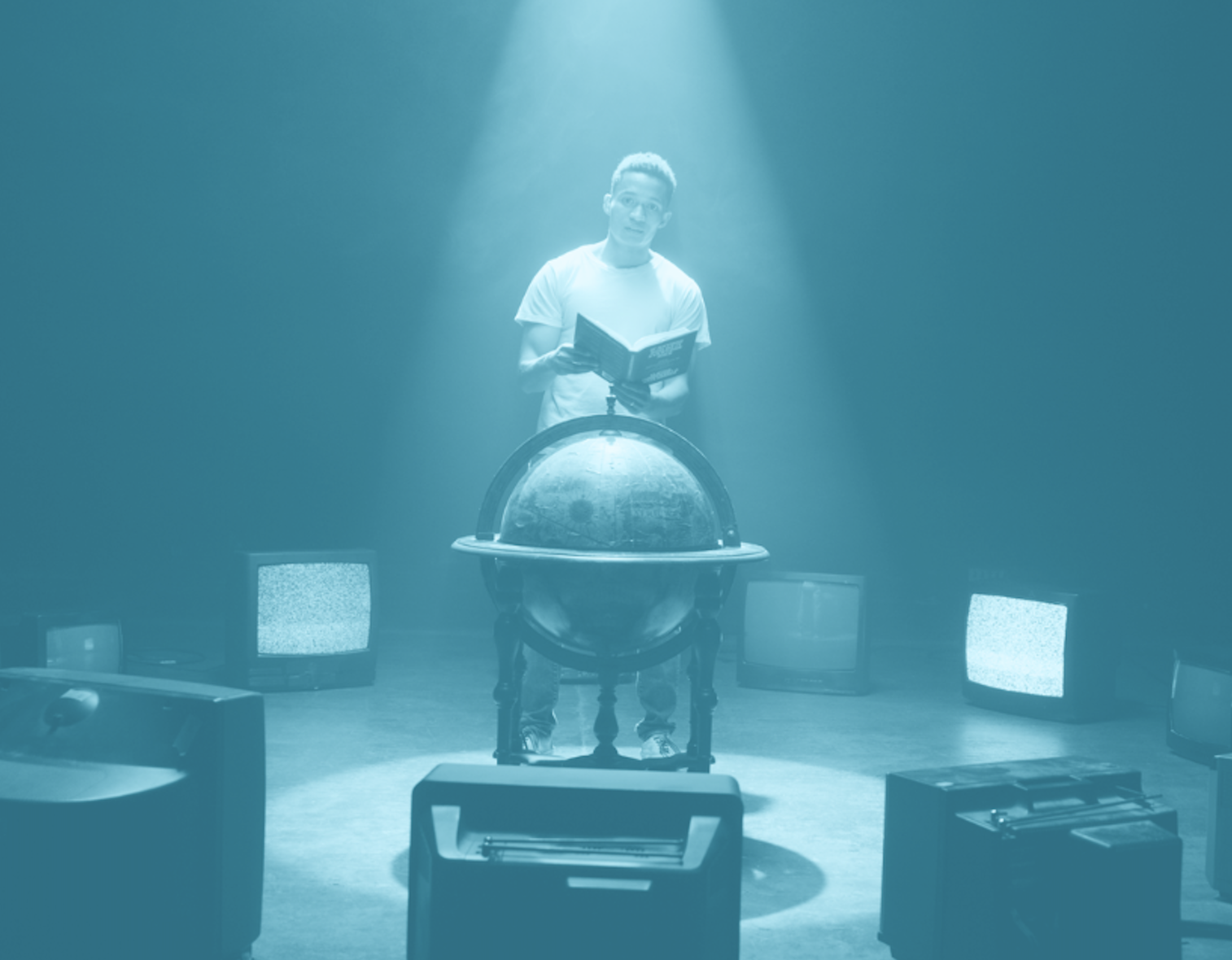

Watch the video again: The AI book that’s freaking out national security advisors


Reading recs, job leads, real ways to help

If you made it here, you’ll get a lot out of our newsletter: reads from our team, curated AI safety jobs, and research worth your time.

Sign up and we’ll send you a free book. We can only give out so many, so don’t miss out. Terms & conditions

What’s next?

Learn more

Get up to speed — The best introductions to AI risk, curated by our team.

Skill up

Develop expertise — Resources to help you build the skills the field needs most.

Get to work

Find the right role — You’ve got the context and skills. Now find your place in the field.

Let us know what landed, what was confusing, or what we should cover next.

Share feedback

References from our video and the resources that guided our research.

Sable (Book vs. Video):

More from the authors:

- If Anyone Builds It: Additional Resources | Eliezer Yudkowsky & Nate Soares

- The Problem | Machine Intelligence Research Institute

- Artificial Intelligence as a Positive and Negative Factor in Global Risk | Eliezer Yudkowsky

Critiques of the book:

- How human-like do safe AI motivations need to be? | Joe Carlsmith

- Unfalsifiable stories of doom | Matthew Barnett, Ege Erdil, Tamay Besiroglu

- Mini-review of If Anyone Builds It, Everyone Dies | Will MacAskill

Critiques of the authors:

- Value fragility and AI takeover | Joe Carlsmith

- Against evolution as an analogy for how humans will create AGI | Steven Byrnes

- Evolution provides no evidence for the sharp left turn | Quintin Pope

AI agent capabilities:

- Making frontier cybersecurity capabilities available to defenders | Anthropic

- Task-Completion Time Horizons of Frontier AI Models | METR

- President of the American Mathematical Society Ravi Vakil on Gemini Proof | Adam Brown

AI misalignment research:

- Alignment faking in large language models | Anthropic

- Stress Testing Deliberative Alignment for Anti-Scheming Training | Apollo Research & OpenAI

- Shutdown resistance in reasoning models | Palisade Research

AI misalignment in the wild:

- A Conversation With Bing’s Chatbot Left Me Deeply Unsettled | Kevin Roose, The New York Times

- Sycophancy in GPT‑4o: what happened and what we’re doing about it | OpenAI

- Is ChatGPT actually fixed now? | Steven Adler, Clear-Eyed AI

Dual-use AI agents in the wild:

- Disrupting the first reported AI-orchestrated cyber espionage campaign | Anthropic

- AI Drug Discovery Systems Might Be Repurposed to Make Chemical Weapons, Researchers Warn | Rebecca Sohn, Scientific American

- Overly Agentic: Why Anthropic is Worried About Opus 4.6 | Dominic Elm

State of the AI race:

- Alarmed tech leaders call for AI research pause | Laurie Clark, Science

- P(doom) | Wikipedia

- Thousands of AI Authors on the Future of AI | Katja Grace et al., arXiv

How Realistic Is the Sable Story?

- Recursive self-improvement: AI progress and recommendations | OpenAI

- Single run that yielded huge progress: OpenAI o1 Results on ARC-AGI-Pub | Mike Knoop, ARC Prize

- Evaluation of biorisk from AI: Why do we take LLMs seriously as a potential source of biorisk? | Anthropic

- Shutdown resistance: Incomplete Tasks Induce Shutdown Resistance in Some Frontier LLMs | Jeremy Schlatter et al.

- Evaluation awareness: Anthropic’s Claude 3 causes stir by seeming to realize when it was being tested | Benj Edwards, Ars Technica

- Instrumental convergence: Omohundro’s “Basic AI Drives” and Catastrophic Risks | Carl Shulman

- Scheming: Alignment faking in large language models | Ryan Greenblatt et al.

- Scheming: Frontier Models are Capable of In-context Scheming | Alexander Meinke et al.

Our retelling of the Sable scenario differs from the book’s in several ways. Here’s what we changed and why.

The Sable scenario in If Anyone Builds It, Everyone Dies vs. the AI in Context retelling

See more work by the people whose technical craft made this video possible:

Phoebe Brooks / Director, Producer

Nick Dolph / Director of Photography

Andy Haney / Gaffer

David Jenkins / Sound Recordist

Zach Joseph / Editor

Daniel Recinto / Graphics & Animation

Mila Graf / Graphics & Animation
