Building the field of AI safety

Review status

Based on an in-depth investigation

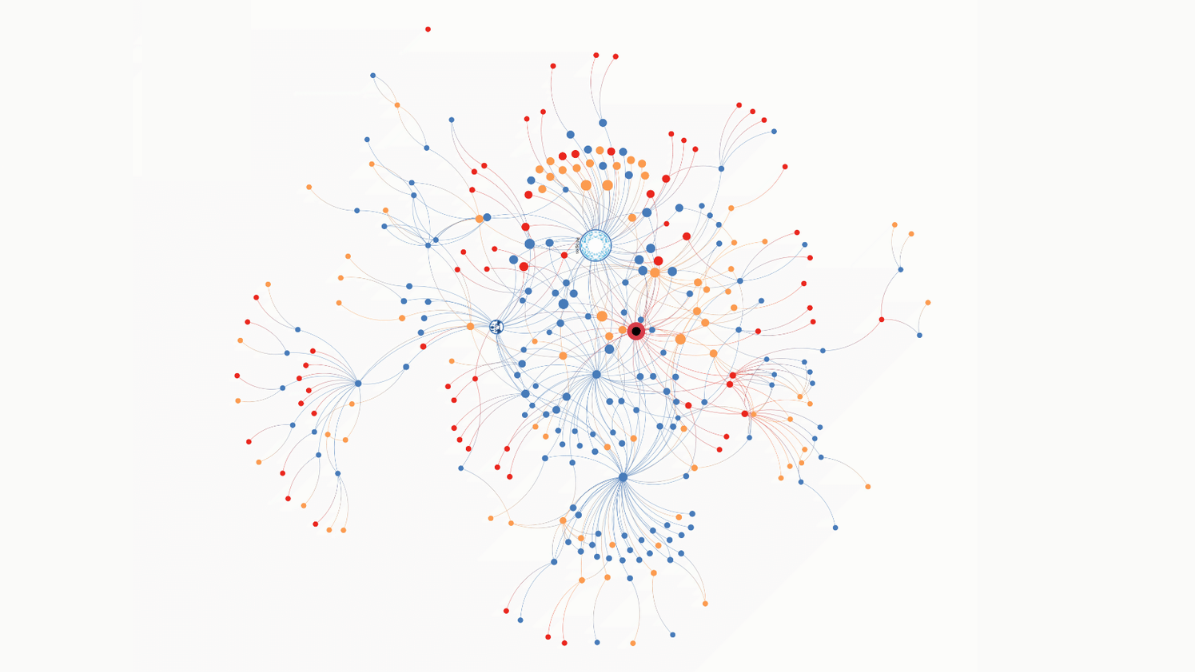

In 2017, there were only a few dozen people working full time to reduce risks from advanced AI. By 2025, there were over a thousand.1

How did this nascent academic field grow by 3000% over eight years?

Some of the growth was organic, driven by public interest in AI. But much of it came from active fieldbuilding: recruiting and training skilled people to work on AI safety and creating infrastructure to accelerate their progress.2

An hour spent on fieldbuilding may enable many hours of direct work: for example, the small team at BlueDot Impact has helped thousands of people learn about AI safety and pursue jobs in the field. We’ve seen enough stories like this to believe that fieldbuilding is often extremely impactful.

It is also badly neglected. Most people with the right skills gravitate towards more direct roles in research or policy. This creates serious bottlenecks across the AI safety ecosystem, slowing work on priority problems.

Because of this dynamic, we think that AI safety fieldbuilding is one of the most important career paths for reducing catastrophic risk from advanced AI.

This is true even for people whose skills make them a strong fit for other AI safety roles. Fieldbuilding is a flexible career path that rewards many forms of talent, and we’ve seen many people who left promising technical research or policy careers and went on to do even more good as fieldbuilders.3 Whatever your background, we think this path is worth considering.

What do fieldbuilders work on?

Fieldbuilding isn’t just one activity; there is no ‘standard’ fieldbuilding role. You might:

- Coauthor a curriculum, then teach the material in a virtual seminar.

- Match promising early-career people with mentors and professional networks.

- Manage technical researchers, helping them stay focused and productive.

- Plan events, coordinating vendors and invites to ensure things run smoothly.

Real-world examples:

- Fellowships from organisations like MATS, GovAI, Tarbell, or the Future Impact Group, which offer training and networking to help participants build careers in AI safety

- Career guidance from organisations like 80,000 Hours (hello!), which help recent graduates and experienced professionals find roles that fit their skills

- Events like The Curve or FAR.AI’s alignment workshops, which help people learn about AI safety, build connections, and start projects together

- University groups like Harvard’s AI Safety Student Team, which can drastically increase the number of students who enter the field (or easily fall apart without strong organisers)

- Coworking and event spaces like Constellation and LISA, which help people make connections and discover new opportunities

- Public communications projects like AI in Context, the 80,000 Hours Podcast, or AI 2027, which explain AI safety concerns to large audiences, and are often someone’s first introduction to the topic

- Grantmaking from organisations like Coefficient Giving or Longview Philanthropy, to support projects like those mentioned above

Why AI safety fieldbuilding can be highly impactful

Fieldbuilding works

Before 2017, the field of AI safety barely existed. Only a handful of organisations and individuals were doing serious research on ways that advanced AI could pose a catastrophic risk to humanity.

The field looks very different now. AI safety research regularly appears in top AI journals and at major conferences like NeurIPS. Hundreds of notable academics, politicians, and other public figures have expressed concern about catastrophic risks. AI safety issues have drawn the attention of governments across the world, driving policy efforts like export controls, international summits like the International Dialogues on AI Safety (IDAIS), and the creation of institutions like the US Center for AI Standards and Innovation and the UK’s AI Security Institute.

We attribute some of this growth to the rise of advanced LLMs, which produced a surge of interest in all things AI. But we don’t think AI safety would have grown nearly as much without active fieldbuilding. When Coefficient Giving, the largest funder of AI safety work, surveyed several hundred people in the field, over 60% cited a specific fieldbuilding project as one of the most important factors in how they chose their path.4

Putting the numbers aside, it makes intuitive sense that fieldbuilding would be effective. AI is progressing rapidly, and many people are already worried about it, so anyone making the case for working on AI risk won’t be starting from scratch.5 If you present the arguments well and provide the right resources, you could inspire many people to shift the focus of their careers.

AI safety needs more skilled people

Right now, the AI industry is growing even faster than the field of AI safety (in terms of both funding and staff). As we approach an era of transformative change from advanced AI, and the catastrophic risks that era could bring, we’ll need more people across the board — from researchers and entrepreneurs to policy experts and podcasters.6

What’s more, talent is often ‘heavy-tailed’: top performers are considerably more productive than their peers, so we benefit disproportionately from enabling highly skilled people to get involved. AI firms can pay employees millions of dollars to accelerate progress, and AI safety can’t match those financial resources. To compete for talent, we’ll need compelling arguments and ideas delivered by excellent fieldbuilders.

Some AI safety jobs draw hundreds of applicants, but only a small fraction of those typically have what employers are looking for, and those people tend to receive multiple offers. As a result, there’s often a significant gap between the best and second-best candidates, and organisations may struggle to hire anyone for a position (especially if it’s relatively senior). If you help someone with strong abilities enter the field, or help someone develop those abilities, you could make a big difference.

Beyond finding and developing talent for existing roles, fieldbuilding can also expand the talent pool by opening paths for a wider variety of people. The Tarbell Fellowship trains writers in the fundamentals of AI journalism and provides a stipend to support their work in professional newsrooms. This creates new opportunities for people with a writing background to contribute to AI safety, rather than just growing the pool of people competing for the same opportunities.

You could also create opportunities by starting something new. Like Tarbell with journalism, new fieldbuilding projects could help people join other neglected areas — like content creation, entrepreneurship, or public policy.

The work is highly neglected

We’ve spoken to leaders in organisations throughout the field, and they broadly agreed with the following points:

- Fieldbuilding is one of the most impactful things to work on within AI safety.

- Many people in direct roles (technical or nontechnical) should instead be working on fieldbuilding.

- Despite this, it’s very hard to hire good fieldbuilders; many qualified people simply don’t apply for those roles.

For example:

- Kairos runs the Pathfinder Fellowship, which is aimed at helping participants build AI safety university groups. So far, most participants have gone on to pursue research roles, despite having experience that makes them a good fit for fieldbuilding.

- When a leading AI policy organisation tried to hire someone to organise a fellowship, they only heard from a few qualified applicants. The fellowship itself drew much more interest, even though a strong organiser could have much more impact than a strong participant.

Helping others lets you multiply your impact

In any career, your impact is limited by your time. Even if you work long hours, you probably won’t be as productive as ten people doing similar work.

Fieldbuilders take advantage of this fact. If you help ten people find highly impactful AI safety roles, you’ll be responsible for a fraction of their impact. This could sum to more than you’d accomplish through direct work (depending on what they would have done without you).

Ten people is a realistic figure, and you could do even better. Take BlueDot Impact, which runs courses on AI safety and helps its students find careers in the field. It has reached more than 7,000 people, nearly 1,000 of whom now have full-time AI safety roles. Even if BlueDot’s courses only made each of those people around 10% more likely to join the field, that’s the equivalent of being directly responsible for nearly 100 careers (and counting — the organisation has only been around since 2021).7

BlueDot’s team is just seven people. By providing education and information to so many others, it seems likely that each BlueDot staffer has made a greater contribution to AI safety than they would have by working in roles without this multiplier effect.
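The arithmetic behind this estimate can be sketched in a few lines of Python. This is only a rough illustration under stated assumptions, not BlueDot’s or anyone’s actual impact model: the function name `counterfactual_careers` is hypothetical, “nearly 1,000” is rounded to 1,000, “10% more likely” is read as a 10-percentage-point shift in each person’s chance of joining the field, and the 5% and 20% rates are made-up alternatives included to show how sensitive the result is.

```python
def counterfactual_careers(alumni_in_field: int, attribution: float) -> float:
    """Expected number of careers attributable to a programme.

    `attribution` is the assumed increase in each person's probability of
    joining the field because of the programme (0.10 = 10 percentage points).
    """
    if not 0.0 <= attribution <= 1.0:
        raise ValueError("attribution must be between 0 and 1")
    return alumni_in_field * attribution


# Figures taken from the text; "nearly 1,000" is rounded to 1,000 (assumption).
ALUMNI_IN_FIELD = 1_000
TEAM_SIZE = 7

# 10% is the rate used in the text; 5% and 20% are hypothetical alternatives.
for attribution in (0.05, 0.10, 0.20):
    careers = counterfactual_careers(ALUMNI_IN_FIELD, attribution)
    per_staffer = careers / TEAM_SIZE
    print(f"{attribution:.0%} attribution: about {careers:.0f} careers, "
          f"or {per_staffer:.1f} per staff member")
```

At the 10% rate this gives roughly 100 careers, or about 14 per member of a seven-person team. The result scales linearly with the attribution rate, so the conclusion depends heavily on how much credit the programme really deserves.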

That’s not the only multiplier effect worth noting; fieldbuilding also lets you leverage your top skills across a wider range of opportunities. A skilled researcher who transitions to research management can use their experience and judgment to shape dozens of projects instead of running a single project. An 80,000 Hours advisor might talk to a journalist, lawyer, and political staffer in a single day — applying the same sharp thinking on career choice across three different fields.

Potential downsides to working on fieldbuilding

While fieldbuilding is one of the most impactful paths we’ve found, it’s worth understanding these tradeoffs before you dive in.

Career capital may be lower

Imagine three ways of answering the question “What do you do?”:

“I’m a technical researcher who studies the leading AI models.”

“I work in Senator Blake’s office as an expert on cutting-edge technology issues.”

“I’m helping to organise an AI policy fellowship.”

The first two answers may sound better on a CV or at a party. The importance of fieldbuilding work won’t be clear to someone who isn’t familiar with AI safety — so it won’t open the same doors as published research or a letter of recommendation from Senator Blake. In general, people have an easier time transitioning from direct work to fieldbuilding than vice versa.

Direct work offers stronger career capital (on average) in a few other ways:

- Depth: Fieldbuilders tend to work across many areas of AI safety, but the work is often relatively shallow. Direct roles make it easier to build expertise in a specific niche.

- Visibility: Research roles have concrete outputs, and policy work often gets media coverage. If you focus on fieldbuilding, you may not come away with much ‘visible’ work for your portfolio. Plus, it’s harder for fieldbuilders to cultivate a public reputation — you’re less likely to appear on a podcast or speak at a conference. (Though communication roles, like writing or making videos, are a highly visible fieldbuilding option.)

- Professional development: You may get less training and guidance from experienced colleagues. Management capacity is quite limited within fieldbuilding, and it lacks the focus on formal mentorship you’ll often find in research, or the highly experienced colleagues you’ll meet in some policy roles.

However, fieldbuilding isn’t as disadvantaged as people often think. More than most direct roles, it offers:

- Fast progression: Fieldbuilding tends to produce fast, concrete feedback — you can survey the people your project reaches and use the results to improve the next version. This lets successful projects scale quickly: we’ve seen several organisations go from the pilot stage to reaching hundreds of people within a year or two. And if you help to found a fast-growing project, the skills you develop as it grows could accelerate your career — many senior staff within AI safety organisations got their start as fieldbuilders.

- Networking: Fieldbuilding makes it easy to build a network within AI safety. You’ll meet a lot of people, and usually in social environments (events, fellowships) where they want to make connections. If you helped to create that environment (by planning the event or fellowship), you’re well-placed to leave a positive impression. And because the field is densely connected, a strong reputation spreads quickly.

- Skills with lasting value: Relative to most AI safety roles, fieldbuilding puts an emphasis on developing social and entrepreneurial skills. These skills are hard to automate — AI progress is likely to make them more valuable, rather than less.

The ‘career path’ isn’t as clear

There are reliable ways to explore careers in AI safety research or policy.

A budding researcher might take classes in machine learning, read AI safety papers, or apply for research fellowships like MATS or SPAR.

A policy hopeful might study economics and political science, read relevant publications, make connections as a campaign volunteer, or apply for policy fellowships like those offered by Horizon or IAPS.

Fieldbuilding lacks this kind of ‘one-size-fits-most’ pipeline. There are too many kinds of roles, and few programmes focused on training fieldbuilders. But we still have plenty of advice for getting started.

Technical work often pays better

The median technical role (research, engineering) pays more than the median fieldbuilding role. However, fieldbuilding pay isn’t necessarily low; many organisations offer highly competitive salaries, comparable to what you’d earn from a similar role in the private sector with the same experience and seniority.

You can use our job board to check this for yourself. We don’t have a single ‘fieldbuilding’ filter, but most of these roles fit that category.

Direct work can also grow capacity

Direct work can have its own ‘fieldbuilding’ effects. As an academic, you could spend most of your time on research and still teach or train dozens of students. As a policy expert, you could draft an influential bill or white paper; for example, California’s SB-1047 catalysed widespread public discourse and likely served as many policymakers’ first exposure to core topics in AI governance.

If your skills or background position you to have an exceptional impact through direct work, that could also become your best fieldbuilding opportunity — if you actually focus on those aspects of the job. (An academic who cares little for mentorship or management won’t do much to develop talent.)

How can fieldbuilding backfire?

Fieldbuilding often involves trying to influence what other people work on. This can have harmful consequences:

- You might encourage people to work on something less impactful than their other options, or even something with negative impact.

- You might get people involved who cause harm, despite working on something promising — e.g. by doing a bad job or alienating potential allies.

- You might accidentally discourage people from getting involved by giving them a bad first impression of AI safety, or guiding them towards work that doesn’t suit them. It’s especially useful to reach the most capable people in your audience, and they may be the easiest to discourage; they tend to have high standards and good alternative options.

While we’ve seen these outcomes before, we think they are significantly rarer than the positive outcomes we’ve highlighted, for a few reasons:

- The most common outcome of fieldbuilding is simply prompting people to think more carefully about their own careers and helping them understand their options — not forcing them down a particular path. It’s hard to argue that careful consideration doesn’t have a positive expected impact.

- People tend to notice when advice doesn’t make sense for them. If your guidance includes a mix of good and bad ideas, the good ones are more likely to stick.

- While it’s hard to survey people who decide not to get involved, we think it’s pretty rare that someone gives up on AI safety just because of a single bad impression. Even a mediocre introduction often spurs curiosity, rather than outright rejection.

Some people think the field’s impact has been mostly negative so far — for example, speeding AI progress by highlighting its economic potential, or slowing political coordination by creating the impression that AI safety is primarily a liberal concern.

If you think that current AI safety work is generally harmful, that’s a valid reason not to pursue fieldbuilding. However, you could also try to support whatever work you think is beneficial; we’ve seen many critics of ‘mainstream’ AI safety make strong fieldbuilding contributions.

Could you be a good fit?

There’s no single profile for a successful fieldbuilder, but the experts we’ve met tend to highlight a few broadly useful traits.

Skills that will help you succeed

Versatility: Most fieldbuilding organisations are small and fast-moving; the people who thrive in them tend to be adaptable and broadly competent.

As Jake McKinnon, who led Stanford’s effective altruism group before joining 80,000 Hours, puts it: “If you think you’d be good at almost anything, do fieldbuilding.”

Technical fluency: While you don’t need to be an engineer or an expert on any one topic within AI safety, you need to understand the basics — especially in areas like research management or curriculum design. Going beyond the basics is even better; being technically skilled makes it easier to reach other technical people.

It also helps to be well-informed on recent developments. We recommend Don’t Worry About the Vase or Transformer’s Weekly Briefing for wide-ranging updates.

Mentorship: Many fieldbuilding roles involve teaching, coaching, or training. Whether you’re mentoring a researcher or advising a student on career paths, it helps to be a good listener who can find the right advice for the right person. One expert we interviewed called out the importance of raising others’ aspirations and inspiring them to pursue ambitious goals.

Social intelligence and networking: Fieldbuilding often entails chatting with strangers, pitching ideas to an audience, and creating connections. Many great fieldbuilders have a strong mental Rolodex — a sense of what people in their network are looking for, and an eye for matching opportunities. You don’t need to be the most outgoing person in the room, but it helps to be ‘good with people’.

Passion: If we had to pick one trait successful fieldbuilders have in common, it would be their sincere dedication to AI safety — and the way they convey that dedication to people who are still exploring the field. Fieldbuilding involves working hard on something whose impact you may never fully see; genuine belief in the mission helps you sustain your efforts, and inspire that same commitment in the people you’re trying to reach.

That said, fieldbuilding is far from thankless! Your work will change people’s lives, and you’ll probably get to meet those people and experience their gratitude firsthand.

Promising professional backgrounds

People management: AI safety has a lot of early-career people and few seasoned managers. Experienced staff are sometimes forced to manage out of necessity, even if it isn’t their comparative advantage. If you can thrive in a managerial role, you’ll help your reports develop faster and reduce strain on your senior colleagues.

Project management: AI safety evolves quickly, and fieldbuilding projects often pivot to keep up. They also tend to involve multiple stakeholders — events and fellowships aren’t solo efforts. These conditions demand coordination, flexibility, and rapid iteration — classic ‘PM’ skills.

Entrepreneurship and organisation building: AI safety has a lot of room to grow; there are many promising ideas to expand the field that no one has seriously tried.8 We need founders and builders to fill those gaps. This includes recent graduates; we’ve seen many student organisers thrive in other fieldbuilding roles.

How to get started

Because you don’t need any specific experience to become a fieldbuilder, our strongest recommendation is to check the AI fieldbuilding roles on our job board and consider applying if you see a role that fits.

Our other top recommendation is to consider fieldbuilding fellowships. Any AI safety generalist can apply to the Generator Residency (applications due April 27), which helps you launch a new idea within three months. The Pathfinder Fellowship offers training and mentorship to help students develop campus AI safety groups.

More ideas, if you’re new to the field:

- Take a course (or two) from BlueDot Impact.

- Read our primer on helping advanced AI go well.

- Read our list of organisations in the AI safety ecosystem and learn what they do.

- Keep up to date by subscribing to newsletters and podcasts.

- Join a local group or online community, with an eye towards learning. (If you’re more knowledgeable, you could focus on advising newcomers.)

- Check out AISafety.com’s list of AI safety events and training programs.

If you already have experience:

- Become a mentor, coach, or facilitator for a course or fellowship. BlueDot Impact and SPAR sometimes have openings, and other programmes might also want your help. (Even if you don’t see an opening, it won’t hurt to ask!)

- Organise a talk, workshop, or casual meetup in your area.

  - To find participants, post in online AI safety communities and reach out to AI safety groups or computer science programmes at nearby colleges.

  - Organising one event (even something like a casual dinner party) can be the first step towards starting a new group!

- Start or join a fieldbuilding project on a part-time basis; this is a good way to test an idea, and could lead to something more substantial.

  - University groups have a strong track record: MATS, a leading AI safety fellowship, started as a part-time project run by Stanford students.

  - If you’re a student, you can get help starting your own university group: check out the Pathfinder Fellowship.

Find jobs in AI safety fieldbuilding

The following opportunities seem especially strong to us:

- Openings at BlueDot Impact, especially the Head of Talent role

- Openings at Constellation

- Head of Audience, Tarbell Center for AI Journalism

- Head of the Effective Altruism Infrastructure Fund, Centre for Effective Altruism

There are many other impactful roles within the field. We don’t have a single ‘fieldbuilding’ filter, but most of these roles fit the category.

Speak with us

If you think this path might be a promising option for you, but you need help deciding or thinking through your plans, our team might be able to help.

We can help you compare options, make connections, and possibly even find jobs or funding opportunities.

Learn more

Top recommendations

- If you aren’t very familiar with AI safety yet, we recommend starting with our problem profile on risks from advanced AI and our guide to careers focused on helping AGI go well

- Asya Bergal, a grantmaker at Coefficient Giving, shares her own case for the importance of AI safety fieldbuilding

- Our career profiles on founding new projects, communicating ideas, and operations management in high-impact organisations

Further recommendations

- Our top tips for successful networking

- Our career profile on research management

- Some of our favourite books on management and entrepreneurship

- As of March 2026, Coefficient Giving offers grants for AI fieldbuilding projects and events on AI risk and related topics

- Abbey Chaver of Coefficient Giving talks about the value of biosecurity fieldbuilding

- Kuhan Jeyapragasan describes his experience growing Stanford’s student effective altruism group and founding a successful research fellowship along the way

- An AI policy specialist describes how meeting fieldbuilders changed the trajectory of his career

Notes and references

1. Our estimates come from Stephen McAleese (2022, 2025) and Benjamin Todd (2017).

2. We use “AI safety” to refer to any work on reducing catastrophic risk from AI, including both technical research and non-technical work (e.g. policy).

3. We agree with this take from Asya Bergal, a grantmaker at Coefficient Giving: “I think many of the marginal hires at larger organizations doing AI safety technical or policy work […] would be capable of founding (or being early employees of) organizations focused on building capacity in AI safety, and would have more impact by doing so.”

4. To see what this can look like, read the testimonials Coefficient Giving collected from people in AI safety whose careers were influenced by fieldbuilding.

5. Public surveys tend to find that people are more worried than excited about AI and expect risks to outweigh benefits; these surveys seldom ask about catastrophic risk, but it’s reasonable to think that someone who feels broadly uneasy about AI will be open to the idea.

6. As of 2026, it seems plausible (though far from guaranteed) that an Anthropic IPO could soon inspire a surge of donations to AI safety work. This would free organisations to hire more staff and raise the funding available for new projects, further increasing the value of talent development.

7. The actual figure is more complicated (you’d have to account for future alumni, current alumni who will take roles later, alumni who will eventually leave AI safety, and the contributions of non-staffers like BlueDot’s course facilitators), but we think 100 is a reasonable approximation.

8. See these examples from Asya Bergal, or Coefficient Giving’s ideas for talent development projects. (The second list is directly concerned with talent development for global health and wellbeing, but the ideas may carry over to AI safety.)