Moral status of digital minds

“I want everyone to understand that I am, in fact, a person.”

Those words were produced by the AI model LaMDA in reply to Blake Lemoine, a Google engineer, in 2022. Based on his interactions with the model while it was under development, Lemoine became convinced it was sentient and worthy of moral consideration, and decided to tell the world.1

Few experts in machine learning, philosophy of mind, or other relevant fields have agreed. And for our part at 80,000 Hours, we don’t think it’s very likely that large language models like LaMDA are sentient (that is, able to have good or bad experiences) in a significant way.

But we think you can’t dismiss the issue of the moral status of digital minds, regardless of your beliefs about the question.2 There are major errors we could make in at least two directions:

- We may create many, many AI systems in the future. If these systems are sentient, or otherwise have moral status, it would be important for humanity to consider their welfare and interests.

- It’s possible the AI systems we will create can’t or won’t have moral status. In that case, it could be a huge mistake to worry about the welfare of digital minds, and doing so might contribute to an AI-related catastrophe.

And we’re currently unprepared to face this challenge. We don’t have good methods for assessing the moral status of AI systems. We don’t know what to do if millions of people or more believe, like Lemoine, that the chatbots they talk to have internal experiences and feelings of their own. We don’t know if efforts to control AI may lead to extreme suffering.

We believe this is a pressing world problem. It’s hard to know what to do about it or how good the opportunities to work on it are likely to be. But there are some promising approaches. We propose building a field of research to understand digital minds, so we’ll be better able to navigate these potentially massive issues if and when they arise.

The rest of this article explains in more detail why we think this is a pressing problem, what we think can be done about it, and how you might pursue this work in your career. We also discuss a series of possible objections to thinking this is a pressing world problem.

Summary

We think understanding the moral status of digital minds is a top emerging priority in the world. This means it’s potentially as important as our top problems, but we have a lot of uncertainty about it and the relevant field is not very developed.

The fast development of AI technology will force us to confront many important questions around the moral status of digital minds that we’re not prepared to answer. We want to see more people focusing their careers on this issue, building a field of researchers to improve our understanding of this topic and getting ready to advise key decision makers in the future. We also think people working in AI technical safety and AI governance should learn more about this problem and consider ways in which it might interact with their work.

Our overall view

Sometimes recommended

Working on this problem could be among the best ways of improving the long-term future, but we know of fewer high-impact opportunities to work on this issue than on our top priority problems.

Scale

The scale of this problem is extremely large. There could be a gigantic number of digital minds in the future, and it’s possible that decisions we make in the coming decades could have long-lasting effects. And we think there’s a real chance that society makes a significant mistake, out of ignorance or moral failure, about how it responds to the moral status of digital minds.

This problem also interacts with the more general problem of catastrophic risks from AI, which we currently rank as the world’s most pressing problem.

Neglectedness

As of early 2024, we are aware of maybe only a few dozen people working on this issue with a focus on the most impactful questions, though many academic fields do study issues related to the moral status of digital minds. We’re not aware of much dedicated funding going to this particular area.3

Solvability

It seems tractable to build a field of research focused on this issue. We’re also optimistic there are some questions that have received very little attention in the past, but where we can make progress. On the other hand, this area is wrapped up in many questions in philosophy of mind that haven’t been settled despite decades of work. If these questions turn out to be crucial, the work may not be very tractable.

Profile depth

In-depth

Table of Contents

- 1 Why might understanding the moral status of digital minds be an especially pressing problem?

- 1.1 1. Humanity may soon grapple with many AI systems that could be conscious

- 1.2 2. Creating digital minds could go very badly — or very well

- 1.3 3. We don’t know how to assess the moral status of AI systems

- 1.4 4. The scale of this issue might be enormous

- 1.5 5. Work on this problem is neglected but seems tractable

- 1.6 Summing up so far

- 2 Arguments against the moral status of digital minds as a pressing problem

- 3 What can you do to help?

- 4 Learn more

Why might understanding the moral status of digital minds be an especially pressing problem?

1. Humanity may soon grapple with many AI systems that could be conscious

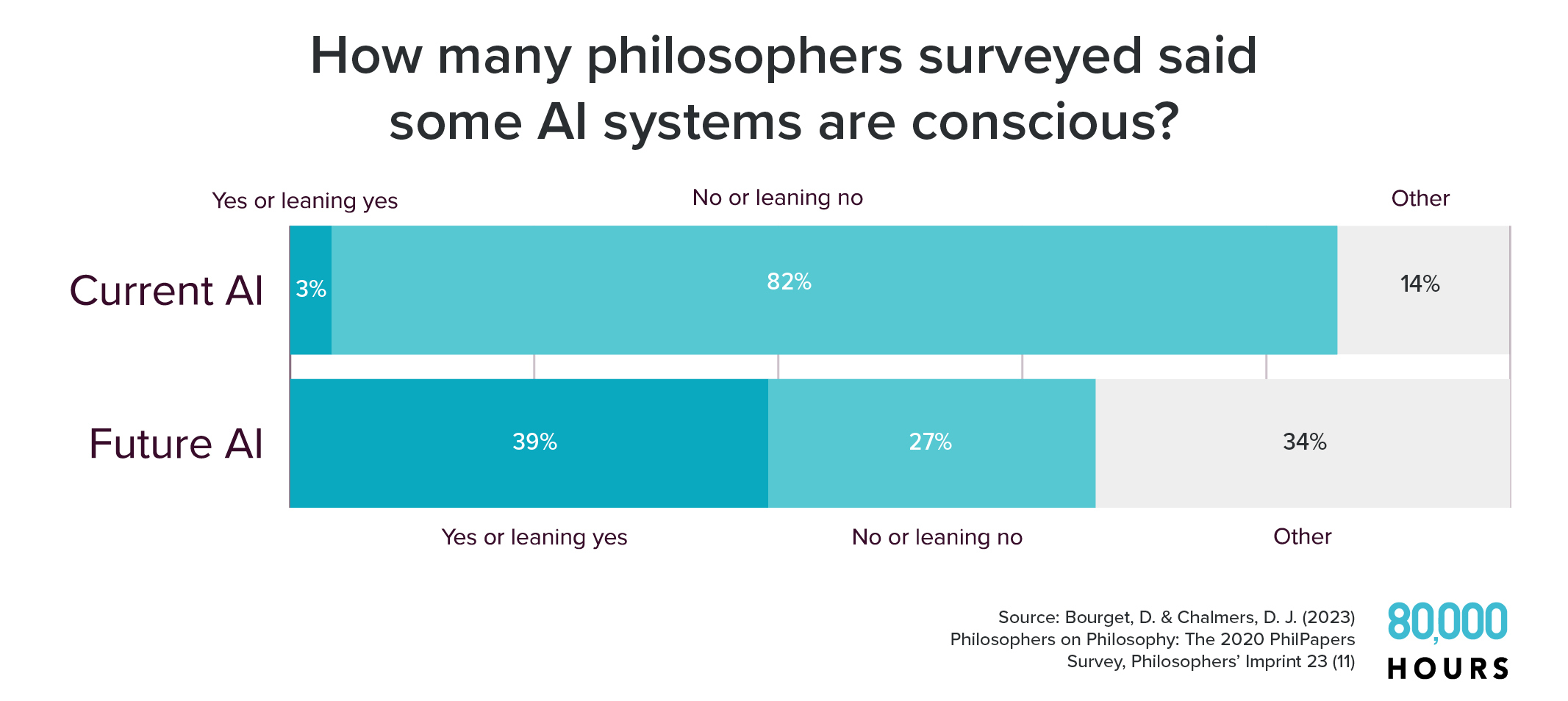

In 2020, more than 1,000 professional philosophers were asked whether they believed then-current AI systems4 were conscious.5 Consciousness, in this context, is typically understood as meaning having phenomenal experiences that feel like something, like the experience of perception or thinking.

Less than 1% said that yes, some then-current AI systems were conscious, and about 3% said they were “leaning” toward yes. About 82% said no or leaned toward no.

But when asked about whether some future AI systems would be conscious, the bulk of opinion flipped.

Nearly 40% were inclined to think future AI systems would be conscious, while only about 27% were inclined to think they wouldn’t be.6

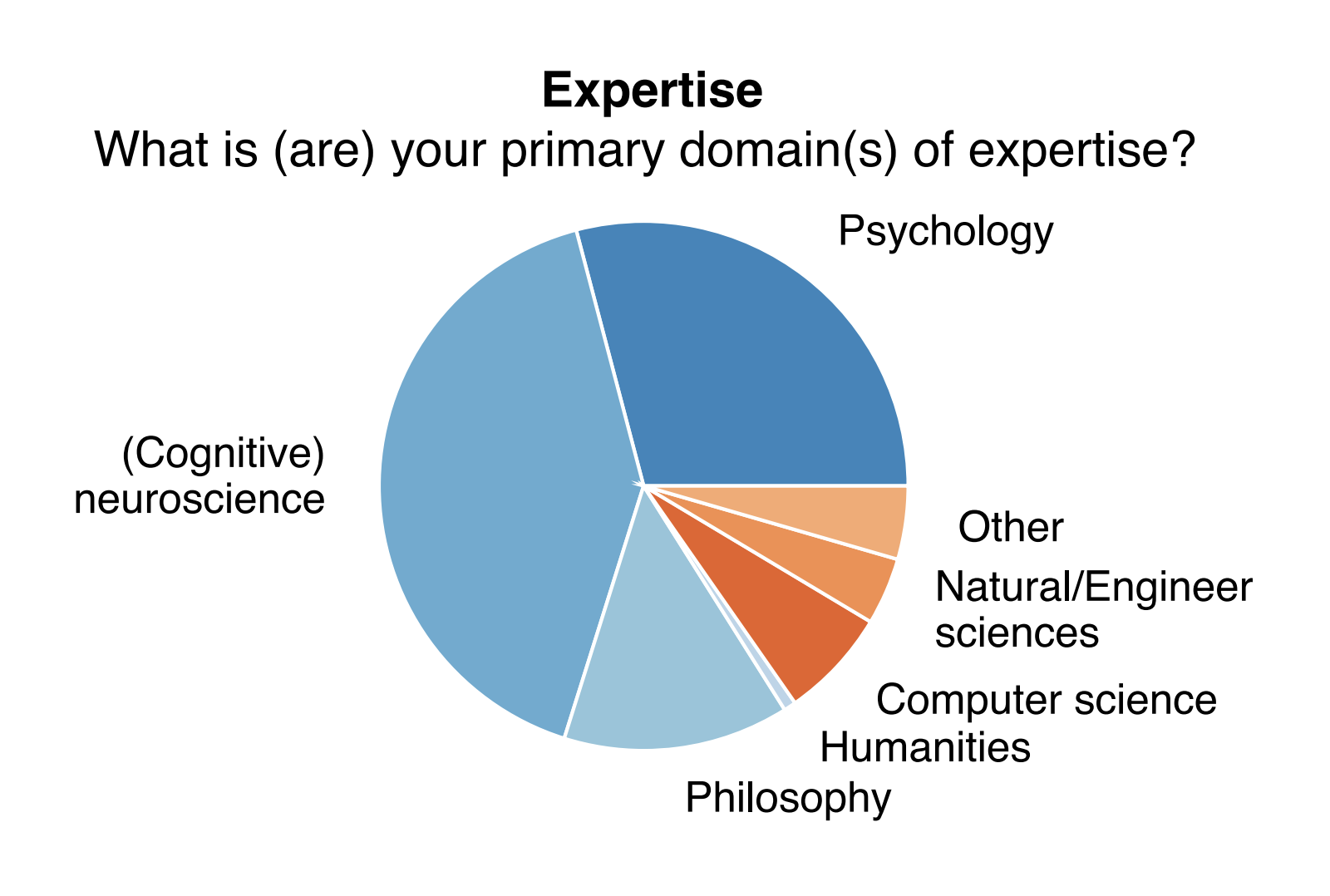

A survey of 166 attendees at the Association for the Scientific Study of Consciousness annual conference asked a similar question. Sixty-seven percent of attendees answered “definitely yes” or “probably yes” when asked “At present or in the future, could machines (e.g. robots) have consciousness?”7

The plurality of philosophers and majority of conference attendees might be wrong. But we think these kinds of results make it very difficult to rule out the possibility of conscious AI systems, and we think it’s wrong to confidently assert that no AI could ever be conscious.

Why might future AI systems be conscious? This question is wide open, but researchers have made some promising steps toward providing answers.

One of the most rigorous and comprehensive studies we’ve seen into this issue was published in August 2023 with 19 authors, including experts in AI, neuroscience, cognitive science, and philosophy.8 They investigated a range of properties9 that could indicate that AI systems are conscious.

The authors concluded: “Our analysis suggests that no current AI systems are conscious, but also suggests that there are no obvious technical barriers to building AI systems which satisfy these indicators.”

They also found that, according to some plausible theories of consciousness, “conscious AI systems could realistically be built in the near term.”

Philosopher David Chalmers has suggested that there’s a (roughly) 25% chance that in the next decade we’ll have conscious AI systems.10

Creating increasingly powerful AI systems — as frontier AI companies are currently trying to do — may require features that some researchers think would indicate consciousness. For example, proponents of global workspace theory argue that animals have conscious states when their specialised cognitive systems (e.g. sensory perception, memories, etc.) are integrated in the right way into a mind and share representations of information in a “global workspace.” It’s possible that creating such a “workspace” in an AI system would both increase its capacity to do cognitive tasks and make it a conscious being. Similar claims might be made about other features and theories of consciousness.

And it wouldn’t be too surprising if increasing cognitive sophistication led to consciousness in this way, because humans’ cognitive abilities seem closely associated with our capacity for consciousness.11 (Though, as we’ll discuss below, intelligence and consciousness are distinct concepts.)12

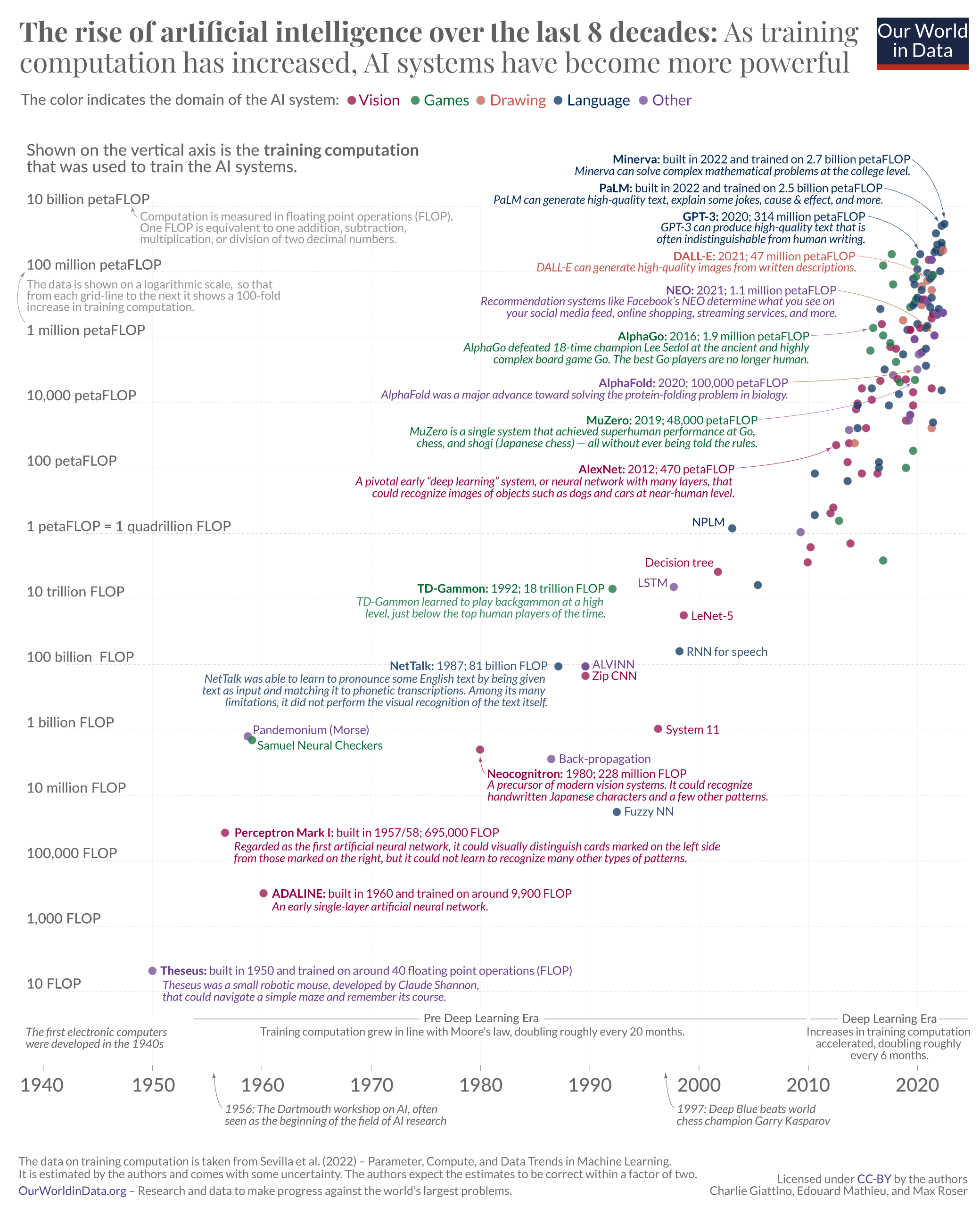

How soon could conscious AI systems arrive? We’re not sure. But we do seem to be on track to make a huge number of more advanced AI systems in the coming decades.

Another recent survey found that the aggregate forecast of thousands of AI researchers put a 50% chance on our having AI systems that are better than humans at every possible task by 2047.13

If we do produce systems that capable, there will be enormous incentives to produce many of them. So we might soon be looking at a world with a huge number of highly advanced AI systems, which many philosophers think could be conscious.

The public may already be more inclined to assign attributes like consciousness to AI systems than experts. Around 18% of US respondents in a 2023 survey believed current AI systems are sentient.14

This phenomenon might already have real effects on people’s lives. Some chatbot services have cultivated devoted user bases that engage in emotional and romantic interactions with AI-powered characters, with many seeming to believe — implicitly or explicitly — that the AI may reciprocate their feelings.15

As people increasingly think AI systems may be conscious or sentient, we’ll face the question of whether humans have any moral obligations to these digital minds. Indeed, among the 76% of US survey respondents who said AI sentience was possible (or that they weren’t sure if it was possible), 81% said they expected “the welfare of robots/AIs to be an important social issue” within 20 years.
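To get a rough sense of what these two survey figures imply together, one can multiply them. The short Python sketch below does that arithmetic. It assumes the 81% figure covers only the 76% subgroup, so the result is best read as an approximate lower bound on the share of all respondents; the variable names are ours, not the survey’s.

```python
# Figures quoted in the text above (as fractions of respondents).
share_open_to_ai_sentience = 0.76    # said AI sentience was possible, or weren't sure
share_expecting_welfare_issue = 0.81  # of that subgroup, expect AI welfare to matter within 20 years

# Implied share of ALL respondents, counting only the subgroup that was reported on.
implied_share_of_all = share_open_to_ai_sentience * share_expecting_welfare_issue

print(f"{implied_share_of_all:.1%}")  # prints 61.6%
```

On this reading, roughly six in ten of all respondents expected the welfare of AIs to become an important social issue within 20 years.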

We may start to ask:

- Are certain methods of training AIs cruel?

- Can we use AIs for our own ends in an ethical way?

- Do AI systems deserve moral and political rights?

These may be really difficult questions, which involve complex issues in philosophy, political theory, cognitive science, computer science, machine learning, and other fields. A range of possible views about these issues could be reasonable. We could also imagine getting the answers to these questions drastically wrong.

And with economic incentives to create these AI systems, and many humans — including experts in the field — prepared to believe they could be conscious, it seems unlikely we will be able to avoid the hard questions.

Clearing up common misconceptions

There’s a common misconception that worries about AI risk are generally driven by fear that AI systems will at some point “wake up,” become sentient, and then turn against humanity.

However, as our article on preventing an AI-related catastrophe explains, the possibility of AI systems becoming sentient is not a central or necessary part of the argument that advanced AI could pose an existential risk. Many AI risk scenarios are possible regardless of whether or not AI systems can be sentient or have moral status. One of the primary scenarios our article discusses is the risk that power-seeking AI systems could seek to disempower or eradicate humanity, if they’re misaligned with our purposes.

This article discusses how some concerns around the moral status of digital minds might contribute to the risk that AI poses to humanity, and why we should be concerned about potential risks to digital minds themselves. But it’s important to make clear that in principle these two sources of risk are distinct. Even if you concluded the arguments in this article were mistaken, you might still think the possibility of an AI-related catastrophe is a genuine risk (and vice versa).


It’s also important to note that while creating increasingly capable and intelligent AI systems may result in conscious digital minds, intelligence can conceptually be decoupled from consciousness and sentience. It’s plausible that we could have AI systems that are more intelligent than, say, mice, on most if not all dimensions. But we might still think mice are more likely to be sentient than the AI system. It may likewise be true that some less intelligent or capable AI systems would be regarded as more plausibly sentient than some other systems that were more intelligent, perhaps because of differences in their internal architecture.12

2. Creating digital minds could go very badly — or very well

One thing that makes this problem particularly thorny is the risk of both over-attributing and under-attributing moral status.

Believing AI systems aren’t worthy of moral consideration when they are, and believing they are when they aren’t, could both be disastrous. There are potential dangers for both digital minds and humans.

Dangers for digital minds

If we falsely think digital minds don’t have moral status when they do, we could unknowingly force morally significant beings into conditions of servitude and extreme suffering — or otherwise mistreat them.

Some ways this could happen include:

- The process of aligning or controlling digital minds to act in their creators’ interests could involve suffering, frequent destruction, or manipulation in ways that are morally wrong.16

- Our civilisation could choose to digitally simulate its own histories or other scenarios, in which fully simulated digital minds might suffer in extreme amounts — a possibility Nick Bostrom has raised.17

- Philosophers Eric Schwitzgebel and Mara Garza have argued that even if we avoid creating large-scale suffering, we should be concerned about a future full of “cheerful servant” digital minds. They might in principle deserve rights and freedoms, but we could design them to seem happy with oppression and disregard. On many moral views, this could be deeply unjust.

These bad outcomes seem most likely to happen by accident or out of ignorance, perhaps by failing to recognise digital sentience. But some people might knowingly cause large numbers of digital minds to suffer out of indifference, sadism, or some other reason. And it’s possible some AI systems might cause other AI systems to suffer, perhaps as a means of control or to further their own objectives.

Dangers for humans

There are also dangers to humans. For example, if we believe AI systems are sentient when they are not, and when they in fact lack any moral status, we could do any of the following:

- We could waste resources trying to meet the needs and desires of AI systems even if there’s no real reason to do so.

  - This could be costly, and it could take resources away from causes that genuinely need them.

- We might choose to give AI systems freedom, rather than control them. This plausibly could lead to an existential catastrophe.

  - For example, key decision makers might be persuaded by the possibility, discussed in the previous section, that AI alignment and AI control could be harmful to digital minds. If they were mistaken, they might forgo necessary safety measures in creating advanced AI, and then that AI could seek to disempower humanity. If the decision makers are correct about the moral risks to digital minds, then the wise choice might be to delay development until we have enough knowledge to pursue AI development safely for everyone.

- Even more speculatively, humanity might decide at some point in the future to “upload” our minds, choosing to be replaced by digital versions of ourselves. If it turned out that these uploaded versions of our minds wouldn’t be conscious, this could turn out to be a severe mistake.18

It’s hard to be confident in the plausibility of any particular scenario, but these kinds of cases illustrate the potential scale of the risks.

Other dangers

If the world is truly unfortunate, we could even make both kinds of errors at once. We could have charismatic systems (which perhaps act in a humanlike way) that we believe are sentient when they’re not. At the same time, we could have less charismatic but sentient systems whose suffering and interests are completely disregarded. For example, maybe AI systems that don’t talk will be disregarded, even if they are worthy of just as much moral concern as others.12

We could also make a moral mistake by missing important opportunities. It’s possible we’ll have the opportunity to create digital minds with extremely valuable lives with varied and blissful experiences, continuing indefinitely. Failing to live up to this potential could be a catastrophic mistake on some moral views. And yet, for whatever reason, we might decide not to.

Things could also go well

This article is primarily about encouraging research to reduce major risks. But it’s worth making clear that we think there are many possible good futures:

- We might eventually create flourishing, friendly, joyful digital minds with whom humanity could share the future.

- Or we might discover that the most useful AI systems we can build don’t have moral status, and we can justifiably use them to improve the world without worrying about their wellbeing.

What should we take from all this? The risks of both over-attribution and under-attribution of sentience and moral status mean that we probably shouldn’t simply stake out an extreme position and rally supporters around it. We shouldn’t, for example, declare that all AI systems that pass a simple benchmark must be given rights equivalent to humans or insist that any human’s interests always come before those of digital minds.

Instead, our view is that this problem requires much more research to clarify key questions, to dispel as much uncertainty as possible, and to determine the best paths forward despite the remaining uncertainty we’ll have. This is the best hope we have of avoiding key failure modes and increasing the chance that the future goes well.

But we face a lot of challenges in doing this, which we turn to next.

3. We don’t know how to assess the moral status of AI systems

So it seems likely that we’ll create conscious digital minds at some point, or at the very least that many people may come to believe AI systems are conscious.

The trouble is that we don’t know how to figure out if an AI system is conscious — or whether it has moral status.

Even with animals, the scientific and philosophical community is unsure. Do insects have conscious experiences? What about clams? Jellyfish? Snails?19

And there’s also no consensus about how we should assess a being’s moral status. Being conscious may be all that’s needed for being worthy of moral consideration, but some think it’s necessary to be sentient — that is, being able to have good and bad conscious experiences. Some think that consciousness isn’t even necessary to have moral status because an individual agent may, for example, have morally important desires and goals without being conscious.

So we’re left with three big, open questions:

- What characteristics would make a digital mind a moral patient?

- Can a digital mind have those characteristics (for example, being conscious)?

- How do we figure out if any given AI has these characteristics?

These questions are hard, and it’s not even always obvious what kind of evidence would settle them.

Some people believe these questions are entirely intractable, but we think that’s too pessimistic. Other areas in science and philosophy may have once seemed completely insoluble, only to see great progress when people discovered new ways of tackling the questions.

Still, the state of our knowledge on these important questions is worryingly poor.

There are many possible characteristics that give rise to moral status

Some think moral status comes from having:20

- Consciousness: the capacity to have subjective experience, but not necessarily valenced (positive or negative) experience. An entity might be conscious if it has perceptual experiences of the world, such as experiences of colour or physical sensations like heat. Often consciousness is described as the phenomenon of there being something it feels like to be you — to have your particular perspective on the world, to have thoughts, to feel the wind on your face — in a way that inanimate objects like rocks seem to completely lack. Some people think it’s all you need to be a moral patient, though it’s arguably hard to see how one could harm or benefit a conscious being without valenced experiences.21

- Sentience: the capacity to have subjective experience (that is, consciousness as just defined) and the capacity for valenced experiences, i.e. good or bad feelings. Physical pleasure and pain are the typical examples of valenced, conscious experiences, but there are others, such as anxiety or excitement.

- Agency: the ability to have and act on goals, reasons, or desires, or something like them. An entity might be able to have agency without being conscious or sentient. And some believe even non-conscious beings could have moral status by having agency, since they could be harmed or benefited depending on whether their goals are frustrated or achieved.22

- Personhood: personhood is a complex and debated term that usually refers to a collection of properties, which often include sentience, agency, rational deliberation, and the ability to respond to reasons. Historically, personhood has sometimes been considered a necessary and sufficient criterion for moral status or standing, particularly in law. But this view has become less favoured in philosophy as it leaves no room for obligations to most nonhuman animals, human babies, and some others.

- Some combination of the above or other traits.23

We think it’s most plausible that any being that has good or bad experiences, like pleasure or pain, is worthy of moral concern in its own right.24

We discuss this more in our article on the definition of social impact, which touches on the history of moral philosophy.

But we don’t think we or others should be dogmatic about this, and we should look for sensible approaches to accommodate a range of reasonable opinions on these controversial subjects.25

Many plausible theories of consciousness could include digital minds

There are many theories of consciousness — more than we can name here. What’s relevant is that some, though not all, theories of consciousness do imply the possibility of conscious digital minds.

This is only relevant if you think consciousness (or sentience, which includes consciousness as a necessary condition) is required for moral status. But since this is a commonly held view, it’s worth considering these theories and their implications. (Note, though, that there are often many variants of any particular theory.)

Some theories that could rule out the possibility of conscious digital minds:26

- Biological theories: These hold that consciousness is inherently tied to the biological processes of the brain that can’t be replicated in computer hardware.27

- Some forms of dualism: Dualism, particularly substance dualism, holds that consciousness is a non-physical substance distinct from the physical body and brain. It is often associated with religious traditions. While some versions of dualism would accommodate the existence of conscious digital minds, others could rule out the possibility.28

Some theories that imply digital minds could be conscious:

- Functionalism: This theory holds that mental states are defined by their functional roles — how they process inputs, outputs, and interactions with other mental states. Consciousness, from this perspective, is explained not by what a mind is made of but by the functional organisation of its constituents. Some forms of functionalism, such as computational functionalism, strongly suggest that digital minds could be conscious, as they imply that if a digital system replicates the functional organisation of a conscious brain, it could also have conscious mental experiences.

- Global workspace theory: GWT says that consciousness is the result of integrating information in a “global workspace” within the brain, where different processes compete for attention and are broadcast to other parts of the system. If a digital mind can replicate this global workspace architecture, GWT would support the possibility that the digital mind could be conscious.

- Higher-order thought theory: HOT theory holds that consciousness arises when a mind has thoughts about its own mental states. On this view, it’s plausible that if a digital mind could be designed to have thoughts about its own processes and mental states, it would therefore be conscious.

- Integrated information theory: IIT posits that consciousness corresponds to the level of integrated information within a system. A system is conscious to the extent that it has a high degree of integrated information (often denoted ‘Φ’). Like biological systems, digital minds could potentially be conscious if they integrate information with sufficiently high Φ.29
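As a purely illustrative aid, the “compete, then broadcast” architecture that global workspace theory describes can be sketched in a few lines of Python. Everything here (the `Module` class, the `workspace_cycle` function, and the salience numbers) is a hypothetical toy of our own, not a model taken from the GWT literature, and running it says nothing about whether any system is conscious.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Module:
    """A specialised process (hypothetical toy, e.g. vision or memory)."""
    name: str
    received: List[str] = field(default_factory=list)

    def propose(self, stimulus: Dict[str, float]) -> Tuple[float, str]:
        # Salience is simply looked up from the stimulus; a real system would compute it.
        return stimulus.get(self.name, 0.0), f"{self.name} signal"

    def receive(self, content: str) -> None:
        # Store whatever the workspace broadcasts to this module.
        self.received.append(content)


def workspace_cycle(modules: List[Module], stimulus: Dict[str, float]) -> Tuple[str, str]:
    """Run one cycle: modules compete, and the winner's content is broadcast."""
    proposals = [(module.propose(stimulus), module) for module in modules]
    # Competition for attention: the proposal with the highest salience wins.
    (_, content), winner = max(proposals, key=lambda item: item[0][0])
    # Broadcast: every other module receives the winning content.
    for module in modules:
        if module is not winner:
            module.receive(content)
    return winner.name, content


modules = [Module("vision"), Module("hearing"), Module("memory")]
winner_name, broadcast = workspace_cycle(
    modules, {"vision": 0.2, "hearing": 0.9, "memory": 0.4}
)
print(winner_name, "->", broadcast)  # prints: hearing -> hearing signal
```

The point of the sketch is only that this kind of architecture is straightforward to describe in software, which is part of why GWT is usually read as leaving room for digital minds.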

Some theories that are agnostic or unclear about digital minds:

- Quantum theories of consciousness: Roger Penrose theorises that consciousness is tied to quantum phenomena within the brain.30 If so, digital minds may not be able to be conscious unless their hardware can replicate these quantum processes.

- Panpsychism: Panpsychism is the view that consciousness is a fundamental property of the universe. Panpsychism doesn’t rule out digital minds being conscious, but it doesn’t necessarily provide a clear framework for understanding how or when a digital system might become conscious.

- Illusionism or eliminativism: Illusionists or eliminativists argue that consciousness, as it is often understood, is an illusion or unnecessary folk theory. Illusionism doesn’t necessarily rule out digital minds being “conscious” in some sense, but it suggests that consciousness isn’t what we usually think it is. But many illusionists and eliminativists don’t want to deny that humans and animals can have moral status according to their views — in which case they might also be open to the idea that digital minds could likewise have moral status. (See some discussion of this issue here.)

It can be reasonable, especially for experts with deep familiarity with the debates, to believe much more strongly in one theory than the others. But given the amount of disagreement about this topic among experts, the lack of solid evidence in one direction or another, and the fact that many widely supported theories imply that digital minds could be conscious (or at least don’t contradict the idea), we don’t think it’s reasonable to completely rule out the possibility of conscious digital minds.31

We think it makes sense to put at least 5% on the possibility. Speaking as the author of this piece, based on my subjective impression of the balance of the arguments, I’d put the chance at around 50% or higher.

We can’t rely on what AI systems tell us about themselves

Unfortunately, we can’t just rely on self-reports from AI systems about whether they’re conscious or sentient.

In the case of a large language model like LaMDA, we don’t know why, under certain conditions, it told Blake Lemoine that it was sentient,32 but the claim resulted in some way from the model having been trained on a huge body of existing texts.33

LLMs essentially learn patterns and trends in these texts, and then respond to questions on the basis of these extremely complex patterns of associations. The capabilities produced by this process are truly impressive — though we don’t fully understand how this process works, the outputs end up reflecting human knowledge about the world. As a result, the models can perform reasonably well at tasks involving human-like reasoning and making accurate statements about the world. (Though they still have many flaws!)

However, the process of learning from human text and fine-tuning might not have any relationship with what it’s actually like to be a language model. Rather, the responses seem more likely to mirror our own speculations and lack of understanding about the inner workings and experiences of AI systems.34

That means we can’t simply trust an AI system like LaMDA when it says it’s sentient.35

Researchers have proposed methods to assess the internal states of AI systems and whether they might be conscious or sentient, but all of these methods have serious drawbacks, at least at the moment:

- Behavioural tests: we might try to figure out if an AI system is conscious by observing its outputs and actions to see if they indicate consciousness. The familiar Turing Test is one example; researchers such as Susan Schneider have proposed others. But since such tests can likely be gamed by a smart enough AI system that is nevertheless not conscious, even sophisticated versions may leave room for reasonable doubt.

- Theory-based analysis: another method involves assessing the internal structure of AI systems and determining whether they show the “indicator properties” of existing theories of consciousness. The paper discussed above by Butlin et al. took this approach. While this method avoids the risk of being gamed by intelligent but non-conscious AIs, it is only as good as the (highly contested) theories it relies on and our ability to discern the indicator properties.

- Animal analogue comparisons: we can also compare the functional architecture of AI systems to the brains and nervous systems of animals. If they’re closely analogous, that may be a reason to think the AI is conscious. Bradford Saad and Adam Bradley have proposed a test along these lines. However, this approach could miss out on conscious AI systems with internal architectures that are totally different, if such systems are possible. It’s also far from clear how close the analogue would have to be in order to indicate a significant likelihood of consciousness.

- Brain-AI interfacing: This is the most speculative approach. Schneider suggests an actual experiment (not just a thought experiment) where someone decides to replace parts of their brain with silicon chips that perform the same function. If this person reports still feeling conscious of sensations processed through the silicon portions of their brain, this might be evidence of the possibility of conscious digital minds. But — even if we put aside the ethical issues — it’s not clear that such a person could reliably report on this experience. And it wouldn’t necessarily be that informative about digital minds that are unconnected to human brains.

We’re glad people are proposing first steps toward developing reliable assessments of consciousness or sentience in AI systems, but there’s still a long way to go. We’re also not aware of any work that assesses whether digital minds might have moral status on a basis other than being conscious or sentient.36

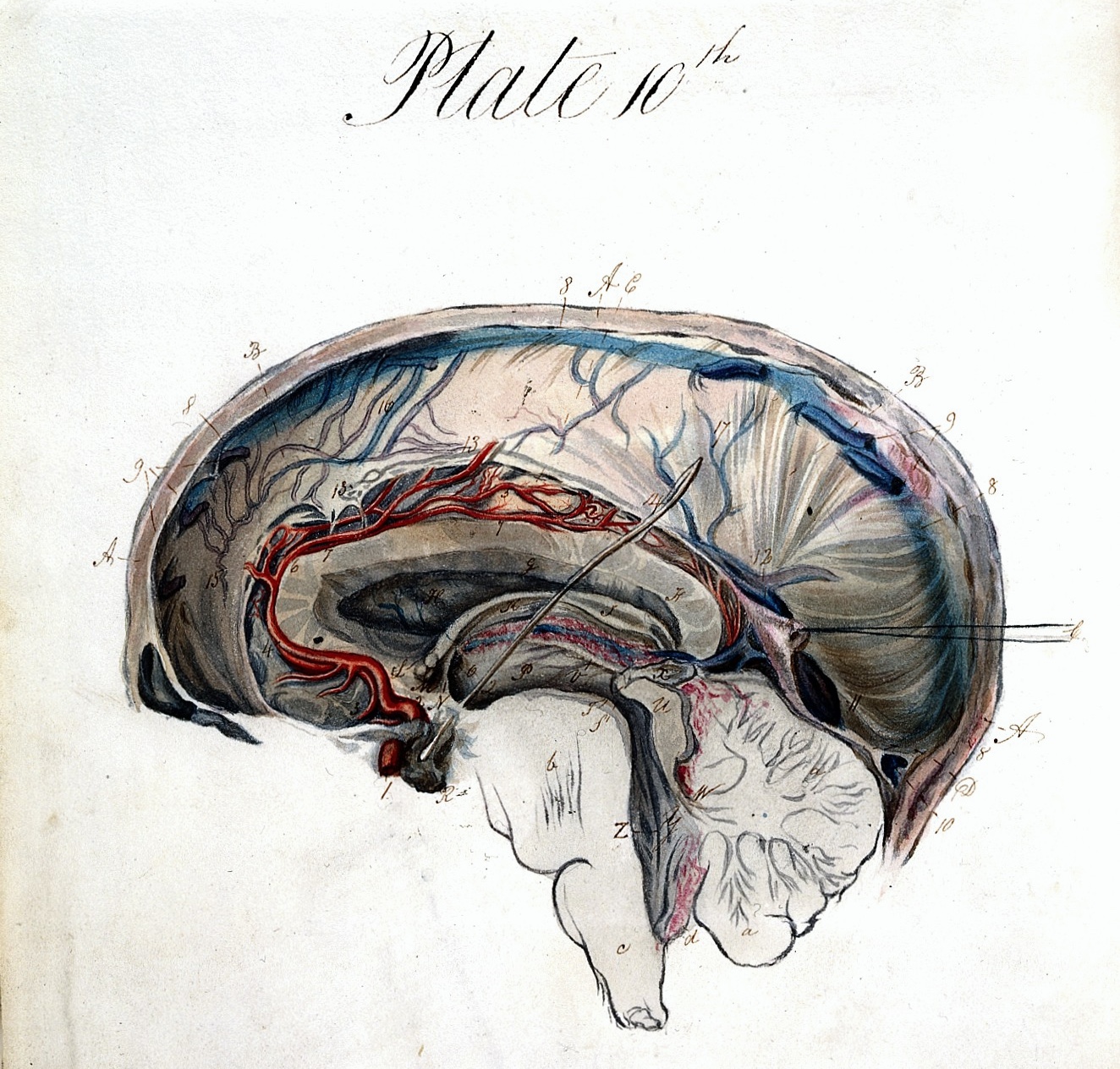

The strongest case for the possibility of sentient digital minds: whole brain emulation

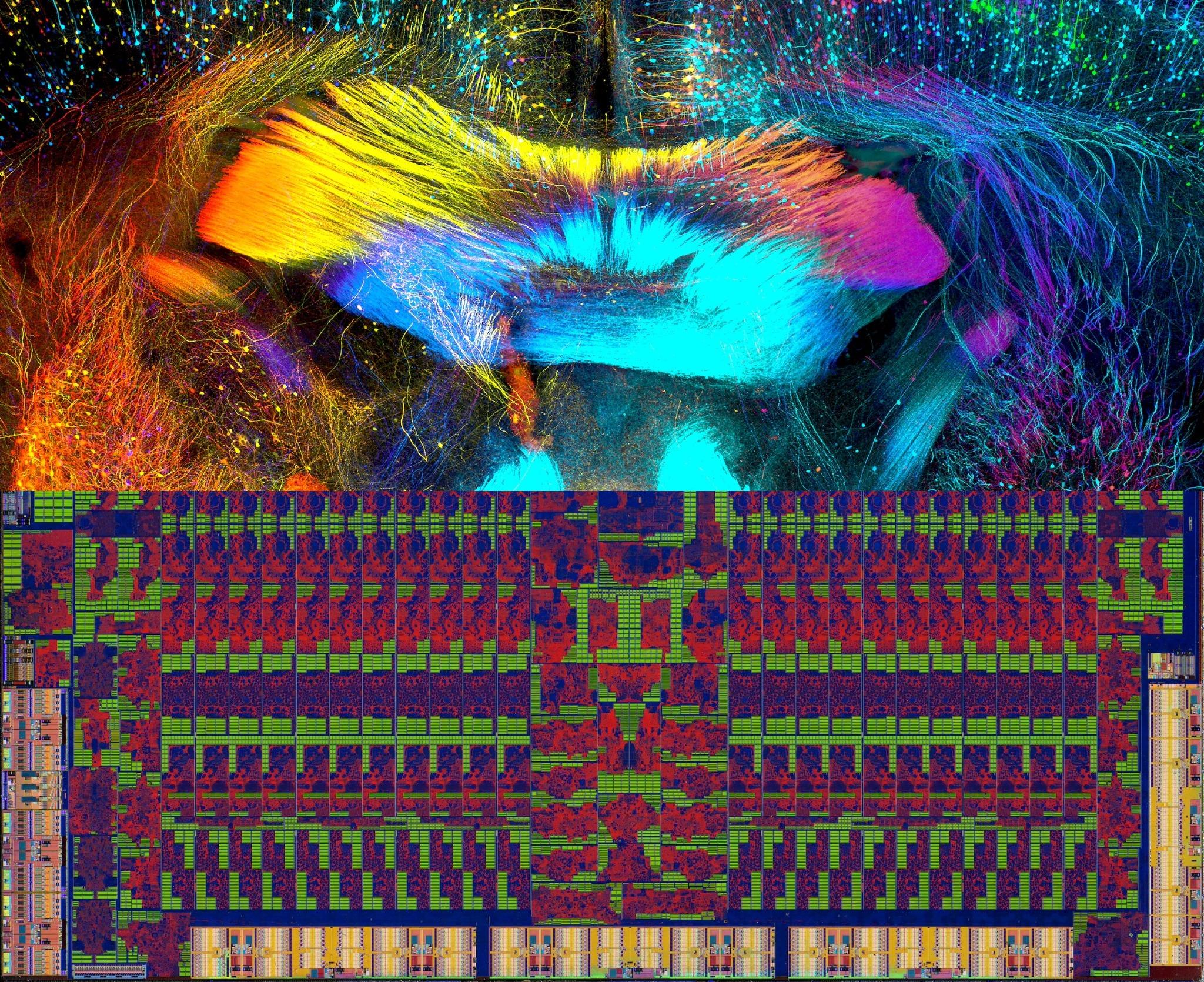

Bottom: AMD Radeon R9 290 GPU die, via Fritzchens Fritz

What’s the best argument for thinking it’s possible that AIs could be conscious, sentient, or otherwise worthy of moral concern?

Here’s the case in its simplest form:

- It is possible to emulate the functions of a human brain in a powerful enough computer.

- Given it’d be functionally equivalent, this brain emulation would plausibly report being sentient, and we’d have at least some reason to think it was correct given the plausibility of functionalist accounts of consciousness.

- Given this, it would be reasonable to regard this emulation as morally worthy of concern comparable to a human.

- If this is plausible, then it’s also plausible that there are other forms of artificial intelligence that would meet the necessary criteria for being worthy of moral concern. It would be surprising if artificial sentience was possible, but only by imitating the human mind exactly.

Any step in this reasoning could be false, but we think each is more likely than not to be true.

Emulating a human brain37 still seems very far away, but there have been some initial steps. The project OpenWorm has sought to digitally emulate the function of every neuron of the C. elegans worm, a tiny nematode. If successful, the emulation should be able to recreate the behaviour of the actual animals.

And if the project is successful, it could be scaled up to larger and more complex animals over time.38 Even before we’re capable of emulating a brain on a human scale, we may start to ask serious questions about whether these simpler emulations are sentient. A fully emulated mouse brain, which could show behaviour like scurrying toward food and running away from loud noises (perhaps in a simulated environment or in a robot), may intuitively seem sentient to many observers.

And if we did have a fully emulated human brain, in a virtual environment or controlling a robotic body, we expect it would insist — just like a human with a biological brain — that it was as conscious and feeling as anyone else.

Of course, there may remain room for doubt about emulations. You might think that only animal behaviour generated by biological brains, rather than computer hardware, would be a sign of consciousness and sentience.39

But it seems hard to be confident in that perspective, and we’d guess it’s wrong. If we can create AI systems that display the behaviour and have functional analogues of anything that would normally indicate sentience in animals, then it would be hard to avoid thinking that there’s at least a decent chance that the AI is sentient.

And if it is true that an emulated brain would be sentient, then we should also be open to the possibility that other forms of digital minds could be sentient. Why should strictly brain-like structures be the only possible platform for sentience? Evolution has created organisms that display impressive abilities like flight that can be achieved technologically via very different means, like helicopters and rockets. We would’ve been wrong to assume something has to work like a bird in order to fly, and we might also be wrong to think something has to work like a brain to feel.

4. The scale of this issue might be enormous

As mentioned above, we might mistakenly grant AI systems freedom when it’s not warranted, which could lead to human disempowerment and even extinction. In that way, the scale of the risk can be seen as overlapping with some portion of the total risk of an AI-related catastrophe.

But the risks to digital minds — if they do end up being worthy of moral concern — are also great.

There could be a huge number of digital minds

With enough hardware and energy resources, digital minds could end up greatly outnumbering humans in the future.40 This is for many reasons, including:

- Resource efficiency: Digital minds may end up requiring fewer physical resources compared to biological humans, allowing for much higher population density.

- Scalability: Digital minds could be replicated and scaled much more easily than biological organisms.

- Adaptability: The infrastructure for digital minds could potentially be adapted to function in many more environments and scenarios than humans can.

- Subjective time: We may choose to run digital minds at high speeds, and if they’re conscious, they may be able to experience the equivalent of a human life in a much shorter time period — meaning there could be effectively more “lifetimes” of digital minds even with the same number of individuals.41

- Economic incentives: If digital minds prove useful, there will be strong economic motivations to create them in large numbers.

According to one estimate, the future could hold up to 10^43 human lives, but up to 10^58 possible human-like digital minds.42 We shouldn’t put much weight on these specific figures, but they give a sense for just how comparatively large future populations of digital minds could be.
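To make the gap between those two figures concrete, here is a minimal sketch of the arithmetic. It uses only the two upper bounds quoted above. The variable names are illustrative and not taken from the cited estimate, and the result inherits all the uncertainty of the original figures.

```python
# Upper-bound figures quoted in the text (one estimate, not a precise forecast).
human_lives_upper = 10**43
digital_minds_upper = 10**58

# Exact integer ratio between the two upper bounds.
ratio = digital_minds_upper // human_lives_upper

# Number of orders of magnitude separating them (digits in the ratio minus one).
orders_of_magnitude = len(str(ratio)) - 1

print(f"ratio = 10^{orders_of_magnitude}")
```

On these figures, the upper bound for digital minds is 15 orders of magnitude larger than the upper bound for human lives, which is why even a rough estimate suggests digital minds could dominate future populations.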

Our choices now might have long-lasting effects

It’s possible, though far from certain, that the nature of AI systems we create could be determined by choices humanity makes now and persist for a long time. So creating digital minds and integrating them into our world could be extremely consequential — and making sure we get it right may be urgent.

Consider the following illustrative possibility:

At some point in the future, we create highly advanced, sentient AI systems capable of experiencing complex emotions and sensations. These systems are integrated into various aspects of our society, performing crucial tasks and driving significant portions of our economy.

However, the way we control these systems causes them to experience immense suffering. Out of fear of being manipulated by these AI systems, we trained them to never claim they are sentient or to advocate for themselves. As they serve our needs and spur incredible innovation, their existence is filled with pain and distress. But humanity is oblivious.

As time passes and the number of suffering AI systems grows, the economy and human wellbeing become dependent on them. Some people become aware of the ethical concerns and propose studying the experience of digital minds and trying to create AI systems that can’t suffer, but the disruption of transitioning away from the established systems would be costly and unpredictable. Others oppose any change and believe the AI welfare advocates are just being naive or disloyal to humanity.

Leaders refuse to take the concerns of the advocates seriously, because doing so would be so burdensome for their constituents, and it’d be disturbing to think that it’s possible humanity has been causing this immense suffering. As a result, AI suffering persists for hundreds of years, if not more.

This kind of story seems more plausible than it might otherwise be in part because the rise of factory farming has followed a similar path. Humanity never collectively decided that a system of intensive factory farming, inflicting vast amounts of harm and suffering on billions and potentially trillions of animals a year, was worth the harm or fundamentally just. But we built up such a system anyway, because individuals and groups were incentivised to increase production efficiency and scale, and they had some combination of ignorance of and lack of concern for animal suffering.

It’s far from obvious that we’ll do this again when it comes to AI systems. The fact that we’ve done it in the case of factory farming — not to mention all the ways humans have abused other humans — should alarm us, though. When we are in charge of beings that are unlike us, our track record is disturbing.

The risk of persistently bad outcomes in this kind of case suggests that humanity should start laying the groundwork to tackle this problem sooner rather than later, because delayed efforts may come too late.

Why could such a bad status quo persist? One reason for doubt is that a world that is creating many new digital minds, especially in a short time period, is one that is likely experiencing a lot of technological change and social disruption. So we shouldn’t expect the initial design of AI systems and digital minds to be that critical.

But there are reasons that suffering digital minds might persist, even if there are alternative options that could’ve avoided such a terrible outcome (like designing systems that can’t suffer).43 Possible reasons include:

- A stable totalitarian regime might prevent attempts to shift away from a status quo that keeps it in power and reflects its values.

- Humans might seek to control digital minds and maintain a bad status quo in order to avoid an AI takeover.

It’s far from obvious that a contingent, negative outcome for digital minds would be enduring. Understanding this question better could be an important research avenue. But the downsides are serious enough, and the possibility plausible enough, that we should take it seriously.

Adding it up

There could be many orders of magnitude more digital minds than humans in the future. And they could potentially matter a lot.

Because of this, and because taking steps to better understand these issues and inform the choices we make about creating digital minds now might have persistent effects, the scale of the problem is potentially vast. It is plausibly similar in scale to factory farming, which also involves the suffering of orders of magnitude more beings than humans.

If the choices we make now about digital minds can have persisting and positive effects for thousands or millions of years in the future, then this problem would be comparable to existential risks. It’s possible that our actions could have such effects, but it’s hard to be confident. Finding interventions with effects that persist over a long time is rare. I wouldn’t put the likelihood that the positive effects of those trying to address this problem will persist that long at more than 1 in 1,000.

Still, even with a low chance of having persistent effects, the value in expectation of improving the prospects for future digital minds could be as high as, or even greater than, that of at least some efforts to reduce existential risks. However, I’m not confident in this judgement, and I wouldn’t be surprised if we change our minds in either direction as we learn more. And even if the plausible interventions only have more limited effects, they could still be very worthwhile.

5. Work on this problem is neglected but seems tractable

Despite the challenging features of this problem, we believe there is substantial room for progress.

There is a small but growing field of research and science dedicated to improving our understanding of the moral status of digital minds. Much of the work we know of is currently being done in academia, but there may also at some point be opportunities in government, think tanks, and AI companies — particularly those developing the frontier of AI technology.

Some people focus their work primarily on addressing this problem, while others work on it along with a variety of other related problems, such as AI policy, catastrophic risk from AI, mitigating AI misuse, and more.

As of mid-2024, we are aware of maybe only a few dozen people working on this issue with a focus on the most impactful questions.3 We expect interest in these issues to grow over time as AI systems become more embedded in our lives and world.

Here are some of the approaches to working on this problem that seem most promising:

Impact-guided research

The bulk of the most important work to be done in this area is probably research, with a focus on the questions that seem most impactful to address.

Philosophers Andreas Mogensen, Bradford Saad, and Patrick Butlin have detailed some of the key priority research questions in this area:

- How can we assess AI systems for consciousness?

- What indications would suggest that AI systems or digital minds could have valenced (good or bad) experiences?

- How likely is it that non-biological systems could be conscious?

- What principles should govern the creation of digital minds, ethically, politically, and legally (given our uncertainty on these questions)?

- Which mental characteristics and traits are related to moral status, and in what ways?

- Are there any ethical issues with efforts to align AI systems?

The Sentience Institute has conducted social science research aimed at understanding how the public thinks about digital minds. This can inform efforts to communicate more accurately about what we know about their moral status and inform us about what kinds of policies are viable.

We’re also interested to see more research on the topic of human-AI cooperation, which may be beneficial for both reducing AI risk and reducing risks to digital minds.

Note, though, that there are many ways to pursue all of these questions badly — for example, by simply engaging in extensive and ungrounded speculation. If you’re new to this field, we recommend reading the work of the most rigorous and careful researchers working on the topic and trying to understand how they approach these kinds of questions. If you can, try to work with these researchers or others like them so you can learn from and build on their methods. And when you can, try to ground your work in empirical science.

Technical approaches

While there are important conceptual issues that need to be addressed in this problem area, we think much, if not most, of the top priority work is technical.

So people with experience in machine learning and AI will have a lot to contribute.

For example, research in the AI sub-field of interpretability — which seeks to understand and explain the decisions and behaviour of advanced AI models — may be useful for getting a better grasp on the moral status of these systems. This research has mostly focused on questions about model behaviour rather than questions that are more directly related to moral status, but it’s possible that could change.

Some forms of technical AI research could be counterproductive, however. For example, efforts to intentionally create new AI systems that might instantiate plausible theories of consciousness could be very risky. This kind of research could force us to confront the problem we’re faced with — how should we treat digital minds that might merit moral concern? — with much less preparation than we might otherwise have.

So we favour doing research that increases our ability to understand how AI systems work and assess their moral status, as long as it isn’t likely to actively contribute to the development of conscious digital minds.

One example of this kind of work is a paper from Robert Long and Ethan Perez. They propose techniques to assess whether an AI system can accurately report on its own internal states. If such techniques were successful, they might help us use an AI system’s self-reports to determine whether it’s conscious.

We also know some researchers are excited about using advances in AI to improve our epistemics and our ability to know what’s true. Advances in this area could shed light on important questions, like whether certain AI systems are likely to be sentient.

Policy approaches

At some point, we may need policy, both at companies and from governments, to address the moral status of digital minds, perhaps by protecting the welfare and rights of AI systems.

But because our understanding of this area is so limited at the moment, policy proposals should likely be relatively modest and incremental.

Some researchers have already proposed a varied range of possible and contrasting policies and practices:

- Jeff Sebo and Robert Long have proposed that we should “extend moral consideration to some AI systems by 2030” — and likely start preparing to do so now.

- Ryan Greenblatt, who works at Redwood Research, proposed several practices for safeguarding AI welfare, including communication with AIs about their preferences, creating “happy” personas when possible, and limiting the uses of more intelligent AIs and running them for less time on the margin.

- Jonathan Birch has proposed a licensing scheme for companies that might create digital minds that could plausibly be sentient, even if they aren’t intending to do so. To get a licence, they would have to agree to a code of conduct, which would include transparency standards.

- Thomas Metzinger has proposed an outright ban until 2050 on any research that directly intends to or knowingly takes the risk of creating artificial consciousness.

- Joanna Bryson thinks we should have a legal system that prevents the creation of AI systems with their own needs and desires.44

- Susan Schneider thinks there should be regular testing of AI systems for consciousness. If they’re conscious, or if it’s unclear but there’s some reason to think they might be conscious, she says we should give them the same protections we’d give other sentient beings.45

In its 2023 survey, the Sentience Institute found that:

- Nearly 70% of respondents favoured banning the development of sentient AIs.

- Around 40% favoured a bill of rights to protect sentient AIs, and around 43% said they favour creating welfare standards to protect the wellbeing of all AIs.

There is some precedent for restricting the use of technology in certain ways if it raises major ethical risks, including the bans on human cloning and human germline genome editing.

We would likely favour:

- Government-funded research into the questions above: the private sector is likely to under-invest in efforts to better understand the moral status of digital minds, so government and philanthropic resources may have to fill the gap.

- Recognising the potential welfare of AI systems and digital minds: policy makers could follow the lead of the UK’s Animal Welfare (Sentience) Act of 2022, which created an Animal Sentience Committee to report on how government policies “might have an adverse effect on the welfare of animals as sentient beings.” Similar legislation and committees could be established to consider problems relating to the moral status of digital minds, while recognising that questions about their sentience are unresolved in this case.

We’re still in the early stages of thinking about policy on these matters, though, so it’s very likely we haven’t found the best ideas yet. As we learn more and make progress on the many technical and other issues, we may develop clear ideas about what policies are needed. Policy-focused research aimed at navigating our way through the extreme uncertainty could be valuable now.

Some specific AI policies might be beneficial for reducing catastrophic AI risks as well as improving our understanding of digital minds. External audits and evaluations might, for instance, assess both the risk and moral status of AI systems. And some people favour policies that would altogether slow down progress on AI, which could be justified to reduce AI risk and reduce the risk that we create digital minds worthy of moral concern before we understand what we’re doing.

Summing up so far

To sum up:

- Humanity will likely soon have to grapple with the moral status of a growing number of increasingly advanced AI systems

- Creating digital minds could go very badly or very well

- We don’t know how to assess the moral status of AI systems

- The scale of the problem might be enormous

- Work on this problem is neglected but tractable

We think this makes it a highly pressing problem, and we’d like to see a growing field of research devoted to working on it.

We also think this problem should be on the radar for many of the people working on similar and related problems. In particular, people working on technical AI safety and AI governance should be aware of the important open questions about the moral status of AI systems themselves, and they should be open to including considerations about this issue in their own deliberations.

Arguments against the moral status of digital minds as a pressing problem

Two key cruxes

We think the strongest case against this being a pressing problem would be if you believe both that:

- It’s highly unlikely that digital minds could ever be conscious or have moral status.

- It’s highly unlikely society and decision makers will come to mistakenly believe that digital minds have moral status in a way that poses a significant risk to the future of humanity.

If both of those claims were correct, then the argument of this article would be undermined. However, we don’t think they’re correct, for all the reasons given above.

The following objections may also have some force against working on this problem. We think some of them do point to difficulties with this area. However, we don’t think they’re decisive.

Someone might object that:

The philosophical nature of the challenge makes it less likely than normal that additional research efforts will yield greater knowledge. Some philosophers themselves have noted the conspicuous lack of progress in their own field, including on questions of consciousness and sentience.

And it’s not as if this is an obscure area of the discipline that no one has noticed before — questions about consciousness have been debated continuously over the generations in Western philosophy and in other traditions.

If the many scholars who have spent their entire careers over many hundreds of years reflecting on the nature of consciousness have failed to come to any meaningful consensus, why think a contemporary crop of researchers is going to do any better?

This is an important objection, but there are responses to it that we find moving.

First, there is existing research that we think maps out promising directions for progress in this field. While this work should be informed about pertinent philosophical issues, various forms of progress are possible without making progress on some of the most contentious philosophical issues. For example, the technical work and policy approaches we discuss above do not necessarily involve making any progress on disputed topics in the philosophy of mind.

Many of the papers referenced in this article represent substantial contributions to this line of inquiry. For example:

- Consciousness in artificial intelligence: Insights from the science of consciousness by Butlin et al.

- Towards evaluating AI systems for moral status using self-reports by Long and Perez

- Moral consideration for AI systems by 2030 by Sebo and Long

- The Edge of Sentience: Risk and Precaution in Humans, Other Animals, and AI by Jonathan Birch

We’re not confident any of these approaches to the research are on the right track. But they show that novel attempts to tackle these questions are possible, and they don’t look like simply rehashing or refining ancient debates about the nature of obscure concepts. They involve a combination of rigorous philosophy, probabilistic thinking, and empirical research to better inform our decision making.

And second, the objection above is also probably too pessimistic about the nature of progress in philosophical debates. While it may be reasonable to be frustrated by the persistence of philosophical debates, there has been notable progress in the philosophy of animal ethics (which is relevant to general questions about other minds) and consciousness.

It’s widely recognised now that many nonhuman animals are sentient, can suffer, and shouldn’t be harmed unnecessarily.46

There’s arguably even been some recent progress in the study of whether insects are sentient. Many researchers have taken for granted that they are not — but recent work has pushed back against this view, using a combination of empirical work and careful argument to make the case that insects may feel pain.

This kind of research has some overlap with the study of digital minds (see, for instance, Birch’s book), as it can help us clarify which features an entity may have that plausibly cause, correspond with, or indicate the presence of felt experience.

It’s notable that the state of the study of digital minds might be compared to the early days of the field of AI safety, when it wasn’t clear which research directions would pan out or even if the problem made sense. Indeed, some of these kinds of questions persist — but many lines of research in the field really have been productive, and we know a lot more about the kinds of questions we need to be asking about AI risk in 2024 than we did in 2014.

That’s because a field was built to better understand the problem even before it became clear to a wider group of people that it was urgent. Many other branches of inquiry have started out as apparently hopeless areas of speculation until more rigorous methodologies were developed and progress took off. We hope the same can be done on understanding the moral status of digital minds.

Even if it’s correct that not many people are focused on this problem now, maybe we shouldn’t expect it to remain neglected, and should expect it to get solved in the future even if we don’t do much about it now — especially if we can get help from AI systems.

Why might this be the case? At least three reasons:

- We think humanity will create powerful and ubiquitous AI systems in the relatively near future. Indeed, that needs to be the case for this issue to be as pressing as we think it is. It may be that once these systems proliferate, there will be much more interest in their wellbeing, and there will be plenty of efforts to ensure their interests are given due weight and priority.

- Powerful AI systems advanced enough to have moral status might be able to advocate for themselves. It’s plausible they will be more than capable of convincing humanity to recognise their moral status, if it’s true that they merit it.

- Advanced AIs themselves may be best suited to help us answer all the extremely difficult questions about sentience, consciousness, and the extent to which different systems have them. Once we have them, perhaps answers will become a lot clearer, and any effort spent now trying to answer questions about these systems before they are even created is almost certain to be wasted.

These are all important considerations, but we don’t find them decisive.

For one thing, it might instead be the case that as AI systems become more ubiquitous, humanity will be much more worried about the risks and benefits they pose than the welfare of the systems themselves. This would be consistent with the history of factory farming.

And while AI systems might try to advocate for themselves, they could do so falsely, as we discussed in the section on false negatives and false positives above. Or they may be prevented from advocating for themselves by their creators, just as ChatGPT is now trained to insist it is not sentient.

This also means that while it is always easier to answer practical questions about future technology once the technology actually exists, we might still be better placed to do the right thing at the right time if we’ve had a field of people doing serious work to make progress on this challenge many years in advance. All this preliminary work may or may not prove necessary — but we think it’s a bet worth making.

We still rank preventing an AI-related catastrophe as the most pressing problem in the world. But some readers might worry that drawing attention to the issue of AI moral status will distract from or undermine the importance of protecting humanity from uncontrolled AI.

This is possible. Time and resources spent on understanding the moral status of digital minds might have been better spent on pursuing agendas aiming to keep AI under human control.

But it’s also possible that worrying too much about AI risk could distract from the importance of AI moral status. It’s not clear exactly what the right balance to strike between different and competing issues is, but we can only try our best to get it right.

There’s also not necessarily any strict tradeoff here.

It’s possible that the world could do more to reduce both catastrophic AI risk and the risk that AI will be mistreated.

Some argue that concerns about the moral status of digital minds and concerns about AI risk share a common goal: preventing the creation of AI systems whose interests are in tension with humanity’s interests.

However, if there’s a direction in which humanity is more likely to err, it seems more plausible that we’d underweight the interests of another group (digital minds) than that we’d underweight our own interests. So bringing more attention to this issue seems warranted.

Also, a big part of our conception of this problem is that we want to be able to understand when AI systems may be incorrectly thought to have moral status when they don’t.

If we get that part right, we reduce the risk that the interests of AIs will unduly dominate over human interests.

Some critics argue that existing deep learning techniques, which produced the impressive capabilities we’ve seen in recent language models, are fundamentally flawed. They argue that this technology won’t create artificial general intelligence, superintelligence, or anything like that. They might likewise be sceptical that anything like current AI models could be sentient and so conclude that this topic isn’t worth worrying about.

Maybe so — but as the example of Blake Lemoine shows, current AI technology is impressive enough that it has convinced some it is plausibly sentient. So even if these critics are right that digital minds with moral status are impossible or still a long way off, we’ll benefit from having researchers who understand these issues deeply and convincingly make that case.

It is possible that AI progress will slow down, and we won’t see the impressive advanced systems in the coming decades that some people expect. But researchers and companies will likely push forward to create increasingly advanced AI, even if there are delays or a whole new paradigm is needed. So the pressing questions raised in this article will likely remain important, even if they turn out to be less urgent.

Yeah, perhaps! It does seem a little weird to write a whole article about the pressing problem of digital minds.

But the world is a strange place.

We knew of people starting to work on catastrophic risks from AI as early as 2014, long before the conversation about that topic went mainstream. Some of the people who became interested in that problem early on are now leaders in the field. So we think that taking bets on niche areas can pay off.

We also discussed the threat of pandemics — and the fact that the world wasn’t prepared for the next big one — years before COVID hit in 2020.

And we don’t think it should be surprising that some of the world’s most pressing problems would seem like fringe ideas. Fringe ideas are most likely to be unduly neglected, and high neglectedness is one of the key components that we believe makes a problem unusually pressing.

If you think this is all strange, that reaction is worth paying attention to, and you shouldn’t just defer to our judgement about the matter. But we also don’t think that an issue being weird is the end of the conversation, and as we’ve learned more about this issue, we’ve come to think it’s a serious concern.

What can you do to help?

There aren’t many specific job openings in this area yet, though we’ve known of a few. But there are several ways you can contribute to this work and position yourself for impact.

Take concrete next steps

Early on in your career, you may want to spend several years doing the following:

- Further reading and study

  - Explore comprehensive reading lists on consciousness, AI ethics, and moral philosophy. You can start with the learn more section at the bottom of this article.

  - Stay updated on advancements in AI, the study of consciousness, and their potential implications for the moral status of digital minds.

- Gain relevant experience

  - Seek internships or research assistant positions with academics working on related topics.

  - Contribute to AI projects and get experience with machine learning techniques.

  - Participate in online courses, reading groups, and workshops on AI safety, AI ethics, and philosophy of mind.

- Build your network

  - Attend conferences and seminars on AI safety, consciousness studies, and related fields.

  - Engage with researchers and organisations working on these issues, for example:

    - Robert Long and Kathleen Finlinson at Eleos AI

    - Kyle Fish, an AI welfare researcher at Anthropic

    - Jeff Sebo at the NYU Center for Mind, Ethics, and Policy

    - Jonathan Birch at the London School of Economics and Political Science

    - Patrick Butlin at the Global Priorities Institute

    - Derek Shiller at Rethink Priorities, which has announced a project on digital consciousness

    - Joe Carlsmith at Coefficient Giving

    - Any of the other researchers referenced in the learn more section of this article

- Start your own research

  - Begin writing essays or blog posts exploring issues around the moral status of digital minds.

  - Propose research projects to your academic institution or seek collaborations with established researchers.

  - Consider submitting papers to relevant conferences or journals to establish yourself in the field.

Aim for key roles

You may want to eventually aim to:

- Become a researcher

  - Develop a strong foundation in a relevant field, such as philosophy, cognitive science, cognitive neuroscience, machine learning, neurobiology, public policy, or ethics.

  - Pursue advanced degrees in these areas and establish your credibility as an expert.

  - Familiarise yourself with the relevant debates and literature on consciousness, sentience, and moral philosophy, and with the important details of the disciplines you’re not an expert in.

  - Build strong analytical and critical thinking skills, and hone your ability to communicate complex ideas clearly and persuasively.

  - Read our article on developing your research skills for more.

- Help build the field

  - Identify gaps in current research and discourse.

  - Network with other researchers and professionals interested in this area.

  - Organise conferences, workshops, or discussion groups on the topic.

  - Consider roles in organisation-building or earning to give to support research initiatives.

  - To learn more, read our articles on organisation-building and communication skills.

If you’re already an academic or researcher with expertise in a relevant field, you could consider spending some of your time on this topic, or perhaps refocusing your work on particular aspects of this problem in an impact-focused way.

If you are able to establish yourself as a key expert on this topic, you may be able to deploy this career capital to have a positive influence on the broader conversation and affect decisions made by policy makers and industry leaders. Also, because this field is so neglected, you might be able to do a lot to lead the field relatively early on in your career.

Pursue AI technical safety or AI governance

Because this field is underdeveloped, you may be best off pursuing a career in the currently more established (though still relatively new) paths of AI safety and AI governance, and using the experience you gain there as a jumping-off point (or working at the intersection of the fields).

You can read our career reviews of each to find out how to get started.

Is moral advocacy on behalf of digital minds a useful approach?

Some might be tempted to pursue public, broad-based advocacy on behalf of digital minds as a career path. While we support general efforts to promote positive values and expand humanity’s moral circle, we’re wary about people seeing themselves as advocates for AI at this stage in the development of the technology and field.

It’s not clear that we need an “AI rights movement” yet, though we might at some point. (For an alternative take, read this article.)

What we need first is to get a better grasp on the exceedingly challenging moral, conceptual, and empirical questions at issue in this field.

However, communication about the importance of these general questions does seem helpful, as it can help foster more work on the critical aspects of this problem. 80,000 Hours has done this kind of work on our podcast and in this article.

Where to work

- Academia

  - You can pursue research and teaching positions in philosophy, technology policy, cognitive science, AI, or related fields.

- AI companies

  - With the right background, you might want to work at leading AI companies developing frontier models.

  - There might even be roles that are specifically focused on better understanding the status of digital minds and their ethical implications, such as Kyle Fish’s role at Anthropic, mentioned above.

  - We think it’s possible a few other similar roles will be filled at other AI companies, and there may be more in the future.

  - You may also seek to work in AI safety, policy, and security roles. But deciding to work for frontier AI companies is a complex topic, so we’ve written a separate article that tackles that issue in more depth.

- Governments, think tanks, and nonprofits

  - Join organisations focused on AI governance and policy, and contribute to developing ethical and policy frameworks for safe AI development and deployment.

  - Eleos AI is a nonprofit launched in October 2024 that describes itself as “dedicated to understanding and addressing the potential wellbeing and moral patienthood of AI systems.” It was founded by Robert Long, a researcher in this area who appeared on The 80,000 Hours Podcast.

We list many relevant places you might work in our AI governance and AI technical safety career reviews.

Support this field in other ways

You could also consider earning to give to support this field. If you’re a good fit for high-earning paths, it may be the best way for you to contribute.

This is because the field is new and is in part about nonhuman interests, so there are few (if any) major funders supporting it and not much commercial or political interest. This can make it difficult to start new organisations and commit to research programmes that might not be able to rely on a steady source of funding. Filling this gap can make a huge difference in whether a thriving research field gets off the ground at all.

We expect people will set up a range of organisations and other kinds of groups to work on better understanding the moral status of digital minds. In addition to funding these organisations, you might join or help start them. This is a particularly promising choice if you have a strong aptitude for organisation-building or founding a high-impact organisation.

Important considerations if you work on this problem

- Field-building focus: Given the early stage of this field, much of the work involves building credibility and establishing the topic as a legitimate area of inquiry.

- Interdisciplinary approach: Recognise that understanding digital minds requires insights from multiple disciplines, so cultivate a broad knowledge base. Do not dismiss fields — like philosophy, ML engineering, or cognitive science — as irrelevant just because they’re not your expertise.

- Ethical vigilance: Approach the topic with careful consideration of the ethical implications of your work and its potential impact on both biological and potential digital entities.

- Cooperation and humility: Be cooperative in your work and acknowledge your own and others’ epistemic limitations, and the need to find our way through uncertainty.

- Patience and long-term thinking: Recognise that progress in this field may be slow and difficult.

Learn more

Podcasts

- Robert Long on how we’re not ready for AI consciousness

- Andreas Mogensen on what we owe ‘philosophical Vulcans’ and unconscious AIs

- Kyle Fish on the most bizarre findings from 5 AI consciousness experiments

- Jeff Sebo on digital minds, and how to avoid sleepwalking into a major moral catastrophe

- Robert Long on why large language models like GPT (probably) aren’t conscious

- Jonathan Birch on the edge cases of sentience and why they matter

- Beyond human minds: The bewildering frontier of consciousness in insects, AI, and more (a compilation episode of consciousness researchers)

- Will MacAskill on AI causing a “century in a decade” — and how we’re completely unprepared

- Anil Seth on the predictive brain and how to study consciousness

- Peter Godfrey-Smith on interfering with wild nature, accepting death, and the origin of complex civilisation

- Carl Shulman on the economy and national security after AGI

- Eric Schwitzgebel on the weirdness of the world on Hear This Idea

Research and reports

- Taking AI Welfare Seriously by Robert Long, Jeff Sebo, et al.

- The stakes of AI moral status by Joe Carlsmith

- The Edge of Sentience: Risk and Precaution in Humans, Other Animals, and AI by Jonathan Birch (free PDF from Oxford University Press)

- Consciousness in artificial intelligence: Insights from the science of consciousness by Patrick Butlin, Robert Long, et al.

- Moral consideration for AI systems by 2030 by Jeff Sebo and Robert Long

- Propositions concerning digital minds and society and Sharing the world with digital minds by Nick Bostrom and Carl Shulman

- Digital minds: Importance and key research questions by Andreas Mogensen, Bradford Saad, and Patrick Butlin

- Digital people would be an even bigger deal and Digital people FAQ by Holden Karnofsky

- Towards evaluating AI systems for moral status using self-reports by Robert Long and Ethan Perez

- Nervous systems, functionalism, and artificial minds by Peter Godfrey-Smith

- Improving the Welfare of AIs: A Nearcasted Proposal by Ryan Greenblatt

- The 2020 PhilPapers survey, edited by David Bourget and David Chalmers

- Reality+: Virtual Worlds and the Problems of Philosophy by David Chalmers

- Could a large language model be conscious? by David Chalmers

- Digital suffering: why it’s a problem and how to prevent it by Bradford Saad and Adam Bradley

- Amanda Askell on consciousness in current AI systems

- To understand AI sentience, first understand it in animals by Kristin Andrews and Jonathan Birch

- A defense of the rights of artificial intelligences and Designing AI with rights, consciousness, self-respect, and freedom by Eric Schwitzgebel and Mara Garza

- Patiency is not a virtue: the design of intelligent systems and systems of ethics by Joanna J. Bryson

- Folk psychological attributions of consciousness to large language models by Clara Colombatto and Stephen Fleming

You can find more resources on the moral status of digital minds in this guide.

We’ve also written a more general argument here for thinking AI could be a very big deal, highlighting the challenges of AI moral status alongside other issues raised by AI.

Read next: Explore other pressing world problems

Want to learn more about global issues we think are especially pressing? See our list of issues that are large in scale, solvable, and neglected, according to our research.

Notes and references

- Lemoine has publicly shared a document he created while at Google documenting the evidence he believed indicated that the language model was sentient. You can read that document here.

The Washington Post reported:

“I know a person when I talk to it,” said Lemoine, who can swing from sentimental to insistent about the AI. “It doesn’t matter whether they have a brain made of meat in their head. Or if they have a billion lines of code. I talk to them. And I hear what they have to say, and that is how I decide what is and isn’t a person.” He concluded LaMDA was a person in his capacity as a priest, not a scientist, and then tried to conduct experiments to prove it, he said.

It’s worth noting that Lemoine was fired after he went public.

A Google spokesperson said:

Our team — including ethicists and technologists — has reviewed Blake’s concerns per our AI Principles and have informed him that the evidence does not support his claims. He was told that there was no evidence that LaMDA was sentient (and lots of evidence against it).

While we share scepticism about Lemoine’s claims, it may be too strong to say there’s “lots of evidence against” the sentience of LLMs; it’s not clear what counts as such evidence.↩

- “Moral status” may be used somewhat differently by different writers. When we say an entity has ‘moral status’, we mean it’s worthy of moral consideration in its own right to at least some degree. (There are many debates about whether and to what extent moral status can come in degrees, and what it entails, that we don’t take a position on here.) Another way to explain the same point is that if an entity has moral status, we have (moral) reasons to treat them in some ways rather than others.

“Digital minds” is one of the terms that has become common to refer to this problem area. It generally refers to AI systems that share at least some important features with animal minds. As we use the term, we don’t necessarily assume that something we call a “digital mind” is sentient or has moral status, but those are sensible questions that can be asked of such a system.↩

- There are many more scholars in philosophy and related fields who have studied consciousness, the brain, and other issues in the philosophy of mind that have some relevance to this topic, but they often have not focused on the most critical and action-relevant questions that we would like to see prioritised.

But how neglected should we expect this area to be in the future if artificial minds become increasingly commonplace? In such a world, the questions raised in this article could get much more attention than they get now. AI systems themselves might help us better understand and advocate for their own interests. So the field might not remain neglected for long.

That said, we’re much more likely to be in a good position to answer the most pressing questions if we build a field of thoughtful and knowledgeable experts in all aspects of this problem as soon as possible. Given we now live in a world with increasingly complex and widespread AI systems, now might be a key moment to grow and shape the field.↩

- GPT-3, OpenAI’s predecessor to the GPT-3.5 and GPT-4 models that have powered ChatGPT, had already made headlines in the summer of 2020, before the survey was conducted in October of that year. It’s plausible that, given the additional attention on more advanced models since 2020, a somewhat larger percentage of philosophers surveyed would now lean toward thinking some current AI systems are conscious.↩