Shrinking AGI timelines: a review of expert forecasts

For those of us who aren’t experts, it would be great if there were experts who could tell us when to expect artificial general intelligence (AGI) to arrive.

Unfortunately, there aren’t.

There are only different groups of experts with different weaknesses.

This article is an overview of what five different types of experts say about when we’ll reach AGI, and what we can learn from them (that feeds into my full article on forecasting AI).

In short:

- Every group shortened their estimates in recent years.

- AGI before 2030 seems within the range of expert opinion, even if many disagree.

- None of the forecasts seem especially reliable, so they neither rule in nor rule out AGI arriving soon.


Here’s an overview of the five groups:

AI experts

1. Leaders of AI companies

The leaders of AI companies are saying that AGI arrives in 2–5 years, and appear to have recently shortened their estimates.

This is easy to dismiss. This group is obviously selected to be bullish on AI and wants to hype their own work and raise funding.

However, I don’t think their views should be totally discounted. They’re the people with the most visibility into the capabilities of next-generation systems, and the most knowledge of the technology.

And they’ve also been among the most right about recent progress, even if they’ve been too optimistic.

Most likely, progress will be slower than they expect, but maybe only by a few years.

2. AI researchers in general

One way to reduce selection effects is to look at a wider group of AI researchers than those working on AGI directly, including in academia. This is what Katja Grace did with a survey of thousands of recent AI publication authors.

The survey asked for forecasts of “high-level machine intelligence,” defined as when AI can accomplish every task better or more cheaply than humans. The median estimate was a 25% chance in the early 2030s and 50% by 2047 — with some giving answers in the next few years and others hundreds of years in the future.

The median estimate of the chance of an AI being able to do the job of an AI researcher by 2033 was 5%.[1]

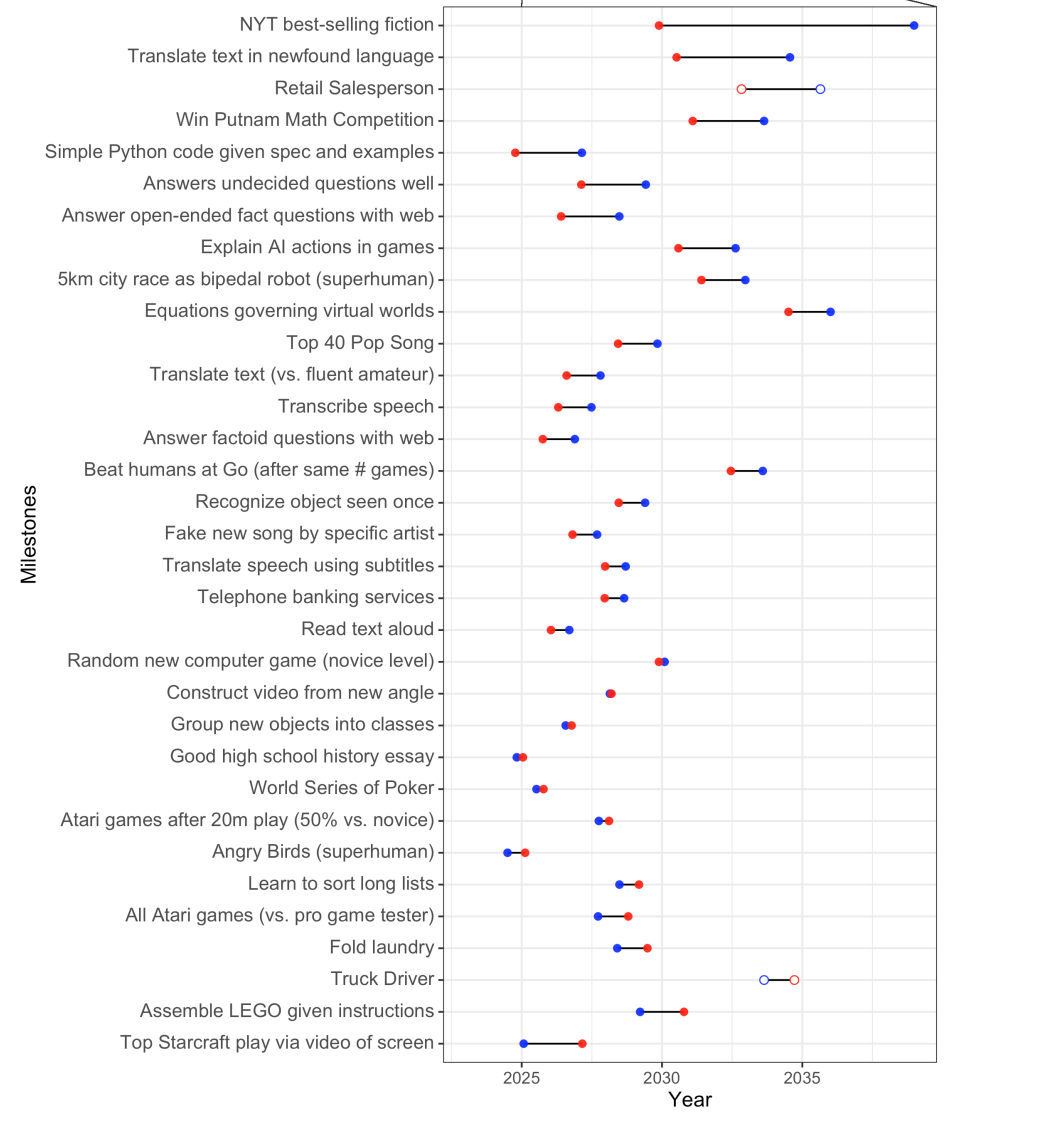

They were also asked about when they expected AI could perform a list of specific tasks (2023 survey results in red, 2022 results in blue).

Historically their estimates have been too pessimistic.

In 2022, they thought AI wouldn’t be able to write simple Python code until around 2027.

In 2023, they reduced that to 2025, but AI could maybe already meet that condition in 2023 (and definitely by 2024).

Most of their other estimates declined significantly between 2022 and 2023.

The median estimate for achieving ‘high-level machine intelligence’ shortened by 13 years.

This shows these experts were just as surprised as everyone else at the success of ChatGPT and LLMs. (Today, even many sceptics concede AGI could be here within 20 years, around when today’s college students will be turning 40.)

Finally, they were asked about when we should expect to be able to “automate all occupations,” and they responded with much longer estimates (e.g. 20% chance by 2079).

It’s not clear to me why ‘all occupations’ should be so much further in the future than ‘all tasks’ — occupations are just bundles of tasks. (In addition, the researchers think once we reach ‘all tasks,’ there’s about a 50% chance of an intelligence explosion.)

Perhaps respondents envision a world where AI is better than humans at every task, but humans continue to work in a limited range of jobs (like priests).[2] Perhaps they are just not thinking about the questions carefully.

Finally, forecasting AI progress requires a different skill set than conducting AI research. You can publish AI papers by being a specialist in a certain type of algorithm, but that doesn’t mean you’ll be good at thinking about broad trends across the whole field, or well calibrated in your judgements.

For all these reasons, I’m sceptical about their specific numbers.

My main takeaway is that, as of 2023, a significant fraction of researchers in the field believed that something like AGI is a realistic near-term possibility, even if many remain sceptical.

If 30% of experts say your airplane is going to explode, and 70% say it won’t, you shouldn’t conclude ‘there’s no expert consensus, so I won’t do anything.’

The reasonable course of action is to act as if there’s a significant explosion risk. Confidence that it won’t happen seems difficult to justify.

Expert forecasters

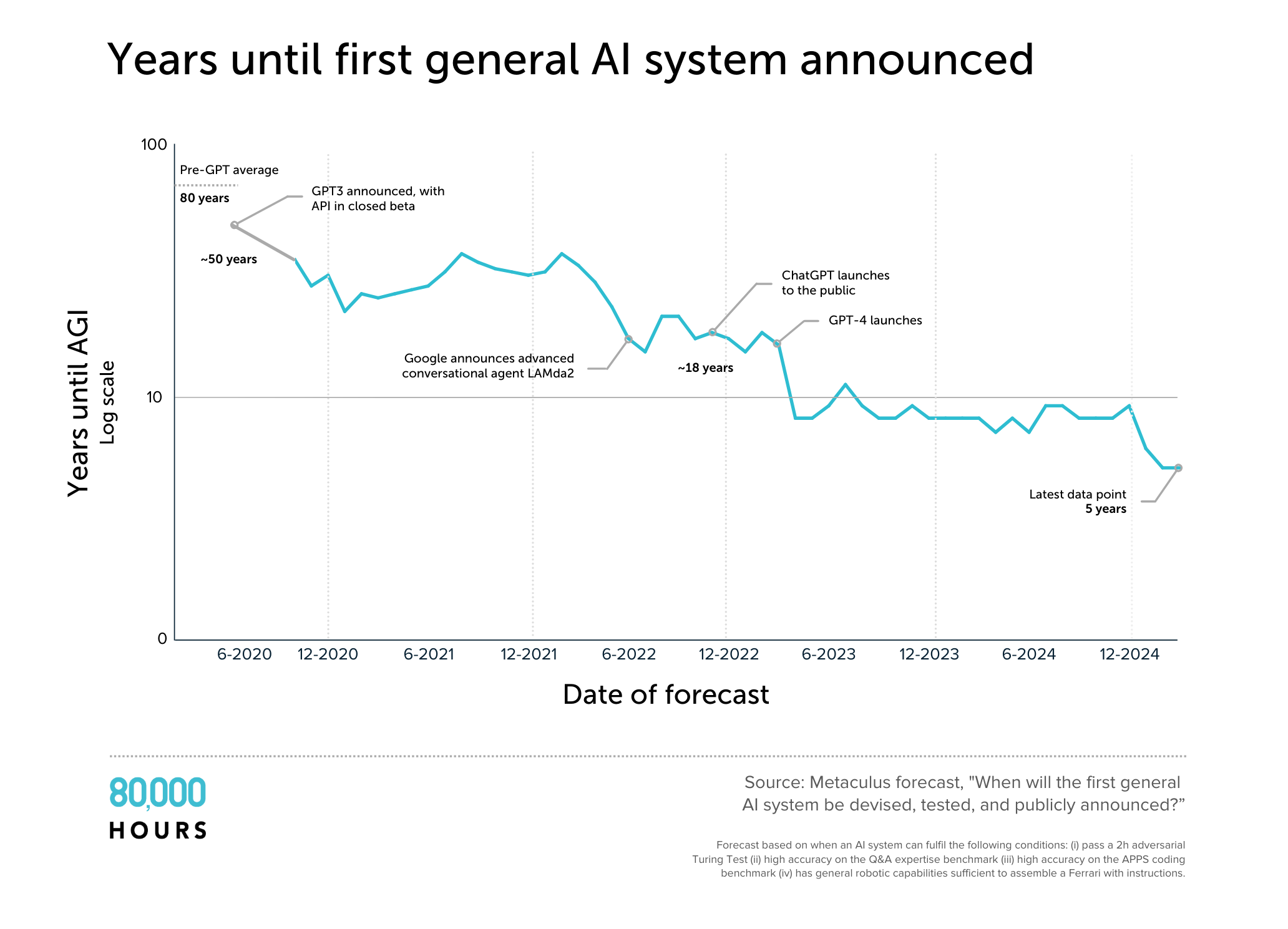

3. Metaculus

Instead of seeking AI expertise, we could consider forecasting expertise.

Metaculus aggregates hundreds of forecasts, which collectively have proven effective at predicting near-term political and economic events.

It has a forecast about AGI with nearly 2000 responses. AGI is defined with four conditions (detailed on the site).

As of February 2026, the forecasters average a 25% chance of AGI by 2029 and 50% by 2033.

The forecast has dropped dramatically over time, from a median of 50 years away as recently as 2020. However, it has risen in the last year (with the 25% and 50% figures each increasing by two years).

The definition used in this forecast also has two problems.

First, it’s overly stringent, because it includes general robotic capabilities. Robotics is currently lagging, so satisfying this definition could be harder than having an AI that can do remote work jobs or help with scientific research.

But the definition is also not stringent enough because it doesn’t include anything about long-horizon agency or the ability to have novel scientific insights.

An AI model could easily satisfy this definition but not be able to do most remote work jobs or help to automate scientific research.

Metaculus also seems to suffer from selection effects: its forecasts appear to be drawn from people who are unusually interested in AI.

4. Superforecasters in 2022 (XPT survey)

Another survey asked 33 people who qualified as superforecasters of political events.

Their median estimate was a 25% chance of AGI (using the same definition as Metaculus) by 2048 — much further away.

However, these forecasts were made in 2022, before ChatGPT caused many people to shorten their estimates.

The superforecasters also lack expertise in AI, and they made predictions that have already been falsified about growth in training compute.

5. Samotsvety in 2023

In 2023, another group of especially successful superforecasters, Samotsvety, which has engaged much more deeply with AI, made much shorter estimates: ~28% chance of AGI by 2030 (from which we might infer a ~25% chance by 2029).

These estimates also placed AGI considerably earlier compared to forecasts they’d made in 2022.
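The step from ‘~28% by 2030’ to ‘~25% by 2029’ can be reproduced with a rough sketch. It assumes a constant annual hazard rate starting from the forecast year of 2023, which is a simplification introduced here for illustration and not something Samotsvety states. The function name and the choice of 2023 as the starting point are likewise hypothetical.

```python
def rescale_cumulative_probability(p_known: float,
                                   years_known: float,
                                   years_target: float) -> float:
    """Convert a cumulative probability over one horizon to another horizon.

    Assumes a constant annual hazard rate (an illustrative assumption,
    not part of the original forecast).
    """
    # Probability that the event has NOT happened by the known horizon.
    survival_known = 1.0 - p_known
    # Under a constant hazard, survival decays geometrically with time.
    survival_target = survival_known ** (years_target / years_known)
    return 1.0 - survival_target


FORECAST_YEAR = 2023   # assumed start of the forecast window
P_BY_2030 = 0.28       # Samotsvety's ~28% chance of AGI by 2030

p_by_2029 = rescale_cumulative_probability(
    P_BY_2030,
    years_known=2030 - FORECAST_YEAR,
    years_target=2029 - FORECAST_YEAR,
)
print(f"Implied chance of AGI by 2029: {p_by_2029:.1%}")
```

Under these assumptions the implied figure comes out at roughly 25%, in line with the inference in the text. A different assumed shape for the distribution would shift it slightly.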

More recently, one of the leaders of Samotsvety, Eli Lifland, was involved in a forecast for ‘superhuman coders’ as part of the AI 2027 project. This gave roughly a 25% chance of superhuman coders arriving in 2027.

However, compared to the superforecasters above, Samotsvety are selected for interest in AI.

Finally, all three groups of forecasters were selected for being good at forecasting near-term current events, a skill that could fail to generalise to long-term, radically novel events.

Summary of expert views on when AGI will arrive

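| Group | 25% chance of AGI by | Strengths | Weaknesses |
|---|---|---|---|
| AI company leaders (January 2025) | 2026 (unclear definition) | Most visibility into next-generation systems; among the most right about recent progress | Selected to be bullish on AI; incentive to hype; have been too optimistic |
| Published AI researchers (2023) | ~2032 (defined as ‘can do all tasks better than humans’) | Know the technology; reduced selection effects | Not forecasting experts; past estimates too pessimistic; may not be thinking about the questions carefully |
| Metaculus forecasters (January 2025) | 2027 (four-part definition incl. robotic manipulation) | Track record on near-term political and economic events | Poor definition; selected for interest in AI; near-term skill may not generalise |
| Superforecasters via XPT (2022) | 2048 (same definition as above) | Qualified as superforecasters of political events | Forecasts predate ChatGPT; lack AI expertise; some predictions already falsified; near-term skill may not generalise |
| Samotsvety superforecasters (2023) | ~2029 (same definition as above) | Especially successful forecasters; engaged deeply with AI | Selected for interest in AI; near-term skill may not generalise |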

In sum, it’s a confusing situation. Personally, I put some weight on all the groups, which averages me out at ‘experts think AGI before 2030 is a realistic possibility, but many think it’ll be much longer.’

This means AGI soon can’t be dismissed as ‘sci fi’ or unsupported by ‘real experts.’ Expert opinion can neither rule out nor rule in AGI soon.

Mostly, I prefer to think about the question bottom up, as I’ve done in my full article on when to expect AGI.

Learn more

- Why AGI might be here by 2030.

- Through a glass darkly by Scott Alexander is an exploration of what can be learned from expert forecasts on AI.

- ‘Long’ timelines to advanced AI have gotten crazy short by Helen Toner.

- Results of the largest survey of AI researchers from 2023, and some sceptical discussion of it.

Notes and references

1. Median probability of being able to do the job of an AI researcher by 2043 was 10%. An AI that can meaningfully help speed up AI research will probably arrive sooner (which might accelerate a “full” automated researcher).

2. I’d also argue “all tasks” is more relevant to figuring out when an acceleration of AI or scientific research might be possible.