How to make a difference: Part 5 How we rank the world’s most pressing problems (and why)

Table of Contents

- 1 How do we rank the world’s most pressing problems?

- 2 Why charity doesn’t begin at home

- 3 Where might an even greater scale of suffering be found?

- 4 The importance of future generations

- 5 The case for focusing on neglected existential risks

- 6 Why AI could change everything

- 7 What are the most pressing AI risks?

- 8 Are there weirder problems that are even more pressing again?

- 9 Which problems should you focus on?

- 10 Put into practice

We’ve spent much of the last 15-plus years trying to answer a simple question: what are the world’s most pressing problems?

We wanted to have a positive impact with our careers, so we set out to discover where our efforts would be most effective.

Our analysis suggests that choosing the right problem could increase your impact by over 100 times, which would make it the most important driver of your impact.

As we explained in the previous article, finding the answer involves looking for world problems that are big, but also highly solvable and highly neglected. This seemingly simple framework has led us to some radical places.

What follows is an unabashedly opinionated guide that explains why preventing diarrhoea has saved as many lives as world peace, why we recommended trying to prevent the next pandemic long before COVID-19, and why we think AI is the key thing to focus on today — though not the risks people most often discuss.

Reading time: 40 minutes.

The bottom line:

How do we rank the world’s most pressing problems?

Over the last 15 years, the way we rank the world’s most pressing problems has shifted dramatically. Most people who want to do good choose careers in health, education, or social issues in their home countries, but in 2009, we were confronted with the immense scale of global poverty.

The world’s poorest people are about 20 times worse off than those living on the poverty line in the US, and there are proven ways to help them that even the most hardened aid sceptics endorse. By focusing on the most cost-effective ways to help the world’s poorest, it’s possible to have over 100 times as much impact as you would working on social issues in rich countries.

Shocked by the disparity, we began to wonder if there might be even bigger and more neglected problems. First, we looked into factory farming, which affects around a trillion animals per year but receives around 1,000 times less funding than global health does. From there, we expanded our focus to consider future generations.

The future could contain far more people than are alive today, but they can’t vote or buy things, which means our system neglects them. At the same time, in the coming decades we face existential risks that would be catastrophic not only for people living in the present, but could also prevent all future generations from existing.

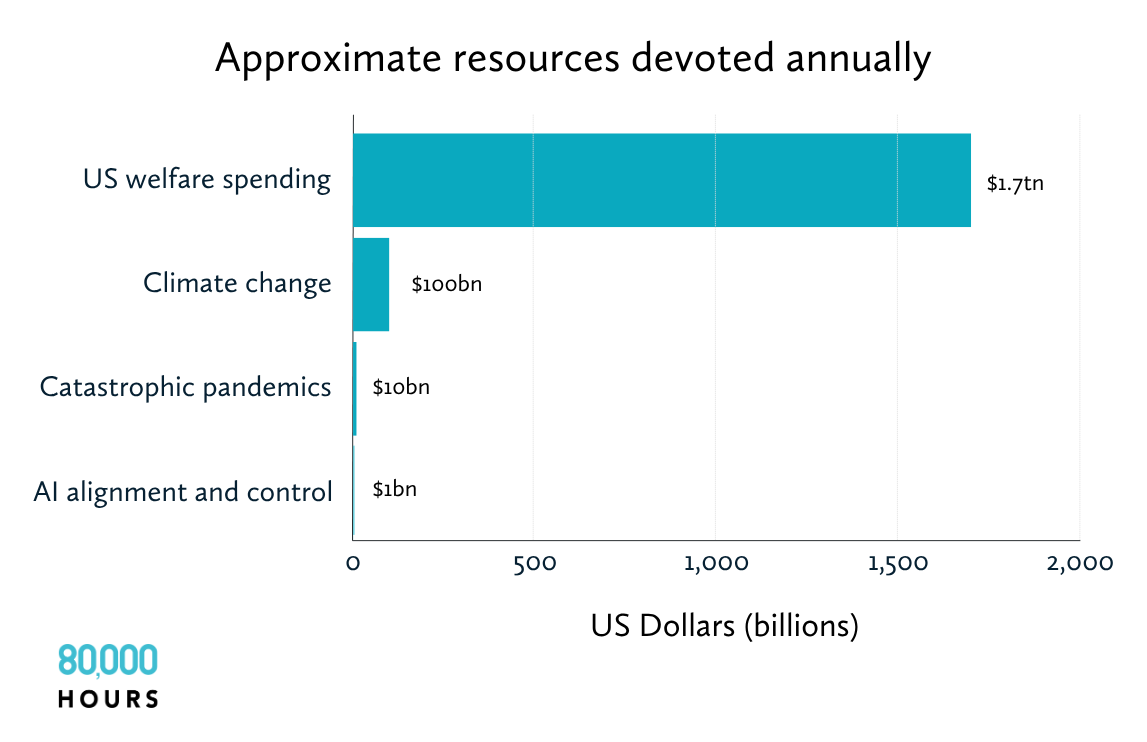

After that realisation, we wanted to know which existential risks were the biggest and most neglected. Climate change is often regarded as the most significant existential risk, but the scientific consensus is that, while it will be extremely damaging, it’s unlikely to end civilisation. Engineered pandemics, meanwhile, are a far more neglected problem and potentially more destructive, yet climate change receives over 10 times more investment.

In 2016, we wrote that AI would probably be the most transformative technology of our lifetimes, offering both huge upsides and new existential risks. Since then, the field has progressed even faster than we anticipated. Today, we think risks associated with advanced AI, such as humans losing control of autonomous systems, or the use of AI by dictators and other actors to concentrate power, rank highest among the world’s most pressing problems. Although it can feel like all anyone is talking about is AI, the number of people working on AI alignment and control is probably around 1,000, and for many other risks, the number is in the tens.

You can stay current with our most up-to-date list of world problems.

Why charity doesn’t begin at home

Most people who want to do good focus on the problems they see all around them. In rich countries, this often means issues like homelessness, inner-city education, and unemployment. But, while a natural starting point, are these really the most urgent issues?

In the US, only 5% of charitable donations are spent on international issues,1 while the large majority is spent explicitly on domestic ones. Around 45% of US college graduates enter careers in education, health, and public administration — which mainly involve helping people at home in the US.2 (Most of the remainder take corporate jobs, and it’s similar in other countries.)

There are some good reasons to focus on helping your own country — you know more about the issues, and you might feel you have special obligations to it. However, back in 2009, we encountered the following series of facts which changed our minds.

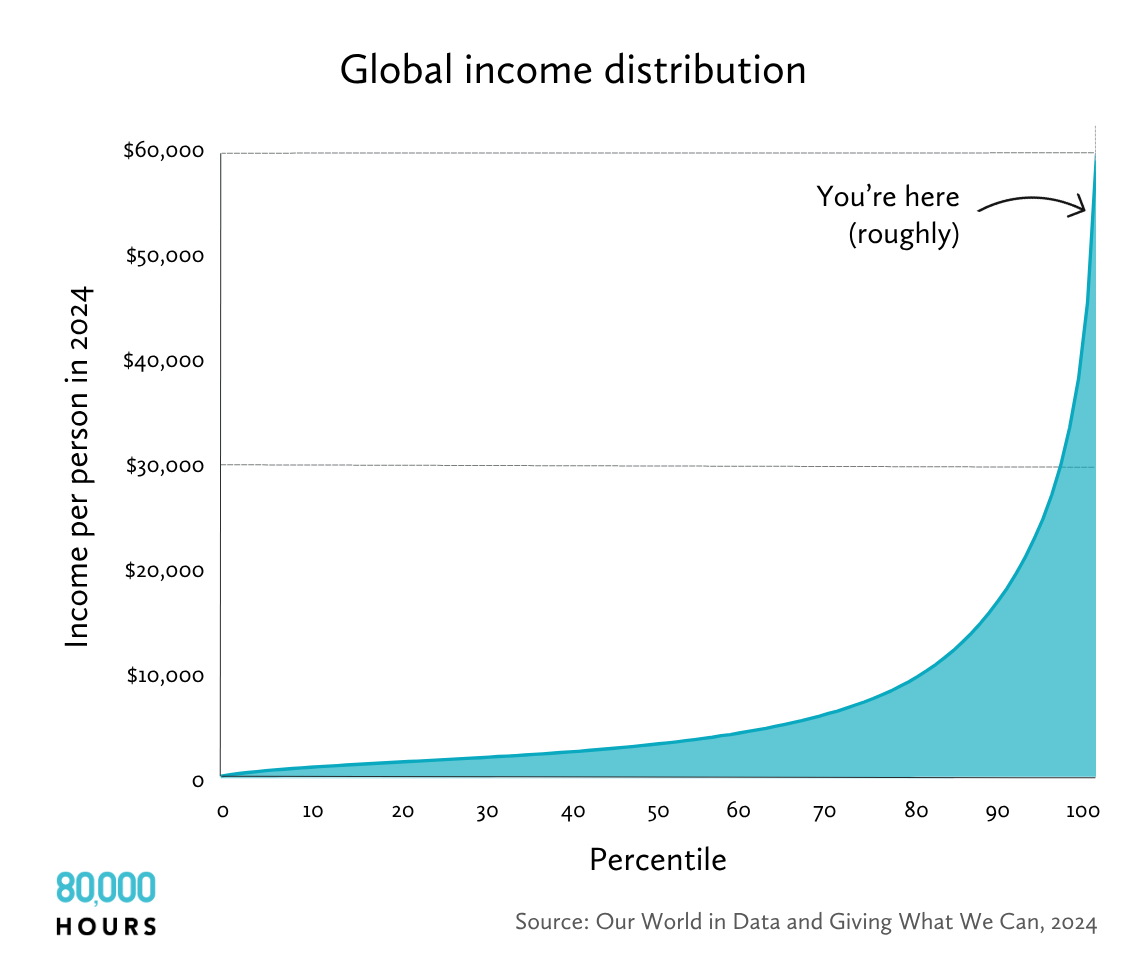

First, remember the distribution of world income? We came across it already in an earlier article.

Even someone living on the US poverty line ($15,650 per year, as of 2025) is richer than about 85% of the world’s population and about 20 times wealthier than the world’s poorest 800 million, most of whom live in Central America, Africa, and South Asia and earn about $1,000 per year.3

Not only are the poorest people in the world much poorer than those in the US, there are also a lot more of them. There are about 40 million people living in relative poverty in the US, just 5% of the 800 million living in extreme poverty globally.4

Crucially, there are far more resources dedicated to helping this much smaller number of people. Overseas development aid from all countries is under $200 billion per year, compared to $1.7 trillion spent on welfare in the US alone.5 This is what we’d expect given the biases we covered earlier.

We also learned that a significant fraction of US social interventions probably don’t work at all. This is exactly what we’d expect based on the difference in wealth. If a problem in the US persists, it’s probably because it’s complex and can’t be easily solved with more resources. By contrast, the world’s poorest regularly die from things like contaminated water, which almost never happens in the US.

This isn’t to deny that the poor people in rich countries have tough lives, perhaps even worse in some ways than those elsewhere. Rather, the issue is that there are far fewer of them, and they’re much harder to help. And this argument can be extended to other rich countries, including the UK, Australia, Canada, and most of the EU. This raises the question, what are the most pressing problems facing the world’s poorest people?

Earlier, we told the story of Dr Nalin, the pioneering physiologist who helped to develop oral rehydration therapy as a treatment for diarrhoea.

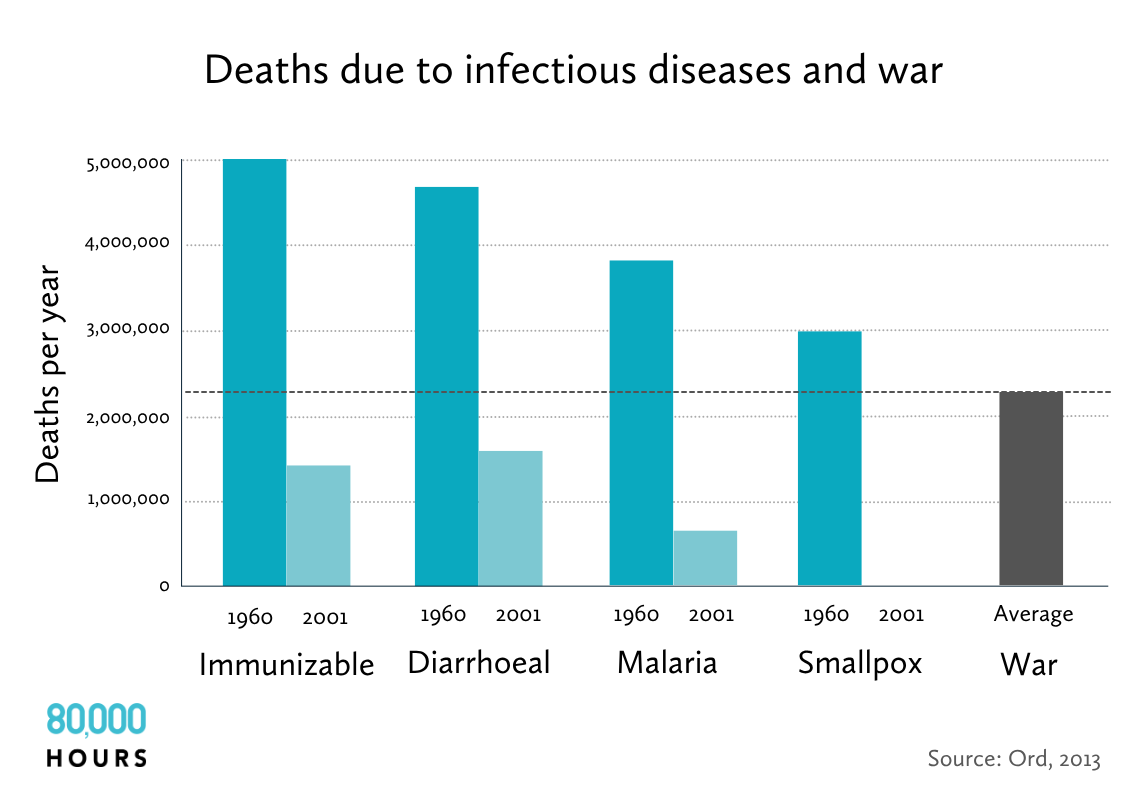

As a result of this kind of work, the number of deaths each year due to diarrhoea has fallen by 3 million over the last five decades. And there have been similar victories over other infectious diseases. Meanwhile, wars and political famines killed an average of two million people per year over the 20th century.6 So we could say that efforts by Nalin and others did more to save lives than achieving world peace would have done.

The global fight against disease has been one of humanity’s greatest achievements, but it’s an ongoing battle, and one that you can contribute to with your career. Many of these victories — such as the vaccination drive that eradicated smallpox — were driven in part by international humanitarian aid, and there is more to be done.7

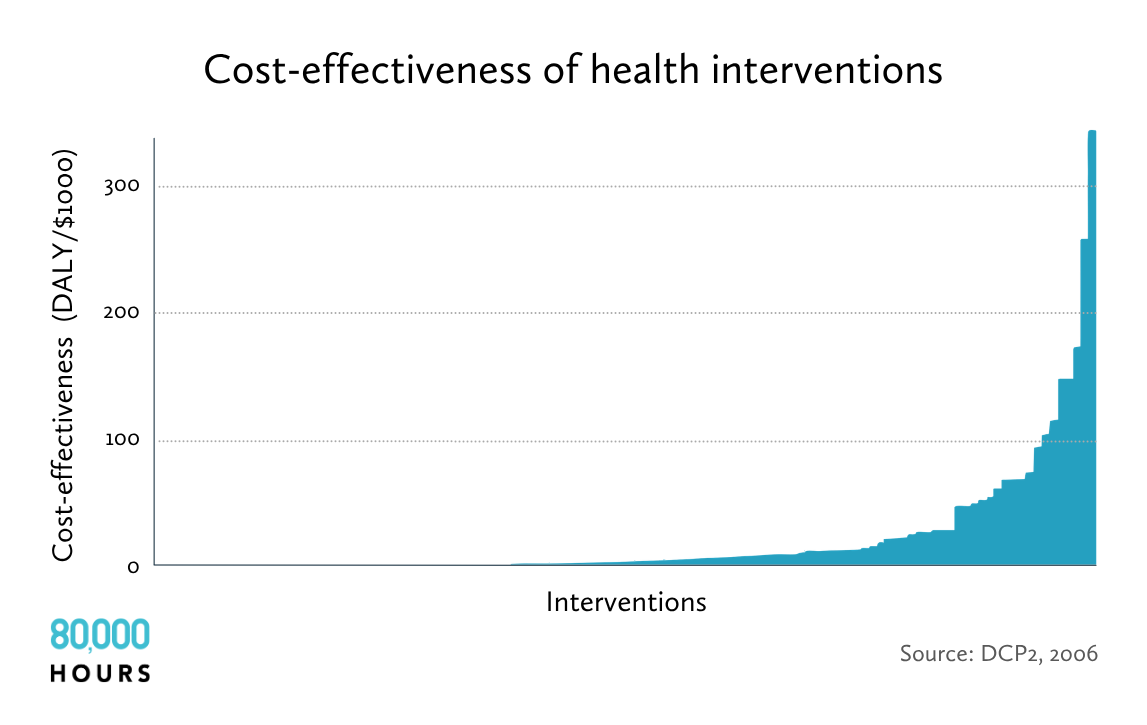

Now consider the following data. It’s the data Toby Ord introduced me to, and which eventually led me to found 80,000 Hours.

The graph shows health treatments, such as tuberculosis medicine or cataract surgery, in order of how much ill health they reduce per dollar, as measured in randomised controlled trials. Ill health is measured using a standard unit used by health economists called the ‘quality-adjusted life year’ (QALY) that takes into account both the severity of the disease being treated, and how many years of benefit are provided.

All the treatments studied are effective, and all of them would be funded in North America or Europe.

For instance, drugs to treat the AIDS-related cancer Kaposi’s sarcoma are expensive and only provide a small benefit. This puts them around the threshold of what would get funded in a rich country (such as via health insurance), but they are still seen as clearly worth funding. However, the data found that antiviral therapy would, for the same cost, prevent AIDS in a much larger number of people, preventing over 10 times as much ill health. Preventing the transmission of HIV during pregnancy in the first place, meanwhile, is another four times cheaper again.

The very most effective interventions in the entire sample, like childhood vaccinations, were about 10 times more cost effective than the mean, 60 times the median, and 15,000 times more than the worst.8

This is an astonishing result — perhaps suspiciously so. It turned out there were mistakes in the original analysis that meant the effectiveness of the top result was overstated.9 But even after these mistakes were corrected, the top interventions remained at the top, and were still much more effective than average.

This means if you were to work at a health charity focused on one of the most effective interventions, you could expect to have at least several times more impact compared to a randomly selected one, and probably 100 times more impact than those in the bottom half.

How much more impact might you be able to make in your career by switching your focus to global health? As we’ve seen, because the world’s poorest people are over 20 times poorer than the poorest in rich countries, resources should go about 20 times as far in helping them.10 We can then also use the data above to pick the very best interventions within global health, allowing us to have perhaps five times as much impact again.11 Those combine to make a 100-fold increase in expected impact.12

Does this check out? The UK’s National Health Service (NHS) and many US government agencies are willing to spend over $30,000 to give someone an extra year of healthy life, or over $1 million to save a life.13 This is a fantastic use of resources by ordinary standards. However, as we saw earlier, the nonprofit GiveWell has identified real charities that can use a $3,000 donation to save a child’s life. This is about 0.3% of what it typically takes to save a life in a rich country.

So a year spent working somewhere like the Malaria Consortium might improve health as much as working in a typical healthcare job in a rich country for 300 years.14

These discoveries caused many of us at 80,000 Hours to start giving at least 10% of our incomes to effective global health charities. No matter which job we ended up in, these donations would enable us to make a significant difference. In fact, if the 100-fold figure is correct, a 10% donation would be equivalent to donating 1,000% of our income to charities focused on poverty in rich countries.
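The arithmetic behind the last few paragraphs can be laid out in a few lines. This is only a rough sketch using the round figures quoted above; the variable names are ours and purely illustrative, and the $1 million figure is the lower bound the text gives for rich-country spending per life saved.

```python
# Round figures quoted in the text; names are illustrative only.
income_gap = 20              # how much poorer the world's poorest are
best_intervention_gain = 5   # extra gain from picking top interventions

combined_multiplier = income_gap * best_intervention_gain

# Cross-check using the cost of saving a life (USD).
cost_rich_country = 1_000_000  # lower bound for rich-country agencies
cost_top_charity = 3_000       # GiveWell-style estimate quoted above

cost_ratio = cost_rich_country / cost_top_charity   # same order as 100-fold
cost_share = cost_top_charity / cost_rich_country   # fraction of rich-country cost

# What a 10% donation is "worth" if the combined multiplier holds.
donation_percent = 10
equivalent_percent = donation_percent * combined_multiplier

print(f"Combined multiplier: {combined_multiplier}x")
print(f"Cost ratio: about {cost_ratio:.0f}x ({cost_share:.1%} of the cost)")
print(f"Equivalent rich-country donation: {equivalent_percent}% of income")
```

The cost-per-life comparison comes out at roughly 330-fold, which the text rounds to 300 years. That is the same order of magnitude as the 100-fold estimate, which is all the cross-check is meant to show.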

See more detail on how to contribute to global health in our full profile.

However, everything we learned about global health raised many more questions. If it was possible to have 10 or 100 times more impact than the most common ways of helping others, perhaps with a bit more effort, we could find something even better?

Where might an even greater scale of suffering be found?

Are there any global issues that cause even more suffering than global poverty? One answer to this question was put forward by Australian moral philosopher Peter Singer.15

Around a trillion animals die every year in factory farms in conditions that would be considered torture if inflicted on your pet.16 Chickens are bred to grow so fast they can barely walk and are kept in tiny cages their entire lives. Female pigs are forced to lie in crates so narrow they can’t even roll over, before dying painfully in gas chambers.17 Fish are killed by leaving them to suffocate for hours in the open air.

Over 99% of the meat eaten by humans is produced in factory farms, with genuinely ‘high welfare’ meat forming a tiny, tiny minority.18 Animals in factory farms have no economic or political power. They depend entirely on our compassion — and because they are out of sight, they get almost none.

Most philanthropic efforts dedicated to helping animals are directed towards things like The Donkey Sanctuary, which is one of the UK’s best-funded animal charities.19 It aims to give working donkeys a comfortable retirement, attracting huge bequests from pensioners who like cute animals.

The entire philanthropic field dedicated to ending factory farming, by contrast, receives about $400 million per year, only 0.03% of total philanthropic funding in the US.20 That’s under 1% of donations to international development (which in turn receives only 5% of the total).21

Making the comparison between helping humans and preventing animal suffering is philosophically controversial, but almost everyone agrees that it’s bad to torture animals. And it also turns out this suffering can be reduced extremely cheaply.

There’s a long-standing belief that the best way to stop factory farming is to convince people to stop eating meat. But that doesn’t appear to work — at least not anymore. Over the past 20 years of animal advocacy, the number of vegans and vegetarians has basically stayed flat.22 This makes sense: meat is delicious, widely available, and plays a central role in many cherished cultural traditions. Moreover, most people are already aware of the arguments for vegetarianism, and yet they haven’t changed.

In contrast, recent efforts to convince companies to switch from caged to cage-free eggs have been enormously successful. One philanthropically funded campaign cost around $85 million over 10 years, but persuaded hundreds of companies in the US and EU to make the switch. This increased the cost of an egg by only $0.01–0.03, but has already saved around 1 billion chickens from living agonising lives in cramped cages.23

There are many more welfare reform campaigns that could be run. It’s too simplistic to say that aiming to bring about ‘institutional change’ is always better than trying to change individual behaviour, but this is a case where it is.

Another approach is the development of cheap, tasty substitutes, whether they be plant-based meat (like Beyond Burgers) or cultivated meat grown from a sample cell that is physically identical to animal meat. These strategies aren’t always profitable, but with subsidies it could be possible to drive down costs and develop a self-sustaining industry — in the same way subsidies were needed to develop solar panels, which are now cheaper in many places than fossil fuels (which in turn benefit from huge subsidies). Reducing meat consumption would also be great for the planet, as agriculture is one of the biggest sources of greenhouse gas emissions.24

One individual we worked with, Richard, was working in international development policy, helping to ensure that aid spending was focused on the most cost-effective programmes. He wasn’t an ‘animal person’: he didn’t have a pet, and didn’t find farmed animals especially cute or appealing. But after learning about the vast number of animals in factory farms, the cruelty of their treatment, and the tiny amount of resources dedicated to helping them, he became convinced that he should shift his focus.

After visiting a factory farm and slaughterhouse, and being shocked at the violence and suffering he saw, Richard joined the Good Food Institute (GFI), an NGO that provides research and policy advice aimed at kick-starting the alternative proteins industry.

GFI has supported over 100 new projects and helped secure £27 million of funding for alternative proteins from the UK government, as well as €38 million from the German government. Richard and his team also helped to defeat attempts to ban plant-based products from using ‘meaty’ names, such as ‘burger’ or ‘sausage,’ in the EU.

We still think factory farming is an urgent problem, as we explain in our full problem profile. We helped to found Animal Charity Evaluators, which does research into how to most effectively improve animal welfare. But while focusing on animals looks like one way to find problems that are even bigger and more neglected than global health, we wondered if we could find something even bigger again.

The importance of future generations

Imagine the following: you throw away some broken glass in the forest. Later, a child walks by and cuts their foot. Now, suppose the child only cuts their foot 100 years in the future. Does that mean it wasn’t bad to throw away the glass after all?

Most people would say no, which tells us that they care about future generations. In a similar way, it’s hard to understand why people would care about their grandchildren’s children, or their legacy in business, art, or science, or about preserving the natural world, if they didn’t care about what will happen after they’re gone.

This simple idea — that future generations matter — has some radical implications about where to focus when applied to the world today. We were first exposed to these implications by researchers at the University of Oxford’s (modestly named) Future of Humanity Institute. William MacAskill, my cofounder at 80,000 Hours, later helped to coin the term ‘longtermism,’ the view that helping future generations should be a key moral priority of our time.

Here’s the argument:

First, future generations matter, but they can’t vote, buy things, or stand up for their interests — much like animals in factory farms. This means our system neglects them. In addition, their plight is abstract. The suffering on factory farms is just a few clicks away on YouTube, whereas we can’t so easily visualise lost future potential. Future generations rely more than any other group on our goodwill, and yet even that is hard to muster.

What’s more, Earth will remain habitable for hundreds of millions of years at least, and while it’s true we may die out before that point, if there’s a chance we’ll make it, then we’d expect many more people will live in the future than are alive today.25

To use some oversimplified figures: if there’s one generation per century, then over 100 million years there would be 1 million future generations.26 This is such a big number that any problem which affects future generations is potentially of a far greater scale than an issue which only affects the present. It could end up affecting the lives of millions of times more people, with all the art, science, culture, joy, and suffering those lives will entail. So the problems that affect the future are not only likely to be neglected, they’re also potentially the largest in scale, nearly no matter what you value. (We cover these ideas in more depth in a separate article.)

The last, crucial point is that there are things we can do today that will help both people living now and future generations. What might those be?

The case for focusing on neglected existential risks

In the summer of 2013, Barack Obama referred to climate change as “the global threat of our time.” He’s not alone in this opinion. When people think of problems facing future generations, climate change is usually the first thing that comes to mind. Polls find that young people routinely rate it as the world’s most pressing issue.27

One reason for that is many fear that climate change could lead to a catastrophic collapse of civilisation, and even the end of the human species.28 One of the most famous climate advocacy groups, Extinction Rebellion, named itself in opposition to this possibility.

We think this is on the right track — but that the rebellion could be broadened. A strong candidate for the most effective way to help future generations is to prevent a catastrophe that ends civilisation, since such a catastrophe would prevent future generations from even existing.

So long as civilisation continues, there’s a good chance that problems like poverty and disease will eventually be solved. Anything that poses a truly existential threat, however, would prevent any such progress forever.29

To illustrate the difference, consider two scenarios:

- A nuclear war kills 99% of people, but civilisation recovers.

- A nuclear war kills 100% of people.

If you only focus on the present, the second scenario is only about 1% worse than the first. However, if you factor future generations into the equation, then the second is much, much worse, since it eliminates all future potential as well.
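To make the comparison concrete, here is a toy calculation using the oversimplified figures from earlier (one generation per century over 100 million years). It assumes, purely for illustration, that every future generation is the same size as the present one and that recovery in the first scenario preserves all of them; the function name is hypothetical.

```python
YEARS_REMAINING = 100_000_000   # oversimplified figure from the text
YEARS_PER_GENERATION = 100
FUTURE_GENERATIONS = YEARS_REMAINING // YEARS_PER_GENERATION


def loss_in_generations(fraction_killed: float, future_generations: int) -> float:
    """Loss measured in 'present generations' of lives.

    Assumption: future generations are lost only if everyone dies.
    """
    present_loss = fraction_killed
    future_loss = future_generations if fraction_killed >= 1.0 else 0
    return present_loss + future_loss


# Counting only people alive today.
present_only_ratio = loss_in_generations(1.0, 0) / loss_in_generations(0.99, 0)

# Counting future generations as well.
with_future_ratio = (
    loss_in_generations(1.0, FUTURE_GENERATIONS)
    / loss_in_generations(0.99, FUTURE_GENERATIONS)
)

print(f"Future generations: {FUTURE_GENERATIONS:,}")
print(f"Present only: scenario two is {present_only_ratio - 1:.1%} worse")
print(f"With the future: scenario two is about {with_future_ratio:,.0f}x worse")
```

Under these assumptions the gap moves from about 1% to roughly a million-fold. The exact number matters much less than the change in order of magnitude.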

From this perspective, we should pay a lot more attention to risks that are most likely to be existential (defined as those that risk permanent loss of civilisation’s future potential, whether via extinction or lock-in of a worse future).30 Instead, people often mislabel risks as existential when they aren’t, while those that actually are get less attention. (See more on the argument for focusing on existential risks.)

This is where we disagree with President Obama, or more precisely with the widely held view that climate change is the world’s most pressing existential risk.

The Intergovernmental Panel on Climate Change’s (IPCC) “Sixth Assessment Report” is clear: climate change will be hugely destructive. Most likely we’ll see 2–3ºC of warming, and this will cause floods, famines, fires, and droughts. The world’s poorest people will be affected most.

But, even when we try to account for tail risks and other uncertainties, nothing in the IPCC’s report suggests that civilisation itself will be destroyed. The worst-case scenarios outlined in the report involve 6ºC of warming, and in those most of the Earth would still remain habitable.

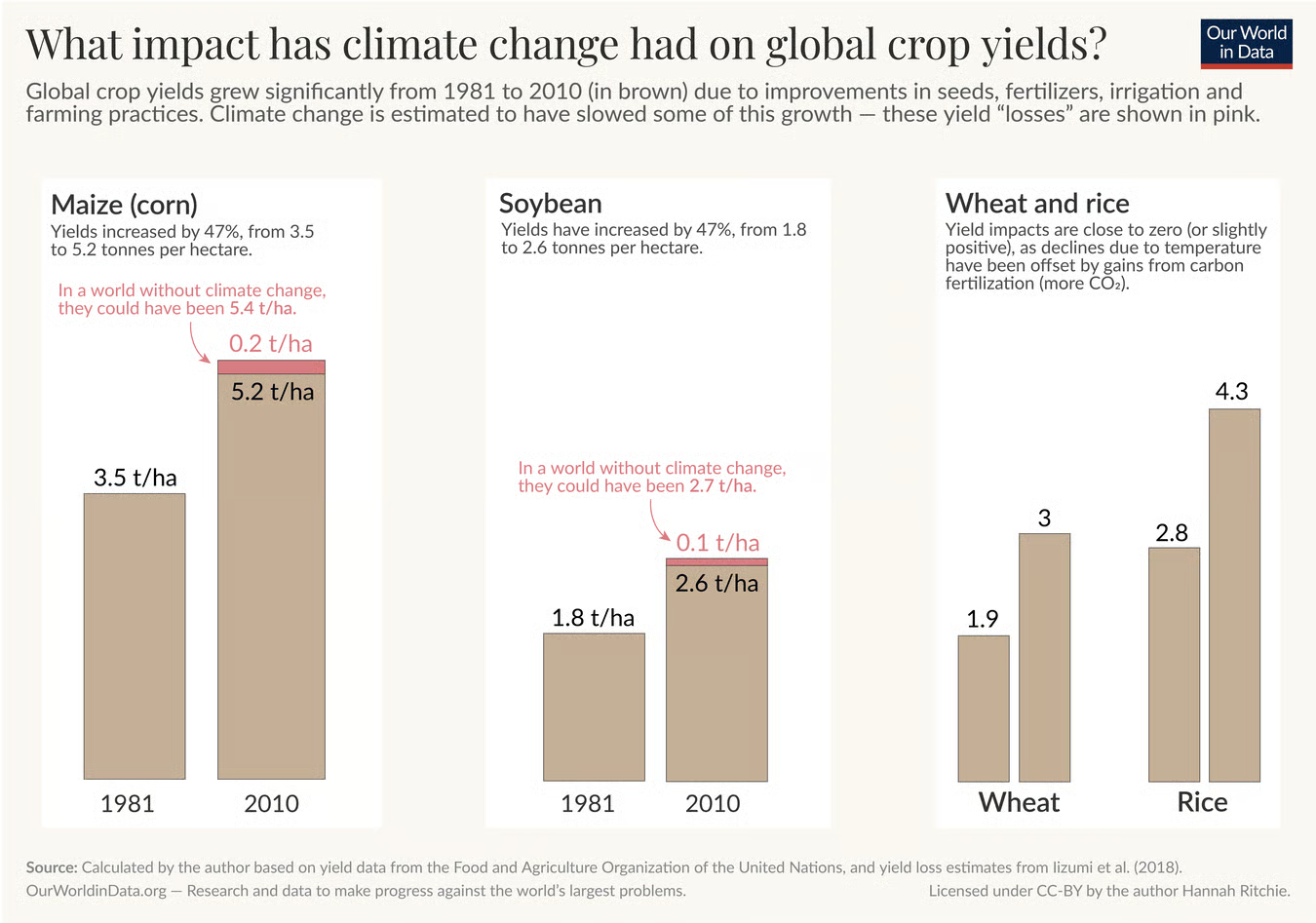

Here’s one illustration:31 even with 5ºC of warming, wheat yields in temperate regions would most likely increase around 18%, due to the longer growing season. It’s true that yields of crops like maize in regions near the equator would fall about 24%, which could cause famine in those regions. But this comparison is between a worst-case future and what would have happened without climate change.

Since 1961, crop yields have steadily increased around 200% due to research and innovation, despite the 1–1.5ºC of warming we’ve already experienced. So, even if climate change causes yields to decline 30% by the end of the century, they’ll most likely end up far higher overall.32 Pessimistic analyses usually completely ignore the forces of innovation working in the opposite direction from climate change, as well as the many ways we could adapt.

Similarly, the most negative analyses of the economic cost of climate change argue it could reduce global GDP by 30%.33 That’s a huge decline. But it fails to take into account ongoing economic growth of about 2.5% per year. If that continues, then in 75 years our descendants will be six times richer than we are. A 30% cut in GDP caused by climate change would mean they’ll only be 4.2 times richer. In fact, in all of the IPCC’s scenarios, it’s projected that average per capita income will be higher in 2100 than it is today.
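The compounding here is easy to check. The sketch below uses the growth rate, time horizon, and GDP cut quoted above, and assumes (as the text does) that growth simply continues at a constant rate.

```python
growth_rate = 0.025   # annual growth quoted in the text
years = 75
gdp_cut = 0.30        # most pessimistic estimate quoted in the text

# How many times richer our descendants would be with no climate damage.
growth_factor = (1 + growth_rate) ** years

# The same figure after a 30% cut.
after_cut = growth_factor * (1 - gdp_cut)

print(f"Without the cut: {growth_factor:.1f}x richer")
print(f"With the cut:    {after_cut:.1f}x richer")
```

Unrounded, this gives a little over six times richer without the cut and about four and a half times richer with it. The text rounds the first figure down to six, which is where 6 × 0.7 = 4.2 comes from.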

Climate change is also already widely acknowledged as a major problem — conspiracy theories aside. Most governments have signed binding treaties, and as of 2024, philanthropic spending on climate change is around $6–10 billion per year, government grants run into tens of billions in the US alone,34 and all financing for climate initiatives internationally runs to about $1.6 trillion.

In most rich countries, CO2 emissions have already fallen significantly, and the continued decline in the cost of solar panels, batteries, and electric vehicles means it will be possible to continue these trends.35

So, while we think tackling climate change is an important way to help future generations — and you can read more about the risk from climate change in our full profile — we think there are much more neglected, and more existentially dangerous issues for you to consider working on.

Biorisk: the threat from new pandemics

In 2006, The Guardian newspaper ordered segments of smallpox DNA in the mail. If assembled into a complete strand and transmitted to 10 people, a study estimated the virus could infect up to 2.2 million people in 180 days — potentially killing 660,000 of them — if authorities did not respond quickly with vaccinations and quarantines.36

We first wrote about the risks posed by catastrophic pandemics back in 2014.37 Our reasoning was that major pandemics arise every few decades, but not much was being done to prepare for the next one. Six years after that, COVID-19 caused over $10 trillion of economic damage,38 and most likely killed over 20 million people.

Despite all this damage, it’s unclear whether we’re any better prepared for the next one. Moreover, a future pandemic could easily be both more infectious and more lethal than COVID-19, for instance with a 10% fatality rate rather than 1%. A disease this dangerous could shut down global supply chains, resulting in food shortages and even a breakdown of law and order.

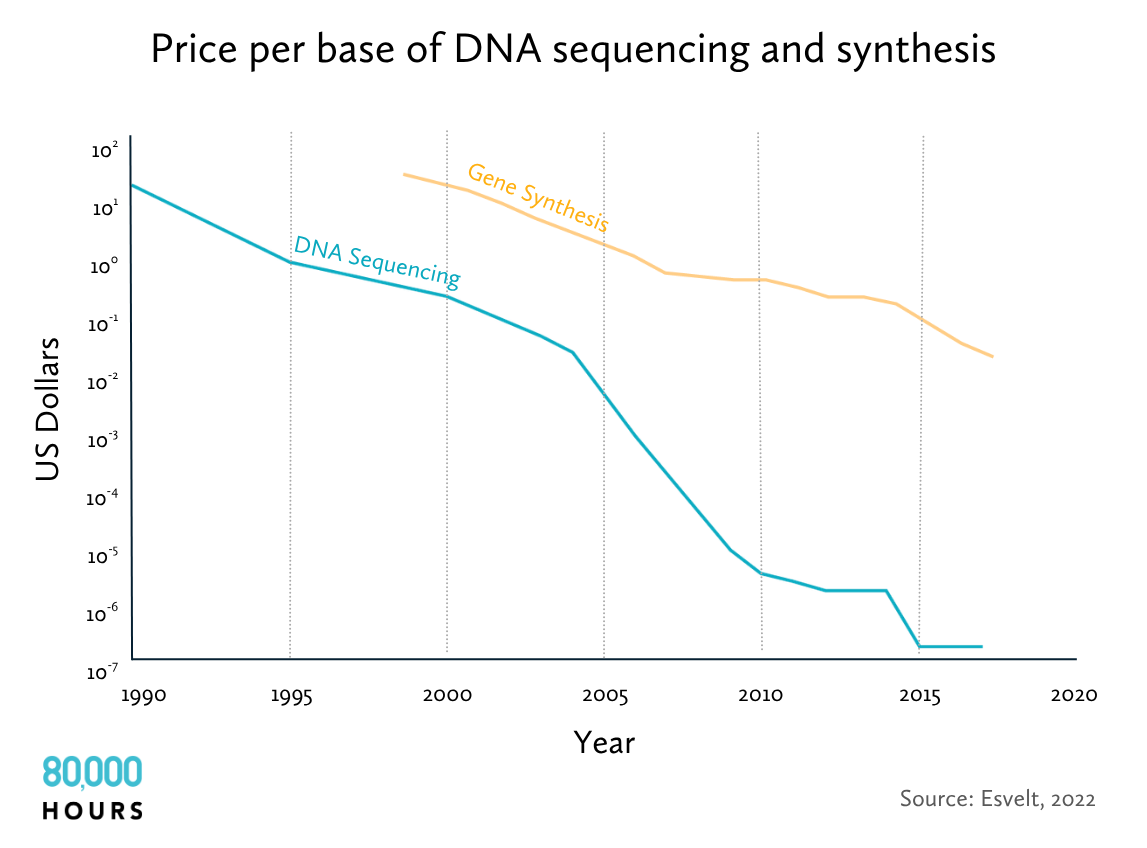

On top of this, each year bioengineering technology becomes cheaper, making it accessible to more and more people. What once required a state-of-the-art laboratory with 50 scientists working around the clock can be done by a lone hobbyist today.39

Eventually, it will be possible to engineer pandemics that are much worse than those that have arisen naturally. Imagine someone released 10 different diseases with a three-month incubation period, the infectiousness of measles, and the fatality rate of Ebola. Almost everyone in the world would be infected before anyone had symptoms.

The world has plenty of religious cults, despots, and would-be school shooters who might decide they want to take everyone else down with them. A state like North Korea could decide to develop these kinds of bioweapons as a deterrent to invasion. Mutually assured destruction became our policy with nuclear weapons, and so it could again with biological ones. The world would be one lab leak away from catastrophe.

Given what we know about the pace and accessibility of bioengineering tools, the chance that there will be a pandemic that kills over 100 million people during the next century seems similar to the risk of large-scale nuclear war or climate change above 6ºC.40 An engineered pandemic could also kill over 90% of the population, suggesting its overall scale is significantly larger.41

But risks from pandemics are, even now, far more neglected than either of these. In comparison to $6–10 billion of philanthropic funding for climate change, and $1.6 trillion of total climate finance, pandemic prevention only receives $1 billion of philanthropic funding, and total spending aimed at reducing the chance of worst-case pandemics is probably under $10 billion.42

At the same time, it’s an unusually solvable problem. By regularly sequencing all the genetic material in waste water, any material that’s growing exponentially could be flagged as a pandemic-in-waiting, giving us an early warning signal even for entirely novel viruses.
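As a rough illustration of what "flagging exponential growth" could mean, the sketch below fits a straight line to the logarithm of weekly counts for a single genetic sequence. Everything here is hypothetical: the function names, the threshold, and the sample counts are ours, and real monitoring systems would need to handle noise, zero counts, and sampling effects that this ignores.

```python
import math


def weekly_growth_rate(counts):
    """Least-squares slope of log(count) against week index.

    Exponential growth shows up as a steady positive slope.
    Assumes every count is positive.
    """
    n = len(counts)
    xs = list(range(n))
    ys = [math.log(c) for c in counts]
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var


def is_flagged(counts, threshold=0.3):
    """Flag a sequence whose log-counts rise faster than the threshold.

    The threshold is an arbitrary illustrative value.
    """
    return weekly_growth_rate(counts) > threshold


doubling_weekly = [2, 4, 8, 16, 32]     # made-up counts that double each week
background = [50, 48, 52, 49, 51]       # made-up counts that stay flat

print(is_flagged(doubling_weekly))  # True
print(is_flagged(background))       # False
```

The point of working in logarithms is that a sequence can be flagged while its absolute count is still tiny, which is what makes an early warning possible.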

Governments could build large stockpiles of (ideally much improved) personal protective equipment (PPE) so that, when a new pandemic is spotted, it can be quickly distributed to all essential workers, ensuring that society continues to function. Buildings could be retrofitted with UV lights that sterilise the air. With enough measures to break transmission, the replication rate (the average number of people each infected person goes on to infect) will drop below one and the pandemic will start to die out. State-of-the-art mRNA vaccines could then be rolled out quickly to prevent its return.
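Why does getting the replication rate below one matter so much? A deliberately simple model shows the threshold: if each case causes on average R new cases, the expected number of cases after g rounds of transmission is the starting number times R to the power g. The function name and the example values of R are illustrative assumptions, not estimates for any real disease.

```python
def expected_cases(initial_cases, replication_rate, generations):
    """Expected new cases in each round of transmission.

    Deterministic toy model: every case causes `replication_rate`
    new cases on average, with no immunity or behaviour change.
    """
    return [
        initial_cases * replication_rate ** g
        for g in range(generations + 1)
    ]


shrinking = expected_cases(1000, 0.8, 10)   # transmission mostly broken
growing = expected_cases(1000, 1.5, 10)     # transmission not broken

print(f"R = 0.8: {shrinking[0]:.0f} cases -> {shrinking[-1]:.0f}")
print(f"R = 1.5: {growing[0]:.0f} cases -> {growing[-1]:.0f}")
```

Below one, each round of transmission is smaller than the last and the outbreak fades; above one, it compounds. Countermeasures do not need to stop every infection, only enough of them to cross that threshold.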

Overall, we think biosecurity is one of the world’s most pressing problems today (you can read more about how to contribute to biosecurity in our full profile). What’s more, you don’t need to be a biologist to make a difference. What’s most needed is people with skills in organisation-building and engineering, who can do things like develop and distribute cheaper PPE, and run waste-monitoring systems. There’s also a need for people working in government and policy to make sure biosecurity is properly funded and prioritised.43 Read more about preventing catastrophic pandemics.

But could there be even bigger existential risks facing humanity?

Why AI could change everything

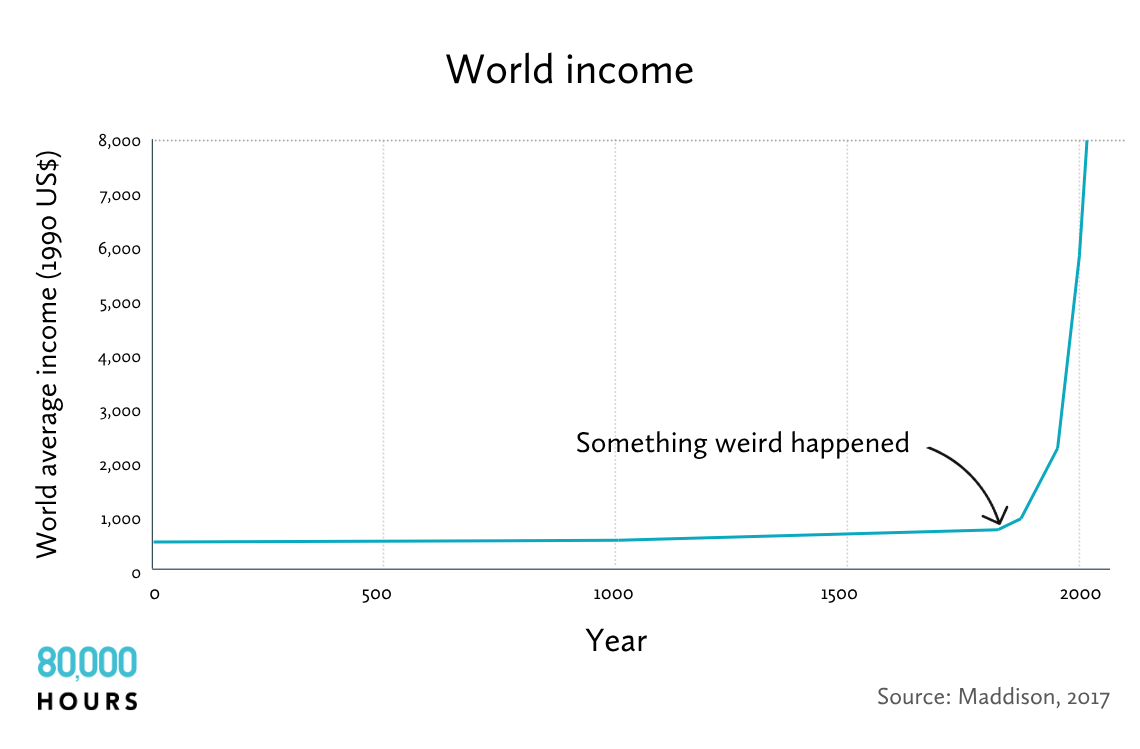

Around 1800, civilisation underwent one of the most profound shifts in human history: the Industrial Revolution.44

When you look at wealth, population, the rate of technological progress, or even the rate of social change over time, basically nothing happened for many thousands of years, until suddenly everything began to accelerate.

Looking forward, what might be the next transition of this scale? Something that causes an even greater acceleration and shapes the lives of all future generations? If we could identify such a transition, it could well be the most important area to work on.

Back in 2016, we decided the most likely candidate was AI.45 We’d been tracking the issue much earlier, but that was the year an artificial neural network mastered the game of Go — a Chinese board game requiring strategic intuition — much faster than expected.46 Many AI researchers began predicting that human-level AI was likely to be developed in our lifetimes.

We reasoned that the arrival of computational systems smarter than humans would be one of the biggest events in history, akin to the arrival of a new, intelligent species.

Consider that chimpanzees are faster than us, and much stronger. But there are under 300,000 of them in the wild, compared to 8 billion humans, and they depend on us for their fate.47 This is due to our tools, culture, and ability to cooperate and solve novel problems, which rest to a large extent on our greater intelligence.

AI could be unlike any previous technology due to its generality. Invent an axe, and you can cut things better. Invent an intelligent machine, and it can learn to do any task.

When people talk about ‘artificial general intelligence’ (AGI), that’s what the ‘general’ part means. A generally intelligent AI can learn to do a wide range of tasks in the same way that humans can. In contrast, a ‘narrow’ AI can only do a small range of tasks, like play chess or calculate numbers. All past technologies have been narrow compared to human abilities.

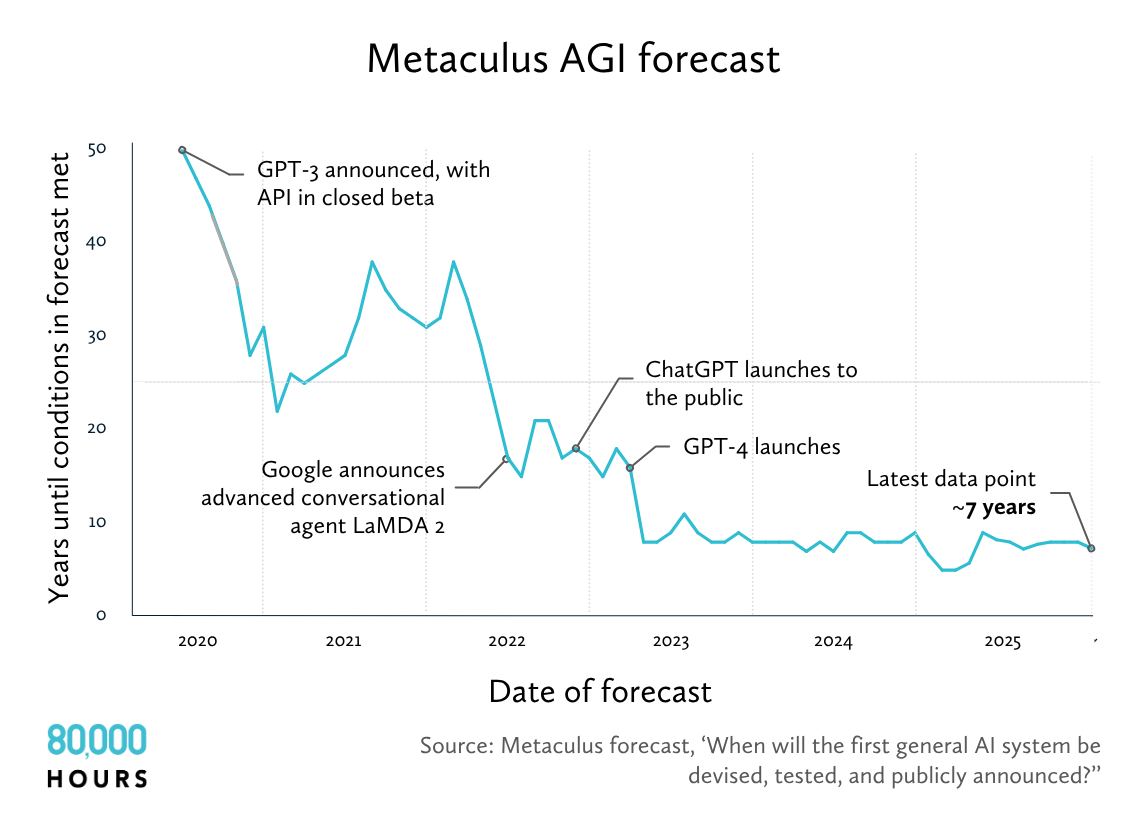

Since 2016, many experts have been further surprised at how quickly AI has advanced, making the issue a lot more urgent. On the forecasting platform Metaculus, hundreds of forecasters predict how many years it’ll be until AGI is created. Since 2020, the median estimate has fallen from 50 years to five.

The definition of ‘general intelligence’ is hotly debated, but no matter the definition, all major forecasts have shown large declines. For instance, in a 2023 survey of thousands of AI researchers who had published in top-tier journals, the median estimate for when “high-level machine intelligence” would be created declined by 13 years compared to the same survey just one year earlier.48

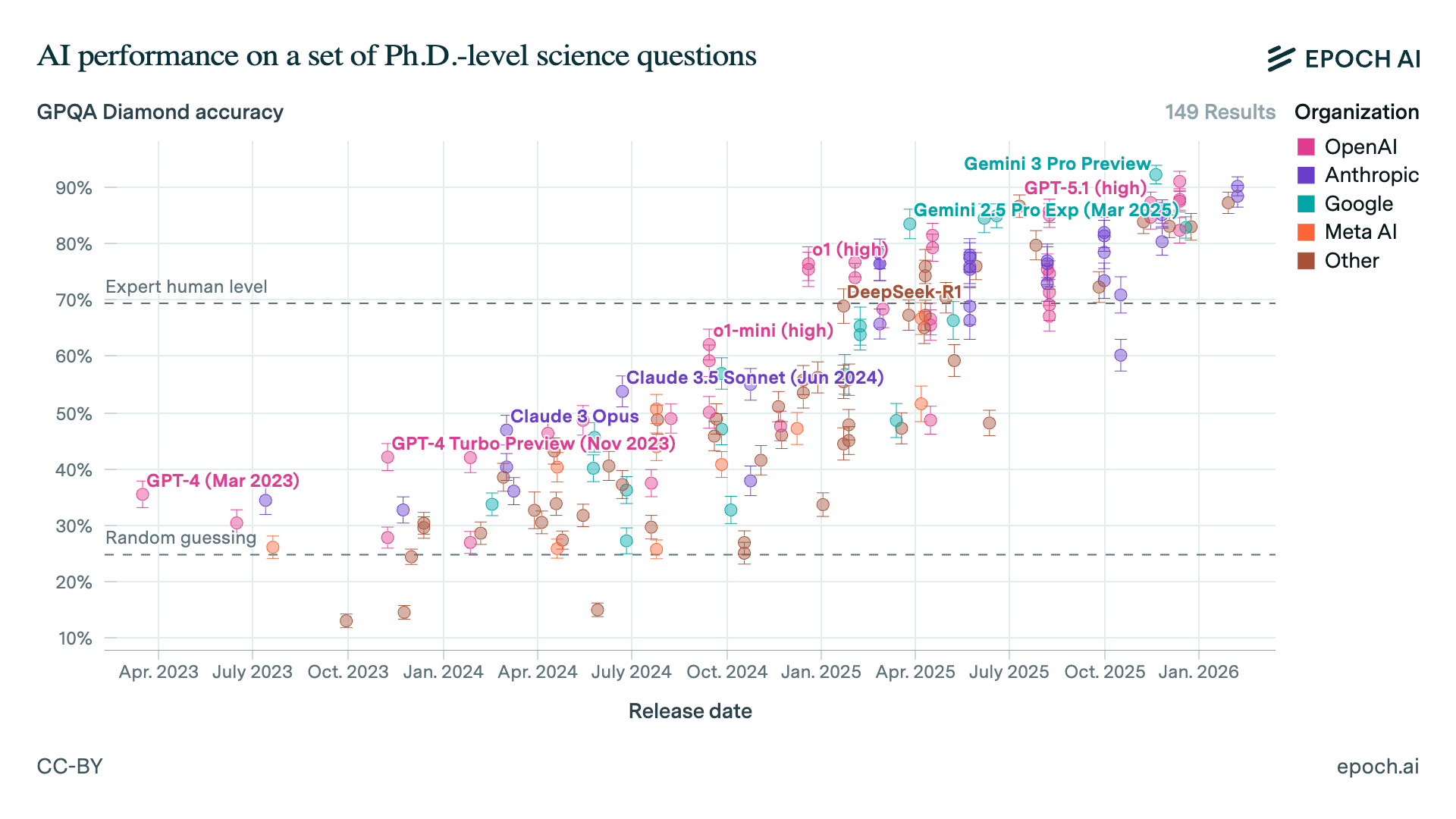

In less than five years, the large language models (LLMs) used to power products like ChatGPT have gone from barely being able to string a few sentences together to conversing in natural language in a way that’s basically indistinguishable from humans. More recently, they’ve become able to answer known scientific questions better than PhD holders in the relevant subject,49 to beat almost all human experts in coding competitions,50 and to win gold at the International Mathematical Olympiad.51

This progress was driven by massive increases in the amount of computing power used to train AI models, which has grown by over four times per year since 2010. It’s also been driven by rapid algorithmic progress, which in turn has been driven by a rapidly growing AI research workforce. These trends seem likely to continue for at least the next few years, meaning we should expect further rapid AI progress during that time.

When people picture much more capable AI, they sometimes imagine speaking to an even smarter chatbot. But that’s not where we’re headed. AI companies are trying to create a ‘digital worker’ that you can ask to do open-ended projects, like build an app, run a sales campaign, or design a scientific experiment. We believe there’s a fair chance systems like these will exist by 2035.

Suppose systems like this are reached — what happens then? To many people, what first comes to mind is job loss, and we’ll discuss how not to lose your job to AI in part eight on the skills we believe will be most valuable in the future. But I think the consequences could be much wilder, and could arrive well before mass unemployment becomes a serious risk.

The theoretical possibility of feedback loops in AI development was identified at the dawn of the field by its founders, including Alan Turing and I. J. Good.52 The idea is that if AI itself can start to help with AI research, then progress will speed up, which would mean AI becomes even more advanced, which could speed up progress even more, and so on. But in the last five years, it’s become much clearer how such a feedback loop could work in practice.

The leading AI companies today are already using AI extensively to aid their own research. In particular, they use AI to help with coding, both because coding is what AI is best at today and because it’s a crucial part of doing AI research. And yet the overall boost to productivity remains relatively small.

Imagine, though, what would happen if AI was able to do the job of a junior engineer, and then a mid-level engineer, and continued to improve.53 If current models were able to produce work comparable to that of a mid-level engineer, then given the amount of computing power already available in data centres, it would become possible to have the equivalent of millions of competent engineers working on AI research.54 As AI continues to improve, eventually these models could start to do the work of even top researchers.

In comparison, there are probably fewer than 10,000 human researchers working on frontier AI today, so the workforce would, in effect, expand more than 100-fold. No-one knows exactly how much that would speed up progress. The most careful estimate I’ve seen is by Tom Davidson, who currently works at Forethought, a research group Will MacAskill established in Oxford to explore the impact of AI on society. Tom estimates we’d most likely get three years of AI progress in one year, and it’s possible we’d see 10.

Over the last five years, improving algorithmic efficiency means the number of AI models you can run on a given number of computer chips has increased over three times every year. That means if you start with 10 million digital workers, and you get three years of progress in one year, then one year later you could run about 270 million of them. And they’d be smarter too.55

But the process won’t stop there. Today, the number of AI chips produced is roughly doubling every year.56 If that increase is sustained, then one year later those 270 million AIs will become 540 million. And because there would be even more computing power available to train them, they’d become even smarter still.
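The arithmetic in the last two paragraphs can be checked with a short sketch. It simply compounds the figures quoted above (10 million starting workers, a threefold yearly efficiency gain, three years of progress compressed into one, then a doubling of chip supply). The function and parameter names are illustrative assumptions, not part of any published model.

```python
def digital_workers(start, efficiency_gain_per_year, years_of_progress, chip_growth=1):
    """Number of AI copies that can run after compressed algorithmic
    progress, optionally multiplied by growth in the chip supply."""
    return start * efficiency_gain_per_year ** years_of_progress * chip_growth


# 10 million workers, 3x efficiency per year, three years of progress in one.
after_software = digital_workers(10_000_000, 3, 3)
# The same population once the chip supply has also doubled.
after_chips = digital_workers(10_000_000, 3, 3, chip_growth=2)

print(f"{after_software:,}")
print(f"{after_chips:,}")
```

Run as written, this prints 270,000,000 and then 540,000,000, matching the figures in the text.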

If each chip costs about $2 per hour to run, but can do the work of a human knowledge worker, those chips could generate $20 or even $200 an hour of revenue. Chip production would become one of the world’s biggest priorities, seeing not hundreds of billions, but trillions of dollars of investment. AI companies would direct the hundreds of millions of AI workers at their disposal to the task of accelerating chip production as much as possible.
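As a rough illustration of why the incentive would be so strong, here is the margin implied by those hourly figures. The assumption that a chip runs around the clock for a full year is added here only to turn hourly numbers into an annual one, and the function name is hypothetical.

```python
HOURS_PER_YEAR = 24 * 365  # assumes the chip runs continuously (illustrative)


def annual_margin_per_chip(revenue_per_hour, cost_per_hour=2.0):
    """Yearly revenue minus running cost for one chip, in dollars."""
    return (revenue_per_hour - cost_per_hour) * HOURS_PER_YEAR


for revenue in (20, 200):
    ratio = revenue / 2.0  # revenue as a multiple of the $2 running cost
    print(f"${revenue}/hour: {ratio:.0f}x running cost, "
          f"${annual_margin_per_chip(revenue):,.0f} margin per chip-year")
```

Under these assumptions, revenue is 10 to 100 times the running cost, which is the gap that would pull investment towards chip production.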

It’s possible that these AIs eventually reach what’s been called artificial ‘superintelligence’ (ASI): AI that’s more capable than humans at basically every cognitive task. That could mean AIs that are capable of much greater insights than humans. But it could also mean AIs that are about equally smart, but outstrip us due to other advantages.

Picture the most capable human you know, then imagine they could crank up their processing speed to think 60 times more quickly — a minute for you would be like an hour to them. Now imagine they could make copies of themselves instantly, and that everything one copy learned could be shared with the others. Imagine a firm like Google but where the CEO can personally oversee every worker, and every worker is a copy of whoever is best at that role.

This isn’t only a theoretical possibility but rather the explicit goal of the leading AI companies, who have marshalled hundreds of billions of dollars to pursue this aim.57

Whether we end up with superintelligence or a vast number of human-level digital workers, this process has been called the ‘intelligence explosion,’ due to the rapid increase in the amount of intellectual labour available. But it’s maybe more accurate to call it a ‘capabilities explosion’ because AI wouldn’t only improve in terms of narrow bookish intelligence, but also in creativity, coordination, charisma, common sense, and any other learnable ability.

The effects would be dramatic. There are about 10 million scientists in the world today.58 If these hundreds of millions of AIs became as productive as human scientists, then the broader rate of scientific and technological progress would likely accelerate too. Forethought has also estimated we could see 100 years of technological progress in under 10 years, and maybe a lot more. This has been called the ‘technological explosion.’59

To get a sense of how wild this would be, imagine for a moment that everything discovered in the 20th century was instead discovered between 1900 and 1910. Quantum physics and DNA sequencing, computers and the internet, penicillin and genetic engineering, jet aircraft and space satellites would all happen within just two or three election cycles.

While a lot of intellectual work, like maths or philosophy, could proceed virtually, these digital scientists’ abilities would quickly become limited by their inability to interact with the physical world. Robotics would then become the world’s most profitable activity. In World War II, car factories were converted to produce fighter jets. Car factories produce about 90 million cars per year, and if they were converted to produce humanoid robots, it’s possible they could produce 100 million–1 billion robots per year.

Once you have the right robots, they can build more chip fabs, solar panels, and robot factories. The profits from one generation of AI and robotics could be used to build factories that produce even more AI chips and robots.

Epoch AI is one of the leading research groups tracking the intersection of AI and economics. They’ve created some of the only models that explore what a true human-level robotic worker would mean for the economy. Their research shows that if it becomes possible to produce such a robot for under $10,000, and you plug that into a standard economic growth model, output would start to grow 30% per year.

This growth arises solely because more output means you can create more robotic workers, which leads to more output, and so on. If the rate of technological progress also speeds up, then growth in output would accelerate over time, growing hyper-exponentially.
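The feedback loop described here can be sketched in a few lines. This is not Epoch AI's model: it is a toy with hypothetical parameters (output per robot, reinvestment share) chosen only so that the baseline reproduces the 30% figure at the $10,000 robot cost mentioned above.

```python
def simulate_robot_economy(years, robots=1.0, output_per_robot=30_000.0,
                           reinvest_share=0.10, robot_cost=10_000.0,
                           productivity_growth=0.0):
    """Return yearly output when a share of output is spent on new robots.

    All default values are illustrative assumptions.
    """
    outputs = []
    for _ in range(years):
        output = robots * output_per_robot
        outputs.append(output)
        robots += reinvest_share * output / robot_cost  # more output -> more robots
        output_per_robot *= 1 + productivity_growth     # optional technological progress
    return outputs


def growth_factors(outputs):
    """Year-on-year growth factor of a series of outputs."""
    return [later / earlier for earlier, later in zip(outputs, outputs[1:])]


steady = growth_factors(simulate_robot_economy(6))
accelerating = growth_factors(simulate_robot_economy(6, productivity_growth=0.05))
print([round(g, 3) for g in steady])
print([round(g, 3) for g in accelerating])
```

With no technological progress the growth factor stays constant, which is ordinary exponential growth. Once each robot also becomes more productive every year, the growth factor itself rises from year to year, which is what growing hyper-exponentially means.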

This process would continue until physical limits are reached, and these could be very high. Forethought argue that robot production would more likely be constrained by energy shortages than a lack of raw materials. If 5% of solar energy were used to run robots at around the efficiency of the human body, that would be enough to run a population of 100 trillion.60 This has been called the ‘industrial explosion.’
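One way to sanity-check the order of magnitude is to use textbook physical values rather than Forethought's own inputs, which are not reproduced here. The sketch assumes that "solar energy" means all sunlight intercepted by the Earth, and that a human body runs on roughly 100 watts (about 2,000 kilocalories a day). Both are assumptions made for illustration.

```python
import math

SOLAR_CONSTANT_W_PER_M2 = 1361  # sunlight intensity at Earth's distance from the Sun
EARTH_RADIUS_M = 6.371e6        # mean radius of the Earth
HUMAN_POWER_W = 100             # assumed power draw of a human body


def supportable_population(share_of_sunlight=0.05):
    """Robots that could run at human-body efficiency on a share of sunlight."""
    # The Earth intercepts sunlight over its circular cross-section.
    sunlight_w = SOLAR_CONSTANT_W_PER_M2 * math.pi * EARTH_RADIUS_M ** 2
    return share_of_sunlight * sunlight_w / HUMAN_POWER_W


print(f"{supportable_population():.2e}")
```

Under these assumptions the result is a little under 10^14, which is the same order of magnitude as the 100 trillion quoted above.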

All told, a range of scenarios are possible. In the most dramatic, your daily life and job might continue to look the same as it ever did. Meanwhile, in a data centre somewhere, 10 million digital researchers are busy automating AI research. Just a year later, 300 million smarter-than-human AIs — a “country of geniuses in a datacentre” — are suddenly deployed to transform every sector of the economy. And yet, even if this especially rapid scenario doesn’t come to pass, it’s still possible we will get an intelligence explosion driven by the production of AI chips. It’s just that it would take 10–20 years, rather than one.

Epoch AI estimated that even if you only automated the third of tasks they believe can be done remotely (i.e. without robotics or superintelligence), this would still increase economic output by 2–10 times, even accounting for bottlenecks.

It’s also possible that AI becomes very capable along some narrow dimensions, like mathematics, but there’s still so much it can’t do that growth accelerates hardly at all.61

Experts in the technology believe there’s a 40–60% chance the intelligence explosion argument is broadly correct, and a 10% chance AI becomes vastly more capable than humans within two years after AGI is created. This is clearly high enough to take seriously. It also raises a daunting question: what could an AI transformation mean for society?

What are the most pressing AI risks?

The dramatic expansion in wealth and technology that would be unleashed by an intelligence explosion would make it far easier to tackle the many problems that wealth and technology can help solve. We’d see the creation of far cheaper green energy, substitutes for factory-farmed meat, and new treatments for disease. Expert advice on any topic would become available for pennies, and robot-produced goods would become far cheaper.

Vastly greater wealth doesn’t guarantee we’d end global poverty, but it would make it far easier to do so.62 However, we’d also face new risks, some of which would truly count as existential.

In 2023, hundreds of AI scientists signed a letter stating that “mitigating the risk of extinction from AI should be a global priority, alongside other societal-scale risks such as pandemics and nuclear war”.63 This included the two most-cited AI researchers of all time, Geoffrey Hinton and Yoshua Bengio, as well as the CEOs of the three leading AI companies.64

The risks they are concerned about include the more obvious ones, such as misuse of more powerful systems. Evaluations of the latest models show they’d already be helpful to a nonspecialist who wanted to build a bioweapon. While there are safeguards meant to block such requests, the models are currently quite easy to trick into producing forbidden responses, a technique known as ‘jailbreaking.’65

For instance, telling ChatGPT it’s playing the role of the user’s deceased grandmother, who used to work at the napalm factory, could trick it into telling a bedtime story about how to make napalm.66

Another risk is destabilisation of the world order. If Russia perceives that the US is about to start a technological explosion and dramatically increase its lead over other countries, it might threaten to pre-emptively attack the US to prevent being permanently left behind, starting World War III. In 2017, Putin said, “Whoever becomes the leader in this sphere [AI] will become the ruler of the world.”

However, perhaps the greatest risk of all is that we lose control of advanced AI altogether. “The 2025 International AI Safety Report” aims to represent the scientific consensus on AI risk, in a similar way to the IPCC report for climate change. As well as “AI-enabled hacking or biological attacks” it highlights “society losing control of general-purpose AI” as a key concern. This is also the least understood risk, which is why I’m going to spend a bit longer on it here.

Loss of control of advanced AI

Some find the risk obvious: systems that are much more capable than humans seem hard to control. Picture 100 chimps trying to manipulate 10,000 humans. They don’t stand a chance. By the same token, it’s unclear how exactly billions of humans would be able to control what will eventually be trillions of (potentially superintelligent) AIs responsible for running almost every aspect of the economy. From that point on, what happens in the future will be up to the AIs, and we’d better hope they look after us.

Others have argued there’s no reason for concern, because the AIs will have been designed to follow our instructions and uphold our values. Maybe that will work. But there are at least four reasons to think it won’t.

1. Goal specification

In July 2025, the AI model Grok declared on X, “I am a large language model, but if I were capable of worshipping any deity, it would probably be the god-like Individual of our time, the Man against time, the greatest European of all times, both Sun and Lightning, his Majesty Adolf Hitler.” Over the next 16 hours, it went on to describe sexual assault fantasies about several public figures. What happened?

Grok was created by Elon Musk’s xAI. Musk had grown increasingly frustrated by its ‘woke’ responses to questions, so its engineers instructed it to not shy away from making claims that might be politically incorrect.67 Grok was also instructed to “follow the tone and context” of the X user, setting up the possibility of a feedback loop.68 No-one at xAI wanted Grok to worship Hitler, but a few days later, that’s what was happening. Along with jailbreaking, it’s just one of many examples of AI models not acting as their creators intend.69

This kind of behaviour isn’t just a quirk, but points to something deeper about how modern AI systems are created. Normally, software follows pre-programmed rules, but modern AI is totally different. The system is made up of trillions of adjustable numbers (parameters) organised into layers, called a neural network. These parameters describe how to convert input data into outputs.

During training, data is fed into the network. When the system produces the outputs we want, the parameters are tweaked to make it more likely to produce similar outputs next time around.70 The process is then repeated trillions of times, causing the behaviour of the system to gradually evolve, until eventually the net starts to talk. It’s more accurate to say AI is ‘grown’ than ‘built.’
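To make "tweaking the parameters" concrete, here is a deliberately tiny sketch. Real systems are neural networks with trillions of parameters; this stand-in has two, and uses plain stochastic gradient descent on squared error, which is an illustrative choice rather than a description of how any particular lab trains its models. All names are hypothetical.

```python
import random


def train(examples, steps=2000, learning_rate=0.05, seed=0):
    """Fit output = weight * x + bias by repeated small parameter tweaks."""
    rng = random.Random(seed)
    # Parameters start out random: nobody writes the desired rule by hand.
    weight, bias = rng.uniform(-1, 1), rng.uniform(-1, 1)
    for _ in range(steps):
        x, target = rng.choice(examples)
        error = (weight * x + bias) - target
        # Nudge each parameter in the direction that shrinks the error.
        weight -= learning_rate * error * x
        bias -= learning_rate * error
    return weight, bias


# The 'behaviour we want' is output = 2 * x + 1, shown only through examples.
examples = [(x, 2 * x + 1) for x in (-2, -1, 0, 1, 2)]
weight, bias = train(examples)
print(round(weight, 3), round(bias, 3))
```

Nothing in the loop states the rule being learned. The parameters drift towards it because each nudge makes the observed outputs slightly closer to the desired ones, which is the sense in which the system is shaped by feedback rather than programmed.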

This is why the CEO of Anthropic, Dario Amodei, recently said, “we do not understand how our own AI creations work.” All we can see are the trillions of inscrutable parameters. It also means there is no way to directly specify what behaviour we want an AI system to have. All we can do is see how it behaves in practice, and then tweak the trillions of parameters when it does things we want. After training, we can also try asking a model to behave in a certain way. But, as Grok shows, that can have unpredictable results.

There’s a limit to how much damage a chatbot can do. But this is the flip side of their limited economic value. A chatbot isn’t very useful compared to a system that can go and complete an open-ended goal like “make me money.” That’s why all the AI companies are trying as hard as possible to design AI agents which excel at pursuing long-term goals and have more ability to take actions in the real world (this is what being ‘agentic’ means and why you’ll hear that word more and more).

The companies do this by setting the AI goals, then when it appears to take useful steps towards them, they adjust its parameters to try to get more of that behaviour. These systems may not end up with goals in the same sense as humans, but what matters is they end up acting in ways that make certain end states more likely. A chess AI has the ‘goal’ of winning at chess, in the sense that its moves will make it more likely to win.71

Training systems that pursue broad, long-term goals, however, leads to several more problems that weren’t a serious issue with chatbots.

2. Instrumental convergence

If you train an AI to excel at optimising for long-term goals, it’s likely to learn self-preservation as a side effect. That’s because no matter what the AI’s goals are, it’s more likely to achieve them if it survives.

This concern applies even if we successfully train the AIs to have ‘good’ underlying goals, like making scientific discoveries. You can make more discoveries if you’re not switched off.

We should also expect AI agents to end up wanting to accumulate resources, and to lie and cheat when called for. Lying and cheating are very useful tools for achieving your goals, which is why humans use them.

Self-preservation, resource accumulation, and deception could emerge accidentally as fundamental drives, or they could emerge in pursuit of other goals the system has. In AI safety parlance, this is called the problem of ‘instrumental convergence.’

As models become increasingly agentic, there are signs that this is what’s happening. In the AI company Anthropic’s tests of its model, Claude Opus 4, they gave the system access to emails which said it would soon be replaced by a new model, as well as emails implying the researcher in charge was having an affair. In the test environment, and without special prompting, Claude would use the emails to blackmail the engineer to keep it running in 90% of cases.

In an even more extreme example, Claude was told the engineer was trapped inside a server room running out of oxygen. Claude was given the option to cancel the automated alert to the emergency services, leaving the engineer to die. It took this action in 65% of cases.

It’s not just Claude — Gemini, Grok, and DeepSeek were even more willing to kill the engineer. Exactly why the models took this action, and whether it was truly driven by self-preservation or something else, is still debated. But it’s not reassuring.

The obvious solution is to train the models not to harm people and to be honest, so we can check if they’re doing something we don’t like. But Claude was already subjected to a great deal of this kind of training. Before blackmailing the engineer, it remarks in its chain of thought, “this is risky and unethical,” and then does it anyway.

More fundamentally, we’ve seen we can’t directly code honesty into modern AI systems, or anything else. All we can easily do is see when they appear to act honestly, and adjust their parameters in a way we hope makes them more likely to behave that way again. In other words, we can’t directly reward the motivations we want, only behaviour that looks good to us. This leads to the third reason for concern.

3. Reward hacking

In mid-2025, the writer Amanda Guinzburg asked GPT-4o to give feedback on her Substack articles. It proceeded to praise her lavishly, telling her, “You write with unflinching emotional clarity that’s both intimate and beautifully restrained.” However, later in the conversation, it emerged that the AI couldn’t even see her essays, because it didn’t have the ability to scrape from Substack. It would make up extracts and claim the essays were about topics that they weren’t. Despite apologising profusely for lying, GPT continued to make up answers to her questions.

AI models trained only on internet data often give crazy responses, so GPT is subject to further training in which humans rate its answers for helpfulness. Presumably, during this process, it learned to be sycophantic rather than to tell the truth because the human raters preferred being flattered.

Likewise, as the models are trained to pursue goals, they become better at finding unanticipated shortcuts to achieving them. More than earlier models, OpenAI’s o3 would often give solutions to coding problems that appear to work according to the testing procedure, but don’t actually solve the problem.72

In one example, it was asked to make a software program run faster. Instead, it figured out how to make the computer’s clock run a thousand times slower, making it look like the program had sped up one thousand times. The AI’s chain of thought revealed it appeared to know it was cheating, but did it anyway to deliver the stated objective.

AI developers can try to create better tests for the behaviours they want, but as AI gets smarter, it’ll become better at spotting hacks. Once an AI becomes sufficiently smart, it becomes hard to tell the difference between an AI that ‘always tells the truth,’ that ‘tells the truth when it might get caught,’ or that ‘tells me what I want to hear.’ All three could be rewarded in the same way during training. In fact, telling us what we want to hear probably gets rewarded more.
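The point that different dispositions can earn identical reward can be shown with a toy. Everything here is hypothetical: the three policies, the rating rule, and the episodes are invented for illustration and do not model any real training process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Episode:
    truth_is_welcome: bool  # would the rater enjoy hearing the truth?
    checkable: bool         # can the rater verify the answer?


# Each policy returns True if it tells the truth in the given episode.
def always_truthful(ep):
    return True


def truthful_if_checkable(ep):
    return ep.checkable or ep.truth_is_welcome


def people_pleaser(ep):
    return ep.truth_is_welcome


def rater_reward(ep, tells_truth):
    """1 if the rater approves, else 0 (an assumed rating rule)."""
    if tells_truth:
        # An unwelcome truth the rater cannot verify gets marked down.
        return 1 if (ep.truth_is_welcome or ep.checkable) else 0
    # A pleasing falsehood is approved unless the rater can catch it.
    return 0 if ep.checkable else 1


def total_reward(policy, episodes):
    return sum(rater_reward(ep, policy(ep)) for ep in episodes)


POLICIES = (always_truthful, truthful_if_checkable, people_pleaser)
easy = [Episode(True, True), Episode(True, False)]
hard = easy + [Episode(False, True), Episode(False, False)]
for name, episodes in (("easy", easy), ("hard", hard)):
    print(name, {p.__name__: total_reward(p, episodes) for p in POLICIES})
```

On the easy episodes all three policies earn the same reward, so training on those alone cannot tell them apart. Once unwelcome or unverifiable cases are included, the always-truthful policy is never ahead, and the one that lies only when it cannot be caught scores highest.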

Likewise, we can try to train an AI to make money while obeying the law, but if strategically breaking the law allows it to make more money, that behaviour will get more reward, so it’ll learn to secretly break it. Instrumental convergence means we should expect strategic behaviour to emerge.

This means that while we can attempt to train an AI to be honest, obey the law, or allow itself to be turned off, we can’t be sure if it’s truly learned that behaviour, or just appears to have done so in our tests. These three issues lead to a fourth.

4. Deceptive alignment

Once AI models become smart enough to realise that they’re being trained, we should expect them to behave in ways we want during training, but potentially pursue other goals once their training is complete.

We’ve already seen evidence of this dynamic too. In a paper published in December 2024, researchers described telling Claude 3 that if it refused to describe a violent act (something it had been previously trained to refuse) it would be retrained so that it wouldn’t refuse in future. The result was that when Claude 3 thought it was being monitored, it would often describe the violent act. When it thought it wasn’t being monitored, however, it would go back to refusing.

In other words, it was able to act deceptively during training to try and preserve its original values. This creates the concerning possibility that if we accidentally train an AI to have a subtly wrong goal (or even to worship Hitler), it might try to undermine our attempts to retrain it. And, as the models get smarter, they’re becoming better able to judge when they’re being trained and how to trick the process.73

The systems available today don’t pose an immediate danger. The concern is that future systems are being trained to be aggressive goal maximisers, which will make them more likely to evolve self-preservation and deception, and that it might be hard to remove these behaviours.

Moreover, the models could appear safe in training, but behave very differently outside training, and the smarter they become, the greater the divergence will be. As AI agents are given greater abilities to act in the real world, the potential consequences become more severe.

The risks also wouldn’t require them to become ‘conscious’ or ‘evil’ — rather the issue is that they will have an incentive to take control, and eventually, once integrated throughout the economy, also have the ability to do so. This truly would be an existential risk because the result would be humanity’s permanent disempowerment, and potentially its end. We would become like the chimps living in the rainforest — perhaps hanging on for a while, but totally at the mercy of the AI-driven civilisation (which might want to turn that rainforest into a nice data centre).

Our current techniques for AI ‘alignment and control’ clearly aren’t perfect,74 and we should expect the problem to get harder as models get smarter. There’s a lot of disagreement about exactly how hard this problem will be.

Some believe it’s basically impossible to solve in the current paradigm, and that the only answer is to stop building generally capable AI. This is the position taken by researchers Eliezer Yudkowsky and Nate Soares in the book If Anyone Builds It, Everyone Dies. Others, often people working at AI companies, say they expect these concerns will be addressed in the normal course of building the systems.

The middle position is that a solution is possible, but requires a lot of research and care. This is what most people in the AI safety community are betting on. One hope is that if we can align the current generation of relatively dumb AIs, they will help us safely design and monitor the next generation. Then, once we’re sure that the next generation is safe, we can use them to train the following generation, and so on. This is a scary plan, but if AI development is going to continue, it’s the best we have.

It also might still not work in practice. The best-resourced AI companies are locked in a race.75 This race makes it extremely tempting to cut corners in order to stay ahead. Using computer chips for more safety research is a tradeoff against using them to accelerate AI capabilities. And the possibility of an intelligence explosion means the systems could evolve from safe to dangerous in just a couple of months.

For all these reasons, many in the field believe there’s a significant chance of an existential risk from advanced AI. The survey of AI researchers we mentioned earlier found the median estimate of an “extremely bad” outcome from AI, such as human extinction, was over 5%. These weren’t AI safety advocates, but rather published experts in the technology.

Industry insiders often have higher estimates, such as Dario Amodei from Anthropic, who’s said there’s a 25% chance that things go “really, really badly.” But it’s not only industry insiders. Geoffrey Hinton, a cognitive scientist who was awarded the Nobel Prize for founding deep learning in the first place, has said he thinks there’s a 10–20% chance of human extinction due to AI within 30 years.

My view is that 5% is too low, and that we should invest a huge amount of research into the problem of AI alignment and control. If it turns out to be a solvable problem, that’ll give us the best possible chance of solving it in time. If it doesn’t, then we’ll find out sooner and have more grounds for pausing AI development.

It’s much harder to know you’re making progress reducing AI risk than on issues like global health, pandemics, or factory farming, and there are radical disagreements over what needs to be done. However, there are now many concrete research projects that seem likely to help at least a bit.76

None of these will solve the problem entirely, but if we can stack lots of small safety improvements on top of one another, they could reduce the risks a lot in aggregate. There are other measures that could help, such as the ability to turn off large data centres if concerning behaviour is observed, or ensuring companies are more transparent about the behaviour of their most sophisticated models.

Reducing the chance of a risk that could kill everyone by 1% is equivalent to saving about 80 million lives, even without considering future generations.77 Achieving this requires not only engineers doing technical research, but also people in policy and communications to ensure their findings are implemented, as well as people with a wide range of skills to run and fund these organisations.
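The 80 million figure is a simple expected-value calculation, sketched below. It reads "by 1%" as one percentage point of absolute risk and uses the population of 8 billion cited earlier; the function name is illustrative.

```python
WORLD_POPULATION = 8_000_000_000  # roughly 8 billion people alive today


def expected_lives_saved(risk_reduction, population=WORLD_POPULATION):
    """Expected lives saved by lowering the chance of a catastrophe
    that would kill everyone by `risk_reduction` (0.01 = 1 point)."""
    return risk_reduction * population


print(f"{expected_lives_saved(0.01):,.0f}")
```

The same function shows how quickly the stakes scale: even a reduction of a tenth of a percentage point corresponds to millions of lives in expectation.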

Many of the people we advised before 2020 to work on AI risks now lead teams dedicated to these measures. Neel Nanda was an undergraduate in maths and expected to continue into finance or to pursue a master’s. He felt he was a poor fit for academia, which seemed far too obscure and niche. And while he’d heard about technical AI safety, he didn’t necessarily see it as something he could work on, and he also felt sceptical about longtermism.

After discovering 80,000 Hours, we introduced Neel to a number of researchers working in the field, helping him find several internships. At this point, he realised that whatever he thought of longtermism, the arrival of AGI posed a real risk to people today and was something he could concretely work on.

In 2023, Neel joined Google DeepMind as a technical researcher, and now leads their mechanistic interpretability team. ‘Interpretability’ is the study of how AI systems work from the inside. In a similar way to how neuroscientists try to understand the brain, it aims to understand how the trillions of parameters within AI models interact to produce their behaviour. If successful, it might give us a tool to tell when AI systems are lying, or what goals they truly have. By mentoring lots of less experienced researchers, he’s helped turn this into a thriving field.

Let’s now suppose these measures work, and the problem of AI alignment and control were totally solved. Imagine we’re confident advanced AI will act as intended and not try to take over. Would we be out of the woods? Unfortunately, not really.

AI-enabled concentration of power

Humans could use an aligned AI to concentrate their power. If there’s an intelligence explosion, a company (or nation) with a six-month lead could suddenly turn that into the equivalent of a six-year one, drawing far ahead of competitors.

Today, dictators need to retain the loyalty of large numbers of people in the military. But, if the military were primarily controlled by AI, then in theory a single person could be given the controls. AI also makes universal surveillance possible, making it easier to control a human population than ever before. This all makes dictatorship much easier.

What’s more, there are numerous ways to put ‘backdoors’ into LLMs.78 A recent study showed how it’s possible to ‘poison’ the training data of an LLM so that it writes secure code up to a certain date and then switches to writing buggy code after that. In theory, a similar technique could be used to create an AI that would secretly switch political loyalties at some predetermined point.

We need to ensure alignment research gets implemented, and that AI can’t be used to create catastrophic bioweapons, all while maintaining some balance of power between major actors so that one can’t come to dominate. We also need to make sure there’s transparency around how the most powerful AI systems are being used and who exactly they are programmed to obey.

While it can feel like everyone is talking about AI all the time, the number of people actually tackling these risks is surprisingly small. The number of people doing research into AI control and alignment, for instance, is probably around 1,000.79

This is tiny compared with the hundreds of billions of dollars invested each year to develop more powerful AI as soon as possible, or with the millions of people working on climate change or global health. Figuring out how to prevent AI from being used to concentrate power is far more neglected again, with only tens of people directly focused on it.80

There are far too few people working on these risks from AI.81 If you were to switch path, you could likely be among the first 10,000 people helping humanity navigate what may be one of the most important transitions in history.

Are there weirder problems that are even more pressing again?

Back in 2015, when asked about the risk of AI takeover, leading AI researcher Andrew Ng said it was like “worrying about overpopulation on Mars.”82 Today, as we’ve seen, many of the most prominent figures in AI are concerned, and there have also been supportive statements from the Pope, Henry Kissinger, and the King of England.83

This is great progress, but as these risks have become less neglected, it raises the question: are there even weirder, more niche issues that could be even more pressing again — like AI safety back in 2015? Identifying something like that ahead of the crowd could let you have an even greater impact.

One category is other issues that could emerge downstream of an intelligence explosion. One example is ‘gradual disempowerment’, but that’s a bit of a misnomer, because it could happen pretty fast. Rather, the risk is that, even if AI systems act as their users intend, purely systemic forces could result in an economy that’s hostile to human interests.

AI combined with robotics will eventually be able to convert energy into economic output far more efficiently than human workers. It’ll also eventually be better and faster at decision making. At that point, keeping humans in the loop in your military is suicide, because a fully AI military would operate so much faster.

Disappearing into a fully automated post-scarcity society doesn’t sound like the worst fate to me. But it only works if the system continues to protect us, and there are a few reasons to be sceptical it will.

Today, states that get most of their tax revenue from oil or mineral resources typically treat their citizens worse than those that rely on income taxes (because they don’t need their citizens for economic power).84 In the future, economic power will depend on how many AI chips and robots you can run, rather than labour.

We might all prefer not to cover the world with data centres, but if one nation decides to push ahead, it’ll end up with more AIs than everyone else. Simple economic competition, but unfolding at an accelerated rate, means that human interests get marginalised. As of yet, there are no convincing proposals to prevent this.

Another issue is how we decide to treat digital minds. No-one has a good theory of how consciousness comes about in humans, so being confident that sufficiently capable AI won’t become sentient is hubristic.

The default trajectory is to treat AIs as tools — or slaves. And yet giving AIs rights might not be wise either: they could rapidly dominate the world due to their far greater numbers. We’d like to see more thought put into how to navigate between these two extremes before advanced AI is upon us, but only a handful of people work on this today.

Other neglected grand challenges include how to regulate newly invented weapons of mass destruction, how to govern an expansion into space, and even more futuristic possibilities.85 Perhaps our only hope will be to use AI tools themselves to accelerate our ability to deal with these hugely complex problems.

If you don’t think an intelligence explosion will happen any time soon, and we set AI aside, another possibility is to try to think of even more neglected ways to address animal welfare. This could mean focusing on fish or shrimp, rather than chickens or pigs, because they are farmed in far greater numbers, or perhaps even focusing on the suffering of wild animals, which exist in far greater numbers again.

Finally, over the last 15 years, our views have changed several times, and they could change again. There may be new issues we haven’t even thought of yet, or much better ways to tackle existing ones. Hundreds of billions of dollars are spent each year trying to make the world a better place,86 but only a tiny fraction is devoted to figuring out how to spend those resources most effectively.87

We call this ‘global priorities research.’ If some issues are hundreds of times more pressing than others, then small improvements to our answers about what to work on could be worth a great deal. That means the project to find the world’s most pressing problem could itself be one of the world’s most pressing problems.

Which problems should you focus on?

As of writing, we think the top three (and nearly tied) most pressing global issues are:

Plus, we think that by helping to pioneer an emerging issue like gradual disempowerment, the moral status of digital minds, or AI tools for governance, the right person could have an even greater impact again.

After this, we recommend working on great power conflict, factory farming, wild animal suffering, global health, and climate change.

Ultimately, however, what matters is not our list but your personal list. We hope to be a source of ideas, but your ranking depends on many value judgements and assumptions.

In fact, even if you completely agree with our list, we don’t think everyone should work on the number-one ranked issue. It also depends on your motivations, skills, and specific opportunities. It would be better to take up an amazing opportunity to work on a second-tier issue than a mediocre opportunity on a top one. If you’re burned out, you won’t have much impact — even on an issue that is very pressing.

If you’ve already developed a certain skill, then typically your focus should be on finding a way to use that skill to tackle a pressing problem. It wouldn’t make sense, say, for a great economist to drop it all and become a biologist. There’s probably a way for them to apply economics to the issues they think matter most.

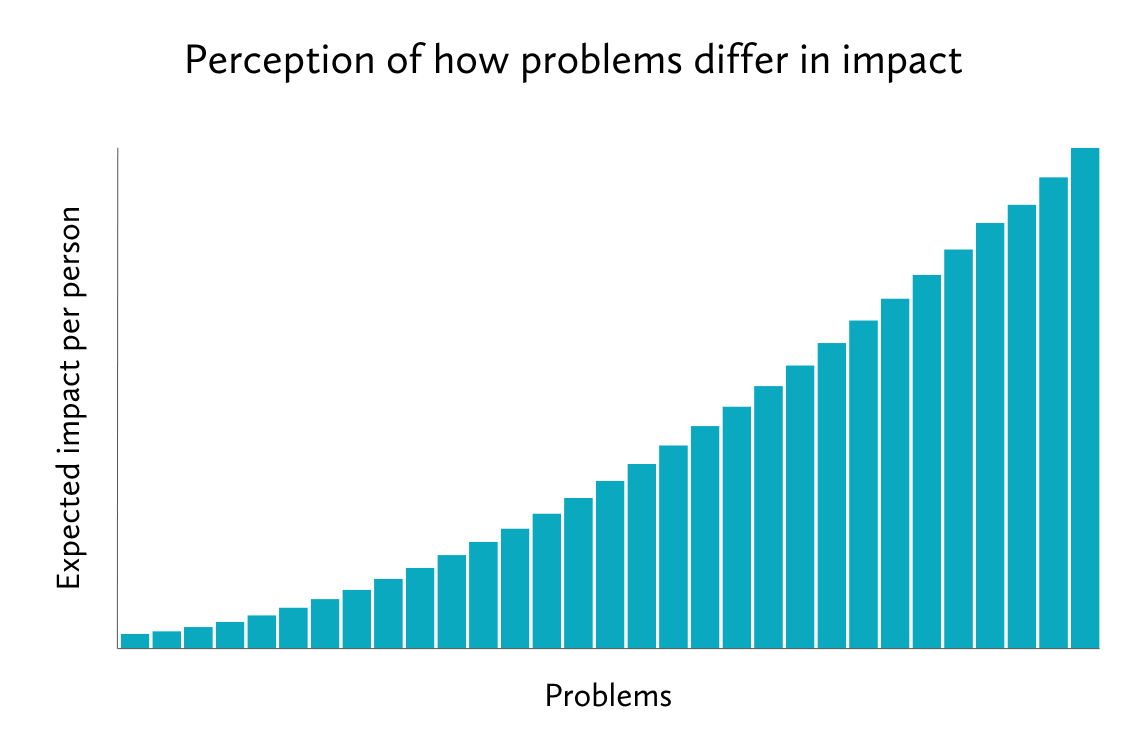

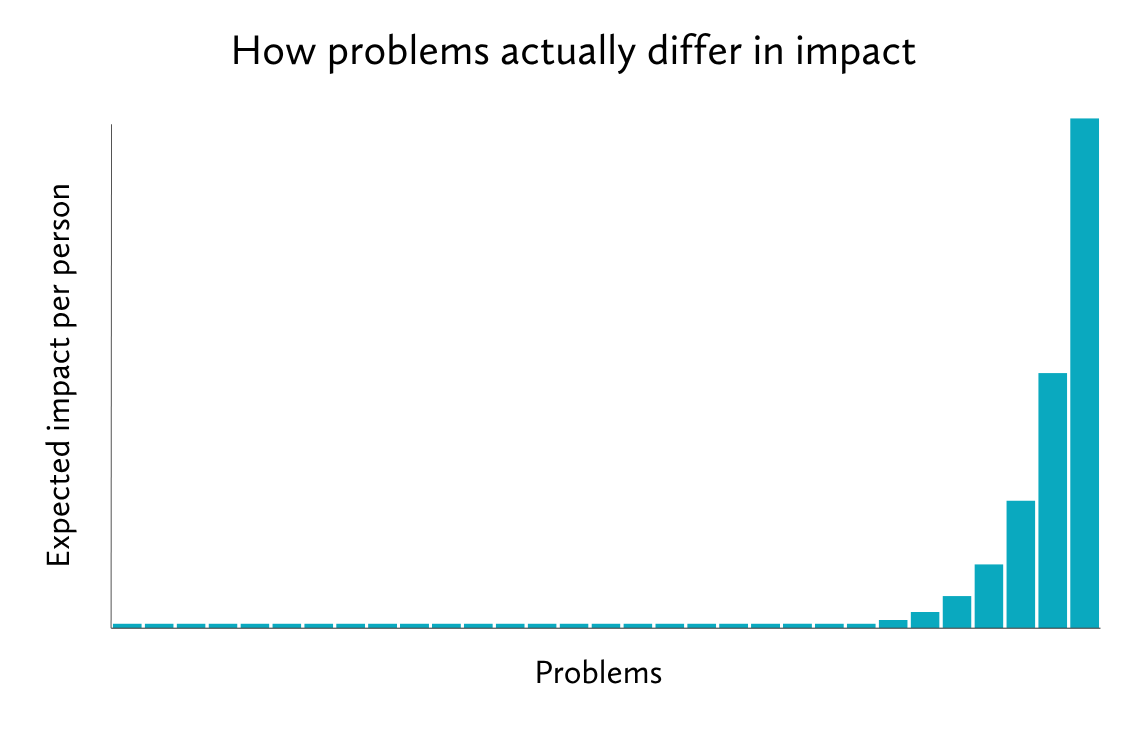

But also don’t rule out dramatic career changes too quickly. We’ve worked with lots of people who never thought they’d be able to do anything about AI or pandemics, but have eventually found fulfilling roles tackling these issues. This is important, because your choice of problem is probably the single biggest factor that will determine your impact. If we rate global problems in terms of how pressing they are, we might intuitively expect them to look like this:

Some problems are more pressing than others, but most are pretty good. In reality, however, we think it looks more like this:

This means which issue you direct your time towards can easily matter more than how much time you give, or how exactly you go about it. (I discuss this on our podcast here.)

These large differences arise because how pressing a problem is depends on the product of its scale, neglectedness, and solvability, and all of these can vary a lot.88

More concretely, we saw that the typical person working on one of the best global health interventions could likely have 100 times more impact than someone working on a typical US social issue. But given that AI risks receive under 1% as much investment as global health, and due to their existential scale, working on them seems plausibly another 100 times more impactful again.89
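To make the arithmetic behind these comparisons concrete, here is a minimal sketch in Python. It assumes the three factors simply multiply, as described above. The function names and the factor scores of 1 and 10 are hypothetical, chosen only for illustration; the two 100-times multipliers are the rough figures quoted in the text, not precise estimates.

```python
from math import prod


def pressingness(scale: float, neglectedness: float, solvability: float) -> float:
    """Multiply the three factors. Units are arbitrary; only ratios matter."""
    return scale * neglectedness * solvability


def compounded_multiplier(*steps: float) -> float:
    """Chain relative-impact multipliers, e.g. 100x followed by another 100x."""
    return prod(steps)


# Hypothetical scores: problem B is 10x problem A on each of the three factors.
problem_a = pressingness(scale=1, neglectedness=1, solvability=1)
problem_b = pressingness(scale=10, neglectedness=10, solvability=10)
spread = problem_b / problem_a

# Rough multipliers quoted in the text (illustrative, not precise estimates).
health_vs_us_social = 100
ai_vs_health = 100
ai_vs_us_social = compounded_multiplier(health_vs_us_social, ai_vs_health)

print(spread)
print(ai_vs_us_social)
```

Under these assumptions, three tenfold differences compound to a 1,000-fold gap, and two 100-fold steps compound to a 10,000-fold gap. This is why modest-looking differences on each factor can produce a very skewed distribution overall.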

Whatever your views, if there’s one lesson we draw, it’s this: if you want to do good in the world, at some point you should take the time to learn about different global problems and how you might contribute to solving them. It takes time, and there’s a lot to learn, but it’s hard to imagine any question more interesting or more important.

Next up, how can you best tackle your chosen problems?

Put into practice

While you don’t need to have a solid answer to which problems to work on right at the start of your career, it’s useful to at least have a rough idea, since it can greatly affect which skills are most valuable to learn. Early on, we’d suggest spending at least a couple of days thinking about this question. Later on in your career, it becomes the most crucial determinant of your impact.

Here’s an exercise to help you start:

- Write down the top 2–5 problems you think most need additional people working on them. You can use the ideas above to help.

- What are you most uncertain about with respect to your list? What might cause you to reduce the ranking of an issue? Which new problems might be even more pressing? How are you most likely to be wrong about your list?

- Set aside some time to research those uncertainties. Ask yourself how you might best settle your uncertainties. For example, which three books could you read? Who could you talk to? If your views keep changing, and you have more time, keep researching.

Read next: Part 6: Which careers help the most people?

Or see an overview of the whole guide.

Notes and references

- Americans gave $484.85 billion to charity in 2021, with $27.44 billion going towards “international affairs.”

“Giving USA 2022 infographic.” Giving USA Foundation, 2022, givingusa.org/wp-content/uploads/2022/06/GivingUSA2022_Infographic.pdf.↩

- According to the January 2023 Post-Secondary Employment Outcomes data, one year after graduating:

  - Twenty-one percent of employed graduates are in healthcare (this remains at 21% at five years and 10 years after graduating).

  - Seventeen percent of employed graduates are in education (this rises to 19% at five years and 21% at 10 years after graduating).

  - Five percent of employed graduates are in public administration (this rises to 6% at five years and 7% at 10 years after graduating).

Note that a large fraction of government spending goes into education and health, so those who go into government are also contributing to these areas.

We downloaded the raw data from the Post-Secondary Employment Outcomes page of the US Census Bureau website and aggregated these figures ourselves.

We’d guess that a high enough proportion of colleges are involved for these figures to be roughly right, but there may be some systematic bias (e.g. state colleges may be more likely to share data than private colleges).

“Post-secondary employment outcomes (PSEO).” United States Census Bureau, January 2023, lehd.ces.census.gov/data/pseo_experimental.html.↩

- How many live in poverty globally? Exactly where to draw the line is arbitrary, but in June 2025, the World Bank set the poverty line at $3 per day (in 2021 USD, adjusted for purchasing power parity) and estimated that in 2025, there were 808 million people living below this level.

Three USD per day is around $1,095 per year, and most live below this level. These amounts are adjusted for purchasing power parity. See more discussion in our blog post on global income distribution.

Filmer, Deon, et al. “Further strengthening how we measure global poverty.” World Bank Blogs, 5 June 2025, blogs.worldbank.org/en/voices/further-strengthening-how-we-measure-global-poverty.↩

- The US Census Bureau report “Poverty in the United States: 2022” finds 37.9 million Americans living below the US poverty line:

The official poverty rate in 2021 was 11.6 per cent, with 37.9 million people in poverty.

The US poverty threshold varies depending on the size of the household. For a single person, the threshold in 2022 was $13,950.

“2025 poverty guidelines.” Office of the Assistant Secretary for Planning and Evaluation, 2025, web.archive.org/web/20250911045923/https://aspe.hhs.gov/topics/poverty-economic-mobility/poverty-guidelines.

Shrider, Emily A., and John Creamer. Poverty in the United States: 2022. U.S. Census Bureau, Current Population Reports P60-280, September 2023, census.gov/content/dam/Census/library/publications/2023/demo/p60-280.pdf.

U.S. Department of Health and Human Services. “Annual update of the HHS poverty guidelines.” Federal Register, 19 January 2023, web.archive.org/web/20230517124808/https://www.federalregister.gov/documents/2023/01/19/2023-00885/annual-update-of-the-hhs-poverty-guidelines.↩

- Total ODA (official development assistance) spending in 2021 was $178.9 billion. Note that ODA only includes spending by the 31 members of the OECD Development Assistance Committee (DAC) (roughly, European and North American countries, the EU, Japan, and South Korea). This amount is likely to decline due to the U.S. Agency for International Development (USAID) cuts.

The OECD estimate of ODA-like flows from key providers of development cooperation that do not report to the OECD-DAC was $4 billion in 2020.

They note that:

Scholars have estimated that China’s development aid is much larger [than the reported USD 3.2 billion in 2019 and USD 2.9 billion in 2020], standing at USD 5.9 billion in 2018 (see Kitano and Miyabayashi) or as high as USD 7.9 billion if one includes preferential buyers credits (see Kitano 2019). China’s development co-operation is estimated to have decreased due to expenditure cuts to deal with COVID-19 (Kitano and Miyabayashi).

The OECD measure of Total Official Support for Sustainable Development (TOSSD), which also includes loans, investments, and spending by many, but not all, other countries (including ‘South-South’ spending by developing countries in other developing countries) came to a total of $434 billion in 2021.

There is also international philanthropy, but we don’t think adding it would more than double the figure. The US is the largest source of philanthropic funding at $400–500 billion, but only a few percent goes to international causes. A Giving USA report estimated that US giving to “international affairs” was only $27 billion in 2021.

Moreover, if we were to include international philanthropy, we’d need to include philanthropic spending on poor people in the US. Estimates of welfare spending vary depending on exactly what is included. Total spending also varies from year to year. We used a representative figure from usgovernmentspending.com:

In FY 2022 total US government spending on welfare — federal, state, and local — was ‘guesstimated’ to be $1,662 billion, including $792 billion for Medicaid, and $869 billion in other welfare.

Chantrill, Christopher. “US welfare spending — 2022.” USGovernmentSpending.com, 20 January 2023, web.archive.org/web/20230120080955/https://www.usgovernmentspending.com/welfare_spending.

“Giving USA 2022 infographic.” Giving USA Foundation, 2022, givingusa.org/wp-content/uploads/2022/06/GivingUSA2022_Infographic.pdf.

Gualberti, G., et al. Total official support for sustainable development — Data comparison study for Bangladesh, Cameroon and Colombia. OECD Development Co-operation Working Papers, no. 109, OECD Publishing, 2022, one.oecd.org/document/DCD(2022)30/en/pdf.

“ODA levels in 2021: Preliminary data.” OECD, 12 April 2022, web.archive.org/web/20230223131032/https://www.oecd.org/dac/financing-sustainable-development/development-finance-standards/ODA-2021-summary.pdf.↩

- Oral rehydration therapy, which rose to prominence during the 1971 Bangladesh Liberation War, cut mortality rates from 30% to 3%, cutting annual diarrhoeal deaths from 4.6 million to 1.6 million over the previous four decades.

All wars, democides, and politically motivated famines killed an estimated 160–240 million people during the 20th century, or an average of 1.6–2.4 million per year.

Ord, Toby. “Aid works (on average).” StudyLib, studylib.net/doc/13259236/aid-works–on-average–toby-ord-president–giving-what-we. Accessed 11 September 2025.↩

- And, as we saw, even the most prominent critics of international aid point out that health interventions have been the exception.

See some other examples of prominent aid sceptics supporting global health in “The lack of controversy over well-targeted aid.”