Emergency pod: Did OpenAI give up, or is this just a new trap? (with Rose Chan Loui)

When attorneys general intervene in corporate affairs, it usually means something has gone seriously wrong. In OpenAI’s case, it appears to have forced a dramatic reversal of the company’s plans to sideline its nonprofit foundation, announced in a blog post that made headlines worldwide.

The company’s sudden announcement that its nonprofit will “retain control” credits “constructive dialogue” with the attorneys general of California and Delaware — corporate-speak for what was likely a far more consequential confrontation behind closed doors, one perhaps driven by public pressure from Nobel Prize winners, past OpenAI staff, and community organisations.

But whether this change will help depends entirely on the details of implementation — details that remain worryingly vague in the company’s announcement.

Return guest Rose Chan Loui, nonprofit law expert at UCLA, sees potential in OpenAI’s new proposal, but emphasises that “control” must be carefully defined and enforced: “The words are great, but what’s going to back that up?” Without explicitly defining the nonprofit’s authority over safety decisions, the shift could be largely cosmetic.

Why have state officials taken such an interest so far? Host Rob Wiblin notes, “OpenAI was proposing that the AGs would no longer have any say over what this super momentous company might end up doing. … It was just crazy how they were suggesting that they would take all of the existing money and then pursue a completely different purpose.”

Now that they’re in the picture, the AGs have leverage to ensure the nonprofit maintains genuine control over issues of public safety as OpenAI develops increasingly powerful AI.

Rob and Rose explain three key areas where the AGs can make a huge difference to whether this plays out in the public’s best interest:

- Ensuring that the contractual agreements giving the nonprofit control over the new Delaware public benefit corporation are watertight, and don’t accidentally shut the AGs out of the picture.

- Insisting that a majority of board members are truly independent by prohibiting indirect as well as direct financial stakes in the business.

- Insisting that the board is empowered with the money, independent staffing, and access to information which they need to do their jobs.

This episode was originally recorded on May 6, 2025.

Video editing: Simon Monsour and Luke Monsour

Audio engineering: Ben Cordell, Milo McGuire, Simon Monsour, and Dominic Armstrong

Music: Ben Cordell

Transcriptions and web: Katy Moore

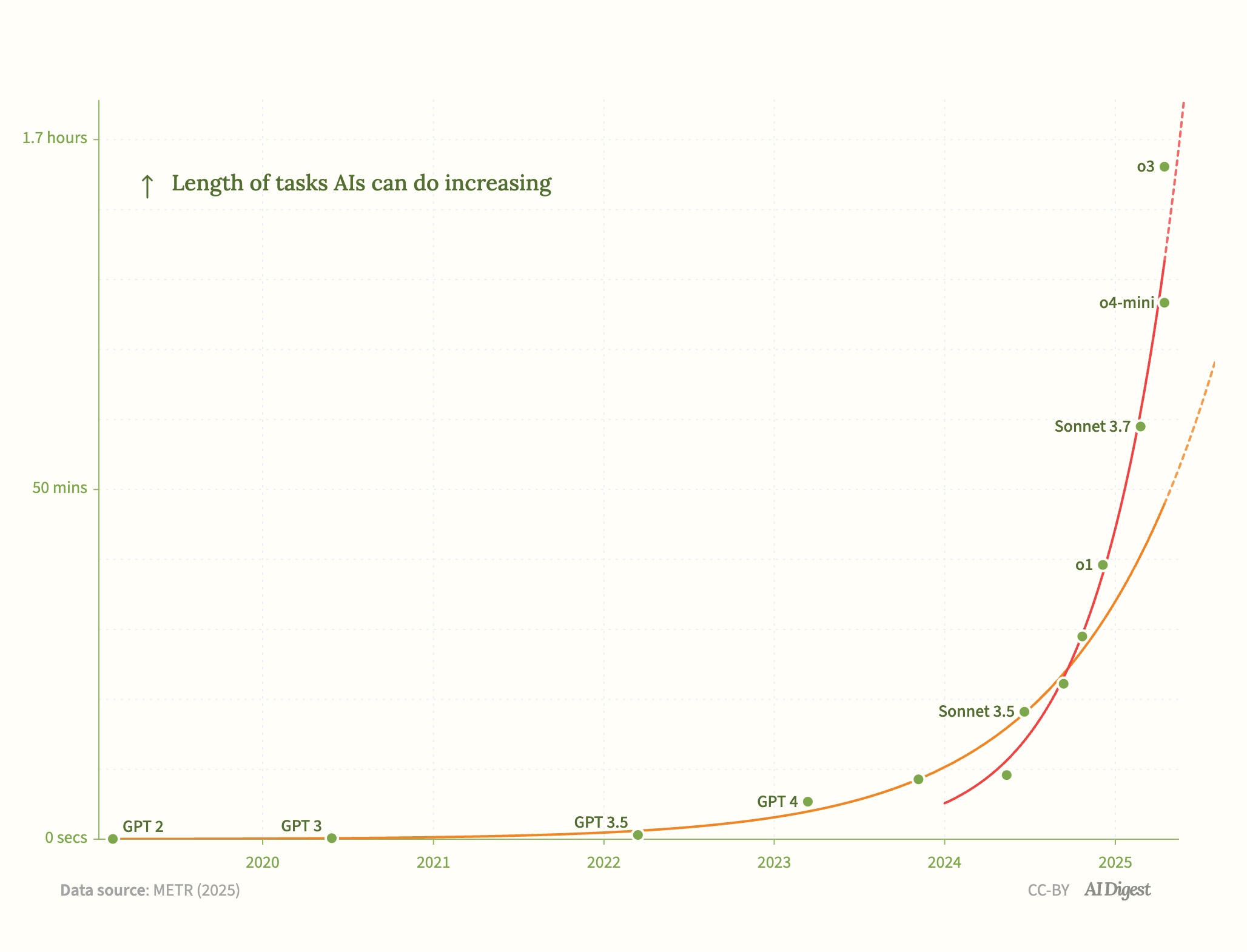

AI systems are rapidly becoming more autonomous, as measured by the

AI systems are rapidly becoming more autonomous, as measured by the

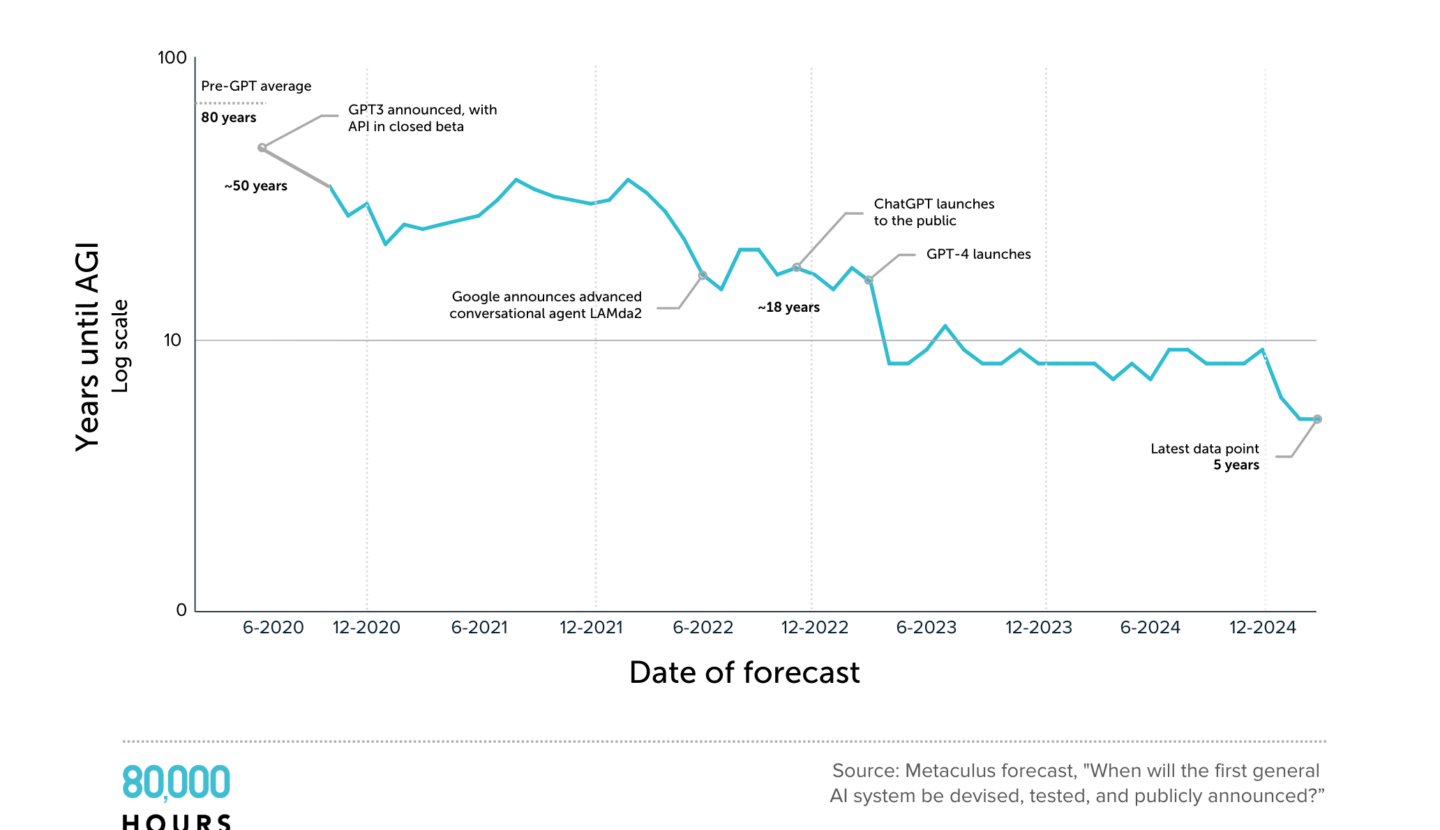

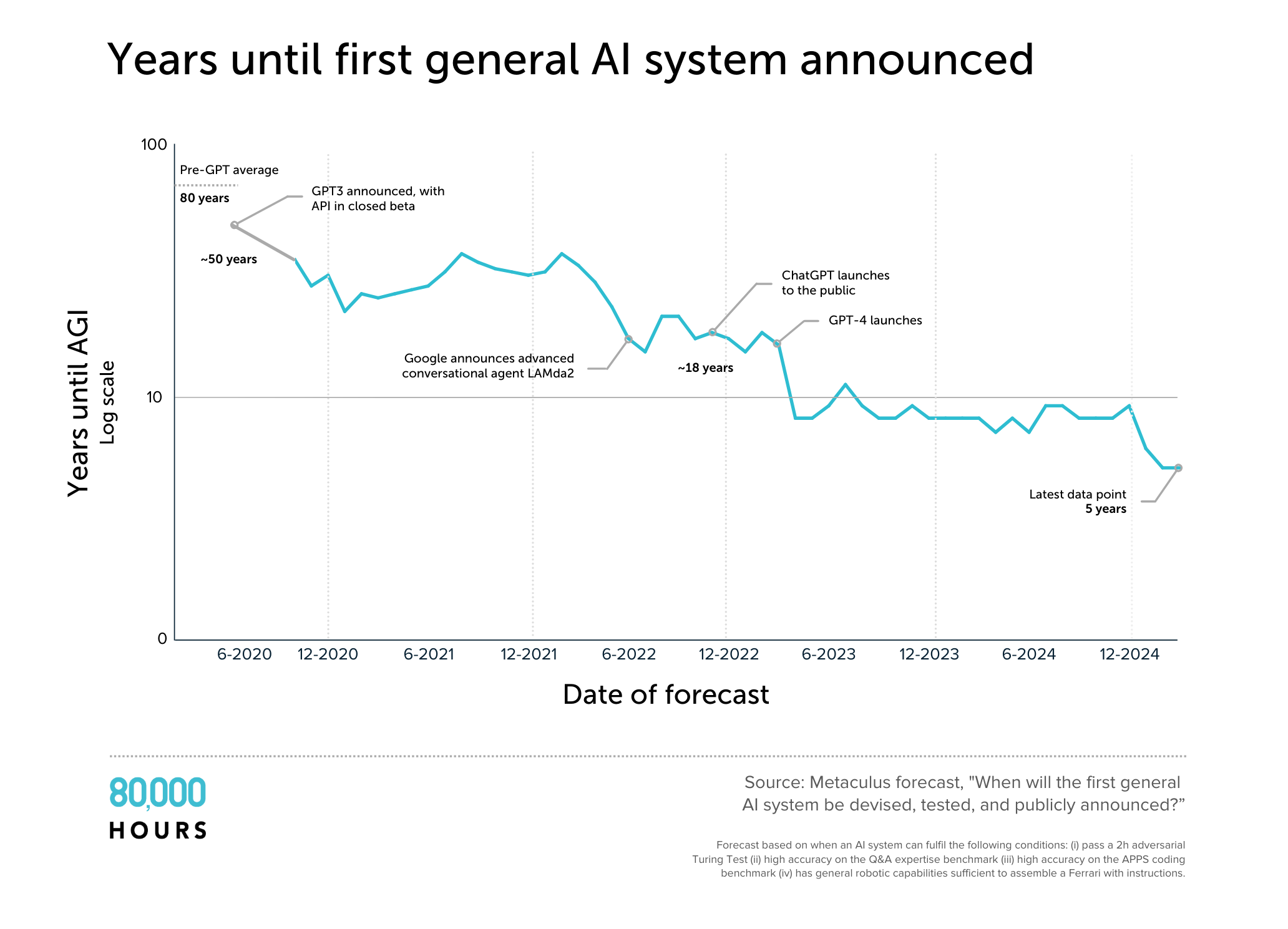

In four years, the mean estimate on Metaculus for when AGI will be developed has plummeted from 50 years to five years. There are problems with the definition used, but the graph reflects a broader pattern of declining estimates.

In four years, the mean estimate on Metaculus for when AGI will be developed has plummeted from 50 years to five years. There are problems with the definition used, but the graph reflects a broader pattern of declining estimates.