Practical steps to take now that AI risk is mainstream

AI risk has gone mainstream. So what’s next?

Last Tuesday’s statement on AI risk has hit headlines across the world. Hundreds of leading AI scientists and other prominent figures — including the CEOs of OpenAI, Anthropic and Google DeepMind — signed the one-sentence statement:

Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.

This mainstreaming of concerns about the risk of extinction from AI represents a substantial shift in the strategic landscape, and should, as a result, have implications for how best to reduce the risk.

How has the landscape shifted?

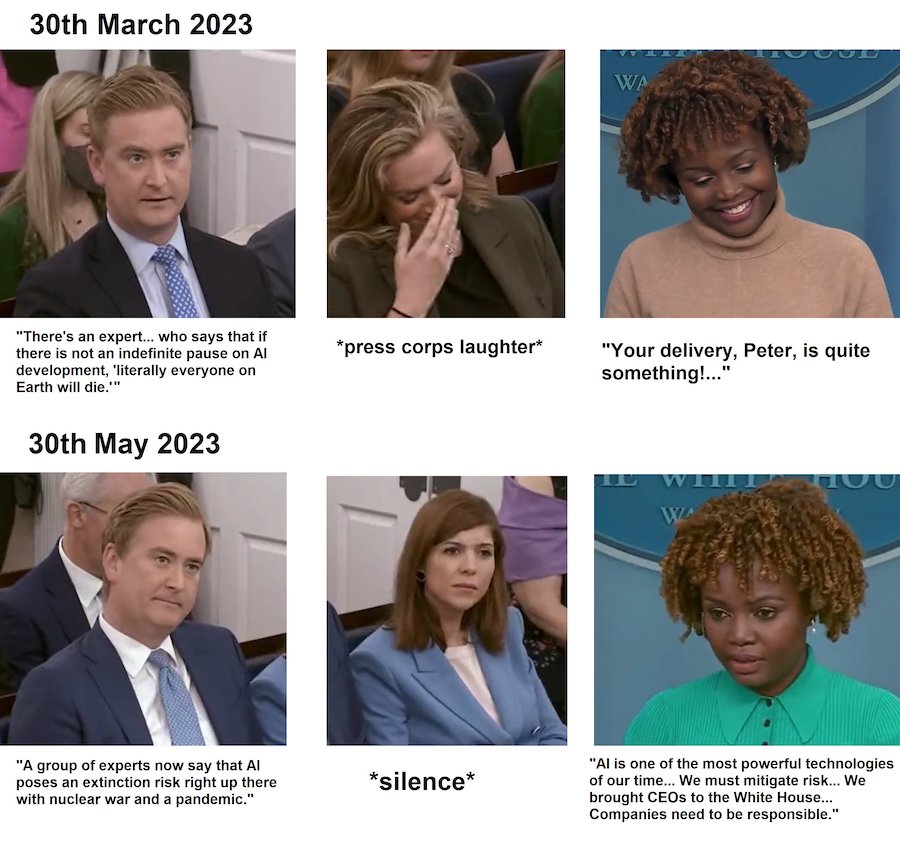

Pictures from the White House Press Briefing. Meme from @kristjanmoore. The relevant video is here.

So far, I think the most significant effect of this shift in how these risks are viewed has been on political activity.

World leaders, including Joe Biden, Rishi Sunak and Emmanuel Macron, have all met leaders in AI in the last few months. AI regulation was a key topic of discussion at the G7. And now it’s been announced that Biden and Sunak will discuss extinction risks from AI as part of talks in DC next week.

At the moment, it’s extremely unclear where this discussion will go. While I (tentatively) think that there are actions governments could take to reduce the risk, it’s also possible that governments will act in ways that increase the risk overall.

But it does seem like some kind of government action is now likely to take place in the near future.

Looking a bit further forward, the other substantial change to the strategic landscape is that, probably, more people are going to end up working on AI risk sooner than I would previously have predicted.

This blog post was first released to our newsletter subscribers.

What does this mean for our actions?

Our framework suggests thinking about the effects of all this news on the scale, neglectedness and solvability of risks from AI.

- Scale: It’s unclear whether the risk from AI has gone up or down in recent months. All else equal, I’d guess that more attention on the issue will be beneficial — but I’m very uncertain.

- Neglectedness: It seems likely that more people will be working on AI risk sooner. This reduces the neglectedness of the risk, making it harder for any one individual to have an impact.

- Solvability: For the moment, at least, it appears that it’s going to be easier to convince people to take action to reduce the risk.

Overall, I’d guess that the increase in solvability is, for now, more substantial than any decrease in neglectedness, making risks from AI overall slightly more pressing than they have been in the past. (It’s very plausible that this could change within the next few years.)

Our recommendations for action

There are still far too few people working on AI risk — and now might be one of the highest-impact times to get involved. We think more people should consider using their careers to reduce risks from AI, and not everyone needs a technical or quantitative background to do so.

(People often assume that we think everyone should work on AI, but this isn’t the case. The impact you have over your career depends not just on how pressing the problems you focus on are, but also on the effectiveness of the particular roles you might take, and your personal fit for working in them. I’d guess that less than half of our readers should work on reducing risks from AI, since there are other problems that are similarly important. But I’d also guess that many of our readers underestimate their ability to contribute.)

There are real ways you could work to reduce this risk:

- Consider working in technical roles in AI safety. If you might be able to open up new research directions, that seems incredibly high impact. But there are lots of other ways to help. You don’t need to be an expert in ML; it can be really useful just to be a great software engineer.

- It’s vital that information on how to produce and run potentially dangerous AI models is kept safe. This means roles in information security could be highly impactful.

- Other jobs at AI companies — many of which don’t require technical skills — could be good to take, if you’re careful to avoid the risks.

- Jobs in government and policy could, if you’re well informed, position you to provide cautious and helpful advice as AI systems become more dangerous and powerful.

We’ll be updating our career reviews of technical and governance roles soon as part of our push to keep our advice in line with recent developments.

Getting to the point where you could secure any of these roles — and doing good with them — means becoming more informed about the issues, and then building career capital to put yourself in a better position to have an impact. Our rule of thumb would be “get good at something helpful.”

- You could pursue relevant graduate study like an ML PhD — or try doing some self-study.

- Work in entry-level software engineering roles, in big tech or at startups — or maybe even get a junior position at a top AI company.

- Take an entry-level route into policy careers.

- Or just do anything where you might really excel.

Take a look at our (new!) article on building career capital for more.

Finally, if you’re serious about working on reducing risks from AI, consider talking to our team.

I don’t think human extinction is likely. In fact, in my opinion, humanity is almost certainly going to survive this century. But the risk is real, and substantial, making this probably the most pressing problem currently facing the world.

And right now could be the best time to start helping. Should you?

Learn more:

- Article: What could an AI-caused existential catastrophe actually look like?

- Podcast: Catherine Olsson and Daniel Ziegler on fast paths into aligning AI as a machine learning engineer