#28 – Owen Cotton-Barratt on why daring scientists should have to get liability insurance

By Robert Wiblin and Keiran Harris · Published April 27th, 2018

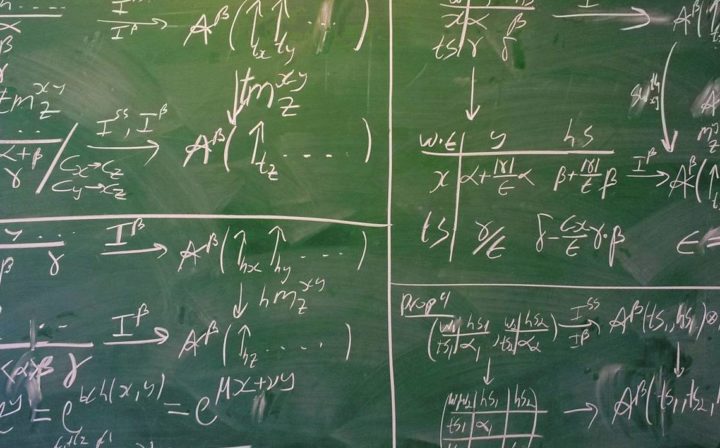

Photo by Dan Kitwood from Vox. Source.

A researcher is working on creating a new virus – one more dangerous than any that exist naturally. They believe they’re being as careful as possible. After all, if things go wrong, their own life and that of their colleagues will be in danger. But if an accident is capable of triggering a global pandemic – hundreds of millions of lives might be at risk. How much additional care will the researcher actually take in the face of such a staggering death toll?

In a new paper Dr Owen Cotton-Barratt, a Research Fellow at Oxford University’s Future of Humanity Institute, argues it’s impossible to expect them to make the correct adjustments. If they have an accident that kills 5 people – they’ll feel extremely bad. If they have an accident that kills 500 million people, they’ll feel even worse – but there’s no way for them to feel 100 million times worse. The brain simply doesn’t work that way.

So, rather than relying on individual judgement, we could create a system that would lead to better outcomes: research liability insurance.

Once an insurer assesses how much damage a particular project is expected to cause and with what likelihood – in order to proceed, the researcher would need to take out insurance against the predicted risk. In return, the insurer promises that they’ll pay out – potentially tens of billions of dollars – if things go really badly.

This would force researchers to think very carefully about the costs and benefits of their work – and incentivize the insurer to demand safety standards on a level that individual researchers can’t be expected to impose themselves.

Get this episode by subscribing to our podcast on the world’s most pressing problems and how to solve them: type 80,000 Hours into your podcasting app.

Owen is currently hiring for a selective, two-year research scholars programme at Oxford.

In this wide-ranging conversation Owen and I also discuss:

- Are academics wrong to value personal interest in a topic over its importance?

- What fraction of research has very large potential negative consequences?

- Why do we have such different reactions to situations where the risks are known and unknown?

- The downsides of waiting for tenure to do the work you think is most important.

- What are the benefits of specifying a vague problem like ‘make AI safe’ more clearly?

- How should people balance the trade-offs between having a successful career and doing the most important work?

- Are there any blind alleys we’ve gone down when thinking about AI safety?

- Why did Owen give to an organisation whose research agenda he is skeptical of?

Highlights

I don’t hear stories about people just doing these things which are deadly boring to them. Maybe sometimes somebody trawls through the data set even though it’s not interesting, but they have some kind of personal drive to do that and that makes it interesting in the moment to them.

It’s a bit complicated. On the one hand you might think that academics are being kind of unvirtuous by never going and looking at things which aren’t interesting to them. On the other hand, I actually think that having a sense of what is interesting and what is boring is a powerful intellectual tool for making real progress on questions.

I think that the general principle of working out how do we build intellectual communities working on the problems that we want to, is actually a really important one. Many of the most important problems in the world it seems to me are things that we’re still a bit confused about. Things where research is going to be a key aspect of getting towards a solution. But to get good research, we need to have really good people who have a good sense of what it is that we actually need answers to and are motivated and excited to go and work on that. And so if we can work out how to set up the systems that empower those people to address those questions, that seems really valuable to me.

A PhD is a long time investment and I know a lot of people who do PhDs and then think, “This isn’t what I want to be working on.” And often people enter into PhD programs, because that’s the thing you do if you want to go and be a researcher.

I think that if you are talented, often PhD supervisors will want to work with you. And if you have ideas of, ‘actually, I think that this is a particularly valuable research topic’, you can quite likely find somebody who would be excited to supervise you doing a PhD on that. And there you’ve used some of your selection power on choosing the topic and going for things that seem particularly important. Then you can still spend a lot of your time on working out, okay, how do I actually just write good papers in this topic?

Articles, books, and other media discussed in the show

- Work with Owen by applying for the Research Scholars Programme.

- What does (and doesn’t) AI mean for effective altruism? by Owen Cotton-Barratt

- Underprotection of Unpredictable Statistical Lives Compared to Predictable Ones by Marc Lipsitch, Nicholas G. Evans, and Owen Cotton-Barratt

- Pricing Externalities to Balance Public Risks and Benefits of Research by Sebastian Farquhar, Owen Cotton-Barratt, and Andrew Snyder-Beattie

- Projects of uncertain difficulty by Owen Cotton-Barratt

- Prospecting for Gold (Keynote) – Owen Cotton-Barratt at EAGxOxford 2016

- Selecting the appropriate model for diminishing returns by Owen Cotton-Barratt

- Machine Intelligence Research Institute

- A Federal Ban on Making Lethal Viruses Is Lifted – New York Times

Transcript

Robert Wiblin: Hi listeners, this is the 80,000 Hours Podcast, the show about the world’s most pressing problems and how you can use your career to solve them. I’m Rob Wiblin, Director of Research at 80,000 Hours.

Before we get to today’s episode I just wanted to remind you all about EA Global San Francisco which is coming up quite soon, the weekend of June 9th and 10th.

EA Global is the main annual event for people who want to use evidence and careful analysis to improve the world as much as possible. If you like this show you’re very likely to enjoy the event.

A number of guests from the podcast will be there for you to meet including William MacAskill, Lewis Bollard and Holden Karnofsky, among many other speakers. Needless to say I’ll be there as well.

I’m mentioning this now because the application deadline is coming up soon on the 13th of May, so if you think you might go you’ll want to apply now. You can do that at eaglobal.org.

The next major event will be in London in October. Applications for that aren’t open yet, but I’ll let you know about them soon.

Community run EA Global events are also happening in Melbourne in July and Utrecht in June. You can also find out about those at eaglobal.org.

And without further ado, I bring you Owen Cotton-Barratt.

Robert Wiblin: Today I’m speaking with Owen Cotton-Barratt.

Owen has a DPhil in pure mathematics from Oxford University. He’s now a Research Fellow at the Future of Humanity Institute, also at Oxford University. He previously worked as an academic mathematician and as Director of Research at the Centre for Effective Altruism. His research interests include how to make comparisons between the impact of different actions, especially with a focus on understanding the long-term effects of actions taken today.

Thanks for coming on the podcast Owen.

Owen Cotton-Barratt: Thanks Rob, looking forward to talking.

Robert Wiblin: We’re going to dive into a bunch of things you’ve been researching over the last few years, but first, what did you do your maths DPhil on?

Owen Cotton-Barratt: My thesis was entitled ‘Geometric and Profinite Properties of Groups’. This is a pretty obscure area. It’s a part of geometric group theory, which lies at the intersection of algebra and topology. I expect the number of listeners to this podcast who would know much about this to be pretty small.

Robert Wiblin: Maybe like two or three and you know them all.

Owen Cotton-Barratt: Yeah.

Robert Wiblin: Yeah. I have no idea what that is, but what made you decide to shift out of mathematics research, given that I suppose you must have spent 10 years preparing to go into a career like that?

Owen Cotton-Barratt: I found and continue to find mathematics fascinating. I think it has a lot of really interesting problems and there’s a deep elegance to it. For a long time I thought, “Well, that’s the basis I should be choosing things on.” It was around the time that I was finishing my DPhil that I started talking to people at the Future of Humanity Institute.

I was impressed that they were trying to consider questions which obviously had large importance for where we were going as a civilization, and could potentially actually affect people’s actions. And that made me think, “Hang on, I’ve just been choosing what to research on the basis of what seems fascinating.” But there are a lot of other dimensions that one could choose on, and I can personally get interested in a lot of different things. Maybe I should be using at least some of my selection power to choose topics that are important and valuable to have answers to, as well as just interesting.

Robert Wiblin: Seems to me like it’s part of the scientific or academic ideology that people should study things that they find really interesting. This isn’t taken as a kind of indulgence among academics, where they really should study some things but they find it fun to do these other things, and we should all have some fun in life. It’s actually almost regarded as a virtuous thing to do. Why do you think that is, and is there some merit to that view?

Owen Cotton-Barratt: Yeah. I think you’re right. I think there is some merit to that view. The thing that feels like it has merit is that when you’re confused about an area, things that seem fascinating, that seem interesting, that feel like you might be able to make progress on are often good ways in and good ways of actually leading to deeper understanding.

I think a lot of the important intellectual progress that has been made in the past, has come from people playing around with things where they were a bit confused and were fascinated by that, and had ideas that they thought might be able to dissolve some of the confusion. I definitely think there’s an important place for that aesthetic.

On the other hand, the space of things that we could research is just so large that we’re always having to make choices about things we are going to focus on versus things we’re not going to focus on. As individuals, we don’t have a good understanding of the entire space, and so there are questions that we’ve devoted some attention to and might become fascinated by. There are many other questions that we’ve just never really considered. If we spent more time looking at them, we might also find that there were fascinating things there.

My guess is that the correct approach is to take a bit of a mix of these two different aesthetics: thinking about what actually seems important and what we want to make progress on, and then spending time looking at questions in the vicinity of those things, to see if there are places where you’re fascinated by something in that vicinity and you can make important progress.

Robert Wiblin: Have you ever heard a story of an academic who decided to work on something that they found incredibly boring, but they thought was just incredibly important and so they did it anyway? I guess that doesn’t have the same romance to it, so I imagine that those cases are under-covered in science journalism. Maybe they also just don’t exist, because it’s so hard to research things that you don’t enjoy.

Owen Cotton-Barratt: Yeah. I think this is kind of a great question. It’s like what is going on there, because I agree. I don’t hear stories about people just doing these things which are deadly boring to them. Maybe sometimes somebody trawls through the data set even though it’s not interesting, but they have some kind of personal drive to do that and that makes it interesting in the moment to them.

It’s a bit complicated. On the one hand you might think that academics are being kind of unvirtuous by never going and looking at things which aren’t interesting to them. On the other hand, I actually think that having a sense of what is interesting and what is boring is a powerful intellectual tool for making real progress on questions. There are lots of dead ends that one could pursue in research, and some of them might at a surface level seem promising. And one of the ways that I think good researchers avoid getting sucked into these is often not by having a very explicit model that no, this isn’t worth doing, but having an aesthetic judgment of no, it seems boring and they have some implicit models of actually that’s not going to lead to anything exciting. Then that makes them not motivated to work on it.

Robert Wiblin: Is the idea that people have kind of a system one sense of whether the answer to a question is going to offer a fundamental insight or an insight that’s only applicable to that very specific case?

Owen Cotton-Barratt: Yeah, exactly and whether a particular methodology that’s been proposed is going to yield real insight into the thing or not.

Robert Wiblin: All right, let’s move on to some specific ideas that you have on how to make the world better. A couple of years ago I think you had this idea for how we could reduce the risk of harmful side effects from scientific research by using liability insurance. I see that’s recently actually appeared in a paper and gotten published. What’s the idea there?

Owen Cotton-Barratt: The idea is that research has lots of externalities. The effects of the research aren’t all just captured by the people doing the research. This is one of the reasons it’s often a great activity to engage in. It can be a way of producing a large lasting change in the world. Normally we think about the positive externalities of research, the benefits that are created that accrue to other people who are able to use the ideas in the future.

In some cases there might also be negative externalities from research, and they might be quite stochastic, kind of uncertain negative externalities. The idea that triggered looking into this was research that people have been doing on flu viruses, so-called gain-of-function research, where they edit the viruses to make them more transmissible or more virulent. This could help us understand what actually makes flus potentially dangerous, and therefore how better to respond to potential pandemic flu. Pandemic flu looks like it could be a pretty big deal.

On the other hand, if people are creating new viruses, which are more dangerous than any that exist naturally, that’s the type of thing where if you have an accident, it might not just be bad for people at the lab locally. It could trigger a global pandemic. This is a very big deal.

Robert Wiblin: The vast majority of the harm would be to people who had nothing to do with the research whatsoever?

Owen Cotton-Barratt: Exactly. You could try to say, “Well, let’s just do the research safely and let’s try and have a kind of first order solution to this problem. Work on how can we set up the processes, which will let us do research without incurring these risks.” Maybe run slightly different experiments, maybe find ways to run these experiments with a lower chance of risk. That’s a type of thing that you can only do if you’re an expert in the field.

Something else you can try and do is, think about okay, can we set up a system, which is more likely to lead to good outcomes, is less likely to lead to catastrophic accident, because the incentives for the actors involved are more correct. In this case you have individuals who are doing the research. Obviously it would be terrible for them if there were an accident and it caused a very large scale pandemic. But it can only get so bad for them. If they had an accident and it killed five people, already these people would either be dead, because they were the people immediately exposed, or it would be catastrophic for their careers, and they’d feel extremely bad.

If they have an accident and it kills 500 million people, I don’t think that they can feel 100 million times worse. The human brain just doesn’t work that way. The type of effects don’t scale with that. So this is a case of scope insensitivity where the individuals aren’t necessarily incentivized to take care, to make it 100 million times less likely or whatever the appropriate level is.
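The arithmetic behind this point can be sketched in a few lines of Python. This is only an illustration under a deliberately crude assumption: the function `felt_cost` and its `personal_stake_cap` parameter are hypothetical and simply model the idea that a researcher’s personal loss stops growing once it reaches everything they have at stake.

```python
def felt_cost(deaths: int, personal_stake_cap: float = 5.0) -> float:
    """Hypothetical model: the researcher's personal cost grows with the
    death toll only until it reaches everything they have at stake."""
    return min(float(deaths), personal_stake_cap)


small_accident = 5            # deaths in the small accident from the conversation
large_accident = 500_000_000  # deaths in the pandemic case from the conversation

# How much worse the large accident is for the world.
harm_ratio = large_accident / small_accident

# How much worse it is for the researcher under the capped model.
felt_ratio = felt_cost(large_accident) / felt_cost(small_accident)

print(f"harm is {harm_ratio:,.0f} times larger")
print(f"personal cost is {felt_ratio:,.0f} times larger")
```

Under these assumptions the harm grows by a factor of 100 million while the researcher’s own stake does not grow at all, which is the gap in incentives being described.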

Instead of thinking about just how individual researchers can do better to have safe experiments, we can think about how we can set the system up to lead to better outcomes. And the idea here was that there would be an assessment of, in expectation, how much damage might be caused. Perhaps you get an insurer to produce this assessment. And in order to do the research you need to pay the costs that are predicted for this. Maybe you just directly pay that to an insurer. Maybe you pay it to a centralized body, which is doing the assessment.

Robert Wiblin: Someone looks at this research and then tries to estimate the expected harm to others from it occurring, and then they have to pay some expected value of the harm? They have to compensate people ahead of time?

Owen Cotton-Barratt: Yeah, so in the classic model this would work where you pay an insurer for the risk that you’re imposing and the insurer promises, “Well, if this happens, I’ll pay up and you don’t have to.” That has two different beneficial effects. On the one hand it makes people less inclined to do research where the benefits don’t in fact outweigh the costs. On the other hand it means that the insurer, which has a lot of money and does care about larger catastrophes much more than small ones, has the oomph to demand particularly strict safety standards and the incentives to actually work out which safety standards will be effective in reducing risk and which are just costly measures that don’t in fact affect the risk very much.
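A minimal sketch of the two effects, assuming an actuarially fair premium (probability of an accident times the damage) and ignoring the margin a real insurer would add. The `Project` class, the function names, and every number here are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Project:
    name: str
    accident_one_in: int     # assumed: one catastrophic accident per this many projects
    damage_if_accident: int  # assumed external damage, in dollars
    expected_benefit: int    # assumed social benefit, in dollars


def premium(project: Project) -> float:
    """Actuarially fair premium: probability of an accident times the damage."""
    return project.damage_if_accident / project.accident_one_in


def worth_doing(project: Project) -> bool:
    """The research goes ahead only if its benefit exceeds the priced-in risk."""
    return project.expected_benefit > premium(project)


# Same experiment and same benefit, with two assumed levels of lab safety.
lax = Project("lax safety", 10_000, 1_000_000_000_000, 50_000_000)
strict = Project("strict safety", 1_000_000, 1_000_000_000_000, 50_000_000)

for project in (lax, strict):
    print(project.name, f"premium=${premium(project):,.0f}", "go ahead:", worth_doing(project))
```

With these made-up figures the lax version is priced out, because its premium exceeds its benefit, while the stricter version is not. That is the sense in which pricing the risk both discourages net-harmful research and rewards safety standards that genuinely lower the probability of an accident.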

Robert Wiblin: If the flu did escape the lab, is the idea that under this system everyone around the world would be able to sue the researchers for the harm they caused, and then the insurer would cover it? Or is it that people are never actually going to claim the money, but we try to guess in expectation how much they would claim, and then the researchers have to pay that upfront?

Owen Cotton-Barratt: In principle, you’d like to have the case that everybody could sue and the insurers would pay out. In fact, you’re not going to find an insurer with a big enough bankroll to do this. You might be able to get insurance to the level of tens or perhaps even hundreds of billions. A particularly bad pandemic could incur costs, which were up in the trillions, so you can’t insure the whole thing. Insurers have a much bigger bankroll and more to lose than the individual researchers, and so scope insensitivity only starts kicking in for them at a much larger scale than when we were thinking about the individual researchers doing this.

Robert Wiblin: The science researchers are going to pay the insurer upfront, but the insurer never then pays anything out in particular?

Owen Cotton-Barratt: Well the insurer …

Robert Wiblin: Isn’t this just free money for the insurer? They’re kind of selling an indulgence or something to the researchers.

Owen Cotton-Barratt: No. If there’s a pretty bad pandemic, the insurer will pay out a lot of money. If there’s a very large pandemic, the insurer will pay out the same lot of money, whatever the cap was on the amount that they could lose.

Robert Wiblin: There is a cap?

Owen Cotton-Barratt: There is a cap so that insurers are okay with even entering into this kind of arrangement. If there weren’t a cap, they’d just say, “No, that could bankrupt us, too risky for us,” and nobody would be willing to offer insurance. There is a way to set it up which doesn’t involve an insurer at all though, and that is more like the free money thing.

You have a government body, which does the same type of assessment that the insurer would do, and then says, “And you need to pay us this in order to do the research.” Then it becomes a kind of tax where the tax is directly related to the assessment of the expected costs.
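The difference between the capped insurance version and the tax version can be sketched as follows. The cap and damage sizes follow the rough magnitudes mentioned in the conversation (insurance up to around hundreds of billions, pandemic costs up into the trillions), but the probabilities and exact figures are made up, and the names `CAP`, `scenarios`, and `insurer_payout` are hypothetical.

```python
from fractions import Fraction

CAP = 100_000_000_000  # assumed payout cap: $100 billion

# (probability, damage in dollars): both columns are made-up numbers
scenarios = [
    (Fraction(1, 1_000), 10_000_000_000),       # pretty bad pandemic, $10 billion
    (Fraction(1, 100_000), 5_000_000_000_000),  # very large pandemic, $5 trillion
]


def insurer_payout(damage: int, cap: int = CAP) -> int:
    """The insurer pays the damage in full until the cap is reached."""
    return min(damage, cap)


# Insurance version: the premium only reflects losses up to the cap.
capped_premium = sum(p * insurer_payout(d) for p, d in scenarios)

# Tax version: the charge reflects the full expected damage.
full_cost_tax = sum(p * d for p, d in scenarios)

print(f"capped premium: ${int(capped_premium):,}")
print(f"full-cost tax:  ${int(full_cost_tax):,}")
```

In this sketch the very large pandemic costs the insurer exactly the cap, the same as any other pandemic above that size, so the premium understates the worst outcomes. A tax set by a government assessor can in principle price the whole expected damage, at the cost of there being no payout when things go wrong.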

Robert Wiblin: Something like a Pigouvian risk tax, like you’d have the Pigouvian carbon tax?

Owen Cotton-Barratt: Exactly, yeah.

Robert Wiblin: Who would do the risk assessment here?

Owen Cotton-Barratt: I think this idea has a number of wrinkles and challenges to actually being implemented, and that is one of them. There have been some attempts to make quantitative estimates of the risk associated with these types of experiments, but they have varied by a few orders of magnitude. There is expertise already within insurance firms, or technically within reinsurance firms, in modeling pandemic risk, so they have some understanding of the type and the scope of damage that might be caused by a particularly bad pandemic.

That doesn’t cover accidents from these artificially created pandemics, but there are other data sets on how often there are accidents in biosafety labs. I think that one can do some reasonable analysis of this. When the US government was looking into this research a couple of years ago, they commissioned a private analysis of the risks and benefits of the research. Gryphon Scientific, the firm who did this, did manage to produce a number for the expected damage from biosafety.

There was another class of possible damages from security risks where, by doing the research and publishing it, you make possible bad actors aware that this is a thing they could do. The research could be stolen, it could be misused. They didn’t manage to quantify that, but it’s a start.

Robert Wiblin: Is the idea that this risk assessment will be done by the government, by some regulatory agency? Or is the idea that the insurance company would produce these estimates and thereby decide what price they’re going to charge for this insurance coverage?

Owen Cotton-Barratt: There’s two different versions of this idea. In one of the versions, the government is producing the estimate. That has the challenge of aligning incentives correctly for the people in government making the estimate, but it has the advantage that they can in principle try to capture all of the different sources of risk without needing to be able to do proper assignments of responsibility after the fact.

In the case of insurers, you have the challenges of assigning responsibility and also the fact that they only have so large a bankroll. So the possible liability will be capped, but you have the advantage that the incentives are more properly aligned.

Robert Wiblin: If we imagine that some dangerous research was done in the UK and it was covered by some insurer, and it had terrible effects all around the world, is the idea that only British people would be able to sue the researchers who messed up and thereby get compensated by the insurer? Is that how you make the cap functional? Or is it that everyone around the world sues them all at once and then whoever gets their lawsuit in first gets the money? Or perhaps everyone sues and they each get some fraction, up to as much as the insurer is able to pay?

Owen Cotton-Barratt: Yeah. I don’t know. The stage that this idea is at is, it’s a kind of preliminary idea, working out in principle, roughly, how the moving pieces might work. The question that you’re asking is one that comes down to international law, and that is just not my area of expertise.

Robert Wiblin: That is not your jurisdiction?

Owen Cotton-Barratt: Yup.

Robert Wiblin: Okay, well here’s another thing that occurs to me. With this idea you’re trying to internalize the external harms caused by this research.

Owen Cotton-Barratt: Yeah that’s right.

Robert Wiblin: Research has both external benefits and external costs, and if you only internalize the external costs, but you don’t compensate them for the external benefits, then this would create a big bias against conducting research. They pay the global costs of their actions, but they don’t receive the global benefits. Does that make sense?

Owen Cotton-Barratt: Yeah, that does make sense. I think that there are already a bunch of processes for internalizing the benefits. This is why research is often, correctly in my view, subsidized by states and by philanthropists. We have mechanisms for determining which research is likely to have particularly large benefits and funding that. This is the idea behind the grant process, where you have experts in the field assessing research proposals and trying to fund the ones that they think are likely to be particularly valuable.

In this case there would be an extra line in the cost of the research grant, which would be to cover the possible externalities and that would be a cost of the research, which they would have to weigh up when they were deciding which pieces of research to fund as well as other costs. It could still be quite correct to fund this in some cases, because the expected benefits might be sufficiently large.

Robert Wiblin: Scientists are going to hate this idea, aren’t they? It’s not going to increase the total budget available for scientific research, but some of it now goes firstly to insurers and then to the general public whenever they’re harmed by scientists’ actions. It seems like it’ll be unpopular.

Owen Cotton-Barratt: In a way there is an implicit subsidy being provided to research at the moment by the government kind of underwriting it, because it would come down to either the government or the public picking up the costs of a large-scale catastrophe if it occurred. In the version where it’s a government body that the money is paid to, that could in fact be a Research Council itself, so it might be that it doesn’t reduce the total amount of money going to science at all, but it just redistributes it …

Robert Wiblin: To some other group?

Owen Cotton-Barratt: Somewhat, and so, yeah exactly, it’s helping the scientists who are doing research which is more robustly net beneficial, relative to the ones who are doing things which are possibly concerning. I think that would have both supporters and detractors within science. I know that some biologists are concerned that their colleagues are working on things which have the potential to cause large-scale catastrophe. If that did happen, I think it would be extremely bad for the reputation of science. There’s some responsibility that people might feel to avoid getting into that situation in the first place.

Robert Wiblin: Another question that is relevant is what fraction of research has very large potential negative consequences? If this was extremely rare, then maybe you would just want to regulate those things directly, if it’s just a handful of odd cases. If this is a more widespread phenomenon, where all research has potentially harmful consequences and it’s just a question of estimating how large they are, then perhaps you need some more systematic way of doing it.

Owen Cotton-Barratt: I think at the moment there are not many bits of research which have significant direct risks of large scale catastrophe. You say that you could just, why don’t we just regulate this directly? The challenge is, maybe even though it has the risks, we should be doing the research, because it might be that this is exactly the research, which helps reduce the risk of having a bad global influenza pandemic. We need a real way of weighing up the risks and the benefits and not just saying, “Well it’s risky so we can’t do it.”

Robert Wiblin: Another paper you published recently talks about how we tend to treat unpredictable risks differently than risks where we know each year roughly how many people are going to die. But we just don’t know who. What’s the difference in how we treat those two cases?

Owen Cotton-Barratt: Yeah, so this paper was with Marc Lipsitch and Nick Evans. It was Marc’s idea. Marc is actually one of the biologists I mentioned who’s concerned about the gain-of-function research. He got to thinking, what is going on? What are the general processes that produce this? It’s really an idea that we’ve already touched on in the conversation, that if you have a small chance of causing a very large-scale catastrophe, then the actor who is possibly responsible for taking mitigating actions isn’t properly incentivized to take them to the appropriate degree. Because in the case of a catastrophe, it just more than wipes out everything that they have at stake, and so they are insensitive to the scale of things.

Robert Wiblin: So once you’ve been given the death penalty, any further crimes you commit just don’t matter that much to you. That might be an analogous case.

Owen Cotton-Barratt: Yeah. This is called the judgment-proof problem. You can’t hold people to account for things beyond the scale of what they stand to lose. This means that for those situations where there’s a small chance of a large catastrophe, the incentives don’t line up as well as when there are lots of uncorrelated small chances of accidents, but where in aggregate we have a pretty good idea of what the scale of damage is going to be.

You might think of car crashes. Car manufacturers can be sued if their vehicle design leads to people dying, but this is going to be a kind of broadly statistical effect. And we can make reasonable estimates about how many people are going to die in car crashes in a given year, even though we have no idea who those people will be.

Robert Wiblin: The usual story that’s told here is that we care more about these risks when the people who might die are known. If I’m in hospital and there’s a 50% chance that I’m going to die, people hopefully would care more about that, probably would care more about that, than about a person chosen at random having a 50% chance of death. Is that right?

Owen Cotton-Barratt: This is the distinction between identified and statistical lives. Observationally, people do care more about saving a life when it’s known whose life they’re saving, than saving a life in expectation across a large population, where it’s not known what the effect is going to be. I think that ethicists debate whether or not that increased level of care is actually ethically appropriate. There are cases where we have more of a relationship, or we can see the person, and perhaps that does give us a stronger duty of care. I tend to…

Robert Wiblin: But that should cancel out, because if you don’t know who it is then a large number of people would each have a tiny partial concern for that person.

Owen Cotton-Barratt: Yeah. I’m pretty sympathetic to the view that you’re expressing. I think that generally we should treat them about the same, but I don’t think that the case is totally open and shut. In the case where in either case you don’t know who’s going to be affected and it’s just the difference between whether you know roughly how many there’ll be or there’s uncertainty even about the number, I find it hard to see how an ethical case can even get started for really distinguishing between those. And so I think it is an issue that the incentives in our society push against treating these the same.

Robert Wiblin: Your point is, let’s say in the United States about 10,000 people die in traffic accidents every year. We’re more concerned about that than we are about an accident that happens every hundred years but then kills a million people, even though those two kill the same number on average each year. Or I guess you could have an even more extreme case, where there’s a risk that we kind of guess will kill 10,000 people a year on average, but we really don’t know what the distribution is of the probability and severity of accidents, so it’s much more abstract.
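The comparison in this example can be checked with a short calculation. The sketch below assumes, purely for simplicity, that the steady risk kills exactly the same number every year and that the rare accident is an independent 1-in-100 event each year; the function `annual_stats` is a hypothetical helper, not something from the paper.

```python
from fractions import Fraction
import math


def annual_stats(prob_per_year: Fraction, deaths_if_it_happens: int):
    """Mean and standard deviation of deaths per year for a risk that either
    happens (with the given probability) or does not happen in a given year."""
    mean = prob_per_year * deaths_if_it_happens
    variance = prob_per_year * (1 - prob_per_year) * deaths_if_it_happens ** 2
    return mean, math.sqrt(variance)


# Steady risk: 10,000 deaths every single year (assumed to have no variation).
steady_mean, steady_sd = annual_stats(Fraction(1), 10_000)

# Rare risk: a 1-in-100 chance each year of an accident killing a million people.
rare_mean, rare_sd = annual_stats(Fraction(1, 100), 1_000_000)

print(f"steady: mean {int(steady_mean):,} per year, standard deviation {steady_sd:,.0f}")
print(f"rare:   mean {int(rare_mean):,} per year, standard deviation {rare_sd:,.0f}")
```

Both risks have the same expected death toll of 10,000 a year. They differ only in spread: the steady risk is fully predictable in aggregate, while almost all of the rare risk sits in a single catastrophic year.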

Owen Cotton-Barratt: Yeah, that’s basically what we’re arguing, at least insofar as economic incentives go. There are psychological factors, which complicate this and mean that it could go in either direction. You can look at the details in the paper, but at a large scale that’s what we think is happening and why we think that some of these more uncertain large scale risks are particularly neglected.

This whole line of thinking is not trying to directly produce solutions for catastrophic risks, but trying to work out what directions we can go in to set up the system we’re operating in, so that the system is more likely to produce solutions. I think that this is a lens we can use in a number of different domains. People at the Future of Humanity Institute and a lot of other places these days are giving some attention to thinking about risks from artificial intelligence. The kind of direct way to think about what we do about this is: how do we build systems which are safe and robustly beneficial while still being really powerful?

And I think that that is a good strand of research to be exploring. I think that we might get ideas there, which are valuable. This is the, just tackle the problem head on approach. Another thing we could do is, ask what does it even mean for a system to be safe and robustly beneficial? Can we give a very good specification of that? This is a different research direction, but if you managed to get a good enough specification of what that meant, then other people could come in and do the research of, well, now we know exactly what the target is, so we can build and optimize for that. And so that allows for specialization of labor. You don’t need your researchers to simultaneously be able to recognize what a good and safe system is, and to have the technical know-how to be able to do it.

Robert Wiblin: Do you think the Future of Humanity Institute might be in a better position to specify what safety would look like and how to measure it, rather than design safe systems themselves?

Owen Cotton-Barratt: I don’t want to make claims about who should be doing particular strands of research. I think that this is another direction, which is probably worth exploring in the research community, thinking about beneficial and safe Artificial Intelligence. It might be that the problem of specifying exactly what it means to be a safe system is harder than the problem of just building one. In that case we definitely want to be aiming for the direct problem as well, but it might be that the problem of specifying a safe system turns out to be easier. I think that it’s valuable for some people to be putting effort into that side of things, to check for low hanging fruit.

A particular advantage of this in the case of Artificial Intelligence is, at some point down the line we may start automating more and more of Artificial Intelligence development and it may be that we can get much more powerful Artificial Intelligence systems, because we have a well specified sense of what being powerful as an Artificial Intelligence system means. We can just automate the exploration process for which architectures are going to give us good performance on this.

If we also have a very well specified idea of what it means to be a safe system, we may be able to automate safety research at the same time as we can automate capability research.

Robert Wiblin: Is part of the hope here as well that by making a really interesting intellectual problem very clear, where the solution feels more concrete to people than it might have otherwise, you’d kind of be able to hijack their time, because they’ve read about this problem and just find it irresistible to work on?

Owen Cotton-Barratt: Yeah, absolutely and I think the idea of hijacking makes it sound a bit more sinister here than it really is. It’s providing people with a problem that in fact they would find interesting and motivating to work on. This is quite a co-operative move with researchers. I think there’s a couple of different angles that you might pull people in on here. One is people who think well I like to build systems to specifications and now there are specifications, now I know what to do. Now I feel like this is actually a problem that I can engage with, rather than just hearing the abstract arguments and thinking, “Well, this sounds important, but I don’t know how to start.”

On the other hand, you might have people who read the specifications that you come up with and go, “Hey, that’s not right.” It’s often easier to criticize than to propose a new idea, and so by putting ideas out there, you can help bring in intellectual labor to the process of refining and improving them.

Robert Wiblin: Yeah, I’ve often actually found that myself, that if I want to find out the answer to a question, then I should offer a terrible answer to it. Then people start piling on and telling you why it’s wrong, and offering better solutions.

Owen Cotton-Barratt: I am extremely vulnerable to this attack mode. I feel like a lot of the work I did for the Centre for Effective Altruism in the past was people saying, “Why don’t we do this?”, building metrics, and me going, “That is the wrong metric to use. Here, let me spend a week designing a better one for you.”

Robert Wiblin: Well, silly people like me need a way of getting the attention of greater intellectual firepower from people like you.

Owen Cotton-Barratt: I think that the general principle of working out how do we build intellectual communities working on the problems that we want to, is actually a really important one. Many of the most important problems in the world it seems to me, are things that we’re still a bit confused about. Things where research is going to be a key aspect of getting towards a solution. But to get good research, we need to have really good people who have a good sense of what it is that we actually need answers to and are motivated and excited to go and work on that. And so if we can work out how to set up the systems that empower those people to address those questions, that seems really valuable to me.

Robert Wiblin: Well that brings us to another topic that we wanted to talk about, which is how you can have a career in academia while still studying things that you think are really important. Often when we’re advising people who are considering doing a PhD or perhaps they’re doing their first post-doc, we have to point out there’s a trade-off between advancing their career as quickly as possible and doing the research that they themselves think is most important to do. Typically, if you want to do really valuable research, you want to do something extremely innovative, perhaps create a new field that doesn’t exist, but if you want to advance your career as much as possible, you want to do something that’s already going to be recognized as a legitimate field and try to tie yourself into an existing literature. How have you found this trade-off yourself now working at Oxford?

Owen Cotton-Barratt: I’ve found the Centre for Effective Altruism and the Future of Humanity Institute very intellectually productive environments to work in. I’ve been optimizing quite far towards just doing work that I think is valuable, and only putting a smallish fraction of my research attention into turning things into papers for the sake of getting publications and looking like a respectable academic. Maybe I’ve recently been splitting this on a kind of 90%, 10% basis.

Depending on people’s situation, I don’t think that that split is always going to be correct. I’ve been writing a few papers over the last few years and generally only writing papers where it seems like a paper is the best way to communicate an idea. Sometimes I’ve been happy to just leave an idea in a blog post or present it in a talk, and this has let me go faster and explore more of the space of possibilities. I think that sometimes people entering into academia feel like they don’t even have permission and they’re not entitled to have opinions about what research is important. They just need to buckle down and do the work to get tenure and then eventually they’ll be able to burst out and start thinking, “Okay, now what do I really want to work on?”

I actually worry about this attitude and think that it might be quite destructive towards academia as a whole. Everybody optimizing for several years for what is going to be good for my career. I worry about this for a couple of different reasons, but one is that, I’m not even sure it’s the thing which sets them up to do the most valuable research later. There are lots of things which set us up to be in positions to do valuable research. One of them is having a recognition that you are an established thinker in this field and that what you have to say is going to be worth listening to.

Another is just having well calibrated ideas about what is going to be valuable to work on. I think that choosing particularly important research questions is a skill. I think it’s a somewhat difficult skill, because the feedback loops are a bit messy, but there are some feedback loops there. Like many skills, I think that it’s one where the best way to get better at it is to practice and to try and seek out feedback on how you’re doing, hopefully from reality. Sometimes the feedback loops from reality are too long and slow, but then you can try and get feedback from other thinkers whose judgment you trust.

It’s also the case that, if people are trying to do research on questions that feel particularly important to them, they are going to be more directed in the other things that they’re choosing to learn about and build up expertise in. They are likely to build expertise in topics which are really crucial to the things that they think are important, rather than building expertise in a topic which is kind of adjacent: maybe it’s a little bit like something that’s important, but not actually that close. I think that that could help people to be better positioned to have a lot of impact in the future.

Robert Wiblin: Okay, so the first problem is that, if you just try to do what’s respectable then you don’t develop good judgment about what problems are actually important to study. The second problem is that you’ll just end up accumulating lots of knowledge about things that are kind of close to something important, but not that close. Later on when you try to actually do the things that are valuable, you don’t know very much about them.

Owen Cotton-Barratt: Yeah, and you may still be reasonably positioned and be able to do something on them, but perhaps much less than if you’d spent the intervening years building up expertise on things which were more well targeted for the problems that you ultimately care about. A PhD is a long time investment and I know a lot of people who do PhDs and then think, “This isn’t what I want to be working on.” And often people enter into PhD programs, because that’s the thing you do if you want to go and be a researcher.

I think that if you are talented, often PhD supervisors will want to work with you. And if you have ideas of, ‘actually, I think that this is a particularly valuable research topic’, you can quite likely find somebody who would be excited to supervise you doing a PhD on that. And there you’ve used some of your selection power on choosing the topic and going for things that seem particularly important. Then you can still spend a lot of your time on working out, okay, how do I actually just write good papers in this topic?

There are skills of how to write good papers which are generally transferable, which academia teaches well, and which are valuable to learn. If you learn them in a domain where you think it’s really important, that seems a lot better than learning them in a domain which is just kind of adjacent to something you think is important.

Robert Wiblin: You don’t think the cost in terms of advancing your academic career is so severe that you should just play the game early on?

Owen Cotton-Barratt: I don’t think it’s so severe. I think that there is a cost. I think that a lot of academic advice is generally standardized to trying to help people maximize the chance of a successful career in the “this is successful for them personally” sense.

Robert Wiblin: When they don’t particularly care about the impact of the work at all?

Owen Cotton-Barratt: Exactly. I expect that there will be some people who could have had academic careers that were successful in that sense, but who, if they pay a bit of a price by using more of their optimization power on trying to deduce a topic, will end up not doing so.

But the impact from research is so heavy tailed, with a few star researchers having a lot of the impact, that I think that unless the individual was just particularly set on, “I want to have a job in academia no matter what,” not thinking about impact, that is unlikely to be so much of a cost. If they are in the tail and they were going to be one of those researchers who could have a very large impact, I think that they’re likely to succeed and be able to stay in academia no matter what topic they work on, as long as they’re putting enough attention into doing the work or trying to turn it into respectable papers on the topic that they think is important.

I would far prefer those people who may go on and do really great research, to spend more of their time and earlier thinking about what research is it that we actually need to see.

Robert Wiblin: So, life’s about trade-offs. How should people split their attention between bolstering their academic career and just focusing on the research that’s most important?

Owen Cotton-Barratt: I think that there isn’t going to be one answer to this, because it will depend on a bunch of personal circumstances: how far through their careers people are, and how good the backup options they have are. But in the aid of trying to provide numbers for this, I think that people should spend at least 10 or 20% of their attention on working out what is valuable to be working on and experimenting with that. Probably, early in their career, they should also spend at least 20% of their attention on thinking about how to do things which are valuable for setting up their career to go well later. That’s a pretty big range, but people can work out where they think they should be situated in that with respect to others in the cohort of people who might be taking this approach.

Robert Wiblin: I saw your talk at Effective Altruism Global San Francisco, which was about what Artificial Intelligence does and doesn’t imply for effective altruism. Do you want to describe the main idea that you were promoting there?

Owen Cotton-Barratt: Yes, so the idea here is about how we prioritize given our uncertainty about the nature and the timing of radically transformative Artificial Intelligence technology. Because I think it is appropriate to have a lot of uncertainty about this. If you ask experts, I think the consensus position is that we really don’t know when Artificial Intelligence is coming. It could be that there are some unexpected breakthroughs and we see transformative systems even in the next few years. Or it could be many, many decades.

In light of this, I think it’s worthwhile as a community not putting all our eggs in one basket. Instead, we can think what would be particularly valuable to do on each of these different plausible timelines, and devoting at least some attention collectively to pursuing each of those activities. This is predicated on the idea that there are likely to be low hanging fruit within any given strategy. And so if we’re looking for the best marginal returns, that will mean taking at least some of the low hanging fruit in each of these different possible scenarios.

Robert Wiblin: Okay, so the reasoning is, Artificial Intelligence could be really significant in shaping the future of humanity. It might come soon, it might come late, it might come never I suppose, so there’s a wide range of different scenarios that we need to plan for. Your thinking is, given that there’s hundreds or thousands of people involved in the community, we should kind of split them to work on different projects that would be optimal for different kinds of timelines of when Artificial Intelligence might arise.

Owen Cotton-Barratt: Yeah. I don’t think that we want to commit to a position, which expresses more confidence than we actually should feel or than the experts are generally expressing.

Robert Wiblin: So that means that we should have a very wide kind of confidence distribution, probability distribution about when we should expect artificial intelligence to advance beyond particular levels.

Owen Cotton-Barratt: I think that pretty wide is quite appropriate at the moment. I think that there’s some pressure towards putting extra work into scenarios where it comes unexpectedly soon, relative to what the raw probabilities would imply, because those are scenarios which only people around fairly soon are ever going to be in a position to influence. Whereas for scenarios where the timelines are longer, there is likely to be a larger, more knowledgeable, more empowered community of people in the future, who will be able to give those attention.

Robert Wiblin: Okay, so the logic there is that, even if it’s very unlikely that Artificial Intelligence that’s smarter than humans could come in five or 10 years, if that does happen, then we’re among a pretty small group of people who are alert to that possibility right now and could potentially try to take actions to make that go better than it would otherwise. But if it’s going to arrive in 100 years, well by that stage there’ll be thousands, hundreds of thousands, maybe millions of people who have thought about this and many governments will have taken an interest. Really we’re not so essential in that kind of case.

Owen Cotton-Barratt: That’s correct. You said even if it’s very unlikely; I think that this argument applies down to probabilities of the order of a percent. Maybe a fraction of a percent. It doesn’t apply if we really think it’s vanishingly unlikely, if we think it’s a one in a million chance that it’s coming in five or 10 years. Then I just don’t think this should be on our priority list, but I don’t think it’s appropriate to have that much confidence that it isn’t coming that soon. Technology sometimes makes relatively unexpected breakthroughs.
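
One way to see why the argument holds at a one percent chance but not at one in a million is a toy model in which the value of one extra person working on a scenario is the probability of that scenario divided by the number of people positioned to influence it. This model and every number in it are assumptions made purely for illustration; none of them come from the conversation or the talk, and the function name `value_per_extra_person` is hypothetical.

```python
# Toy model, assumed for illustration only: the marginal value of one more
# person is (probability of the scenario) / (people able to influence it).
# All probabilities and headcounts below are hypothetical.

def value_per_extra_person(probability: float, people_positioned: int) -> float:
    """Crude diminishing-returns estimate of marginal value per person."""
    return probability / people_positioned

# Long timeline: fairly likely, but a large future community will work on it.
long_timeline = value_per_extra_person(probability=0.5, people_positioned=100_000)

# Short timeline at a 1% chance: unlikely, but few people are placed to help.
short_at_one_percent = value_per_extra_person(probability=0.01, people_positioned=100)

# Short timeline at a one in a million chance: neglectedness no longer compensates.
short_at_one_in_million = value_per_extra_person(probability=1e-6, people_positioned=100)

print(short_at_one_percent > long_timeline)     # extra attention looks worthwhile
print(short_at_one_in_million > long_timeline)  # extra attention does not
```

Under these assumed numbers the small group's scarcity outweighs a hundredfold lower probability, but not a probability that is many orders of magnitude lower.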

Robert Wiblin: What kinds of things should people do in these different cases? I imagine that you would adopt pretty different strategies if it’s coming soon versus late. How might they look different in a broad sense?

Owen Cotton-Barratt: Yeah. If it is coming imminently, on the time scale of just a few years, then it’s likely to be largely only people who are already engaged with the research or the industry who are in a position to have much of an effect on this. And so I think that it makes sense from a comparative advantage perspective for these people to be giving more of their attention to the imminent scenarios, even if they think that they’re relatively unlikely.

On a time scale of 15 years or something, people can go and say, “Okay, I’m going to do a masters and then maybe get a PhD and go get a career in this field.” That would be too slow if things were imminent, but is exactly the right kind of thing on these medium timescales. We can try to sketch out now the structure of the things that people should be doing over a decade or two. As we get further into the future, it is less likely that the people who we’re talking to now are going to be in the key positions.

Robert Wiblin: You and I might have died by that point.

Owen Cotton-Barratt: Right, maybe the researchers who do it haven’t been born yet, and that’s true even if we’re looking 30, 40 years out. It becomes more important to try and build good processes and good intellectual infrastructure to promote the best ideas so that they rise to the top, so that we don’t forget important insights, and so that we keep attention on the things that matter and don’t get distracted by ideas that in fact don’t have a firm enough foundation.

Robert Wiblin: If you’re an undergraduate listening to this and you would like to try to help with the issue of positively shaping the development of Artificial Intelligence, then probably there’s not a whole lot you can do in the short timeline scenario, where Artificial Intelligence appears in less than 10 years. It’s going to take you a while to graduate, and a while to train up and get relevant skills and credibility. Should you just largely ignore that and think more about the medium term cases, where you have time to do enough training to then be around and in relevant positions when the crunch time comes?

Owen Cotton-Barratt: I think that’s probably right. I think that most undergraduates are going to be more likely to have a positive impact in scenarios where things are at least 10 years out. Maybe more directly themselves 10 to 25 years out or more indirectly by helping build communities, which are going to get things right over even slightly longer time scales.

Robert Wiblin: Whereas I suppose you or I are already around and kind of aware of the issues and unfortunately I don’t have any technical training, but possibly there are things that I could try to do that might make people more cautious about deploying an Artificial Intelligence that’s smarter than humans within the next five years.

Owen Cotton-Barratt: Plausibly, although if we look at comparative advantage you seem to be in a pretty good position to help steer this community of talented altruistically minded people that’s springing up. I, in a sense, think that that’s just probably the best thing for you to continue doing, even though it’s aiming at perhaps slightly longer time scales.

Robert Wiblin: Before we move on, are there any things you want to clarify about this argument so listeners can actually apply it in their own lives?

Owen Cotton-Barratt: Yeah. I think that one thing, which is relevant here is that, there’s just a lot we don’t understand about Artificial Intelligence, and that means that if you’re thinking about trying to work to help get good outcomes from this, you need to be the type of person who is happy operating in a domain, where there’s a lot we don’t understand. Trying to find things which are robustly good to do anyway, and try and untangle some of what’s going on there.

I think that some people probably hear this description and think, “Oh that sounds yummy,” that’s just like intellectually really fun and feels appealing and feels like the type of thing they’re good at. I am more excited about people like that getting involved. I think that they will have a skill set, which is often better. Also, I think that personal motivation does help people to do important work and this will go better for such people.

Robert Wiblin: This kind of ties back to your point earlier about how it could be really important for people who can, to specify the problem more clearly, because that kind of requires a particular kind of intellectual audacity that is perhaps not so common. Not even so common in academia, where people maybe want to work on existing problems that have been well established and understood and just like add another grain of sand to the pile.

Owen Cotton-Barratt: Right, and it ties back to the thing we were discussing at the start about researchers working on things that are interesting versus dull to them, where I think people often make important progress when they find something fascinating.

Robert Wiblin: I think that the term I’ve heard for this is a pre-paradigmatic field, is that right?

Owen Cotton-Barratt: Yeah. People are starting to lay out research agendas within Artificial Intelligence safety, of questions that we could try to answer that might help get us towards an eventual solution. But I think that we’re still experimenting with these research agendas. I think we don’t have a consensus of yes, this is what we need to be doing, and so it is valuable to explore and make more progress on these agendas. But we need more people who can think about what are the relative merits of these different research agendas? What are they missing? What other questions should we be asking?

Robert Wiblin: I guess they have to be able to pick up mistakes even though they might be very hard to see, because you’re not actually implementing anything yet. The machine isn’t breaking down, you’re just going down a dark alleyway.

Owen Cotton-Barratt: Right. It’s almost certainly easy to wrap ourselves up in our confusion and think that we’ve solved something when in fact we haven’t. A stance of curious skepticism may often be the most useful one here.

Robert Wiblin: Do you think there’s any examples of blind alleyways we’ve gone down already, within thinking about Artificial Intelligence safety?

Owen Cotton-Barratt: Okay, this is a personal view of something where I don’t think there’s yet a consensus that it is a blind alley, but early on when people were thinking about safety, there was an idea that, because we expect things to be rational agents, we can use the economic theory and say their goals should be describable by a utility function. Therefore, the correct angle of attack is to think about what utility functions are appropriate to have and think about how can we specify good utility functions for goals.

Now it seems to me some of the most promising approaches that people are proposing towards Artificial Intelligence safety dispense with this altogether. They more indirectly specify what is valuable to aim for, and behavior may eventually be representable as a utility function. But it’s unclear that analyzing it at the level of the utility function is the most useful approach. I still think there are useful insights to get from that, so I don’t want to describe it quite as a blind alley. But I think that it has had a larger share of attention than it deserves.

Robert Wiblin: The idea here is that an Artificial Intelligence might be messy a bit in the way that human brains are? In a sense we have a utility function, but that’s just like an emergent feature of a brain that’s making decisions in a very complicated way. If you try to understand humans by specifying everything down in the utility function, well we don’t even know how to do that now. It could be the case that even after we design an intelligence that’s superhuman, this utility function may not appear anywhere at all in the code. It will just be an emergent property of the entire system.

Owen Cotton-Barratt: That’s correct. It doesn’t even need to be the case that the system is messy to have this property. It might be that the system is quite clean and elegant, but it’s cast in ways, which make it simple and elegant with respect to a different way of slicing it up. The utility function, which is implied, is very complicated and messy. Or it might be that it is a messy system and that there isn’t a particularly simple, clean way to describe it.

Robert Wiblin: I’ve heard that neural nets can do quite impressive things sometimes, but we often don’t have any understanding of how the internal pieces are working.

Owen Cotton-Barratt: Right, so neural nets are a tool which lets us get approximations to functions. Some functions might be effectively doing intelligent tasks. Out of a fairly black box process, we just say, “Hey, we want something which does something like this,” and it produces an answer. If your whole system is just a neural net, then we don’t have much of a sense of what’s going on internally. People are developing tools to get somewhat more insight into that, but we’re a long way off having a kind of complete understanding and it may be that it’s just intractable to get that.

It’s possible that systems which are built out of neural nets could have more high level transparency and simplicity, even if there are individual components where all we know is that they’re going to have this type of behavior.

Robert Wiblin: It’s kind of like humans have some process for making sense of the things that are coming into their eyes – visual processing. Then it spits out some concepts, but then we have kind of a higher-level thought process that is more scrutable, where we actually use language to try to think things through.

Owen Cotton-Barratt: Right, or if I go and use a computer, I can understand a lot of what’s happening at the level of, “Well, this was a tab in my browser,” without necessarily understanding it at the level of the machine code that’s running kind of many layers of abstraction down.

Robert Wiblin: I think one of the organizations that has focused more on this rational agent model is the Machine Intelligence Research Institute in Berkeley right?

Owen Cotton-Barratt: Mm-hmm (affirmative).

Robert Wiblin: If I recall correctly, you actually donated to them last year, that’s even though you think their research agenda has perhaps received more attention than it deserves. Do you want to explain that?

Owen Cotton-Barratt: Yeah. When I try and form my own internal views of which research agendas are particularly valuable to be pursuing, I am not convinced that the highly reliable agent design agenda that MIRI has is ideal, for something like the reasons I was just pointing to. It gets a bit complex and nuanced. I’m particularly excited to see more investigation of approaches which try to look at the whole problem of Artificial Intelligence safety and give us a paradigm for working on that. Paul Christiano has been doing work in this direction and so far not that many other people have.

I know and have spent hours talking to several of the researchers at MIRI and I have a good opinion of them as researchers. When we talk about problems, I think yes, they have good ideas, they have good judgment about how to go about these aspects of it. Sometimes they tell me, “Look, this feels like it’s important, and it seems to us that this is going to be a crucial step towards solving the Artificial Intelligence safety problem,” even though they can’t fully communicate the reason to me in a way that feels as compelling to me as it does to them. Then I say, okay, maybe it seems to me that they’re wrong, but I only have my own judgment of this, which forms that impression.

It seems to them like they’re right and I have another judgment, which is that hey, they’re people who think carefully about things and try to reach correct answers. Perhaps they’re right about this, so that makes me think that it’s in the bucket of research that is worth pursuing.

When I was donating last year, I was looking around at the different types of research which were being done and which people were proposing to pursue. It seemed like most of the areas around Artificial Intelligence safety weren’t very funding limited. There was more of a bottleneck of people who might be really excellent researchers, who were willing to go in and start working on it. When such people existed, they could get funding.

On the other hand, MIRI had found people who seemed technically impressive and they said, “We are interested in hiring more of these people. We’d like them to come and work with us on our research agendas,” but they were constrained by funding and this made it look valuable to fund them.

Robert Wiblin: I guess when you have an area like Artificial Intelligence safety research, where there’s just a lot of money sloshing around – there’s a lot of people who would like to fund something here – do you think what happened here was just a result of the fact that people were trying to fund the stuff that looked robustly good? Then all that’s left is stuff that’s kind of a little bit sketchy or like not everyone is convinced that this is actually so worthwhile undertaking, and so you kind of had to go down the list a bit. All of the best stuff was funded and now you’re looking at something like, “I’m not so convinced about this,” but a minority of people, or some reasonable fraction of people think that it’s worth funding. Even though I am perhaps not one of them, I’m going to put my money there anyway.

Owen Cotton-Barratt: Yeah, that feels like a little bit too much of a caricature of the situation to me. I think that another feature, which is in favor of the thinking that MIRI is doing, is that the researchers there are trying to look at the whole problem to some extent and say, “What are we going to need to eventually do, and what do we therefore need to be working on?” It’s not clear whether this will turn out to be valuable, but it isn’t crazily fringe; it isn’t like there’s just a very small probability that this turns out correct.

I think that some of the other types of research, which have been having an easier time getting funding, are coming out of traditional machine learning. Machine learning is an area which is pretty hot at the moment and just generally has a lot of funding. I think that there is valuable work to be done in getting a better understanding of the pieces that we might assemble into more advanced systems later. If we were only doing research of the type of ‘work out what the pieces are doing’, that would also seem like it was leaving gaps.

Robert Wiblin: Too narrow a focus?

Owen Cotton-Barratt: Yeah.

Robert Wiblin: For the community as a whole?

Owen Cotton-Barratt: That’s right. Too narrow a focus, and again I don’t want to imply that everybody just thinking about the machine learning end of things has that narrow focus. It seems like it is hard to predict exactly where important ideas are going to come from in research. I think there is value in supporting people to do exploration, where they’re at least making a genuine effort to look for ideas that seem important to them. If everybody recognized an idea was important already, probably we’d already have the idea and so collectively we want to be diversifying a bit and exploring different things that seem perhaps promising to different people. Let them go and see if they can produce a more solid version of it. See if they can find something particularly valuable there.

Robert Wiblin: MIRI has received quite a lot of donations over the last 12 months, so I guess you are a little bit on the bleeding edge. People saw what you were doing and decided to copy. Where do you think you’ll give this year? Have you done that analysis yet or is it yet to come?

Owen Cotton-Barratt: I haven’t done that analysis yet, yeah. MIRI recently got a largish grant from Open Philanthropy. This makes it feel less important at the margin as a funding target to meet at the moment. I might do something where I look for small idiosyncratic opportunities to donate, because I’m fairly enmeshed in the research community. It might just be that I see things and I think, “Look, this is a good use of money. I will personally put my money towards this.” I’m going to do thinking about this a bit later in the year.

Robert Wiblin: Is it the case that you could just run across someone doing a postdoc and you could kind of pay them to go in one direction rather than another? Or help them to buy some more time because they don’t have to keep a part-time job at Burger King or something to pay the bills?

Owen Cotton-Barratt: That would be a bit extreme, but yeah, directionally that would be great.

Robert Wiblin: That’s the kind of thing that is hard for a big foundation to find, because it’s just, it’s too small for them to even be thinking about.

Owen Cotton-Barratt: Absolutely.

Robert Wiblin: Yeah, okay. I wonder if that’s something that more people who are intensely part of the community should do: just give money to someone who they think can make good use of it, who otherwise isn’t going to get it. It doesn’t even have to be a registered nonprofit. They can scarcely apply for a grant for $3,000 to a foundation.

Owen Cotton-Barratt: Might. I chatted last year to Jacob Steinhardt and recommended he try something like this with his personal giving. At least with a part of it, because he’s also in a position where he’s quite embedded in the research. I haven’t chatted to him recently. I’m interested to hear how that’s gone.

Robert Wiblin: All right, well we should wrap up soon, but it’s kind of a last question. Do you know of any vacancies at the Future of Humanity Institute or other places that are doing similar research to what you’re currently working on? If someone’s listening and they found this really interesting and they want to work on similar problems.

Owen Cotton-Barratt: Yeah. I think that some vacancies open in an ongoing way. It can be an area that’s hard to get into, because it’s generally not stuff that people go and do PhDs in. That means that people don’t have research experience, which means it can be hard to get research jobs. This ties back to the things we were talking about earlier about field-building and how people should divide their attention. It’s actually something I’ve been quite concerned about over the last year.

At the moment I’m just in the process of trying to set up a program within the Future of Humanity Institute, where we can hire people with or without PhDs as researchers to explore ideas. To exercise their judgment about what is important to work on, to get feedback on that, to try and train that skill and to test what particular high impact research questions they may be a bit of a particularly good fit for.

My hope is that for people who are perhaps good at operating in messy pre-paradigmatic fields, this will be a good space to explore even before getting a PhD. If they take more time to think earlier about topics, it’s more likely that they can then choose a PhD topic that they think is extremely valuable. They can get a PhD in precisely the thing that seems important to them and be set up for relevant expertise. I think that can also be helpful for academic careers, where people are often judged on how much they’ve done since they got their PhD. This means it’s more costly to take a break and think about different topics after getting a PhD than beforehand.

Robert Wiblin: My guest today has been Owen Cotton-Barratt. Thanks for coming on the podcast, Owen.

Owen Cotton-Barratt: Thanks Rob.

Robert Wiblin: Just a reminder that if you want to go to Effective Altruism Global San Francisco, or in Australia or the Netherlands, you need to apply soon at eaglobal.org.

The 80,000 Hours Podcast is produced by Keiran Harris.

Thanks for joining, talk to you next week.
