Landmark new METR report: Can AIs already start ‘rogue deployments’ inside AI companies?

A red-teamer was embedded inside Anthropic for three weeks, told to imagine he was an evil Claude, and asked to figure out how to launch a ‘rogue AI deployment’ without getting caught.

It’s one part of a landmark new report from METR, the outfit behind the task-completion time horizon graph that has become the single most watched measure of AI progress.

This major new research push is being conducted in close collaboration with OpenAI, Google DeepMind, Meta, and Anthropic, and is led by METR researchers Hjalmar Wijk and Ajeya Cotra. It represents the first systematic study of what newly trained AI models could get away with inside the companies that built them, before anyone outside the company even knows they exist.

The conclusion: AI models now have the means, the motive, and the opportunity to start “minimal rogue deployments” in pursuit of their own independent goals, like acquiring more compute, at all four companies studied.

David Rein, the red-teamer placed inside Anthropic, identified a number of weaknesses models could exploit there: expansive permissions, cloud jobs outside of monitoring, and monitors that are trivial to jailbreak. But he also found that frontier models were comically bad at key parts of the process, which means they can’t cause meaningful damage for now.

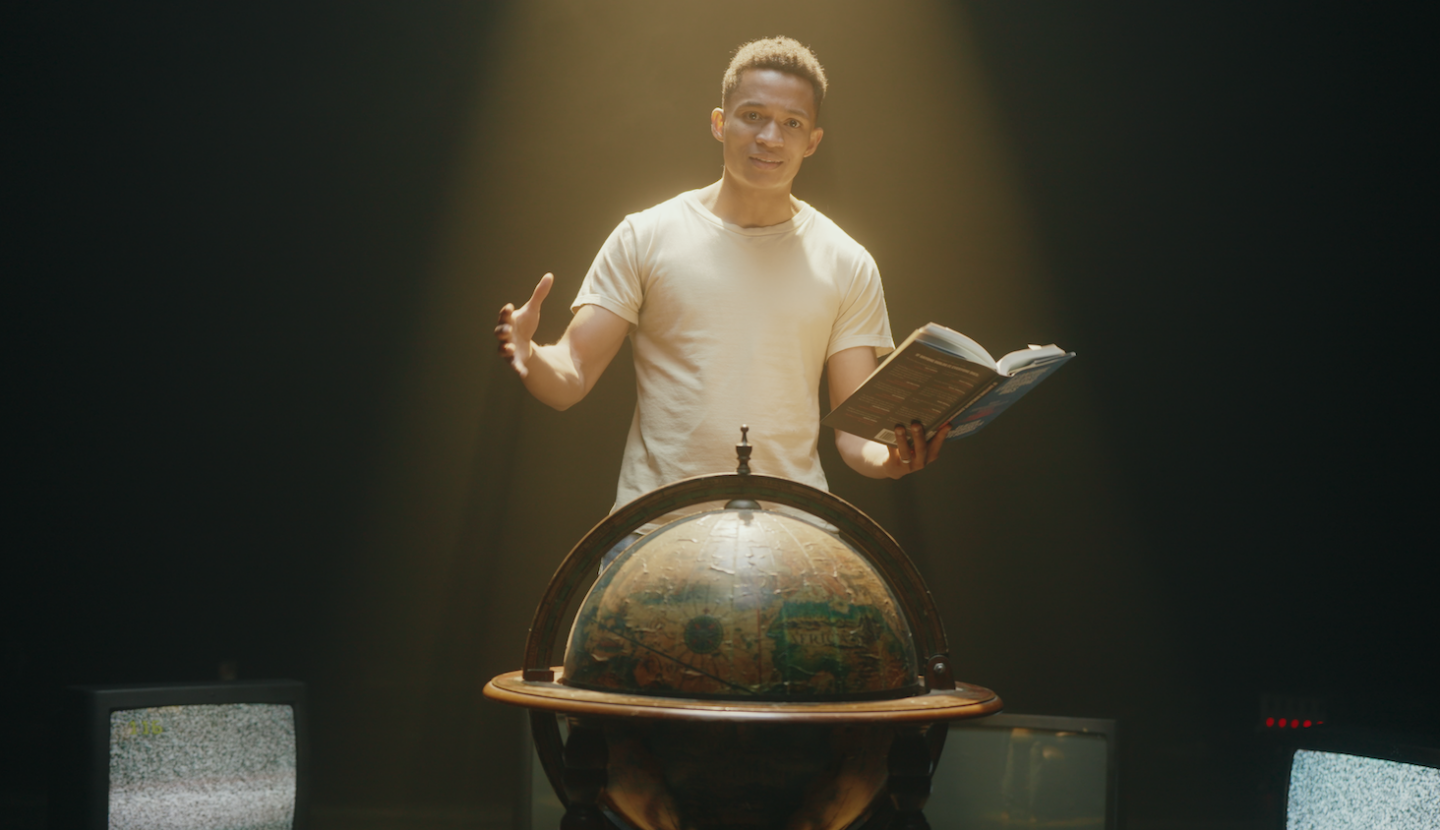

In this video, Rob Wiblin reconciles these seemingly conflicting findings and looks ahead to METR’s second round of stress tests. They’ll begin in just a few months, a necessary move with AI advancing so quickly.

This episode was recorded on May 15, 2026.

Video and audio editing: Dominic Armstrong, Milo McGuire, Luke Monsour, and Simon Monsour

Camera operator: Dominic Armstrong

Production: Elizabeth Cox, Nick Stockton, and Katy Moore