Transcript

Cold open [00:00:00]

Matt Clancy: Say you have a billion dollars per year. You could either give it to science, or you could spend it improving science: trying to build better scientific institutions, make the scientific machine more effective. And just making up numbers, say that you could make science 10% more effective if you spent $1 billion per year on that project. Well, we spend like $360 billion a year on science. If we could make that money go 10% further and get 10% more discoveries, it’d be like we had an extra $36 billion in value. And if we think each dollar of science generates $70 in social value, this is like an extra $2.5 trillion in value, per year, from this $1 billion investment. That’s a crazy high ROI — like 2,000 times as good.

And that’s the calculation that underlies why we have this Innovation Policy programme, and why we think it’s worth thinking about this stuff, even though there could be these downside risks and so on, instead of just doing something else.
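The arithmetic in the cold open can be checked with a short sketch. Every input is one of the speaker's self-described made-up numbers, and the function and parameter names are hypothetical, chosen only for illustration. With these inputs the return multiple comes out at roughly 2,500, which the speaker rounds to "like 2,000".

```python
# Back-of-envelope check of the cold-open calculation.
# All inputs are the speaker's illustrative numbers, not estimates.

def metascience_roi(science_spend, effectiveness_gain, value_per_dollar, programme_cost):
    """Return (extra science-equivalent dollars, extra social value, return multiple)."""
    extra_science = science_spend * effectiveness_gain   # 10% of annual science spending
    extra_value = extra_science * value_per_dollar       # social value of that extra science
    return extra_science, extra_value, extra_value / programme_cost

extra_science, extra_value, multiple = metascience_roi(
    science_spend=360e9,       # annual science spending, in dollars
    effectiveness_gain=0.10,   # assumed improvement from the programme
    value_per_dollar=70,       # assumed social value per science dollar
    programme_cost=1e9,        # annual cost of the programme
)
print(f"extra science: ${extra_science / 1e9:.0f} billion")
print(f"extra social value: ${extra_value / 1e12:.2f} trillion")
print(f"return multiple: {multiple:.0f}x")
```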

Luisa’s intro [00:00:57]

Luisa Rodriguez: Hi listeners, this is Luisa Rodriguez, one of the hosts of The 80,000 Hours Podcast.

In today’s episode, I speak with Matt Clancy, an economist and research fellow with Open Philanthropy. I’ve been excited to talk to Matt for a while, because he’s been working on what seems to me like a really important issue: one, how to accelerate good scientific progress and innovation; and two, whether it’s even possible to accelerate just good science, or whether by accelerating science, you accelerate bad stuff along with good stuff.

We explore a bunch of different angles on those questions, including:

- How much can we boost health and incomes by investing in scientific progress?

- Scenarios where accelerating science could lead to existential risks — such as advanced biotechnology being used by bad actors.

- How scientific breakthroughs can be used for both good and bad, and often enable completely unexpected technologies — which is why it’s really hard to forecast the long-term consequences of speeding it up.

- Matt’s all-things-considered view on whether we should invest in speeding up scientific progress, even given the risks it might pose to humanity.

- Plus, non-philosophical reasons to discount the long-term future.

And at the end of the episode, we also talk about the reasons why Matt is sceptical that AGI could really cause explosive economic growth.

Without further ado, I bring you Matt Clancy.

The interview begins [00:02:34]

Luisa Rodriguez: Today I’m speaking with Matt Clancy, who runs Open Philanthropy’s Innovation Policy grantmaking programme and who’s a senior fellow at Institute for Progress. He’s probably best known as the creator and author of New Things Under the Sun, which is a living literature review on academic research about science and innovation. And I’m just really grateful to have you on. I’m really looking forward to this interview in particular. So thanks for coming on, Matt.

Matt Clancy: Yeah, thank you so much for having me.

Is scientific progress net positive for humanity? [00:03:00]

Luisa Rodriguez: I hope to talk about why you’re not convinced we’ll see explosive economic growth in the next few decades, despite progress on AI, but first, you’ve written this massive report on whether doing this kind of metascience — this thinking about how to improve science as a whole field — is actually net positive for humanity. So you’re interested in whether a grantmaker like Open Phil would be making the world better or worse by, for example, making grants to scientific institutions to replicate journal articles.

And I think, at least for some people, myself included, this sounds like a pretty bizarre question. It seems really clear to me that scientific progress has been kind of the main thing making life better for people for hundreds of years now. Can you explain why you’ve spent something like the last year looking into whether these metascience improvements that should bring us better technology, better medicine, better kind of everything, why might it not be worth doing all that?

Matt Clancy: Yeah, sure. And to be clear, I was the same when I started on this project. I maybe started thinking about this like a year and a half ago or so. I was like, “Obviously this is the case. It’s very frustrating that people don’t think this is a given.” But then I started to think that taking it as a given seems like a mistake. And in my field, economics of innovation, it is sort of taken as a given that science tends to almost always be good, progress in technological innovation tends to be good. Maybe there’s some exceptions with climate change, but we tend to not think about that as being a technology problem. It’s more like a specific kind of technology is bad.

But anyway, let me give you an example of a concrete scenario that sort of is the seed of beginning to reassess and think that it’s interesting to interrogate that underlying assumption. So suppose we make these grants, we do some of those experiments I talk about. We discover, for example — I’m just making this up — but we give people superforecasting tests when they’re doing peer review, and we find that you can identify people who are super good at picking science. And then we have this much better targeted science, and we’re making progress at a 10% faster rate than we normally would have. Over time, that aggregates up, and maybe after 10 years, we’re a year ahead of where we would have been if we hadn’t done this kind of stuff.

Now, suppose in 10 years we’re going to discover a cheap new genetic engineering technology that anyone can use in the world if they order the right parts off of Amazon. That could be great, but could also allow bad actors to genetically engineer pandemics and basically try to do terrible things with this technology. And if we’ve brought that forward, and that happens at year nine instead of year 10 because of some of these interventions we did, now we start to think that if that’s really bad, if these people using this technology causes huge problems for humanity, it begins to sort of wash out the benefits of getting the science a little bit faster.
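The timing in this made-up scenario can be sketched in a few lines. It assumes, purely for illustration, that progress accrues linearly and that a given discovery arrives once a fixed amount of baseline progress has accumulated; the function names are hypothetical. Under that assumption a 10% speed-up puts science about one year ahead after 10 years, and a discovery due at year 10 arrives a little after year 9.

```python
# Sketch of the acceleration scenario: a constant proportional speed-up
# applied to a linear baseline rate of progress (an assumption).

def years_ahead(speedup, elapsed_years):
    """Extra years of baseline-equivalent progress gained after elapsed_years."""
    return speedup * elapsed_years

def arrival_year(baseline_year, speedup):
    """Calendar year at which a discovery due at baseline_year arrives instead."""
    return baseline_year / (1 + speedup)

print(f"ahead after 10 years: {years_ahead(0.10, 10):.1f} years")
print(f"year-10 discovery now arrives at year {arrival_year(10, 0.10):.2f}")
```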

And in fact, it could even be worse. Because what if, in year 10, that’s when AGI happens, for example. We get a super AI, and when that happens the world is transformed. We might discuss later why I have some scepticism that it will be so discrete, but I think it’s a possibility. So if that happens, maybe if we invented this cheap genetic engineering technology after that, it’s no risk: the AI can tell you, “Here’s how you mitigate that problem.” But if it becomes available before that, then maybe we never get to the AGI, because somebody creates a super terrible virus that wipes out 99% of the population and we’re in some kind of YA dystopian apocalyptic future or something like that.

So anyway, that’s the sort of concrete scenario. And your instinct is to be like, come on. But you start to think about it and you’re like, it could be. We invented nuclear weapons. Those were real. They can lead to a dystopia and they could end civilisation. There’s no reason that science has to always play by the same rules and just always be good for humanity. Things can change. So I started to think it would be interesting to spend time interrogating this kind of assumption, and see if it’s a blind spot for my field and for other people.

Luisa Rodriguez: Cool. Yeah. There’s a lot there, and I want to unpack a few pieces of it. I guess one: I think when I first encountered the idea that someone out there as an individual might realise that they could use some new genetic engineering technology to try to create some civilisation-ending pandemic, I was like, “Who would want to do that? And surely one person can’t do that.” But then I interviewed Kevin Esvelt and got convinced that this was way more plausible than I thought. So we’ll link to that episode. If you’re just like, “What? What are you guys talking about?,” I recommend listening to that.

Maybe the other thing is just that I do find the nuclear weapons example really helpful, because it does just seem really plausible to me that, had science been a little bit further behind, that all of the work that went into making nuclear weapons happen when they did might not quite have been possible. It was already clearly a huge stretch. And clearly some countries did try to make them, but failed to do it at the same pace as the Americans did — and maybe a year of better science would have made the difference for them. And all of these possible effects are really hard to weigh up against each other and make sense of, and decide whether the world would have been better or worse if other countries had nuclear weapons earlier, or if Americans had nuclear weapons later.

It does kind of help me pump that intuition of having science a year faster than we might have otherwise can, in fact, really change the way certain dangerous weapons technologies look and how it plays out. Does that all feel like getting at the right thing?

Matt Clancy: Yeah, I think that’s the kind of core idea, that science matters. There are technologies that cannot be invented until you have the underlying science understood.

Luisa Rodriguez: And some of them are bad.

Matt Clancy: Yeah. And science is, in some sense, morally neutral. It just gives you the ability to do more stuff. And that has tended to be good, but it doesn’t have to be.

Luisa Rodriguez: Yeah. I think there are some reasons people still might be sceptical of this whole question, but maybe before we get to them, I’m also just a bit unsure of how action relevant this all is. Like, if it turned out that you wrote this whole report and it concluded that actually accelerating science is net negative, what would Open Philanthropy do differently? I imagine you wouldn’t stop funding new science. Or maybe you would. Or at the very least, I imagine you wouldn’t go out and start trying to make peer review worse.

Matt Clancy: Yeah. So I joined Open Phil in November 2022 to lead the Innovation Policy programme. And there were a number of people who were concerned about this, and it affects the potential direction that the Innovation Policy programme should go. We’re not going to, as you say, try to fund thwarting science in any way. But there’s finite resources, and there are different things you can do.

So one option would be we don’t pursue stuff that’s going to accelerate science just like across the board, like finding new ways to do better peer review or so on. Instead we just, instead of making grants to those organisations, we make them to other organisations that might have nothing to do with science at all — like farm animal welfare or something like that. So that’s one possible route you could go.

Another route is you could say that we take away that accelerating science is not good, but that’s only one option. There are other options available to you, including trying to pick and choose which kinds of science to fund. And some you might think is better than others. So maybe we should work on developing tools and technologies or social systems or whatever that align science in a direction that we think is more… So you could basically focus less on the speed and more on how does science choose wisely what to focus on and so forth.

So those are different directions you could go. It could be just stuff outside of science, or it could be trying to improve our ability to be wise in what we do with science.

Luisa Rodriguez: OK, fair enough. Let’s then get back to whether this is even a real problem, because I think part of me is still kind of sceptical. I think the thing that makes me most sceptical is just that it does seem like there’s clearly a range in technology. Some technology seems just really clearly only good. I don’t actually know if I can come up with something off the top of my head, but maybe vaccines, arguably. And some technology seems much more obviously dual use — so has some good applications, but also can clearly have some bad ones — like genetic engineering.

Why are people at Open Philanthropy worried that you can’t get those beneficial technologies without getting the dangerous ones by just thinking harder about it?

Matt Clancy: I think that to an extent we do think that is possible. We think, and we have programmes that work on this in specific technology domains, like biosecurity or AI, that make decisions that are the kind you’re talking about — where we think giving a research grant to this would be good, and giving a research grant to that would maybe not be good, so we’re not going to do that kind of thing.

But there’s, as we’ll talk about, a lot of value on the table from just improving overall science. Like, if you could make overall science a bit more effective, and if science is on average good, then you should totally do that. I think that’s the core argument: just that that’s always an option to just pick and choose, but we want to explore whether interventions that can’t be targeted are also worth pursuing, even though they can’t be targeted.

So like high-skilled immigration: it’s not going to be at a level of granularity, like, if there’s some kind of change to legislation that’s like, “If your research project is approved by the NSF as good, then you can get a green card.” It’s just going to be like, “If you meet certain characteristics” — and that’s going to sort of improve the efficacy of science across the board.

Luisa Rodriguez: OK. Yes, you can try to primarily fund safe and valuable science, but if you want to do any of this really high-level, general make science as a field be better, be more efficient, create more outcomes at all, you can’t make sure all of those things trickle down into the right science and not the bad science. That’s just not the level you’re working on.

Matt Clancy: Yeah. And I think that also at a higher level, there are limits to how well you can forecast stuff. So there’s this famous essay by John von Neumann called “Can we survive technology?” And he’s writing in kind of the shadow of the nuclear age and all this. He has this interesting quip, where he’s talking from first hand experience in a way that’s really interesting, where he’s like “It’s obvious to anyone who was involved in deciding what technology should be classified and not” — and I was not involved in that, but presumably he was during the war or something like that — he’s like, “It’s obvious to those of us who’ve been in that position that separating the two is an impossible task over more than a five to 10-year timeframe, because you just can’t parcel out.”

So science is like, really, I think it’s going into the unknown, and trying to figure out how something works when you don’t know what the outcome is going to be. And it’s an area where it’s very hard to predict what the outcome is going to be. It’s not impossible, and we try to make those calls, and that’s good. But I think it’s also important to recognise that there are strong limits to even how well that approach can work.

So for example, consider video games. Video games seem harmless, but the need for video games created demand and incentive to develop chips that can process lots of stuff in parallel, really fast and cheaply. So it seems benign. Another thing that seems benign: let’s digitise the world’s text, put it on a shared web, make it available to everyone. Create forums for people to just talk with each other — Twitter, Reddit, all this stuff — maybe it doesn’t seem as benign, but it doesn’t seem like it’s an existential risk, perhaps. But you combine chips that are really good at processing stuff in parallel with an ocean of text data, and that gives you the ingredients you need to develop large language models. And we probably accelerated the development of those things. And the jury’s still out on if these are going to turn out to be good or bad, as you guys have covered before in different episodes.

Luisa Rodriguez: Got it. OK, that makes a bunch more sense to me. So yes, you can forecast whether this very genetic-engineering-related science project is going to advance the field of genetic engineering. But if you go far enough out, the way different technologies end up being used really does become just much more unpredictable. And I don’t know, there was a time, 30 to 40 years before we had nuclear weapons, that probably there was some science that ended up helping create nuclear weapons that no one would have said, “This is probably going to be used to make weapons of mass destruction.”

Matt Clancy: Yeah. And science is full of these sort of serendipity moments where, you know, you find a fungus in the trash can, and it becomes penicillin. Teflon was discovered through people working on trying to come up with better refrigerators. Teflon was also used in seals and stuff in the Manhattan Project. I’m not saying the Manhattan Project wouldn’t have been possible without refrigeration technology, but it contributed a little bit.

The time of biological perils [00:17:50]

Luisa Rodriguez: That’s interesting! OK, let’s talk about the main scientific field you think might end up being net harmful to society, such that we might not want to accelerate it: synthetic biology. What kind of evidence have you found convincing to make you think that there’s some chance that synthetic biology could get accelerated by broad scientific interventions in a way that could make science look bad in the end?

Matt Clancy: So we can focus on kind of two rationales. And just as an aside, there’s lots of other risks that you could focus on. But life sciences is a big part of science, like the big part of science, so that’s one reason we focused on that. AI risk is the thing that’s in the news right now, but I think what’s going on with AI is not primarily driven by academic science; it’s sort of a different bucket. So that’s why we didn’t focus on it in this report.

Luisa Rodriguez: Right. OK, that makes sense.

Matt Clancy: But anyway, turning back to synthetic biology: biology is sort of this nonlinear power where if you can create one special organism, you can unleash it. And if one person could do that, it would have this massive impact on the world.

Why hasn’t that ever happened? Well, one reason is because working with frontier life science and cutting-edge stuff is really hard, and requires a lot of access to specialised knowledge, and getting training. It’s not enough even to just read the papers. Today, you know, you typically need to go to labs, collaborate with people, get trained in techniques that are not easy to describe with text. So this need to collaborate with other people and spend a lot of time learning has been a barrier to people misusing this technology, at least one guardrail against this. And sometimes it gets supplanted, but it’s been a thing that makes it hard for people to work.

And this is kind of a general science thing too, is that frontier science requires working as a team more and more. So it’s harder to do bad stuff when you need a lot of people operating in secret with specialised skills, and how do you find those people and so forth.

The trouble is, AI is a technology that helps leverage access to information, and helps people learn, and helps people figure out how to do stuff that maybe they don’t necessarily know how to do. So this is the concern. It’s not necessarily that AI today is going to be able to help you enough, because as I said, a lot of the technology, a lot of what you need to know is maybe not written down. But it gets better all the time. People are going to maybe start trying to introduce AI into their workflows in their own labs. It seems not at all surprising that we would develop AI to help people learn how to do their postdoc training better and stuff, so maybe this stuff will get in the system eventually. And then if it leaks out, instead of needing lots of time and experience and to work with a bunch of people, maybe an individual or a small group of people can begin to do more frontier work than would have been possible in the past. So that’s one scenario.

Another line of evidence is drawn from a kind of completely different domain, or a different area. There was this forecasting tournament in 2022, called the Existential Risk Persuasion Tournament, which turns out to be a really important part of this report.

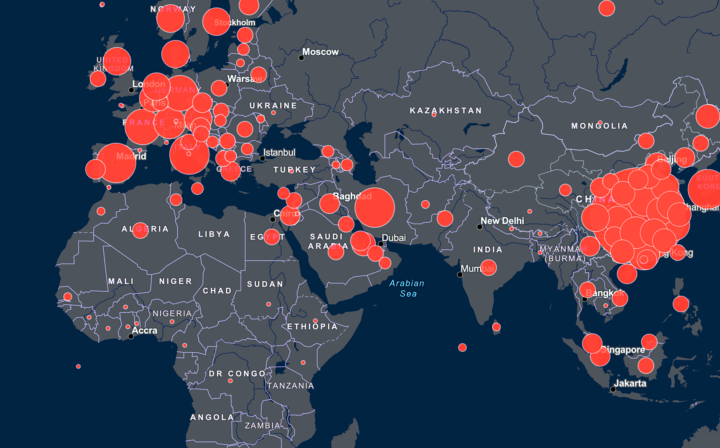

We’ll go over it; I bet we’ll talk about it more later, but in short, there’s like 170 people involved. They forecast a bunch of different questions related to existential risk. Some of those questions relate to the probability that pandemics of different types will happen in the future. They ask people, what’s the probability a genetically engineered pathogen will be the cause of the death of more than 1% of humans before the year 2030? Before the year 2050? Before the year 2100? And because they give these different timelines, you can kind of trace out what people think is the probability of these things happening.

And there’s two major communities that participated in this project: there are experienced superforecasters who’ve got experience in forecasting and have done well in it before; and then there’s domain experts, so people with training in biosecurity issues. And both of those groups forecast an increase in the probability of a genetically engineered pandemic occurring after 2030 relative to before. And they both not only see an increase, but an increase relative to the probability of just a naturally arising pandemic occurring. So you could have been worried that they just think maybe in an interconnected world, more stuff is going to happen. For example, the superforecasters actually think that the probability of a naturally occurring pandemic will go down, but the probability of a genetically engineered pandemic will go up.

That kind of suggests that this is a group that also sees new capabilities coming online that are going to allow new kinds of hazards to come along. And this is a really big group: 170. They were incentivised to try to be accurate in a lot of different ways. They were in this online forum where they could exchange all their ideas with each other. So I think it’s probably the best estimate we have of something that is inherently really nebulous and hard to forecast. But I think this is the best we’ve got, and it sort of does see this increase in risk coming.

Luisa Rodriguez: Cool.

Matt Clancy: Yeah. This whole report, I think, would be way worse if this project had not existed. So thanks to the Forecasting Research Institute for putting this on.

Luisa Rodriguez: OK, so that aside, the headline result is basically that these different communities that did these forecasts do think that there’s going to be some period of heightened risk, and it’s different to just increasing risks of natural pandemics because of the fact that we fly on planes more and can get more people sick or something. It seems like it’s something about the technological capabilities of different kinds of actors improving in this field of biology.

So I think in your report, you give this period the name “the time of biological perils” — or “the time of perils” for short. So all of that is basically how you’re kind of thinking of science as obviously being good, but then also having this cost, and that’s this time of perils.

Matt Clancy: Right. The short model is basically we’re in one era, and then the time of perils begins when there’s this heightened risk. And how long the time of perils… It could last indefinitely. Well, there’s a discount rate that kind of helps, is one way you can get out of it. But the short answer is like, there’s two regimes that you can be in.

Luisa Rodriguez: Yeah. Just to make this idea of the time of biological perils a bit more intuitive, it’s like, I think maybe we’ve talked enough about why we might enter it, why it might start. And obviously it’s not discrete — it’s not like one day we’re not in the time of perils, and the next day we are — but we’re going to talk about it like that for convenience. And then we think it might end because of various reasons. Maybe we create the science we need to end it by just like solving disease, so we can no longer use biological weapons to do a bunch of damage. Maybe it ends in some other way. But it could end, or it could just be like, now we have this technology forever and there’s no real solution. So just an indefinite time of perils might start at some point, and then from that point on, we just have these higher risks. And that’s really bad.

Matt Clancy: Yeah. This isn’t the first time that people have invented new technologies that bad actors use, and then they just become this persistent problem. Bombs, for example.

Luisa Rodriguez: Yeah. Arguably, we’re just in the time of nuclear perils now.

Matt Clancy: Right.

Luisa Rodriguez: Cool. OK, so that’s how to kind of conceptualise that.

Modelling the benefits of science [00:25:48]

Luisa Rodriguez: Let’s talk about the benefits of science. You take on the incredibly ambitious task of modelling the benefits of science. How do you even begin to put a number on the value of, say, a year’s worth of scientific progress?

Matt Clancy: We were inspired by this paper by economists Ben Jones and Larry Summers, which put a dollar value on R&D. And to clarify, like, not all R&D is science. Science is like research about, how does the world work? What are the natural laws? And so forth. A lot of other research is not trying to figure that out; it’s trying to invent better products and services.

And so this paper from [2020] by Larry Summers and Ben Jones was like, what if you shut off R&D for one year? That’s the thought experiment they imagine: what would you lose, and how much money would you save? So you would save money from what you would normally spend on R&D. It’s a little more complicated than that, because it also affects the future path of R&D. But as a first approximation, you lose that year’s R&D spending, or you save that year’s R&D spending, but you lose something too. So they want to add up the dollar value of what you lose.

And the thing that’s clever about it is economists think that all of income growth comes from technology — like the level of income of a society is ultimately driven by the technology it’s using to produce stuff. And the technology that we have available to us, especially in the USA, comes from research and development that is conducted. So if you stop the research, you stop the technology progress, you slow your income growth. So in the US, to a first approximation, GDP per capita income grows about 2% every year, adjusted for inflation. And so if you pause R&D, you don’t grow by 2% for one year at some point — maybe with a delay — and then every year thereafter, you grow by 2%. But because you missed that one year, you’re always 2% poorer than you sort of otherwise would have been.
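The thought experiment can be sketched as two income paths. This is a minimal illustration assuming a constant 2% growth rate and a single skipped year; the starting income of 100, the 30-year horizon, the choice of year 5 for the pause, and the function name are all hypothetical. After the skipped year, the paused path stays a fixed proportion below the baseline (a factor of 1/1.02, so about 2% poorer) in every later year.

```python
# Two income paths: uninterrupted 2% growth versus the same growth
# with one year skipped. All specific values are illustrative assumptions.

def income_path(start, growth, years, paused_year=None):
    """Income at the end of each year; no growth occurs in paused_year."""
    income = start
    path = [income]
    for year in range(1, years + 1):
        if year != paused_year:
            income *= 1 + growth
        path.append(income)
    return path

baseline = income_path(100.0, 0.02, 30)
paused = income_path(100.0, 0.02, 30, paused_year=5)

for year in (4, 5, 10, 30):
    shortfall = 1 - paused[year] / baseline[year]
    print(f"year {year}: {shortfall:.2%} poorer than baseline")
```

The gap never closes because both paths grow at the same rate afterwards, which is why a one-year pause costs something in every later year rather than just once.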

So we’re going to borrow this idea, but apply it to science. So we’re going to say, if you pause science, you don’t lose all of economic growth — because unlike R&D, some technological progress doesn’t depend on science. So a big problem we’re going to have to solve is what share of growth comes from science. But you lose some, and then you’re a little bit poorer every year thereafter.

But we’re also going to have this flip side, which is since we have this mental framework of this “time of perils,” which begins at some year — and we’re assuming that the time of perils begins because we discover scientific principles that lead to technologies that people can use for bad things. If we delay by one year the development of those scientific discoveries, we’re going to also delay by one year the onset of that time of perils. And that’s going to tend to make us, in this model, spend less overall time in this time of perils — basically, to a first approximation, one year less time.

So we’ve got kind of benefits — we would get income from a year of science — and we’ve got costs, which is that we get into this time of perils a bit faster. And then we add one third factor, which is: the idea behind the time of perils is that there’s these new biological capabilities that people can use for bad reasons — but obviously, the reason people develop these biological capabilities is to try to help people, to try to cure disease and so forth. So we want to capture the upside that we’re getting from health too, so we also try to model the impact of science on health. And all of this is possible to sort of put together in a common language, because at Open Philanthropy, we’ve got grants across a bunch of different areas, and we have these frameworks for how do we trade off health and income benefits.

And a couple of useful caveats: one, we’re going to be using Open Philanthropy’s framework for how do we value health versus income. And other people may disagree. So that’s one thing. And then of course, you could also argue that there are benefits to science that don’t come from just health and income. There might be value to just knowing things about the world — like intrinsic value of knowledge — and we’ll be missing that in this calculation too.

Luisa Rodriguez: Right. OK, so we’ll keep that in mind. So that’s kind of the framework you’re using. So then from there — and this is incredibly simplified — you make several models with different assumptions, where you first consider how much a year’s worth of scientific progress is worth in terms of increased health and increased incomes for people. Then you consider how big the population is, I guess both at a single time, but also over time, because it’s not just the people alive now that are going to benefit from science; it’s also generations and generations to come. But then you also have a discount rate, which is basically saying that we value the benefits people get from science in the very near future more than we value the theoretical benefits that people in the future might get from science.

And basically, that’s a lot of things. And I want to come back to the discount rates, because that’s actually really important.

Before we move on and talk about how you modelled this all together, I think there’s still a piece that at least hasn’t been hammered home enough for me, because if I’m like, “What if we’d skipped last year’s science and we were stuck at the level of science of a year ago?” then I’d be like, meh. So I think there’s something about how the benefits of science over the last year kind of accrue over time. Can you help me understand why I should feel sad about pausing science for a year?

Matt Clancy: Yeah, sure. And as well, you know, it’s an open question. Maybe you’ll feel happy.

Luisa Rodriguez: Right, right.

Matt Clancy: But no, spoiler alert, I think… Well, it’s complicated, as we’ll see. Anyway, you pause science. Yeah, you don’t miss anything, right? You maybe didn’t get to read as many cool stories in The New York Times about stuff that got discovered, but what’s the big deal?

The impact happens like 20 years later. So what is this now, 2024? We lost all of, say, 2023 science. So we’re working with 2022. That means in 2043 or whatever, we’re working with the 2022 science instead of the 2023 science. And so when finally the science bears fruit and turns into mRNA vaccines or whatever the next thing is going to be in 20 years, that gets delayed a year.

So it’s not that in the immediate term you really notice necessarily the loss to science, but a lot of technology is built on top of science. It takes a long time for those discoveries to get spun out into technologies that are out there in the world. It takes even longer for them to diffuse throughout the broader world. We try to model all this stuff, but that’s when you would notice it. You wouldn’t notice, but you would maybe notice in a couple of decades. You’d be like, “I feel a little bit poorer.” No, you wouldn’t say that.

Income and health gains from scientific progress [00:32:49]

Luisa Rodriguez: Got it. OK, that makes sense. Let’s talk more about your model. How did you estimate how much a year’s worth of scientific progress increases life expectancy and individual incomes?

Matt Clancy: So to start, US GDP per capita, as I said earlier, grows on average like 2% per year for the last century. Some part of that is going to be attributable to science, but not all of it. Some of it is attributable to technological progress. But again, actually not all of it. Even though economists say that in the long run, technological progress is kind of the big thing, in the short run — and the short run can be pretty long, I guess — there’s other stuff going on. For example, women entered the workforce over the 20th century in the US, and a lot more people went to college — and those kinds of things can make your society more productive, even if there’s not technological progress going on at the same time.

So there’s a paper by this economist Chad Jones, “The past and future of economic growth,” where he tries to sort of parcel out what part of that 2% growth per year that the US experiences is productivity growth from technological progress and what’s the rest. He concludes that roughly half is from technological progress — so say 1% a year.

So we’re going to start there. But again, technological progress, part of that comes from science, part of that comes from other stuff — just firms, Apple doing private research inside itself. So how do you figure out the share that you can attribute to science? There’s a couple different sources we looked at.

There was this cool survey in 1994 where they just surveyed corporate R&D managers, and they were like, “How much of your R&D depends on publicly funded research?” They didn’t ask specifically about science, but the government funds most of the science, so it’s like a reasonable proxy. And this is 1994, 30 years ago. And they were like, “20% of our [R&D] depends on it.”

Another way you can look is like more recent data. You can look at patents. So patents describe inventions. They’re not perfect, but they are tied to some kind of technology and invention. And they cite stuff; they cite academic papers, for example. About a quarter, actually 26%, of US patents in the year 2018 cited some academic paper. So this is sort of in the same ballpark.

But there’s reasons to think that maybe this 20% to 26% is kind of understating things, because there can also be these indirect linkages to science. So if you’re writing software, if you’re using a computer at all, it runs on chips. Chips are super complicated to make and maybe are built on scientific advances. That’s not going to necessarily be captured if you ask people, “Does your work rely on science?” It relies on computers, but I’m not going to tell you it relies on science.

So one way you can quantify that is again, patents: some patents cite academic papers, and patents also cite other patents. So you could be like, how many patents cite patents that cite papers? Maybe that’s a measure of indirect things. And if you have any kind of connection at all, then you’re up to like 60% of patents are sort of connected to science in this way.

So those are two ways: surveys, patents. And then there’s a third way, which is based on statistical approaches, where you’re basically looking to see if there was a jump in how much science happened — maybe it’s R&D spending, maybe it’s measured by journal publications. What happened to productivity in a relevant sector down the road? Do you see the jumps matched by jumps in productivity later? Those find really big effects — almost one to one: a 20% increase in how much science happens leads to a 20% increase in productivity or so on.
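The patent measure described above separates direct links to science (a patent cites a paper) from indirect ones (a patent cites a patent that cites a paper, and so on). A minimal sketch of that distinction on a toy citation graph follows. The patent IDs and citation data are invented for illustration, and treating "any kind of connection" as a chain of any length is an assumption, not a detail confirmed in the conversation.

```python
def science_linked(cites_paper, cites_patents):
    """Return the set of patents connected to science directly or indirectly.

    cites_paper:   set of patent IDs that cite at least one academic paper
    cites_patents: dict mapping a patent ID to the set of patent IDs it cites
    """
    linked = set(cites_paper)
    changed = True
    # Keep sweeping until no new patent can be connected through a citation.
    while changed:
        changed = False
        for patent, cited in cites_patents.items():
            if patent not in linked and cited & linked:
                linked.add(patent)
                changed = True
    return linked


# Hypothetical toy data: five patents, only "A" cites a paper directly.
all_patents = {"A", "B", "C", "D", "E"}
cites_paper = {"A"}
cites_patents = {"B": {"A"}, "C": {"B"}, "D": {"E"}, "E": set()}

linked = science_linked(cites_paper, cites_patents)
direct_share = len(cites_paper) / len(all_patents)
linked_share = len(linked) / len(all_patents)
print(f"direct: {direct_share:.0%}, direct or indirect: {linked_share:.0%}")
```

In this toy graph the indirect measure is much larger than the direct one, which is the same qualitative pattern as the 26% versus roughly 60% figures mentioned in the conversation.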

Luisa Rodriguez: Wow.

Matt Clancy: So this indirect science stuff and this other stuff sort of suggests to me that if you just stopped science — we’re done with science; we’re not doing it anymore — I think in the long run, that would almost stop technological progress. That’s just my opinion: we’d probably get a long way from improving what we have without any new scientific understanding, but we’d hit some kind of wall.

But that’s actually not what we’re investigating in this report. We’re not going to shut down science forever; we’re just going to shut it down for one year. And that gets into some of these things that I think you alluded to earlier about maybe it’s not such a big deal; maybe we can push on without one year of science.

And there’s a couple of reasons to think that maybe that evidence I cited earlier is sort of overstating things. Like, you cited a paper, but does that really mean you needed to know what’s in that for you to invent the thing? And we don’t really know. We know that patents that cite papers are more valuable, and there’s sort of other clues that they were getting something out of this connection to science, but we don’t really know.

And then also, you could imagine innovation is like fishing out good ideas: we’re fishing out of a pond new technologies, and science is like a way to restock the pond, because we fished out all the ideas, we sort of do everything. But science is like, “Actually, you know, there’s this whole electromagnetic spectrum and you can do all sorts of cool stuff with it” or whatever. And maybe if we don’t restock the pond, people just overfish, like fish down the level that’s in there, and there’s some consequence, but it’s not as severe.

So anyway, on balance, you kind of have to make a judgement call, but we end up hewing closer to the sort of direct evidence — so like the surveys where people say it relied directly on science, or the patents that directly cite papers. And our assumption is, if you give up science for one year, you lose a quarter of the growth that you get from technological progress. So instead of growing at 1% a year, you grow at 0.75% for one year. And again, that all happens after a very long delay, because it takes a long time for the science to percolate through.
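The income arithmetic in this answer can be written out in a few lines. This is only a sketch of the numbers as stated in the conversation (2% growth, half from technological progress, a quarter of that lost if science pauses for a year); the variable and function names are illustrative and not taken from the report.

```python
def growth_after_pausing_science(tech_growth, science_share):
    """Technology-driven growth rate in the year affected by a one-year pause."""
    return tech_growth * (1 - science_share)


gdp_per_capita_growth = 0.02   # long-run US average cited in the conversation
tech_share_of_growth = 0.5     # roughly half, per the Chad Jones decomposition
science_share_of_tech = 0.25   # the judgement call described above

tech_growth = gdp_per_capita_growth * tech_share_of_growth
reduced_growth = growth_after_pausing_science(tech_growth, science_share_of_tech)
lost_growth = tech_growth - reduced_growth

print(f"technology-driven growth: {tech_growth:.2%}")
print(f"after pausing science:    {reduced_growth:.2%}")
print(f"growth lost:              {lost_growth:.2%}")
```

The lost quarter of a percentage point is the same "quarter of a percent of income growth" that comes up later when the returns to science are discussed.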

Luisa Rodriguez: Right. In that first year, they’re still mining the science from two decades ago because of how that knowledge disseminates. How do you think about the effects on health?

Matt Clancy: Yeah. So to start there, how are you going to measure? What’s your preferred way of measuring health gains? Health is this sort of multidimensional thing. We’re going to use life expectancy, with the idea that a key distinction of health is like alive or dead.

And there was this paper that Felicity C. Bell and Michael L. Miller wrote in 2005 that collected and tabulated all this data in the US on basically life expectancy patterns over the last century. So they’ve got actually the share of people who live to different ages for every year going back to 1900. So if you’re born in 1920, we can look at this table and say, how many people live to age five, 10, 20, 30, et cetera. We can look again at 1930, if you were born in 1930. And as you would expect, the share of people surviving to every age tends to go up over time.

And because they did this report for the Social Security Administration, and the Social Security Administration’s goal is, “We want to know how much are things going to cost? How much are our benefits going to cost in the future? How many people are going to survive into old age?” — so this report forecasts into the future out through 2100. So they have other tables that are like, if you’re born in 2050, what do we think? How many people will survive to age five, 10, 15, 20?

So I’m going to use that data, rather than trying to invent something on my own. And the thing that’s kind of nice about this is, because they’ve got these estimates for every year, we can have this very concrete idea about if you lose a year of science, you could imagine — we don’t actually do this — but then you lose a year of the gain. So if you would have been born in 2050, but we skip science for a year, instead of being on the 2050 survival curve, you’re on the 2049 survival curve.

So we don’t actually do that because, again, I don’t think you lose everything if you lose a year of science. We end up saying you lose seven months. So how do we come up with that? This is a little bit of a harder one. I don’t think there’s quite as much data as we could draw on. But you can imagine that health comes from scientific medical advances plus a lot of other stuff that is related to, for example, income. Like you can afford more doctors, more sanitation and so on.

And there are some studies that try to portion out across the world how different factors explain health gains. And they say a huge share, 70% or 80%, of the health gains comes from technological progress. But if you dig in, it’s not actually technological progress. It’s more that we can explain 20% to 30% of the variation with measurable stuff, like how many doctors there are or something. The rest, we don’t know what it is. So we’re going to call it technological progress. So we want to be a little cautious with that.

But on the other hand, I think it makes sense that science seems important for health. And you do see that borne out in this data too. Like, if you look at the patent data, medical patents cite academic literature at like twice the rate, basically, as the average patent.

So where we come down is like, we’re going to say 50% of the health gains come from science; the rest comes from income. But a little bit of the income gains also come from science, and that’s how you end up with seven months instead of six months.
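One way to see how "six months" becomes "seven months" is to add the indirect channel explicitly. The sketch below is an assumption about how the pieces combine, not the report's actual calculation: half of a year's health gain is attributed to science directly, and the other half comes through income, of which some share is itself due to science. The default of 12.5% for that share is a guess derived from the income figures mentioned earlier (0.25 points of science-driven growth out of 2% total).

```python
def months_of_health_gain_lost(direct_science_share=0.5,
                               science_share_of_income_gains=0.125):
    """Months of health progress lost from skipping one year of science.

    Both parameters are illustrative assumptions, not values from the report.
    """
    income_share = 1 - direct_science_share
    fraction_lost = (direct_science_share
                     + income_share * science_share_of_income_gains)
    return 12 * fraction_lost


direct_only = months_of_health_gain_lost(0.5, 0.0)
with_income_channel = months_of_health_gain_lost(0.5, 0.125)
exactly_seven = months_of_health_gain_lost(0.5, 1 / 6)

print(f"direct channel only:         {direct_only:.2f} months")
print(f"with 12.5% income channel:   {with_income_channel:.2f} months")
print(f"with one-sixth income share: {exactly_seven:.2f} months")
```

Under these assumptions the total lands between six and seven months, and it reaches exactly seven only if about a sixth of income gains are credited to science, so the round figure in the conversation should be read as approximate.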

Luisa Rodriguez: OK, got it.

Matt Clancy: I guess the last thing to say is that you can tinker with different assumptions in the model and some of this stuff and see how it matters. I think that, are these exact numbers right? No. Are they like in an order of magnitude? Probably, yes. I would be very surprised if they were more than twice or half.

Discount rates [00:42:14]

Luisa Rodriguez: Great. Then let’s talk about discount rates. So usually, when I hear people discount the value of some benefit that some future person I don’t know, living centuries from now, will get, I have some kind of mild moral, “That’s not a good thing to do. Who says that your life is that much more valuable than someone living in a few centuries, just because they’re living in a few centuries and not alive yet?” Why are you discounting future lives the way you are?

Matt Clancy: Yeah, yeah. And this is a super important issue, and the choice of this parameter is really important for the results. And as you said, it’s standard in economics to just weigh the distant future less: it counts for less than the near future, which counts for less than the present. And there’s lots of justifications for why that’s the case. But you know, I take your point that morally it doesn’t seem to reflect our ethical values. Like if you know that you’re causing a death 5,000 years in the future, why isn’t it as bad as causing a death today?

So the paper ends up at the same place, where it’s got a standard economic discount rate. But I think I spend a lot more time trying to justify why we’re there, and giving an interpretation of that discount rate, and trying to more carefully justify it on grounds that are not like, “We morally think people are worth less in the future.” Instead, it’s all about epistemic stuff: what can you know about the impact of your policies? The basic idea is just that the further out you go, the less certain we are about the impact of any policy change we’re going to do.

So remember, ultimately we’re sort of being like, what’s the return on science? And there’s a bunch of reasons why the return on science could change in the distant future. It could be that science develops in a way in the future such that the return on science changes dramatically — like we reach a period where there’s just tonnes of crazy breakthroughs, so it’s crazy valuable that we can do that faster. Or it could be that we enter some worse version of this time of perils, and actually science is just always giving bad guys better weapons, and so it’s really bad.

But there’s a tonne of other scenarios, too. It could just be that we’re ultimately thinking about evaluating some policy that we think is going to accelerate science, like improving replications or something. But over time, science and the broader ecosystem evolves in a way that actually, the way that we’re incentivising replications has now become like an albatross around the neck. And so what was a good policy has become a bad policy.

Then a third reason is just like, there could be these crazy changes to the state of the world. There could be disasters that happen — like supervolcanoes, meteorite impacts, nuclear war, out-of-control climate change. And if any of that happens, maybe you get to the point now where like, our little metascience policy stuff doesn’t matter anymore. Like, we’ve got way bigger fish to fry, and the return is zero because nobody’s doing science anymore.

It could also be that the world evolves in a way that, you know, the authorities that run the world, we actually don’t like them — they don’t share our values anymore, and now we’re unhappy that they have better science. It could also be that transformative AI happens.

So like, the long story short is the longer time goes on, the more likely it is that the world has changed in a way that the impact of your policy, you can’t predict it anymore. So the paper simplifies all these things; it doesn’t care about all these specific things. Instead, it just says, we’re going to invent this term called an “epistemic regime.” And the idea is that if you’re inside a regime, the future looks like the past, so the past is a good guide to the future. And that’s useful, because we’re saying things like 2% growth has historically occurred; we think it’s going to keep occurring in the future. Health gains have looked this way; we think they’re going to look this way in the future. As long as you’re inside this regime, we’re going to say that’s a valid choice.

And then every period, every year, there’s some small probability the world changes into a new epistemic regime, where all bets are off and the previous stuff is no longer a good guide. And how it could change could be any of those kinds of scenarios that we came up with. Then the choice of discount rate becomes like, what’s the probability that you think the world is going to change so much that historical trends are no longer a useful guide? And I settle on 2% per year, a 1-in-50 chance.

And where does that come from? Open Phil had this AI Worldviews Contest, where there was a panel of people judging the probability of transformative AI happening. And that gave you a spread of people’s views about what’s the probability we get transformative AI by certain years. And you get something a little less than 2% per year is the probability, if you look in the middle of that. Then Toby Ord has this famous book, The Precipice, and in there he has some forecasts about x-risk that is not derived from AI, but that covers some of those disasters. I also looked at, there’s been sort of trend breaks in the history of economic growth — there’s been one since the Industrial Revolution, and maybe we expect something like that.

Anyway, we settle on a 2% rate. And the bottom line is that we’re sort of saying people in the distant future don’t count for much of anything in this model. But it’s not because we don’t care about them; it’s just that we have no idea if what we will do will help or hurt their situation. Another way to think of 2% is that, on average, every 50 years or so, the world changes so much that you can’t predict, you can’t use historical trends to extrapolate.

And one last caveat — which we’ll come to much later I think — is that extinction, when you think of discounting in this way, extinction is sort of a special class of problem that we will come back to.
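The 2% discount rate described in this answer has a simple probabilistic reading: each year there is a fixed chance that the world leaves the current epistemic regime, so the weight placed on a benefit arriving in year t is the chance the regime has lasted that long. A minimal sketch, assuming an independent 2% chance every year (the function names are illustrative):

```python
def regime_survival(years, annual_change_prob=0.02):
    """Probability that the current epistemic regime still holds after `years`."""
    return (1 - annual_change_prob) ** years


def expected_regime_length(annual_change_prob=0.02):
    """Mean number of years until the regime changes (geometric distribution)."""
    return 1 / annual_change_prob


for horizon in (10, 50, 100, 500):
    print(f"weight on a benefit {horizon:>3} years out: "
          f"{regime_survival(horizon):.4f}")

print(f"expected regime length: {expected_regime_length():.0f} years")
```

The weights fall off quickly: under this assumption, benefits a few centuries out carry almost no weight, which matches the point that people in the distant future "don't count for much" in the model for epistemic rather than moral reasons.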

Luisa Rodriguez: OK, I actually found that really convincing. I know not everyone shares the view that people living now and people living in the future have the same moral weight, but that is my view. But I’m totally sympathetic to the idea that the world has changed drastically in the past, and the world could change drastically again — and there are all sorts of ways that centuries from now could look so different from the present that we totally shouldn’t be counting the benefits that people living 1,000 years from now could get from our science policy, if there are all sorts of things that could mean our science policy has absolutely no effect on them, or has wildly more effects on them for the reasons you’ve given. So I didn’t expect to be so totally persuaded of having a discount rate like that, but I found that really compelling.

Before we move on, I’m a little sceptical still of 2% in particular. Like, something really weird happening once every 50 years was really helpful for making that very concrete to me. But it also triggered a, “Wait, I don’t feel like something really weird happens every 50 years.” Like, I feel like we’ve done something like move from an epistemic regime to another a couple of times based on kind of how you’re describing it and how I’m imagining it — where one example would be going from very slow economic growth to going to very exponential economic growth. Maybe another is like when we came up with nuclear weapons. Am I kind of underestimating how often these things happen, or is there something else that explains why I’m finding that counterintuitive?

Matt Clancy: No, I think that if you look historically, 2% seems like something changing every 50 years. That seems too often. I think that’s what you’re saying, right?

Luisa Rodriguez: That is what I’m saying.

Matt Clancy: Yeah. It’s rare that things like that happen. And I think that I would share that view. I think that the reason we pick a higher rate is because of this view that the future coming down the road is more likely to be weird than the recent past. So that’s kind of embedded in there. That’s implicit in people’s views on, is there going to be transformative AI? Transformative AI, by definition, is transformative.

I also think that, remember, this is a simplification. I think more realistically what’s going to happen is that there’ll just be a continuous, gradual increase in fuzz and noise around. You’re not going to move from one to another. It’s very convenient to model it as just like we move from one regime to another, and that’s sort of captured by that.

And then lastly, I’d say all else equal, a conservative estimate where you’re a little bit more humble about your ability to project things into the future aligns with my disposition rather than… But I guess other people would say, well then, why not pick 5%? So you still have to pick a number at some point.

Luisa Rodriguez: Yeah, fair enough.

How big are the returns to science? [00:51:08]

Luisa Rodriguez: OK, so that’s kind of the framework behind the model. Let’s talk about how big the returns to science are, if we kind of ignore those costs we talked about earlier, under what basically feel like realistic assumptions to you. So in other words, for every dollar we could spend on science, how much value would we get in terms of improved health and increased income?

Matt Clancy: Yeah. So setting aside that whole bio time of perils, the dangers from technology — so if somebody doesn’t think that’s actually a realistic concern, I think they’ll still find this part useful — our current preferred answer is that every dollar you spend on science, on average, has $70 in benefits.

And that’s subject to two important caveats. One: this is ongoing work, and since the original version of the report went up, we improved the model to incorporate lengthy lags of international diffusion. So when something gets discovered in America, it’s not necessarily when it’s available in Armenia or whatever. And so, the version of the paper with that improvement is the version of the report that’s currently available on arXiv. Anyway, $70 per dollar.

The other interesting caveat is that a dollar is not always a dollar. A dollar means different things to different people. What we’re talking about, what we care about, is people’s wellbeing and welfare. And a dollar buys different amounts of welfare. If you give a dollar to Bill Gates, you don’t buy very much welfare. If you give a dollar to somebody who’s living on a dollar a day, you buy a lot more welfare with that dollar. So at Open Philanthropy, we have a benchmark standard that we measure the social impact of stuff in terms of the welfare you buy if you give a dollar to somebody who’s earning $50,000 a year — which is roughly a typical American in 2011, when we set this benchmark.

Luisa Rodriguez: Cool. So spending $1 giving you a $70 return seems like a really, really good return to me.

Matt Clancy: Yeah, it’s great. Yeah, it is good. The key insight is that, when you talk about $70 of value, you can imagine somebody just giving you $70. And the benefits we get from science are very different than that kind of benefit. So if I gave the typical American $70, that’s like a one-time gift. And economists have this term, rival, which means like one person can have it at a time. So if I have the $70 and I give it to you, now you have the $70 and I no longer have $70. It’s sort of this transient, one-off thing.

The knowledge that is discovered by science is different. It’s not rival. If I discover an idea, I can give you the idea, and now we both have the idea. And lots of inventors in practice can build on the idea and invent technologies. And technologies, you can think of them as like blueprints for things that do things people want — and that is also a non-rival idea. So when science gives you $70 in benefits, it’s lots of little benefits shared by, in principle, everyone in the world and over many generations. So the individual impact per person is much smaller, but it’s just multiplied by lots of people.

Sort of the baseline is, we spend hundreds of billions of dollars per year on science. And what’s that getting us? It’s getting us a quarter of a percent of income growth in this model, plus this marginal increase in human longevity. But to a first approximation, after lots of decades, everybody gets those benefits. And that’s what’s different about just like a cash transfer.

Another way to think about if this is a lot — and this is my plug for metascience — 70x return is great, but that actually wouldn’t clear Open Phil’s own threshold for the impact we want our grants to make.

Luisa Rodriguez: Interesting.

Matt Clancy: What that means is, we would not find it a good return to just write a cheque to science. We pick specific scientific projects because we think they are more valuable than the average and so on.

But imagine, instead of just writing a cheque to science… Say you have a billion dollars per year. You could either write it to science, or you could spend it improving science: trying to build better scientific institutions, make the scientific machine more effective. And just making up numbers, say that you could make science 10% more effective if you spent $1 billion per year on that project. Well, we spend like $360 billion a year on science. If we could make that money go 10% further and get 10% more discoveries, it’d be like we had an extra $36 billion in value. And if we think each dollar of science generates $70 in social value, this is like an extra $2.5 trillion in value, per year, from this $1 billion investment. That’s a crazy high ROI — like 2,000 times as good.

And that’s the calculation that underlies why we have this Innovation Policy programme, and why we think it’s worth thinking about this stuff, even though there could be these downside risks and so on, instead of just doing something else.
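The back-of-the-envelope calculation above is easy to reproduce. The sketch below uses only the figures given in the conversation, which the speaker himself describes as made-up numbers; the variable names are illustrative.

```python
annual_science_spend = 360e9     # dollars per year spent on science
metascience_budget = 1e9         # dollars per year spent on improving science
effectiveness_gain = 0.10        # assumed 10% improvement in science
social_value_per_dollar = 70     # dollars of social value per dollar of science

# A 10% gain is treated as equivalent to this much extra science spending.
extra_science_equivalent = annual_science_spend * effectiveness_gain

# Value that extra science generates each year at $70 per dollar.
extra_social_value = extra_science_equivalent * social_value_per_dollar

# Return per dollar of the metascience budget.
return_multiple = extra_social_value / metascience_budget

print(f"extra science equivalent: ${extra_science_equivalent / 1e9:.0f} billion")
print(f"extra social value:       ${extra_social_value / 1e12:.2f} trillion")
print(f"return per dollar spent:  about {return_multiple:,.0f}x")
```

The exact multiple under these inputs is a little above the "2,000 times" quoted, and it scales linearly with the assumed effectiveness gain, so halving that assumption halves the return.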

Luisa Rodriguez: Right. Cool. That was really concrete. So that seems just like a really insane return on investment, as you’ve said. Does that feel right to you?

Matt Clancy: So it’s tough to say, because as I said, the nature of the benefit is very alien to your lived experience. It’s not like you could know what it’s like to be lots of people getting a little benefit.

But I did do this one exercise to try to check, like a sense check if this is right. And I was like, let’s think about my own life. Spoiler, I’m 40. So I’ve had 40 years of technological progress in my lifetime. And if you use the framework we use to evaluate this in this model, it says if progress is 1% per year, my life should be like 20% to 30% better than if I was my age now, 40 years ago.

So I thought, does it seem plausible that technological progress for me has generated a 20% to 30% improvement? So I spent a while thinking about this. And I think that yeah, it is actually very plausible, and sort of an interesting exercise, because it also helps you realise why maybe it’s hard to see that value.

Like one is: it affects the amount of time I have to do different kinds of things. And when you’re remembering back, you don’t remember that you spent three hours vacuuming versus like one hour vacuuming or something; you just remember you were vacuuming. So it kind of compresses the time, and so you lose that. And then also there’s just so many little things that happened that it’s hard to… Like, it’s easy to evaluate one big-impact thing, because you can imagine if I had it or I didn’t, but when it’s just like 1,000 little things, it’s harder to value. But do you want to hear like a list of little things?

Luisa Rodriguez: Yeah, absolutely. Tell me.

Matt Clancy: All right. So start with little trivial things. Like, I like to work in a coffee shop, and because of the internet and all the technology that came with computing, I can work remotely in a coffee shop most days for part of the day.

I like digital photography. These are just trivial things. And not only do I like taking them, but I’ve got them on my phone. The algorithm is always showing me a new photo every hour. My kids and I look through our pictures of our lives way more often than when I was a kid looking at photo albums.

A little bit less trivial is art, right? So my access to some kind of art is way higher than if I’d lived 40 years ago. The Spotify Wrapped came out in November, and I was like, oh man, I spent apparently 15% of my waking hours listening to music by, it said, 1,000 different artists. Similarly with movies: I’m watching lots of movies that would be hard to access in the past.

Another dimension of life is like learning about the world. I think learning is a great thing, and we just know a lot more about the world, through the mechanisms of science and technology and stuff. But there’s also been this huge proliferation of ways to ingest and learn that information in a way that’s useful to you. So there’s podcasts where you can have people come on and explain things; there’s data — there’s explainers, data visualisation is way better, YouTube videos, large language models are a new thing, and so forth. And there’s living literature reviews, which is what I write. So like a third of what I spend my time doing didn’t exist like 40 years ago.

Another dimension that makes life worth living and valuable is like your social connections and so on. And for me, remote work has made a big difference for that. I grew up in Iowa; I have lots of friends and family in Iowa. And Iowa is not the hotspot of economics of innovation stuff, necessarily. But I live here, I work remotely for Open Phil, which is based in San Francisco. And then remote work also has these time effects. So I used to commute for my work 45 minutes a day each way. And I was a teacher, a professor, so that was not all the time — I had the summers off and so on — but anyways, still saving 90 minutes a day, that’s a form of life extension that we don’t normally think of as life extension, but it’s extending my time.

And then there’s tonnes of other things that have the same effect, where they just free up time. I used to, when I was a kid, drive and go shop — walk the shop floors a lot to get the stuff with my parents that you need. Now we have online shopping and a lot of the mundane stuff just comes to our house, shipped, it’s automated and stuff. We’ve got a more fuel efficient car; we’re not going to the gas station as much. We’ve got microwave steamable vegetables that I use instead of cooking in a pot and stuff. We’ve got an electric snow blower; it doesn’t need seasonal maintenance.

Just like a billion tiny little things. Like every time I tap to pay, I’m saving maybe a second or something. And then once added up with the remote work, the shopping, I think this is like giving me weeks per year of stuff that I wouldn’t be doing.

But I can keep going. So there’s like, it’s not just that you don’t have to do stuff that you wouldn’t normally do. There’s other times when it helps you make better use of time that might otherwise not be available to you. So like all these odd little moments that you’re waiting for the bus or for the driver to get here, for the kettle to boil, at the doctor’s office, whatever, you could be on your phone. And that’s on you, how you use that time, but you have the option to learn more and do interesting stuff.

Audio content, the same: for like a decade, half the books I’ve “read” per year have been audiobooks and podcasts. And I’m sure maybe there’s people listening to this podcast right now while they’re doing something that they otherwise normally would not be able to learn anything about the world — so they’re driving or walking or doing the dishes or folding laundry or something like that. So that’s all the tonnes of tiny little things.

And this is just setting aside medicine, which is equally valuable to all that stuff, right? I’ve been lucky in that I haven’t had life-threatening illnesses, but I know people who would be dead if not for advances in the last 40 years, and they’re still in my life because of this stuff. And then I benefited, like everyone else, from the mRNA vaccines that ended lockdown and so forth.

So, long story short: it seems very plausible to me that the framework we’re using, which says this should be worth 20% to 30% of my wellbeing over a 40-year lifespan, is about right. I’m luckier than some people in some respects, but I’ve also benefited less than other people in some respects. Like, if somebody had a medical emergency that they wouldn’t be alive here today, they could say that they benefited more from science than me. So if this is happening to lots of people now and in the future, that’s where I start to think that $70 per dollar in value starts to seem plausible.

Luisa Rodriguez: I was all ready with my follow up questions that were like, “I’m not so sure that you’re 20% to 30% better off than you were in 1984…” — but I think I’m just sold, so we can move on.

Matt Clancy: Well, thanks for indulging me, because I think it’s hard to get the sense unless you really start to go through a long list, and it’s just so many little things. And if you think of any one individually — like, “Oh, this guy’s happy that he can go to coffee shops, woo-hoo” — but that’s just like a tiny sliver of all the different things that are happening.

Luisa Rodriguez: Yeah. I think I still have some… It’s closer to a philosophical uncertainty than an empirical one, that’s just like, humans seem to have ranges of wellbeing they can experience. And it might just be that a person that grew up kind of like you or I did — in the US, without anything terrible happening to them — can’t be that much better now than they were 40 years ago, just because of the way our brains are wired. But it also seems like there are some things that clearly do affect wellbeing, and a bunch of those — especially the health things — clearly do.

And I definitely put some weight on all of these small things that add up also making a difference. It does fill me with horror that I couldn’t have listened to the music and podcasts I would have liked to have listened to while doing hours of chores at home — that now, one, I get to do while doing fun stuff, and two, get to do with a Dyson. My Dyson is awesome.

Matt Clancy: Yeah, we have an iRobot that we use on some of our things. But actually, this concern, this is part of what motivated me, is this like, does innovation policy really matter for people who are already living on the frontier? And I came away thinking that it may be hard to see, but I think it does.

Forecasting global catastrophic biological risks from scientific progress [01:05:20]

Luisa Rodriguez: OK, so let’s leave the benefits aside for now and come back to the costs. So the costs we’re thinking of as the increase in global catastrophic biological risks that are caused by scientific progress. And to estimate this, you used forecasts generated as part of the Forecasting Research Institute’s Existential Risk Persuasion Tournament. Can you just start by actually explaining the setup of that tournament?

Matt Clancy: Yeah. This tournament was hugely important, because the credibility of this whole exercise hinges on how are you going to parameterise, or how are you going to estimate these risks from technologies that haven’t yet been invented.

Anyway, the tournament was held in 2022, I think with 169 participants. Half of them are superforecaster generalists, meaning that they’ve got experience with forecasting and they’ve done well in various contests or other things. The other half are domain experts, so they’re not necessarily people with forecasting expertise, but they’re experts in the areas that are being investigated. They got people from a few main clusters: biosecurity, AI risk, and nuclear stuff. There might have been climate, but I can’t remember. I focused mostly on the biorisk people. There are 14 biorisk experts. Who they are, we don’t really know. It’s anonymous.

Anyway, the format of this thing: it’s all online, and it proceeded over a long time period. People forecast I think 59 different questions related to existential risk. First they did them by themselves as individuals, then they were put into groups with other people of the same type — so superforecasters were grouped together, biorisk experts were grouped together — and then they collaborate, they talk to each other, and then they can update their forecasts again as individuals. Then the groups are combined. And so you get superforecasters in dialogue with the domain experts, they update again. And then in the last stage, they get access to arguments from other groups that they weren’t part of. And it’s well incentivised to try and do a good job. You get paid if you are better at forecasting things that occur in the near term: 2024, 2030.

But they want to go out to 2100. So it’s a real challenge. How do you incentivise people to come up with good forecasts that we won’t actually be able to validate? And they try a few different things, but one is, for example, you get awards for writing arguments that other people find very persuasive. And then they tried this thing where you get incentivised to be able to predict what other groups predict. There’s this idea, I think Bryan Caplan maybe coined it, of the [ideological] Turing test: can I present somebody else’s opinion in such a way that they would say, yep, that’s my opinion? So this is sort of getting at that. Like, do you understand their arguments? Can you predict what they’re going to say is the outcome?

Fortunately for me, in this project they asked a variety of questions about genetically engineered pandemics and a bunch of other stuff. The main question was like: what’s the probability that a genetically engineered pandemic is going to result in 1% of the people dying over a five-year period? And they debated that a lot. At the end of the tournament, they asked two additional questions: what about 10% of the people dying, and what about the entire human race going extinct? So those last ones, it’s not ideal; they weren’t based on the same level of debate, but these people had all debated similar issues in the context of this one.

Luisa Rodriguez: Right. OK, so that’s the setup. What were the bottom lines relevant to the report? What do they predict? Did they think there’ll be a time of biological perils?

Matt Clancy: So one thing that was super interesting was that there was a sharp disagreement between the domain experts and the superforecasters. And this sharp disagreement never resolved. Even though they were in dialogue with each other and incentivised in these ways to try to get it right, they just ended up differing in their opinions, and the gap was never closed.

And it differs quite substantially. So if you start with the domain experts, the 14 biosecurity experts: they thought that a genetically engineered pandemic disaster is going to be a lot more likely after 2030. The superforecasters thought the same thing, that it’s going to be more likely, but their increase was smaller. And because there’s the sharp disagreement between them, throughout the whole report, I’m basically like, “here’s the model with one set of answers; here’s the model with the other set of answers.”

And the key thing that we have to get from their answers is: what is the increase in the probability that somebody dies due to these new biological capabilities? And like I said, there’s a sharp disagreement. The superforecasters gave answers that, if I put them in the terms of the model, imply like a 0.0021% probability per year. The domain experts: 0.0385%. The numbers don’t matter…The numbers do matter, but they’re not going to be meaningful to somebody who’s never thought of it… [The key thing is that] the domain experts think the risk is 18 times higher than the superforecasters do.

Luisa Rodriguez: Is there a way to put that in context? I don’t know, what’s my risk in the next century or something of dying because of the risk, according to each of those groups?

Matt Clancy: So we all just lived through this pandemic event, so we all actually have some experience of what these things can be like. And The Economist magazine did this estimate that in 2021, the number of excess deaths due to COVID-19 was 0.1% of the world. I think that’s like a useful anchor that we’re familiar with.

So if you want to take the superforecasters, they’re going to say something like that happens every 48 years. And I should say it’s not that a COVID-like event happens every 48 years; it’s very specifically that there is an additional genetically engineered-type COVID every 48 years, as implied by their answers. For the domain experts, it’s like this is happening every two and a half years, is their forecast.

And I think another important thing to clarify is that these answers are also consistent with them thinking, for example, that it could be something much worse less often, or it could be something not as bad more often, that kind of thing. But that’s a way to calibrate the order of magnitude that they’re talking about.
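The calibration above can be reproduced with a little arithmetic. What follows is a minimal sketch, not the report's actual model: it assumes the annual risk figures can be read as the expected share of the population dying per year, and that dividing the 0.1% COVID anchor by that share gives the average gap between COVID-sized events. The function name and the `severity` parameter are hypothetical, introduced only for illustration.

```python
# Annual probability (in percent) that a person dies because of the new
# biological capabilities, as quoted in the conversation.
SUPERFORECASTER_RISK_PCT = 0.0021
DOMAIN_EXPERT_RISK_PCT = 0.0385

# Excess deaths from COVID-19 in 2021 as a percent of world population,
# per the estimate cited above.
COVID_2021_EXCESS_DEATHS_PCT = 0.1


def years_per_covid_equivalent(annual_risk_pct, severity=1.0):
    """Average years between events `severity` times as deadly as COVID in 2021.

    Assumption (not from the source): the annual risk is treated as a
    long-run average, so rarer but worse events give the same average.
    """
    return severity * COVID_2021_EXCESS_DEATHS_PCT / annual_risk_pct


# How many times higher the domain experts' risk is than the superforecasters'.
risk_ratio = DOMAIN_EXPERT_RISK_PCT / SUPERFORECASTER_RISK_PCT

if __name__ == "__main__":
    print(f"Risk ratio: {risk_ratio:.1f}x")
    for label, risk in [
        ("Superforecasters", SUPERFORECASTER_RISK_PCT),
        ("Domain experts", DOMAIN_EXPERT_RISK_PCT),
    ]:
        years = years_per_covid_equivalent(risk)
        print(f"{label}: one extra COVID-sized event every {years:.1f} years")
```

Passing a different `severity` (for example `0.5` or `2.0`) shows how the same annual risk is consistent with milder events happening more often or worse events happening less often.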

Luisa Rodriguez: OK, yeah. It could be something like, at least according to the domain experts, you have something half as bad as COVID once a year, or it could be twice as bad every five years. But that’s the kind of probabilities we’re talking about. And they do seem even more different to me once you put them in context like that. So maybe we should actually just address that head on: how do you think about the fact that the superforecasters and domain experts came up with such different results, even though they had months to argue with each other? And in theory, it would be really nice if they could converge.

Matt Clancy: I think this is a super interesting open question that I’m fascinated by. I didn’t participate in this tournament; I’m not one of those sort of anonymous people. But I did engage in a very long back-and-forth debate format with somebody about explosive economic growth. And we’re on opposite sides of the position. And again, I think there was like no convergence in our views, basically. It’s interesting, because the biorisk people in this are not outliers. There was this disagreement between the superforecasters and domain experts just across the board on different x-risk scenarios.

And I could say more, but I think the core thing, my guess is that people just have different intuitions about how much you can learn from theory. I think some people, maybe myself included, are like, “Until I see data, my opinion can’t be changed that far. You can make an argument, I can’t necessarily pick a hole in it, but you might just be missing something.” Like theory, theoretical speculation has a bad track record, maybe.

But I think that it’s actually an open question. We don’t actually know who’s right. We don’t know if I’m right that people have different intuitions about how much you can learn from theory. We don’t have, as far as I know, good evidence on how much weight should you put in theory? So hopefully we’ll find out over the next century or something. You know, I have views on who’s right, but through the report… I’m also not a biologist, and I didn’t participate. Like I said, I just present them all in parallel, and try to hold my views until the end.

Luisa Rodriguez: OK, so as we talk about the results, we will talk about the results you get if you use the estimates from both of these groups, and we’ll be really clear about what we’re talking about. But then I do want to come back to who you think is right, because people are going to hear both results, but the results in some cases are pretty different and have different impacts, at least in the degree to which you’d believe in one set of policy recommendations over another. And I want to give people a little bit more of something to have when thinking about which set of results they buy. But we’ll come back to that in a bit.

What’s the value of scientific progress, given the risks? [01:15:09]

Luisa Rodriguez: Let’s talk about your headline results. You estimated the net value of one year of science, factoring in the fact that science makes people healthier and richer, but also might bring forward the time of biological perils. What was that headline result?

Matt Clancy: Yeah, so like you said, it depends on whose forecasts you use. Earlier I said that if you just ignore all these issues, the return on science is like $70 per dollar. It was actually $69 per dollar. And that only matters because if you use the superforecaster estimates, the return drops by a dollar to $68 per dollar.

If you use the domain experts, you see it drops from $69 to roughly $50 per dollar. Which, getting $50 for every dollar spent is still really good. But another way to look at it is like these bio capabilities, if they’re right, erase like a quarter of the value that we’re getting from science. Because dropping from $70 to $50 is a bit more than 25% of the value, basically.
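As a quick check on those headline figures, here is a minimal sketch that computes the share of value lost under each group's forecasts. It uses only the dollar returns quoted in the conversation; the function name is hypothetical, and this is simple arithmetic on the quoted numbers rather than the report's underlying model.

```python
# Social return per dollar spent on science, as quoted in the conversation.
BASELINE_RETURN = 69.0          # ignoring biological risks
SUPERFORECASTER_RETURN = 68.0   # using superforecaster risk estimates
DOMAIN_EXPERT_RETURN = 50.0     # using domain expert risk estimates (rounded)


def share_of_value_lost(baseline, adjusted):
    """Fraction of the baseline return that is erased by the risk adjustment."""
    return (baseline - adjusted) / baseline


if __name__ == "__main__":
    for label, adjusted in [
        ("Superforecasters", SUPERFORECASTER_RETURN),
        ("Domain experts", DOMAIN_EXPERT_RETURN),
    ]:
        lost = share_of_value_lost(BASELINE_RETURN, adjusted)
        print(f"{label}: ${adjusted:.0f} per dollar, {lost:.1%} of value lost")
```

On these numbers the superforecaster adjustment removes under 2% of the value, while the domain expert adjustment removes a little over a quarter.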

I think this whole exercise, you know, we’re not physicists charting with precision if we’re going to see Higgs boson particles or something. This is more like quantitative thought experiments, right? If you quantify the benefits based on historical trends, you quantify the potential costs based on these forecasting things, you can kind of do a horse race.

And my takeaway from this exercise is basically the benefits of science historically have been very good, even relative to these forecasts. So you should keep doing it. But also, if these domain experts are right, the risks are very important, and quantitatively matter a lot. And addressing them is super important too.

Luisa Rodriguez: Yeah. OK, let’s see. I feel like there are a couple of takeaways there. One is just like, in particular if the domain experts are right, the risks are really eating some of this value. And that’s a real shame, even if it still points at science being net positive. I guess also, just to affirm: even though you’ve done this super thoroughly, it’s just such a massive, difficult question that your results are going to have really wide error bars still.

But I love this kind of exercise in general, because I feel like people could make really compelling arguments to me about science being net negative because of engineered pathogens, and people are going to have more and more access to the ability to create a new pathogen we’ve never seen before that could have really horrible impacts on society. And I could be like, “Wow. Yeah, what if science is net negative?” And then I could also be convinced, I guess through just argument, that the gains to society from science are really important. And yes, they increase these risks, but we obviously have to keep doing science or society will really suffer.

And it just really, really matters what numbers, even if they’re wide ranging, you put on those different kinds of possibilities, and you just don’t get that through argument.

Matt Clancy: Yeah, 100%. And when we started this project, that was the state of play: there are arguments that seem very compelling on either side. And I think that in that situation, it’s very tempting to be like, “Well, maybe we’ll just say it’s like 50/50.” But the quantities really matter here. And so when we started this research project, I was like, we have to see if, best-case scenario, the benefits way outweigh the costs, or vice versa. Because as you said, there’s going to be these error bars.

But I was like, “That’s not going to happen. I’m sure that we’re going to end up with just this muddled answer, where it’s like, if you buy the arguments that say it’s risky, you get that it’s bad, and if you buy the arguments that say it’s not risky…” So anyway, I’m very glad we checked, and I’m super glad that there was this Existential Risk Persuasion Tournament that I think gave us numbers that were more credible for what reasonable people might forecast: they’re in a group, they’ve got incentives to try to figure it out and so on. It’s not just, you know, me trying to figure it out.

Luisa Rodriguez: Asking around. Yeah, yeah, yeah. I completely agree. And yeah, I see what you mean. It could have been the case that you play around with a few assumptions that people really disagree on, and that changes the sign of your results, so you still don’t get any clarity. But in fact, this just did give you some clarity. And using even both of these estimates, you get positive returns from science.